Erik Kokalj will host the Hack Chat on Wednesday, December 1 at noon Pacific.

Time zones got you down? Try our handy time zone converter.

A lot of what we take for granted these days existed only in the realm of science fiction not all that long ago. And perhaps nowhere is this more true than in the field of machine vision. The little bounding box that pops up around everyone's face when you go to take a picture with your cell phone is a perfect example; it seems so trivial now, but just think about what's involved in putting that little yellow box on the screen, and how implausible it would have been just 20 years ago.

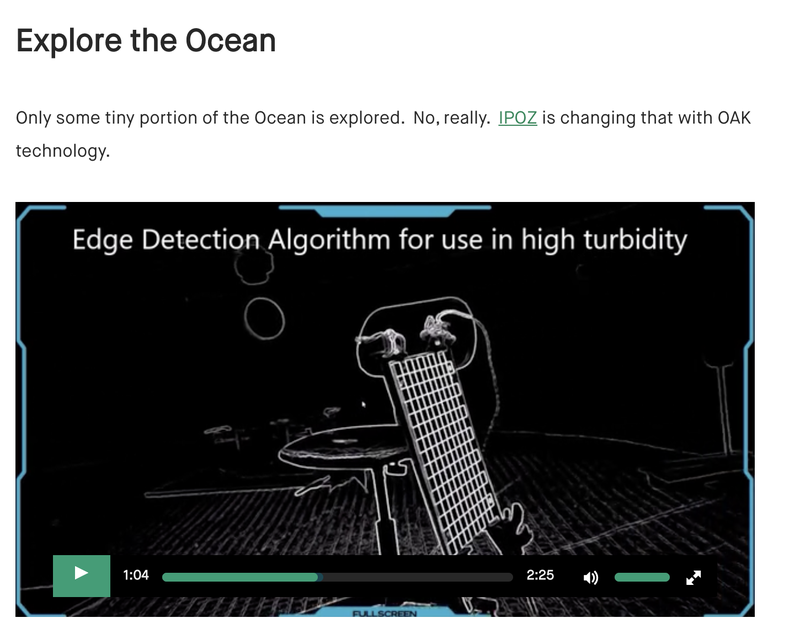

Perhaps even more exciting than the development of computer vision systems is their accessibility to anyone, as well as their move into the third dimension. No longer confined to flat images, spatial AI and computer vision (CV) systems seek to extract information from the position of objects relative to others in the scene. It's a huge leap forward in making machines see like we see and make decisions based on what they see.

To help us along the road to incorporating spatial AI into our projects, Erik Kokalj will stop by the Hack Chat. Erik does technical documentation and support at Luxonis, a company working on the edge of spatial AI and computer vision. Join us as we explore the depths of spatial AI.