-

Plato's Ideal World

10/22/2023 at 08:09

“The third-party target market is like Plato’s ideal world,” says Ilyadis. “That’s the point where you have interoperability, plug and play, between devices. It requires having a packaging technology that is more accessible. It needs to be democratized so smaller companies have access. But it’s a journey.”

https://semiengineering.com/is-ucie-really-universal/

The Open Source Autarkic Motherboard Project is about designing an interoperable hardware standard for the assembly of mobile devices such as phones, tablets, and laptops. Interoperability and repairability are key components of democratizing hardware development. An interoperable standard also allows designers to reuse components such as displays, keyboards, and connectors.

Everyone has seen this: https://m.xkcd.com/927/

Yet there is much to be said for certain standards. Intel patented the ATX standard in 1995, yet it is not something AMD fans seem to mind. The mobile market is built around integrated designs, and customers in the homebrew/hacker community do not typically file patents or attend ISO meetings to deliberate over hardware standards for low-volume products. Open hardware design offers the potential to create many more compatible components for customers worldwide, but most accessory and case products follow the lead of designs by larger SBC makers.

Interoperability can exist at multiple levels. Chiplets are too small to make a difference to a motherboard's dimensions; the benefit of interoperability here is at the connectors for display, power, USB, microSD ports, M.2, wireless modems, and other cards. With some flexibility of design, display ribbons and keyboard connectors can be positioned where they are a standard fit for a laptop chassis, without running cables across the PCB, where they could be exposed to the heatsink and limit airflow.

AMD recently released an Embedded APU, called the R2514: https://us.dfi.com/product/index/1598

Most striking is that the I/O is on the long edge rather than the short edge. This is the opposite of most SBCs, such as the Raspberry Pi and Radxa boards. While this might not make much of a difference in a custom-built chassis or one designed for that board, a laptop designed with a backplate for short-edge I/O would not be the same width as one built for I/O on the long edge. The difference is small, but it could decide whether a new chassis has to be purchased.
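To make the fit problem concrete, here is a minimal sketch that compares the edge carrying the I/O against the width of a chassis backplate opening. The `Board` class, the `fits_backplate` helper, and every dimension in it are hypothetical placeholders for illustration, not measurements of the R2514 board, a Raspberry Pi, or any real chassis.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Board:
    """A rectangular SBC outline. All values used below are hypothetical."""
    name: str
    long_mm: float   # length of the longer PCB edge
    short_mm: float  # length of the shorter PCB edge
    io_edge: str     # which edge carries the I/O ports: "long" or "short"

    def io_edge_length_mm(self) -> float:
        """Length of the edge that must line up with the backplate."""
        if self.io_edge == "long":
            return self.long_mm
        if self.io_edge == "short":
            return self.short_mm
        raise ValueError(f"io_edge must be 'long' or 'short', got {self.io_edge!r}")


def fits_backplate(board: Board, backplate_mm: float) -> bool:
    """True if the board's I/O edge is no wider than the backplate opening."""
    return board.io_edge_length_mm() <= backplate_mm


# Two boards with the same (made-up) outline, differing only in I/O placement.
short_io = Board("short-edge I/O board", long_mm=100.0, short_mm=70.0, io_edge="short")
long_io = Board("long-edge I/O board", long_mm=100.0, short_mm=70.0, io_edge="long")
backplate_mm = 75.0  # hypothetical opening sized for short-edge I/O

for board in (short_io, long_io):
    verdict = "fits" if fits_backplate(board, backplate_mm) else "needs a new chassis"
    print(f"{board.name}: {verdict}")
```

With these placeholder numbers, the same PCB outline fits or fails depending only on which edge carries the ports, which is the situation a shared standard would avoid.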

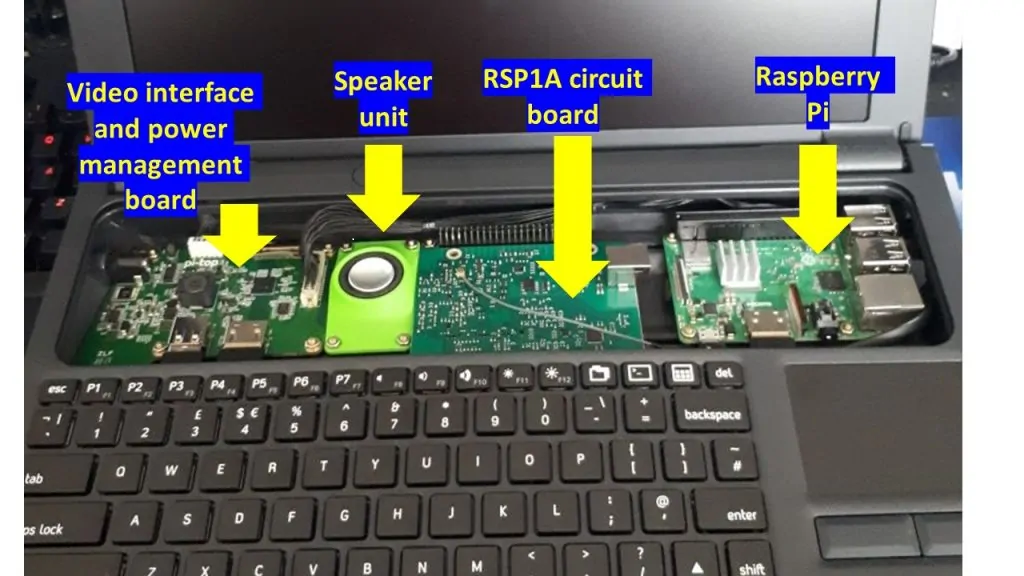

One designer included an SDR under the keyboard, next to a Raspberry Pi.

Many SBCs can include this on the board itself, but for certain instruments it can be beneficial to use the full space under the keyboard for peripheral devices, such as heatsinks (if the board will be drawing some power).

With the SBC centered in the laptop, one or more heat pipes can carry heat away from it horizontally (excluding the heat radiated by the SBC components themselves), improving airflow without requiring a tall, chunky laptop base. Fans under the keys or near the fins could also help move air towards the sides and back of the laptop.

A low-powered SBC wouldn't need a fan and could be passively cooled. But given the amount of leftover space in a PiTop v2, a fan might be a practical addition to a future PiTop-like chassis for the Raspberry Pi 5, which will need air cooling for heavier workloads.

The benefit of Plato's Ideal World is that 3D printers, which have been available for over a decade, can lower the barriers to developing novel designs that serve many customers and hobbyists.

-

Non-Code Contributors- I Want You!

06/16/2023 at 02:19

Open source is as much about the non-technical as it is about the technical: https://github.com/readme/featured/open-source-non-code-contributions

"It’s difficult to overstate how important non-code contributions are to open source. “Even if you write an amazing program, no one will use it if you don’t explain what it does and how to use it.”"