In the logs up to this point I've been pretty quiet about the different parameters that need to be tuned to get the system to run efficiently. Now that I am using this method to tackle more difficult problems, however, the parameters are starting to have a significant impact on whether a given swarm succeeds or fails.

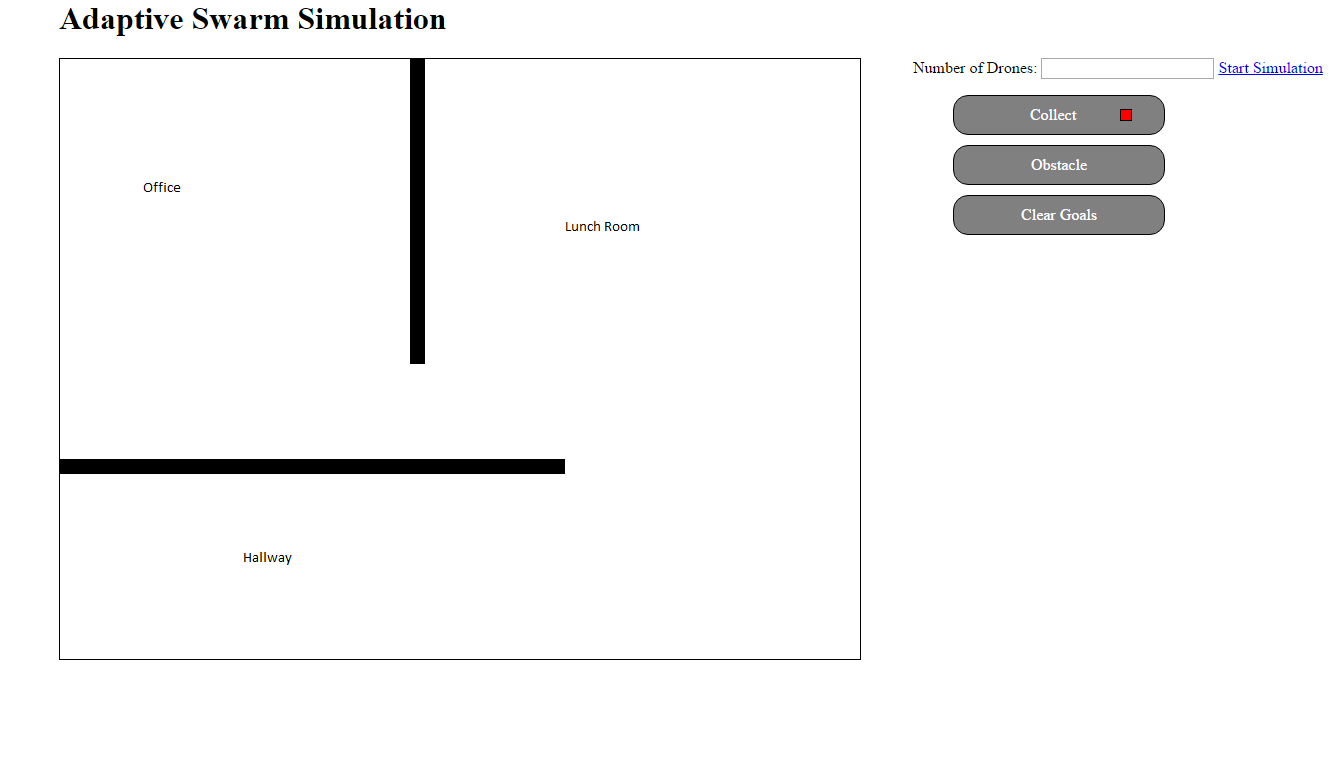

Consider the attached test scenario. Here the swarm is being asked to work in an office environment. The swarm is released in the office and expected to examine different positions throughout the workplace. This challenge is significant because the drones neither have a pre-built map of the world nor build one as they go.

With no map, they depend on the wander behavior to make it through the doorway to any goals in the Lunch Room or Hallway. The ability to make it through that doorway depends strongly on the parameters that have been chosen. Currently these parameters are static and global: the individual drones cannot vary their own parameters, and all of them share identical settings.

Those parameters are:

- Sensor Range

- Safety Distance - distance that a drone will allow itself to get to an obstacle before turning away.

- Emergency Stop Distance

- Maximum Speed

- Radio Distance - how far away a drone can signal another drone.

- Speed / Orientation Increments - how much to turn or adjust speed in a single step.

- Wander Probability of Turn

- Wander Probability of Change in Speed

Many of these parameters are intrinsic to the drones themselves and will be assumed never to vary. Specifically, Maximum Speed, Sensor Range, and Radio Distance are limitations set by the hardware. The remaining parameters are under the control of our algorithm to a certain extent.
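To make the split between hardware limits and tunable parameters concrete, here is a minimal Python sketch of how the parameters could drive one step of the wander behavior. This is an illustration only, not the project's actual code: the class names, field names, units, and the `wander_step` function are all hypothetical, and the choice to stop and turn inside the Emergency Stop Distance is my assumption about what that parameter does.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class HardwareLimits:
    """Fixed by the drone hardware; the algorithm never varies these."""
    sensor_range: float
    max_speed: float
    radio_distance: float


@dataclass
class TunableParams:
    """Under the algorithm's control (names and units are assumed)."""
    safety_distance: float
    emergency_stop_distance: float
    speed_increment: float
    turn_increment: float        # degrees per adjustment
    wander_turn_prob: float      # chance of a random turn per step
    wander_speed_prob: float     # chance of a random speed change per step


def wander_step(heading, speed, obstacle_dist, hw, p, rng):
    """Return (heading, speed) after one control step.

    obstacle_dist is the nearest obstacle reading, or None when nothing
    is inside the sensor range.
    """
    if obstacle_dist is not None:
        if obstacle_dist <= p.emergency_stop_distance:
            # Assumed behavior: halt and turn away so the drone can recover.
            return (heading + p.turn_increment) % 360.0, 0.0
        if obstacle_dist <= p.safety_distance:
            # Too close to an obstacle: turn away, keep the current speed.
            return (heading + p.turn_increment) % 360.0, speed
    # Otherwise wander: randomly nudge heading and speed.
    if rng.random() < p.wander_turn_prob:
        heading = (heading + rng.choice((-1, 1)) * p.turn_increment) % 360.0
    if rng.random() < p.wander_speed_prob:
        speed += rng.choice((-1, 1)) * p.speed_increment
    # Speed is always clamped to what the hardware allows.
    return heading, min(max(speed, 0.0), hw.max_speed)
```

In a sketch like this, a large Safety Distance relative to the width of a doorway would make the drone turn away before it ever passes through, which is the kind of sensitivity described above.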

It would not be user friendly to expect an end user to adjust these parameters to fit their problem, so simply letting the user specify them and hoping for the best is not a route I am going to take with this project. Instead I am investigating three possible methods of varying the parameters.

- Randomize the parameters when the machine starts up. This should give a wide range of individuals in the swarm, although it runs the risk of leaving "holes" in the parameter space: for example, the set of parameters that would allow an individual to pass through the door between the Lunch Room and Office might simply never be realized on any actual machine. This could of course be mitigated by adding more drones, but that seems mildly unreasonable in many real life scenarios.

- Use the signaling system to trigger the drones to "adapt" their parameters. In this scenario, drones that have found goals could broadcast their successful parameters to other drones, which could then adjust their current settings closer to those of the successful members of the swarm. In this way drones can learn the parameters that work best for them individually.

- As in option 2, use the signaling system to share successful parameters. Instead of the receiver moving its parameters closer to the successful ones, it simply copies the useful parameters over to itself. In addition, with some preset probability the copy will be imperfect, resulting in a mutation of the original, a crossover operation with the original, or both (I will explain further in a future update). This is analogous to evolutionary algorithms, where fitness here is all or nothing: either the parameters succeed in reaching the goal, or they die.
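The three methods above can be sketched roughly as follows. This is a hedged illustration under stated assumptions, not the implementation: the parameter ranges in `RANGES` are placeholder values, the function names and the `rate`, `p_mutate`, `p_cross`, and `sigma` arguments are hypothetical, and since the exact mutation and crossover operators are left for a future update, I have assumed Gaussian mutation of one parameter and uniform crossover with the receiver's own previous values.

```python
import random

# Placeholder (low, high) ranges for a few tunable parameters (assumed values).
RANGES = {
    "safety_distance": (0.2, 1.0),
    "turn_increment": (5.0, 45.0),
    "wander_turn_prob": (0.0, 1.0),
}


def randomize(rng):
    """Method 1: draw every tunable parameter uniformly at startup."""
    return {k: rng.uniform(lo, hi) for k, (lo, hi) in RANGES.items()}


def adapt(own, successful, rate=0.25):
    """Method 2: move each parameter part of the way toward a successful set."""
    return {k: own[k] + rate * (successful[k] - own[k]) for k in own}


def copy_with_variation(own, successful, rng,
                        p_mutate=0.1, p_cross=0.1, sigma=0.1):
    """Method 3: copy a successful set, occasionally imperfectly."""
    child = dict(successful)
    if rng.random() < p_cross:
        # Assumed operator: uniform crossover with the receiver's old values.
        for k in child:
            if rng.random() < 0.5:
                child[k] = own[k]
    if rng.random() < p_mutate:
        # Assumed operator: Gaussian nudge to one parameter, clamped to range.
        k = rng.choice(sorted(child))
        lo, hi = RANGES[k]
        nudged = child[k] + rng.gauss(0.0, sigma * (hi - lo))
        child[k] = min(hi, max(lo, nudged))
    return child


if __name__ == "__main__":
    rng = random.Random(42)
    receiver = randomize(rng)
    finder = randomize(rng)   # pretend this drone reached a goal
    print(adapt(receiver, finder))
    print(copy_with_variation(receiver, finder, rng))
```

Only drones that have reached a goal would ever broadcast, which is where the all-or-nothing fitness comes in: unsuccessful parameter sets are never shared and are eventually overwritten.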

It was my original plan to wait until the final step to work on varying the parameters. I am changing course now because of the difficulties seen in the office test, where I am forced to manually tune the parameters to get results. Also, from my perspective, this is the most interesting part of the project, so I won't pretend I wasn't biased toward skipping to it early to begin with.

Next I will show the behavior of the system using method 1 to vary the parameters. I'll provide a few videos and collect some performance metrics to use as a baseline for comparison with methods 2 and 3, which will be covered in subsequent updates.

Gene Foxwell
