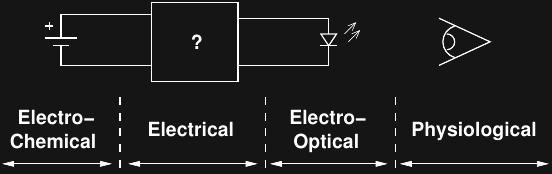

...at least my first crack at a comprehensive approach. Here's a model for the parts of the system that can be optimized:

Electro-chemical (a.k.a. batteries)

This one is pretty easy. Of the four readily available lithium battery chemistries, three take top spots for highest energy density in primary batteries. All of them have decently flat discharge curves (nearly constant voltage over life), and work in cold temperatures.

| Chemistry | Nominal voltage | Wh/kg | Wh/L | Example |
|---|---|---|---|---|
| Li-MnO2 | 3 V | 280 | 580 | CR2032 |
| Li-(CF)x | 3 V | 360-500 | 1000 | BR2032 |
| Li-SOCl2 | 3.5 V | 500-700 | 1200 | TL-2450 |
| Li-FeS2 | 1.5 V | 297 | - | Energizer L91 |

[data from Wikipedia: Lithium battery]

Lithium thionyl chloride (Li-SOCl2) has the highest energy density (by both mass and volume). You can buy these cells from DigiKey, but they're expensive, toxic, and can explode when shorted [Wikipedia]. They have an awesomely flat discharge curve and work in extreme cold. I have a few to play with, but I don't think they're practical for this use.

Lithium manganese dioxide (Li-MnO2) cells are the "CR" lithium coin cells we usually think of (e.g. the ubiquitous CR2032). According to the Wikipedia article, they rank third in energy density, but I think the table is wrong (see below); from the datasheets, they appear to be in second place. They're cheap, and I've seen them in department stores, home improvement stores, pharmacies, and supermarkets.

Lithium carbon monofluoride (Li-(CF)x) cells are the "BR" coin cells, which are compatible with the "CR" series (e.g. BR2032). The datasheets show a 190 mAh rating for the BR2032 compared to 240 mAh for the CR2032; since both are nominally 3 V cells of the same size, I don't understand how the energy densities in the table can show this chemistry as superior. In any case, they're available, but nowhere near as common as the "CR" batteries, so I'm ruling them out.
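The datasheet capacities can be turned into a rough per-cell energy check. This is a minimal sketch, assuming both cells deliver their 3 V nominal voltage (from the table) over the whole rated capacity, and treating a 2032 cell as a 20 mm diameter, 3.2 mm tall cylinder. That is the outer envelope, not the active chemistry, so the Wh/L values are only meaningful for comparing the two cells with each other:

```python
import math

def cell_energy_wh(nominal_v, capacity_mah):
    """Energy stored in a cell, approximated as nominal voltage times rated capacity."""
    return nominal_v * capacity_mah / 1000.0

def coin_cell_volume_l(diameter_mm, height_mm):
    """Outer envelope volume of a coin cell in litres (1 L = 1e6 mm^3)."""
    return math.pi * (diameter_mm / 2.0) ** 2 * height_mm / 1e6

# 2032 size: 20 mm diameter, 3.2 mm tall (envelope only)
volume_l = coin_cell_volume_l(20.0, 3.2)

# Capacities from the datasheets quoted above
cr2032_wh = cell_energy_wh(3.0, 240)  # Li-MnO2
br2032_wh = cell_energy_wh(3.0, 190)  # Li-(CF)x

for name, wh in (("CR2032", cr2032_wh), ("BR2032", br2032_wh)):
    print(f"{name}: {wh:.2f} Wh, {wh / volume_l:.0f} Wh/L (envelope)")
```

With these inputs the CR2032 holds about 0.72 Wh against 0.57 Wh for the BR2032 in the same package, which is the discrepancy with the table described above.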

Lithium iron disulfide (Li-FeS2) cells are the lithium "AA" and "AAA" batteries, most commonly sold under the Energizer brand. Compared with the alkaline batteries they replace, they have higher capacity, work better in the cold, are much lighter, have a flatter discharge curve, perform much better at high discharge rates, have amazing shelf life (20 years), and cost a lot more. Because of their superior performance in digital cameras, they're also widely available. The real downside for glow markers is that they only come in larger sizes (no coin cells), and worse, each cell is only about 1.5 V, so you need a pair of them.

Rechargeable batteries are interesting, but they suffer from three problems: lower capacity and higher self-discharge than primary chemistries, and a short service life. You can recharge them (maybe from solar or other energy-scavenging sources), but their service life isn't very long - think about how many years you get out of a cell phone or lithium camera battery before it no longer holds a charge and needs replacing. At the low discharge rates of glow markers, primary batteries can outlast rechargeables even if you keep recharging them.

Super-capacitors might be more interesting if you have a power source to charge them periodically (solar?), but their energy density is terrible compared to batteries. If you have a few cloudy days in a row, your light might go out.
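To put the supercapacitor comparison in numbers: the usable energy in a capacitor discharged from V_max down to V_min is ½C(V_max² - V_min²). This is a rough sketch. The 1 F / 5 V capacitor, the 2 V cutoff, and the 50 µW average load are hypothetical values chosen only for illustration, not measurements from this project. Capacitor leakage is ignored:

```python
def capacitor_energy_j(capacitance_f, v_max, v_min):
    """Usable energy (J) when a capacitor discharges from v_max down to v_min."""
    return 0.5 * capacitance_f * (v_max ** 2 - v_min ** 2)

def runtime_hours(energy_j, load_w):
    """Hours a constant-power load can run from a given energy store (no leakage)."""
    return energy_j / load_w / 3600.0

# HYPOTHETICAL supercap: 1 F charged to 5 V, usable down to a 2 V cutoff
cap_j = capacitor_energy_j(1.0, 5.0, 2.0)

# CR2032 for comparison: 3 V nominal x 240 mAh, converted to joules (1 Wh = 3600 J)
cr2032_j = 3.0 * 0.240 * 3600.0

load_w = 50e-6  # HYPOTHETICAL average marker load: 50 uW

print(f"supercap: {cap_j:.1f} J, {runtime_hours(cap_j, load_w):.0f} h")
print(f"CR2032:   {cr2032_j:.0f} J, {runtime_hours(cr2032_j, load_w):.0f} h")
```

With these made-up numbers, the capacitor keeps the marker running for a little over two days, while the coin cell stores roughly 250 times as much energy.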

Bottom line: "CR"-series coin cells where size is a concern, "AA" or "AAA" lithium batteries for high brightness markers or super-long-term use where the size can be tolerated.

Electrical

For any light output below what the LED produces at its peak-efficiency current, it makes sense to run the LED in pulsed mode at (or near) that most efficient current. So far, I've used microcontrollers to control the LED pulse; this is the most flexible approach, although I'm also exploring ultra-low-power timer chips. With a microcontroller, there are two basic contributions to inefficiency: sleep current and active current.
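The sleep-versus-active split translates directly into an average-current budget and a battery-life estimate. This is a minimal sketch with hypothetical numbers: 1 µA sleep current, 1 mA active micro current (roughly the PIC12LF1571 figure quoted in the next paragraph), 10 mA LED pulses of 20 µs at 100 Hz, and the 240 mAh CR2032 rating. It ignores oscillator start-up time, self-discharge, and capacity derating, so treat the result as an optimistic upper bound:

```python
def average_current_ua(i_sleep_ua, i_led_ma, i_mcu_ma, pulse_us, rate_hz):
    """Average supply current (uA) for a pulsed LED driven by a microcontroller.

    The micro is assumed awake only for the LED pulse; sleep current is
    charged for the whole period (a slight overestimate).
    """
    duty = pulse_us * 1e-6 * rate_hz            # fraction of time the LED is on
    active_ua = (i_led_ma + i_mcu_ma) * 1000.0  # LED + micro current while awake
    return i_sleep_ua + duty * active_ua

def battery_life_hours(capacity_mah, i_avg_ua):
    """Ideal runtime: rated capacity divided by average current."""
    return capacity_mah * 1000.0 / i_avg_ua

# HYPOTHETICAL operating point (see assumptions above)
i_avg = average_current_ua(i_sleep_ua=1.0, i_led_ma=10.0, i_mcu_ma=1.0,
                           pulse_us=20.0, rate_hz=100.0)
hours = battery_life_hours(240.0, i_avg)
# Micro's active current as a share of the total budget
mcu_share = (1.0 * 1000.0 * 20e-6 * 100.0) / i_avg

print(f"average current: {i_avg:.1f} uA")
print(f"runtime: {hours:.0f} h ({hours / 8766:.2f} years)")  # 8766 h per year
print(f"micro active current share: {mcu_share:.0%}")
```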

Obviously, choosing a micro with the lowest sleep current makes sense. Active current is a little more complex. My first thought was to choose an LED that was most efficient at high current levels (hundreds of mA, if possible), so that the micro's active current would be small in comparison, keeping efficiency high. Now it seems the largest LEDs I am likely to be able to use have efficiency peaks in the tens-of-mA region, so this may not be possible. For example, the PIC12LF1571's maximum active current at 16 MHz and 3 V is above 1 mA. That's at least a 10% efficiency loss when driving the LED with a constant 10 mA pulse; more if using an inductor to create a triangular/sawtooth drive - and this doesn't count the time required for the internal oscillator to stabilize.

Ignoring the actual pulse generation circuit for now, it seems that for a given micro/LED combination, it will make sense to trade off LED efficiency against micro overhead. For example, driving the LED with a little more current will reduce its electrical-to-optical efficiency, but also reduce the relative microcontroller overhead (the duty cycle, and with it the micro's active time, can be reduced to keep the same overall brightness). This should be easy to simulate to find the point of maximum overall efficiency.
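That simulation can be sketched in a few lines. Treating η(I) as relative light output per mA, light per unit of pulse time goes as η(I)·I and battery current as I + I_mcu, so overall efficiency goes as η(I)·I/(I + I_mcu). The duty cycle then sets brightness without changing that ratio. The LED curve below is a made-up shape (peak at 10 mA, falling off on both sides), not measured data; in practice you'd substitute an interpolated measured curve. Sleep current is the same at every operating point, so it's left out, and driver losses are ignored:

```python
import math

def led_rel_efficiency(i_ma, i_low=1.0, i_high=100.0):
    """HYPOTHETICAL relative LED efficiency (light per mA), normalized to 1 at its peak.

    Losses grow below i_low and above i_high; the peak sits at sqrt(i_low * i_high).
    Replace with a curve interpolated from your own measurements.
    """
    peak_norm = 1.0 + 2.0 * math.sqrt(i_low / i_high)
    return peak_norm / (1.0 + i_low / i_ma + i_ma / i_high)

def overall_efficiency(i_ma, i_mcu_ma):
    """Relative light per unit of battery charge while the micro and LED are both on."""
    return led_rel_efficiency(i_ma) * i_ma / (i_ma + i_mcu_ma)

def best_pulse_current(i_mcu_ma):
    """Brute-force search over 1.0 to 500.0 mA in 0.1 mA steps."""
    currents = [n / 10.0 for n in range(10, 5001)]
    return max(currents, key=lambda i: overall_efficiency(i, i_mcu_ma))

for i_mcu in (0.0, 0.5, 1.0, 2.0):
    i_best = best_pulse_current(i_mcu)
    print(f"micro {i_mcu:.1f} mA -> best pulse {i_best:.1f} mA, "
          f"overall {overall_efficiency(i_best, i_mcu):.3f}")
```

With this made-up curve, a 1 mA micro pushes the best pulse current from 10 mA up to roughly 14-15 mA, which is the trade-off described above.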

Finally, the pulse generation circuit needs to be optimized. This may be the most straightforward, given the wealth of literature available on optimizing switching DC-DC converters. Inductor and MOSFET switch selection are likely the largest contributions, once pulse current and waveform are chosen.
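One small, easily checked piece of that optimization is switch conduction loss, ∫i²R dt. For the same delivered charge, a symmetric triangular pulse peaks at twice the rectangular pulse's current and dissipates 4/3 as much in the MOSFET's on-resistance. This is a sketch that checks the ratio numerically. The 10 mA, 50 µs, and 0.1 Ω values are hypothetical (the ratio doesn't depend on them), and a real inductor drive may not be a symmetric triangle:

```python
def rect_pulse(t, duration, i_avg):
    """Constant-current pulse of height i_avg."""
    return i_avg

def tri_pulse(t, duration, i_avg):
    """Symmetric triangle with the same charge: ramps 0 -> 2*i_avg -> 0."""
    half = duration / 2.0
    peak = 2.0 * i_avg
    return peak * t / half if t <= half else peak * (duration - t) / half

def conduction_energy_j(waveform, duration, i_avg, r_on, steps=100_000):
    """Midpoint-rule estimate of the integral of i(t)^2 * R over one pulse."""
    dt = duration / steps
    total = 0.0
    for k in range(steps):
        i = waveform((k + 0.5) * dt, duration, i_avg)
        total += i * i * r_on * dt
    return total

# HYPOTHETICAL pulse: 10 mA average over 50 us through a 0.1 ohm switch
e_rect = conduction_energy_j(rect_pulse, 50e-6, 10e-3, 0.1)
e_tri = conduction_energy_j(tri_pulse, 50e-6, 10e-3, 0.1)
print(f"rect {e_rect:.3e} J, tri {e_tri:.3e} J, ratio {e_tri / e_rect:.4f}")
```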

Electro-optical

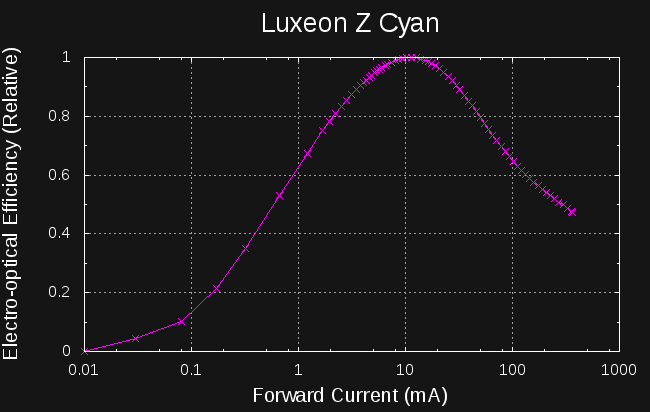

Assuming a selected LED (the color choice depends on the physiological factors and expected ambient conditions discussed below), the electrical-to-optical efficiency is a function of LED current. I've spent a lot of time (and build logs) on this topic, so I'll just add one plot I made today for my old favorite LED:

This LED is most efficient when driven at 10 mA, where it is more than twice as efficient as at its nominal rated current of 500 mA. For low light output, it's most efficient to drive it with PWM using pulses as close as possible to 10 mA. I had previously been driving this LED at 100 mA (the datasheet provided no guidance), so I was losing almost 40% efficiency just because of the drive level (I'm excited to make a new version that exploits this possible gain).
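The gain from re-driving at the efficiency peak can be worked out from relative efficiencies alone. To keep the same light output, η·I·D must stay constant, so moving from 100 mA to 10 mA pulses raises the duty cycle D while cutting average current. This sketch reads "almost 40%" as a relative efficiency of about 0.6 at 100 mA versus 1.0 at 10 mA; the 1% starting duty cycle is hypothetical:

```python
def duty_for_same_light(duty_old, i_old_ma, eff_old, i_new_ma, eff_new):
    """Duty cycle at the new pulse current that keeps eff * I * duty unchanged."""
    duty_new = duty_old * (eff_old * i_old_ma) / (eff_new * i_new_ma)
    if duty_new > 1.0:
        raise ValueError("new pulse current is too low to reach the same brightness")
    return duty_new

duty_old = 0.01  # HYPOTHETICAL: 100 mA pulses at 1% duty
duty_new = duty_for_same_light(duty_old, 100.0, 0.6, 10.0, 1.0)

avg_old_ma = 100.0 * duty_old
avg_new_ma = 10.0 * duty_new
print(f"duty {duty_old:.1%} -> {duty_new:.1%}, "
      f"average LED current {avg_old_ma:.2f} mA -> {avg_new_ma:.2f} mA")
```

The sixfold longer on-time also means more micro active time, which is the overhead trade-off discussed under Electrical.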

When choosing between LEDs, of course, we have to compare their absolute electrical-to-optical efficiency. This, again, may be a difficult figure to glean from the datasheet, but with some calculations and simple experiments, reasonable comparisons can probably be made.

There's also a third effect to take into consideration. With green/blue LEDs, there can be a moderate shift in the peak wavelength with current drive level. This can make the LEDs more or less efficient at stimulating the human visual system, depending on the LED and the dark adaptation of the observer.

Physiological

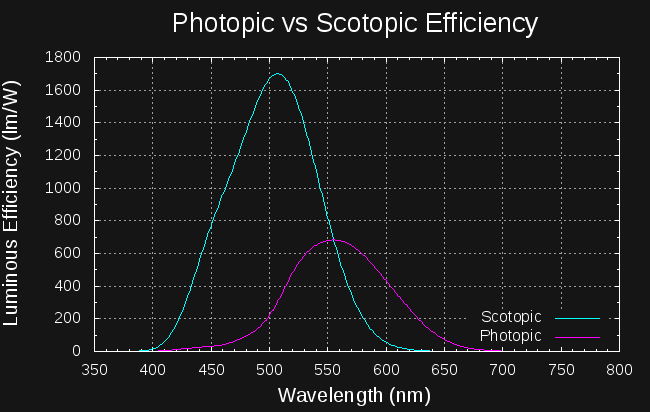

There may not be much we can do to improve the detector in this system, but we can try to understand it. The human visual system has a variable sensitivity to light depending on the wavelength and adaptation of the eye (bright vs dark-adapted). Here are the sensitivity curves for photopic (daytime) and scotopic (fully dark adapted) vision:

Photopic vision has a peak sensitivity of 683 lm/W at 555 nm, while scotopic vision peaks at 507 nm with 1699 lm/W. For dark adapted eyes, you're about 2.5x better off using cyan light than green. But, for daytime vision, you're 2.3x better off with green than cyan. Both of these figures assume a narrow spectrum; for wider sources, like LEDs, you need to do a little math using these curves to evaluate the luminous efficiency. I'm working on digitizing the LED spectra from the datasheets to do this kind of comparison. The data for these curves can be downloaded from http://www.cvrl.org/lumindex.htm. I multiplied the normalized values by the peak sensitivities for the two curves.
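The "little math" for a broad source is a spectrum-weighted average of the sensitivity curve: K = K_peak · ∫S(λ)V(λ)dλ / ∫S(λ)dλ, with K_peak = 683 lm/W (photopic) or 1699 lm/W (scotopic). This is a sketch using trapezoidal integration on a shared wavelength grid. The Gaussian curves in the demo are placeholders only, standing in for the CVRL tables and a digitized datasheet spectrum; they are not real eye-sensitivity or LED data:

```python
import math

def trapezoid(xs, ys):
    """Trapezoidal integral of ys over the (sorted) sample points xs."""
    return sum(0.5 * (xs[k + 1] - xs[k]) * (ys[k] + ys[k + 1]) for k in range(len(xs) - 1))

def luminous_efficacy(wl_nm, spectrum, sensitivity, k_peak):
    """lm per optical W of spectrum S(λ), weighted by normalized sensitivity V(λ)."""
    weighted = [s * v for s, v in zip(spectrum, sensitivity)]
    return k_peak * trapezoid(wl_nm, weighted) / trapezoid(wl_nm, spectrum)

def gaussian(wl_nm, center_nm, fwhm_nm):
    """PLACEHOLDER curve shape with unit peak; not CIE or datasheet data."""
    sigma = fwhm_nm / (2.0 * math.sqrt(2.0 * math.log(2.0)))
    return [math.exp(-0.5 * ((w - center_nm) / sigma) ** 2) for w in wl_nm]

wl = list(range(380, 781))           # 1 nm grid
v_photopic = gaussian(wl, 555, 100)  # stand-in for V(λ)
v_scotopic = gaussian(wl, 507, 100)  # stand-in for V'(λ)
led = gaussian(wl, 528, 35)          # HYPOTHETICAL green LED spectrum

print(f"photopic: {luminous_efficacy(wl, led, v_photopic, 683):.0f} lm/W")
print(f"scotopic: {luminous_efficacy(wl, led, v_scotopic, 1699):.0f} lm/W")
```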

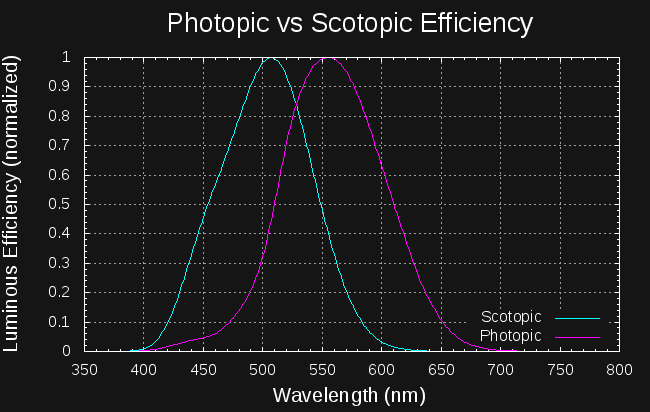

There is probably a compromise that can be made. Plotting both curves with their peaks normalized to unity, we see that light at a wavelength of around 528 nm stimulates both curves at about 84% of their peak: this light would be relatively efficient with either light or dark adaptation. Given that we probably spend most of our nights in the mesopic region between these extremes of adaptation, an LED with a peak near 528 nm may be a good choice.
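Locating that crossover from the tables is a simple root-find on the difference of the two normalized curves between their peaks. This sketch assumes both curves are sampled on the same wavelength grid. The demo again uses placeholder Gaussians (equal widths, peaks at 507 and 555 nm), so it lands at their midpoint, 531 nm, rather than the ~528 nm that the real curves give:

```python
import math

def crossover(wl_nm, curve_a, curve_b, lo_nm, hi_nm):
    """First wavelength in [lo_nm, hi_nm] where curve_a and curve_b are equal.

    Uses linear interpolation between grid points; returns (wavelength, value)
    or None if the curves don't cross in that range.
    """
    diff = [a - b for a, b in zip(curve_a, curve_b)]
    for k in range(len(wl_nm) - 1):
        if not (lo_nm <= wl_nm[k] and wl_nm[k + 1] <= hi_nm):
            continue
        if diff[k] == 0.0:
            return wl_nm[k], curve_a[k]
        if diff[k] * diff[k + 1] < 0.0:
            t = diff[k] / (diff[k] - diff[k + 1])
            w = wl_nm[k] + t * (wl_nm[k + 1] - wl_nm[k])
            return w, curve_a[k] + t * (curve_a[k + 1] - curve_a[k])
    return None

def gaussian(wl_nm, center_nm, fwhm_nm):
    """PLACEHOLDER unit-peak curve; substitute the normalized CVRL data."""
    sigma = fwhm_nm / (2.0 * math.sqrt(2.0 * math.log(2.0)))
    return [math.exp(-0.5 * ((w - center_nm) / sigma) ** 2) for w in wl_nm]

wl = list(range(380, 781))
photopic = gaussian(wl, 555, 100)
scotopic = gaussian(wl, 507, 100)

w, level = crossover(wl, photopic, scotopic, 507, 555)
print(f"curves cross at {w:.1f} nm, {level:.0%} of peak")
```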

Pulsed Light Perception

I initially thought that part of the "brightness gain" I had observed with pulsed LEDs over low-current DC was due to a physiological effect that makes blinking lights appear brighter, mostly because of this article. When this project was blogged on Hackaday, one of the commenters, [Paul], put it pretty eloquently:

That Shimizu paper from Nikkei claims the “pulsed LEDs are brighter” effect is due to the Broca-Sulzer effect, which is horseshit — the Broca-Sulzer effect, though totally real, happens only with relatively long pulses (dozens of milliseconds). (see, for example, http://webvision.med.utah.edu/book/part-viii-gabac-receptors/temporal-resolution/ ) It absolutely isn’t an effect on the sub-millisecond pulse lengths. What Shimizu is seeing is the poor efficiency of the LED driven at low current, and higher efficiency when run at 20x the current but 5% duty.

After measuring the relative efficiency curves for a few LEDs, I can understand how easy it would be to make this mistake. But I'm still intrigued by the notion that there might be something to gain by pulsing at the right frequency or pulse width. Unfortunately, testing this requires human subjects and careful experimental design. It's tempting, but I think I'm going to put this off for now.

Ted Yapo
