This log covers the work that has gone into the software and backend, and the problems we've faced in getting a live feed from the Picamera onto the webpage.

The direct feed from the picamera library works fine, but it does not run in a browser (we are still working on that problem).

The other option, overlaying the camera preview on top of the browser, did not work: it caused multiple conflicts with the custom version of Raspbian Wheezy that we are using.

Builds 2.2 to 2.5 of OWL (see the GitHub repository) worked around these issues to get the simple GUI and the display of the feed working.

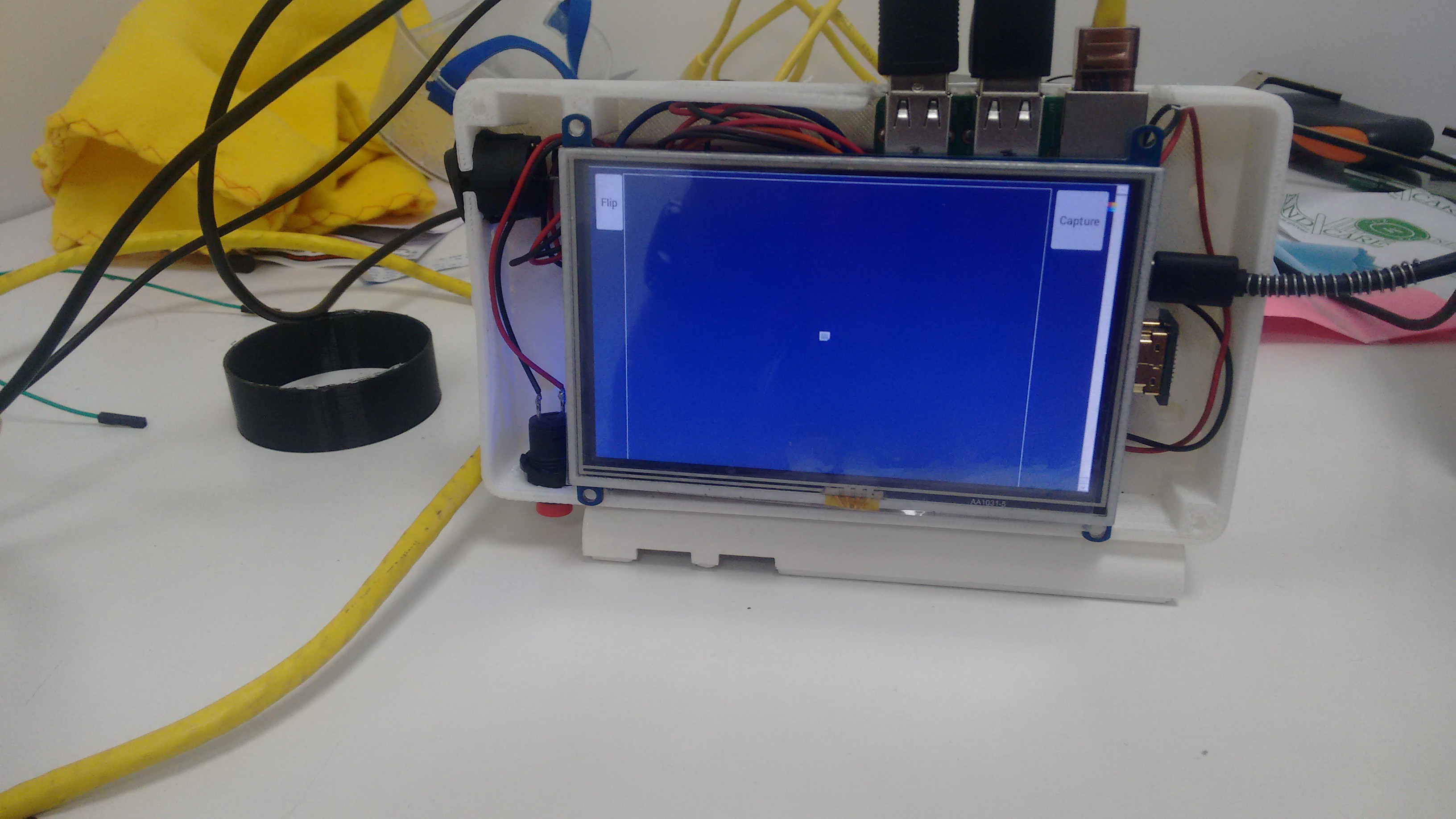

The image above shows the simple GUI, with a flip button and a capture button.

The flip button is meant for cases where the image comes out inverted because of the lens optics.

The layout and style were changed later; this was only a test version.
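A simple lens forms an inverted image, which is the same as the picture being mirrored both left-right and top-bottom (a 180-degree rotation). As a rough sketch of what the flip button has to undo, assuming a frame held as a list of pixel rows, the helper below flips either or both axes. The name `flip_frame` is hypothetical and not taken from the OWL code.

```python
from typing import List, TypeVar

Pixel = TypeVar("Pixel")


def flip_frame(rows: List[List[Pixel]], horizontal: bool = True,
               vertical: bool = True) -> List[List[Pixel]]:
    """Return a flipped copy of a frame stored as a list of pixel rows.

    Flipping both axes equals a 180-degree rotation, which undoes the
    inversion produced by a simple lens.
    """
    # Mirror left-right by reversing each row (copy the row if not flipping).
    out = [row[::-1] if horizontal else list(row) for row in rows]
    # Mirror top-bottom by reversing the order of the rows.
    if vertical:
        out.reverse()
    return out


if __name__ == "__main__":
    frame = [[1, 2, 3],
             [4, 5, 6]]
    print(flip_frame(frame))
```

With the picamera library itself, the same correction can be applied on the camera side by setting `camera.hflip = True` and `camera.vflip = True`, which avoids touching every frame in Python.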

We ran into the following problems while working with the feed:

- Lag

- Blur

- Memory Limiting

The Picamera feed is a raw H.264 video stream, so the HTML5 `<video>` tag was of no use for displaying it.
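For context: a raw H.264 stream, such as picamera writes when recording with `format='h264'`, is only a sequence of NAL units separated by Annex B start codes (`00 00 01` or `00 00 00 01`), with no container around it, whereas browsers generally expect H.264 wrapped in a container such as MP4 before `<video>` will play it. The sketch below splits such a byte stream into NAL units and names their types, which can help when inspecting a recorded file. The function names are hypothetical and the sample bytes are made up.

```python
from typing import List, Tuple

# NAL unit types (H.264 spec) that appear most often in a camera stream.
NAL_TYPES = {1: "non-IDR slice", 5: "IDR slice", 6: "SEI",
             7: "SPS", 8: "PPS", 9: "AUD"}
START_CODE = b"\x00\x00\x01"


def split_nal_units(stream: bytes) -> List[bytes]:
    """Split an Annex B H.264 byte stream on its 00 00 01 start codes."""
    units = []
    start = stream.find(START_CODE)
    while start != -1:
        begin = start + len(START_CODE)  # first byte after the start code
        nxt = stream.find(START_CODE, begin)
        end = len(stream) if nxt == -1 else nxt
        # A 4-byte start code (00 00 00 01) leaves one extra zero byte at the
        # end of the previous unit; NAL units never end in 0x00, so strip it.
        unit = stream[begin:end].rstrip(b"\x00")
        if unit:
            units.append(unit)
        start = nxt
    return units


def describe(stream: bytes) -> List[Tuple[int, str]]:
    """Return (nal_unit_type, name) for each NAL unit in the stream."""
    result = []
    for unit in split_nal_units(stream):
        nal_type = unit[0] & 0x1F  # low 5 bits of the NAL header byte
        result.append((nal_type, NAL_TYPES.get(nal_type, "other")))
    return result


if __name__ == "__main__":
    # Made-up bytes shaped like the start of a stream: SPS, PPS, IDR slice.
    sample = (b"\x00\x00\x00\x01\x67\x64\x00\x1f"
              b"\x00\x00\x00\x01\x68\xee\x3c\x80"
              b"\x00\x00\x01\x65\x88\x84")
    print(describe(sample))
```

Running `describe` on the first few kilobytes of a recorded `.h264` file should show SPS and PPS units followed by slices, with no MP4 box structure anywhere, which is why the stream has to be repackaged or decoded before a browser can show it.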

We tried the following methods, starting with OpenCV:

- OpenCV: The stream was passed to Fundus_Cam.py frame by frame, and each frame was converted to JPEG and sent to the webpage, where it was displayed in an `<img>` tag. The feed was displayed, but with a lag of around 2-3 seconds. Changing the input, the frame rate and the resolution had little effect on the latency.

- VLC streaming: The second method was to send a raspivid HTTP stream directly to the webpage, with the VLC web plugin installed in the browser. The video was fluid, but the lag was even greater. Playing the same stream directly in the VLC player did not solve the problem.

- RTSP: Next, we changed the HTTP stream to an RTSP stream. This did not help either. (Learn more here: http://www.remlab.net/op/vod.shtml )
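The first method in the list above (JPEG frames shown in an `<img>` tag) is commonly implemented as an MJPEG stream: the server answers one HTTP request with a `multipart/x-mixed-replace` response and pushes one JPEG per part, and the browser swaps the image as each part arrives. The log does not say exactly how Fundus_Cam.py delivered its frames, so this is only a standard-library sketch under that assumption; the boundary string `frame`, the `/stream` path and the names `mjpeg_parts` and `make_handler` are hypothetical. With OpenCV, the JPEG bytes for a frame would typically come from `cv2.imencode('.jpg', frame)[1].tobytes()`.

```python
from http.server import BaseHTTPRequestHandler
from typing import Callable, Iterable, Iterator

BOUNDARY = "frame"  # hypothetical boundary token; must not occur in the data


def mjpeg_parts(jpeg_frames: Iterable[bytes]) -> Iterator[bytes]:
    """Wrap each JPEG frame as one part of a multipart/x-mixed-replace body."""
    for jpeg in jpeg_frames:
        header = ("--%s\r\nContent-Type: image/jpeg\r\nContent-Length: %d\r\n\r\n"
                  % (BOUNDARY, len(jpeg))).encode("ascii")
        yield header + jpeg + b"\r\n"


def make_handler(get_frames: Callable[[], Iterable[bytes]]):
    """Build a handler that streams frames to a page using <img src="/stream">."""

    class StreamHandler(BaseHTTPRequestHandler):
        def do_GET(self):
            if self.path != "/stream":
                self.send_error(404)
                return
            self.send_response(200)
            self.send_header("Content-Type",
                             "multipart/x-mixed-replace; boundary=" + BOUNDARY)
            self.end_headers()
            try:
                for part in mjpeg_parts(get_frames()):
                    self.wfile.write(part)
            except (BrokenPipeError, ConnectionResetError):
                pass  # the browser closed the page or navigated away

    return StreamHandler


# Usage, with a hypothetical generator camera_jpegs() yielding JPEG bytes:
# from http.server import ThreadingHTTPServer
# ThreadingHTTPServer(("", 8000), make_handler(camera_jpegs)).serve_forever()
```

Because this runs over an HTTP (and therefore TCP) connection, every JPEG is delivered in order, so this design on its own does nothing to reduce the lag described above.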

This made us realise that the problem was not caused by either the browser or the application we were using. On carefully checking all the associated logs, we found that the stream sent over HTTP was itself laggy: if a frame was 'late', the server waited for it, and this caused the delay. We did not see a similar delay with a USB camera or a webcam.
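The effect described above, where one late frame holds up everything behind it, can be shown with a small simulation. It assumes strict in-order playback at a fixed frame rate: a frame is shown only once it has arrived and at least one frame interval after the previous frame was shown. The arrival times are made-up numbers, not measurements from our setup, and `in_order_display_times` is a hypothetical name.

```python
from typing import List


def in_order_display_times(arrivals: List[float], frame_interval: float) -> List[float]:
    """Display time of each frame when every frame must be shown, in order,
    at least frame_interval seconds after the previous one."""
    shown = []
    previous = float("-inf")
    for arrival in arrivals:
        # Wait both for the frame to arrive and for the previous frame's slot.
        t = max(arrival, previous + frame_interval)
        shown.append(t)
        previous = t
    return shown


if __name__ == "__main__":
    interval = 0.1  # 10 fps
    captured = [i * interval for i in range(6)]
    # Frames normally arrive 0.05 s after capture, but frame 2 is 1 s late.
    arrivals = [c + 0.05 for c in captured]
    arrivals[2] += 1.0
    for cap, shown in zip(captured, in_order_display_times(arrivals, interval)):
        print(f"captured {cap:.1f} s, shown {shown:.2f} s, latency {shown - cap:.2f} s")
```

In this run, frames 0 and 1 are shown 0.05 s after capture, but frame 2 and every frame after it are shown about 1.05 s after capture: because playback never skips ahead, the one-off delay becomes a permanent lag.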

To find a better solution, we looked at the protocols used for live calls and video conferencing.

We found that the problem lies in the transport layer. So far, all our attempts have used TCP to transfer and display the stream. TCP delivers every packet reliably and in order, so a lost or delayed packet holds back everything behind it until it is retransmitted. For a live stream, UDP is the better transport, since only the 'live' packets matter and late ones are simply dropped. This is the next thing we are going to try for the stream.
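As a sketch of the UDP idea (not yet implemented in OWL), the receiver below assumes the sender prefixes each datagram with a 4-byte frame sequence number. It accepts only frames newer than the last one it accepted and drops late or duplicate ones instead of waiting for them, which is the behaviour TCP cannot offer. The header layout and the names `pack_frame` and `LatestFrameReceiver` are hypothetical; real systems usually carry video as RTP over UDP, and a frame larger than one datagram would have to be split across several packets, which this sketch leaves out.

```python
import socket
import struct
from typing import Optional, Tuple

HEADER = struct.Struct("!I")  # hypothetical header: 4-byte big-endian frame number


def pack_frame(seq: int, payload: bytes) -> bytes:
    """Prefix a frame payload with its sequence number."""
    return HEADER.pack(seq) + payload


class LatestFrameReceiver:
    """Keeps only frames newer than the last accepted one; late ones are dropped.

    Sequence-number wrap-around is ignored to keep the sketch short.
    """

    def __init__(self) -> None:
        self.last_seq: Optional[int] = None
        self.dropped = 0

    def accept(self, datagram: bytes) -> Optional[Tuple[int, bytes]]:
        (seq,) = HEADER.unpack_from(datagram)
        if self.last_seq is not None and seq <= self.last_seq:
            self.dropped += 1  # arrived too late (or twice): skip it, don't wait
            return None
        self.last_seq = seq
        return seq, datagram[HEADER.size:]


if __name__ == "__main__":
    # Loopback demo: send frames 0, 1, 3 and then a late frame 2.
    rx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    rx.bind(("127.0.0.1", 0))
    rx.settimeout(1.0)
    tx = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    for seq in (0, 1, 3, 2):
        tx.sendto(pack_frame(seq, b"jpeg-bytes"), rx.getsockname())
    receiver = LatestFrameReceiver()
    for _ in range(4):
        frame = receiver.accept(rx.recv(65535))
        if frame is not None:
            print("show frame", frame[0])
    print("dropped", receiver.dropped)
    tx.close()
    rx.close()
```

In the demo, frame 2 arrives after frame 3 and is discarded rather than delaying the display, which is the trade-off the paragraph above describes: a skipped frame instead of a growing lag.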

In other news, we implemented an on-screen jQuery keyboard based on https://mottie.github.io/Keyboard/, so patient details can now be typed into the text boxes directly on the touchscreen.

Another major change is that we now use Iceweasel (Debian's rebranded Firefox) instead of Chromium, because Chromium is not officially supported on Raspbian and the lack of official support caused a number of problems. Kiosk mode is supported in Iceweasel too, and the issues we had with Chromium can be seen here.

Also, the VLC web plugin (x-vlc-plugin) has not been supported in Chrome since version 42, which disabled NPAPI plugins by default. This was another reason for switching to Iceweasel (Firefox).

The next step in developing the webpage form is storing patient data.

Ayush Yadav
