Background

This is a concept that I first read about a few years ago. At the time I made some plans to build one using a couple of AVR microcontrollers, a VGA CMOS camera and a dual-port RAM, but I never followed it up. It's easier now to use a Raspberry Pi to capture and convert video data, simplifying the custom circuitry needed.

There is a commercial "tongue vision" device available, but it costs $10,000. I aim to create something similar using low-cost hardware, with all design information freely available, so that anybody can make one. The total hardware cost will be less than $100.

The following paper describes how the brain can adapt to use the tongue for vision in this way:

Brain plasticity: ‘visual’ acuity of blind persons via the tongue

This is the commercial device I am aware of (though I haven't actually seen one):

I don't know any details about how the commercial unit works, as the only information I have is from the website above. So my design could end up being quite different in terms of the actual signals that are generated.

Overall concept

The project will consist of the following elements:

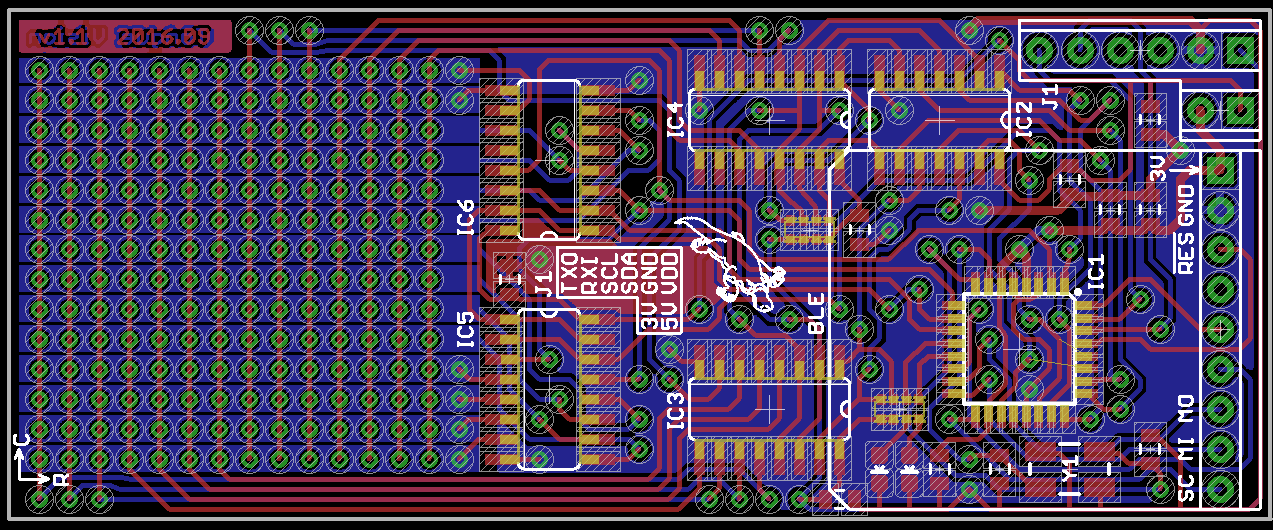

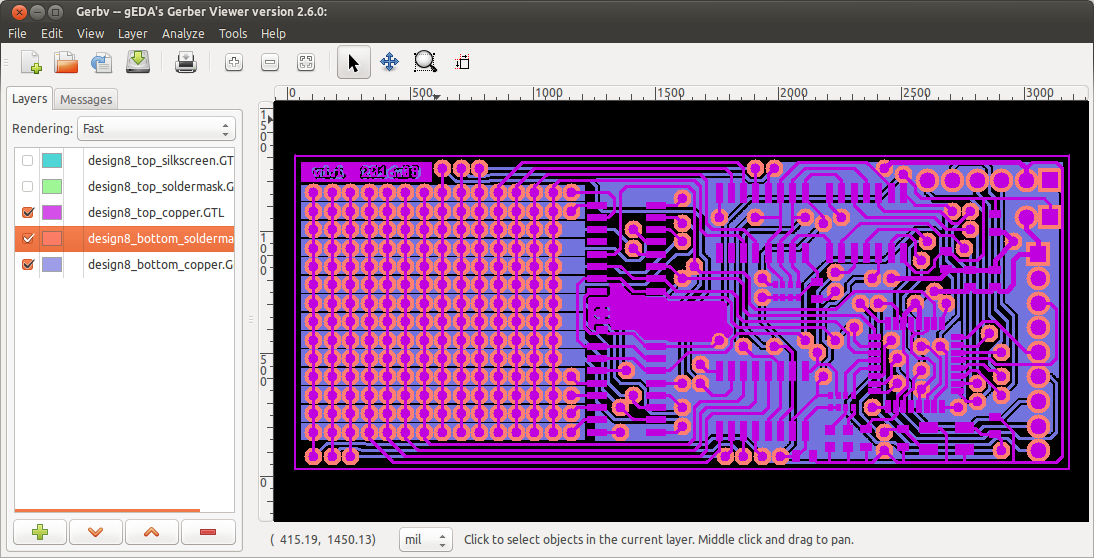

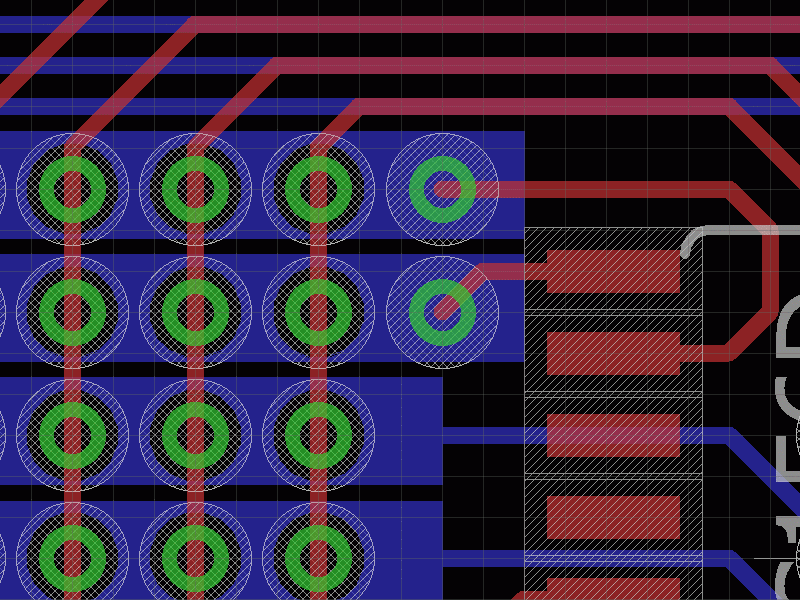

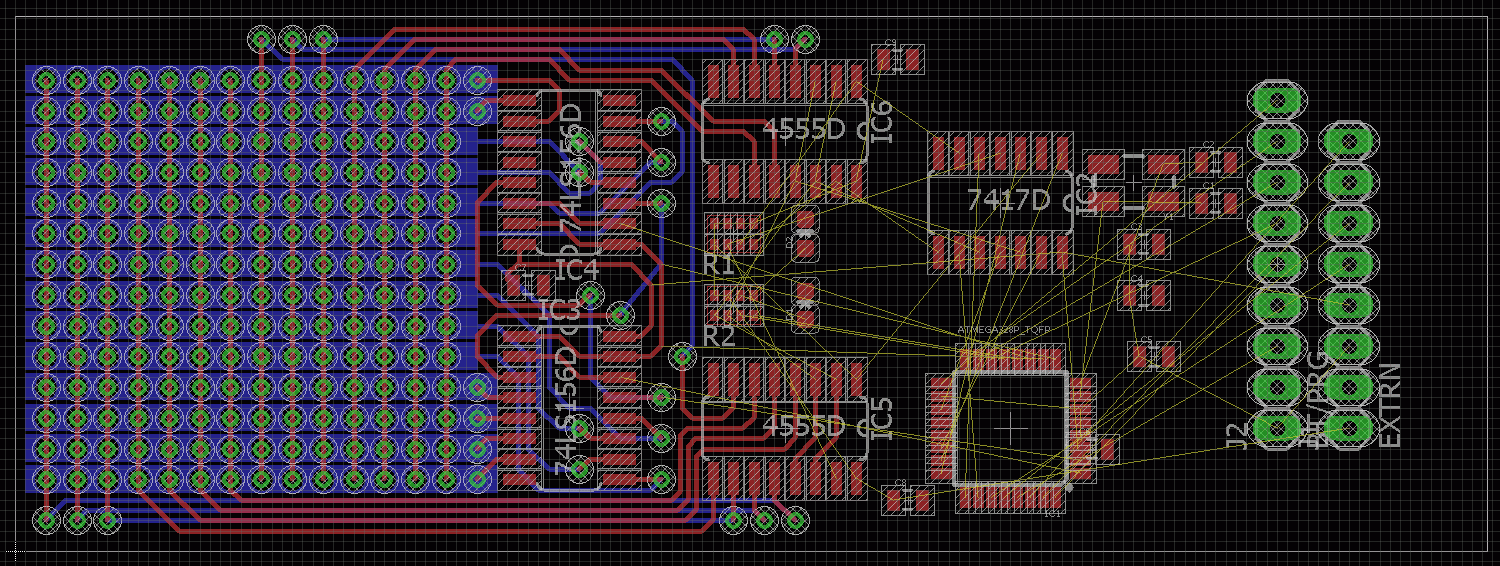

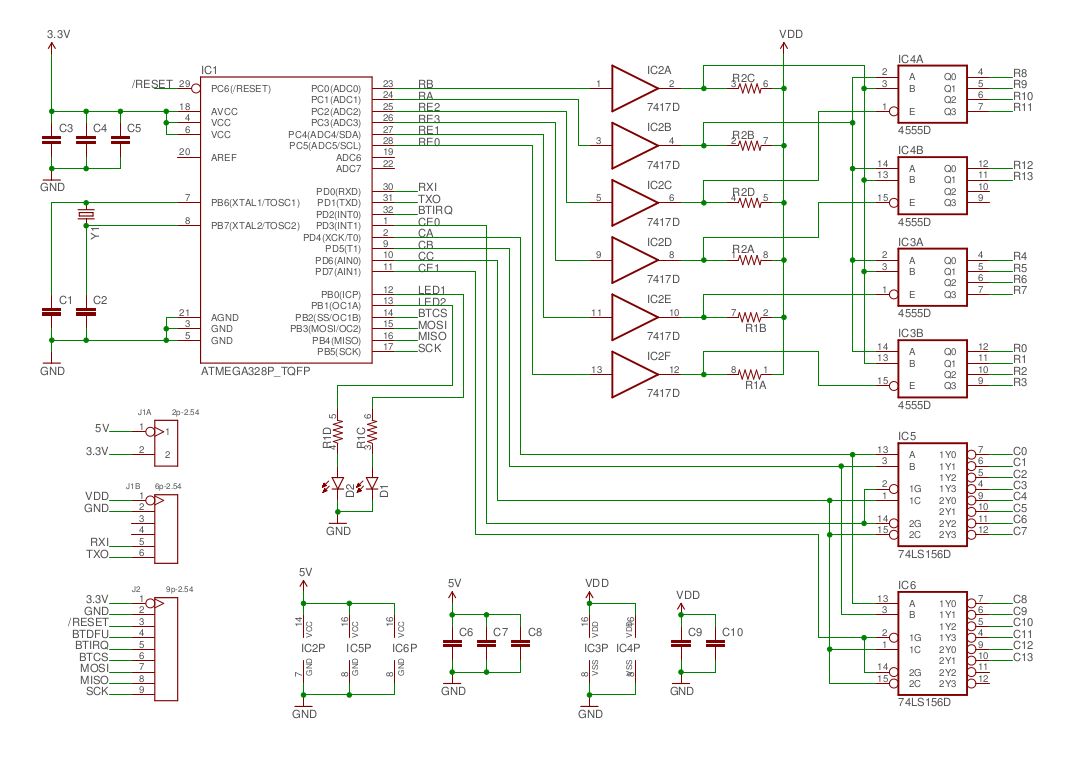

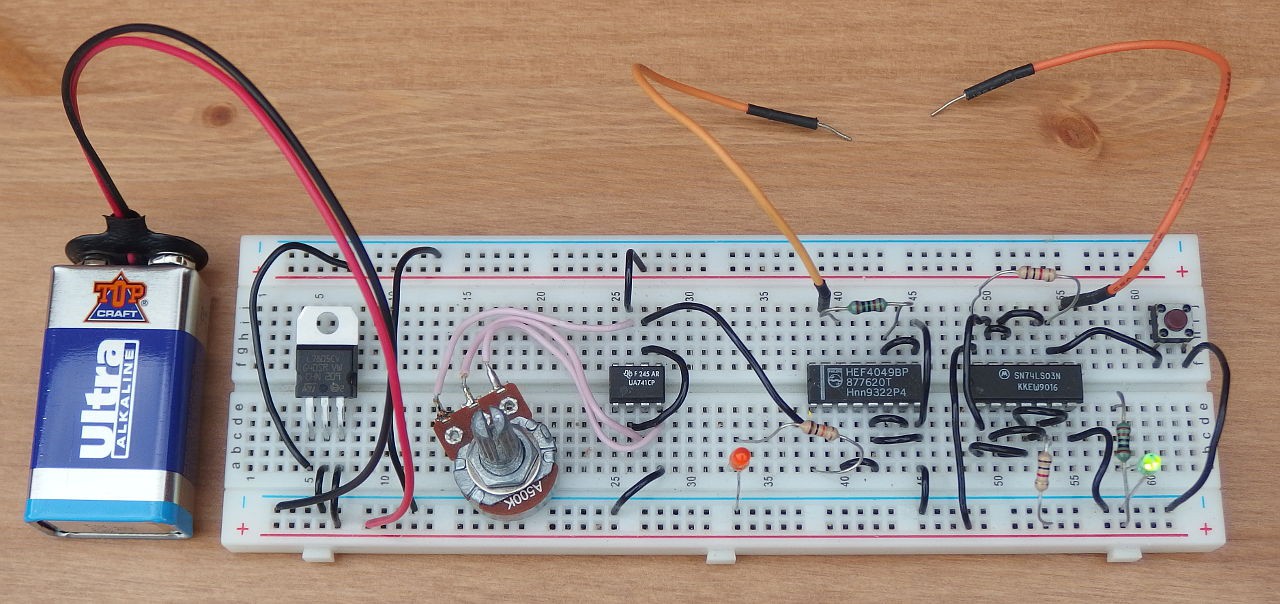

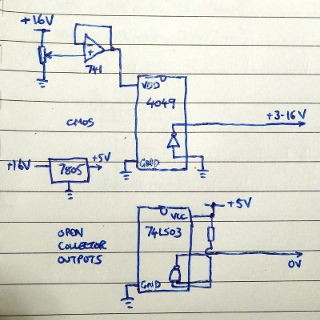

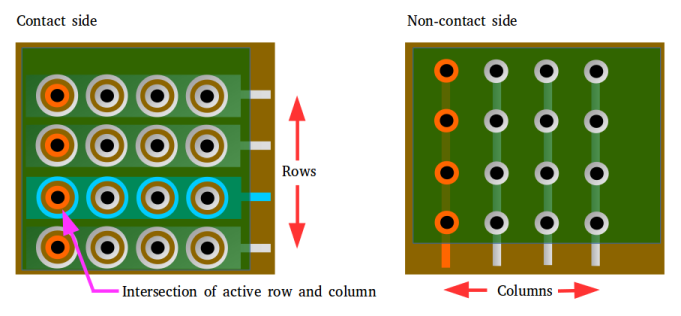

- Interface lollipop - the user will place this inside their mouth, against the tongue. The lollipop will have a rectangular array of contacts on one side that provide electrical stimulation to the tongue.

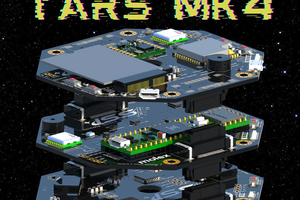

- Wearable camera/control unit - this will connect to the lollipop via a thin wire. The unit will send a stream of live pre-processed image data to the interface lollipop. It will also provide power to the lollipop and include controls to adjust the image intensity. As a wearable device, it could be incorporated into an item of clothing, such as a hat.

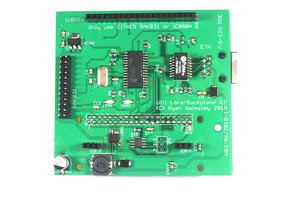
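As a rough illustration of the intensity adjustment mentioned above, the control could scale every stimulation level by a user-set gain and clamp the result to a fixed ceiling. This is only a sketch under assumptions: the function name `apply_intensity`, the 0-255 level range and the `max_level` cap are all hypothetical, and the real stimulation levels will depend on what the experiments show.

```python
def apply_intensity(grid, gain, max_level=255):
    """Scale each level in a 2D grid by `gain`, clamped to 0..max_level.

    `grid` is a list of rows of integer levels (assumed 0-255 here).
    `max_level` is a hypothetical hard ceiling, so that turning the
    control up can never push a contact past a chosen limit.
    """
    return [
        [min(max_level, max(0, int(value * gain))) for value in row]
        for row in grid
    ]


if __name__ == "__main__":
    levels = [[0, 100], [200, 255]]
    print(apply_intensity(levels, 0.5))  # dimmer image
    print(apply_intensity(levels, 2.0))  # brighter image, clamped at the cap
```

Clamping after scaling keeps the output within a known range whatever the gain setting, which seems a sensible default for something that drives contacts on the tongue.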

I intend to base the interface lollipop around the Atmel ATmega328P microcontroller, and the camera/control unit around the Raspberry Pi Zero.

Block diagram

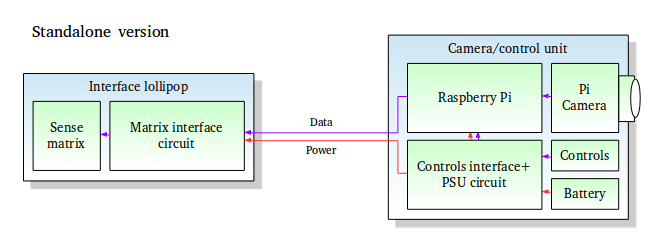

The first block diagram shows the concept as described:

Inside the camera/control unit, the Raspberry Pi will run software to process video data from the camera, convert it into a suitable low resolution format and send it to the interface lollipop. A power supply circuit will be needed to power the Pi from a rechargeable battery and also power the lollipop. The lollipop itself will contain the microcontroller-based interface circuit to drive the array of contact points.
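The conversion to a low resolution format could be done by block averaging: each contact on the array gets the mean brightness of the patch of camera pixels that it covers. The sketch below assumes a grayscale frame held as a list of rows of 0-255 values. The function names, the grid size and the one-byte-per-contact layout are illustrative assumptions, not a fixed design.

```python
def downsample(frame, out_rows, out_cols):
    """Block-average a grayscale frame down to out_rows x out_cols.

    `frame` is a list of rows, each a list of 0-255 integers.
    """
    in_rows = len(frame)
    in_cols = len(frame[0])
    result = []
    for r in range(out_rows):
        # Range of source rows covered by this output row
        r0 = r * in_rows // out_rows
        r1 = max(r0 + 1, (r + 1) * in_rows // out_rows)
        row = []
        for c in range(out_cols):
            # Range of source columns covered by this output column
            c0 = c * in_cols // out_cols
            c1 = max(c0 + 1, (c + 1) * in_cols // out_cols)
            total = sum(
                frame[y][x] for y in range(r0, r1) for x in range(c0, c1)
            )
            row.append(total // ((r1 - r0) * (c1 - c0)))
        result.append(row)
    return result


def frame_to_bytes(grid):
    """Flatten a grid row by row into bytes, one byte per contact."""
    return bytes(value for row in grid for value in row)


if __name__ == "__main__":
    # A tiny 4x4 test frame reduced to a hypothetical 2x2 contact array
    frame = [
        [0, 0, 255, 255],
        [0, 0, 255, 255],
        [100, 100, 50, 50],
        [100, 100, 50, 50],
    ]
    small = downsample(frame, 2, 2)
    print(small)
    print(frame_to_bytes(small))
```

Averaging rather than picking a single pixel per contact should make the output less sensitive to camera noise, at the cost of blurring fine detail. Which works better on the tongue is something to find out by experiment.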

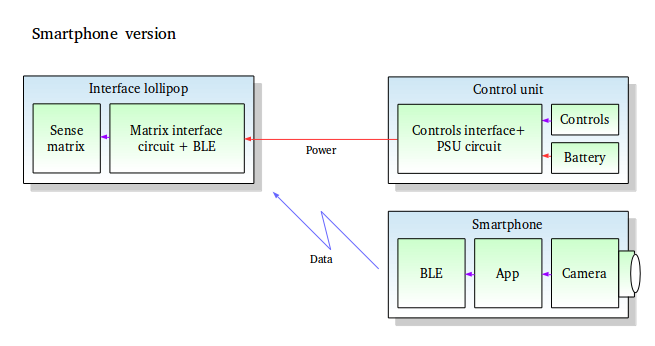

The second block diagram shows a possible alternative design where the image data is captured by a smartphone and sent to the interface lollipop via Bluetooth. This would allow the cost of the control unit to be minimised, as it would only need to provide power and adjustment controls for the interface lollipop.

For this project I will focus on the standalone concept, but will include the option for Bluetooth in the interface lollipop so that it is compatible with the smartphone concept. Eventually, it should be possible for the interface lollipop to be completely wireless, but this would mean putting a battery inside it, and I don't intend to do this yet.

Project goals

- Demonstrate a working prototype with a low-cost, easily manufactured design

- Develop software to provide the basic functionality

- Experiment to find the most effective stimulation methods

- Make all design data and software freely available

Hi, this is Janavi. I am keen to make a similar project, but I have no idea how to make the lollipop PCB. If possible, could you help us with its design and fabrication?