Right now the following video description is probably the best.

Neotype: Haptic Computing

Communication platform: Text can be felt, as it is expressed




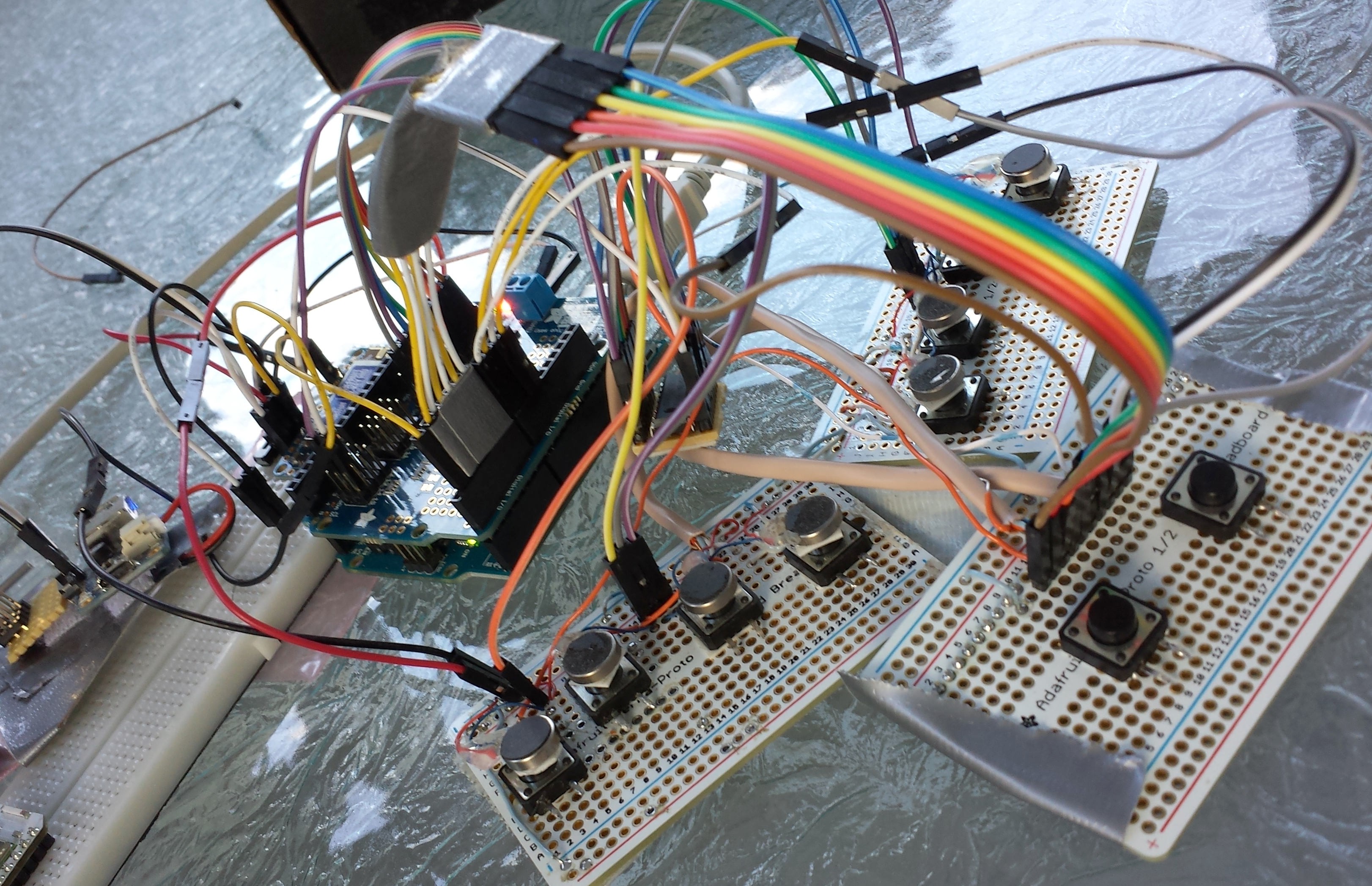

Today, in response to my frustration with numerous fails in showing this project off, I converted the primary build to use the Arduino Micro instead of the Yun. Let's just be honest about the Yun for the record. It takes 90 seconds to boot and the serial connection between its two chips is flaky. It works fine at home, but these issues rear their ugly heads when showing it to anyone else. Let's not even get into how ridiculous it is to prototype with. Arduinos are for folks too lazy... otherwise occupied to read the data sheet, and the Yun fails to meet that spec. Interpret that how you may...

(In case you're wondering, I fall in the middle: I will read the data sheet in an act of desperation.)

This simple switch to the Arduino Micro coincidentally makes the project much more approachable for someone who wants to see for themselves why character-by-character chording will never work out against the alternatives, or who wants a simplified key interface for their own project.

Figured an update was in order.

This specific project is currently dormant, but that doesn't mean the overall goal of the project is dead. I'm still working hard on creating interface technologies that facilitate more efficient communication. The solution to this problem can take many forms, and right now I'm exploring a more software-oriented method that leans more heavily on the UI context of communication than on the physical aspect of making digital communication efficient. The software side of this problem is a crowded and competitive space, but that hardly matters to me when I'm hard pressed to find technologies that bring digital communication anywhere near person-to-person interaction. There are big innovations to be seen from a product that meets the requirements I have imagined for Neotype, so I do plan to revisit it in the future. Hopefully that is a future in which I'm better poised to dedicate more resources.

Final top typing speed with Tenkey: around 30 wpm. Retention after 2 months cold turkey: around 20 wpm, with 95% memory of the layout.

I stopped typing with Tenkey for the following reasons. First, the key assignment was too similar to my full keyboard layout, so cross confusion started to reduce my full keyboard speed, which normally varies between 38 and 70 wpm depending on what is being typed (averaging around 45 wpm on a standard test). Cross confusion reduced my Dvorak speed by 10 wpm, though I'm sure more practice would have reduced that figure. Second, I started feeling the onset symptoms of a repetitive stress injury (RSI) after about 3 months of Tenkey use. There are two reasons for this: 1) the prototype was not designed with long-term use in mind, and 2) some chords or finger combinations currently used in the layout are too stressful to be part of a regular key mapping. I would imagine the second issue would pose a great challenge to a gesture-based system of character entry. Each gesture would need to be ergonomically analysed against character or syllable commonality.

Key findings (pun intended): The body is physically capable of a maximum rate of movement that varies from person to person and with whether that movement uses fine or gross motor control. In the case of communication this rate is further limited by reaction time when responding or changing thought. Fine independent motor control of all 10 fingers likely maxes out at 12-16 Hz (for a Usain Bolt of finger motion). For fine combined/chord motor control of all ten fingers this number is much lower. Because these figures are hard to measure in a definitive way, I will say anecdotally that chording is only capable of half to three quarters of the rate of independent finger motion, optimistically speaking. Honestly, I would say stenographers would be better off with a full keyboard, but the efficiency of the skill comes from the simplification of a language, which reduces the keyset anyway. The efficiency is also gained through the laborious practice of memorizing that shorthand. This is just the tip of the iceberg, but I'm going to stop before this turns into a book. The results of my research have been far from my expectations and, much to my discontent, have only supported the dominance of the popular anomaly that is the Qwerty keyboard. Something better can and should be built to fit in with the future of computing and communication, but it is now abundantly apparent to me why it has yet to happen.
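To put those rates in typing-test terms, here is a rough back-of-the-envelope sketch. It assumes the usual test convention of five characters per word and exactly one character per keystroke or chord; both are simplifications, and the function names are made up for illustration. The results are physical ceilings only, not predictions of real typing speed.

```cpp
#include <cassert>

// Typing-test convention (an assumption here): one "word" is five characters.
constexpr double kCharsPerWord = 5.0;

// Convert a sustained rate of entry events per second (Hz) into words per
// minute, assuming every event produces exactly one character.
constexpr double hzToWpm(double eventsPerSecond) {
    return eventsPerSecond * 60.0 / kCharsPerWord;
}

// Ceiling for chording, modeled as a fraction of the independent-finger rate.
double chordCeilingWpm(double fingerHz, double chordFraction) {
    assert(chordFraction > 0.0 && chordFraction <= 1.0);
    return hzToWpm(fingerHz * chordFraction);
}
```

With the figures above, 12-16 Hz of independent finger motion works out to a ceiling of 144-192 wpm, and chording at half to three quarters of that rate lands somewhere between 72 and 144 wpm. Measured speeds sit far below either ceiling, which fits the point that reaction time and change of thought, not finger speed, are the real limits.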

The haptics is a whole other story, maybe another time...

I had a personal goal to get to 25 WPM with the Tenkey device. Yesterday I was averaging about 22, but I was just surprised to break a new personal record with this particular test.

The "your best" at the top is my top average CPM speed with Dvorak for comparison (46 WPM).

Now, burst speed is another story. It tends to be a less stable measurement, but I think it does well to indicate a typist's level of comfort with a keyboard. After all, most tests get their results by measuring transcription speeds, which introduces words outside an individual's comfort zone.

Personal Fastest Dvorak burst speed: 73 WPM ( 4 years - daily driver )

Personal Fastest Tenkey burst speed: 33 WPM ( just over a month )

Personal Fastest QWERTY burst speed: 9 WPM (hunt and peck, never use typically)

It might be good to note that I'm a slow starter on motor skills, and the Dvorak speeds have taken quite a bit of investment to get to. The average on the QWERTY test taken to get its burst number was 5 WPM. By feel, subjectively, I can say that I started (years ago) slower than this. Also, from seeing others start with QWERTY, I want to say most people start in the 7-10 WPM range. That is a subjective analysis though. With Tenkey I started around 7 wpm. This is a bit of an unfair observation, as I'm the one who designed the layout and "hit the ground running", if you will.

Built a new version of Tenkey with Cherry Blue keyswitches. Really a night and day difference in usability!

With a recent update to Tenkey it has now become a haptic computer!

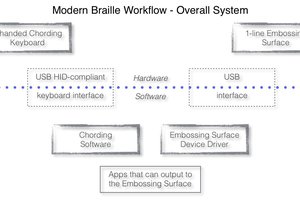

A buggy haptic computer, but one nonetheless. Now when using the device, if the " T " is held, "Terminal" mode is activated. This allows the user to enter ASH commands and subsequently feel the output. This has been achieved with the Arduino Yun. The current thought is to expand this to any Linux computer with pySerial. It will take quite a bit more work though.

To reflect current development I will be changing the linked repository to -> https://github.com/PaulBeaudet/tenkey

8 key is here -> https://github.com/PaulBeaudet/braille_neotype_testing

Yesterday I pushed a commit that adds support for ATmega32u4-based Arduinos like the Leonardo, Micro, Yun, and LilyPad USB by commenting in a #define of "LEO" as opposed to "UNO". One would have to change the IO significantly to use the LilyPad, but it's doable. Personally I'm using the Yun without touching the bridge functions... Curious if I could get a Synergy server up and running on the Linux side of the Yun.... That would be absolutely awesome. I'm getting really tired of Bluetooth's quirks. It sounds like a great idea in theory, but to be honest, if pairing is a nightmare for me, it must be impossible for muggle folk. So the new hardware support allows for use as a USB keyboard, which is honestly a breeze for use and debugging, whilst support for adding a Bluefruit module will continue to be maintained. After many a demo fail with Bluetooth, though, I personally might be using wired more often, even for Android demos via OTG. (Yes, BT is so cumbersome that OTG seems great.)

Integrating Synergy is an interesting concept I'll be exploring going forward, for having a wireless option.
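For anyone unfamiliar with the commenting-in trick, here is a minimal sketch of how a board switch like this is typically wired up with the preprocessor. Only the macro names LEO and UNO come from the project; the `keyTransport` helper and the strings it returns are hypothetical stand-ins so the example can run off-device, and the actual repository may be organized differently.

```cpp
#include <cassert>
#include <string>

// Pick exactly one board family by commenting one line in and the other out.
#define LEO      // ATmega32u4 boards: Leonardo, Micro, Yun
// #define UNO   // Uno paired with a Bluefruit module

#if defined(LEO) && defined(UNO)
#error "Define only one of LEO or UNO"
#elif defined(LEO)
// On real hardware this branch would send keystrokes over USB, for example
// with the Arduino Keyboard library.
inline std::string keyTransport() { return "usb-hid"; }
#elif defined(UNO)
// On real hardware this branch would write bytes to the Bluefruit over serial.
inline std::string keyTransport() { return "bluefruit-serial"; }
#else
#error "Define LEO or UNO"
#endif
```

Because the choice is made at compile time, the unused branch never reaches the board, which matters on parts where the USB keyboard code would not even compile.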

I'll also say there has never been a better time to start hacking with this project. The code now produces keystrokes reliable enough to compose messages with the keyboard. Changing the layout is easy if you understand bits in bytes. I'm still working on making special characters easier to use. By the end of the month I really want to be using the keyboard to write code for the keyboard. I really look forward to seeing how efficient I can get with it. In particular, the potential of having messages read back to me with my eyes closed is getting me really excited. So close.
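As a rough illustration of what "bits in bytes" means here, the sketch below stores each chord as one byte with one bit per key and looks letters up in a table. The letter assignments are invented for the example and are not the real Tenkey layout; `ChordEntry`, `kLayout`, and `chordToLetter` are hypothetical names.

```cpp
#include <cassert>
#include <cstdint>

// One bit per key: bit 0 is the first key, bit 5 the sixth.
// These assignments are made up for illustration only.
struct ChordEntry {
    uint8_t chord;
    char letter;
};

constexpr ChordEntry kLayout[] = {
    {0b000001, 'e'},
    {0b000010, 't'},
    {0b000100, 'a'},
    {0b000011, 'o'},  // first and second key together
    {0b000110, 'i'},  // second and third key together
};

// Return the letter for a chord, or 0 when the chord is unassigned.
char chordToLetter(uint8_t chord) {
    for (const ChordEntry& entry : kLayout) {
        if (entry.chord == chord) return entry.letter;
    }
    return 0;
}
```

Remapping a letter in a scheme like this is a one-line change to the table: flip the bits for the keys you want in the chord.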

One of my friends offered to design a PCB for this project, and the inevitable scope creep of adding more pagers and buttons came up. Adding just two of either changes the hardware significantly because of the number of pins needed. These extras might be unnecessary based on what I have found out. However, I think adding a bit of flexibility to a PCB that would be the size of a small keyboard is a good idea for sharing with folks who are more generally interested in experimenting with keyers. I'm even thinking about expandability to analog keys for testing purposes, but I think I want to get some Cherry MX keys so I can really start dogfooding this project myself. Using the project in my own typing has been tough thus far because tactile buttons have really bad performance characteristics when it comes to regularly typing with them. On top of that, it has been tough for me to program a really effective chord debouncing/capture function.

Thus the Tenkey proto now exists! It uses the Arduino Uno and Bluefruit. I think if we do a PCB the ATmega32u4 will make more sense, so folks can skip Bluetooth "friction" and just get things done via wired USB. I just ironed out some of the bigger bugs a couple of hours ago. You can find the code for this project here- https://github.com/PaulBeaudet/tenkey
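For readers wondering what a chord debouncing/capture function involves, here is one possible approach, sketched off-device. It is not the code in the repository: the `ChordCapture` class, its method names, and the debounce window are all assumptions. The idea is to ignore any reading that has not been stable for the debounce window, accumulate every key seen during a press, and emit the chord once all keys have been released.

```cpp
#include <cassert>
#include <cstdint>

class ChordCapture {
public:
    explicit ChordCapture(uint32_t debounceMs) : debounceMs_(debounceMs) {}

    // Feed one raw sample (bit set = key down) with its timestamp in ms.
    // Returns the completed chord once all keys are released, otherwise 0.
    uint16_t update(uint16_t rawMask, uint32_t nowMs) {
        if (rawMask != lastRaw_) {  // input changed: restart the stability timer
            lastRaw_ = rawMask;
            changedAtMs_ = nowMs;
            return 0;
        }
        if (nowMs - changedAtMs_ < debounceMs_) return 0;  // may still be bouncing
        if (rawMask != 0) {  // stable press: fold these keys into the chord
            chord_ |= rawMask;
            return 0;
        }
        uint16_t done = chord_;  // stable release: report the chord once
        chord_ = 0;
        return done;
    }

private:
    uint32_t debounceMs_;
    uint32_t changedAtMs_ = 0;
    uint16_t lastRaw_ = 0;
    uint16_t chord_ = 0;
};
```

Emitting on release rather than on press means fingers do not have to land at exactly the same instant, at the cost of a little latency per character. Whether that trade-off suits fast typing is an open question.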

Maybe I'll do an instructable in the future, after the code is better ironed out.

Looking through my logs here may seem like a hodgepodge of different projects. Case in point as follows. Here's what I have been working on.

It's a PS/2 keyboard converted to use Bluefruit that I plan to add key layers to. This is an exercise that is going to help me understand the scope of an important feature that I would like to be part of the Neotype system.

Be assured that these are means to the same end. The reasoning is simple: it's easy to hit a roadblock. Sometimes focusing on a hard problem can obstruct the view of the solution. So I like to focus on a different one, building up the supporting "muscles", and go back to the other issues with new eyes.

The Mini Maker Faire in Dover went great! One thing that I learned with an earlier version of the project at last year's faire is that people want to type right away with a keyer. That may seem fairly obvious, especially with the goal of "user simplicity" in the project, but at the time experimentation was being done with a "learned layout". More on that in a bit.

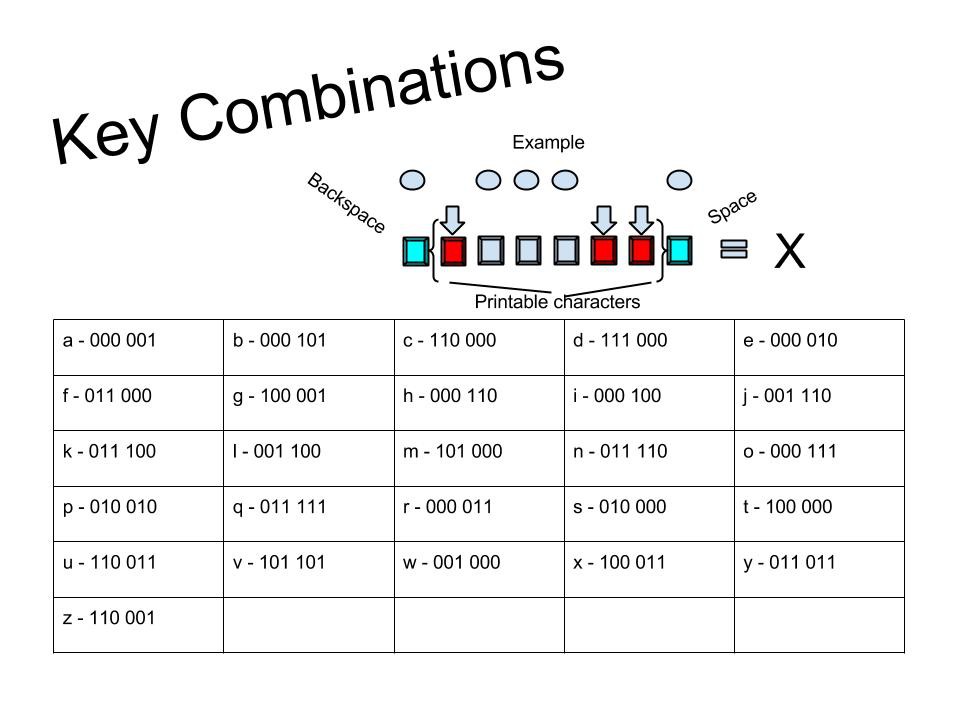

This year I showed off the haptics proto, which has a pre-defined layout based on patterns. The biggest help was a simple on-hand chart I drew up in Google Draw.

Based on this chart and a quick explanation, a few young kids were able to type out words and even short sentences within 5 minutes of playing with the keyer. Ironically, adults seemed to be more satisfied with typing out a bunch of gibberish or just looking at it. These are just some observations on expectations, but they indicate a real challenge with the product beyond the technical. The optimistically minded can indeed show potential for proficiency with such a device, however the more skeptical among us are slow to pick up the concepts of what is going on. The skeptical seem to fall into the same demographic that the project is trying to help. This poses a significant psychological barrier that gets in the way of what we want to do.

Chart explanation: 0 denotes an unpressed key and 1 denotes a pressed key. The trick is that the six 1s and 0s refer to the middle six "raised keys", the ones with the vibration motors. The outer keys are for non-printables. All the way to the right is "space" and all the way to the left is "backspace".
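If you want to turn chart entries into something firmware can compare against, a small helper along these lines would do it. This is only a sketch: `patternToMask` is a hypothetical name, and mapping the leftmost character to the highest of the six bits is an assumed ordering, not necessarily the one the device uses.

```cpp
#include <cassert>
#include <string>

// Convert a six-character chart pattern such as "010010" into a bitmask.
// The leftmost character maps to the highest of the six bits (an assumed
// ordering). Returns -1 if the pattern is not exactly six 0s and 1s.
int patternToMask(const std::string& pattern) {
    if (pattern.size() != 6) return -1;
    int mask = 0;
    for (char c : pattern) {
        if (c != '0' && c != '1') return -1;
        mask = (mask << 1) | (c - '0');  // shift in one key state per character
    }
    return mask;
}
```

The two outer keys (backspace and space) are left out on purpose, since the chart patterns only describe the middle six keys.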

Failure with a working idea: The concept of a "learned layout" harks back to taking advantage of the way we learn. The original prototype for what is now called Neotype assigned letters to the layout as a user experimented with the device. This was done in order to curate the experience of forming the associations, all while keeping the learned suggestions relevant by referring to letter and word commonality. Notice in the chart that more complex patterns are assigned to uncommon letters. That effect happens naturally in the learned method. It is designed into the current layout. It is an idea that does its job even in the most simple form. However, solving this particular problem is only a small subset of the challenge. Giving users the confidence that "you can" is very important and is the difference between persisting with and dropping the device.

On Saturday the 23rd I will be showing off a couple of the Neotype family of devices at our local Maker Faire in Dover, NH. I will be doing so alongside a great group of people from Makers in Manchester. https://www.facebook.com/groups/makersmht/ We are going to put on a collaborative table with neat projects.
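The "simple patterns for common letters" idea can be sketched in a few lines. This is an illustration of the principle only, not how the current layout was actually produced: it treats the number of keys in a chord as a stand-in for complexity (which ignores ergonomics), and the letter ordering passed in is whatever frequency ranking you choose to trust. The function names are hypothetical.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <map>
#include <string>
#include <vector>

// Count pressed keys in a chord; fewer keys is treated as "simpler".
int keysPressed(uint8_t chord) {
    int count = 0;
    for (; chord != 0; chord >>= 1) count += chord & 1;
    return count;
}

// Pair letters (most common first) with six-key chords (simplest first).
std::map<char, uint8_t> assignByCommonality(const std::string& lettersByFrequency) {
    std::vector<uint8_t> chords;
    for (uint8_t chord = 1; chord < 64; ++chord) chords.push_back(chord);
    // stable_sort keeps numeric order among chords with the same key count.
    std::stable_sort(chords.begin(), chords.end(), [](uint8_t a, uint8_t b) {
        return keysPressed(a) < keysPressed(b);
    });
    std::map<char, uint8_t> layout;
    for (std::size_t i = 0; i < lettersByFrequency.size() && i < chords.size(); ++i) {
        layout[lettersByFrequency[i]] = chords[i];
    }
    return layout;
}
```

Calling `assignByCommonality("etaoinsh")` gives the first six letters single-key chords and pushes "s" and "h" onto two-key chords. A real layout would also need to weigh which finger combinations are comfortable.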

More information about the Faire can be found here- http://makerfairedover.com/
