Part 1 of the VIPER video shows a blind user using the prototype to navigate in his home:

Part 2 of the VIPER video shows opening the enclosure to reveal the internal components of the prototype:

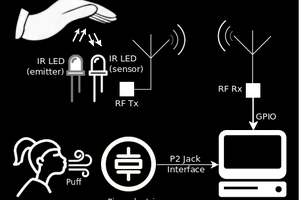

The prototype shown above uses an Atmel ATtiny13V 8-bit microcontroller to drive four n-channel MOSFETs, each of which pulses a piezoelectric transducer. The transducers are piezoelectric horn tweeters obtained from All Electronics, each with a 150-ohm resistor connected across it. The prototype is powered by ten AA batteries, nominally providing 15 volts DC, switched by the mini toggle switch on the enclosure. The 15 volts DC is applied directly to the tweeters, with the MOSFETs placed between the tweeters and ground, so that a tweeter is energized whenever the microcontroller applies a short positive pulse to the gate of its MOSFET. The 15 volts DC also feeds a 78L05 regulator, which provides 5 volts DC to power the microcontroller.

The microcontroller pulses the transducers one at a time in sequence, for example in a left, right, down, forward pattern. The directivity of each transducer gives it its own sector of coverage. These sectors need not be mutually exclusive; overlapping sectors can instead provide diverse echolocation cues to the ears and brain, from which a more complete and detailed spatial image may be formed.
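As an illustration of the sequencing described above, the following is a minimal host-side sketch in C, not the prototype's firmware. It assumes one bit per MOSFET gate; the names `GATE_LEFT` through `GATE_FORWARD`, `kSequence`, and `gate_mask_for_step` are hypothetical, and the bit positions are not the prototype's actual ATtiny13V pin assignments.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical bit assignments: one bit per MOSFET gate.
   The prototype's real pin mapping is not documented here. */
enum {
    GATE_LEFT    = 1u << 0,
    GATE_RIGHT   = 1u << 1,
    GATE_DOWN    = 1u << 2,
    GATE_FORWARD = 1u << 3
};

/* Firing order taken from the example pattern: left, right, down, forward. */
static const uint8_t kSequence[] = {
    GATE_LEFT, GATE_RIGHT, GATE_DOWN, GATE_FORWARD
};

#define SEQUENCE_LEN (sizeof kSequence / sizeof kSequence[0])

/* Returns the gate mask to drive for a given step of the sequence.
   Each mask has exactly one bit set, so only one transducer fires at a time. */
uint8_t gate_mask_for_step(unsigned step)
{
    assert(step < SEQUENCE_LEN);
    return kSequence[step];
}
```

Keeping the order in a table makes it easy to try other patterns (for example, forward first) without touching the code that drives the gates.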

Each sequence is followed by a pause. Unlike other assistive technology that provides a continuous stimulus, the VIPER prototype is essentially time-division multiplexed with the ambient audio cues from the user's surroundings. The user receives all of those diverse cues undisturbed, except during the few milliseconds in which the pulses and their echoes are heard. Thus, the VIPER prototype can provide new information to a user without appreciably disturbing the user's ability to receive information from existing sources.
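The time-division idea can be made concrete with a small C sketch of one repeating frame: a fixed slot for each transducer's pulse and echoes, followed by the pause. The `Frame` struct, the function names, and the example durations used below are all hypothetical; the prototype's actual pulse and pause timings are not stated.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical frame timing, in microseconds. */
typedef struct {
    uint32_t slot_us;   /* time allotted to one pulse plus its echoes */
    uint32_t n_slots;   /* transducers pulsed per sequence (four here) */
    uint32_t pause_us;  /* quiet interval after each sequence */
} Frame;

uint32_t frame_length_us(const Frame *f)
{
    return f->slot_us * f->n_slots + f->pause_us;
}

/* Returns the index of the transducer slot active at time t_us within the
   repeating frame, or -1 if t_us falls in the pause (ambient sound only). */
int active_slot(const Frame *f, uint32_t t_us)
{
    assert(f->slot_us > 0 && frame_length_us(f) > 0);
    uint32_t t = t_us % frame_length_us(f);
    if (t >= f->slot_us * f->n_slots)
        return -1;
    return (int)(t / f->slot_us);
}

/* Fraction of each frame left undisturbed for ambient cues. */
double quiet_fraction(const Frame *f)
{
    return (double)f->pause_us / (double)frame_length_us(f);
}
```

With illustrative values of 10 ms per slot and a 460 ms pause, each frame lasts 500 ms and `quiet_fraction` returns 0.92, meaning the user would hear only ambient sound for 92% of the time.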
