Gameplay with a Cherishable starts with picking it up. The way a player holds and interacts with a Cherishable has a huge impact on Cattitude. Gentle holding and rocking is soothing and helps calm a wily cat, while rough, shaking movements can turn a gentle cat pissy in no time flat. But how will the device be able to "sense" these different interactions?

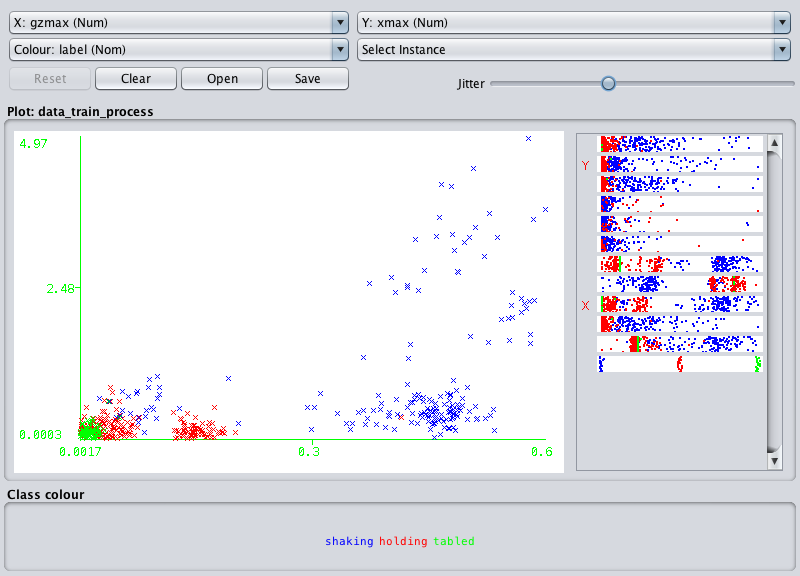

We resurrected an old tool that lets us record data from a motion sensor, tag it, and do some machine learning magic to build an algorithm that detects different motions in real time. It's based on an RFDuino and has a BNO055 motion sensor from Bosch. A companion app for a mobile device helps tag the data with specific motions. In this case, we started small and focused only on holding, shaking, and resting on a table. Our first attempts have been met with failure, and we suspect our feature selection and creation needs a bit of work.

See how the green and red are all mashed up together, and some blue is leaking in as well? We need better features that will spread the classes out. Gotta keep 'em separated.
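
To make "better features" a bit more concrete, here is a minimal sketch of the kind of thing we could try. It is not the code from our tool. It assumes each tagged recording is cut into short windows of accelerometer samples given as `(ax, ay, az)` tuples, and the names `window_features` and `fisher_score` are made up for illustration. The first function turns a window into a few candidate features; the second scores how well a single feature separates two tagged motions (bigger is better), using a Fisher-style ratio of the gap between class means to the spread within the classes.

```python
import math
from statistics import mean, pstdev


def window_features(samples):
    """Compute candidate features for one window of (ax, ay, az) samples.

    Hypothetical helper: the input layout is an assumption, not the
    format our recording tool actually uses.
    """
    # Magnitude of acceleration, so the feature ignores device orientation.
    mags = [math.sqrt(ax * ax + ay * ay + az * az) for ax, ay, az in samples]
    # Sample-to-sample change in magnitude; large when the device is shaken.
    jerk = [abs(b - a) for a, b in zip(mags, mags[1:])]
    return {
        "mag_mean": mean(mags),
        "mag_std": pstdev(mags),
        "jerk_mean": mean(jerk) if jerk else 0.0,
    }


def fisher_score(values_a, values_b):
    """Score how well one feature separates two classes (larger is better).

    Squared distance between the class means divided by the sum of the
    class variances.
    """
    var_sum = pstdev(values_a) ** 2 + pstdev(values_b) ** 2
    gap = mean(values_a) - mean(values_b)
    if var_sum == 0:
        # No spread inside either class: perfectly separated or identical.
        return math.inf if gap != 0 else 0.0
    return gap * gap / var_sum
```

To use it, compute `window_features` for every tagged window, collect one feature (say `mag_std`) per motion, and compare `fisher_score` across features. Features with low scores between two motions are the ones leaving those classes mashed together.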
