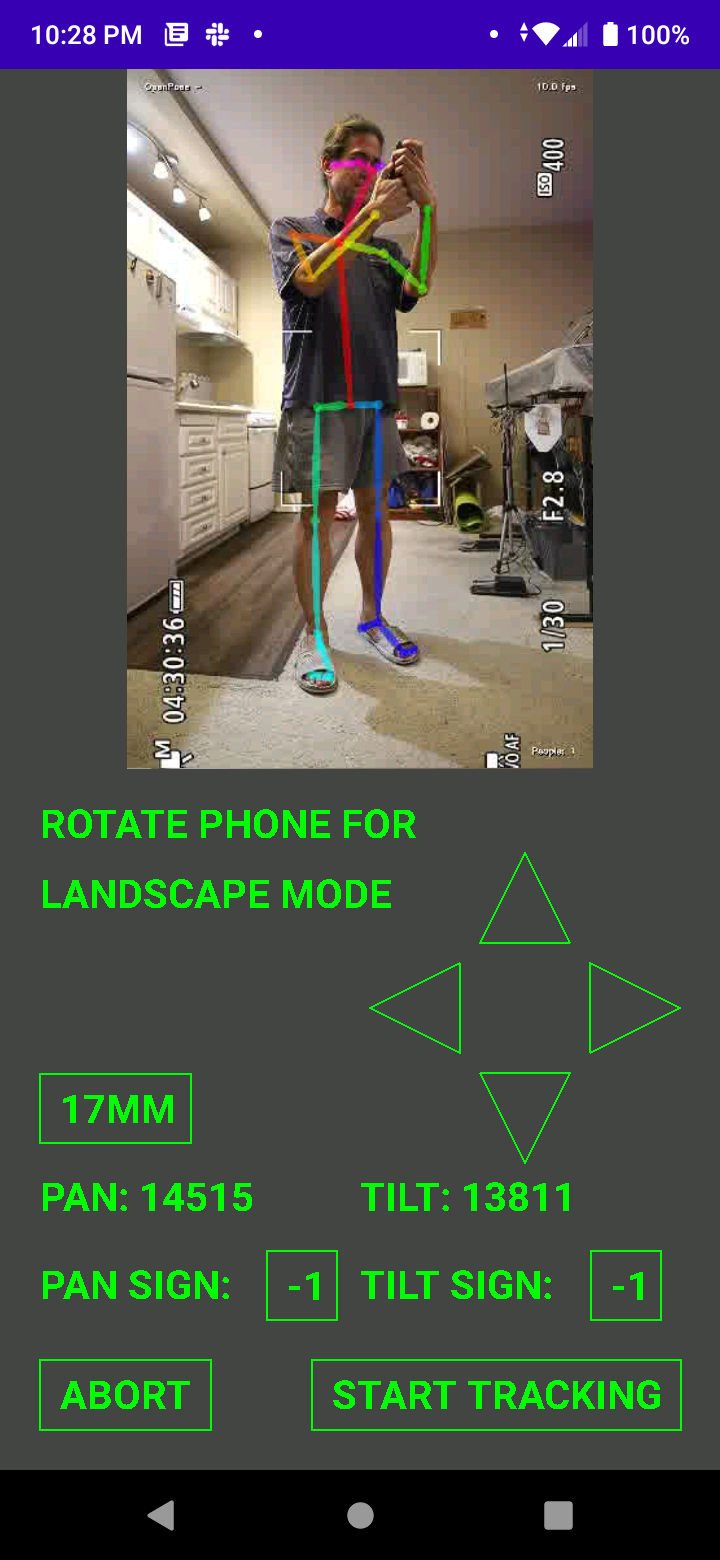

The phone GUI finally got to the point where it was usable. It still crashed, took forever to connect & had a few persistent settings bugs. It was decided that the size of the phone required full screen video in both portrait & landscape mode, so the easiest way to select the tracking mode was by phone orientation.

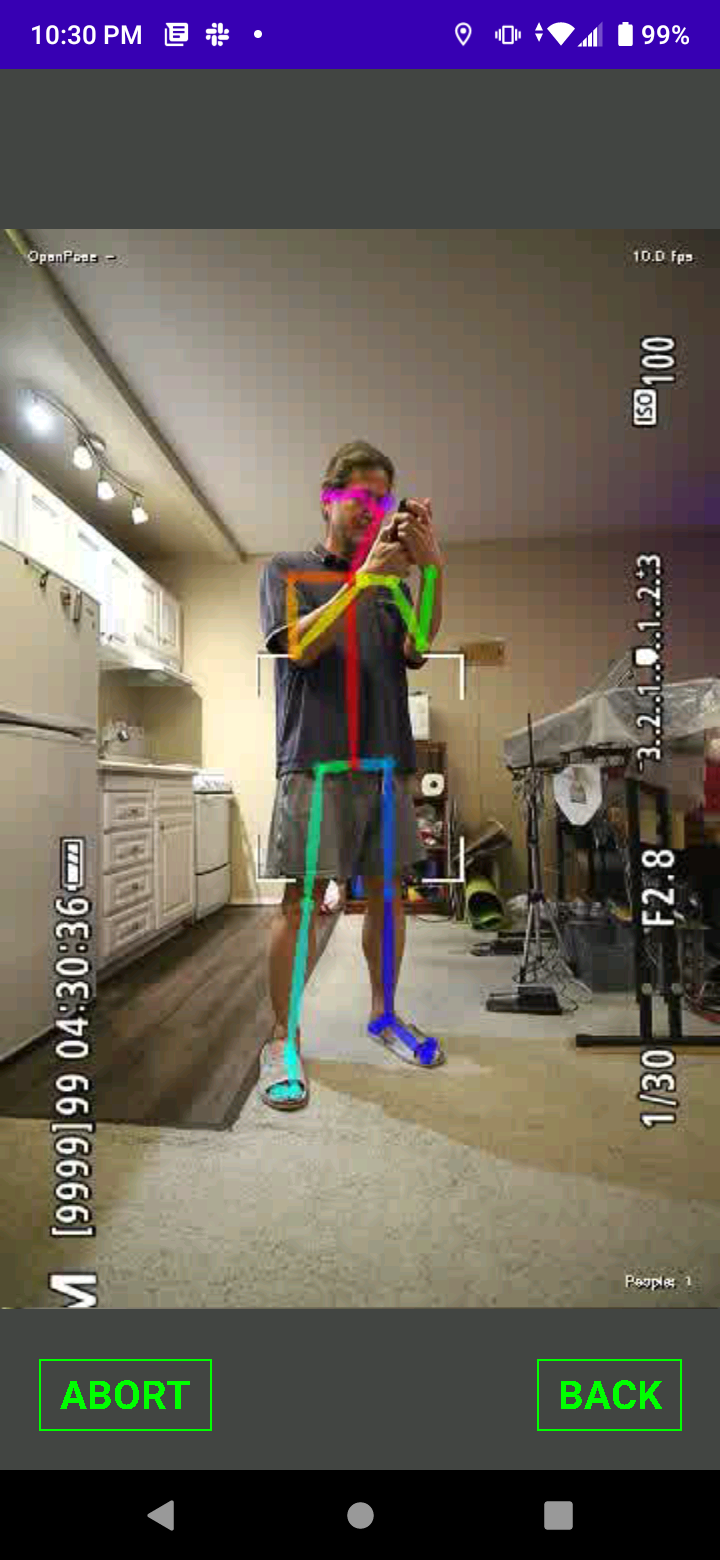

Rotating the phone to portrait mode would make the robot track in portrait mode.

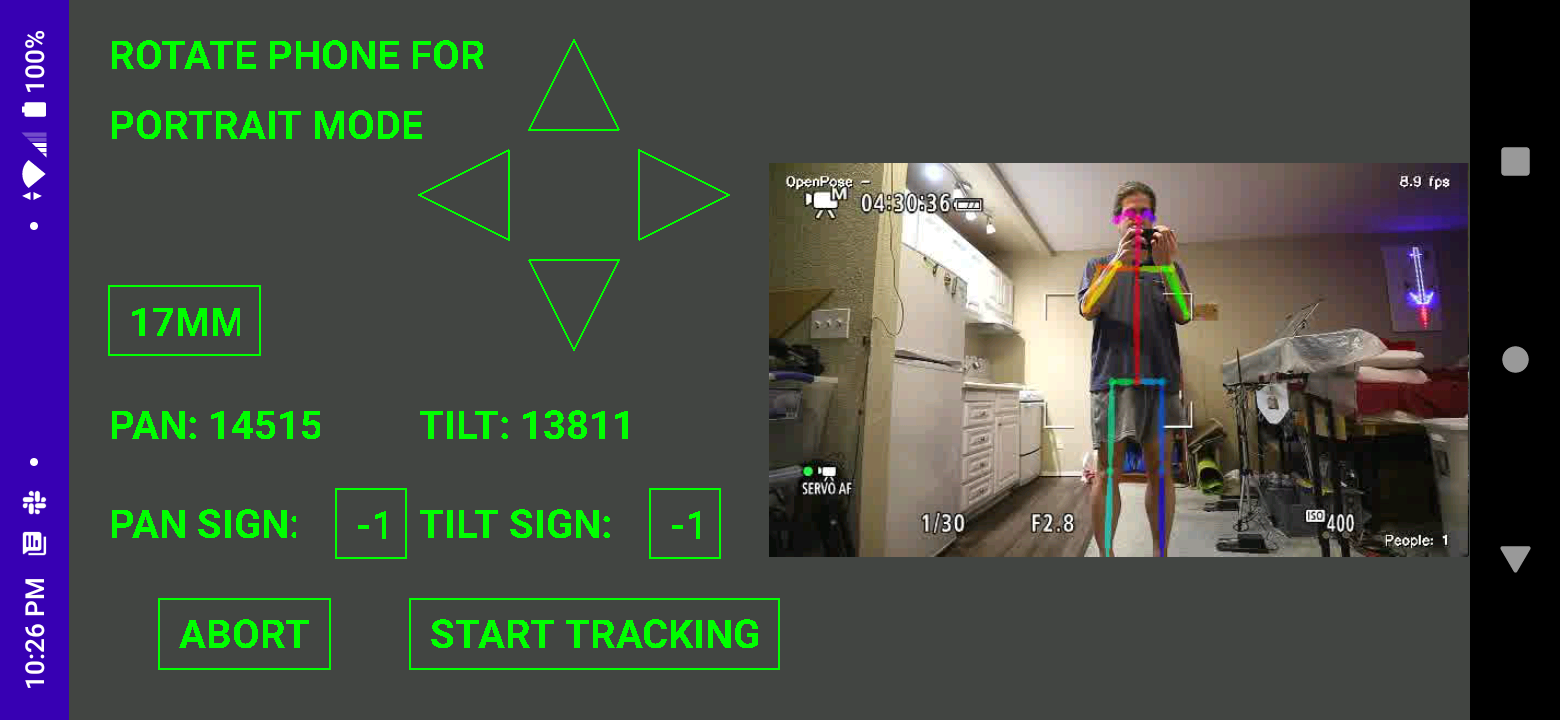

Rotating the phone to landscape mode would make the robot track in landscape mode.
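The orientation rule above can be sketched in plain Java. This is only an illustration, not the app's actual code: the class name, the enum & the 45 degree thresholds are all assumptions. It assumes the angle arrives the way Android's `OrientationEventListener` reports it, 0 to 359 degrees clockwise with -1 meaning unknown, such as when the phone is lying flat.

```java
// Hypothetical sketch: map a device rotation angle to a tracking mode.
final class OrientationTracking {
    enum Mode { PORTRAIT, LANDSCAPE }

    // degrees: clockwise device rotation, 0 = upright portrait.
    // A negative angle means "unknown", so the current mode is kept.
    static Mode modeFor(int degrees, Mode current) {
        if (degrees < 0) return current;
        int d = degrees % 360;
        // Within 45 degrees of upright or upside down counts as portrait
        // (assumed thresholds); everything else counts as landscape.
        boolean nearPortrait = d < 45 || d >= 315 || (d >= 135 && d < 225);
        return nearPortrait ? Mode.PORTRAIT : Mode.LANDSCAPE;
    }

    public static void main(String[] args) {
        Mode mode = Mode.PORTRAIT;
        for (int deg : new int[] {0, 80, 100, -1, 350}) {
            mode = modeFor(deg, mode);
            System.out.println(deg + " -> " + mode);
        }
    }
}
```

Keeping the last mode on an unknown angle avoids flipping the robot's tracking mode when the phone is set down on a table.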

Then, we have some configuration widgets & pathways to abort the motors. It was decided to not use corporate widgets so the widgets could overlap video. Corporate rotation on modern phones is junk, so that too is custom. All those professional white board programmers can't figure out what to do with the useless notch space in landscape mode.

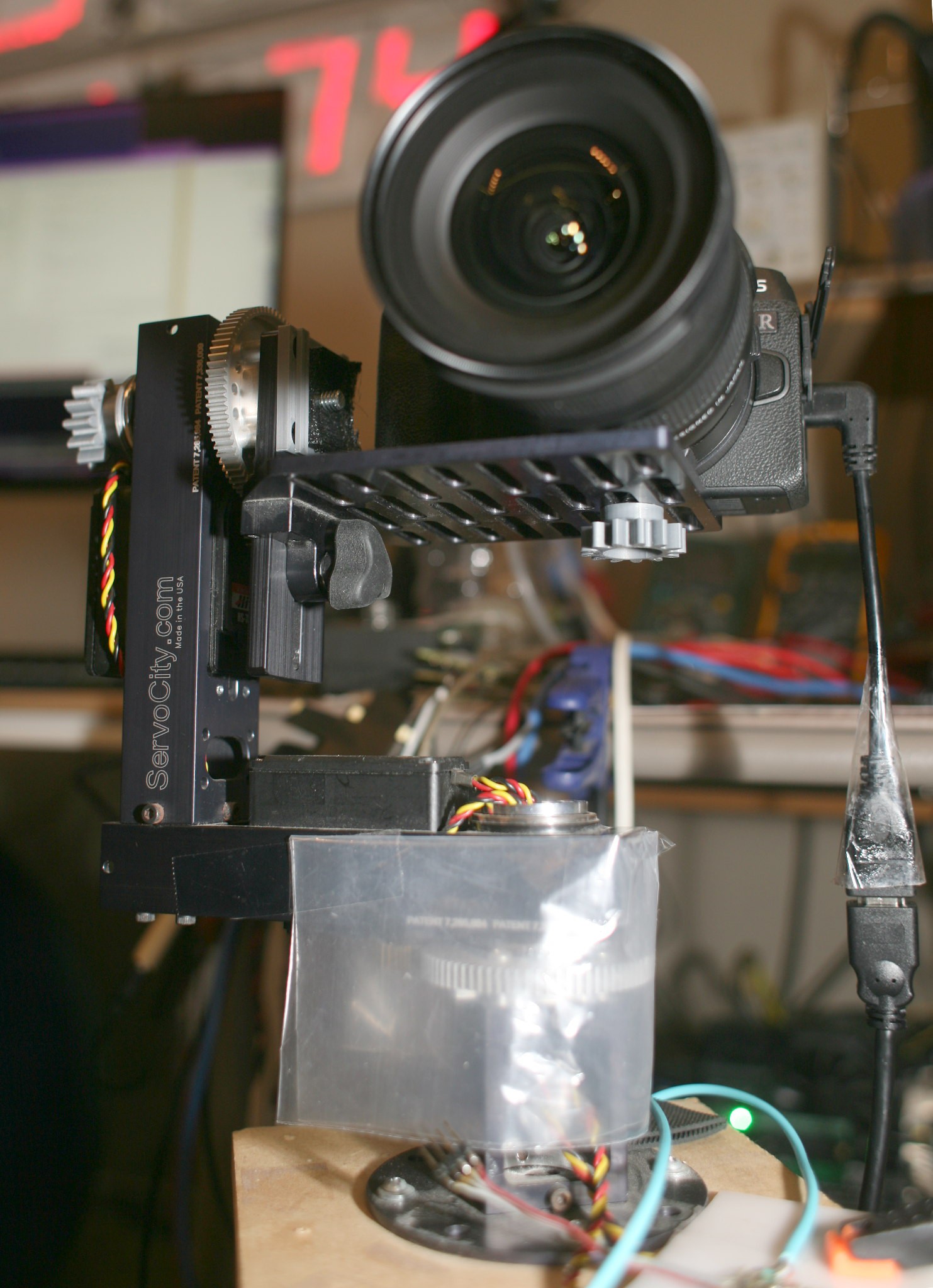

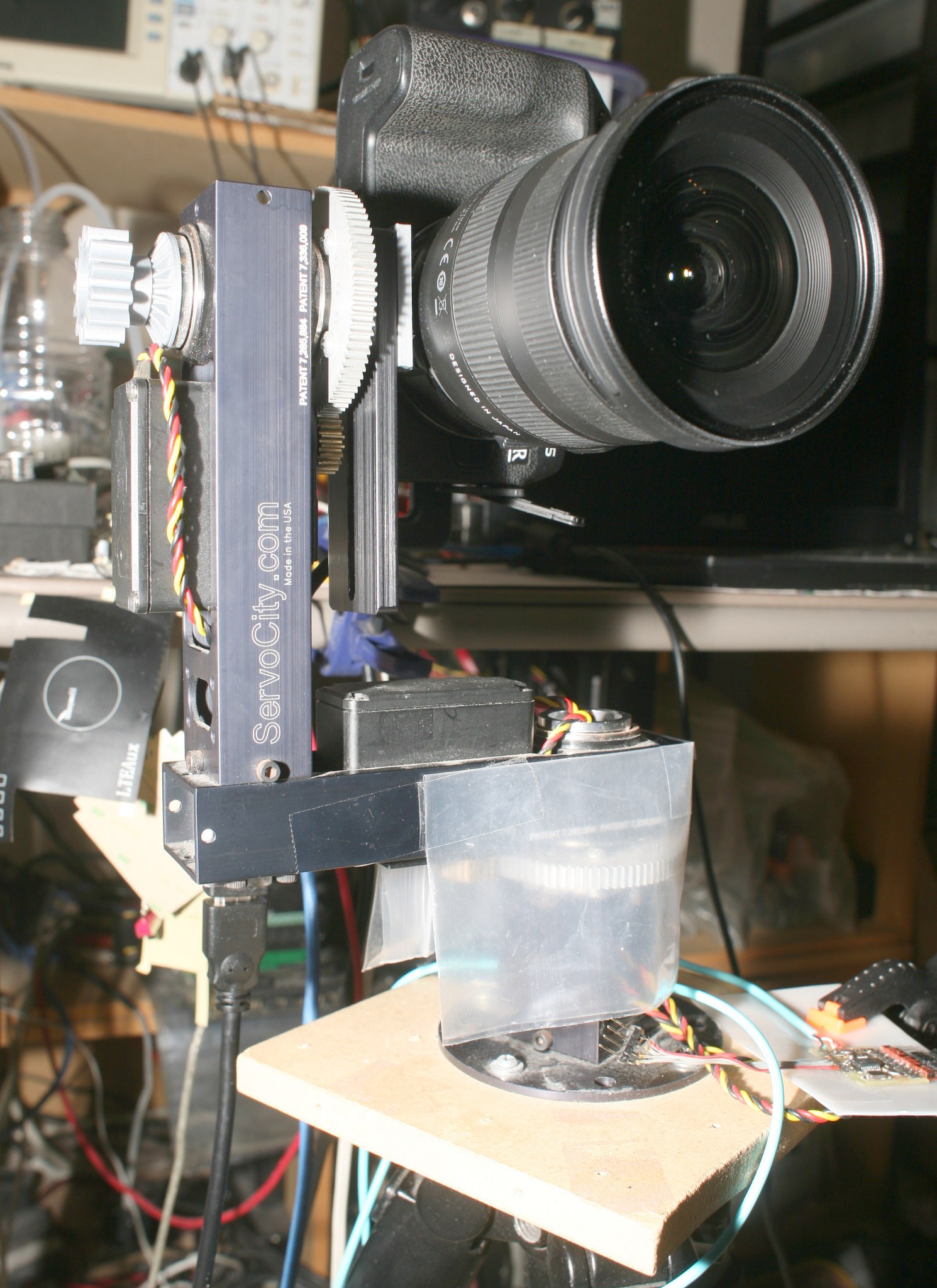

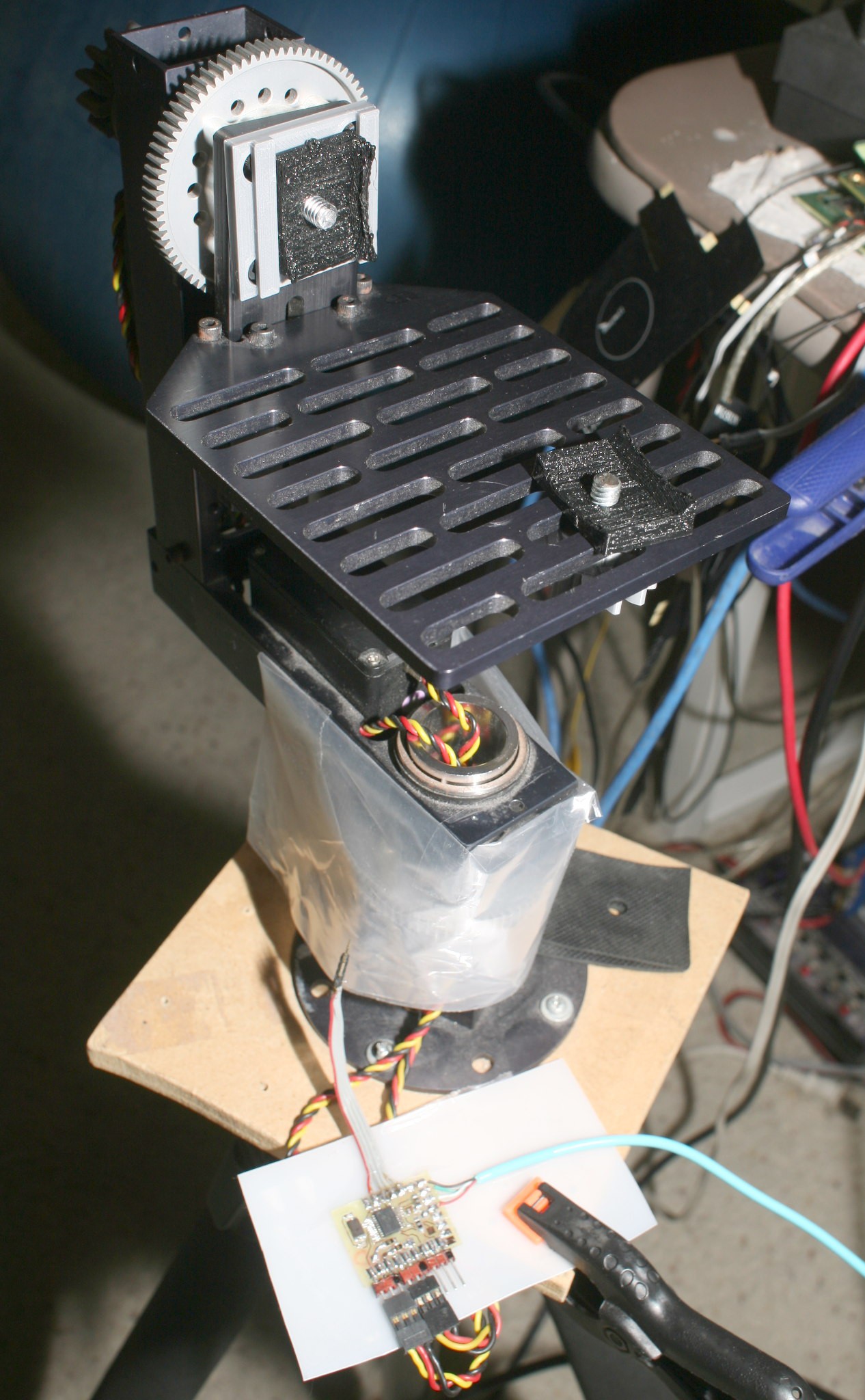

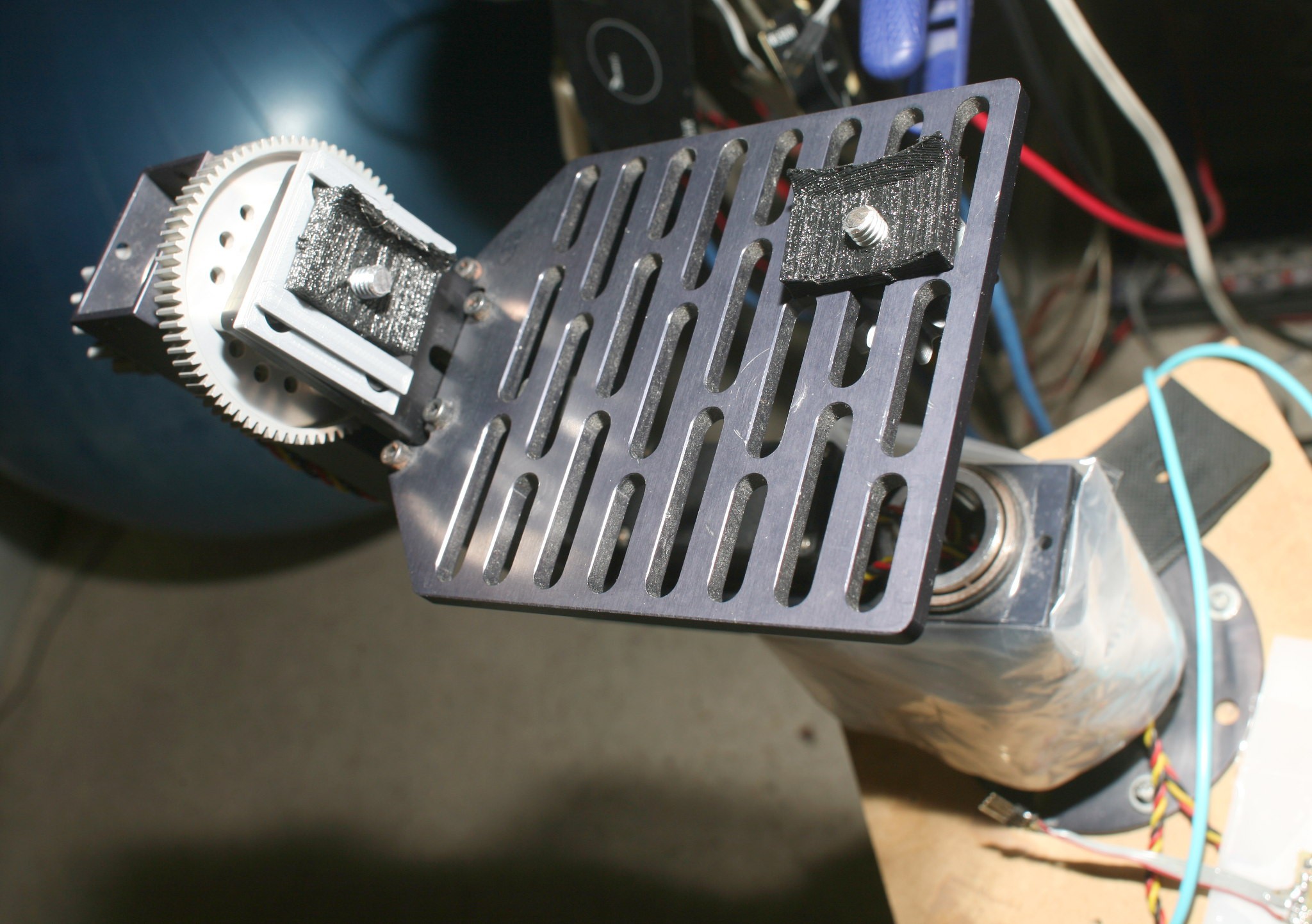

The mechanics got some upgrades to speed up the process of switching between portrait & landscape mode.

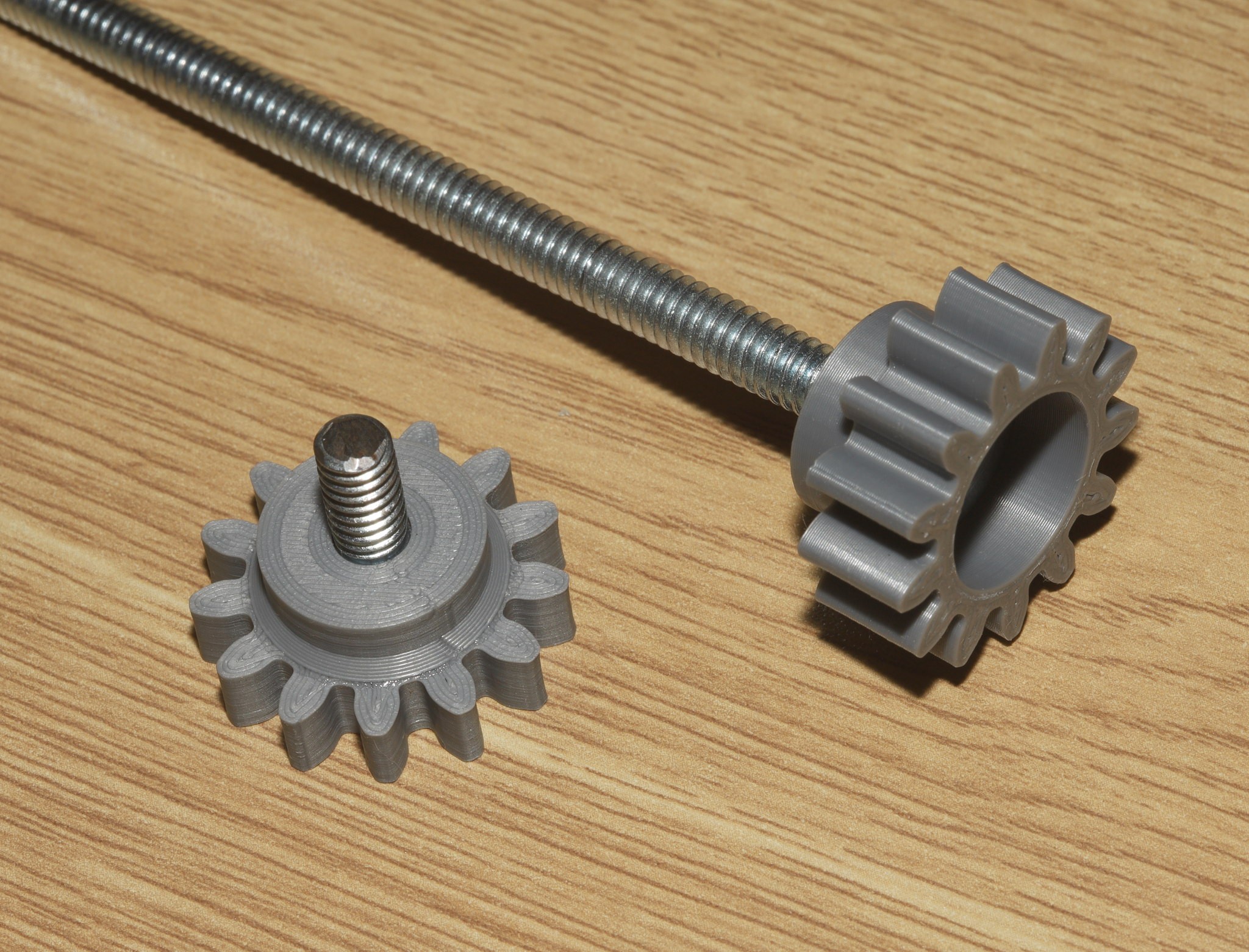

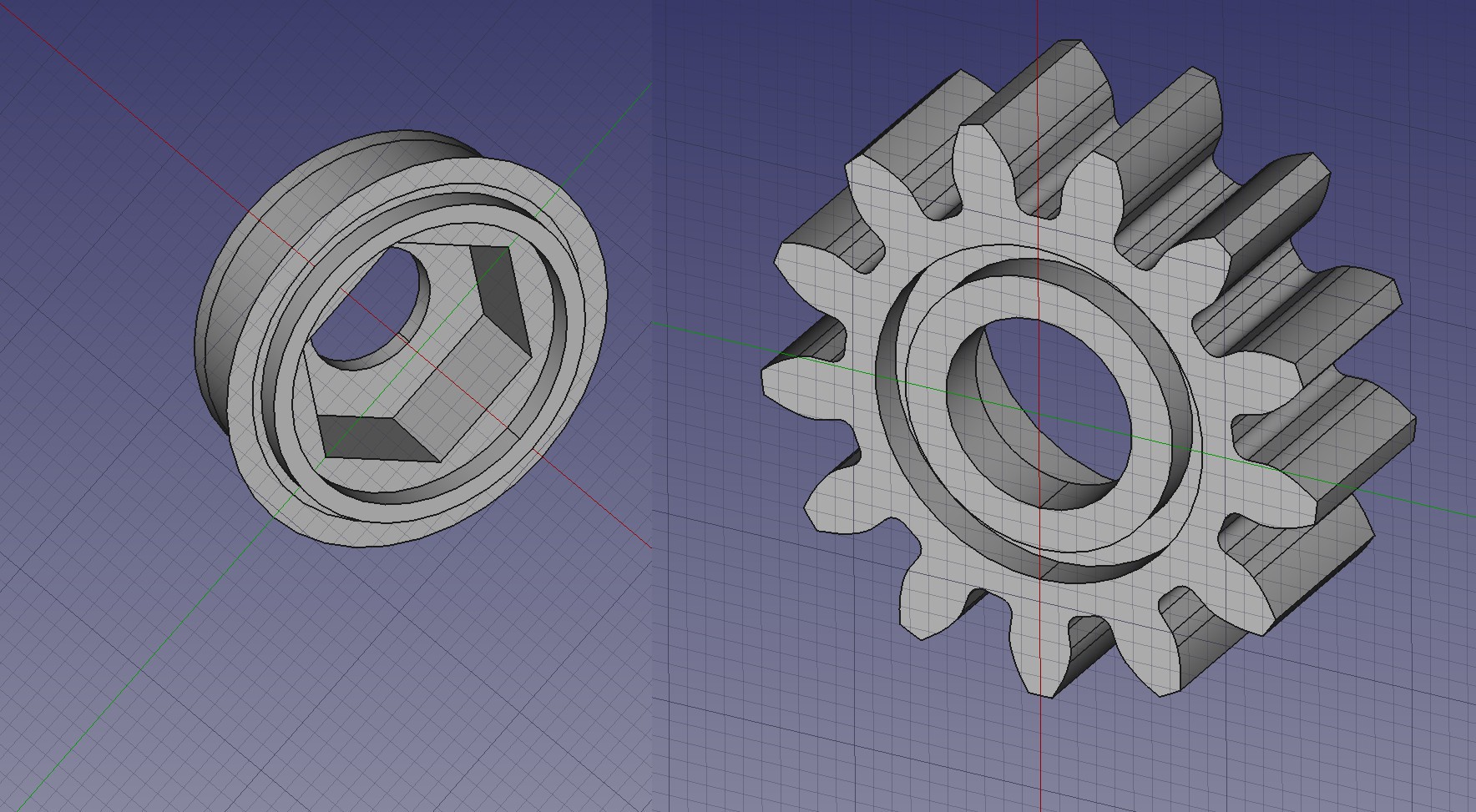

The key design was a 2 part knob for a common bolt.

Some new lens adapters made it a lot more compact, but the lens adapters & bolts would all have to be replaced to attach a fatter lens.

The original robot required relocating the landscape plate in a complicated process involving a socket wrench. The landscape plate can now be removed to switch to portrait mode with no tools.

The quest for lower latency led to using MJPEG inside ffmpeg. It was the only codec that proved to be realtime. The only remaining latency is buffering in video4linux.

On the server, the lowest latency command was:

ffmpeg -y -f rawvideo -pix_fmt bgr24 -r 10 -s:v 1920x1080 -i - -f mjpeg -pix_fmt yuv420p -bufsize 0 -b:v 5M -flush_packets 1 -an - > /tmp/ffmpeg_fifo

On the client, the lowest latency command was:

FFmpegKit.executeAsync("-probesize 32 -vcodec mjpeg -y -i " + stdinPath + " -vcodec rawvideo -f rawvideo -flush_packets 1 -pix_fmt rgb24 " + stdoutPath,

The reads from /tmp/ffmpeg_fifo & the network socket needed to be as small as possible. Other than that, the network & the pipes added no latency. The total bytes sent & received were identical. In the HEVC version, the latency was all in ffmpeg's HEVC decoder. It always lagged 48 frames regardless of the B frames, the GOP size, or the buffer sizes. Even a pure I frame stream still lagged 48 frames. The HEVC decoder is obviously low effort. A college age lion would have gone into the decoder to optimize the buffering.
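As a rough illustration of the small reads on the fifo & the socket, a relay loop might look like the sketch below. The project's real read code isn't shown here, so the class name, the method & the chunk size are all hypothetical. The idea is just to forward each chunk as soon as `read()` returns & flush it immediately, rather than waiting to fill a large buffer.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;

// Hypothetical sketch: copy a stream in small chunks, flushing after each one.
final class SmallReadRelay {
    // Copies `in` to `out` until end of stream. Returns the total bytes copied.
    static long relay(InputStream in, OutputStream out, int chunkSize) throws IOException {
        if (chunkSize <= 0) throw new IllegalArgumentException("chunkSize must be positive");
        byte[] buf = new byte[chunkSize];
        long total = 0;
        int n;
        while ((n = in.read(buf)) != -1) {
            out.write(buf, 0, n);   // forward exactly what was read
            out.flush();            // don't let it sit in an output buffer
            total += n;
        }
        return total;
    }

    public static void main(String[] args) throws IOException {
        // Stand-ins for the fifo & the socket so the sketch runs anywhere.
        byte[] fake = new byte[10000];
        ByteArrayOutputStream sink = new ByteArrayOutputStream();
        long copied = relay(new ByteArrayInputStream(fake), sink, 1024);
        System.out.println(copied + " bytes in, " + sink.size() + " bytes out");
    }
}
```

In a real setup the input would be a stream opened on /tmp/ffmpeg_fifo & the output would be the socket's output stream. Comparing the returned total on both ends is one way to confirm the sender & receiver byte counts match.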

The trick with JPEG was limiting the frame rate to 10fps & limiting the frame size to 640x360. That burned 3 megabits/sec for an acceptable stream. The HEVC version would have also taken some heroic JNI to get the phone above 640x360. Concessions were made for the lack of a software budget & the limited need for the tracking camera.
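For a sense of scale, the numbers above work out as follows. This is a back of the envelope sketch with hypothetical names, assuming the 3 megabits is per second, a megabit is 1,000,000 bits & the decoded frames are 3 bytes per pixel as in the rgb24 output of the client command.

```java
// Hypothetical sketch: per-frame budget for the 640x360, 10fps JPEG stream.
final class StreamBudget {
    // Average compressed bytes available per frame at a given bitrate & frame rate.
    static long bytesPerFrame(long bitsPerSecond, int fps) {
        return bitsPerSecond / 8 / fps;
    }

    // Size of one uncompressed frame at 3 bytes per pixel (rgb24 or bgr24).
    static long rawFrameBytes(int width, int height) {
        return (long) width * height * 3;
    }

    public static void main(String[] args) {
        long jpeg = bytesPerFrame(3_000_000L, 10);
        long raw = rawFrameBytes(640, 360);
        System.out.println("JPEG bytes per frame: " + jpeg);
        System.out.println("raw bytes per frame: " + raw);
        System.out.println("compression ratio: " + (double) raw / jpeg);
    }
}
```

Under those assumptions each JPEG averages 37,500 bytes against 691,200 bytes for a raw frame, or roughly 18:1 compression. The raw figure is also how many bytes a client would need to read per decoded frame at that size.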

lion mclionhead
