Hi everyone,

So last week we got object detection working directly from the image sensor (over MIPI) on the Myriad X, running MobileNet-SSD to be specific.

So how fast is it?

- 25FPS (40ms per frame)... when connected to a powerful desktop

According to 'The Big Benchmarking Roundup' (here) that's actually quite good.

But, this is connected to a big powerful computer... how fast is it when used with a Pi?

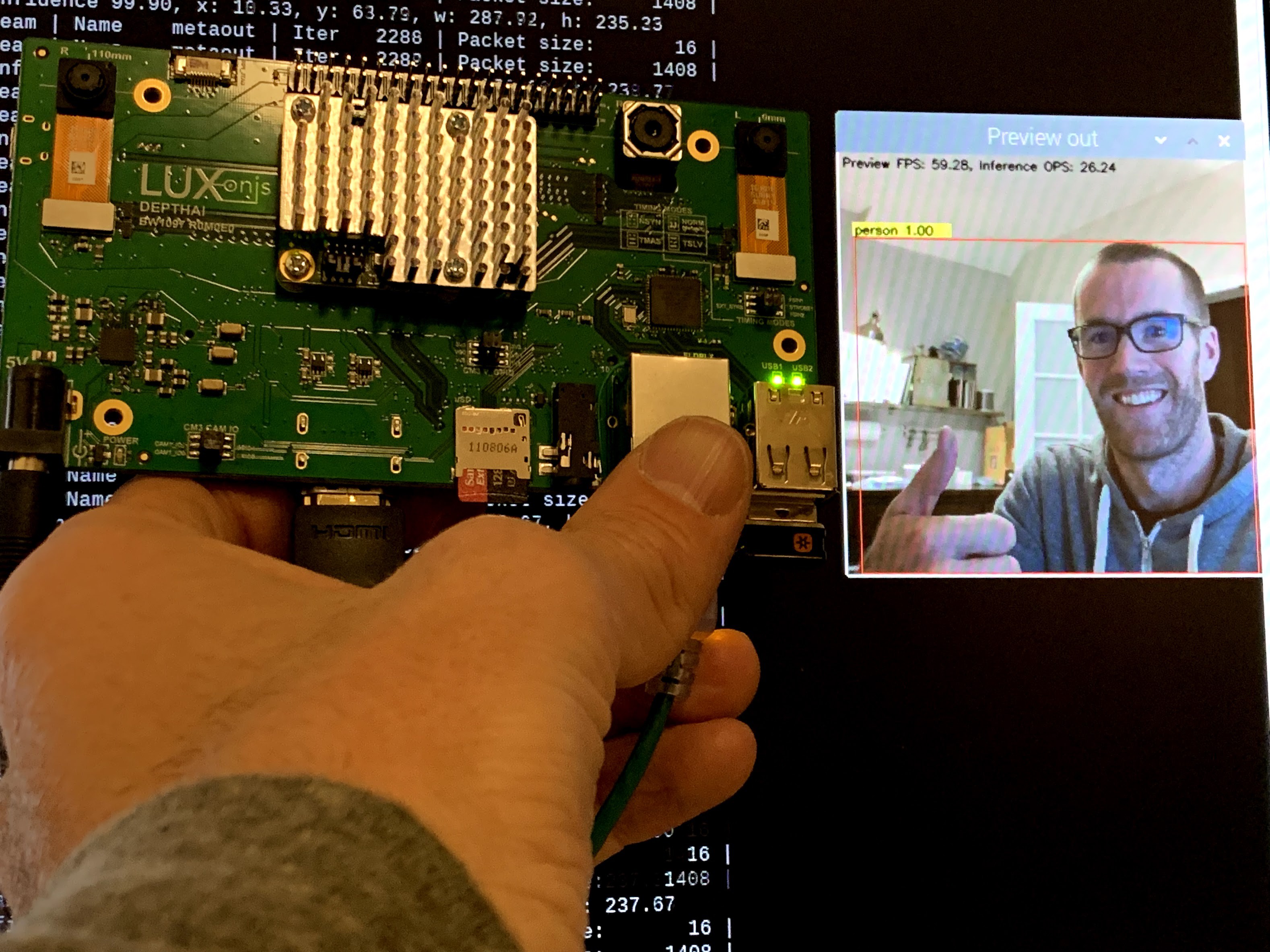

To find out, we ran it on our prototype of DepthAI for Raspberry Pi Compute Module:

And how did it fare?

- 25FPS (40ms per frame)... when connected to a Raspberry Pi Compute Module 3B+

Woohoo!

Unlike the NCS2, which sees a drastic drop in FPS when used with the Pi, DepthAI doesn't see any at all.

Why is this?

- The video path is flowing directly from the image sensor into the Myriad X, which then runs neural inference on the image data, and exports the video and neural network results to the Pi.

- So this means the Raspberry Pi isn't having to deal with the video stream; it's not having to resize video, to shuffle it from one interface to another, etc. - all of these tasks are done on the Myriad X.

In this way the Myriad X doesn't technically even need to export video to the Pi - it could simply output detected objects and positions over a serial connection (UART) for example.
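As a rough illustration of that last point, here is a minimal sketch of what the Pi side could look like if the Myriad X sent only detection results over a serial link. The line format (`label,confidence,xmin,ymin,xmax,ymax` with normalized box coordinates), the `Detection` class, and the `parse_detection_line` / `parse_stream` functions are all hypothetical, made up for this example; they are not an actual DepthAI protocol or API. The bytes are hard-coded so the sketch runs without hardware; on a real Pi they would be read from a serial port.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Detection:
    """One detected object, using a made-up (hypothetical) field layout."""
    label: str
    confidence: float
    xmin: float
    ymin: float
    xmax: float
    ymax: float

    def center(self) -> Tuple[float, float]:
        # Center of the bounding box in normalized image coordinates.
        return ((self.xmin + self.xmax) / 2, (self.ymin + self.ymax) / 2)


def parse_detection_line(line: bytes) -> Optional[Detection]:
    """Parse one line of the hypothetical format; return None if malformed."""
    try:
        fields = line.decode("ascii").strip().split(",")
        if len(fields) != 6:
            return None
        conf, xmin, ymin, xmax, ymax = (float(f) for f in fields[1:])
    except (UnicodeDecodeError, ValueError):
        return None
    return Detection(fields[0], conf, xmin, ymin, xmax, ymax)


def parse_stream(data: bytes) -> List[Detection]:
    """Split a chunk of received bytes into lines and keep the valid ones."""
    detections = []
    for line in data.splitlines():
        det = parse_detection_line(line)
        if det is not None:
            detections.append(det)
    return detections


# Stand-in for bytes received over UART (assumed sample data, not real output).
SAMPLE = (
    b"person,0.92,0.10,0.20,0.50,0.90\n"
    b"garbage\n"
    b"bottle,0.61,0.60,0.40,0.70,0.80\n"
)

if __name__ == "__main__":
    for det in parse_stream(SAMPLE):
        print(det.label, det.confidence, det.center())
```

Each message in this sketch is a few dozen bytes, so the Pi only has to parse short text lines instead of handling video frames.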

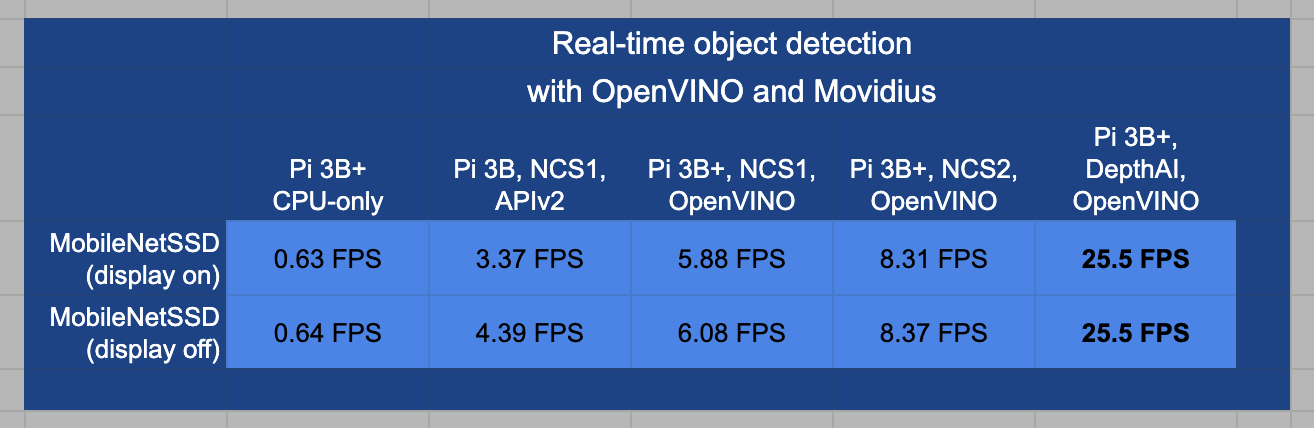

Let's compare this to the results of the Myriad used with the Raspberry Pi 3B+ in its NCS form factor, thanks to the data and super-awesome/detailed post courtesy of PyImageSearch here. (Aside: PyImageSearch is the best thing that ever happened to the internet):

So this is a bump from 8.3 FPS with the Pi 3B+ and NCS2 to 25.5 FPS with the Pi 3B+ and DepthAI.

When we set out, we expected DepthAI to be 5x faster than the NCS2 when used with the Raspberry Pi 3B+, and it looks like we've hit roughly 3x instead (25.5 / 8.3 ≈ 3.1)... but we still think we can improve from here!

And it's important to note that when using the NCS2, the Pi CPU is at 220% to get the 8 FPS, and with DepthAI the Pi CPU is at 35% at 25 FPS. So this leaves WAY more room for your code on the Pi!
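To put those CPU numbers in perspective, the quick calculation below works out how much Pi CPU each setup spends per frame-per-second delivered. It uses only figures quoted in this post (8.3 FPS at 220% CPU for the NCS2, 25.5 FPS at 35% CPU for DepthAI); the function names are just labels chosen for this sketch.

```python
def frame_time_ms(fps: float) -> float:
    """Time budget per frame in milliseconds for a given frame rate."""
    return 1000.0 / fps


def cpu_percent_per_fps(cpu_percent: float, fps: float) -> float:
    """Reported Pi CPU load divided by the frame rate it achieves."""
    return cpu_percent / fps


# Figures quoted in this post.
NCS2_FPS, NCS2_CPU = 8.3, 220.0
DEPTHAI_FPS, DEPTHAI_CPU = 25.5, 35.0

ncs2_cost = cpu_percent_per_fps(NCS2_CPU, NCS2_FPS)
depthai_cost = cpu_percent_per_fps(DEPTHAI_CPU, DEPTHAI_FPS)

if __name__ == "__main__":
    print(f"25 FPS frame time: {frame_time_ms(25):.0f} ms")
    print(f"NCS2:    {ncs2_cost:.1f} CPU-% per FPS")
    print(f"DepthAI: {depthai_cost:.1f} CPU-% per FPS")
    print(f"Ratio:   {ncs2_cost / depthai_cost:.1f}x")
```

By this measure the Pi spends roughly 19x less CPU per delivered frame with DepthAI than with the NCS2, on top of the higher frame rate.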

And as a note, this isn't using all of the resources of the Myriad X. We're leaving enough room in the Myriad X to perform all the additional calculations of disparity depth and 3D projection in parallel with this object detection. So if there are folks who only want monocular object detection, we could probably push the frame rate higher by dedicating more of the chip to neural inference... but we need to investigate to be sure.

Anyways, we're pretty happy about it:

Now we're off to integrate the code for depth, filtering/smoothing, 3D projection, etc. that we already have running on the Myriad X with this neural inference code. (And find out if we indeed left enough room for it!)

Cheers,

Brandon & The Luxonis Team

Discussions

Just as an update, we have further improved the performance and are now seeing 40+ FPS instead of the 25 FPS reported here.

Sorry @yomuevans, I missed your question somehow. So no drivers are needed, but OpenVINO is. And by default it will appear to the Raspberry Pi just like an NCS2, usable in OpenVINO just like an NCS2.

Then we have a Python interface that allows using features in DepthAI that aren't possible w/ the NCS2 (stereo depth, for example).

Our Crowd Supply is now live, by the way:

https://www.crowdsupply.com/luxonis/depthai

Thanks,

Brandon

Thanks, all!

@yomuevans so WRT drivers, yes and no. So our "movidius hat" will show up to OpenVINO by default and will be usable just like an NCS2 would be. So any existing code that folks have that works w/ that stack will work by default. (That system wouldn't allow neural inference direct from image sensors though, and wouldn't allow depth... but it would allow code written for NCS2 to run right away.)

For the additional functionality of this direct-from-image-sensor neural inference, depth data, object localization, etc. - all the stuff that you can't do w/ an NCS2 - we'll have an open-source Python code base (which we have prototyped now (read: hacked together as a PoC), but it's not ready to share quite yet).

This Python code will interface with libraries that do the low-level talking to the Myriad X. It'll all be very similar to how the NCS2 is used right now in OpenVINO.

Also, if you have any requests on the Python interface, or thoughts on what you'd want it to look like, we're all ears.

And for the sales - we're planning on doing a CrowdSupply so that we can do a bigger initial batch build - which will help with our goal of keeping the price as low as we reasonably can (so that a bigger audience can prototype with it).

https://www.crowdsupply.com/luxonis/depthai

Thanks,

Brandon

Woo hoo!

This is simply amazing, kudos! Did you need any drivers to connect your "movidius hat" to the Raspberry Pi, or is it just plug and play?

If you're planning on selling it, kindly share a link.

Congrats guys, this looks very promising!