01/27/2020

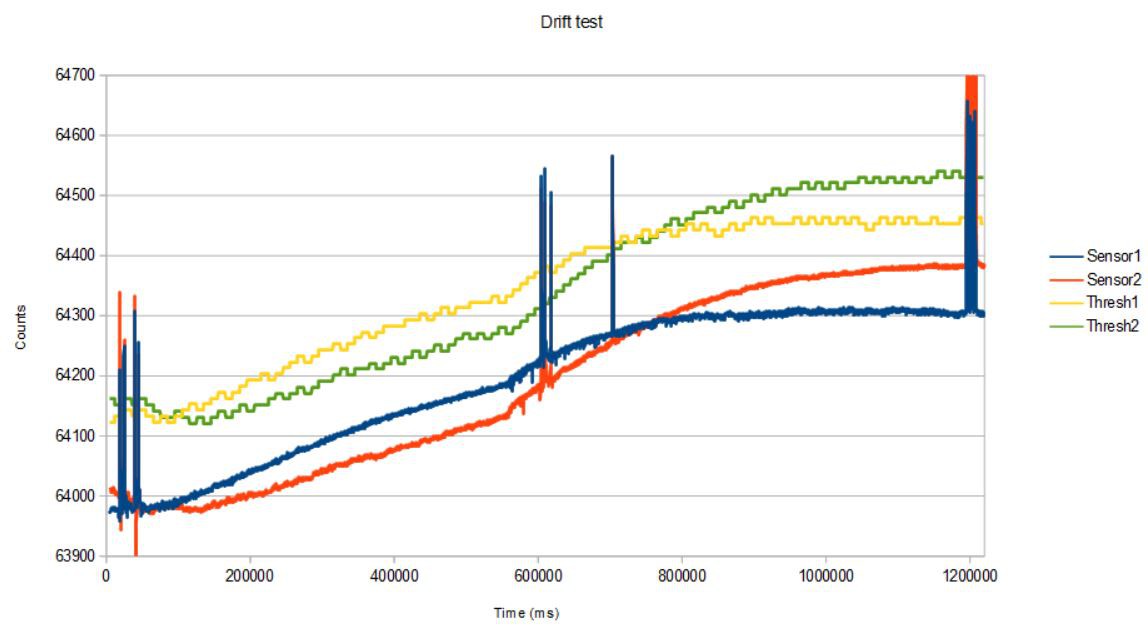

Using the new method of testing the People Counter in its intended operation mode while connected to the serial monitor, I captured more data over a longer period to measure threshold drift and its effect on counting accuracy. First, I streamed data for 20 minutes at 10 Hz and walked under the People Counter (mounted on the upper jamb of a portal) a total of six times, about twice every 10 minutes or so.

The ingress and egress events were captured with 100% accuracy (6/6). There was significant drift in the baseline of the sensor signals, but the threshold adjustment method tracked it well enough for the People Counter to maintain its accuracy over the entire 20-minute interval. I suspected that the baseline rise at about the 10-minute mark was due to the house heater coming on, which I verified in the next test.
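This log doesn't spell out how the People Counter tells an ingress from an egress. A common approach with two sensing channels is to note which channel crosses its threshold first; the Python sketch below illustrates that idea only. The channel names `a` and `b`, the assumption that channel A faces the outside of the portal, and the functions `first_crossing` and `classify_transit` are all hypothetical, not the device's firmware.

```python
from typing import Optional, Sequence


def first_crossing(samples: Sequence[float], threshold: float) -> Optional[int]:
    """Index of the first sample above threshold, or None if it never crosses."""
    for i, value in enumerate(samples):
        if value > threshold:
            return i
    return None


def classify_transit(a: Sequence[float], b: Sequence[float],
                     thr_a: float, thr_b: float) -> Optional[str]:
    """Label one window of two-channel samples as 'ingress', 'egress', or None.

    Hypothetical geometry: channel A is assumed to sit on the outside of the
    portal and channel B on the inside, so walking in trips A before B.
    """
    ia = first_crossing(a, thr_a)
    ib = first_crossing(b, thr_b)
    if ia is None or ib is None:
        return None  # only one channel (or neither) fired: not a complete transit
    if ia < ib:
        return "ingress"
    if ib < ia:
        return "egress"
    return None  # both crossed on the same sample: direction is ambiguous


# Example window: A crosses its threshold one sample before B.
a = [0.0, 0.0, 5.0, 9.0, 4.0, 0.0, 0.0]
b = [0.0, 0.0, 0.0, 6.0, 9.0, 3.0, 0.0]
print(classify_transit(a, b, 3.0, 3.0))  # ingress
```

Comparing first-crossing indices keeps the per-sample work to a single comparison per channel, which is the kind of cost that matters on a battery budget.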

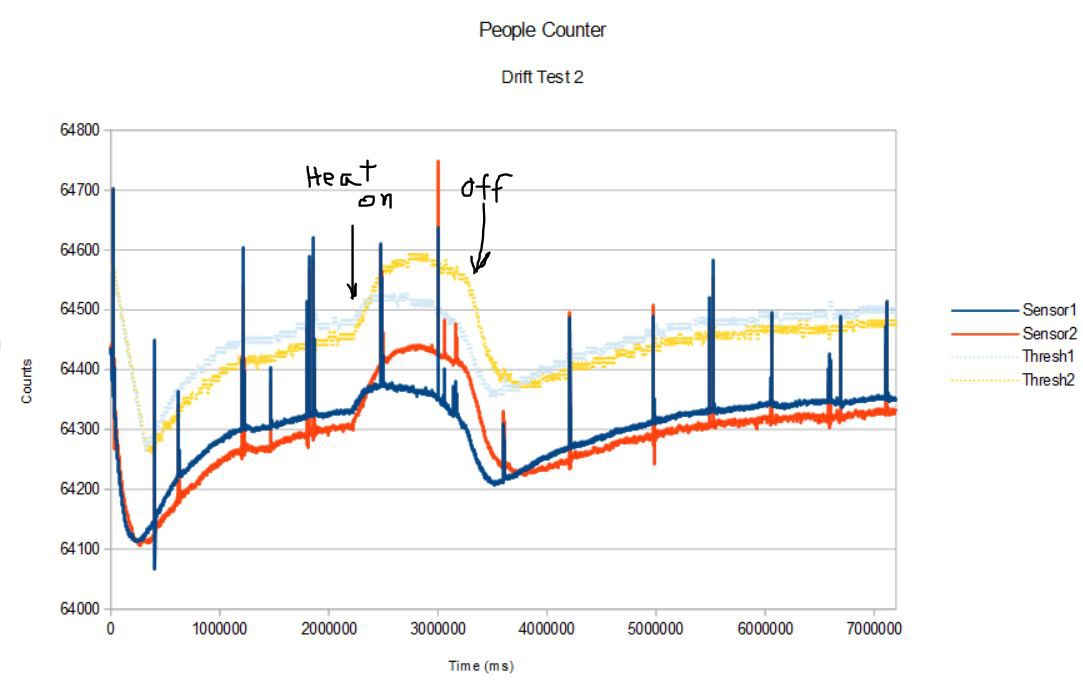

In the next test, I changed the serial monitor output rate to 1 Hz and collected data for 120 minutes, again walking back and forth under the People Counter at least twice every ten minutes or so.

The sensor signal baseline dropped rapidly as soon as the test started, then slowly climbed again. At the 37-minute mark I turned on the local heating system, and I turned it off at the 54-minute mark; the heater's effect in raising the baseline is obvious. The 1 Hz serial monitor sample rate is not fast enough to capture the full transit excursions (which is why some of the peaks appear to be below the thresholds), but I counted 30 ingresses against 29 actual and 28 egresses against 29 actual; one of the transit directions was reversed. So: no false positives, 100% transit detection, and a 96% direction detection success rate in this 2-hour test.
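Why can a real transit look sub-threshold in the 1 Hz log? If a crossing produces a pulse lasting well under a second, a 1 Hz sampler usually lands on the pulse's flanks rather than its peak. The Python sketch below makes that concrete with a made-up Gaussian pulse (amplitude 10, sigma 0.3 s) and a made-up threshold of 5; the pulse shape, width, timing, and threshold are assumptions, not measurements from the People Counter.

```python
import math


def transit_pulse(t: float, t0: float = 12.4, amp: float = 10.0,
                  sigma: float = 0.3) -> float:
    """Hypothetical transit signature: a Gaussian bump on a zero baseline."""
    return amp * math.exp(-0.5 * ((t - t0) / sigma) ** 2)


def sampled_peak(rate_hz: float, duration_s: float = 25.0) -> float:
    """Largest value seen when the pulse is sampled at rate_hz for duration_s seconds."""
    n = int(duration_s * rate_hz)
    return max(transit_pulse(k / rate_hz) for k in range(n + 1))


threshold = 5.0  # hypothetical detection threshold above baseline
for rate in (10.0, 1.0):
    peak = sampled_peak(rate)
    print(f"{rate:4.0f} Hz: peak {peak:.1f}, above threshold: {peak > threshold}")
```

At 10 Hz the sampler hits the full peak of 10, while at 1 Hz the nearest samples fall 0.4 s and 0.6 s from the peak, so the logged maximum is only about 4. Since the transits were still counted, the decimated log, not the detector, appears to be what clips the peaks.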

Even though I expect that the sensor baselines will eventually plateau (to be demonstrated), I also expect that changes in the building heating system, and even the presence of people crossing the portal, will affect the baseline, so threshold management will always be required to maintain accuracy. It seems to be working pretty well on these time scales: the baseline changes are rather slow and smooth, and the threshold adjustment method can easily compensate.
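The log doesn't describe the threshold adjustment method itself. To illustrate why slow, smooth drift is easy to compensate, here is a minimal Python sketch of one common scheme, assumed rather than taken from the device: an exponential moving average (EMA) baseline, updated only from samples below the threshold, with the threshold held a fixed margin above it. `BaselineTracker`, `margin`, and `alpha` are hypothetical names, and the numbers in the example are invented.

```python
from dataclasses import dataclass


@dataclass
class BaselineTracker:
    """Hypothetical adaptive threshold: slow EMA baseline plus a fixed margin."""
    baseline: float
    margin: float        # threshold offset above the baseline (assumed constant)
    alpha: float = 0.01  # EMA weight per sample; smaller means slower tracking

    @property
    def threshold(self) -> float:
        return self.baseline + self.margin

    def update(self, sample: float) -> bool:
        """Feed one sample; return True if it exceeds the current threshold."""
        triggered = sample > self.threshold
        if not triggered:
            # Learn the baseline only from quiet samples, so a person's
            # transit does not drag the threshold upward.
            self.baseline += self.alpha * (sample - self.baseline)
        return triggered


# Slow ramp of +40 counts over 2000 samples, like a heater warming the room.
tracker = BaselineTracker(baseline=100.0, margin=20.0, alpha=0.05)
fired = [tracker.update(100.0 + 0.02 * k) for k in range(2000)]
print(any(fired))                               # False: the drift alone never triggers
print(tracker.update(tracker.baseline + 50.0))  # True: a sharp, transit-sized excursion does
```

For a ramp rising s counts per sample, this EMA settles to lagging the signal by about s/alpha (here 0.02/0.05 = 0.4 counts), far inside the 20-count margin. A fixed threshold of 120 would instead have fired once the ramp passed 120, roughly halfway through.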

So where are we in this project development? Here are the design criteria with commentary:

1) small and unobtrusive (say, 50 cc or less)

We are using a ~105 cc Bud Industries container.

2) ultra-low power, so it can run on two AAA batteries for 2 years or more

Average current draw at a 10-minute LoRaWAN duty cycle is ~150 uA; two AAA batteries (1200 mAh) will last about one year. The power usage is significantly affected by the threshold adjustment requirement. It will also depend on the people-counting rate, since every time a person crosses the device's line of sight it costs power, so the heavier the traffic, the shorter the battery life.

3) inexpensive, say $40 or less BOM cost

Initial production cost was higher than this, but the cost per device should drop with higher production volumes, such that the first 500 units should cost less than $40 each.

4) connected via LPWAN (low-power wide-area network), either LoRaWAN or BLE 5.0

LoRaWAN with excellent range.

5) utterly reliable, meaning no false positives or false negatives (100% accuracy)

0% false positives; 96% direction accuracy in limited testing. This accuracy is for a portal that is 7 feet tall and 2-3 feet wide, a normal household door. We haven't tested the device in other environments or with different portal designs, so the ~100% detection accuracy could be lower there.

6) no cameras or imagers that might compromise people's demand for privacy (added to the list, but no camera-based technology can meet 2) and 3) anyway).

No camera.
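The battery and accuracy figures for criteria 2) and 5) follow from simple arithmetic, which the Python sketch below reproduces. It assumes an ideal battery (no self-discharge, temperature derating, or cutoff-voltage margin) and that the only counting error in the 2-hour test was the single reversed transit. Note that two AAA cells in series still provide 1200 mAh; a series connection raises the voltage, not the capacity. The function names are illustrative only.

```python
from fractions import Fraction

HOURS_PER_YEAR = 24 * 365


def battery_life_years(capacity_mah: float, avg_current_ua: float) -> float:
    """Ideal runtime in years: capacity divided by average current."""
    avg_current_ma = avg_current_ua / 1000.0
    return capacity_mah / avg_current_ma / HOURS_PER_YEAR


def max_current_ua(capacity_mah: float, target_years: float) -> float:
    """Largest average current (uA) that still reaches target_years on this capacity."""
    return capacity_mah / (target_years * HOURS_PER_YEAR) * 1000.0


# Criterion 2): ~150 uA average draw.
aaa_years = battery_life_years(1200, 150)  # two AAA cells in series, 1200 mAh
aa_years = battery_life_years(2400, 150)   # two AA cells, 2400 mAh (see the discussion below)
budget_2yr_ua = max_current_ua(1200, 2)    # average draw allowed for the 2-year goal on AAA

# Criterion 5): 2-hour test with 29 actual ingresses and 29 actual egresses.
actual = 29 + 29
counted = 30 + 28                                         # every transit detected, none spurious
reversed_dirs = 1                                         # one egress logged as an ingress
detection_rate = Fraction(counted, actual)                # 58/58
direction_rate = Fraction(actual - reversed_dirs, actual) # 57/58
egress_rate = Fraction(28, 29)

print(f"AAA life: {aaa_years:.2f} yr, AA life: {aa_years:.2f} yr")
print(f"2-year budget on AAA: {budget_2yr_ua:.1f} uA")
print(f"detection {float(detection_rate):.1%}, direction {float(direction_rate):.1%}, "
      f"egress {float(egress_rate):.1%}")
```

On these assumptions, ~150 uA gives about 0.9 years on 1200 mAh, roughly the one year quoted, and meeting the two-year goal would need an average draw of about 68 uA. The 96% figure matches the egress count (28/29); scoring all 58 transits together, one reversal gives 57/58, about 98%.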

The next step is deployment in the field for more rigorous testing in the intended operation mode. I will report on this next.

Kris Winer

Discussions

Yes, one can always use two AA batteries (3.0 V, 2400 mAh) or a 3.6 V, 2400 mAh LiSOCl2 battery, but AAA batteries are cheaper and smaller. Still, the point is that I have chosen a goal for the project and am simply comparing the current status to that goal. In an actual product, where maintenance workers are responsible for changing the batteries, we would probably use two AA batteries, since these are cheap and commonly available. We would solder a battery holder onto the PCB, which just fits inside the Bud Box. This could be done in production without having to worry about shipping batteries in a device from China, which is a problem. I would have to test the effect of the AA batteries on the antenna performance, but this is a reasonable solution, where the 2400 mAh capacity would allow yearly battery changes.

Encouraging progress. My application permits 2 x AA batteries, which provides more headroom on duration between battery changes.