Progress has been slow lately, due to the difficulty of tracing the apps in how they use the models, and also my lack of free android hardware to run tests on. However, this may become completely irrelevant in the near future!

It seems Google is building speech recognition into Chromium, to bring a feature called Live Caption to the browser. To transcribe videos playing in the browser a new API is slowly being introduced: SODA. There is already a lot of code in the chromium project related to this, and it seems that it might be introduced this summer or even sooner. A nice overview of the code already in the codebase can be seen in the commit renaming SODA to speech recognition.

What is especially interesting is that it seems it will be using the same language packs and RNNT models as the Recorder and GBoard apks, since I recently found the following model zip:

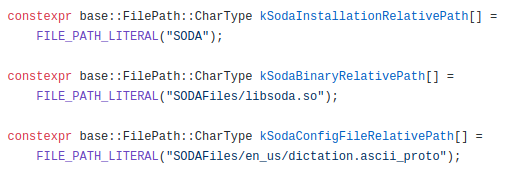

It is from a link found in the latest GBoard app, but it clearly indicates that the model will be served via the soda API. It is still speculative though, but it seems only logical (to me at least) that the functionality in Chromium will be based on the same models. There is also this code:

which indicates that soda will come as a library, and reads the same dictation configs as the android apps do.

Since Chromium is open source, it will help enormously in figuring out how to talk to the models. This opens up a third way of getting them incorporated into my own projects:

1) import the tflite files directly in tensorflow (difficult to figure out how, especially the input audio and the beam search at the end)

2) create a java app for android and have the native library import the model for us (greatly limits the platform where it can run on, also requires android hardware which i don't have free atm)

3) Follow the code in Chromium, as it will likely use the same models

I actually came up with a straightforward 4th option as well recently, and can't believe I did not think of that earlier:

4) Patch GBoard so it also enables "Faster voice typing" on non-Pixel devices. Then build a simple app with a single text field that sends everything typed (by gboard voice typing) to something like MQTT or whatever your use case might be.

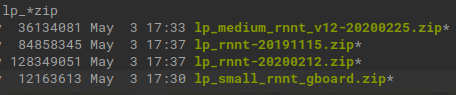

I'll keep a close eye on the Live Transcribe feature of Chromium, because I think that that is the most promising path at the moment, and keep my fingers crossed it will show how to use those models in my own code. In the mean time I found a couple more RNNT models for the GBoard app, one of which was called "small" and was only 12MB in size:

Very curious how that one performs!

UPDATE june 8:

The following commit just went into the chromium project, stating:

This CL adds a speech recognition client that will be used by the Live Caption feature. This is a temporary implementation using the Open Speech API that will allow testing and experimentation of the Live Caption feature while the Speech On-Device API (SODA) is under development. Once SODA development is completed, the Cloud client will be replaced by the SodaClient.

So I guess the SODA implementation is taking a bit longer than expected, and an online recognizer will be used initially when the Live Caption feature launches. On the upside, this temporary code should already enable me to write some boilerplate stuff to interface with the recognizer, so when SODA lands I can hit the ground running.

biemster

biemster

Discussions

Become a Hackaday.io Member

Create an account to leave a comment. Already have an account? Log In.

This is impressive work! So many potential use cases if they open source this. I'll keep an eye on your blog, it's rad.

Are you sure? yes | no

Thanks! It would be even better if SODA would be open source indeed, but for now I'd settle already for just the binary lib. I'm watching the chromium code repo closely for updates on this component, there is regularly work being done on it there.

Are you sure? yes | no

Also the model zip file with 22 files you've mentioned in this post, may I know how can I download it ?

Are you sure? yes | no

In the first log of this project I tried to explain how to obtain those models. I'm a bit reluctant to give direct links to those since they are not my own work. The GBoard app however did change the URL from where it retrieves the models, but it's not too hard to figure out the new address. Feel free to msg me directly if you're still stuck after trying the above.

Are you sure? yes | no

Hi @biemster ,

Thank you very much. Can you kindly let me know how can I download the Recorder app. I'm not an android user, is there a way I can download the app or apk file on my Mac book ? After that, I'll figure out how to uncompress/extract the app with some apk tool.

Is the Recorder App and GBoard are different ? should I download both apps ?

yeah I've downloaded the json file you've mentioned in the first post and and able to download the single link zip file . In addition to that, I'm able to download superpacks-manifest-20190601.json and able to download new lp_rnnt-20190601.zip.

Are you sure? yes | no

The Recorder app is different from GBoard. The Recorder app has the models included in the res folder, and GBoard downloads them later on via a hardcoded link.

Are you sure? yes | no

Hi,

Can you provide us the links to download the lp_small_rnnt_gboard.zip file and other zip files shown in the last screenshot. I searched here in your article and coludn't find a way to download these zip files.

Are you sure? yes | no

^^^ See my reply on your next question :)

Are you sure? yes | no

I agree this is very exciting. It would be ideal if chrome voice recognition were modified to use the offline model.

Do you have any experience with option 4? From some googling it appears that people have had difficulty decompiling and recompiling GBoard.

Are you sure? yes | no

Decompiling the resources does not work with apktool indeed, but I don't need that and the -r flag will skip it. I did not get to the recompile step yet, I'll search around a bit first what issues people run into.

Are you sure? yes | no