-

1000+ hours on V2 Voltage Reference

08/26/2022 at 14:38 • 0 comments

It's been more than 6 weeks since I powered on the new voltage reference. It has been running continuously, except for 2 relatively short power outages. Time to find out what sort of drift is happening. Here's the data:

Temperature = 64F (~18C), HighZ input, 100PLC

| Reading | Scale | Other | Error | Drift |
|---|---|---|---|---|
| 99.9913mV - 99.993mV | 100mV | Null, Avg 20 samples | ~-8uV | +1.4uV (14ppm) |
| 1.0000829V | 1V | Avg 20 samples | +83uV | -0.4uV (-0.4ppm) |
| 10.000070V | 10V | Avg 20 samples | +70uV | +83uV (8.3ppm) |

I'm satisfied with these results. The biggest surprise is the 10V reading drifting nearly 10ppm, but it doesn't require any adjustment since it is within 100uV of the target. I'll just record these numbers on the box and use them to compare readings in the future.
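For anyone who wants to check the arithmetic, here is a minimal Python sketch of how the Error and Drift figures can be computed. It assumes that Error is the reading minus the nominal value and that Drift is the reading minus the 07/01/2022 reading; the function name `error_and_drift` is just an illustration.

```python
def error_and_drift(reading_v, previous_v, nominal_v):
    """Return (error_uV, drift_uV, drift_ppm) for one reference output.

    Assumes error is measured against the nominal value and drift
    against the previous reading of the same output.
    """
    error_uv = (reading_v - nominal_v) * 1e6
    drift_uv = (reading_v - previous_v) * 1e6
    drift_ppm = drift_uv / nominal_v  # microvolts per volt is ppm
    return error_uv, drift_uv, drift_ppm


# (reading now, reading on 07/01/2022, nominal), all in volts
outputs = {
    "1V": (1.0000829, 1.0000833, 1.0),
    "10V": (10.000070, 9.999987, 10.0),
}

for name, (now, before, nominal) in outputs.items():
    err, drift, ppm = error_and_drift(now, before, nominal)
    print(f"{name}: error {err:+.1f}uV, drift {drift:+.1f}uV ({ppm:+.1f}ppm)")
```

The 100mV output is left out because its reading is a range rather than a single number.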

Note that the 100mV reading is a range. I left the meter running continuously over several days and made multiple readings. Every time I took a null measurement and replaced the probes, I got a slightly different reading. I'm going to chalk that up to thermoelectric noise and move on.

-

New Voltage Reference Results (Preliminary)

07/01/2022 at 22:04 • 0 comments

The new design using a single LT1021-10 with a single resistor divider appears to work fine. I was able to salvage the LT1021, TLV170 opamp, inductor and a couple capacitors from the previous board. All of the resistors are new.

The heater feedback loop works fine. I don't have the Zener diode to limit the swing of the opamp yet, but the loop settles to its steady state value in about 30 minutes. After several attempts to trim the heated 10V reference to exactly 10.00000V I gave up. Here are my initial readings with my now 3-year-old Keysight 34461A DMM:

New Voltage Reference (>3.5hr soak, 62F ambient, 100PLC, HighZ input)

| Reading | Scale | Other | Error |
|---|---|---|---|
| 99.9906mV | 100mV | Average of 20 samples, zero null | -9.4uV |
| 1.0000833V | 1V | Average of 20 samples | +83.3uV |
| 9.999987V | 10V | Average of 20 samples | -13uV |

So you can see that I was able to trim the LT1021-10 to within 100uV of 10V (according to my 34461A). It's a bit disconcerting that the 1V reference is a bit on the high side while the 100mV reference is a bit low. My initial reaction is that the 34461A is drifting on the 1V scale. If I can get my hands on a calibrated 6-8 digit DMM I will know the truth.

I will keep the reference powered for 1000 hours and trim any resulting drift. After that...time will tell.

-

Striving for Simplicity

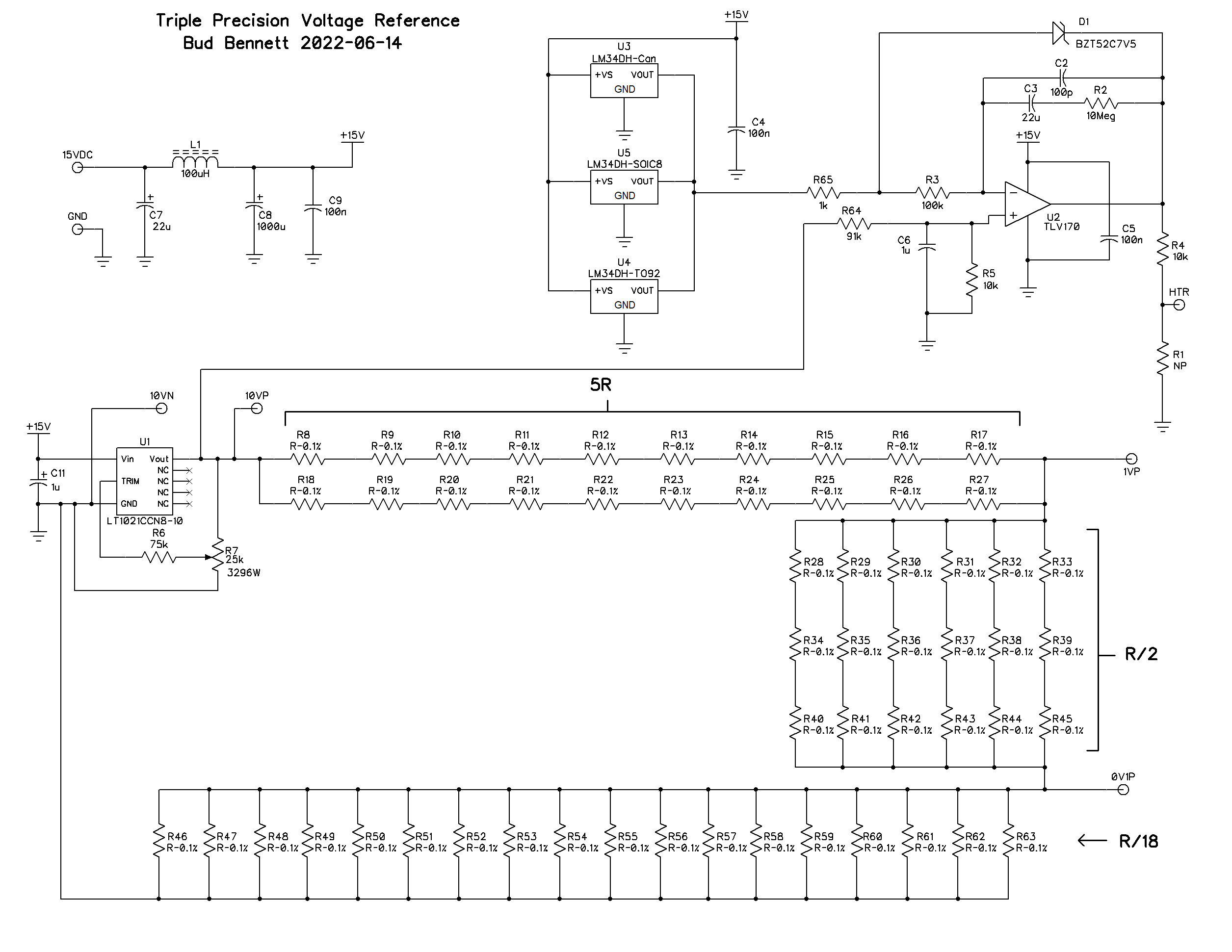

06/16/2022 at 20:22 • 0 comments

Well, in my humble opinion, the LT1021C-10 is the clear winner. The LT1021-5 is not nearly as good, as it uses external components for temperature compensation, and the REF5025A is not nearly as good either. I'm starting over with the objective of producing better stability for fewer reference voltages -- 10V, 1V, 0.1V -- all derived from a single 10V reference. Here's the schematic:

![]()

There is a single trimmer pot to adjust the LT1021 to 10V+/-100uV.

A single resistor divider produces both the 1V and 0.1V outputs, with standard deviations of 0.0016%. There are enough of the 20k 0.1% 0805 resistors left over from my original purchase to populate the PCB. (And you can still get them, as of 2022-06-15, on eBay for a reasonable price.) But any reasonable 0805 resistor value with the same tolerance should work.

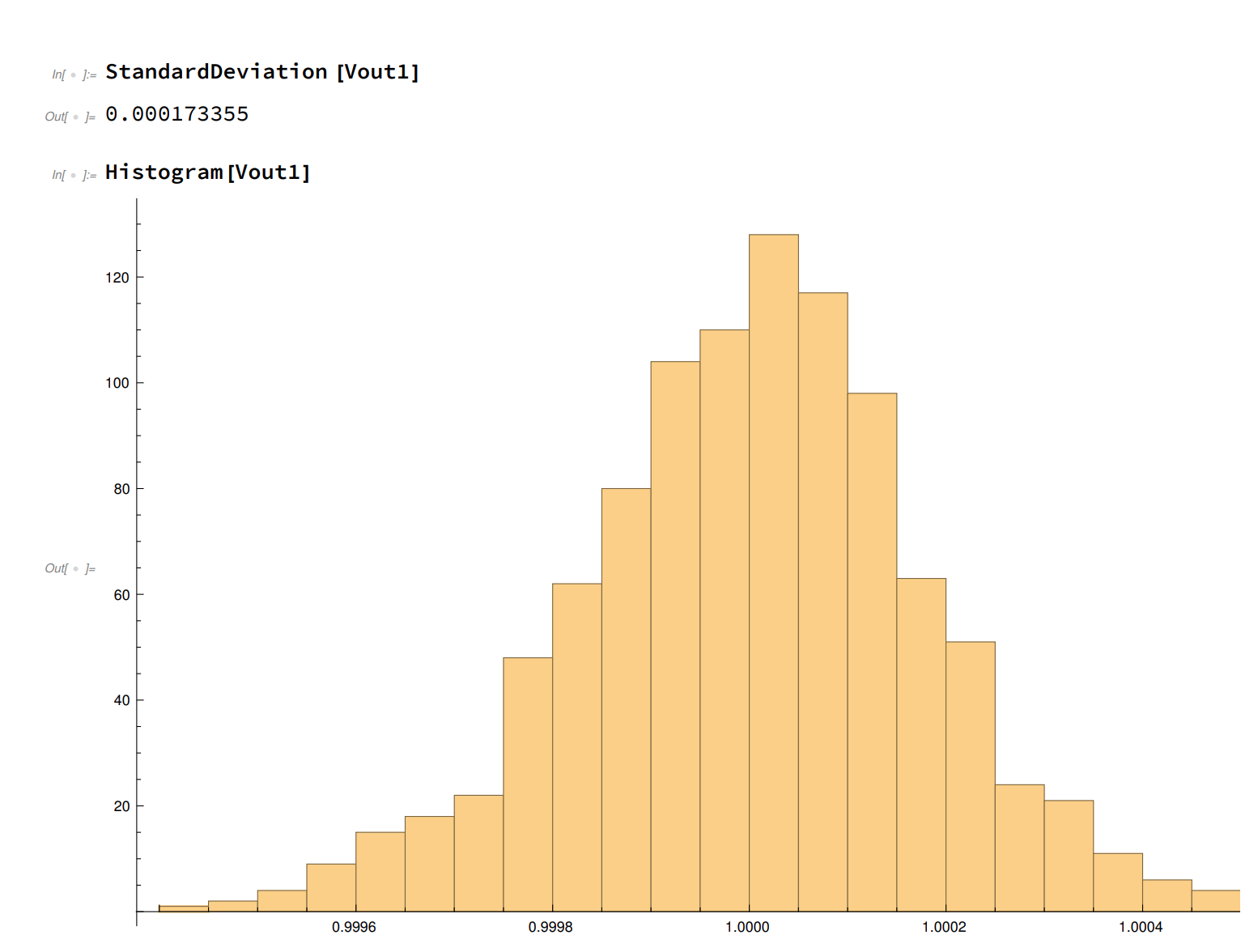

I ran a Monte Carlo simulation over 1000 cases (assuming that the 10V reference varied uniformly between +/- 100uV), which produced this histogram for the 1V reference:

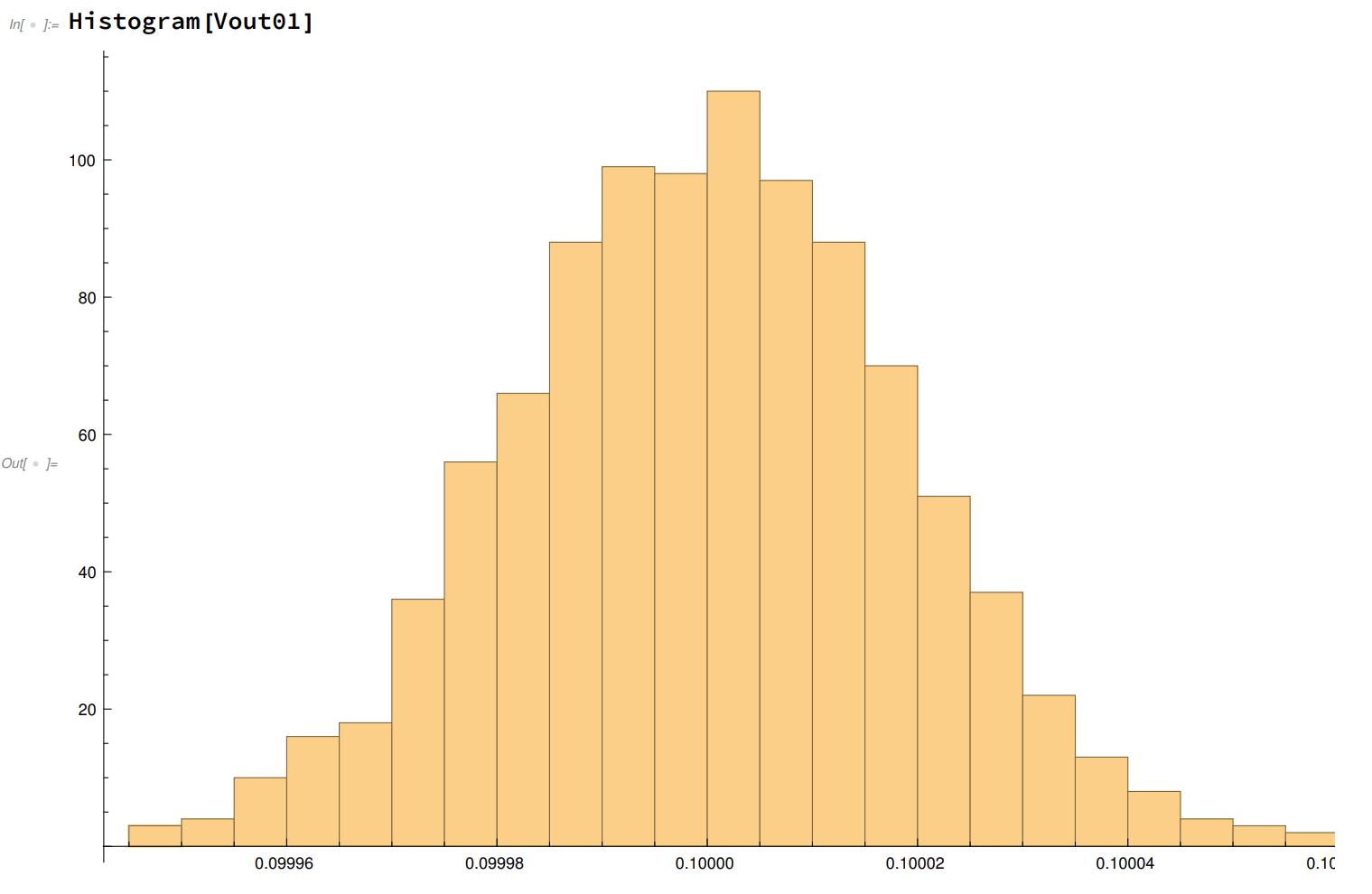

and this histogram for the 0.1V reference point:
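For readers who want to try a Monte Carlo run of this kind themselves, here is a minimal Python sketch. It is not the simulation I ran. The three-segment divider with ratios 9 : 0.9 : 0.1, the uniform 0.1% tolerance on each segment, and the name `simulate_divider` are all assumptions made for illustration and are not taken from the schematic. Only the 1000 cases and the uniform +/- 100uV spread on the 10V reference come from the text above.

```python
import random
import statistics


def simulate_divider(n_cases=1000, vref_nominal=10.0, vref_tol=100e-6,
                     r_tol=0.001, seed=1):
    """Monte Carlo of a three-segment divider fed from the 10V reference.

    ASSUMPTION: the segment ratios 9 : 0.9 : 0.1 and the uniform
    tolerance model are illustrative, not taken from the schematic.
    """
    rng = random.Random(seed)
    nominal_segments = (9.0, 0.9, 0.1)  # top, middle, bottom (relative units)
    v1, v01 = [], []
    for _ in range(n_cases):
        # 10V reference varies uniformly within +/- vref_tol
        vref = vref_nominal + rng.uniform(-vref_tol, vref_tol)
        # each segment varies uniformly within +/- r_tol of nominal
        top, mid, bot = (r * (1 + rng.uniform(-r_tol, r_tol))
                         for r in nominal_segments)
        total = top + mid + bot
        v1.append(vref * (mid + bot) / total)   # 1V tap
        v01.append(vref * bot / total)          # 0.1V tap
    return v1, v01


v1, v01 = simulate_divider()
for name, data, nominal in (("1V", v1, 1.0), ("0.1V", v01, 0.1)):
    sd_pct = statistics.stdev(data) / nominal * 100
    print(f"{name}: mean {statistics.mean(data):.7f}V, SD {sd_pct:.4f}%")
```

Because each segment is modeled as a single 0.1% part, the spread printed here should not be expected to match the 0.0016% figure quoted above. If a segment is built from several resistors, the spread will differ.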

Since the REF5025 is no longer providing a temperature output the LM34D is used instead. I have a few of these (accumulated during the 1980's most probably) in metal cans. The voltage output of the LM34D is the temperature in degrees Fahrenheit x 10mV, with an accuracy of +/- 3F. So for 100F the output would be 1.0V. No trimmer required. The LM35, providing output voltage in degrees C, could probably be used as well. These metal cans are prohibitively expensive, so I have provided for the other two possible package types (TO92 and SOIC8) to be mounted on the PCB as well -- they are less than $4/each from Digikey at the time of this log.
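As a quick illustration of the sensor scaling described above, here is a small Python sketch that converts the sensor output voltage to temperature. The 10mV per degree scale factors are the nominal values for the LM34 (Fahrenheit) and LM35 (Celsius). The function names are only for illustration, and the +/- 3F accuracy is not modeled.

```python
LM34_VOLTS_PER_DEG_F = 0.010  # LM34: 10mV per degree Fahrenheit
LM35_VOLTS_PER_DEG_C = 0.010  # LM35: 10mV per degree Celsius


def lm34_to_fahrenheit(v_out):
    """Convert LM34 output voltage (V) to degrees Fahrenheit."""
    return v_out / LM34_VOLTS_PER_DEG_F


def lm35_to_celsius(v_out):
    """Convert LM35 output voltage (V) to degrees Celsius."""
    return v_out / LM35_VOLTS_PER_DEG_C


def fahrenheit_to_celsius(deg_f):
    """Standard Fahrenheit to Celsius conversion."""
    return (deg_f - 32.0) * 5.0 / 9.0


temp_f = lm34_to_fahrenheit(1.0)  # the 100F example from the text
print(f"LM34 at 1.0V: {temp_f:.1f}F = {fahrenheit_to_celsius(temp_f):.1f}C")
```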

I added D1 to clamp the output of the PID opamp rather than let it be overdriven against either the supply or GND. This should improve the response time of the heater (I think).

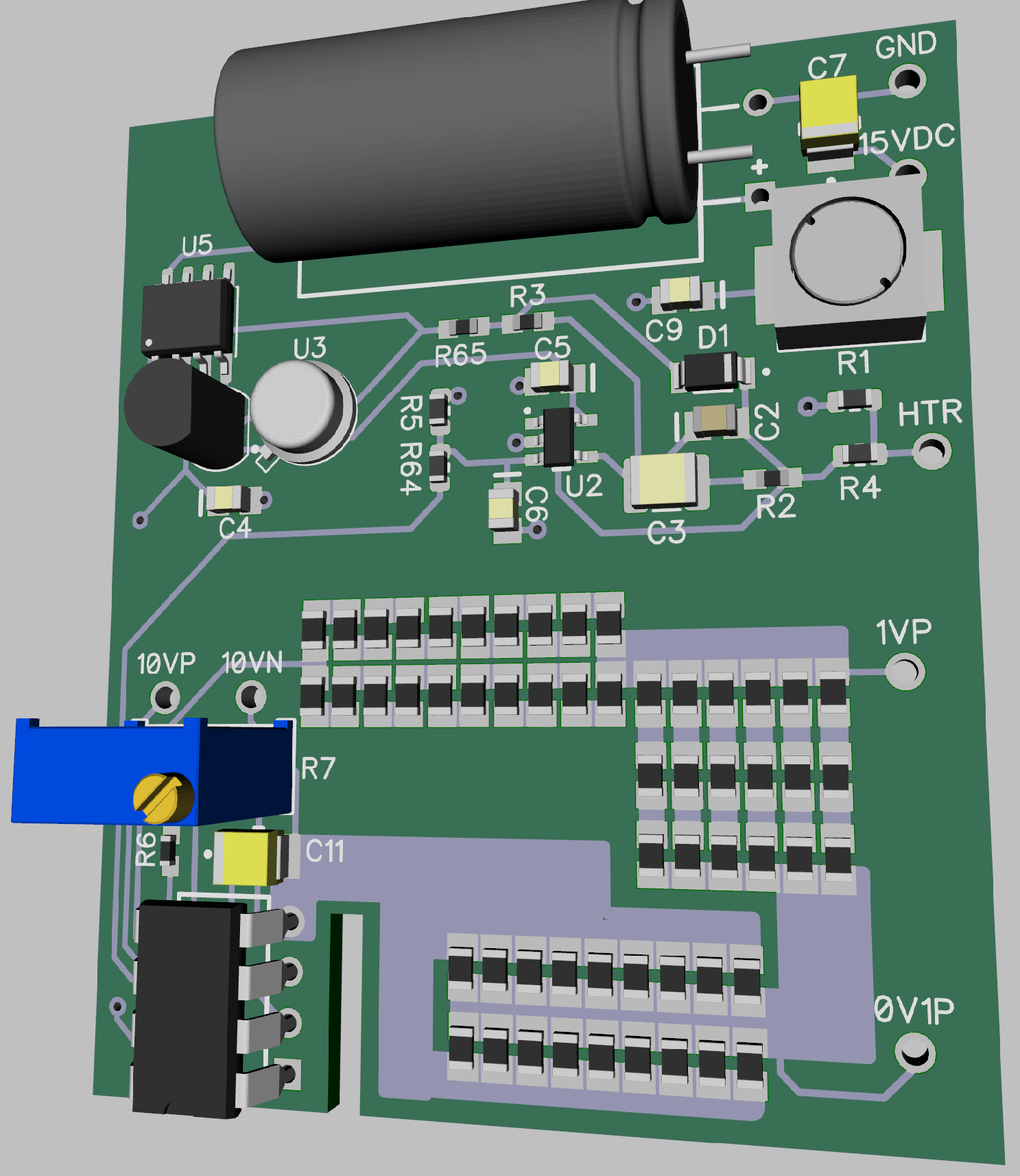

The Layout:

The upper half is devoted to the input filtering and the heater PID controller. The lower half is the voltage reference and divider. Note that there is only one negative reference point for all of the outputs -- 10VN. All sensitive connections are Kelvin connected, as before.

This reference box should not cost me very much. I'll be scavenging parts from the AliExpress box and don't need to purchase anything new, except the 7.5V Zener diode, which is pretty cheap.

Ordered the PCB from JLCPCB with super slow shipping.

-

3 Years

06/09/2022 at 22:29 • 0 comments

Digikey Voltage Box: (3.5hr soak, 62F ambient, 100PLC, HighZ input)

| Reading | Scale | Other | 1 yr Drift | Total Drift (2 year spec limit) |
|---|---|---|---|---|
| 100.00489mV | 100mV | Null + AVG 20 samples | 3.92uV | -1.61uV (+/- 10uV) |
| 0.9999747V | 1V | Null + AVG 20 samples | 15.6uV | -48.3uV (+/- 62uV) |
| 2.49983V | 10V | Null + AVG 20 samples | -12uV | 100uV |
| 4.999283V | 10V | Null + AVG 20 samples | 162uV | 63uV |
| 9.999972V | 10V | AVG 20 samples | -46uV | -98uV (+/- 550uV) |

AliExpress Box: (2.75hr soak, 64F ambient, 100PLC, HighZ input)

| Reading | Scale | Other | 1 yr Drift | Total Drift (2 year spec limit) |
|---|---|---|---|---|
| 100.00095mV | 100mV | Null + AVG 20 samples | 1.13uV | 8.45uV (+/- 10uV) |
| 1.0000647V | 1V | Null + AVG 20 samples | 2.8uV | 86.7uV (+/- 62uV) |
| 2.500199V | 10V | Null + AVG 20 samples | 35uV | 219uV |
| 10.000073V | 10V | AVG 20 samples | 17uV | 3uV (+/- 550uV) |

Current Reference:

(3 hour soak, 64F ambient, 100PLC)

| Reading | Scale | Other | 1 yr Drift | Total Drift* (2 yr Spec Limit) |
|---|---|---|---|---|
| 99.99724uA | 100uA | Null + AVG 20 samples | -4nA | 4.76nA (85nA) |
| 0.999974mA | 1mA | Null + AVG 20 samples | -12.7nA | -9nA (660nA) |
| 10.000667mA | 10mA | Null + AVG 20 samples | 1.33uA | 1.32uA (8uA) |

*Relative to 6 month data.

Conclusions:

The only reading outside of the 2-year tolerance is the 1V reference point of the AliExpress box. I think if I had it to do over again I would ditch the LT1021-5 and divide down from the 10V reference to get the 1V reference. That LT1021-10 has stellar performance (or it just drifts in the same direction as the DVM...?) There is no concern regarding the accuracy of the DVM.

The only way to really find out whether the DVM or the reference is drifting is to compare to a more accurate DVM that is still under calibration. My access to a lab with such a measurement tool is pretty much nonexistent now.
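The out-of-tolerance check behind the first conclusion can be sketched in a few lines of Python. The numbers are the Total Drift values and 2-year spec limits from the voltage tables above (only rows that list a limit are included). The assumption here is that a reading is out of tolerance when the magnitude of the total drift exceeds the limit. The name `out_of_spec` is illustrative.

```python
# (box, output, total drift in uV, 2-year spec limit in uV), from the tables
results = [
    ("Digikey", "100mV", -1.61, 10.0),
    ("Digikey", "1V", -48.3, 62.0),
    ("Digikey", "10V", -98.0, 550.0),
    ("AliExpress", "100mV", 8.45, 10.0),
    ("AliExpress", "1V", 86.7, 62.0),
    ("AliExpress", "10V", 3.0, 550.0),
]


def out_of_spec(rows):
    """Return (box, output) pairs whose |total drift| exceeds the limit."""
    return [(box, out) for box, out, drift_uv, limit_uv in rows
            if abs(drift_uv) > limit_uv]


for box, out in out_of_spec(results):
    print(f"{box} {out} is outside the 2-year tolerance")
```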

-

2 Years

06/29/2021 at 02:07 • 0 comments

Digikey Voltage Box: (2.5hr soak, 64F ambient, 100PLC, HighZ input)

| Reading | Scale | Other | 1 yr Drift (Vref + DMM) |
|---|---|---|---|
| 100.00097mV | 100mV | Null + Avg 20 samples | 1.57 uV |
| 0.9999591V | 1V | Null + Avg 20 samples | -51 uV |
| 2.499842V | 10V | Avg 20 samples | 82 uV |
| 4.999121V | 10V | Avg 20 samples | -279 uV |
| 10.000018V | 10V | Avg 20 samples | -12 uV |

AliExpress Box: (6hr soak, 62F ambient, 100PLC, HighZ input)

| Reading | Scale | Other | 1 yr Drift (Vref + DMM) |
|---|---|---|---|
| 99.99982mV | 100mV | Null + Avg 20 Samples | -1.99 uV |
| 1.0000619V | 1V | Null + Avg 20 Samples | 50.9 uV |
| 2.500164V | 10V | Avg 20 Samples | 24 uV |
| 10.000056V | 10V | Avg 20 Samples | 26 uV |

Current Reference:

64F

| Value | Scale | Other | 1 yr Drift (Iref + DMM) |
|---|---|---|---|
| 100.00127uA | 100uA | Avg 20 samples | 8.14 nA |
| 0.9999867mA | 1mA | Avg 20 samples | 9.7 nA |
| 9.999337mA | 10mA | Avg 20 samples | -2.137 uA |

Conclusions:

After 2 years the meter and references are well within the expected tolerances for both the voltage and current ranges.

-

1+ Year

07/23/2020 at 23:13 • 2 comments

It's been more than 1 year since I purchased the Keysight 34461A DMM. It is officially out of calibration now. Time to do a quick measurement. I powered up the voltage calibration box (the Digikey box) and the DMM for six hours prior to taking a measurement. Here are the results:

Digikey Box:

Ambient Temp: 68°F (20°C)

DMM settings: 100PLC, Auto Input Z (High Z)

| Reading (not averaged) | Scale |
|---|---|
| 99.9994mV (nulled) | 100mV |
| 1.000011V | 1V |
| 2.49976V | 10V |
| 4.99940V | 10V |
| 10.00003V | 10V |

These are not very far off from the six month readings.

AliExpress Box:

(same settings and ambient temp as above, soak time 4 hours)

| Reading | Scale |
|---|---|
| 100.00181mV (avg/20 samples) | 100mV Nulled |
| 1.000035V (avg/20 samples) | 1V |
| 2.50014V | 10V |
| 10.000114V (avg/20 samples) | 10V |

Current Reference (all readings 100PLC, averaged with 20 samples):

| Reading | Scale |
|---|---|
| 10.001474mA | 10mA |
| 0.999977mA | 1mA |
| 99.99313µA | 100µA |

General Conclusions:

All of the voltage references seem to be very stable now. No significant difference between the USA components and the Chinese components. The current readings are drifting a bit, but the specs of the 34461A are quite a bit looser for current than voltage. The only way to tell for sure is to compare readings to a calibrated DMM with >6.5digit capability. That's not happening anytime soon.

-

6+ Months

02/20/2020 at 20:51 • 0 comments

All of the references have been sitting in a drawer, collecting dust, until today. It has been a few months since the 1000 hour tests were completed. I let the references and the DVM warm up for at least 2 hours before I took any measurements. At this point in time the DVM is expected to be within 155ppm @ 100mV, and within 80-90ppm for the 1V and 10V ranges.

The Voltage Reference using Digikey parts:

2020-02-20 2hrs warmup:

Set Point = 0.6278V

| Value | Range | Change |
|---|---|---|
| 100.0166mV | 100mV | (+101ppm) |
| 1.000036V | 1V | (+13ppm) |
| 2.50013V | 10V | (+80ppm) |
| 4.99932V | 10V | (+20ppm) |
| 10.00005V | 10V | (-2ppm) |

I also calculated the change, in ppm, from the last readings taken at the 1000 hour point. The big disappointment was the 2.5V reference and the 100mV reference, which is derived from the 2.5V. The 100mV reading is outside the allowed range of the DVM. About 80% of the change in the 100mV reference appears to be due to a big shift in the 2.5V reference voltage.
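The 80% figure can be checked with a little arithmetic. The Python sketch below assumes that the divider passes any ppm shift in the 2.5V reference straight through to the 100mV output, so whatever is left over belongs to the divider and the measurement. The name `attribute_shift` is made up for this example.

```python
def attribute_shift(derived_ppm, source_ppm):
    """Split a derived output's ppm change into the part inherited from
    its source reference and the residual.

    ASSUMPTION: a ratio divider passes the source's ppm shift one-for-one.
    """
    fraction_from_source = source_ppm / derived_ppm
    residual_ppm = derived_ppm - source_ppm
    return fraction_from_source, residual_ppm


# +101ppm on the 100mV output, +80ppm on the 2.5V reference
fraction, residual = attribute_shift(101.0, 80.0)
print(f"{fraction:.0%} from the 2.5V reference, {residual:.0f}ppm left over")
```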

There are some differences: I believe that the 2.5V reference is a band-gap reference, where the other two references are derived from buried Zener diodes; the 2.5V reference is the only reference that is soldered to the PCB -- the other two are plastic DIP packages that are socketed.

The 10V reference is truly stellar with only a -2ppm drift.

The Voltage Reference using Chinese parts:

2020-02-20 after 2 hrs warmup:

| Value | Range | Change |
|---|---|---|
| 100.0043mV | 100mV | (+118ppm) |
| 1.000020V | 1V | (+42ppm) |
| 2.50021V | 10V | (+92ppm) |
| 10.00017V | 10V | (-4ppm) |

Again, the big loser is the TI 2.5V reference (both of the TI reference ICs were sourced from Digikey), and the winner is the LT1021-10. The amount of change in the 2.5V reference is nearly the same percent (and direction) between the two PCBs.

Current Reference:

9.99935mA (-13ppm change)

0.999983mA (-11ppm change)

100.002µA (-12ppm change)

The current reference is based upon the LT1021-10 reference, so it is not surprising that the differences are quite small.

Conclusions:

Stick with the buried Zener references and don't solder them into the PCB.

[Edit 2020-02-21: Added the following.]

****************

The day after getting the above results I took more measurements and got essentially the same results. But the 2.5V reference was not changing with the application of power, so I decided it was worthwhile to just recalibrate the 100mV reference and continue onward. Here are the new readings to compare with future data:

Digikey Box:

99.99952mV (averaged)

1.000027V

2.49975V

4.99935V

10.00005V

AliExpress Box:

100.00077mV (averaged)

1.000009V

2.50012V

10.00019V

I discovered that the DVM will calculate mean and SD from the readings. It is nigh impossible to get consistent readings from the 100mV source. Small temperature variations cause thermoelectric errors, and then there is noise. Expect ±2µV uncertainty.
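If the readings are logged to a computer instead, the same mean and SD can be computed offline. Here is a minimal Python sketch using the standard library. The sample values are hypothetical placeholders, not measurements from the boxes, and `summarize_mv` is an illustrative name.

```python
import statistics


def summarize_mv(samples_mv):
    """Return (mean in mV, sample standard deviation in uV)."""
    mean_mv = statistics.mean(samples_mv)
    sd_uv = statistics.stdev(samples_mv) * 1000.0  # mV -> uV
    return mean_mv, sd_uv


# HYPOTHETICAL readings of the 100mV output, in mV (not real data)
samples = [99.9993, 99.9997, 100.0002, 99.9989, 99.9996]

mean_mv, sd_uv = summarize_mv(samples)
print(f"mean {mean_mv:.5f}mV, SD {sd_uv:.2f}uV over {len(samples)} samples")
```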

The reference boxes are going back on the shelf for a few months. We'll take them out and measure again near the first anniversary of the DVM.

-

Do cheap components make a difference?

08/28/2019 at 19:55 • 0 comments

I decided to make another voltage reference, this time using the cheapest components that I could find on eBay or Aliexpress. I even ordered the enclosure from an Asian source. Here are the results after more than 1000hrs.

2019-07-14 after running for 2 hours at temperature

Meter params: HighZ, 100PLC, AZ on

| Value | Range |
|---|---|
| 100.0004mV | 100mV |
| 1.000007V | 1V |
| 2.50014V | 10V |
| 9.9999995V | 10V (last digit bouncing) |

2019-07-21 after running for 168 hours at temperature

Meter params: HighZ, 100PLC, AZ on

| Value | Range |
|---|---|
| 99.9912mV | 100mV |
| 1.000014V | 1V |
| 2.50001V | 10V |
| 10.00008V | 10V |

2019-08-28 1096 hrs:

| Value | Range |
|---|---|
| 99.9925mV | 100mV (79ppm) |
| 0.999978V | 1V (36ppm) |
| 2.49998V | 10V (64ppm) |
| 10.00021V | 10V (21ppm) |

There doesn't appear to be any significant difference.

-

1000 Hrs!

07/02/2019 at 02:52 • 0 comments

The voltage reference has been baking for 1000 hours, give or take a few. The power went down for a few hours during the test, but I don't think it matters much. Here are the results, using the same conditions as when the test began:

******************

2019-07-01 1000hrs at temp

Set Point = 0.627049

| Value | Range | Aging Drift |
|---|---|---|
| 100.0065mV | 100mV | 9 ppm ±2ppm |
| 1.000023V | 1V | 18 ppm ±2ppm |
| 2.49993V | 10V | 16 ppm ±8ppm |
| 4.99922V | 10V | 18 ppm ±4ppm |
| 10.00007V | 10V | 1 ppm ±2ppm |

I find it a bit suspicious that all of the voltages drifted upward over time. That would indicate a drift in the measurement tool more than the references. I put tolerances on the aging terms just to account for the uncertainty of the last digit over two measurements.
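One way to read those tolerances is as one count of the last displayed digit per measurement, added over the two measurements, and expressed in ppm of the reference's nominal value. The Python sketch below works under that assumption. The function name and the resolution figures (read off the number of digits shown in the table) are illustrative.

```python
def last_digit_tolerance_ppm(resolution_v, nominal_v, n_measurements=2):
    """Uncertainty in ppm from one last-digit count per measurement.

    ASSUMPTION: one count per reading, summed over the readings, and
    scaled to the nominal reference value.
    """
    return n_measurements * resolution_v / nominal_v * 1e6


# (label, last-digit resolution in volts, nominal value in volts)
outputs = [
    ("100mV", 0.1e-6, 0.1),
    ("1V", 1e-6, 1.0),
    ("2.5V", 10e-6, 2.5),
    ("5V", 10e-6, 5.0),
    ("10V", 10e-6, 10.0),
]

for label, resolution, nominal in outputs:
    tol = last_digit_tolerance_ppm(resolution, nominal)
    print(f"{label}: +/-{tol:.0f}ppm")
```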

I don't really see a need to open the box to perform any trimming.

-

168 Hr Reference Aging Data

05/28/2019 at 21:21 • 0 comments

It has been a week with the references running at the heater temp continuously. Here's the data (taken after the DVM ran for 1 hr at room temp):

2019-05-28 168hrs at temp

Meter params: HighZ, 100PLC, AZ on (Null for 1V and 100mV ranges)

Set Point = 0.62785

| Value | Range |
|---|---|
| 100.0068mV | 100mV |
| 1.000029V | 1V |
| 2.49991V | 10V |
| 4.99923V | 10V |
| 10.00006V | 10V |

So far so good. The 100mV reference drifted up by 1.1µV (0.0011%), tracking the upward movement of the 2.5V reference. The 5V and 1V references drifted up by 0.002%. The 10V reference did not move.

The box will remain powered for another 5 weeks or so.

Precision Voltage and Current Reference

A little something to check your digital multimeter accuracy occasionally.

Bud Bennett
