-

Sine Section

08/19/2019 at 00:09 • 0 comments

Theory of operation

Much of this is abbreviated from the TS50 project so you can get more detail there. The sine bridge is an implementation of Direct Digital Synthesis (DDS). The controller produces a set of fixed frequency, duty-cycle varying drive signals connected to an H-bridge that sends the modulated high-voltage square wave thru a set of LC filters to produce a low distortion 60Hz sine wave.

The bridge operates at 48kHz which allows for a sine pattern with 200 discrete values per quarter sine. Each value represents the time equivalent of a sine angle from 0 - 90 degrees. The controller ‘plays’ the pattern forward & backward for each half sine and then repeats the process for 180 - 360 degrees.
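As a rough illustration of where the 200 values come from, here is a minimal Python sketch that builds a quarter-sine table. It is not the project's firmware (which is C on the PIC); the `max_duty` full-scale count and the mid-step sampling are assumptions made for the example.

```python
import math

BRIDGE_HZ = 48_000                           # switching frequency quoted above
LINE_HZ = 60                                 # output frequency
PERIODS_PER_CYCLE = BRIDGE_HZ // LINE_HZ     # 800 switching periods per sine
QUARTER_LEN = PERIODS_PER_CYCLE // 4         # 200 values per quarter sine


def quarter_sine_table(max_duty=1000):
    """Duty-cycle counts for 0-90 degrees.

    max_duty is a hypothetical full-scale PWM count, not a value from the project.
    """
    table = []
    for i in range(QUARTER_LEN):
        # Sample mid-step (an assumption) so that playing the table
        # backward mirrors cleanly about 90 degrees.
        angle = (i + 0.5) * (math.pi / 2) / QUARTER_LEN
        table.append(round(max_duty * math.sin(angle)))
    return table


TABLE = quarter_sine_table()
```

Each entry is the on-time for one 48kHz period, so stepping thru the table once covers 0 - 90 degrees.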

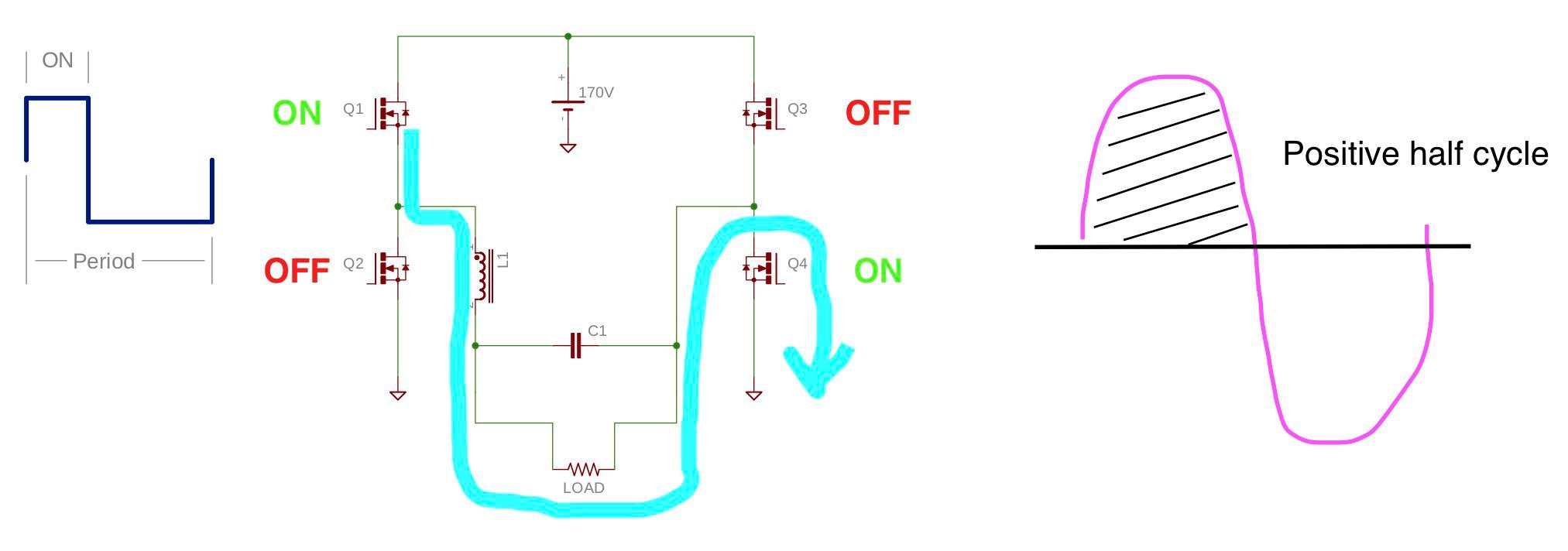

Electrically, the H-bridge functions a bit differently from one driving a transformer.


During a given half cycle the low-side switch Q4 remains on (no switching) which connects the load to the high voltage supply negative pole. When the high side switch, Q1, is on, inductor L1 is charging and current flows thru the load to the negative supply pole.

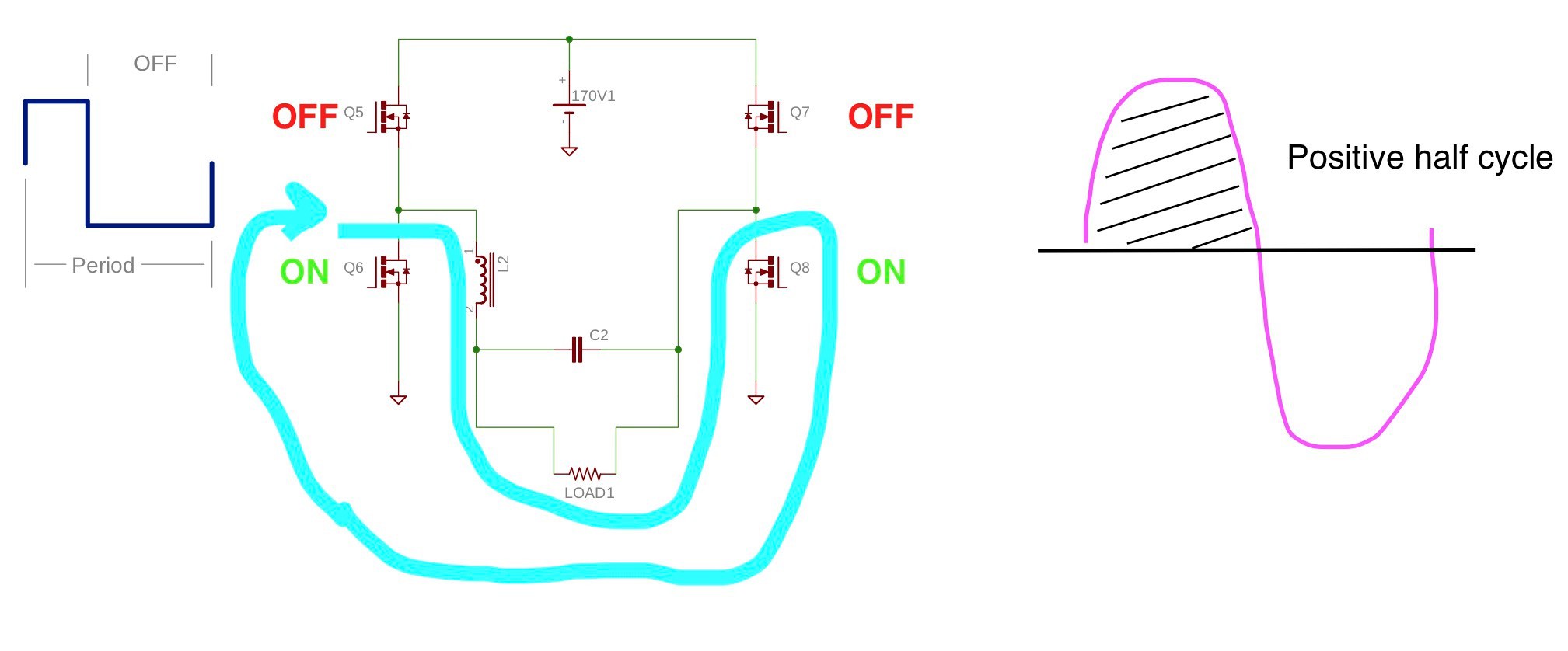


When Q1 turns off L1 reverses polarity to maintain current flow. To close the circuit Q2 turns on. Current no longer returns to the high voltage supply but instead uses the ground plane to flow thru Q2 and return to the negative node of the inductor.

This process repeats for one half sine cycle and then reverses with the other half of the bridge performing the switching. Although the diagrams only show a single LC filter, the TS350r1 has one for each leg and functions the same. The architecture could be thought of as two alternating and parallel buck converters.

Power Supplies

The sine section has three supplies. The Vcc supply is derived from a full-bridge rectifier on the transformer auxiliary winding and provides 13V via LDO U403. Vcc powers the gate drive and bootstrap circuits. Vdd provides the 5V logic supply for the controller, temperature sense, and communication isolator. An unregulated tap is also taken directly from the rectified transformer auxiliary that is used by the controller as a proxy for the high voltage supply (and is spared the dissipation of a resistive voltage divider).

The high voltage supply consists of a full bridge rectifier and powers the H-bridge. Heat sinking is required, so to conserve space dual common anode/cathode TO220 packages are used. Three lower-value capacitors are placed in parallel to provide bulk capacitance. This allows for a lower maximum mounting height with no trade-off in PCB space based on the layout. The cost is just slightly more for three smaller vs. one big one.

With a two stage inverter design such as this, more capacitance for the high voltage supply isn’t necessarily better. It costs more, takes up a lot of space, and can complicate short circuit hardening for the H-bridge. It also puts more strain on the boost stage, both at startup and to keep it charged when under heavy load. This design’s transformer has poor regulation so the bulk capacitance helps reduce the sag to keep the minimum AC RMS voltage a bit higher than it would otherwise be.

Controller Card

The controller card houses the gate drivers and MCU. Its physical design is very similar to the inverter: right angle 100mil pins, vertical mount. This allows for a more compact design and small gate drive loops.

The gate drivers are the same component that is used for the inverter. And, as with the inverter section, they allowed for minimal components to manage parasitics. Even though the controller to gate drive signal lines behave as a lumped system, the trace lengths are matched to ensure clean timing.

The MCU’s main job is DDS pattern generation which includes a crude form of voltage regulation. Secondarily it monitors the bridge temperature and turns the AC output on & off in response to assertion of the CMD pin.

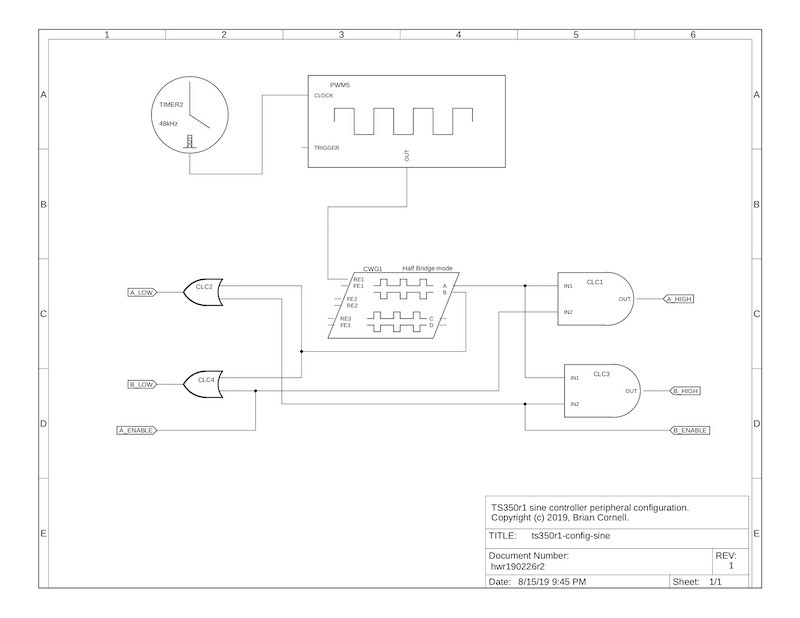

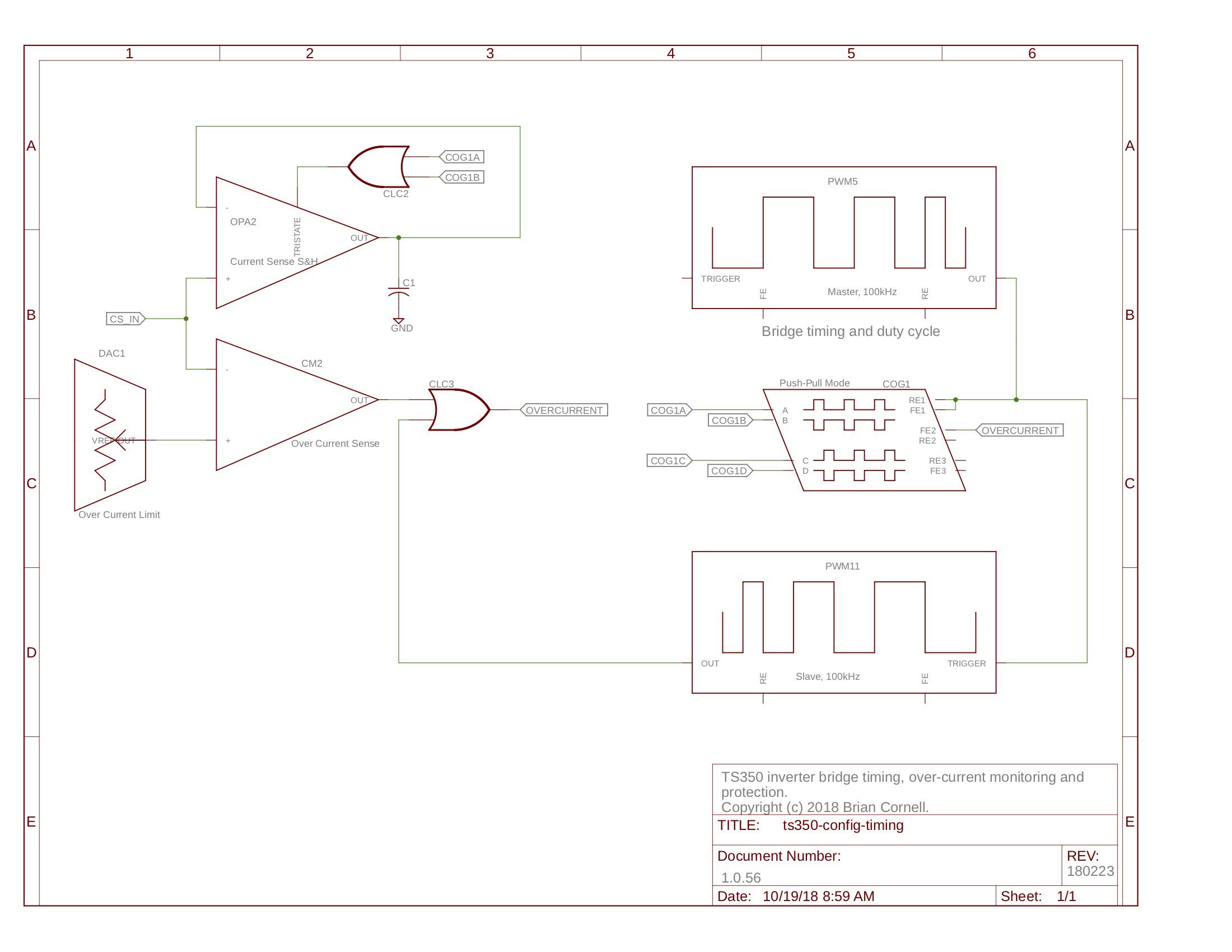

The DDS pattern generation is performed with a mix of MCU peripherals and firmware.


The bridge frequency is provided by Timer2 and drives PWM5. PWM5 drives the Complementary Waveform Generator (CWG1) which is configured as a half-bridge (HB). In HB mode the ‘A’ line is high when PWM5’s output is high (the duty cycle) and the ‘B’ output goes high at the end of the duty cycle and remains on for the duration of the period. CLCs 1-4 steer the CWG’s output to the side of the bridge that’s active. The ‘ENABLE’ tags determine which side is active.

To properly generate the sine, the duty cycle must change each period. A firmware interrupt triggered by Timer2 retrieves & loads the next duty cycle value to PWM5’s registers. At 90 degrees the pattern is loaded in reverse (high->low DC). At 180 degrees the bridge direction is reversed by reversing the ‘ENABLE’ pins, and the DC pattern loading process repeats. All of this must happen in less than one period (20.833uS) and leave sufficient time for some foreground processing.
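The playback order described above can be sketched in a few lines of Python. This is only a model of the sequencing, not the interrupt routine itself; the side names "A" and "B" are hypothetical stand-ins for the two ‘ENABLE’ states.

```python
PERIOD_US = 1e6 / 48_000   # time budget per switching period, about 20.833uS


def playback(table, cycles=1):
    """Yield (duty, enable_side) for every switching period of full sine cycles."""
    n = len(table)
    for _ in range(cycles):
        for side in ("A", "B"):              # hypothetical names for the ENABLE states
            for i in range(n):               # 0-90 degrees: low -> high duty
                yield table[i], side
            for i in range(n - 1, -1, -1):   # 90-180 degrees: high -> low duty
                yield table[i], side
```

With a 200-entry table this produces 800 duty values per 60Hz cycle, one for each Timer2 period, with the side flipping at the 180 degree point.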

Hence, the MCU must be run fast and is a limiting factor in the pattern generation’s resolution. That said, testing has shown that 200 samples per quarter sine is quite sufficient for a low distortion sine. The only advantage of higher speeds is a reduced output inductor but at the expense of more memory and a faster processor. Since the sine pattern is an array loaded in program flash care must be taken to minimize access times since it is much slower than SRAM. This is why each array element is first copied to SRAM (of course with enough SRAM the entire array could be copied there first).

The DDS algorithm also provides coarse regulation of the AC output voltage since the inverter stage doesn’t regulate. Because the MCU doesn’t have the processing power to dynamically calculate the angle timing, the DDS pattern is selected from a fixed set of arrays to keep the output voltage within 5V RMS of the nominal output. The resolution is limited by the resolution of the sine pattern and the available flash.

Array selection is done using a simple PI control loop that executes in the foreground. The rectified auxiliary voltage is sampled using the ADC and compared to a reference from which the proper array is selected. Array patterns are switched only at the start of a new sine (0 degrees) to prevent distortion.
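A minimal Python sketch of this kind of selection follows. The gains, the number of patterns, and the assumption that patterns are ordered from lowest to highest amplitude are all illustrative; the actual firmware may differ.

```python
def select_pattern(aux_volts, ref_volts, integral, n_patterns, kp=0.5, ki=0.1):
    """One PI step: return (pattern_index, new_integral).

    kp, ki and the pattern ordering (index rises with amplitude) are assumptions.
    """
    error = ref_volts - aux_volts            # positive when the supply sags
    integral += error
    raw = kp * error + ki * integral
    index = n_patterns // 2 + round(raw)     # start from the middle pattern
    index = max(0, min(n_patterns - 1, index))
    return index, integral


class PatternSwitch:
    """Holds a requested pattern until the next 0 degree point."""

    def __init__(self, index):
        self.active = index
        self.pending = index

    def request(self, index):
        self.pending = index                 # foreground loop asks for a change

    def on_zero_degrees(self):
        self.active = self.pending           # applied only at the start of a sine
```

Deferring the change to `on_zero_degrees` mirrors the rule that patterns are switched only at the start of a new sine.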

The foreground loop starts the bridge when the Run pin is set TRUE. Once running the Acknowledgement pin is set TRUE. When the Run pin is set FALSE the bridge stops at 0 or 180 degrees which can take up to 8.333mS. The intent of this is to provide a soft (0V crossing) off for connected equipment (zero current crossing would be better but isn’t possible since the AC current waveform isn’t monitored).

The MCU also monitors the temperature of the bridge in the foreground loop using comparator C1 and the DAC. If the temperature is above the Hot threshold the bridge will not start. If it is running it will continue to do so but set the Acknowledgment line FALSE and allow the main controller to determine if operation continues. If the temperature exceeds the Overheat threshold the bridge is stopped.
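The three temperature bands can be summarized as a small decision function. This Python sketch assumes the Run pin is asserted and uses plain numbers for temperature; the real firmware makes the comparison with comparator C1 and a DAC threshold.

```python
def thermal_policy(temp, hot, overheat, running):
    """Return (bridge_on, acknowledge) for one pass of the foreground loop.

    Assumes the Run pin is asserted. Units are arbitrary but consistent.
    """
    if temp > overheat:
        return False, False      # Overheat: stop the bridge
    if temp > hot:
        return running, False    # Hot: do not start; keep running but drop ACK
    return True, True            # Normal: run and acknowledge
```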

Bridge

All high current & high speed switching generates a lot of EMI. Keeping the current loops as small as possible helps. When heat sinking is required, as in this design, faraday screens help reduce common mode noise on the supply. High voltage switching further complicates the layout. Placement of the ground plane must ensure short return paths but avoid placement under the switching nodes to avoid additional common mode noise thru the parasitic capacitance of the PCB.

This design does a better job addressing these issues than its predecessors but the switching loops (bridge to LC filters) are still too big. Ideally the LC filters would be placed on the other side of the PCB, directly opposite the bridge. This would provide a very small switching loop but would require a layout & size dimension that conflicts with other objectives in this design. Note that the mounting edges of the PCB use a via fence to contain EMI. The bottom edges of the PCB ground plane are also exposed to ensure the metal enclosure is grounded so it can function as a faraday cage.

This switching and LC arrangement works well for an isolated (non-grid connected) inverter. It’s reasonably efficient because all switching is handled by the MOSFETs (as opposed to the body diodes or external anti-parallel ones) and the dual LC filters provide a very low distortion sine.

But it does have significant drawbacks. It can’t be used in a grid connected system because the switching arrangement would create a short if operated with a supply voltage less than that of the grid. Second, the dual LC filter arrangement adds a lot of bulk and cost. Alternate designs perform all the switching behind a single LC filter and achieve good results. A single LC could be used on this too - in fact the TS50 does just that. But this significantly increases the radiated EMI because one leg of switching is done thru the entire AC loop.

Speed Matters

But not in the way you’re probably thinking. The semiconductor OEMs’ marketing folks will have you thinking that faster switching is always better - smaller inductors, more compact designs, etc. But faster brings with it a more complex design that is less forgiving. Note that when you get to the technical material from those same OEMs that addresses design & troubleshooting, the first recommendation for a parasitic problem is, wait for it, - slow it down!

The original MOSFET I selected for the sine bridge was the STP46NF30 from ST. I selected it for its speed, low Rds(on), and generous SOA which is necessary to survive inrush and equipment shorts in inverter applications. But, as I began load testing, the low side switches would instantly fail with an applied inrush or a load exceeding a few hundred watts.

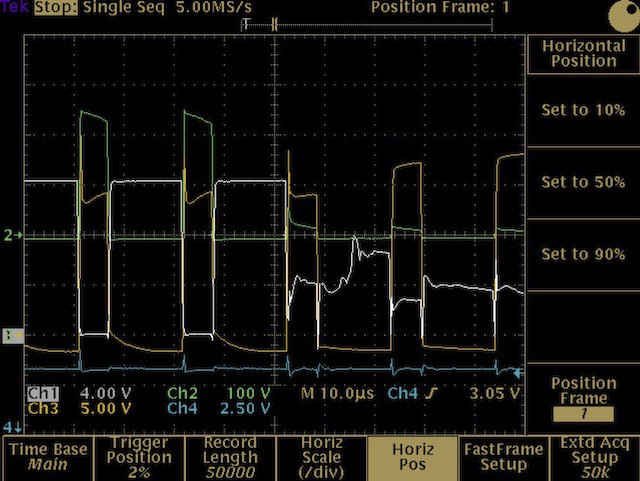

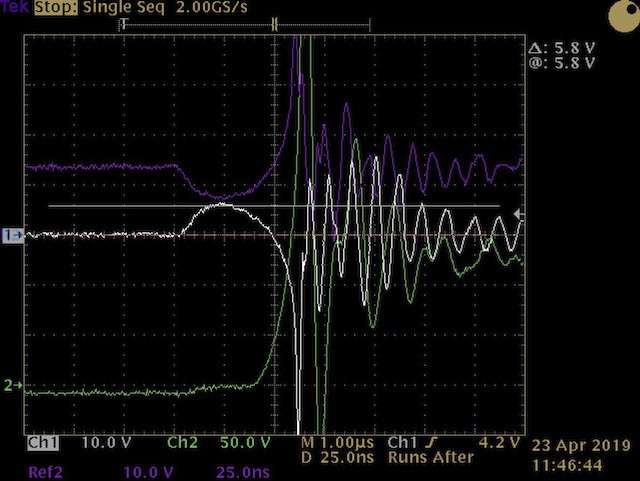

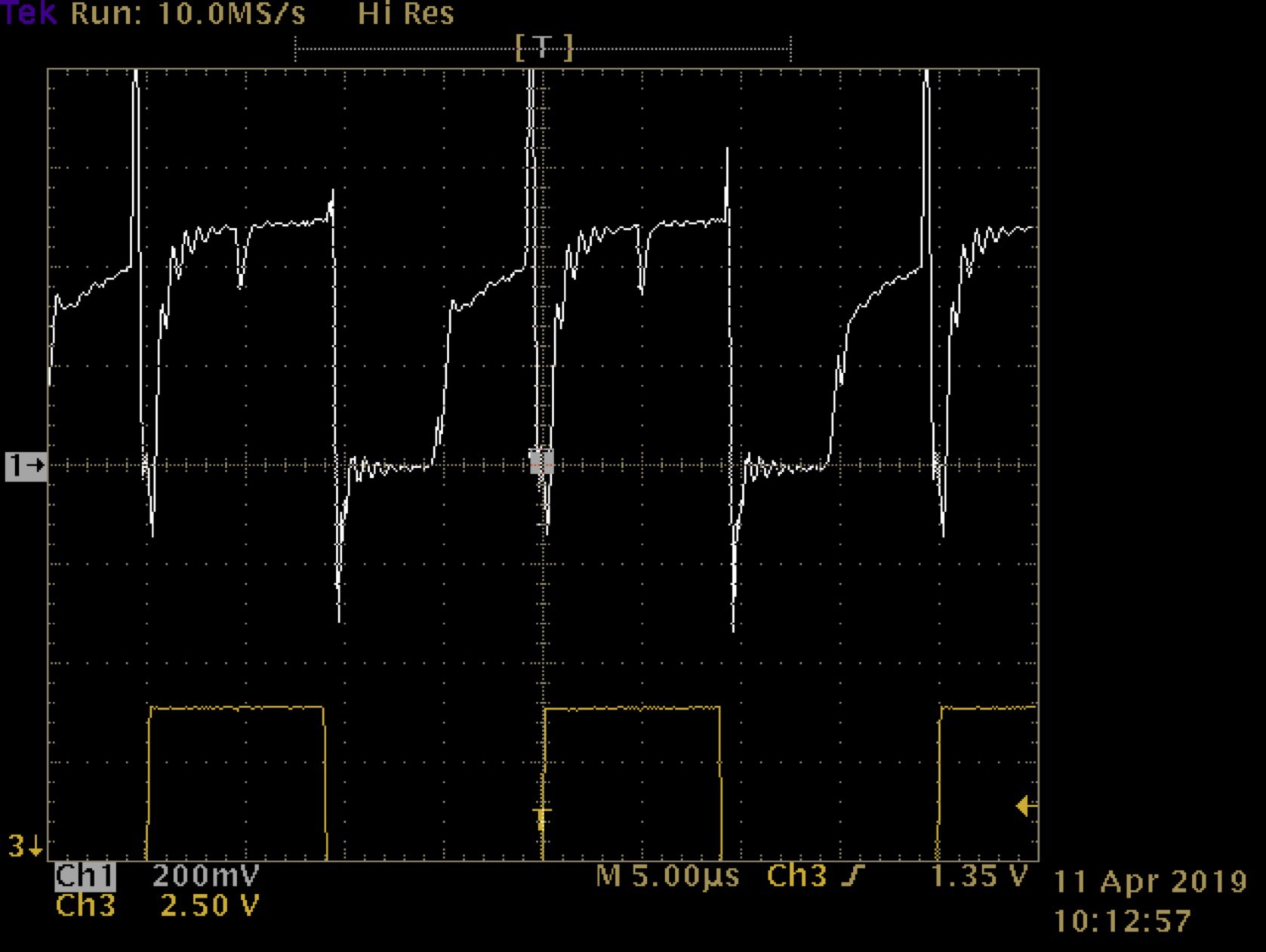

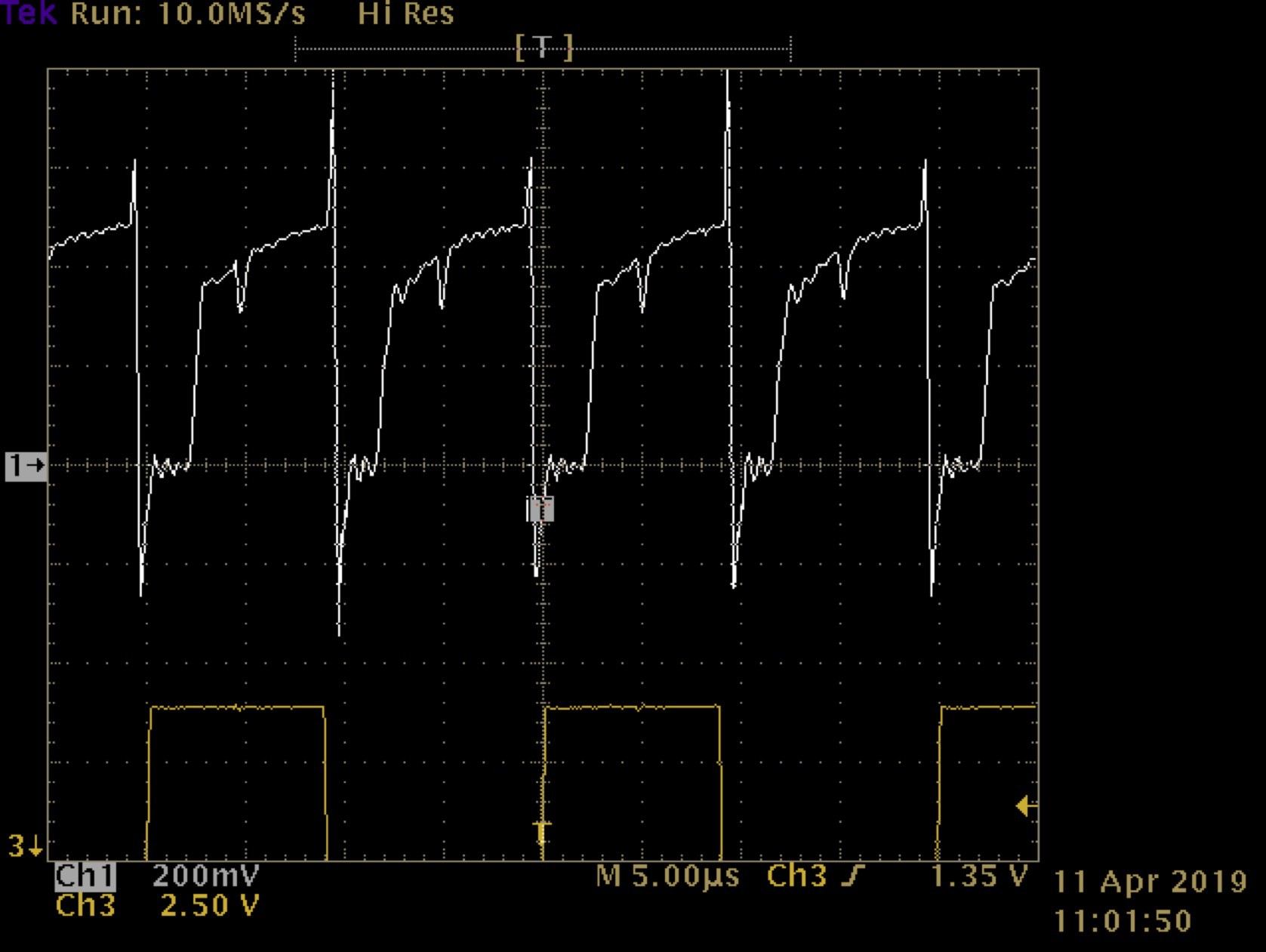

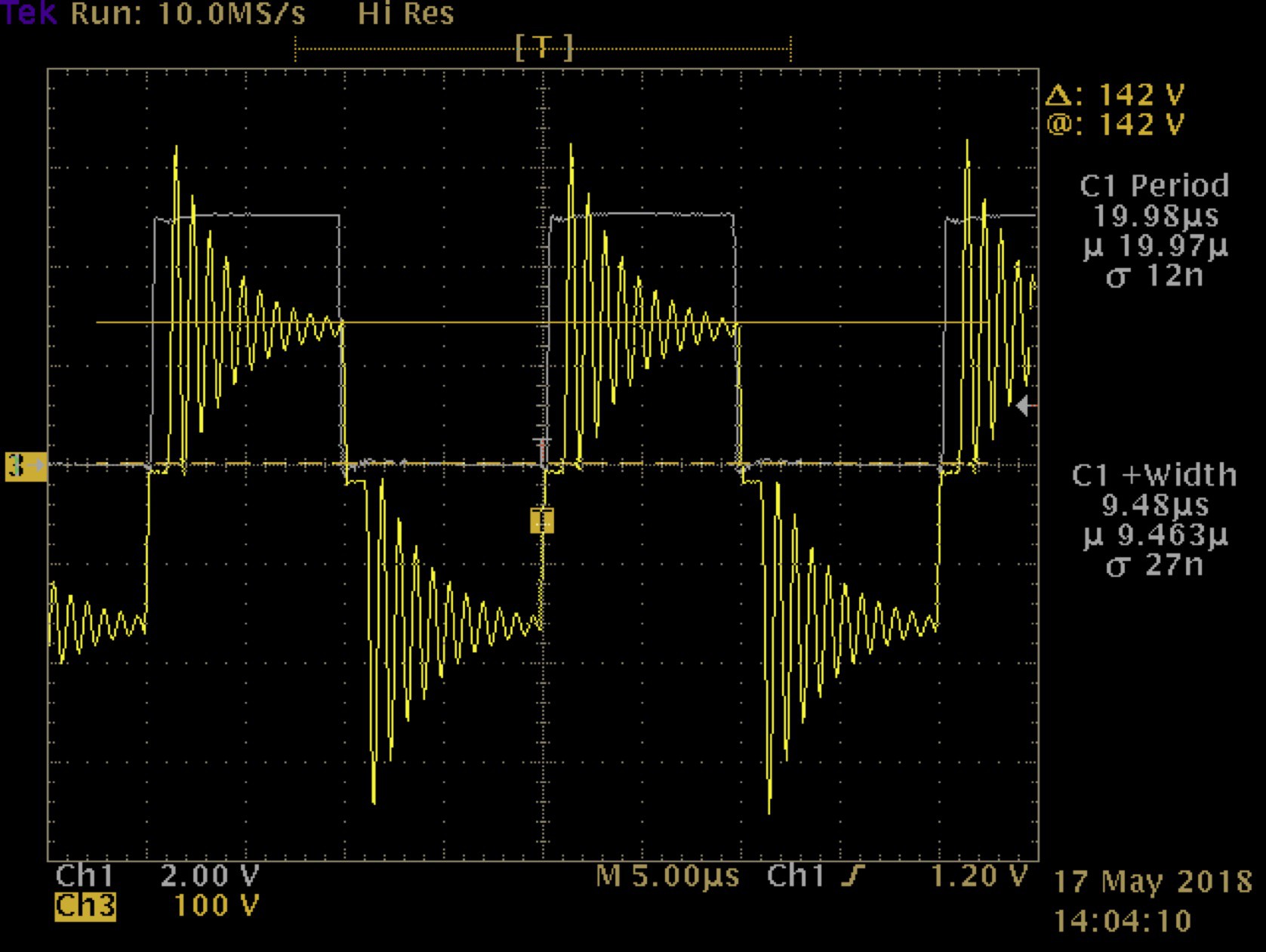

Here’s a trace of the scene of the accident.


CH1 is the low-side gate drive, CH2 is the center of one leg of the bridge (e.g. output to inductor), and CH3 is the same side high gate drive. You can see the gate break down and subsequently short the bridge. I spent weeks on it. It defied all logic: the high side transistors had all the voltage stress and they should have been the casualty. But not a single one of them failed. After finally abandoning the idea that it was an SOA issue I began searching for more subtle parasitics.


This trace is the detail behind the prior trace at the moment of failure. CH1 is the low side gate drive, CH2 is the center tap of the bridge. Notice the bump highlighted by the cursor - about 5.8V over 50nS. That bump is caused by the voltage drop across the low side gate resistor due to the high dv/dt of the high side MOSFET turning on and the resultant charging of the D-G capacitance on the low-side switch. Now, let’s look at the MOSFET’s gate & switching characteristics.
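The mechanism can be put into a first-order estimate: the D-G capacitance injects a current of C * dv/dt, and that current develops a voltage across the gate resistor. The Python sketch below ignores the G-S capacitance and the driver's output impedance, and the numbers in the usage note are illustrative only, not measurements or datasheet values.

```python
def miller_bump_volts(r_gate_ohms, c_rss_farads, dv_dt_volts_per_s):
    """First-order gate bump: V = Rg * Crss * dv/dt.

    Ignores Cgs and the driver's sink impedance, so treat it as a rough guide.
    """
    i_miller = c_rss_farads * dv_dt_volts_per_s   # i = C * dv/dt thru the D-G capacitance
    return i_miller * r_gate_ohms
```

For example, a hypothetical 10 ohm gate resistor, 100pF of reverse transfer capacitance, and 5V/nS of dv/dt gives `miller_bump_volts(10.0, 100e-12, 5e9)`, or about 5V. That shows how a bump of several volts is plausible with ordinary component values.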

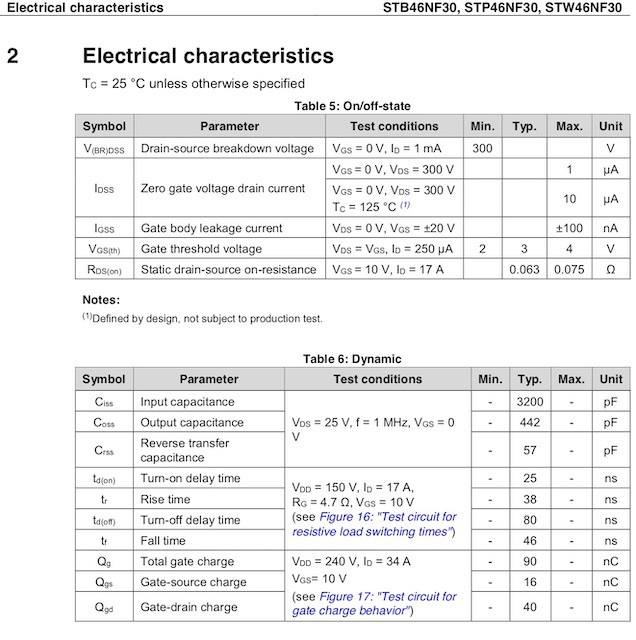


The minimum gate threshold is 2V - a poor margin for a high power design and something I should have picked up on during selection. Note the turn-on delay of only 25nS and a rise time of only 38nS. This thing is too fast for its own good. I tried lowering the gate resistor (beyond safe limits) and even a small shunt capacitor G-S but was not able to eliminate the problem; I would have to select another transistor.

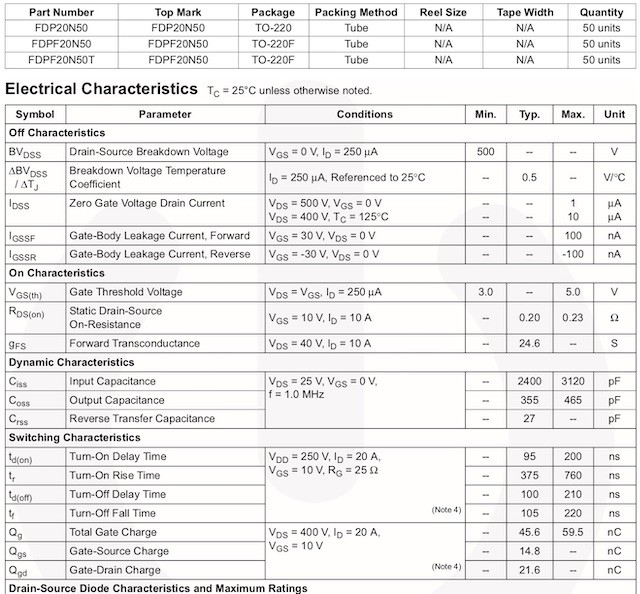

I fell back to a variant in the same family that I used on the prototype but selected one with a much better D-S rating: the Fairchild FDP20N50.


It’s slow and has an Rds(on) over three times that of the STP46NF30. But its reverse transfer capacitance is half that of the ST part and it has a better gate threshold margin. Also note the differences in total & G-D charge. Here’s a trace of the same event with the Fairchild MOSFET.

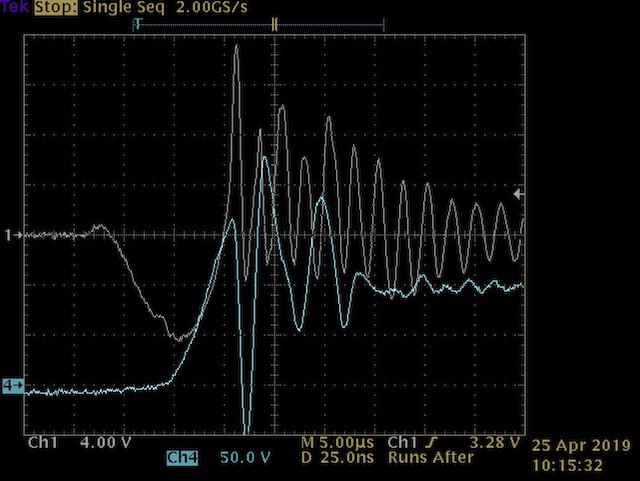


No gate bump. Note that the dv/dt of the high-side turn on is just as high with the Fairchild as with the ST. That’s because it’s induced by the inductor reversing polarity and not the switching speed of the high-side transistor. This is confirmed by the measured dissipation of the sine bridge. The thermal model for the FDP20N50 showed switching losses of over 12W but long runtime @ max load tests never got near that. I had to add a derating constant to the model to match observed results. And I haven’t gotten one to fail in destructive testing.

So why such a dramatic difference? I’m no expert on MOSFET construction but I have learned that it is very important not only to understand the parameters but also how they relate to the transistor’s construction. Putting all the parameters together (switching speed, gate charge, gate threshold, D-S rating, etc.) along with the performance graphs helps to do this. And carefully consider how the device will operate in the design and all of the stresses that adjoining components will place on it.

Last, make sure you have bench equipment fast enough to analyze high speed events. This design’s operating frequency is only 48kHz but switching events are much faster. Note the timebase in the last two traces - 25nS with a sampling rate of 2GS/s. These events weren’t even visible at 100nS. I was fortunate to have equipment fast enough to catch it.

-

Controller

08/01/2019 at 20:22 • 0 comments

In other logs I’ve written about how specific aspects of the controller design integrate with the hardware so this one explains the architecture in more detail.

Overview

The controller is a Microchip PIC16F1776 8-bit microcontroller (MCU) with 16KB of flash program memory and 1KB of static RAM. The system oscillator is set to 16MHz with an instruction speed of 4MHz. The firmware is written in ANSI C and compiled in ‘Pro’ mode using the XC8 compiler.

On-board peripherals handle all real-time control (bridge, current protection, ADC, etc.) so the MCU’s 4 MIPS is adequate and the firmware uses a polled architecture. The firmware’s main job is to provide operating envelope management and communicate status via the front panel LEDs or service port.

Most of the source code is well documented and supplements what’s presented here. You’ll notice that the compiled code size is large - this is to support the console interface.

Boot Process

The boot process is divided into three stages:

- NVM / CPU validation

- Peripheral configuration

- Inverter startup

At boot the Power Control (PCON) and CPU status (STATUS) registers are evaluated to determine if the boot was due to a watchdog or CPU trap (e.g. stack). If any of these conditions evaluate TRUE the boot will complete with error and a status code set on the front panel LEDs. The inverter will not start without power cycling which is done for safety.

A row of program flash is used to store the system configuration and the last word contains the checksum for the row. This value is compared to the dynamically generated checksum. Similarly, a mismatch causes the boot to complete with error, set an LED status code, and prevent normal startup without power cycling.

In both of the above situations the status code will be written to NVM. In the case of the checksum error this has a self healing effect: the entire row is rewritten with default values which should reset the checksum unless there’s a flash memory failure. Also, while the inverter won’t start, the service port is active and can be used to troubleshoot.

The second boot step is peripheral configuration. This starts with loading configurable parameters from NVM and then configuring the on-board peripherals. No validations are performed during this step.

Inverter startup is performed in the run-time loop. Once the data collection block validates the operating environment (e.g. temp, current, voltages), control proceeds to the boot (autostart) block. The NVM configuration directs what happens: the unit can remain stopped, start only the inverter section, or start inverter & sine sections. When the inverter is started several things happen:

- The bootstrap capacitors are charged. High-side shorts are also detected.

- A soft-start function slowly increases the duty cycle from 0 to the set value to reduce component stress and EMI.

- The steady state duty cycle is monitored to detect shorts (or other HW malfunctions that would reduce duty cycle).

If NVM also directs the sine section to start then the sine command pin (PIN_O_SINE) is asserted and the acknowledgment pin (PIN_I_SINE) is monitored to confirm start within the timeout period.

Any error encountered during startup sets the unit to a stopped state with the front panel LEDs displaying an error code. A power cycle is required to restart.

Run Loop

The run loop is a waterfall design and consists of several discrete conditional sections that execute sequentially:

- Watchdog timer: this is reset at the top of the loop.

- Data collection: system voltages, temperatures, and current. Many of these are averaged over several samples to eliminate noise and ripple. These values are used in subsequent processing blocks.

- Thermal management: turns fans on & off based on inverter temperature and thresholds set in NVM. A ‘feature’ of this block is that it can be used to measure the execution time of the control loop. This is done by setting a higher off temperature than on and measuring the duty cycle at the fan power pin. Best to do this with the fans disconnected.

- Run-time control: provides envelope protection and disables the unit if voltage, current, temperature, or power limits are exceeded. Some evaluations, like an input over-volt condition, require immediate shutdown. Others, like excessive power uptake, must be tolerated for short time periods to accommodate the operating requirements of connected equipment. These evaluations employ a simple Proportional-Derivative algorithm.

- Boot (or autostart): previously discussed.

- NVM status recording: most error codes that cause the MCU to shutdown the unit are recorded for troubleshooting.

- LED display: sets the front panel LEDs to display a status code or load in 25% increments.

- Console monitor: similar to the Unix ‘top’ command, this outputs various system values to the service port at one second intervals.

- Console service: processes commands entered via the console.
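As an illustration of the thermal management block in the list above, here is a Python sketch of fan control with hysteresis. It assumes the thresholds are the NTC composite voltages in mV given as the TF and TO defaults in the command set, and that a lower NTC voltage means a hotter inverter; the function name is hypothetical.

```python
FAN_ON_MV = 3000    # TO default: fans on at or below this NTC voltage (~45C)
FAN_OFF_MV = 3400   # TF default: fans off at or above this NTC voltage (~36C)


def fan_next_state(ntc_mv, fan_on):
    """Return the new fan state; the NTC voltage falls as temperature rises."""
    if ntc_mv <= FAN_ON_MV:
        return True          # hot enough to need the fans
    if ntc_mv >= FAN_OFF_MV:
        return False         # cool enough to stop them
    return fan_on            # inside the hysteresis band: hold the current state
```

The gap between the two thresholds keeps the fans from chattering when the temperature hovers near a single set point.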

NVM Storage

The MCU’s flash program memory is arranged in rows of 32 words. A row is reserved for storage of configurable parameters by defining an array of constant unsigned characters. Only the lower byte in each word is used for data since the memory cells of the upper byte only contain six bits. The compiler knows this and will only utilize the low byte.

The last two bytes of the array are reserved for the checksum which is calculated by summing bytes 0-29. The flash architecture requires that to write a new value to any byte in the row the entire row must be erased & rewritten.
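A minimal Python model of that row layout and checksum is sketched below. The high-byte-first storage order and the function names are assumptions for illustration; the text only states that bytes 0-29 are summed and the result occupies the last two bytes.

```python
ROW_BYTES = 32        # one flash row, low byte of each word used for data
DATA_BYTES = 30       # bytes 0-29 hold parameters, bytes 30-31 hold the checksum


def row_checksum(row):
    """Sum of data bytes 0-29, kept to 16 bits."""
    return sum(row[:DATA_BYTES]) & 0xFFFF


def store_checksum(row):
    """Write the checksum into the last two bytes (byte order is an assumption)."""
    value = row_checksum(row)
    row[30] = value >> 8
    row[31] = value & 0xFF


def row_is_valid(row):
    """Compare the stored checksum with a freshly computed one, as done at boot."""
    stored = (row[30] << 8) | row[31]
    return stored == row_checksum(row)
```

Because 30 bytes can sum to at most 7650, the total always fits in the two reserved bytes.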

NVM is updated via three pathways:

- Checksum error - previously covered in boot discussion.

- Console command that updates an NVM value. NVM is updated as part of the console routine processing the command.

- Error status code. Abnormal conditions detected by the MCU will cause it to shut down the inverter and set an error code. All error codes except under-volt-lockout (which happens whenever the unit is turned off and is considered an expected error) are recorded in NVM. Precautions are taken to ensure that Vcc is high enough so that even if the unit has lost power, the Vcc hold-up time is sufficient to allow the erase & write cycle to complete without corrupting NVM. Note that only the last status code is written to NVM and, since there’s no real-time clock function, it does not include a time stamp.

Interrupts

The polled architecture eliminates the need for interrupts with two exceptions. A system timer (TMR8) is used as a rudimentary chronological countdown timer, primarily for the console monitor function. Second, the USART peripheral generates an interrupt when data is received.

Data Acquisition

With the exception of the COG timing (Complementary Output Generator) all data acquisition is performed by the 10-bit ADC peripheral. An abstraction function, ReadVoltage, manages the ADC hardware interface and allows the firmware to select the analog channel and convert the result to an object of type volts. The ADC’s Vref is the internal 4V reference so no calibration is necessary. The raw ADC value is stored along with the scaled voltage and modulus (mV). The volts & mV are also stored as a composite value in an unsigned 16-bit integer. The 10K/1K places are reserved for volts and the 100/10/1 place for mV. So 3.74 volts would be stored as 3740.

All of this is clunky and imposes a lot of overhead on a processor with no multiply/divide ALU, so why?

- It’s an outgrowth of development from its predecessors where a human interface to display what’s going on was necessary.

- The overhead isn’t so bad that it impedes the controller’s function or presents a safety hazard.

- Given 1 & 2, I don’t want to invest the time to clean it up!
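Returning to the composite format described before the list, the conversion can be sketched in Python as follows. It assumes the reference is exactly 4.096V (so one ADC count is 4mV) and ignores any per-channel divider scaling; the dictionary is only a stand-in for the firmware's volts object.

```python
VREF_MV = 4096       # assumption: the internal reference is 4.096V
ADC_COUNTS = 1024    # 10-bit ADC


def read_voltage(raw):
    """Convert a raw ADC count into volts, mV remainder, and the composite value."""
    total_mv = raw * VREF_MV // ADC_COUNTS   # 4mV per count under the assumption above
    volts, mv = divmod(total_mv, 1000)
    return {
        "raw": raw,
        "volts": volts,                      # whole volts
        "mv": mv,                            # modulus in millivolts
        "composite": volts * 1000 + mv,      # e.g. 3.74V is stored as 3740
    }
```

A raw count of 935 gives 3 volts and 740mV, which is the 3740 composite used as the example in the text.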

The duty cycle of the COG (bridge) is measured using Timer5 with the gate control feature. This allows the timer to be cleared and triggered by the assertion of one of the COG pins. Timing ends when the COG pin clears. Since both the COG & timer are referenced to the same clock it provides a very accurate on-time value. The function that measures this is blocking - meaning that foreground controller execution is paused while timing. This comes out to just over 10uS. The function is written to abandon timing beyond this to prevent a hung state that would result in a watchdog reset.

COG timing is vital to the over-current protection function since it looks at this value to determine if hardware current limiting is engaged (the DC will be substantially reduced). Like the ADC composite voltage this is an odd approach: the ‘normal’ way for firmware to trap over-current events is to have the comparator trigger an interrupt which in turn sets a flag or increments a counter that the foreground code can monitor. In this design the noise on the current sense line at the end of a cycle is substantial and creates many interrupts. And, unlike the COG feature to ignore falling events (blanking time), this can’t be done with interrupts. Hence, the rapid fire of interrupts causes the MCU to reset. Some will argue that this is compensation for a poor hardware design and there is merit to that. I would counter that this solution allows for a simple, and cheap, design without compromising reliability or safety, although EMI may be another matter.

Service Port

The service port is a micro-USB plug that provides a TTL level RS232 interface and the ability to update the firmware using Microchip’s PICkit 3.

PICkit 3 to Micro-USB Adapter

The port follows the USB standard for power so that if someone accidentally plugs in a charger it should not, in theory, damage the controller (I have not tested this).

The RS232 interface provides a simple console interface to configure & control the unit’s operation. This includes starting & stopping the unit and setting NVM values. The interface runs at 115.2Kbps, 8 data bits, 1 stop, no parity, and no flow control. The RS232 service routine uses the new line (0x0A) character to terminate a command line and also recognizes the backspace (0x08) as destructive.
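The line handling can be modeled with a small Python function that is fed one received byte at a time. This is a sketch of the behavior described, not the firmware's routine; the function name and the use of a `bytearray` buffer are assumptions, and carriage returns are not treated specially.

```python
NEWLINE = 0x0A      # terminates a command line
BACKSPACE = 0x08    # destructive backspace


def feed(line, byte):
    """Process one received byte; return a completed command string or None."""
    if byte == NEWLINE:
        command = bytes(line).decode("ascii")
        line.clear()                 # ready for the next command
        return command
    if byte == BACKSPACE:
        if line:
            line.pop()               # remove the last character, if any
        return None
    line.append(byte)
    return None
```

Feeding the bytes `D`, `X`, backspace, `S`, newline yields the command `DS`.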

To connect to the console I built up a Bluetooth adapter I call BTCON. It’s another project I have posted.


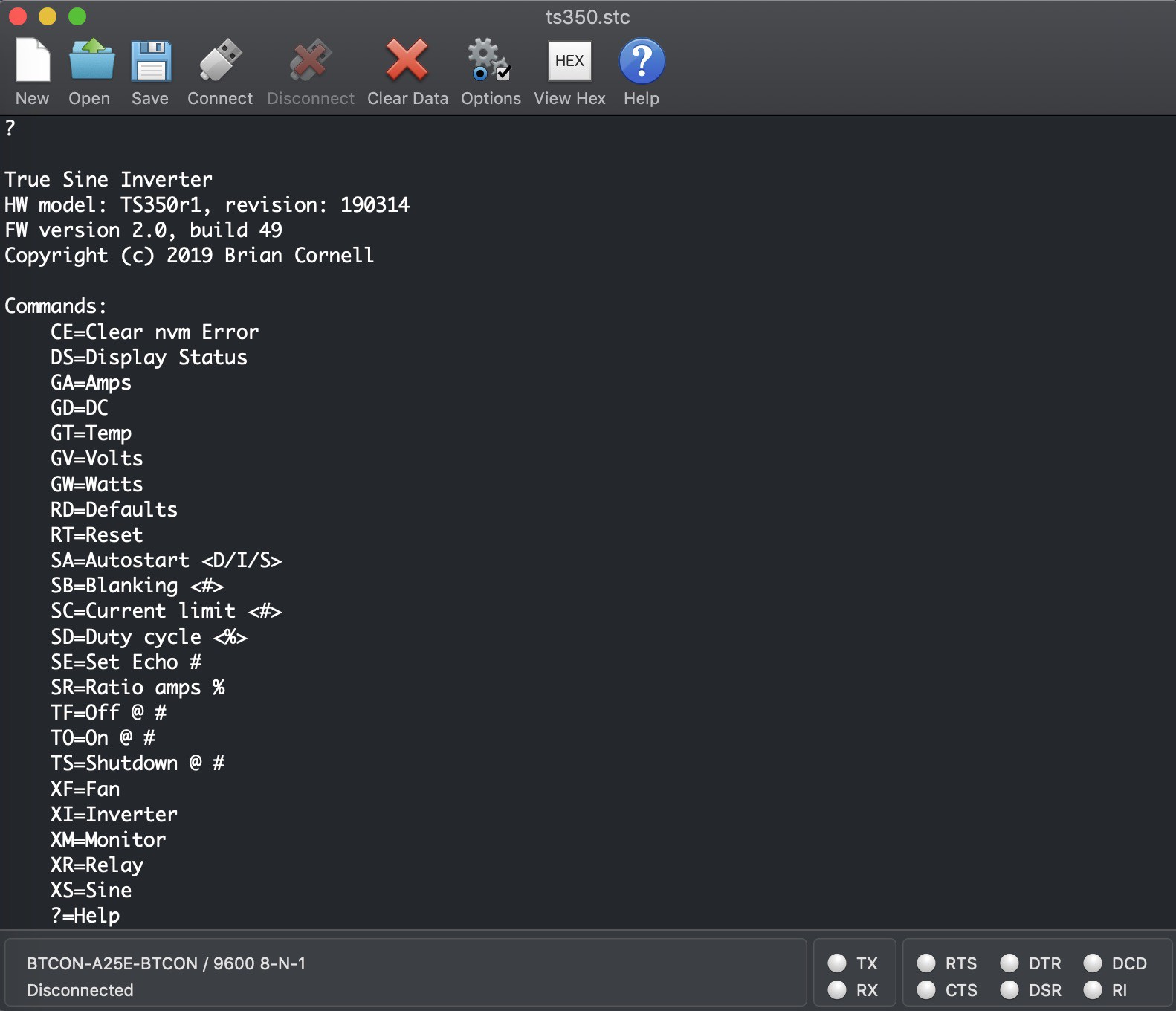

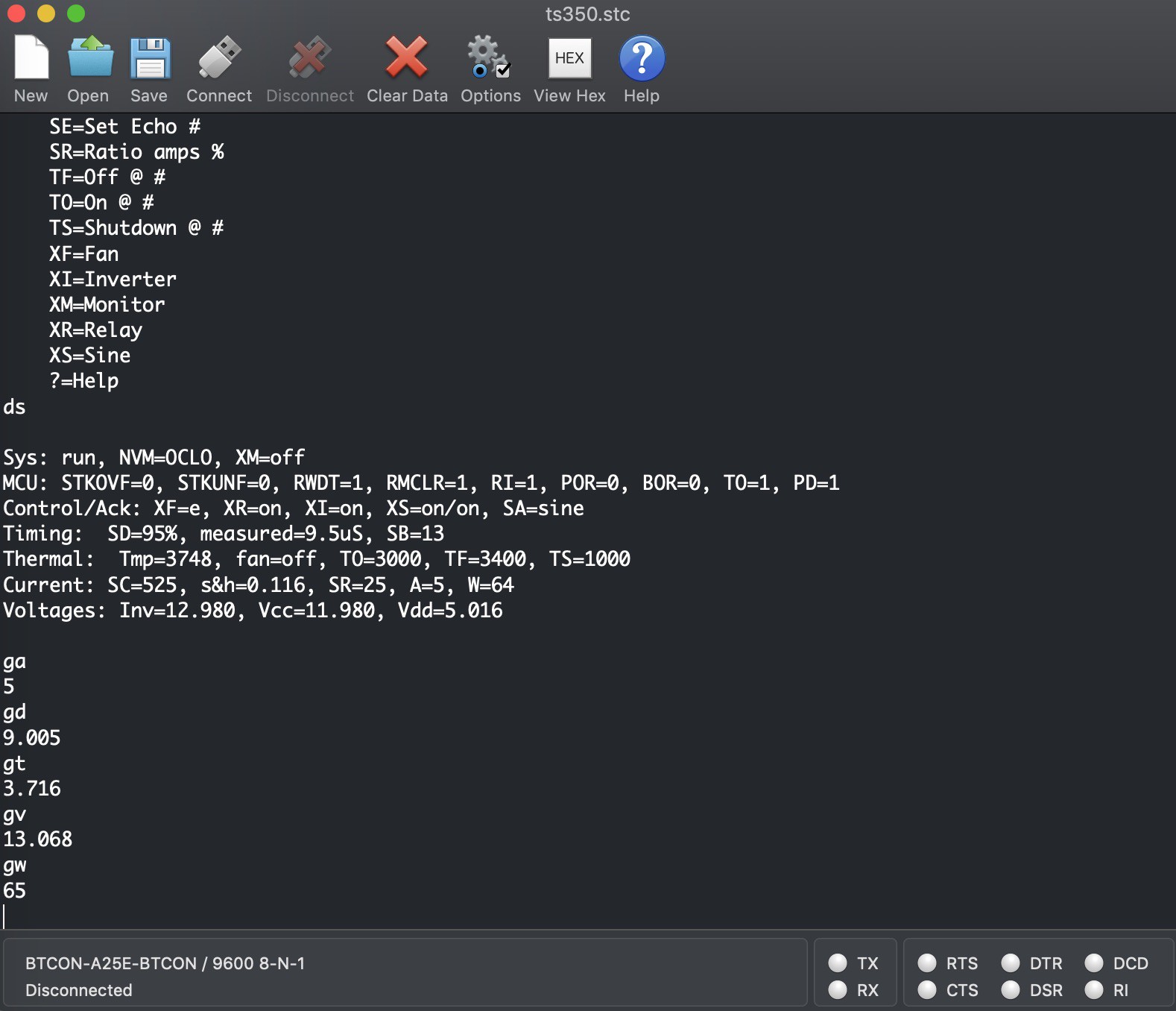

Command Set

The command set utilizes two characters and many require a single parameter. The first character of the command defines its group - like ’S’ for Set or ’T’ for Temperature. The help, response, and status displays can be a bit cryptic - that is done to conserve program space. To help, many of the status responses use the command value as the prefix for the field.

Below is an explanation of each command. You can get this list by typing a question mark (?) and pressing ENTER too. Be sure you know what you’re doing before changing values - the unit could easily be destroyed with an improper setting.

Miscellaneous commands.

CE Clear nvm Error.

DS Display Status. Displays all configurable parameters along with all acquired data and the unit’s operating state.

The Get commands are taken from GPIB syntax in that the response only contains the value to make consumption by a data collector easier.

GA Amps. Returns an integer representing the averaged input current.

GD DC. Returns the duty cycle in uS.

GT Temp. Returns the voltage of the inverter’s NTC. Refer to the thermal tab in the design workbook to get the temperature.

GV Volts. Returns the inverter’s input voltage.

GW Watts. Returns the inverter’s input wattage (load).

Reset commands.

RD Defaults. Reset NVM values to defaults.

RT Reset. Reboot the MCU.

Set commands update real-time and NVM.

SA Autostart <D/I/S>. Set autostart to Disabled, Inverter only, or Sine (includes Inverter).

SB Blanking <#>. The amount of time that COG falling events are blanked (ignored). Multiply this value by the system oscillator period to obtain the time.

SC Current limit <#>. The maximum current, represented as a voltage, before current limiting begins. The value entered here is the DAC value - not the actual voltage. To calculate the voltage: (#/1024)*4.096. Using the default of 525 gives 2.1 volts. To know how many amps this is, divide by 15mV per amp, so 2.1/0.015 = 140. That’s not a typo - 140 amps! So be careful if you think you need to increase this value.

SD Duty cycle <%>. Sets the duty cycle in percent. Enter a value between 5-95.

SE Set Echo #. Sets character echo off when #=0. Any other character sets on.

SR Ratio amps %. Reduces the current read from the S&H circuit by % percent to obtain a first order approximation of RMS. Refer to the Current Monitoring and Over-Current Protection log for a technical explanation.

Temperature commands update real-time and NVM.

TF Off @ #. Turns fans off at # temperature where #=the composite voltage of the inverter NTC. The default of 3400=3.4V or ~ 36C.

TO On @ #. Turns fans on at # temperature where #=the composite voltage of the inverter NTC. The default of 3000=3.0V or ~ 45C.

TS Shutdown @ #. Shuts the inverter off at # temperature where #=the composite voltage of the inverter NTC. The default of 1000=1.0V or ~ 92C.

Toggle commands echo their on/off status when executed.

XF Fan. Disable / enable. Use with care.

XI Inverter. On or off. If Sine is on and inverter is turned off sine is stopped first.

XM Monitor. On or off.

XR Relay. On or off. If the unit is under load and the relay is turned off the start circuit fuse will likely open.

XS Sine. On or off.
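The arithmetic behind the SC and SB commands is easy to get wrong by hand, so here is a small Python sketch of both conversions. The constants come from the command descriptions above (1024 DAC steps, 4.096V reference, 15mV per amp) and from the 16MHz system oscillator mentioned in the Overview; the function names are hypothetical.

```python
DAC_COUNTS = 1024          # DAC steps, from the SC formula
DAC_VREF = 4.096           # volts, from the SC formula
SENSE_V_PER_AMP = 0.015    # 15mV per amp, from the SC description
FOSC_HZ = 16_000_000       # system oscillator, from the Overview


def current_limit_volts(dac_value):
    """SC value -> comparator threshold in volts."""
    return dac_value / DAC_COUNTS * DAC_VREF


def current_limit_amps(dac_value):
    """SC value -> current limit in amps."""
    return current_limit_volts(dac_value) / SENSE_V_PER_AMP


def blanking_ns(sb_value):
    """SB value -> blanking time in nanoseconds (value times oscillator period)."""
    return sb_value * 1e9 / FOSC_HZ
```

The default SC value of 525 works out to about 2.1V and 140A. As a made-up example, an SB value of 8 would correspond to 500nS of blanking.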

Peripheral Propagation Delay Considerations

In early testing I had an odd and seemingly random problem where one side of the bridge would miss a complete cycle. It only appeared when the duty cycle was above 92% or so. That should have been my clue but I ignored it and spent a lot of time chasing phantoms - current sense, gate drive, transformer saturation, etc. Finally I came back to that and studied the current sense timing. Remember all that noise on the sense line at the end of a cycle I’ve talked about?

Turns out that noise ring has a duration just over 500nS so, when the DC is at 9.5uS, it is still present at the start of the next cycle. The COG’s FE (falling event) configuration was to trip on a logic low from the comparator. Look at the logic diagram in the Current Monitoring and Over-Current Protection log. Theoretically PWM11’s output should be high and hence CLC3 should have prevented propagation of the low state from CM2. The problem was the propagation delay from PWM5->PWM11->CLC3. I didn’t bother to measure it and would guess it’s probably on the order of 10nS or less but it was enough.

The fix? Change the COG’s FE mode from logic low to state transition. Once more the MCU’s flexibility bailed me out. The real lesson here is to never ignore noise & rings: they will burn you in myriad ways no matter how innocent they look or what workarounds you find. Also, don’t forget to pay attention to on-chip propagation delays.

-

Input Power Management

05/25/2019 at 17:25 • 0 comments

This covers the input power connections, control & protections, and inverter auxiliary supplies.

In keeping with the design philosophy that minimizes the unit’s dependency on the enclosure, 45A Anderson PowerPoles are used to connect to a power source. They are quite effective in providing low connection impedance and ease of use. I do have concern for the long-term mechanical durability of the PCB mounts, particularly with a 30mil board, since the insertion force is considerable. This is why a strap is placed around the connector. Note that Anderson offers mounting “wings” for this application but they triple the width of the connector footprint.

The entire unit is fuse protected with two paralleled 30A automotive-style MINI fuses. These are the highest amperage value available in that form factor and overall occupy the smallest space. The fuses connect to the input of the Solid State Relay (SSR) and power switch S201. The switch is a low current type (rated 5A) that powers the start circuit and auxiliary supply. Switching of power to the inverter bridge is handled by the SSR. This scheme avoids the use of a bulky high-current mechanical switch, enables inrush management, and eliminates sparks as a potential ignition source.

The start (switched) circuit is protected by a PCB mounted 1.6A fuse (F203). It is intended to disable the unit if an internal short develops, or if the unit is connected with reverse polarity or to excessive voltage. As described in the Overview of Operation, the start circuit’s main function is to provide over-volt protection and that detail won’t be repeated here. Essentially it consists of pass transistor Q205 and provides two levels of over-volt protection. The first level is non-destructive and disables the unit if connected to a source in excess of 16V but less than 30V. The second level engages a zener shunt that opens F203.

U201 is a 6W SMPS in a DIP package. It provides Vcc and, via LDO U202, Vdd supplies to the inverter section. It is connected to the output of pass transistor Q205. The auxiliary supply also powers the cooling fans which are switched by Q207 via controller U101.

The SSR consists of two paralleled MOSFETs, Q202 & Q204, that connect the inverter bridge to the power source. During operation their combined Rds(on) at operating temperatures ~ 90C is less than 4mΩ. When the unit is first powered on they are off and the bridge capacitors, C301-4, are charged via R210. D204 prevents power from the inverter bridge from powering the auxiliary supply when the unit is switched off.

The SSR is connected to the positive input and does not provide reverse polarity protection. The prototype had this but it was eliminated to conserve space and reduce cost. The SSR requires a floating gate drive which is accomplished with a simple flyback circuit. It is driven directly by controller U101 with a fixed duty cycle, 100kHz PWM. It provides a stable 15V drive with ~ 0.5V of ripple and a turn-on time less than 100uS.

The inverter bridge contains substantial bulk capacitance - about 4800uF. This is necessary since the input impedance is unknown: connection lead length, wire gauge, and impedance of the source. More capacitance would be better but cost & space become an issue. The design criteria expects lead lengths of about a meter using 10AWG wire connected to a 12V automotive style power system. Under these conditions this bulk capacitance can source full current (~48A) for a switching cycle and will keep the switching frequency ripple under 200mV at full load. Line frequency (60Hz) ripple will be about one volt and is unavoidable.

Ignoring the impedance (e.g. cut-off frequency) of the connection wiring and given:

(A * s) / V = C (1)

Rearranging: (C * V) / s = A (2)

Solving: (0.0048 * 0.2) / 0.00001 = 96A (3)

But extending the time to 4mS, about a quarter of a 60Hz sine cycle, and allowing a 1V drop:

(0.0048 * 1) / 0.004 = 1.2A (4)

This emphasizes the importance of a low impedance source connection.
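The arithmetic above can be checked with a few lines of Python. This is only a sketch: the function name `available_current` and the constant names are illustrative and do not come from the TS350 firmware or design workbook.

```python
def available_current(capacitance_f, allowed_drop_v, interval_s):
    """Average current (A) a capacitor can source while its voltage
    sags by allowed_drop_v over interval_s seconds: I = C * dV / dt."""
    return capacitance_f * allowed_drop_v / interval_s


BULK_CAPACITANCE_F = 0.0048  # ~4800uF of bridge bulk capacitance

# 10uS interval with 200mV of allowed ripple, as in equation (3)
switching = available_current(BULK_CAPACITANCE_F, 0.2, 10e-6)

# 4mS interval with a 1V allowed drop, as in equation (4)
line_freq = available_current(BULK_CAPACITANCE_F, 1.0, 4e-3)

print(f"10 uS interval: {switching:.1f} A")
print(f"4 mS interval:  {line_freq:.1f} A")
```

Over a switching interval the capacitors alone can cover the load; over a large part of a line cycle they cannot, so the source and its wiring have to carry the current.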

-

Inverter Gate Drive and Switching

05/21/2019 at 21:04 • 0 comments

I’ve written about the inverter’s transformer design and current management scheme in other logs so I figured I’d round it off with one on the gate drive and transistors.

Layout

A big goal in this design was a reduced layout in terms of both physical space and electrical distance. Going vertical seemed like a sensible way to do this. It has the added advantage of placing the low power & logic components on a separate board thereby reducing thermal stress.

This part of the design has worked well. The 100mil right-angle mounting pins aren't the best for density but they do provide good support. A separate board allows for potential reuse in other designs and for upgrades without obsoleting the base design. The main (power) board can also use heavier copper for the high currents without creating problems for the close traces required of the logic components.

Gate Drive

International Rectifier’s IRS21864 monolithic half-bridge gate driver is used. It’s actually a high voltage model and is used on the sine section as well. It’s used here to reduce the parts mix and because it supports a 4A gate drive source/sink and is single supply. I also selected it because of prior experience with other parts from this family. They are very durable, have good noise immunity, and tolerate ridiculous amounts of ground bounce. Ground bounce refers to the phenomenon of Vs and COM moving below ground. Both are due to high di/dt, transformer flyback, and the parasitic inductances (e.g. transistor leads, PCB traces, etc.).

Vs below ground refers to the gate driver’s Vs pin that is connected to the center leg of the half-bridge. During turn-off of the low side the transformer’s primary reverses polarity by flyback action. Vs will move below ground by the MOSFET body diode’s Vf plus series voltages induced by the parasitics and the di/dt.

COM below ground refers to the gate driver’s COM pin that is connected to the source of the low-side MOSFET. This is the reverse of Vs: during turn-off the parasitic inductances in series with the transistor and the star ground reverse polarity and induce a flyback voltage based on the di/dt.

It doesn’t take much parasitic inductance to wreak havoc. Given that the instantaneous voltage across an inductor is:

L * (di / dt) = V (1)

Conservatively assuming that the total parasitic inductance of the source-ground is 7nH (a standard height mounted TO-220 could have this much), using the Iin_max (current in, max) value for this design of 45A, and a turn-off / fall time of 84nS (ref. ‘mosfet-inv’ tab of design workbook):

7nH * (45 / 84nS) = 3.75V (2)

I haven’t done formal measurements on the design but I’m sure that the actual voltages are higher than this, which is a key reason why I didn’t use low-side current sensing in this design. IR guarantees the gate driver operational with COM up to 5V below ground. Beyond this damage occurs due to the internal diode structures.
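A short Python sketch of the same estimate, using the figures quoted above. The function and constant names are made up for illustration, and the last value (the inductance at which the estimate would reach the 5V COM limit) is simply the same formula rearranged, not a measured figure.

```python
def induced_voltage(inductance_h, delta_i_a, delta_t_s):
    """Voltage across an inductance for a linear current change: V = L * di/dt."""
    return inductance_h * delta_i_a / delta_t_s


PARASITIC_L_H = 7e-9   # source-to-ground parasitic inductance estimate
I_IN_MAX_A = 45.0      # Iin_max for this design
FALL_TIME_S = 84e-9    # turn-off / fall time
COM_LIMIT_V = 5.0      # COM-below-ground limit quoted for the gate driver

v_com = induced_voltage(PARASITIC_L_H, I_IN_MAX_A, FALL_TIME_S)
headroom_v = COM_LIMIT_V - v_com

# Rearranged: L = V * dt / di, the inductance that would use up the whole limit
l_at_limit_h = COM_LIMIT_V * FALL_TIME_S / I_IN_MAX_A

print(f"estimated COM excursion: {v_com:.2f} V")
print(f"headroom to 5 V limit:   {headroom_v:.2f} V")
print(f"inductance at the limit: {l_at_limit_h * 1e9:.1f} nH")
```

With these inputs only a couple of extra nanohenries separate the estimate from the limit, which is why lead length and mounting height matter so much here.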

The ground margin on Vs is higher and depends on the gate driver’s Vcc. In this design the margin is 13V:

Max Vcc = 20V

- Design Vcc = 12V

Vcc Margin = 8V

+ Ground Margin = 5V

Total Margin = 13V

The high-side driver will latch-up when Vs exceeds 5V below ground but won’t incur damage until the total margin is exceeded. The TS350 is likely exceeding 5V but it isn’t a problem since the high-side transistor is off.

Fortunately the design didn’t require special compensation to protect from these conditions and resulted in a simple design. The gate drive resistors are sized to minimize switching times within the source/sink limits of the gate drivers. Current limit resistors in the bootstrap charge circuit protect the diodes. Generous bulk capacitance is provided between Vcc and COM since this is the current source for both bootstrap capacitor charge and low-side gate drive. Note the bulky 2220 packages - not required but a symptom of the MLCC shortage.

The PCB layout is optimized to minimize the gate drive current loops. Although the controller gate traces meet criteria as a lumped system the ‘B’ traces include a delay to match the ‘A’ side. Ground pins are interleaved with high speed signals to minimize crosstalk. Both internal layers are used as ground planes.

Bridge Transistors

TI’s CSD19535KCS is used to switch the inverter bridge. It was selected for its low Rds(on), high switching speed, relatively low gate charge (Qg), and high current handling capability. Like the gate drivers, this transistor is also used in the SSR. So far it has proven to be a durable performer - no failures in semi-destructive (high temps, shorts) testing yet.

As mentioned in the current management log these are mounted at minimum height to reduce their parasitic inductance. RC snubbers are included as optional in the design. Evaluation showed that they did not materially reduce transistor heat dissipation or switching rings. Rather they increased losses thru their own dissipation.

-

Current Sense Post Mortem

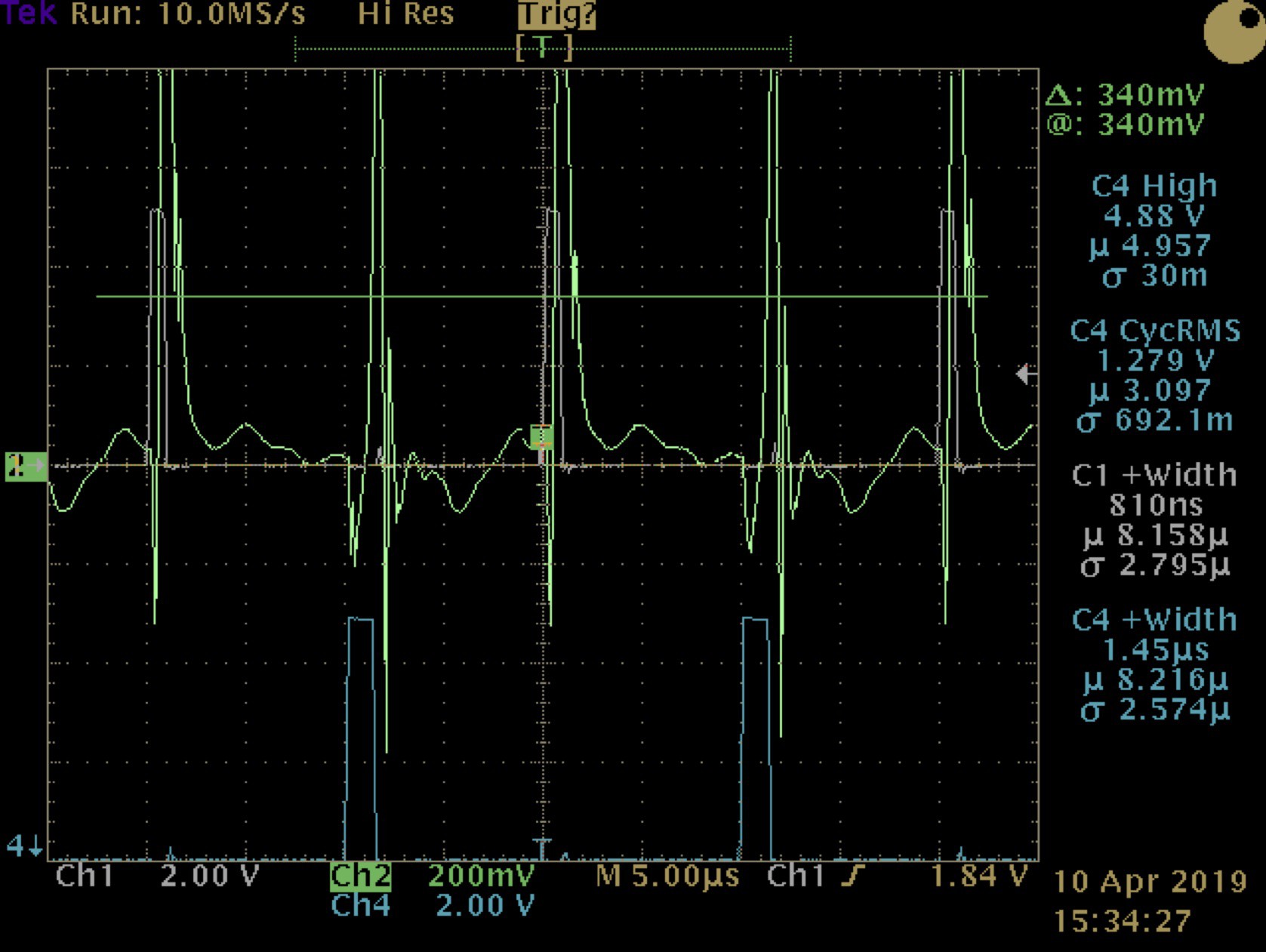

05/13/2019 at 18:26 • 0 comments

There is a problem with the layout of the current sense circuit that distorts the ‘B’ side signal. Here is a trace:

![]()

Channel 1 is the sense signal at the gate driver card pin connecting to the main board. Channel 3 is the ‘A’ side drive signal, so when 3 is low the sense signal is coming from the ‘B’ side of the bridge. Amazingly, it doesn’t appear to affect the safe operation of the unit. Below is a trace of over-current limiting prompted by a surge event on the sine output:

![]()

Channel 1 is the gate drive for the ‘A’ side and channel 4 is ‘B’. Channel 2 is the current sense signal from the gate card pin. Note the delay and subsequent higher duty cycle for the ‘B’ side; it is still short enough to protect the transistors.

Instruction ten describes a fix to improve the B signal and it’s highly recommended that you do this. Below is the output from the sense pin with the modification.

![]()

So what happened? Poor layout is to blame: the circulating currents in the bridge traces interfere with the signal. Using discrete wires avoids this.

A proper fix requires placing the amplifier closer to the sense resistors and at the same time keeping the distance from the amplifier output to the controller short as well. As explained in the log on the general design problem that is essentially a redesign.

-

Current Monitoring and Over-Current Protection

05/13/2019 at 18:22 • 0 comments

The TS350R1 employs a discrete, high-side current sense circuit in the inverter primary that is the basis for all over-current protection & monitoring in the unit. Reference the main & inverter gate drive schematics for details.

The current signal is derived from a pair of 3mΩ (R302, R303) sense resistors that create a 1.5mV/Amp voltage drop across them. A resistor solution was chosen due to its compact size and low cost. They are placed in a high-side configuration due to the high currents and to provide better protection for the bridge. Low-side circuits can’t detect shorts occurring upstream and can contribute to ground bounce related problems for the gate drivers.

The challenge with high-side sensing is that it isn’t ground referenced so the conditioning circuit must operate from a floating supply or be capable of handling the common mode voltage. Since isolation isn’t practical the TI OPA197 (U2) is used. It allows for rail-rail inputs and supports slew rates up to 20V/uS which is satisfactory for per-cycle current limiting. It is configured as a differential amplifier. R4 & R5 in conjunction with D2 & D3 provide input protection from voltage spikes. R4 & R6 set the gain at 10 and Q1 level shifts the output (removes the CM voltage) for direct consumption by controller U101.

The controller processes the current sense signal to provide per-cycle (SOA) protection for the bridge MOSFETs and to perform overall power management thru two distinct hardware pathways using on-chip peripherals.

![]()

MOSFET SOA Protection

Per-cycle protection uses DAC1 to provide a reference voltage to comparator CM2. The reference voltage is set to a value high enough (140A) to support the significant currents required of equipment startup surges but still within the MOSFET’s Safe Operating Area. CM2 compares the sense value to this reference and produces a H->L transition that is gated thru logic cell CLC3 to a Falling Event input of the COG (Complementary Output Generator). A transition immediately terminates the gate drive for that side of the bridge for that cycle.

The role of CLC3, along with PWM11, is to provide a blanking function at the beginning of each half cycle. Blanking prevents a premature termination of the gate drive and is set to a few hundred nanoseconds (defined by the duty cycle of PWM11). This is necessary because of the inherent noise in the current sense signal. Noise is a problem for all sense circuits based on shunt resistors but it is more significant in high current, high frequency applications due to parasitic inductance and poor signal to noise ratios.
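To get a feel for the voltages involved, here is a rough Python sketch of the scaling from bridge current to the comparator reference. The 1.5mV/Amp shunt drop and the gain of 10 come from the description above. Treating the Q1 level shifter as unity gain with no offset is an assumption made only for this illustration, so the real reference programmed into DAC1 may differ. All names are hypothetical.

```python
SHUNT_MV_PER_A = 1.5     # drop across the sense resistors (from the text)
AMP_GAIN = 10.0          # differential amplifier gain (from the text)
LEVEL_SHIFT_GAIN = 1.0   # ASSUMPTION: Q1 stage treated as unity gain, zero offset


def sense_volts(current_a):
    """Approximate sense voltage at the controller pin for a given current."""
    return current_a * SHUNT_MV_PER_A * 1e-3 * AMP_GAIN * LEVEL_SHIFT_GAIN


def current_from_sense(volts):
    """Inverse of sense_volts: current implied by a sense voltage."""
    return volts / (SHUNT_MV_PER_A * 1e-3 * AMP_GAIN * LEVEL_SHIFT_GAIN)


# Reference voltage corresponding to the 140A per-cycle trip point
trip_reference_v = sense_volts(140.0)

print(f"sense scale:    {sense_volts(1.0) * 1e3:.0f} mV/A")
print(f"trip reference: {trip_reference_v:.2f} V")
```

Under these assumptions the signal works out to 15mV per amp, which shows how little voltage separates normal operation from a trip and why blanking is needed against switching noise.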

Current Averaging

The current sense signal is also connected to the non-inverting input of opamp OPA2 which is configured as a voltage follower. This is necessary because of the relatively high impedance of the current sense circuit’s output and, most importantly, for OPA2’s tristate feature. Tristate, along with C1 (C102 on the main schematic) form a sample & hold circuit that averages the current sense signal from both sides of the bridge and allows the controller to derive RMS current. CLC2 gates OPA2’s output to ensure that it is only passing a signal when the bridge is active.

The voltage read from C1 represents an averaged value of the sense signal but it reads high. This is because the sense circuit delivers an exaggerated slope with a large spike (parasitics) at the end of each cycle which inflates the measured S&H voltage. Since the controller has limited processing bandwidth a linear coefficient is applied to reduce the read current to obtain a value that is +/- 1.5A of the actual RMS value.

The controller multiplies this RMS current value with the inverter’s input voltage to estimate the input power and uses this to perform load management. The power value drives the front panel LED meter and overload shutdown.

Overload and Power Management

In this design current, much more than power, is the most important parameter to watch for unit protection. That said, the controller manages by power because this is the better metric for the entire unit over its input voltage range. With a 15V input and 80% efficiency the input power is:

Po / n = Pi -> 350 / 0.8 = 437.5 watts (1)

And the required input current is:

Pi / Vi = Ii -> 437.5 / 15 = 29.16 amps (2)

Whereas at 11 volts the required input current is:

437.5 / 11 = 39.77 amps (3)

So if the load was managed by current alone and 33A was the expected full load current, a 15V input at that current would be delivering:

(Ii * Vi) * n = Po -> (33 * 15) * 0.8 = 396 watts (4)

And an 11 volt input would only be delivering:

(33 * 11) * 0.8 = 290 watts (5)
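The comparison in equations (1) to (5) can be reproduced with a small Python sketch. The function names are illustrative only; the 350W rating, 80% efficiency, and 33A figure are the values used above.

```python
RATED_OUTPUT_W = 350.0
EFFICIENCY = 0.8


def input_current(output_w, input_v, efficiency=EFFICIENCY):
    """Input current needed to deliver output_w: Ii = (Po / n) / Vi."""
    return output_w / efficiency / input_v


def output_power(input_a, input_v, efficiency=EFFICIENCY):
    """Output power delivered at a fixed input current: Po = (Ii * Vi) * n."""
    return input_a * input_v * efficiency


for vin in (15.0, 11.0):
    needed = input_current(RATED_OUTPUT_W, vin)
    capped = output_power(33.0, vin)
    print(f"Vin={vin:4.1f} V: {needed:5.2f} A for 350 W, "
          f"{capped:5.1f} W out if capped at 33 A")
```

A fixed 33A limit overshoots the rating at 15V and falls well short at 11V, which is the mismatch the equivalent power approach is meant to resolve.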

Higher input voltages aren’t a concern because the sine section can easily handle in excess of 400 watts continuous. But, at low voltages there is the risk that the inverter will overheat due to high currents and create user confusion since the power meter won’t display a full / boost load. This is solved by derating the inverter below a set-point input voltage (Vsp) using the concept of equivalent power (Pe). This approach maintains a unified control loop based on managing by power.

The set-point refers to an input voltage that is reasonably expected to be available in the real world (e.g. automotive 13~13.8V) and at which the inverter can supply rated power with some operating margin for boost. Equivalent power is defined as the actual power when operating above the set-point. When operating below the set-point it is:

((Vsp - Vi) * Ii(rms)) + Pi = Pe (6)

The controller uses Pe to drive the load meter and for overload protection. It also calculates the actual input power and that value can be accessed thru the service port.
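A minimal Python sketch of the equivalent power calculation in equation (6). The 13.0V set-point is picked from the 13~13.8V range mentioned above purely as an example, and the function name is hypothetical; this is not the controller firmware.

```python
def equivalent_power(input_v, input_a_rms, setpoint_v):
    """Equivalent power Pe used for load metering and overload protection.

    At or above the set-point Pe is the actual input power. Below it,
    Pe = ((Vsp - Vi) * Ii) + Pi, per equation (6).
    """
    actual_w = input_v * input_a_rms
    if input_v >= setpoint_v:
        return actual_w
    return (setpoint_v - input_v) * input_a_rms + actual_w


VSP = 13.0  # ASSUMPTION: example set-point chosen for illustration

print(equivalent_power(15.0, 29.0, VSP))  # above set-point: actual power
print(equivalent_power(11.0, 33.0, VSP))  # below set-point: derated
```

Since Pi = Vi * Ii, the expression below the set-point reduces to Vsp * Ii. In other words the meter effectively reports current scaled by the set-point voltage, so low input voltages read as a heavier load.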

Over Current Protection

Over current protection is different from overload. It is intended to disable the inverter when the per-cycle (SOA) protection engages repetitively over short time intervals. This indicates either a failure in the bridge or sine sections or excessive current being drawn from the AC load.

Detection is done by measuring the duty cycle (DC) of the inverter using the controller’s Timer5 peripheral. Since the DC is normally a constant value, anything less is considered an over-current event. The controller’s run-time loop samples the DC continuously and applies a simple PD (proportional derivative) algorithm to disable the inverter if the frequency or rate of events exceeds a set limit. This approach is used to support short duration (<2mS continuous) inrush events typical of AC equipment and allows in excess of 20A to be sourced.
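The firmware itself isn’t shown here, so the following Python sketch is only one possible reading of a PD check on the duty cycle shortfall. The class name, gains, nominal duty cycle, and trip limit are all invented for illustration and are not taken from the TS350 code.

```python
class OverCurrentDetector:
    """PD-style check: scores how far, and how fast, the measured duty
    cycle has dropped below its nominal value."""

    def __init__(self, nominal_dc, kp, kd, trip_limit):
        self.nominal_dc = nominal_dc
        self.kp = kp                  # weight on the size of the shortfall
        self.kd = kd                  # weight on how quickly it is growing
        self.trip_limit = trip_limit
        self.prev_error = 0.0

    def update(self, measured_dc):
        """Feed one duty-cycle sample; return True if the inverter should trip."""
        error = max(0.0, self.nominal_dc - measured_dc)
        score = self.kp * error + self.kd * (error - self.prev_error)
        self.prev_error = error
        return score > self.trip_limit


# Hypothetical settings, for illustration only
detector = OverCurrentDetector(nominal_dc=0.95, kp=1.0, kd=2.0, trip_limit=0.5)

for dc in (0.95, 0.90, 0.50):
    print(dc, detector.update(dc))
```

A small, slow shortfall scores low and is tolerated, while a large or rapidly growing one trips. That is the general behavior wanted for riding through brief inrush events while still catching a real fault.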

Sine Current and Load Protection

As described above, the main controller provides load management for the entire unit. Current protection is passive and works for several reasons.

- The output filter inductors, L401 & L402, limit the di/dt imposed on the sine bridge transistors.

- There is limited instantaneous energy available in the high voltage supply capacitors C407, C408, C410.

- The SOA envelope of the sine bridge MOSFETs.

- The relatively high impedance of the inverter, in part due to its active current limiting.

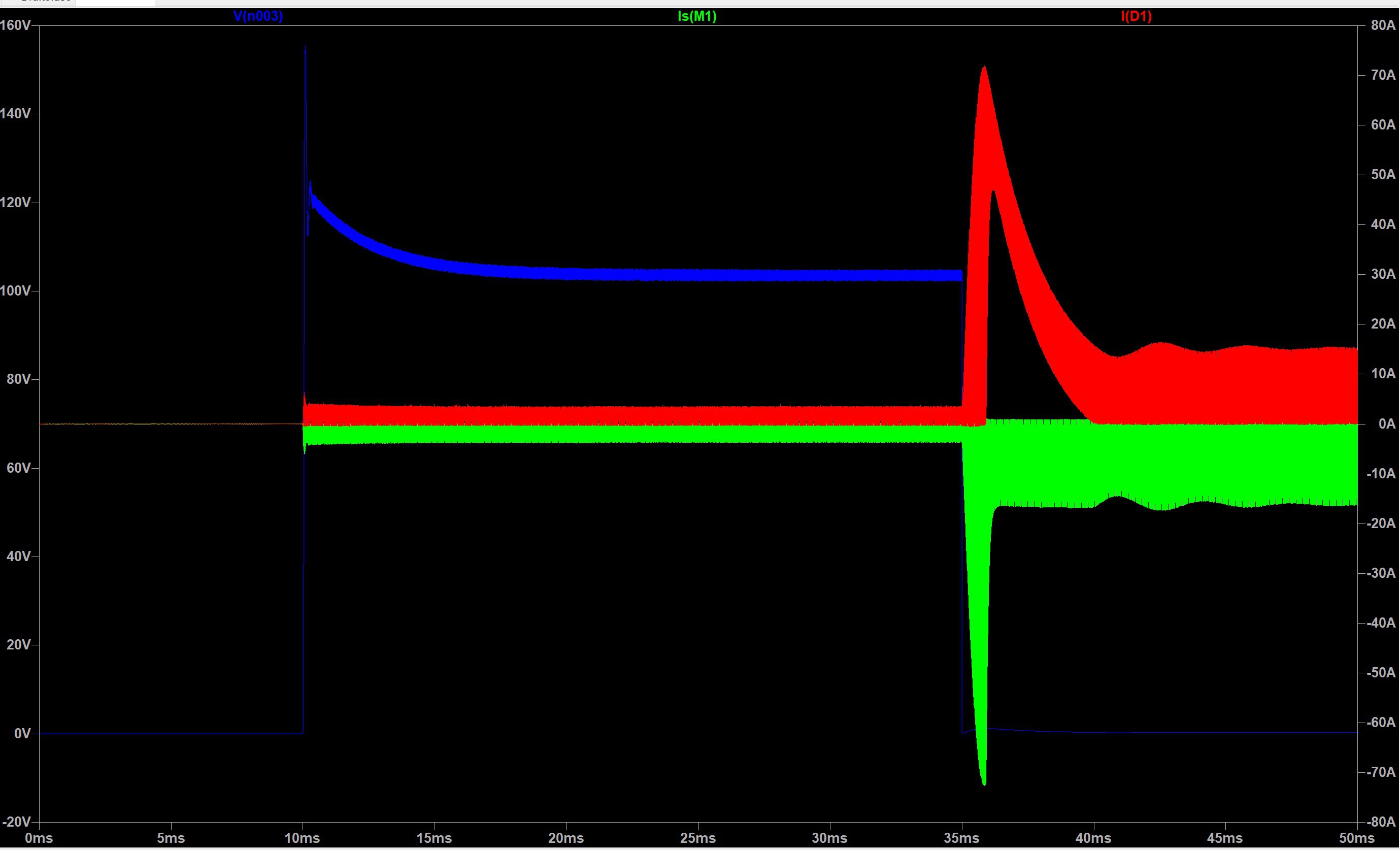

A picture, instead of a mathematical analysis, best summarizes these points.

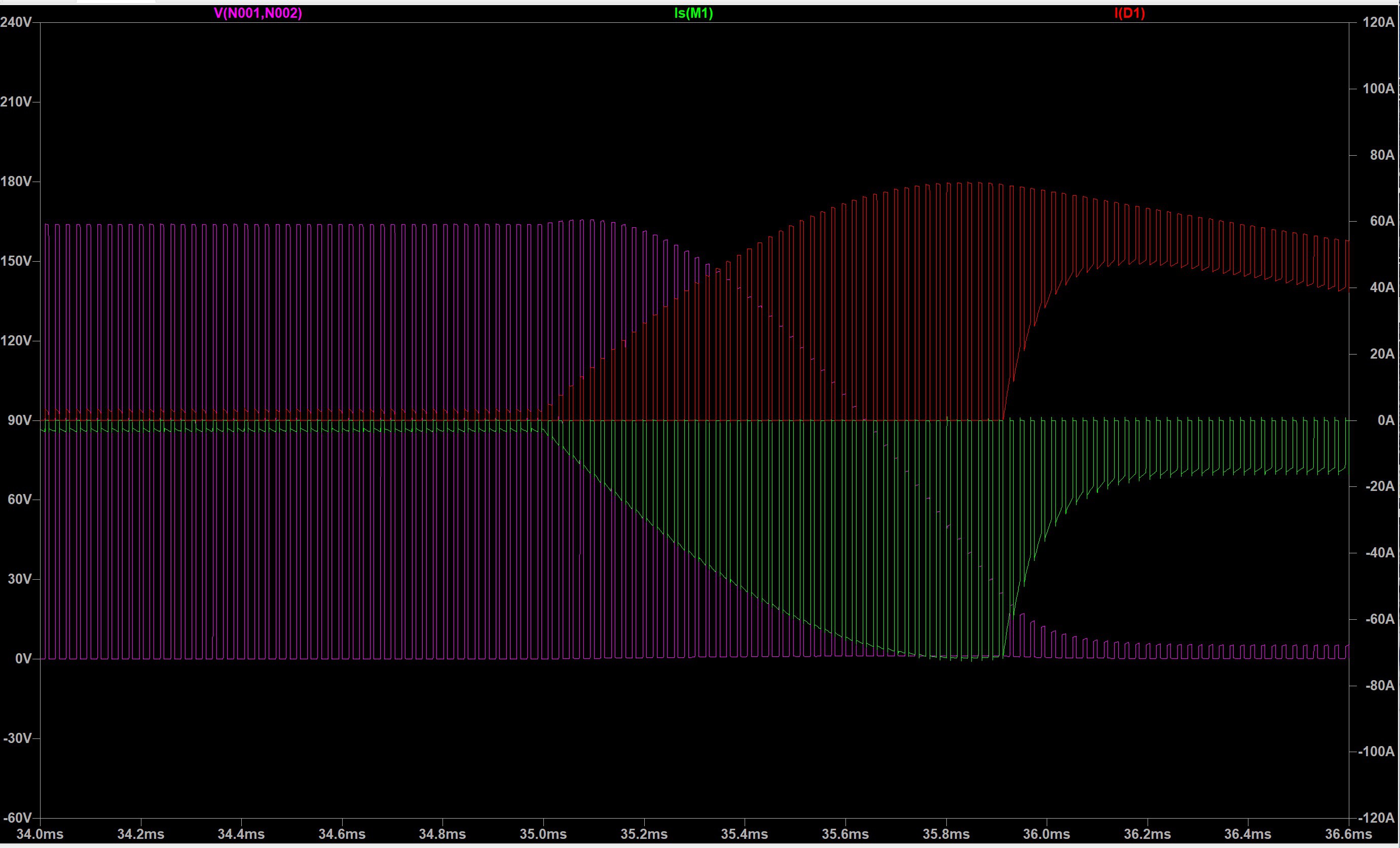

![]()

This is a SPICE simulation of a dead short event on the sine output. The blue trace is output voltage, green is current at the MOSFET source node, and red is the cathode of the freewheeling diode. The output is turned ‘on’ at 10mS and runs at full load until 35mS when the output is shorted. The large current spike is the bulk capacitance discharging.

![]()

Zooming in on the start of the event, you can see that the drain to source voltage (magenta trace) is well below 100V before peak current is reached. The actual peak currents will be lower due to capacitor ESR, DC resistance of the inductors, Rds(on) of the MOSFETs, and the inverter’s impedance (which is higher than represented in the simulation).

Here is the simulation circuit.

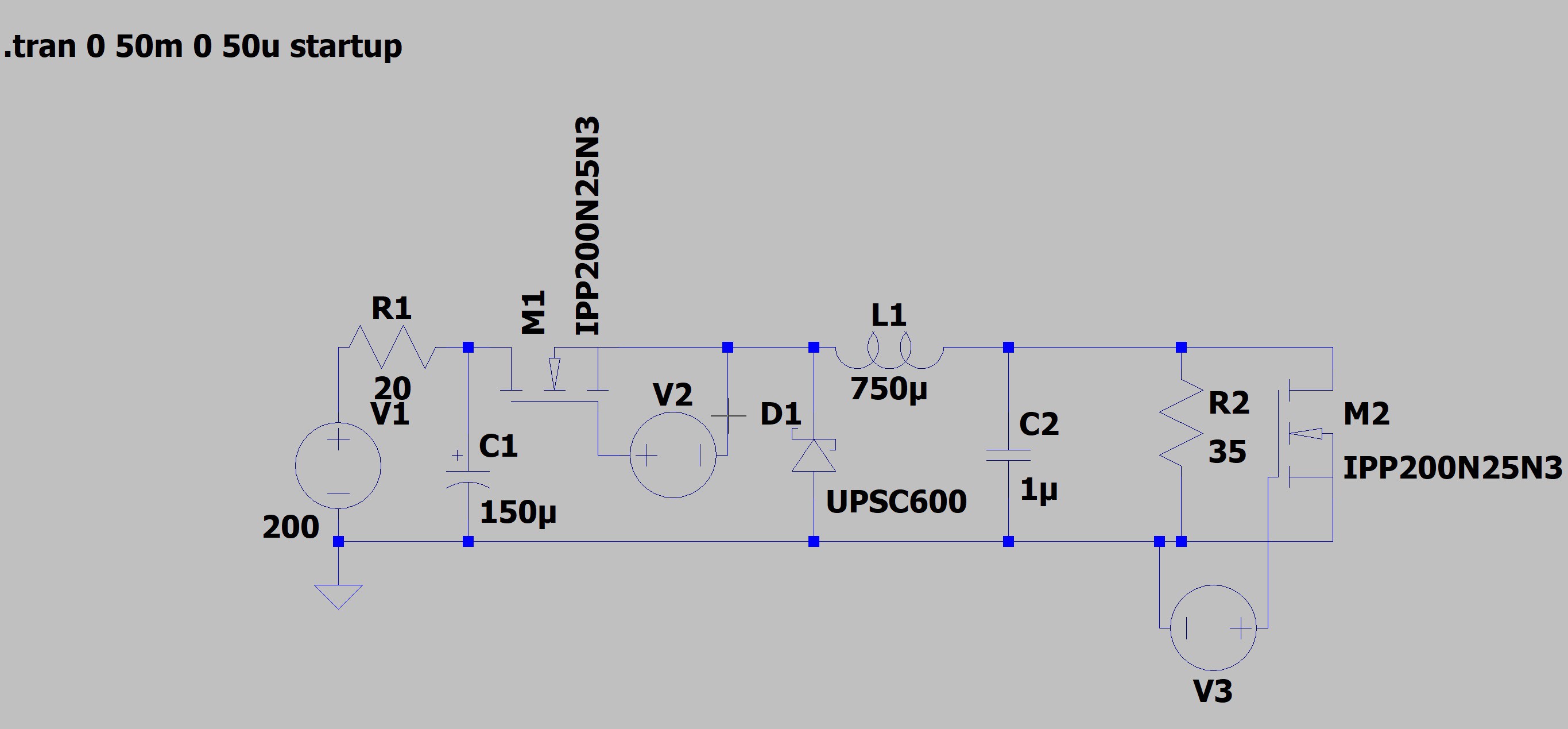

![]()

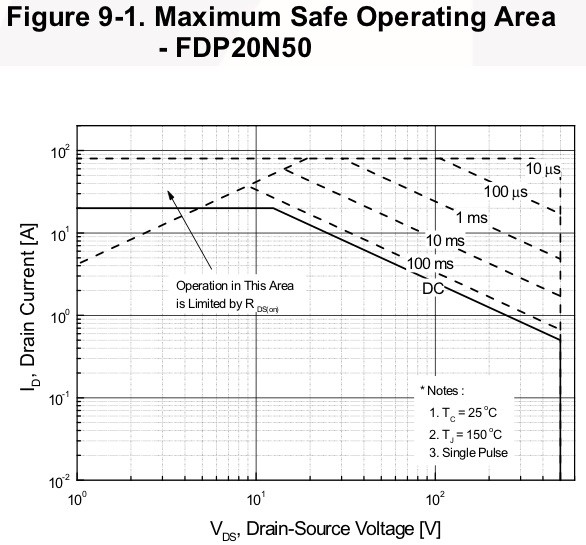

V1 and R1 form a limited impedance voltage source. M1 and D1 simulate one half of the sine bridge with D1 functioning as the low side MOSFET. C1 is the bulk capacitance. V2 is the floating gate drive set to a 63% DC which is the average over a half sine. L1 and C2 represent the output filter with L1’s value set to the expected inductance at full load. R2 simulates a 3A load, and M2 and V3 form the shunt which triggers at 35mS.

Last, the transistor’s Safe Operating Area is an important selection criterion:

![]()

The FDP20N50 can handle 30A with 300V across it for ~ 100uS and, as voltage decreases the current handling increases to 80A. More than sufficient.

This simplicity does have a shortcoming: it will not protect the sine bridge from an internal short. The absence of this protection isn’t a safety issue, so it was omitted to retain a simple & cost-effective design.

-

Inverter Design

05/10/2019 at 20:25 • 0 comments

At the core of all power supplies is a transformer. It is central to the design and dictates the form and function of everything around it. A home brew design imposes additional requirements. Without specialized tooling and winding materials (e.g. Litz or foil wire, etc.) the design must also be constructible. Given this, my criteria were that the transformer must be:

- Manufacturable

- Efficient

- Simple

- Reliable

From earlier work I knew that a center tap secondary is not practical due to the voltage gradient and turn-turn capacitance.

In a center tap secondary, the voltage measured across the legs is twice the output voltage. So a 200V secondary actually has 400V across the entire winding. In high frequency transformers this, combined with the turn-turn capacitance, becomes a serious problem that results in significant ringing. Here’s an example:

![]()

Note the cursor value and the scale for channel 3 - 100V! Professionals get around this with lower capacitance magnet wire and special winding techniques that can only be done by machine (e.g. bank winding).

Which is a shame since a center tap requires only two rectifiers instead of four. That may not seem significant but consider the consequences: space, dissipation, materials. In this design if a CT were viable I could have ditched the heatsink and likely used two SMD diodes saving space & halving the dissipation.

Decision 1: single winding secondary.

A center tap primary is possible and that would allow the use of a half-bridge - again saving significant space and halving dissipation. But there are drawbacks:

- Window utilization is poor - each half winding is only doing work half time.

- A larger core size would likely be required given the size of the winding - 2 turns of 20 AWG 10 strands becomes 4 turns.

- Terminating the center tap would be problematic.

- Greater chance of staircase saturation: any imbalance in each half winding (due to construction) creates a flux imbalance which over successive cycles walks the core into saturation.

Decision 2: single winding primary.

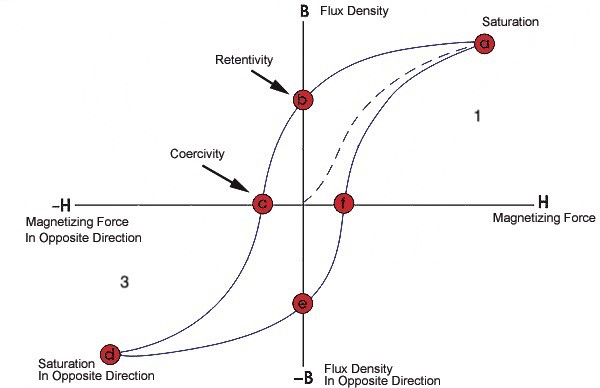

An inverter acting as an AC power source must be capable of handling large currents and supporting surge power well beyond its continuous rating. To do this the transformer must have a high power density and this requires operating it across the full BH loop in quadrants 1 & 3.

![]()

This is related to the single vs. center-tap primary discussion but emphasizes the power handling requirement of the transformer. To operate the transformer in this manner requires either a half or full bridge; but the prior discussion already discounted the half bridge due to manufacture concerns.

Decision 3: full bridge.

Most switching power supplies regulate thru some form of modulation - either the switching frequency, duty cycle, or both. Without a center tap secondary the conventional approach to regulation via the use of an output inductor to prevent the transformer core from resetting during off time isn’t practical. A current fed primary topology is a possibility but adds inductors & switches, introduces more losses, complicates the design, and requires more space.

Decision 4: no regulation.

These decisions support the design criteria: a design that is manufacturable, simple, reliable, and (theoretically) efficient. The transformer can be operated at a high, constant, duty cycle, utilizing the full BH loop and achieving good power density. The complexity of a control loop is eliminated. But this shifts some burden to the rest of the design, both in front of and behind the transformer:

- A full bridge will be used, doubling the space requirements and losses.

- A full bridge rectifier is required, also doubling the space requirements and losses.

- The secondaries will track the input voltage according to their turns ratio and both limit the input voltage operating range and require components with higher voltage ratings.

- A method of regulating the AC output voltage will be required. Fortunately precise regulation isn’t necessary.

With the transformer design and operating modes set the remainder of the inverter design is relatively straightforward. The gate drivers and current sense circuit are placed on a vertical mount daughter board to conserve space and keep the gate drive loops small. This is an idea I’ll likely carry forward to higher power designs using heavier copper on the main power board (in fact expand it so that all logic resides there).

The 2oz limitation on maker PCBs forces some compromise in the layout. Ideally the gate drive card would reside in front of the transistors instead of between them and the transformer. I couldn’t do that because I needed all the copper area I could get to feed energy to and return from the bridge. The card, with its pins and gate drive traces would interfere.

I specifically selected the Ohmite heatsinks because of their mounting flexibility. I was really concerned that the parasitic inductance in the MOSFET leads combined with a 2oz maker PCB would cause grief for the monolithic gate drives. Mounting the transistors at minimum height significantly reduces this. So much so that even under hot over-current / over-load conditions the design doesn’t need protective circuits for the typical VS or COM below ground problems.

-

The Specific Design Problem

05/09/2019 at 19:53 • 0 comments

I did a poor job on the systems engineering for this project. I spent a lot of time on specific problems like the layout of the inverter bridge, rectifier switching losses, heat sinks, firmware, etc. but I was too myopic. I didn’t bring it all together early enough in the design and this resulted in two major deficiencies.

Thermal management. I spent much time modeling heatsinks’ thermal performance, tab insulator materials, mounting height considerations to minimize parasitics and - key - the coefficients of each transistor on the dual sinks. But I stopped there: I compartmentalized the thermal models and did not establish the coefficients of adjacent areas or consider the entire system given hot air would be circulating in an enclosure.

I should have mocked-up the full layout with all key heat sources to establish coefficients and determine the size fans it would need. The as-built dissipates ~ 68 watts of heat at full load. Had I better established this early on I would have changed fan positions and sizing or maybe the entire design. I was fortunate that I had a convenient spot, and the power budget on the auxiliary supply, to add a third fan.

An early design goal was, aside from board size, for the unit to be enclosure agnostic. That’s how the pull/push arrangement for the fans on the board came to be. I realize now that in a design dealing with this much dissipation the enclosure and the gear going in it are inextricably linked. An ah-ha related to this is that a fan must be positioned at the enclosure to ambient interface to ensure air is forced in or out of the unit. Otherwise internal fans will only be moving hot air around.

I also might have thought twice about trying to get this much power out of it. A two layer, two ounce PCB is woefully inadequate to handle this much current. Traces turn into copper pours, grabbing more space and reducing layout efficiency. Sometimes both layers must be used wasting even more. In spite of a very generous layout for the inverter primary it is dissipating almost five watts. That might not sound like much against the total losses but it is concentrated around other high dissipators and contributes significant heat stress.

AC Voltage Regulation. The sine controller has eight DDS sine patterns stored in flash and selects one based on the transformer’s auxiliary supply voltage (a proxy for the HV supply). It does a good job of keeping the no / light load voltage between 115 - 120V AC RMS. Under heavy loads the output tends to droop towards 100V. Most electronic devices won’t care but small motorized appliances (like a Dremel or mixer) will.

A few things contribute to this. Transformer regulation is poor; but this can be compensated for by adding a few turns to the secondary. Second, the DDS pattern imposes a voltage margin on the HV supply (you can see this in the Excel model). Essentially, the output voltage from the sine pattern on any given cycle is:

Vhv * Tdc = Vout

Where Vhv = high voltage supply

Tdc = duty cycle, percent

Vout = output voltage

The sine bridge on times are highest at the sine peak with a low input voltage. The DDS patterns are designed to ensure that there is a discernible off / on time for the same side high / low pair respectively to avoid high switching losses and maintain bootstrap capacitor charge. It also reduces the chance of violating transistor SOA and a resulting failure should a short or transient occur at just the right time.
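As an illustration of the Vhv * Tdc = Vout relationship, the Python sketch below estimates the duty cycle needed at the sine peak for a few supply voltages. The supply values are hypothetical examples and not measurements from the unit. The sketch also ignores filter and switch drops, and expresses duty cycle as a fraction (0 to 1) rather than a percent.

```python
import math


def output_voltage(vhv, duty):
    """Instantaneous output voltage for a given supply and duty cycle (0..1)."""
    return vhv * duty


def peak_duty_required(vhv, vout_rms):
    """Duty cycle needed at the sine peak to reach vout_rms, ignoring losses."""
    return vout_rms * math.sqrt(2) / vhv


# Hypothetical HV supply values, for illustration only
for vhv in (180.0, 200.0, 220.0):
    duty = peak_duty_required(vhv, 120.0)
    print(f"Vhv={vhv:.0f} V -> peak duty {duty * 100:.1f}%")
```

As the supply sags, the peak duty cycle climbs toward 100% and eats into the off time the patterns reserve, so the output has to droop instead.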

-

The General Design Problem

05/09/2019 at 17:59 • 0 comments

Twelve volts is a ridiculous standard. Most of industry, sans automotive, abandoned (or never adopted) it more than a half century ago. Automotive just didn’t have an incentive and so the consumer world and all the stuff in its orbit are stuck with it. Hybrid & EVs are changing this but not soon enough.

Conceptually an inverter is simple: boost the voltage and modulate it in some way to obtain the desired AC output. There are several ways to do this depending on the application but all have in common the necessity of high currents if the input voltage is to be low.

A first order analysis is instructive. The inverter is to deliver 350 watts at 120 V AC RMS, 60Hz. Assume that only constant impedance, non-reactive (power factor of 1), loads will be used. The RMS current is:

Po(rms) / Vo(rms) = Io(rms) -> 350 / 120 = 2.91 amps (1)

Also assume the power conversion is 100% efficient. With a 12 volt (constant) input the required RMS current is:

Po(rms) / Vi = Ii(rms) -> 350 / 12 = 29.16 amps (2)

But the inverter must be capable of delivering power to match the load impedance throughout the sine cycle which is highest at peak. So for 120 V AC RMS the peak voltage is:

Vo(rms) * √2 = Vo(pk) -> 120 * 1.41 = 169.2 volts (3)

The impedance of the load is:

Vo(rms) / Io(rms) = Rload -> 120 / 2.91 = 41.24 Ω (4)

At the sine peak the load current is:

Vo(pk) / Rload = Io(pk) -> 169.2 / 41.24 = 4.1 amps (5)

And the power consumed by the load is:

Vo(pk) * Io(pk) = Po(pk) -> 169.2 * 4.1 = 694 watts (6)

Hence, the inverter’s peak input current is:

Po(pk) / Vi = Ii(pk) -> 694 / 12 = 57.83 amps (7)

Almost 58 amps. That’s a lot of juice and, since no machine can be 100% efficient the actual currents will be higher. At 90% efficiency the RMS input current increases to:

(Po(rms) / n) / Vi = Ii(rms) -> (350 / 0.9) / 12 = 32.4 amps (8)
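The whole chain of equations (1) to (8) can be run as a short Python sketch. The function and key names are illustrative. Because the script carries full precision instead of the rounded intermediates used above (1.41 for √2, 2.91 amps), its peak figures come out slightly higher: for a resistive load the peak power is exactly twice the average, so 700 watts and about 58.3 amps rather than 694 watts and 57.83 amps.

```python
import math


def first_order(p_out_w, v_out_rms, v_in, efficiency=1.0):
    """First-order inverter currents for a resistive (PF = 1) load."""
    i_out_rms = p_out_w / v_out_rms
    i_in_rms = p_out_w / efficiency / v_in
    v_out_pk = v_out_rms * math.sqrt(2)
    r_load = v_out_rms / i_out_rms
    i_out_pk = v_out_pk / r_load
    p_out_pk = v_out_pk * i_out_pk
    i_in_pk = p_out_pk / efficiency / v_in
    return {
        "i_out_rms": i_out_rms,
        "i_in_rms": i_in_rms,
        "v_out_pk": v_out_pk,
        "r_load": r_load,
        "i_out_pk": i_out_pk,
        "p_out_pk": p_out_pk,
        "i_in_pk": i_in_pk,
    }


ideal = first_order(350.0, 120.0, 12.0)
real = first_order(350.0, 120.0, 12.0, efficiency=0.9)

print(f"ideal: {ideal['i_in_rms']:.2f} A rms, {ideal['i_in_pk']:.2f} A peak")
print(f"90%:   {real['i_in_rms']:.2f} A rms, {real['i_in_pk']:.2f} A peak")
```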

Additionally, it’s expected that the inverter’s power supply impedance will be much lower and that the interconnecting supply wiring is absent nasty parasitics. Sure.

So the general design problem is big current and questionable source impedances. How it is solved is the primary determinant of the machine’s overall efficiency and performance.

-

Design Goals

05/08/2019 at 21:13 • 0 comments

The prototype 350 I did last year was just that. It was never viable for anything off the bench but it was a great learning platform. The R1 is meant to be a refinement that actually works:

- Fits in a commercially available enclosure

- 350 watt (VA) output

- 11-16V DC input

- Semi-regulated output 110~120V AC RMS

- Distortion on par with commercial units (<5% THD)

- Powers wide variety of small appliances - resistive, electronic, inductive

- Operates in ambients up to 50C

- Lower EMI floor than prototype

It mostly meets these. Two problem areas are dissipation and poor regulation at high loads (low output voltage). Neither is a show stopper and it’s another opportunity to learn & improve.