This Friday, November 22nd, I am going to join @deshipu at the HeroFest gaming festival in Bern, where he has secured a booth to exhibit the PewPews, and I will bring my PicoPews.

When thinking about how to present them, I wondered whether it would be possible to stream their display content to a larger screen. They have WiFi and a beefy processor, after all. At first I was thinking of implementing a VNC server, but decided against it once the color palette experiments described in previous logs showed that I would need to transform the color values before they could be displayed sensibly on a typical RGB screen. With VNC, I would have to do that transformation in the server (or write a customized desktop client, which would defeat the purpose of using a standard protocol in the first place), and I would rather not spend that CPU time on the device, where it could slow down the games.

My next choice was WebSockets, which already provide message framing and would allow the client to be written in HTML/JavaScript, so that it could run on a wide variety of devices. I knew that MicroPython already had some WebSocket support for its WebREPL. Pretty soon I had a proof of concept that worked amazingly well, keeping up with the PicoPew's frame rate without a hitch and with no noticeable lag.
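For readers unfamiliar with the protocol, here is a minimal sketch of the two pieces a WebSocket server has to add on top of a plain TCP socket, following RFC 6455: computing the `Sec-WebSocket-Accept` handshake response, and wrapping a payload in a binary frame. This is not the code from the branch; the function names `accept_key` and `binary_frame` are made up for illustration, and the sketch is written for CPython (on MicroPython, `binascii.b2a_base64` would likely have to stand in for the `base64` module).

```python
import base64
import hashlib
import struct

# Fixed GUID defined by RFC 6455 for the opening handshake.
WS_GUID = b"258EAFA5-E914-47DA-95CA-C5AB0DC85B11"


def accept_key(client_key):
    """Compute the Sec-WebSocket-Accept value for a client's Sec-WebSocket-Key."""
    digest = hashlib.sha1(client_key + WS_GUID).digest()
    return base64.b64encode(digest)


def binary_frame(payload):
    """Wrap payload in a single unmasked server-to-client binary frame."""
    header = bytearray([0x82])  # FIN bit set, opcode 0x2 (binary message)
    n = len(payload)
    if n < 126:
        header.append(n)  # 7-bit length; mask bit stays 0 for server frames
    elif n < 65536:
        header.append(126)
        header += struct.pack(">H", n)  # 16-bit big-endian extended length
    else:
        header.append(127)
        header += struct.pack(">Q", n)  # 64-bit big-endian extended length
    return bytes(header) + payload
```

With the sample key from the RFC, `accept_key(b"dGhlIHNhbXBsZSBub25jZQ==")` returns `b"s3pPLMBiTxaQ9kYGzzhZRbK+xOo="`. Because server-to-client frames are not masked, any payload shorter than 126 bytes, such as a small display buffer, costs only two bytes of framing overhead per update.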

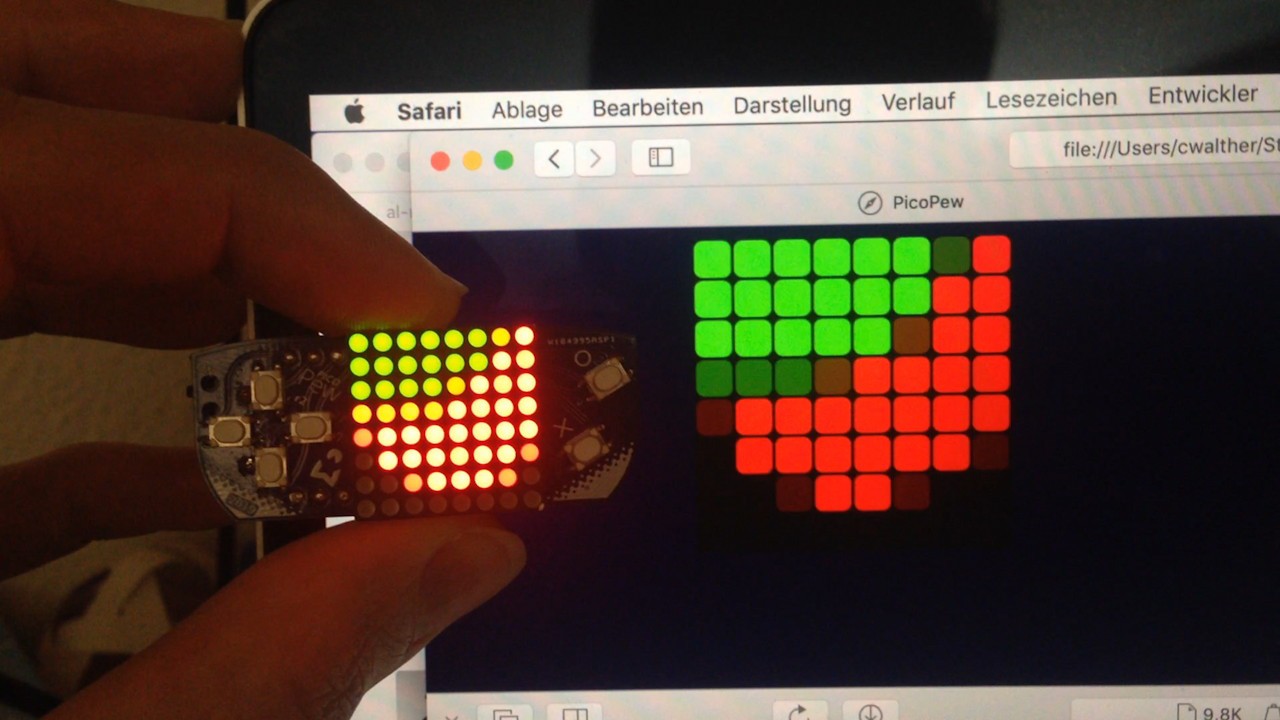

[video]

Whenever a client was connected, every pew.show() call packed the same buffer of red/green values that would be sent over I2C to the LED driver into a binary WebSocket message and sent it to the client.
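The packing step might look roughly like the following sketch. The actual buffer layout of the PicoPew's LED driver is not described in this log, so the layout here is an assumption: an 8×8 grid of 2-bit pixel values (bit 0 lights the green LED, bit 1 the red one), stored as one green byte followed by one red byte per row, with column x in bit x. `pack_planes` is a hypothetical name.

```python
WIDTH = 8
HEIGHT = 8


def pack_planes(pixels):
    """Pack an 8x8 grid of 2-bit pixel values into 16 bytes of bitplanes.

    Assumed layout: byte 2*y holds the green bits of row y, byte 2*y+1 the
    red bits, and column x maps to bit x of each byte.
    """
    buf = bytearray(2 * HEIGHT)
    for y in range(HEIGHT):
        row = pixels[y]
        for x in range(WIDTH):
            value = row[x]
            if value & 1:  # bit 0 of the pixel value: green LED on
                buf[2 * y] |= 1 << x
            if value & 2:  # bit 1 of the pixel value: red LED on
                buf[2 * y + 1] |= 1 << x
    return bytes(buf)
```

The resulting 16 bytes could then be sent unchanged as the payload of a binary WebSocket message to each connected client, so the device does no color processing at all.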

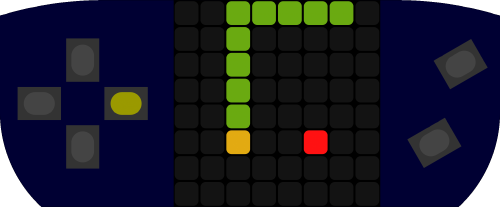

This was still without the color transformation in the client. After adding that, prettifying the looks, and also transmitting the state of the buttons at every pew.keys() call, it now looks like this:

The client code is remarkably short and simple: all graphics are done declaratively using SVG. All that SVG manipulation and re-rendering takes some toll on CPU usage, though; drawing procedurally into a canvas would probably be more efficient.
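The real client is HTML/JavaScript drawing with SVG; the following Python sketch only illustrates the decoding logic such a client needs per message, under assumed formats: a 16-byte display message holding one green byte and one red byte per row (column x in bit x), and a one-byte button message in which bit i means `names[i]`. The RGB values in `PALETTE` are placeholders, not the results of the palette experiments, and the button names and their order are hypothetical.

```python
# Placeholder RGB colors for the four LED states. These are NOT the values
# from the palette experiments; substitute measured ones.
PALETTE = (
    (0, 0, 0),      # 0: both LEDs off
    (0, 200, 0),    # 1: green only
    (255, 0, 0),    # 2: red only
    (255, 170, 0),  # 3: red and green together
)


def decode_planes(buf, width=8, height=8):
    """Turn a green/red bitplane buffer into rows of RGB tuples."""
    if len(buf) != 2 * height:
        raise ValueError("unexpected display message length")
    rows = []
    for y in range(height):
        green = buf[2 * y]
        red = buf[2 * y + 1]
        row = []
        for x in range(width):
            # Recombine the two bitplanes into a 2-bit pixel value 0..3.
            index = ((green >> x) & 1) | (((red >> x) & 1) << 1)
            row.append(PALETTE[index])  # color transformation happens here
        rows.append(row)
    return rows


def decode_keys(byte, names=("X", "DOWN", "LEFT", "RIGHT", "UP", "O")):
    """Decode a hypothetical one-byte button message: bit i -> names[i]."""
    return {name for i, name in enumerate(names) if byte & (1 << i)}
```

In a browser, with the socket's `binaryType` set to `"arraybuffer"`, the same loop would run over a `Uint8Array` view of each received message, updating SVG attributes or canvas pixels instead of building tuples.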

The code is available in a separate branch “remotedisplay” in the Git repository.

Christian Walther
