The goals of this project are the following:

- Apply machine learning to a robot car.

- Add a PID controller to the robot.

To make this project easier to follow, we have divided it into the following sections:

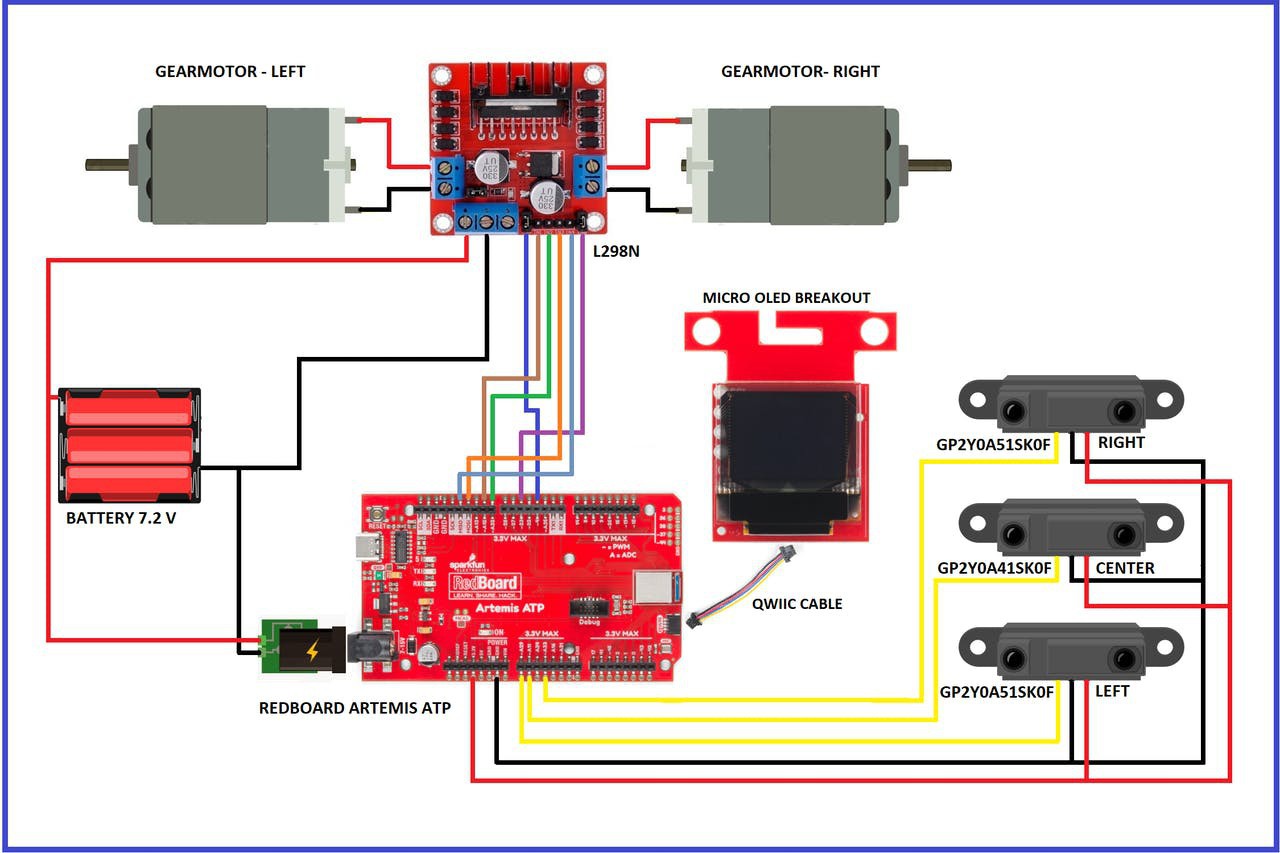

- HARDWARE,

- MACHINE LEARNING,

- PID CONTROLLER,

- PRINTING AND ASSEMBLING THE CHASSIS,

- TEST,

- CONCLUSION, and

- REPOSITORIES ON GITHUB.

You can see my complete project at: https://www.hackster.io/guillengap/self-driving-car-using-redboard-artemis-atp-521c63

Guillermo Perez Guillen

Miguel Wisintainer

Marcelo G de Andrade

caltadaniel

I was interested to read about your experience with ML on the Artemis platform. Although not in the scope for our current project ( https://hackaday.io/project/174098-lighting-color-control-with-commodity-lamps ), one of our team members purchased the Artemis board. There are definitely some significant implications for ML as it relates to our own proposed design that could take the project in some future iteration from "this thing we could program with some rules" to "this amazing device that could solve for scenarios we've not even imagined." Good luck!