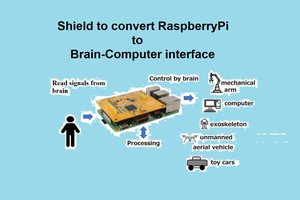

To address the mobility problems faced by people with cerebral palsy, I want to create an interface that lets them directly control mobility aids such as electric wheelchairs and tricycles just by thinking. This is made possible by collecting EEG (electroencephalography) data from a 'smart' cap and processing it on a Raspberry Pi fitted on the vehicle to determine the intended motion.

Not only does this make people with disabilities more independent, it also gives them the confidence to step out of their homes and mingle freely with others. It also eases the anxiety of their loved ones, who can then go about their own lives more freely.

Implementation

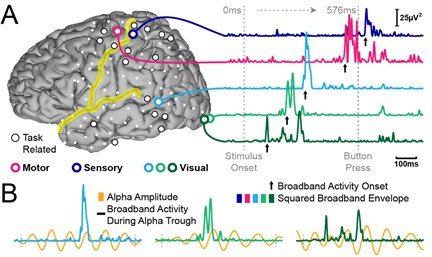

EEG data is read through an I2C channel on the Raspberry Pi 3B. After preprocessing and filtering, it is used to build a feature vector. A neural network is then used to infer the direction and speed the user is commanding. The output layer has two neurons: one gives the direction in which the user wants the cart to move, expressed as a polar angle, and the other gives the magnitude, i.e. the required speed.
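To make the two-neuron output concrete, here is a minimal sketch of how a (polar angle, magnitude) pair could be turned into velocity components. The function name `decode_output`, the angle convention (0 rad is straight ahead, positive angles turn left) and the normalised speed range are assumptions for illustration, not details taken from the project.

```python
import math

def decode_output(angle_rad, magnitude, max_speed=1.0):
    """Convert (polar angle, magnitude) into (forward, lateral) components.

    Assumed convention: 0 rad means straight ahead, positive angles
    point to the left, and magnitude is a normalised speed.
    """
    # Keep the speed inside the allowed range [0, max_speed].
    speed = max(0.0, min(magnitude, max_speed))
    forward = speed * math.cos(angle_rad)
    lateral = speed * math.sin(angle_rad)
    return forward, lateral

forward, lateral = decode_output(0.0, 0.5)
print(forward, lateral)
```

Working with Cartesian components like this can be convenient later on, because they can be averaged or mixed without worrying about the angle wrapping around at ±π.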

Test Setup

I attached the EEG probes to the inside of a cap and routed the wires to a Raspberry Pi. To build a good dataset, I observed the output neurons while thinking about the direction of motion. This process had to be repeated a number of times since I did not have any benchmarks. I referred to various websites throughout the process to train my model as well as I could. Finally, when I was confident, I fixed two geared DC motors to a wooden testbench and sent the corresponding PWM signals to a motor driver.
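One possible way to turn the commanded angle and speed into PWM values for two motors is differential-drive mixing, sketched below. The function `wheel_duty_cycles`, the signed duty-cycle range of -100 to 100 percent and the left/right sign convention are assumptions; the actual mapping used on the testbench is not described above. The sketch only computes the numbers and leaves the hardware PWM calls out.

```python
import math

def wheel_duty_cycles(angle_rad, magnitude):
    """Return (left, right) signed duty cycles in percent (-100..100).

    Assumed convention: 0 rad is straight ahead, positive angles turn
    left, and magnitude is a normalised speed in [0, 1].
    """
    speed = max(0.0, min(magnitude, 1.0))
    forward = speed * math.cos(angle_rad)
    turn = speed * math.sin(angle_rad)  # positive means turn left

    # Turning left: slow the left wheel, speed up the right wheel.
    left = forward - turn
    right = forward + turn

    # Rescale so neither wheel exceeds full duty cycle.
    scale = max(1.0, abs(left), abs(right))
    return 100.0 * left / scale, 100.0 * right / scale

print(wheel_duty_cycles(0.0, 0.5))
```

A negative value would mean running that motor in reverse, which on a typical H-bridge driver is done by swapping the direction pins and applying the absolute value as the duty cycle.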

One problem I found was that the commanded velocity sometimes became too high. Another was that the direction sometimes changed rapidly. I had to apply additional filters to cover these cases.
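As an illustration of what such filters might look like, the sketch below clamps the speed and applies exponential smoothing to the command. The class `CommandFilter` and its parameters `max_speed` and `alpha` are hypothetical; the filters actually used in this project are not specified. Smoothing is done on the Cartesian components rather than on the angle itself, so a jump across ±π does not produce a spurious swing.

```python
import math

class CommandFilter:
    """Clamp the speed and smooth sudden direction changes (a sketch)."""

    def __init__(self, max_speed=0.6, alpha=0.2):
        self.max_speed = max_speed  # upper bound on the commanded speed
        self.alpha = alpha          # smoothing factor, 0 < alpha <= 1
        self._x = None
        self._y = None

    def update(self, angle_rad, magnitude):
        # 1. Clamp the speed so it can never exceed max_speed.
        speed = max(0.0, min(magnitude, self.max_speed))
        x = speed * math.cos(angle_rad)
        y = speed * math.sin(angle_rad)

        # 2. Exponential moving average on the velocity components.
        if self._x is None:
            self._x, self._y = x, y
        else:
            self._x += self.alpha * (x - self._x)
            self._y += self.alpha * (y - self._y)

        # Convert back to (angle, magnitude) for the motor stage.
        return math.atan2(self._y, self._x), math.hypot(self._x, self._y)

flt = CommandFilter(max_speed=0.5, alpha=0.5)
print(flt.update(0.0, 2.0))
print(flt.update(math.pi / 2, 0.5))
```

A smaller `alpha` gives a steadier but slower response, so the value would need tuning against how quickly the rider expects the vehicle to react.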

I am currently looking for weaknesses in my setup.

Any suggestions are welcome.

Elecrow

Ildar Rakhmatulin

Hi,

This project looks interesting!

Some time ago, I saw a demo of the OpenVibe framework. Do you use it, or did you write everything from scratch?

They share their datasets, which may be useful for a project like yours.

http://openvibe.inria.fr/datasets/