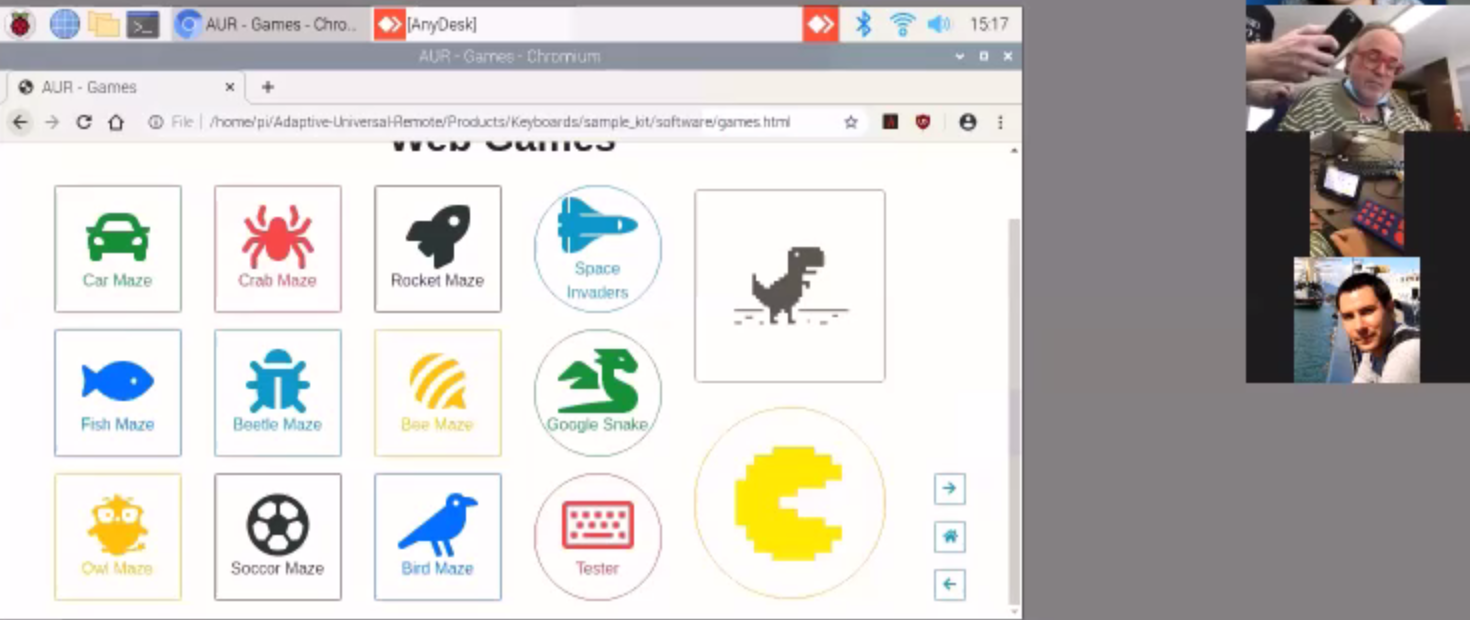

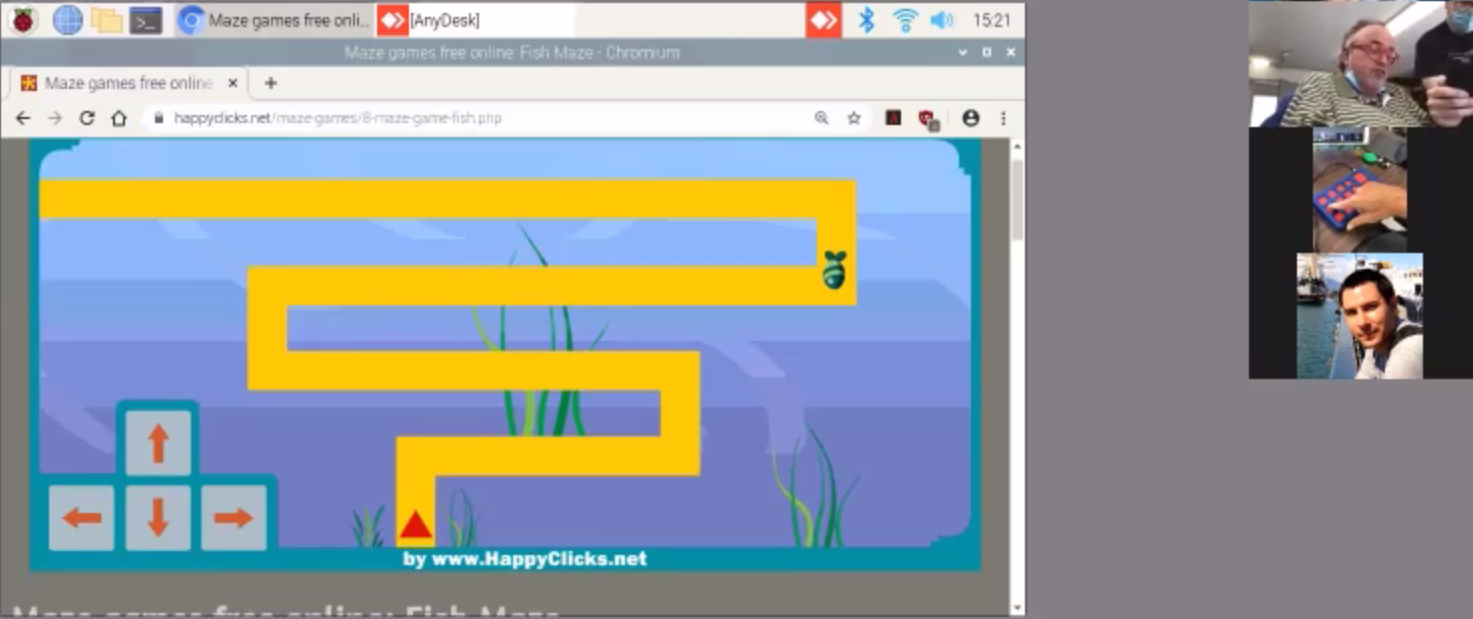

Last week, we held our first user testing session with UCPLA, which was our first chance to see our prototypes in the hands of our target audience. We were lucky enough to watch the session live over Zoom and came away with some valuable lessons. A few screenshots from the session are included in this post.

This was the first time our devices were in the hands of other people, and it gave us valuable insight into considerations we hadn't focused on. Early in the project, we asked a few questions to better understand our audience, but watching someone actually try our devices made some of these issues much easier to grasp.

- Designing for the Caregiver: Almost all of our focus had been on the users themselves, but our system also involves a setup procedure that falls to the caregiver. There were a few hiccups during setup, and some tasks took longer than necessary. Some of this could be attributed to difficulties communicating through Zoom, some to running this trial for the first time, and some to a poorly designed setup workflow. Before we deliver the final prototype, we need to eliminate that third issue and provide proper documentation on how to set up the system.

- Visual Impairment (Keyboard Device): We were told early on that about 50% of users didn't have line of sight, but it wasn't easy to understand what that meant in practice. This test gave me a better idea. We had a display showing the home screen near the keyboard, and the buttons on the keyboard corresponded spatially to images in the home screen layout. My assumption had been that the delay in pressing a button was largely due to difficulty controlling one's arm. For this one user, the delay appeared to be due as much to visual impairment as to motor control impairment. First, the user needed to look at the display, then look at the keyboard to recognize which button to press. Then, the user needed to move their hand and figure out where it was in relation to the target button. The task turned out to be more challenging than we had thought, and during the maze game with the keyboard device, quite a few wrong buttons were hit. This was a single user and I'm not sure how much it applies to others, but it is something to consider for future user testing and design revisions.

- Calibration (IMU Device): There were struggles with the IMU-based device as well. Before using the device, the user needs to go through a calibration sequence. Selecting suitable calibration positions was one challenge, and remembering those positions during use was another. During use, the controller had a difficult time distinguishing between the left and right positions, while the up and down positions had no issues. This was caused by a poor choice of calibration positions, and we need to figure out which calibration sequences make it easier to select different buttons accurately. Many of these issues are to be expected, because this is an unfamiliar device that these users have never tried before, and a learning curve is inevitable. What was most encouraging was seeing how engaged the user was while using the IMU device. Tying physical movements to a game provided positive reinforcement to keep trying, even though a lot of mistakes were being made. During this session, physical therapy was brought up as a potential use for this system.
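To make the calibration issue a bit more concrete, here is a minimal sketch of why calibration positions that sit too close together are easy to confuse. It assumes, purely for illustration, that each calibrated position is stored as a (pitch, roll) pair in degrees and that a reading is matched to the nearest calibrated position; this is not a description of how our firmware actually works, and every name and number below is hypothetical.

```python
import math

def angular_distance(a, b):
    """Euclidean distance between two (pitch, roll) readings, in degrees."""
    return math.hypot(a[0] - b[0], a[1] - b[1])

def classify(reading, calibration):
    """Return the label of the calibrated position nearest to the reading."""
    return min(calibration, key=lambda label: angular_distance(reading, calibration[label]))

def closest_pairs(calibration, min_separation):
    """List pairs of calibrated positions closer together than min_separation degrees."""
    labels = sorted(calibration)
    risky = []
    for i, first in enumerate(labels):
        for second in labels[i + 1:]:
            d = angular_distance(calibration[first], calibration[second])
            if d < min_separation:
                risky.append((first, second, d))
    return risky

# Hypothetical calibration: up/down are well separated in pitch,
# while left/right differ by only a small amount of roll.
calibration = {
    "up": (30.0, 0.0),
    "down": (-30.0, 0.0),
    "left": (0.0, -5.0),
    "right": (0.0, 5.0),
}

# Flag position pairs that are likely to be confused during use.
risky = closest_pairs(calibration, min_separation=20.0)
for first, second, d in risky:
    print(f"{first}/{second} are only {d:.1f} degrees apart")
```

A check like this could run right after the calibration sequence and prompt the user or caregiver to redo any positions that end up too close together. The 20-degree threshold is an arbitrary placeholder and would need to be tuned with real users.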

Discussions

Thanks Erin! Looks like your team is making great progress too, good luck with the home stretch!

Congrats on reaching this milestone! These are such great insights for your next iterations!
