This is more of an add-on feature. For live streaming, I use OBS with the websocket plugin and Lorianboard as a scene controller. Lorianboard is pretty awesome: it lets you trigger multiple sounds, scenes, and sub-menu controls. At its most complicated, it can even run a simple state machine over OBS based on Twitch chat, channel points, and other non-Twitch triggers.

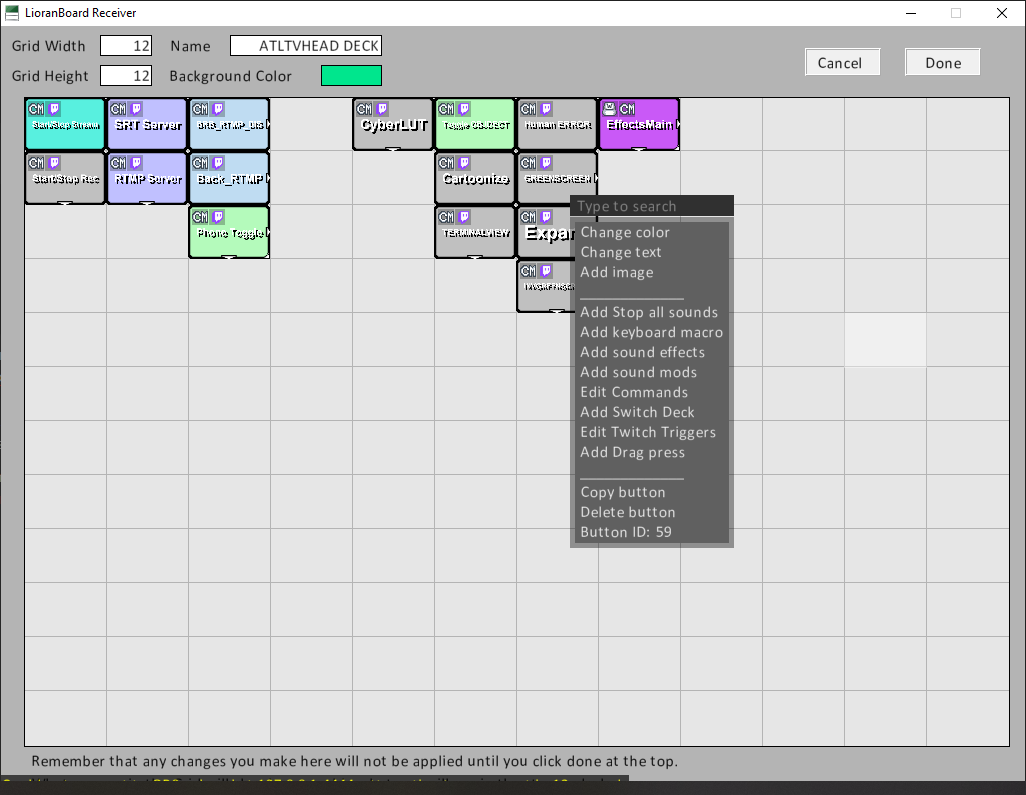

My gestures already trigger chat messages (!wave_mode, !random_motion_mode, !speed_mode, !fist_pump_mode), so it is rather easy to trigger a button in Lorianboard. While editing a deck, choose a button and select Edit Twitch Triggers.

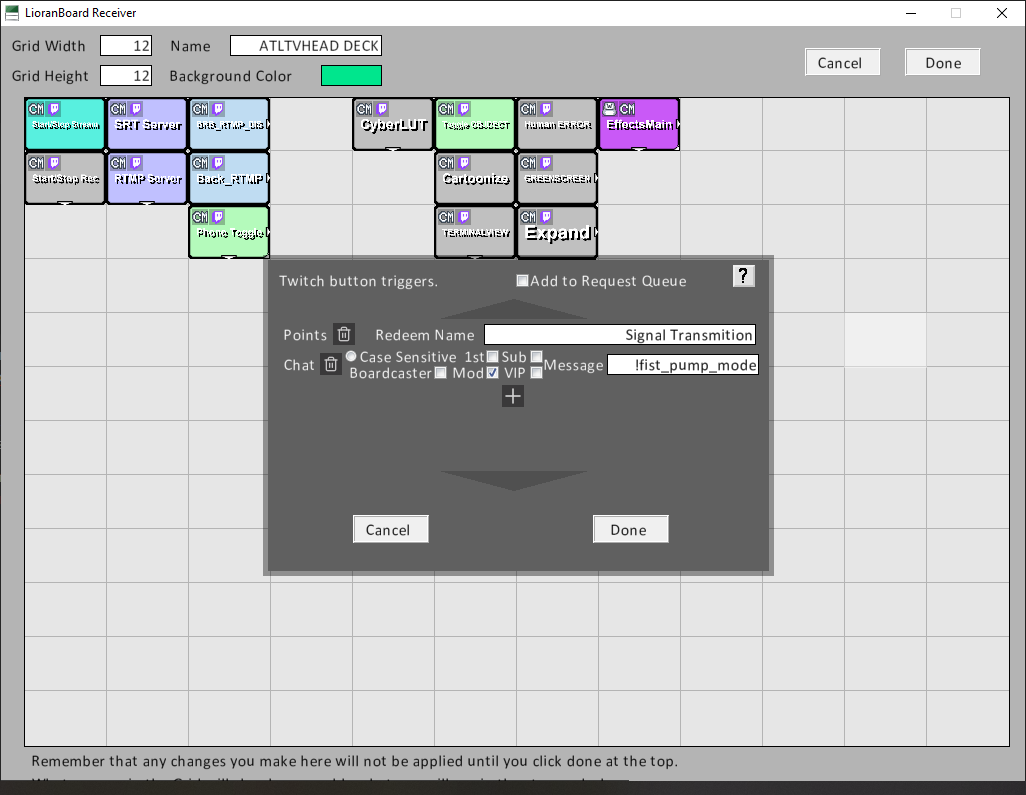

Click the plus and add a chat command, then type in the command and the role associated with it. That is all it takes to trigger the effect.
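To make the trigger logic concrete, here is a minimal Python sketch of what a chat-command trigger with a role check boils down to. This is not Lorianboard's internal code: the role names, their ordering, the `match_trigger` function, and the choice of "everyone" as the required role are all assumptions made for illustration. Only the four command names come from my setup.

```python
# Hypothetical role ranking; Lorianboard's real role list may differ.
ROLE_LEVEL = {"everyone": 0, "subscriber": 1, "vip": 2, "moderator": 3, "broadcaster": 4}

# Chat commands sent by the gestures, mapped to the minimum role allowed to fire them.
# The "everyone" role here is an assumption, not my actual configuration.
TRIGGERS = {
    "!wave_mode": "everyone",
    "!random_motion_mode": "everyone",
    "!speed_mode": "everyone",
    "!fist_pump_mode": "everyone",
}


def match_trigger(message, sender_role, triggers=TRIGGERS):
    """Return the command to fire, or None if the message should be ignored."""
    words = message.strip().split()
    if not words:
        return None
    command = words[0].lower()  # only the first word counts as the command
    required = triggers.get(command)
    if required is None:
        return None  # not a registered chat command
    # Unknown sender roles are treated as the lowest level.
    if ROLE_LEVEL.get(sender_role, 0) < ROLE_LEVEL[required]:
        return None  # sender's role is too low for this trigger
    return command
```

For example, `match_trigger("!wave_mode", "everyone")` returns `"!wave_mode"`, while an ordinary chat line returns `None` and the button is left alone.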

Coding the effect itself is a little more complicated.

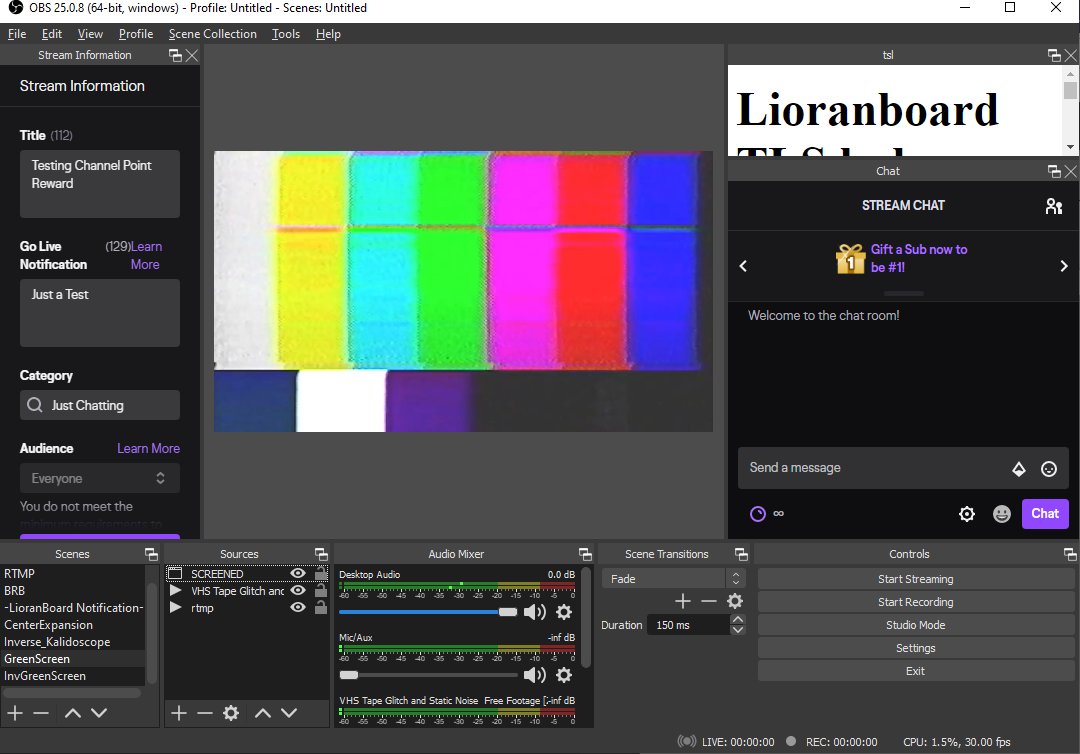

In OBS, create a scene to switch to and add whatever sources you'd like.

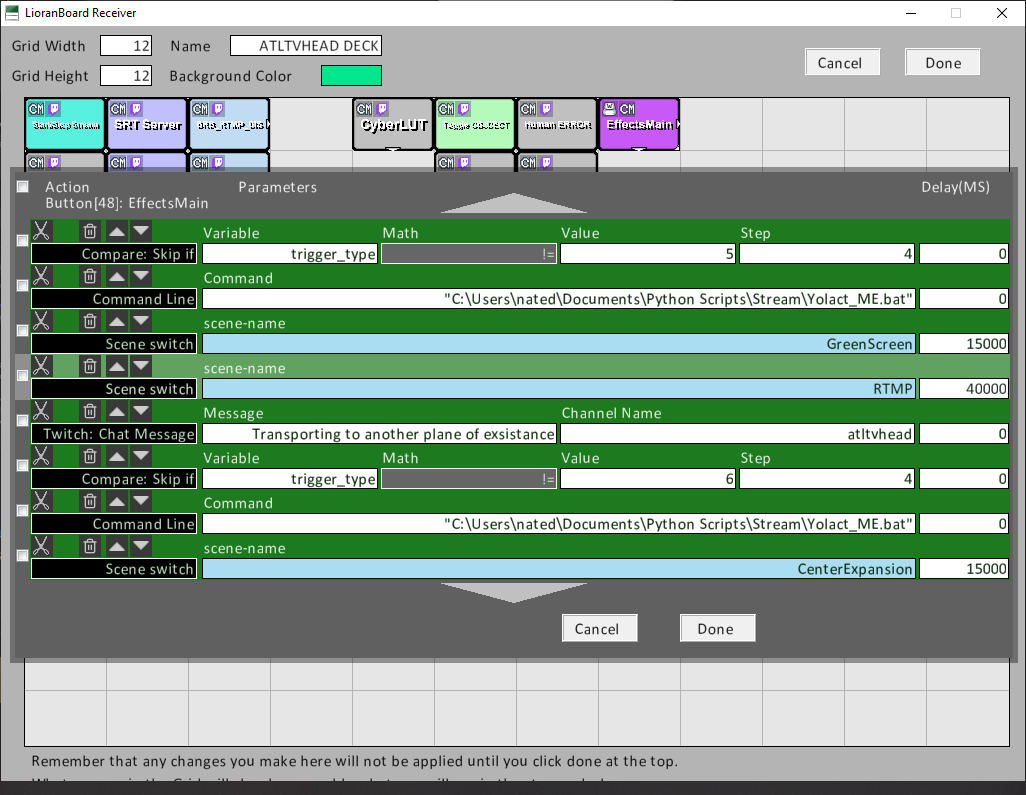

For me, I recreated a green screen effect using machine learning and the YOLACT algorithm, so I included the source window generated by Python and YOLACT. I also rewrote part of YOLACT to display either only the detected objects or the inverse, and I added a glitchy VHS background.

Back in Lorianboard, I created a button with a "stack" (or queue) of effects. It looks like the following: an if statement on a variable for the corresponding effect, a bash script executed by Lorianboard, and scene-changing commands based on timers.
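The shape of that button stack can be sketched in Python. This is only an illustration of the if / script / timed-scene-change pattern, not Lorianboard's own command format. The function names, the `"greenscreen"` variable value, the scene names, the script name, and the 5 and 30 second timers are all hypothetical.

```python
import time


def build_stack(effect, scenes, script):
    """Return the ordered steps for one button press as (delay_seconds, action, argument)."""
    steps = []
    if effect == "greenscreen":  # the "if" on the effect variable
        steps.append((0, "run_script", script))            # start the detector script
        steps.append((5, "set_scene", scenes["effect"]))   # wait for its window, then switch
        steps.append((30, "set_scene", scenes["main"]))    # switch back when the timer ends
    return steps


def run_stack(steps, handlers, sleep=time.sleep):
    """Execute the steps in order, waiting each step's delay before calling its handler."""
    for delay, action, argument in steps:
        sleep(delay)
        handlers[action](argument)
```

Passing the handlers and the sleep function in as arguments keeps the sketch independent of OBS: a real setup would plug in a scene-switching call and a script launcher, while a dry run can plug in functions that just record what would have happened.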

The bash script runs my YOLACT Python file with command-line arguments.

python eval.py --trained_model=weights/yolact_resnet50_54_800000.pth --score_threshold=0.15 --top_k=15 --video_multiframe=20 --greenscreen=True --video="rtmp://192.168.1.202/live/test"

Notice my video is actually an RTMP video stream. I have an nginx-rtmp video server running in a Docker container, which my phone broadcasts to using Larix Broadcaster.
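The same invocation could also be launched from Python instead of a bash script. A minimal sketch, assuming the command is run from the YOLACT checkout: `build_eval_command` and `launch` are hypothetical helper names, and `--greenscreen` is the flag from my modified copy of `eval.py`, so an unmodified YOLACT will not accept it.

```python
import subprocess


def build_eval_command(video_url, greenscreen=True):
    """Build the argument list for the modified YOLACT eval.py (no shell quoting needed)."""
    return [
        "python", "eval.py",
        "--trained_model=weights/yolact_resnet50_54_800000.pth",
        "--score_threshold=0.15",
        "--top_k=15",
        "--video_multiframe=20",
        f"--greenscreen={greenscreen}",  # custom flag from the modified eval.py
        f"--video={video_url}",
    ]


def launch(video_url, yolact_dir="."):
    """Start detection in the background and return the process handle."""
    # Popen returns immediately, so scene switching can continue while YOLACT runs.
    return subprocess.Popen(build_eval_command(video_url), cwd=yolact_dir)
```

Calling `launch("rtmp://192.168.1.202/live/test")` would start the detector against the phone's stream, and calling `terminate()` on the returned handle stops it when the effect is over.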

But anyway, the EFFECTS! Let's check out what I have created so far.

Pretty good for AI.

Inspired by Squarepusher's Terminal Slam video and some others' work to recreate it, I tried my own variant.

atltvhead
