After some struggles (and nightmares!) understanding the IEEE-754 specs and several FPU IP data sheets, I have nearly finished implementing the NEORV32 floating-point extension.

I have decided to implement the RISC-V Zfinx floating-point extension rather than the standard F extension to focus on size-optimization. Zfinx uses the standard integer register file x instead of a dedicated floating-point register file f, which is required by the F extension.

Using the x registers for floating-point operations makes context save/restore much faster since there is no need to push/pop f on the stack and also lowers hardware requirements by avoiding another full-scale 32*32-bit register file.

As a nice side effect, the following F-extension floating-point instructions become obsolete when using Zfinx:

- FLW, FSW - load/store f registers

- FMV.X.W, FMV.W.X - move data between f and x register files

Unfortunately, Zfinx is not yet supported by the upstream RISC-V GCC port. There are experimental patches, but I like having a production-ready setup that allows "plug-and-play" - or download-and-play, to be more precise.

Therefore, I decided to use a plain rv32i toolchain and add Zfinx support by adding an intrinsic library. In case you don't know, intrinsics are basically some kind of inline assembly nicely wrapped up in C-language functions. I have written a set of macros (see on GitHub) that finally generate inline assembly producing a 32-bit instruction word using the ".word" assembler directive:

asm volatile (".word 0x1234abcd");

In this case, there would be a static instruction word 0x1234abcd. With some help from the macros you can modify the instruction word - or, to be more specific, modify fields like the opcode and the register addresses, which turns it into a "custom instruction" macro.
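To make the field packing concrete, here is a minimal sketch of how an R-type instruction word can be put together from its fields. The helper name `encode_r_type` is hypothetical and not part of the NEORV32 library: the real macros build the word at compile time for the `.word` directive, while this plain C function only reproduces the bit layout (funct7, rs2, rs1, funct3, rd, opcode) defined by the RISC-V base ISA.

```c
#include <assert.h>
#include <stdint.h>

/* Hypothetical helper (not from the NEORV32 sources): pack the six
 * fields of a RISC-V R-type instruction into one 32-bit word. */
uint32_t encode_r_type(uint32_t funct7, uint32_t rs2, uint32_t rs1,
                       uint32_t funct3, uint32_t rd, uint32_t opcode) {
    /* Reject values that do not fit into their field. */
    assert(funct7 < 128u && funct3 < 8u && opcode < 128u);
    assert(rs2 < 32u && rs1 < 32u && rd < 32u);

    return (funct7 << 25) | (rs2 << 20) | (rs1 << 15) |
           (funct3 << 12) | (rd << 7) | opcode;
}
```

With a0 = x10 and a1 = x11, `encode_r_type(0x00, 11, 10, 0x0, 10, 0x53)` yields `0x00B50553`, which is the word for `fadd.s a0, a0, a1` with the rounding-mode field set to 000.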

Putting it all together, the Zfinx intrinsic library looks like this (cut-out of the floating-point addition instruction FADD):

    uint32_t __attribute__ ((noinline)) riscv_intrinsic_fadds(uint32_t rs1, uint32_t rs2) {

      register uint32_t result __asm__ ("a0");
      register uint32_t tmp_a  __asm__ ("a0") = rs1;
      register uint32_t tmp_b  __asm__ ("a1") = rs2;

      // dummy instruction to prevent GCC "constprop" optimization
      asm volatile ("add x0, %[input_i], %[input_j]" : : [input_i] "r" (tmp_a), [input_j] "r" (tmp_b));

      // fadd.s a0, a0, a1
      CUSTOM_INSTR_R2_TYPE(0b0000000, a1, a0, 0b000, a0, 0b1010011);

      return result;
    }

This function uses the noinline attribute, which forces the compiler to emit it as a real function that is called using the machine's calling convention. This calling convention passes the two arguments in registers a0 and a1 and returns the function's result in a0. These two registers are hardcoded into the FADD instruction.

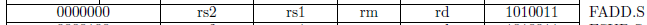

// fadd.s a0, a0, a1

CUSTOM_INSTR_R2_TYPE(0b0000000, a1, a0, 0b000, a0, 0b1010011);

There is also a dummy integer ADD instruction that adds the function's arguments but discards the result, since it is written to register x0, which is hardwired to zero in RISC-V. This is a nice work-around that keeps the compiler from reducing the function to some sort of constant-propagated ("constprop") variant: because the inline assembly actually does something with both arguments that the compiler can identify, it cannot optimize them away.

// dummy instruction to prevent GCC "constprop" optimization

asm volatile ("add x0, %[input_i], %[input_j]" : : [input_i] "r" (tmp_a), [input_j] "r" (tmp_b));

This intrinsic library works pretty well. I have also added "emulation" functions that compute the same operations as their intrinsic counterparts but use the GCC built-in functions. For example, the corresponding FADD emulation function looks like this:

    float riscv_emulate_fadds(float rs1, float rs2) {

      float res = rs1 + rs2;
      return subnormal_flush(res);
    }

So it's a plain C-language floating-point addition. Since everything is compiled for an rv32i architecture, the compiler falls back to its built-in software floating-point routines to compute the addition, which makes a great reference to check the results of the actual hardware floating-point unit against.
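The `subnormal_flush` helper is not shown above. Judging only by its name, it presumably replaces subnormal results with a zero of the same sign so that the software reference matches an FPU that does not handle subnormals. The following is a sketch under that assumption; the function names are hypothetical and the real helper in the repository may look different.

```c
#include <stdint.h>
#include <string.h>

/* Reinterpret a float as its raw IEEE-754 single-precision bit pattern. */
uint32_t float_to_bits(float value) {
    uint32_t bits;
    memcpy(&bits, &value, sizeof bits);
    return bits;
}

/* Reinterpret a raw 32-bit pattern as a float. */
float bits_to_float(uint32_t bits) {
    float value;
    memcpy(&value, &bits, sizeof value);
    return value;
}

/* Assumed behaviour (not taken from the NEORV32 sources): a subnormal
 * has an all-zero exponent and a non-zero mantissa; replace it with a
 * zero that keeps the original sign. Everything else passes through. */
float flush_subnormal_sketch(float value) {
    uint32_t bits = float_to_bits(value);
    uint32_t exponent = (bits >> 23) & 0xFFu;
    uint32_t mantissa = bits & 0x007FFFFFu;

    if (exponent == 0u && mantissa != 0u) {
        return bits_to_float(bits & 0x80000000u);
    }
    return value;
}
```

Working on the raw bit pattern avoids any dependency on the math library and keeps the sign of the flushed value, so a negative subnormal becomes -0.0 rather than +0.0.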

I have set up tests for all Zfinx instructions that compare the results against the "golden" software-only reference. The inputs are generated by a XOR32 pseudo-random number generator, and every now and then special values like INFINITY or NAN are inserted. Everything has been running on one of my FPGA boards for several hours and the results look quite promising :D
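The comparison loop described above could be sketched roughly as follows. This is not the code from the repository: all names are hypothetical, "XOR32" is assumed to mean a xorshift-style generator (the classic 13/17/5 variant is used here), and the rate at which special values are injected is an arbitrary choice. The device under test is passed in as a function pointer so that the sketch also compiles on a machine without the FPU.

```c
#include <stdint.h>
#include <string.h>

/* One step of Marsaglia's xorshift32; the state must never be zero. */
uint32_t xorshift32(uint32_t *state) {
    uint32_t x = *state;
    x ^= x << 13;
    x ^= x >> 17;
    x ^= x << 5;
    *state = x;
    return x;
}

/* Random operand bits; roughly 1 in 256 draws is replaced by a special
 * value (+inf, -inf, quiet NaN, +0, -0). The rate is an assumption. */
uint32_t next_operand(uint32_t *state) {
    static const uint32_t special[] = {
        0x7F800000u, 0xFF800000u, 0x7FC00000u, 0x00000000u, 0x80000000u
    };
    uint32_t r = xorshift32(state);
    if ((r & 0xFFu) == 0u) {
        return special[(r >> 8) % (sizeof special / sizeof special[0])];
    }
    return r;
}

/* Software reference: float addition on raw bit patterns. */
uint32_t ref_fadd_bits(uint32_t a, uint32_t b) {
    float fa, fb, sum;
    uint32_t out;
    memcpy(&fa, &a, sizeof fa);
    memcpy(&fb, &b, sizeof fb);
    sum = fa + fb;
    memcpy(&out, &sum, sizeof out);
    return out;
}

typedef uint32_t (*binop_fn)(uint32_t, uint32_t);

/* Run n random test cases and count bit-level mismatches between the
 * device under test and the reference. */
uint32_t count_mismatches(binop_fn dut, binop_fn ref, uint32_t n, uint32_t seed) {
    uint32_t state = seed;
    uint32_t errors = 0;
    for (uint32_t i = 0; i < n; i++) {
        uint32_t a = next_operand(&state);
        uint32_t b = next_operand(&state);
        if (dut(a, b) != ref(a, b)) {
            errors++;
        }
    }
    return errors;
}
```

On the target, `dut` would be a thin wrapper around the matching intrinsic. Note that a bit-exact comparison also flags NaN results that differ only in their payload, so a real test may want to treat any two NaNs as equal.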

    <<< Zfinx extension test >>>
    SILENT_MODE enabled (only showing actual errors)
    Test cases per instruction: 50000000

    #0: FCVT.S.WU (unsigned integer to float)... Errors: 0/50000000 [ok]
    #1: FCVT.S.W (signed integer to float)... Errors: 0/50000000 [ok]
    #2: FCVT.WU.S (float to unsigned integer)... Errors: 0/50000000 [ok]
    #3: FCVT.W.S (float to signed integer)... Errors: 0/50000000 [ok]
    #4: FADD.S (addition)... Errors: 0/50000000 [ok]

The whole Zfinx-related software framework is available on GitHub: https://github.com/stnolting/neorv32/tree/master/sw/example/floating_point_test

I have not yet published the FPU VHDL code on the NEORV32 GitHub repo. There are still some tiny things to fix in the FPU. Most important: code clean-up (also, the VHDL sources are still full of inappropriate curse words... xD).

Also, I have not found a convenient way to verify the generated exception flags. The standard C++ handling of the exceptions generated by the SOFTWARE floating-point emulation does not seem to be supported by my toolchain.

#include <fenv.h>

#pragma STDC FENV_ACCESS ON

Maybe someone out there has a nice idea how to verify the exceptions?! ;)

The FPU sources will be released within the next few days - so stay tuned!
