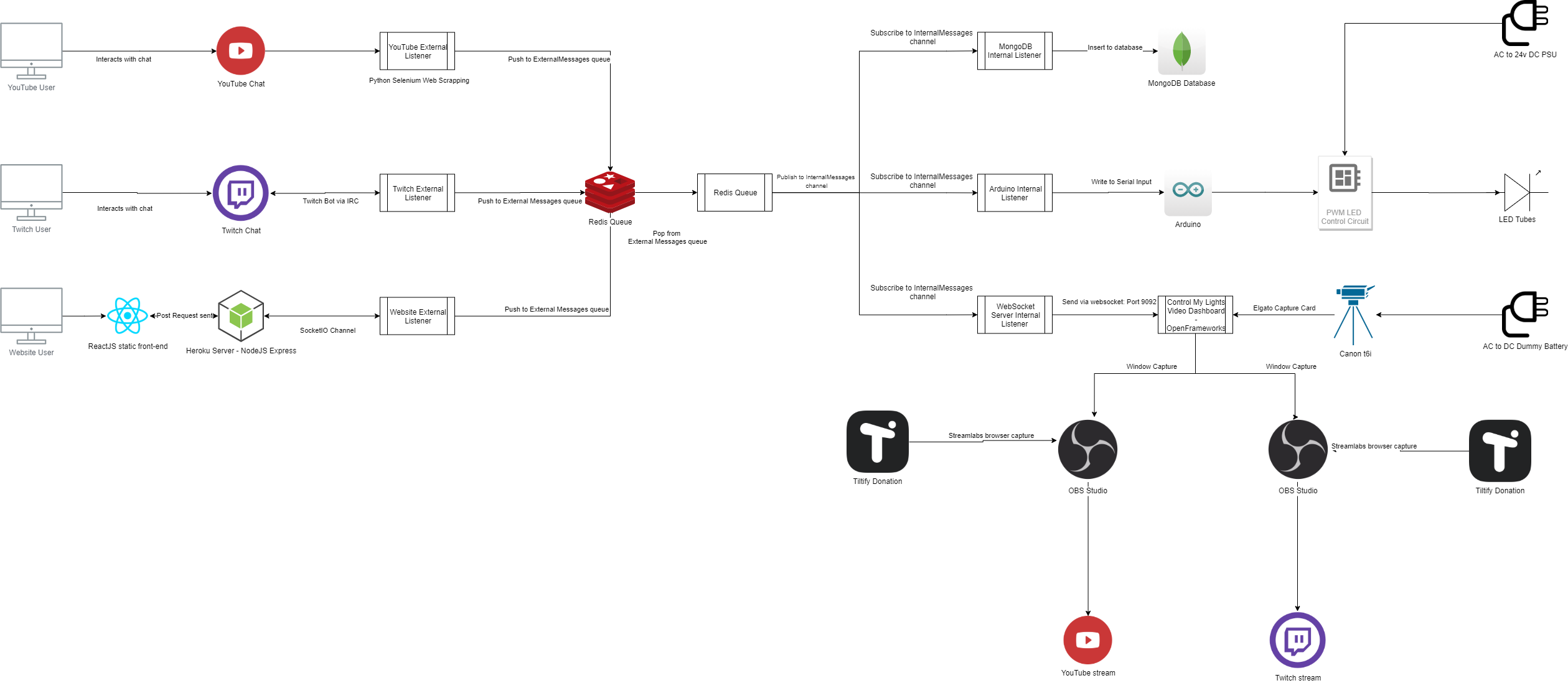

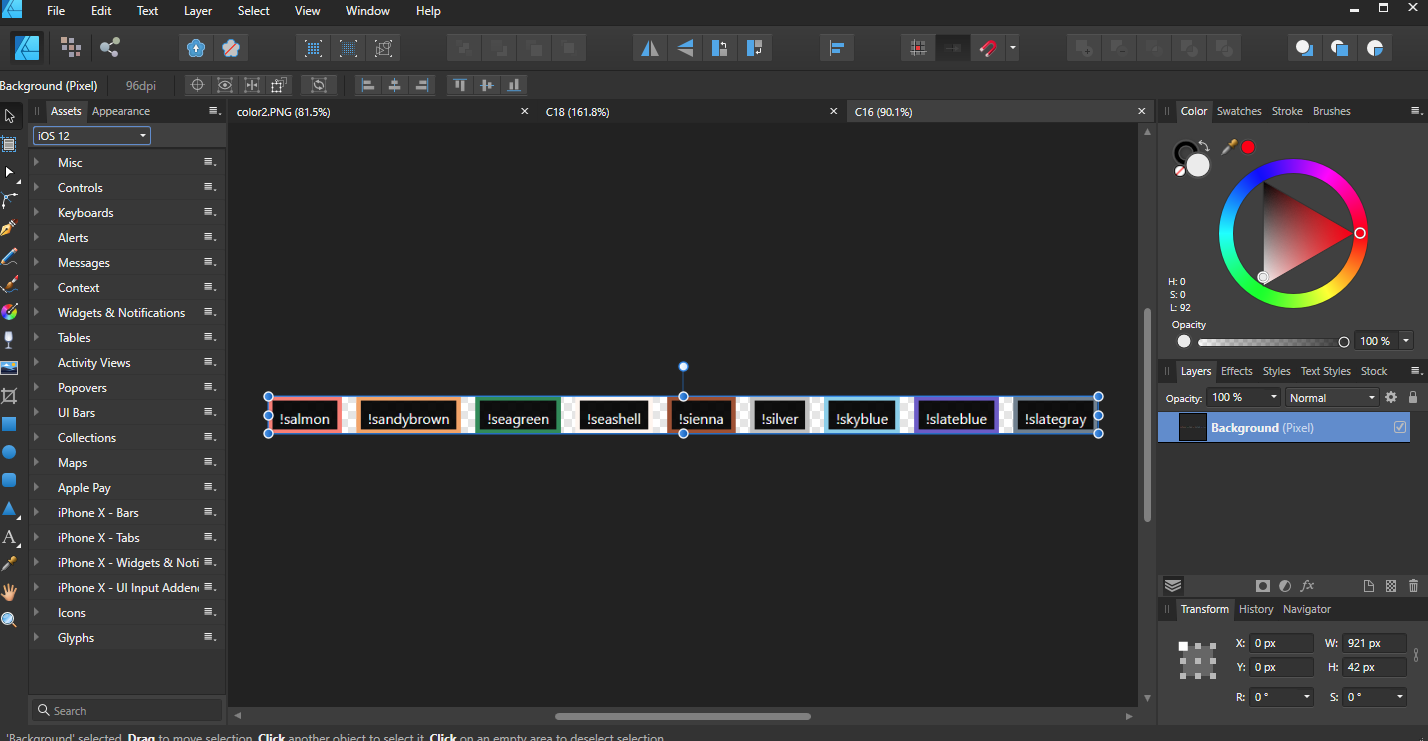

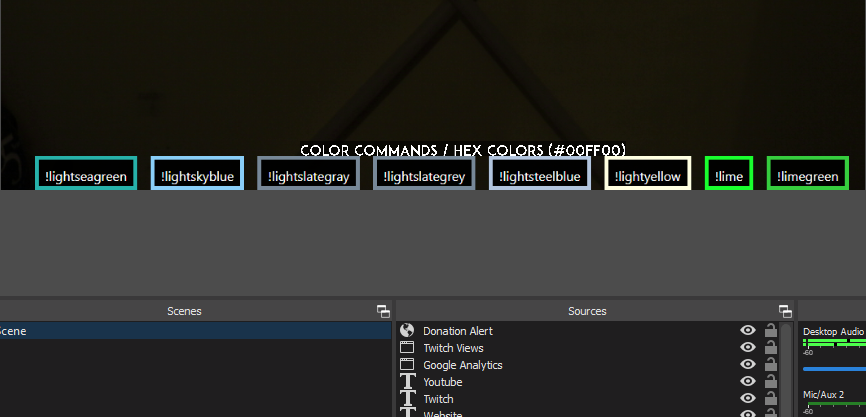

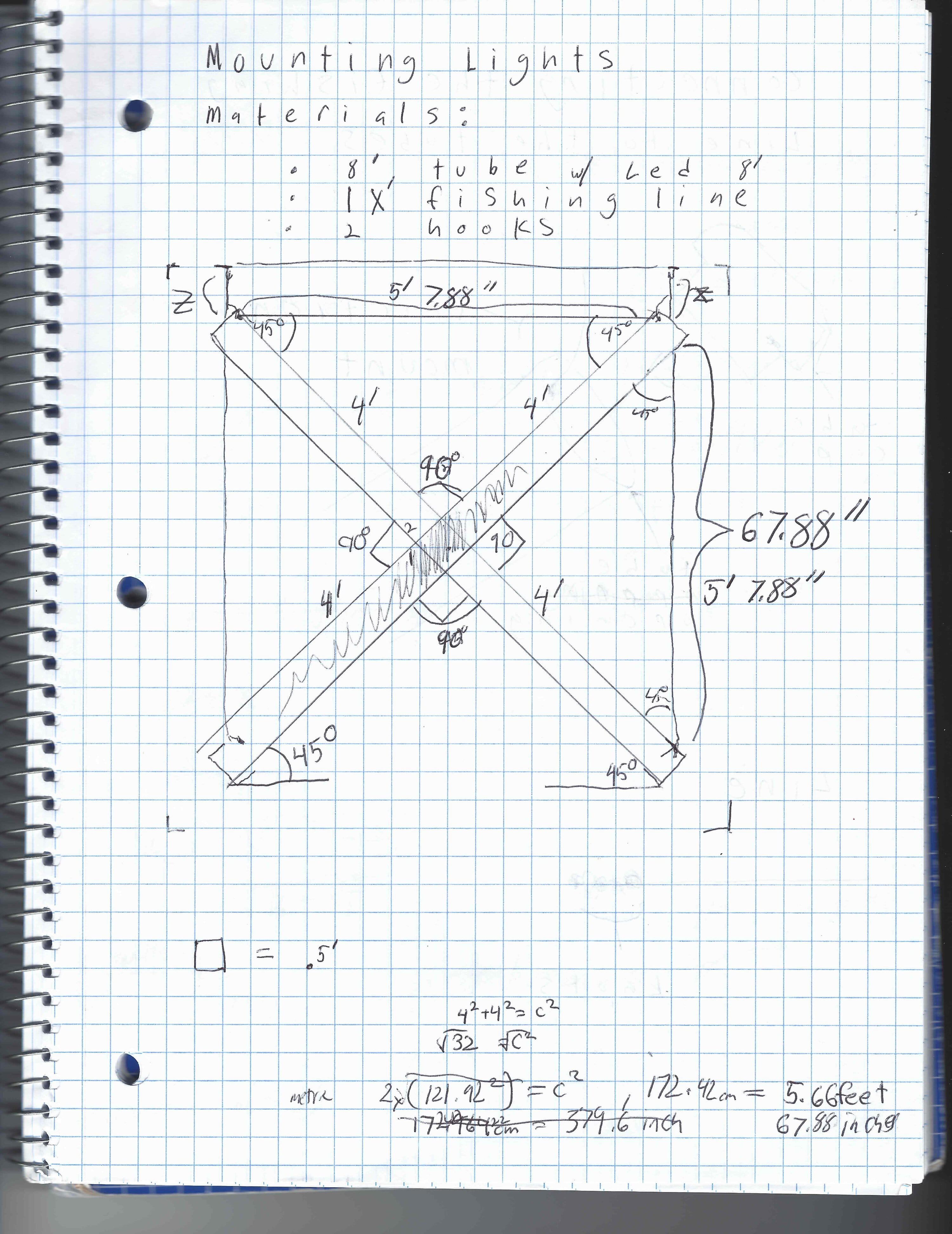

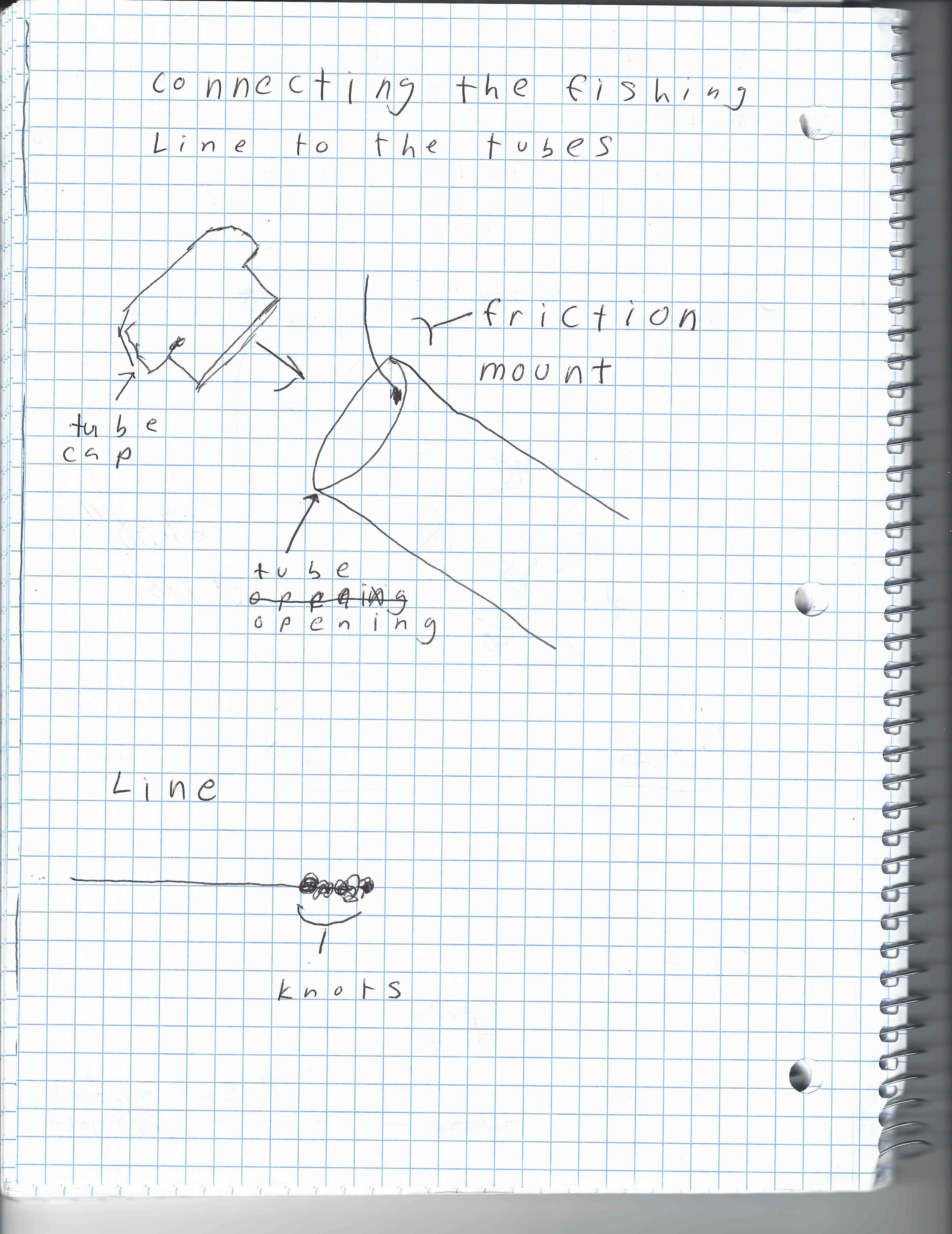

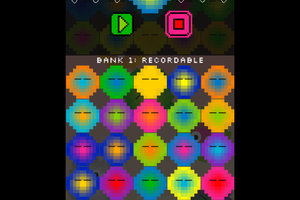

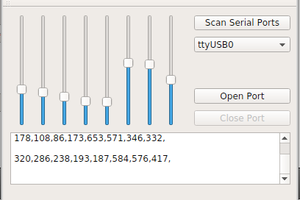

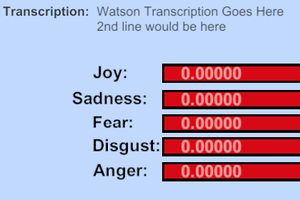

This is an interactive light installation that can be controlled via a website, Twitch chat, or YouTube chat. I am also using it to raise money for Feeding America (I put in the first $25; the goal is $1,000). I would be honored if you checked it out. The server-side tech stack (hosted on Heroku) is Node.js (Express and Socket.IO) and React. I also made the mockups for it in Figma. The desktop tech stack is Node.js, Python (Selenium with the Chrome WebDriver), Redis, MongoDB, openFrameworks (a C++ framework), Arduino, and WebSockets.
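The stack above does not spell out how a chat message turns into a light change. As an illustration only, here is a minimal sketch in Python (one of the desktop languages), assuming a hypothetical `!color <name|#rrggbb>` chat command and a hypothetical 4-byte serial frame for the Arduino. Neither the command syntax, the color names, nor the frame layout comes from the project itself.

```python
from typing import Optional, Tuple

# Hypothetical palette: the installation's real command set is not documented here.
NAMED_COLORS = {
    "red": (255, 0, 0),
    "green": (0, 255, 0),
    "blue": (0, 0, 255),
    "white": (255, 255, 255),
    "off": (0, 0, 0),
}

HEX_DIGITS = set("0123456789abcdef")


def parse_command(message: str) -> Optional[Tuple[int, int, int]]:
    """Turn a chat message like '!color red' or '!color #ff8800' into an RGB tuple.

    Returns None for anything that is not a valid (hypothetical) light command.
    """
    parts = message.strip().split()
    if len(parts) != 2 or parts[0].lower() != "!color":
        return None
    arg = parts[1].lower()
    if arg in NAMED_COLORS:
        return NAMED_COLORS[arg]
    # Accept exactly '#' followed by six hex digits.
    if len(arg) == 7 and arg[0] == "#" and set(arg[1:]) <= HEX_DIGITS:
        value = int(arg[1:], 16)
        return ((value >> 16) & 0xFF, (value >> 8) & 0xFF, value & 0xFF)
    return None


def to_serial_frame(rgb: Tuple[int, int, int]) -> bytes:
    """Pack an RGB tuple into a hypothetical 4-byte frame: 0xFF start marker, then R, G, B.

    Channels are clamped to 0..254 so a color byte can never be mistaken for the marker.
    """
    return bytes([0xFF] + [min(c, 254) for c in rgb])


if __name__ == "__main__":
    for msg in ["!color red", "!color #ff8800", "hello chat"]:
        rgb = parse_command(msg)
        print(msg, "->", rgb, to_serial_frame(rgb) if rgb else None)
```

In a setup like the one described, a message scraped from Twitch or YouTube chat by Selenium could be passed to `parse_command`, and the resulting frame written to the Arduino over a serial port (for example with pySerial's `Serial.write`). Reserving one byte value as a start marker is a simple way to let the microcontroller resynchronize if a byte is dropped.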

Control My Lights

Control My Lights using a website, Twitch chat, or YouTube chat: controlmylights.net

Edward C. Deaver, IV

Ben Delarre

Fluxly

ZaidPirwani

Jerry Isdale
