Love Elemental

A fuzzy, glowing, touch-responsive serpent using ROS + SOTA machine learning to make lifelike behavior.

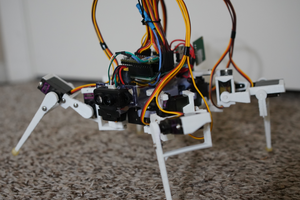

Ellie can now move properly, after working with RobotShop to debug how I was using the LSS servos.

Here she is with her ribs on: each rib has 29-32 RGB-controllable LEDs. You can also see her janky v0 head, which is just a LattePanda Alpha, a RealSense D435i camera, and a battery pack zip-tied on board. The wide-angle lens hides the fact that she's almost a meter long uncurled.

Here she is moving at last. The gait is a Central Pattern Generator (CPG), based on the one used in AmphiBot II (https://www.researchgate.net/publication/40755126_AmphiBot_II_An_Amphibious_Snake_Robot_that_Crawls_and_Swims_using_a_Central_Pattern_Generator). Although we don't have proper movement yet, I believe this is caused by the floor and wheel design; the wheels and tire mounts will need further iterations.

I then tried increasing the gait frequency to 25 Hz, which noticeably reduced the jitter and the annoying audible noise.

Now that the basic motion controls all seem to work, there's a lot of work to be done in finding workable gait parameters. The CPG equations and software have several parameters:

- `h`, the differential equation solver step size

- `a`, a constant whose selection I'm not sure about yet (see the paper)

- `w`, the oscillator coupling coefficient

- `v`, the CPG frequency

- `delta_phi`, the number of sine wave periods within a body segment

- `A`, the amplitude of the gait

- `servo_update`, the update rate of positions to servos

- the servo parameters, such as gain, stiffness, speed, acceleration, etc.

- the spine vertical angle, to have Ellie "press" herself into the floor to improve traction/wheel contact
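To show how most of the parameters above interact, here is a minimal sketch loosely following the coupled-oscillator form of the AmphiBot II paper linked above. It is not Ellie's controller. It assumes nearest-neighbor coupling only, explicit Euler integration with step `h`, and a joint output of `r * cos(theta)`, and it interprets `delta_phi` as the phase lag between neighboring oscillators in fractions of a period. `servo_update` and the servo settings are not modeled; in a real loop, the joint angles would be sampled at that rate.

```python
import math


def cpg_step(theta, r, dr, h, a, w, v, delta_phi, A):
    """Advance a chain of coupled phase oscillators by one explicit-Euler step.

    Simplified, AmphiBot-II-style CPG (assumption: nearest-neighbor coupling only).
    theta: phases [rad], r: amplitudes [rad], dr: amplitude rates [rad/s].
    """
    n = len(theta)
    bias = 2.0 * math.pi * delta_phi  # desired phase lag between neighbors
    new_theta, new_r, new_dr = [], [], []
    for i in range(n):
        dtheta = 2.0 * math.pi * v  # intrinsic frequency v [Hz]
        if i > 0:  # oscillator i-1 should lead oscillator i by `bias`
            dtheta += w * r[i - 1] * math.sin(theta[i - 1] - theta[i] - bias)
        if i < n - 1:  # oscillator i+1 should lag oscillator i by `bias`
            dtheta += w * r[i + 1] * math.sin(theta[i + 1] - theta[i] + bias)
        # second-order system pulling r toward the target amplitude A
        ddr = a * (a / 4.0 * (A - r[i]) - dr[i])
        new_theta.append(theta[i] + h * dtheta)
        new_r.append(r[i] + h * dr[i])
        new_dr.append(dr[i] + h * ddr)
    return new_theta, new_r, new_dr


def joint_angles(theta, r):
    """Joint setpoints [rad]; the output r * cos(theta) is an assumption."""
    return [ri * math.cos(ti) for ti, ri in zip(theta, r)]


if __name__ == "__main__":
    n_joints = 6  # hypothetical number of yaw joints
    theta, r, dr = [0.0] * n_joints, [0.0] * n_joints, [0.0] * n_joints
    for _ in range(5000):  # 50 s of simulated time at h = 0.01 s
        theta, r, dr = cpg_step(theta, r, dr, h=0.01, a=10.0, w=5.0,
                                v=1.0, delta_phi=0.15, A=0.5)
    print([round(x, 3) for x in joint_angles(theta, r)])
```

The `a / 4` factor makes the continuous-time amplitude equation critically damped, so the amplitude settles toward a new `A` without oscillating. The coupling terms vanish once neighbors sit exactly `delta_phi` periods apart, which produces the traveling wave along the body.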

Ellie got tired after all that hard work, and one pushrod even came completely undone. Now that the movements are working, I can go ahead and apply Loctite to all of the pushrod connections (which I had delayed in case I needed to do major maintenance).

In the above photo, you can also see the tires, which are simple TPU parts press-fit onto tiny 603ZZ bearings. The tires slip sideways and rub against the support struts, which also reduces the anisotropic friction Ellie relies on (the wheels should roll easily forward but resist sliding sideways). Please leave a comment if you have suggestions for improving the wheels!

After nearly a year of R&D, the hardware for the Love Elemental's body is pretty much done.

She's got 7 working segments with custom electronics and controls: each segment has a 120 MHz Cortex-M4 microcontroller that handles LED visualization, motion planning, battery charging, and CAN-FD communication. The 'head' (a stopgap for the real thing) has a LattePanda Alpha, a crazy-fast x86 board running at nearly 4 GHz that's the size of a Raspberry Pi.
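The log doesn't describe the CAN-FD message format between the head and the segments, so the following is purely illustrative. `pack_segment_command` and its byte layout (segment id, two servo targets, one RGB color) are hypothetical. What the sketch does reflect is a real CAN FD constraint: a frame can only carry 0-8, 12, 16, 20, 24, 32, 48, or 64 data bytes, so each payload is padded up to the next valid length.

```python
import struct

# Payload sizes a CAN FD frame can carry, indexed by DLC code 0-15.
CAN_FD_LENGTHS = (0, 1, 2, 3, 4, 5, 6, 7, 8, 12, 16, 20, 24, 32, 48, 64)


def pad_to_can_fd(payload: bytes, fill: int = 0x00) -> bytes:
    """Pad a payload up to the smallest valid CAN FD data length."""
    for length in CAN_FD_LENGTHS:
        if len(payload) <= length:
            return payload + bytes([fill]) * (length - len(payload))
    raise ValueError("CAN FD frames carry at most 64 data bytes")


def pack_segment_command(segment_id: int, servo_a_deg: float, servo_b_deg: float,
                         led_rgb: tuple) -> bytes:
    """HYPOTHETICAL layout: u8 segment id, two little-endian float32 servo
    targets in degrees, then one RGB triple (3 x u8)."""
    payload = struct.pack("<Bff3B", segment_id, servo_a_deg, servo_b_deg, *led_rgb)
    return pad_to_can_fd(payload)


frame_data = pack_segment_command(3, 15.0, -7.5, (255, 0, 128))
print(len(frame_data), frame_data.hex())
```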

With the LEDs mounted on the ribs, the fur effect is definitely looking awesome!

The actuators are Lynxmotion LSS-HT servos, but I'm running into overtorque and shutdown issues. Hopefully it won't be long before Ellie is swimming across the floor.

I've spent the past many months designing and building the PCBs and mechanics of the Love Elemental. This log includes a few teasers of things that will go into the build as I put it together over the next couple of months. The boards have nearly all the functionality I hoped for: automatic charging for LiPos, 10A+ discharge from integrated 3S LiPos, DMA-based control of LED strips for animation, connections to the servo motors, and a fan driver (in case the board needs extra cooling). The capacitive touch sensors work, but when combined with the actively shielded cables I'm using, they don't have the range/proximity I wanted. The battery protection and charge management IC circuit doesn't work, so that will need to be redone at some point. All but one circuit working on the first spin, for my first PCB in over 10 years? I'll take it!
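For a sense of scale, here is the power-budget arithmetic for a 3S pack. It uses the standard LiPo cell voltages (4.2 V full, 3.7 V nominal) rather than anything measured on these boards. The 3.0 V empty cutoff is an assumption, since the log doesn't state one.

```python
CELLS = 3             # "3S": three LiPo cells in series
V_CELL_FULL = 4.2     # V, standard LiPo full-charge voltage
V_CELL_NOMINAL = 3.7  # V, standard LiPo nominal voltage
V_CELL_EMPTY = 3.0    # V, assumed conservative discharge cutoff


def pack_voltage(v_cell: float, cells: int = CELLS) -> float:
    """Series pack voltage: the cell voltages add up."""
    return v_cell * cells


def discharge_power(current_a: float, v_cell: float = V_CELL_NOMINAL) -> float:
    """Electrical power in watts drawn from the pack at a given current."""
    return pack_voltage(v_cell) * current_a


print(round(pack_voltage(V_CELL_FULL), 2))     # 12.6
print(round(pack_voltage(V_CELL_NOMINAL), 2))  # 11.1
print(round(pack_voltage(V_CELL_EMPTY), 2))    # 9.0
print(round(discharge_power(10.0), 1))         # 111.0
```

So a 10 A discharge from a nominal 3S pack corresponds to roughly 111 W per segment board.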

The body has also undergone many iterations and improvements to make it easier to assemble and iterate on, and to give it the capacity to mount everything needed. Most excitingly, the Love Elemental now has ribs that are attached to the inner skeleton. These ribs use 3D-printed flexures to constrain their movement, so although it'll only have 7 segments, it will have 28 passively articulated ribs that each move individually, which I think will give it a very nice aesthetic. The design of the ribs, flexures, and mates will have to come in another post, as there are a lot of tricks I used to ensure they're easy to print, assemble, and replace, while also being strong.

Lastly, I imported all the mechanical designs into SolidWorks so that I can iterate faster than my custom CAD environment allows. As it turns out, even I like the real CAD tools :)

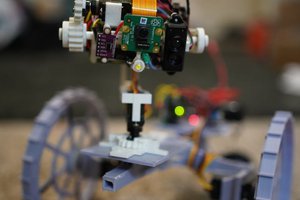

I've managed to bring up mapping and visual odometry in my ROS simulation of the Love Elemental. From the get-go, I knew I wanted to use a Kinect/RealSense-style depth sensor for perception, because a color + depth map seems like a rich space for doing interesting things, and the cost of these sensors has fallen dramatically over the years.

Before getting into the details, here's a screenshot of RViz (top right, visualizing only the robot's internal model state), Gazebo (bottom right, visualizing the actual simulation), and RTAB-Map (left, visualizing the map-making and SLAM model):

For navigation, I knew from the start that I would use ROS Navigation, a mature, open-source navigation platform. To set up navigation for a robot, you need several things:

- odometry (where are you relative to where you were)

- pose estimation (how are the joints oriented & which way are you pointing)

- mapping (what's around you)

- a movement controller (how do you go forward or turn)

- path planning (how should I get from here to there)

Some of these are easier and some are harder. I'll now go into more detail about what I tried, what I learned, and how I got this working.

For instance, odometry is easy for wheeled robots, since you can measure distance traveled via wheel encoders. For a serpent robot, it is not a well-studied problem. My initial gait controller R&D (see the prior log) had a way to do dead reckoning, but I've found that wheel/ground slippage happens constantly, so I can't use dead reckoning or encoder odometry. So, I tried several packages to compute odometry.
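To see why unmeasured slip rules out dead reckoning, the sketch below integrates a planar unicycle model twice: once with the commanded speed, and once with that speed reduced by a constant slip fraction. The slip value and speeds are made up, and a real serpent's slip is neither constant nor purely longitudinal. The point is only that the error grows with distance traveled and nothing ever corrects it.

```python
import math


def dead_reckon(x, y, heading, v, omega, dt):
    """One planar dead-reckoning step of a unicycle model."""
    x += v * math.cos(heading) * dt
    y += v * math.sin(heading) * dt
    heading += omega * dt
    return x, y, heading


def drift_after(seconds, v=0.1, omega=0.0, slip=0.2, dt=0.01):
    """Distance between the dead-reckoned position and a 'true' position whose
    forward speed is reduced by a constant, unmeasured slip fraction.
    All default values are hypothetical (straight-line motion by default)."""
    est = true = (0.0, 0.0, 0.0)
    for _ in range(int(round(seconds / dt))):
        est = dead_reckon(*est, v, omega, dt)
        true = dead_reckon(*true, v * (1.0 - slip), omega, dt)
    return math.hypot(est[0] - true[0], est[1] - true[1])


for t in (1, 10, 30):
    print(f"after {t:>2} s: {drift_after(t):.3f} m of uncorrected error")
```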

First, I tried hector_slam, which uses a lidar scanner and estimates the map and the robot's location simultaneously. As it turned out, hector_slam's scan alignment needs a very wide field of view to succeed, and since I was converting depth images to laser scans (via the eponymous depthimage_to_laserscan ROS node), I could only get a laser scan with a narrow FOV. So, hector_slam failed to map and align successfully.
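The narrow FOV comes from the camera itself. A pinhole camera whose image is `w` pixels wide, with a focal length of `f_x` pixels, sees `2 * atan(w / (2 * f_x))` horizontally. The sketch below computes that FOV and converts one row of a metric depth image into (angle, range) pairs, which is roughly what a depth-image-to-laser-scan conversion does. It is a simplified illustration, not the depthimage_to_laserscan source, and the intrinsics in the example are placeholders rather than the D435i's calibration.

```python
import math


def horizontal_fov_deg(width_px, fx):
    """Horizontal field of view of a pinhole camera, in degrees."""
    return math.degrees(2.0 * math.atan(width_px / (2.0 * fx)))


def depth_row_to_scan(depth_row, fx, cx):
    """Convert one row of metric depth values into (angle_rad, range_m) pairs.

    Angles are measured from the optical axis, positive toward image right
    (a convention chosen for this sketch). Pixels with no depth (0 or NaN)
    are skipped.
    """
    scan = []
    for u, z in enumerate(depth_row):
        if not z or math.isnan(z):
            continue
        x = (u - cx) * z / fx  # lateral offset of the 3D point [m]
        scan.append((math.atan2(x, z), math.hypot(x, z)))
    return scan


# Placeholder intrinsics (not a real D435i calibration): 640 px wide, fx = 380 px.
print(round(horizontal_fov_deg(640, 380.0), 1))  # roughly 80 degrees
```

Whatever the exact intrinsics, the scan can never be wider than this fan, which is much narrower than the full sweep of a spinning lidar.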

Next, I found ORB-SLAM2, which takes a stream of monocular, stereo, or RGB-D images and tracks ORB features across frames to estimate odometry (it also builds a map). After I built ORB-SLAM2 and got it running on the Love Elemental simulation, it lost tracking almost instantly, within a few hundred milliseconds, and then segfaulted. On to the next one!

Finally, I found RTAB-Map, which has a community of users and seems better supported. I also found this tutorial on setting up RTAB-Map with different sensor configurations, which helped me configure it, and luckily it worked! Happily, RTAB-Map handles both mapping and visual odometry, which solved 2 of these problems.

Also, as an aside, if visual odometry really doesn't work out with RTAB-Map, you can always slap a T265 visual odometry module onto the robot and get something significantly more accurate than the open-source options. The downside, of course, is that this isn't good for the aesthetics I'm going for.

Odometry gives you one pose estimate, but typically you'll fuse information from other sensors (like an IMU) to get a better pose estimate than any individual sensor provides. I found this example of pose estimation with RTAB-Map for RealSense, which uses an unscented Kalman filter to fuse visual odometry with the IMU. This is still in development for the simulation, since I'm finding that the simulated IMU gets bounced around a lot.
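The fusion idea can be illustrated with a much simpler filter than the unscented Kalman filter in that example: a scalar, linear Kalman filter on yaw alone, which predicts with the IMU's gyro rate and corrects with the visual-odometry yaw. The class name and noise values are hypothetical. A real setup would also track position and velocity and tune the covariances.

```python
import math


class YawKalman:
    """Scalar Kalman filter: predict yaw from the gyro rate, correct with VO yaw."""

    def __init__(self, yaw0=0.0, var0=1.0, gyro_noise=0.01, vo_noise=0.05):
        self.yaw, self.var = yaw0, var0
        self.q = gyro_noise  # process-noise variance added per second (hypothetical)
        self.r = vo_noise    # visual-odometry measurement variance (hypothetical)

    def predict(self, gyro_rate, dt):
        """Integrate the IMU's yaw rate; uncertainty grows with time."""
        self.yaw += gyro_rate * dt
        self.var += self.q * dt

    def update(self, vo_yaw):
        """Blend in a VO yaw measurement, weighted by the Kalman gain."""
        # wrap the innovation to (-pi, pi] so 179 deg vs -179 deg is a 2 deg error
        innovation = math.atan2(math.sin(vo_yaw - self.yaw), math.cos(vo_yaw - self.yaw))
        k = self.var / (self.var + self.r)  # gain in [0, 1]
        self.yaw += k * innovation
        self.var *= 1.0 - k


f = YawKalman()
for _ in range(10):
    f.predict(gyro_rate=0.1, dt=0.1)  # hypothetical IMU readings at 10 Hz
f.update(vo_yaw=0.12)                 # hypothetical VO measurement
print(round(f.yaw, 3), round(f.var, 4))
```

The gain `k` shifts trust toward whichever source is currently less uncertain: a noisy gyro inflates `var` and pulls the estimate toward VO, while noisy VO (large `r`) keeps it closer to the gyro prediction.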

I still need to implement a movement controller, since the propagation manager isn't a good way to do movement. There are 2 parts to the movement controller I need to implement: the body alignment (i.e. which way is forward when you're slithering, since your head doesn't usually point forward), and how to generate movement (i.e. timing the movement of the joints).

For body alignment, this paper has a simple approach that decomposes the snake's body using PCA to find the principal...
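The paper's method is cut off above, so this is only a generic sketch of the PCA idea. Given 2D positions of points along the body (for example, joint origins from forward kinematics, which is an assumption here), the principal axis of their covariance estimates the overall body direction even when the head points elsewhere. In two dimensions, the dominant eigenvector has a closed form, so no linear-algebra library is needed. Because the eigenvector's sign is arbitrary, it is flipped to point toward the head.

```python
import math


def body_axis(points):
    """Principal axis of a set of 2D body points, via the closed-form
    dominant eigenvector of their 2x2 covariance matrix.

    Returns (centroid, unit_direction). The direction is flipped so it points
    from the centroid toward points[0], assumed to be the head.
    """
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / n
    syy = sum((p[1] - my) ** 2 for p in points) / n
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / n
    angle = 0.5 * math.atan2(2.0 * sxy, sxx - syy)  # orientation of the largest-variance axis
    d = (math.cos(angle), math.sin(angle))
    hx, hy = points[0][0] - mx, points[0][1] - my
    if d[0] * hx + d[1] * hy < 0:  # resolve the +/- ambiguity of the eigenvector
        d = (-d[0], -d[1])
    return (mx, my), d


# Hypothetical serpentine body lying along -x, head at the origin.
body = [(-0.1 * i, 0.05 * math.sin(i)) for i in range(10)]
print(body_axis(body))
```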

(This is as of December 25, 2020)

Due to the interesting structure of a universal spine joint driven by 2 actuated abdominal muscles, doing the trig for the inverse kinematics isn't as simple as it would be for legged robots. Instead, I ran a simulation that swept through the different servo positions, measured the servo positions and resulting spine angles, and trained a LightGBM model to create a kinematics controller.
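The log used LightGBM for this mapping. To keep the sketch dependency-free, the code below swaps in the simplest possible data-driven inverse: sweep the servos, record the resulting spine angles, and answer queries with a nearest-neighbor lookup. `toy_forward` is a made-up stand-in for the simulator and does not describe Ellie's real joint geometry.

```python
import itertools
import math


def toy_forward(s1, s2):
    """HYPOTHETICAL stand-in for the simulator: maps two servo angles [rad]
    to (pitch, yaw) of the spine joint. Not Ellie's real geometry."""
    pitch = math.asin(0.5 * (math.sin(s1) + math.sin(s2)))
    yaw = 0.5 * (s1 - s2)
    return pitch, yaw


def build_table(step=0.02, limit=1.0):
    """Sweep both servos over a grid and record the resulting spine angles."""
    n = int(round(limit / step))
    grid = [i * step for i in range(-n, n + 1)]
    return [(toy_forward(s1, s2), (s1, s2)) for s1, s2 in itertools.product(grid, grid)]


def inverse_kinematics(table, pitch, yaw):
    """Return the servo pair whose recorded spine angles are closest to the target."""
    _, servos = min(table, key=lambda row: (row[0][0] - pitch) ** 2 + (row[0][1] - yaw) ** 2)
    return servos


table = build_table()
target = toy_forward(0.3, -0.2)
print(inverse_kinematics(table, *target))
```

A trained regressor such as LightGBM can generalize between sampled poses and is typically far more compact than the raw table, which is a reasonable motivation for using one on the robot.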

This video shows the simulated robot doing a simple serpentine gait. While there's still a lot to do, I'm excited that some of the project's low-level components are starting to feel real.

(This is actually as of Dec 19, 2020)

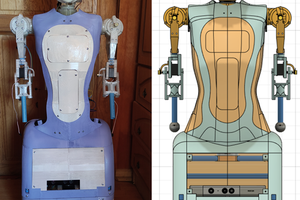

This shows the environment I created, which uses Python to combine OpenSCAD models, STEP file imports, and joint definitions, and exports a model ready for physics and motor-control simulation in Gazebo/ROS.

It was particularly exciting because this approach includes full inertial models for the entire robot, estimated from the components' STEP files.
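The log computes inertia from the STEP geometry. As a much cruder sketch of the output side, the helper below approximates a part as a solid box of uniform density and renders the URDF `<inertial>` element used by ROS/Gazebo. The box approximation and the function names are assumptions for illustration. The inertia formula is the standard one for a solid cuboid about its centroid.

```python
def box_inertia(mass, sx, sy, sz):
    """Principal moments of inertia [kg*m^2] of a solid, uniform box about its centroid."""
    ixx = mass * (sy ** 2 + sz ** 2) / 12.0
    iyy = mass * (sx ** 2 + sz ** 2) / 12.0
    izz = mass * (sx ** 2 + sy ** 2) / 12.0
    return ixx, iyy, izz


def urdf_inertial(mass, size, com=(0.0, 0.0, 0.0)):
    """Render a URDF <inertial> element; products of inertia are zero for an
    axis-aligned box."""
    ixx, iyy, izz = box_inertia(mass, *size)
    return (
        "<inertial>\n"
        f'  <origin xyz="{com[0]:g} {com[1]:g} {com[2]:g}" rpy="0 0 0"/>\n'
        f'  <mass value="{mass:g}"/>\n'
        f'  <inertia ixx="{ixx:.6g}" ixy="0" ixz="0" iyy="{iyy:.6g}" iyz="0" izz="{izz:.6g}"/>\n'
        "</inertial>"
    )


# Hypothetical 250 g part, 8 x 6 x 4 cm.
print(urdf_inertial(0.25, (0.08, 0.06, 0.04)))
```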

This shows a side profile of the robot at this stage, highlighting all points with their orientation axes in red, along with the connection points used to combine components (so that all calculations can be done in local frames of reference). It shows an initial design for the elemental's body segments, spine, servo motors, and wheels. The servo models come from the vendor's STEP files.
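The idea of giving every part connection points with their own orientation, so that each calculation happens in a local frame, can be illustrated with pose composition. This is a planar simplification (x, y, heading) of the full 3D transforms a CAD-to-simulation pipeline would use, and the poses below are made-up numbers.

```python
import math


def compose(parent, child):
    """Express a pose given in `parent`'s local frame in the frame `parent` lives in.

    Poses are planar (x, y, theta); a 2D simplification of full 3D transforms.
    """
    px, py, pth = parent
    cx, cy, cth = child
    c, s = math.cos(pth), math.sin(pth)
    return (px + c * cx - s * cy, py + s * cx + c * cy, pth + cth)


# Hypothetical chain: segment in the world, connector on the segment, point on the connector.
segment = (2.0, 0.0, 0.0)
connector = (0.1, 0.0, math.pi / 2)
point = (0.05, 0.0, 0.0)
print(compose(compose(segment, connector), point))  # approx. (2.1, 0.05, pi/2)
```

Because each part only stores poses relative to its own connection points, moving or rotating a parent automatically carries every child with it.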
