I've managed to bring up mapping & visual odometry in my ROS simulation of the love elemental. From the get-go, I knew I wanted to use a Kinect/RealSense sensor for perception, because a color + depth map is a rich space for doing interesting things, and the cost has fallen so much over the years.

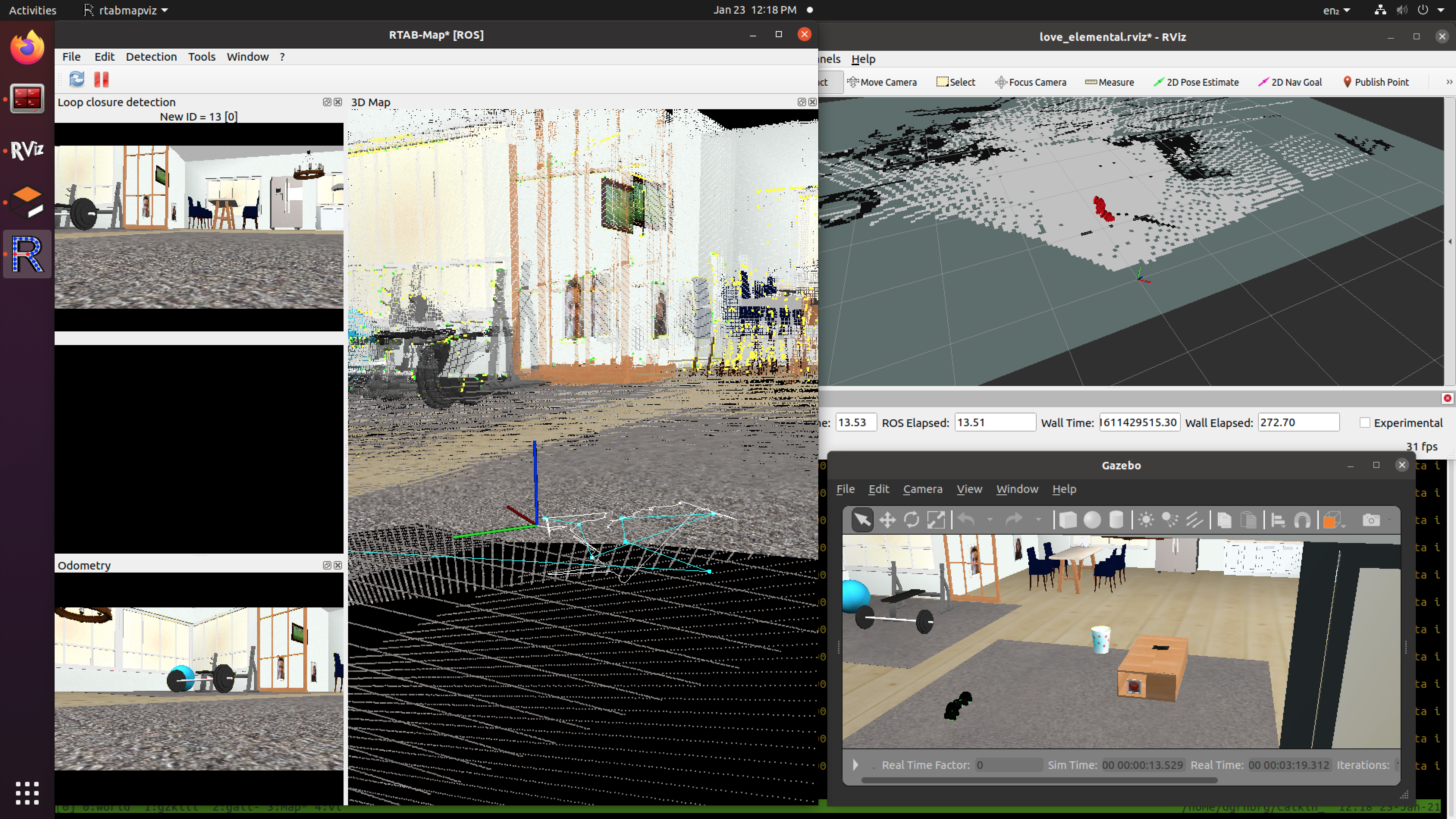

Before getting into the details, here's a screenshot of Rviz (only visualizing the robot's internal model state, top right), Gazebo (visualizing the actual simulation, bottom right), and Rtabmap (visualizing the map-making & SLAM model, left):

For navigation, I've known from the start that I'd use ROS Navigation, which is a mature and open navigation platform. To set up navigation for a robot, you need several things:

- odometry (where are you relative to where you were)

- pose estimation (how are the joints oriented & which way are you pointing)

- mapping (what's around you)

- a movement controller (how do you go forward or turn)

- path planning (how should I get from here to there)

Some of these are easier and some are harder. I'll now go into more detail about what I tried, what I learned, and how I got this working.

Odometry

For wheeled robots, odometry is easy, since you can measure distance traveled from the wheel encoders. For a serpent robot, it's not a well-studied problem. My initial gait controller R&D (see the prior log) had a way to do dead reckoning, but I've determined that wheel/ground slippage happens constantly, so I can't rely on dead reckoning or encoder odometry. So, I tried several packages to compute odometry.

First, I tried hector_slam, which uses a lidar scan and estimates the map & location simultaneously. As it turned out, hector_slam's scan alignment needs a very wide field of view to succeed, and since I was generating the scan from the depth camera (via the depthimage_to_laserscan ROS node), I could only get a laser scan with a narrow FOV. So, hector_slam would fail to map & align successfully.
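To put a number on "narrow", here's a rough sketch of the horizontal FOV you get when a laser scan is sliced out of a depth image, using the standard pinhole camera relation. The intrinsics below are hypothetical values for a 640-pixel-wide simulated camera, not the ones from my model.

```python
import math

def horizontal_fov_deg(image_width_px, fx_px):
    """Horizontal field of view of a pinhole camera, in degrees."""
    return math.degrees(2.0 * math.atan(image_width_px / (2.0 * fx_px)))

# Hypothetical intrinsics: 640 px wide image, focal length of 554.25 px
fov = horizontal_fov_deg(640, 554.25)
print(f"scan covers about {fov:.1f} degrees")
```

With those assumed numbers the scan spans about 60 degrees, which is far less than the sweep of a spinning lidar.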

Next, I found ORB-SLAM2, which takes a stream of monocular, stereo, or RGB-D images and tracks ORB features between frames to estimate the camera's pose (it also builds a sparse map as it goes). After I built ORB-SLAM2 and got it running on the love elemental simulation, it would lose tracking within a few hundred milliseconds, and then it would segfault. On to the next one!

Finally, I found rtabmap (RTAB-Map), which has a community of users and seems better supported. Thankfully, I also found this tutorial on setting up rtabmap with different sensor configurations, which helped me figure out a configuration that worked! Happily, rtabmap handles both mapping and visual odometry, which solves 2 of these problems.

Also, as an aside, if visual odometry really doesn't work out with rtabmap, you can always slap an Intel RealSense T265 tracking camera onto the robot and get something significantly more accurate than the open-source options. The downside is, of course, that this isn't good for the aesthetics I'm going for.

Pose Estimation

Odometry gives you one pose estimate, but typically you'll combine it with information from other sensors (like an IMU) to get a better pose estimate than any individual sensor provides. I found this example for pose estimation with rtabmap for RealSense, where they use an unscented Kalman filter to fuse visual odometry with the IMU. This is still in development for the simulation, since I'm finding that the simulated IMU gets bounced around a lot.
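To make the fusion idea concrete, here's a deliberately simplified sketch: a one-dimensional linear Kalman filter on yaw only, not the unscented filter from that example. The class name, noise values, and the choice of yaw as the only state are all my own assumptions for illustration. The gyro rate drives the prediction, and the visual odometry yaw corrects it.

```python
import math

class YawKalman:
    """1-D linear Kalman filter on yaw (a simplification, not a UKF)."""

    def __init__(self, yaw=0.0, var=1.0, gyro_noise=0.01, vo_noise=0.05):
        self.yaw = yaw        # current yaw estimate (rad)
        self.var = var        # variance of the estimate
        self.q = gyro_noise   # assumed process noise per second
        self.r = vo_noise     # assumed visual odometry measurement noise

    def predict(self, gyro_rate, dt):
        # Integrate the IMU yaw rate; uncertainty grows with time
        self.yaw += gyro_rate * dt
        self.var += self.q * dt

    def correct(self, vo_yaw):
        # Blend in the visual odometry yaw, weighted by relative confidence
        k = self.var / (self.var + self.r)
        # Wrap the innovation so a jump across +/-pi is handled correctly
        innov = math.atan2(math.sin(vo_yaw - self.yaw),
                           math.cos(vo_yaw - self.yaw))
        self.yaw += k * innov
        self.var *= (1.0 - k)
        return self.yaw
```

A noisy, bouncing IMU would be modeled here by raising `gyro_noise`, which shifts trust toward the visual odometry measurement.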

Movement Controller [in progress]

I still need to implement a movement controller, since the propagation manager isn't a good way to do movement. There are 2 parts to the movement controller I need to implement: the body alignment (i.e. which way is forward when you're slithering, since your head doesn't usually point forward), and how to generate movement (i.e. timing the movement of the joints).

For body alignment, this paper has a simple approach that decomposes the snake's body using PCA to find the principal axes of alignment. I'll port this to a ROS node, and have it broadcast a TF2 transform that allows odometry, mapping, and navigation to work in the aligned frame, and the transform will map from the aligned frame to the physical frame of the robot (and its sensors).
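As a rough illustration of the PCA idea (not the paper's exact method, and not yet a ROS node), here's a sketch that finds the first principal axis of the joint positions in the ground plane. I'm assuming the joints are given as 2D points ordered from tail to head; the function name is hypothetical.

```python
import math

def principal_axis(points):
    """Return (centroid, heading) for (x, y) joint positions, tail to head.

    heading is the angle in radians of the first principal axis, i.e. the
    direction of greatest variance of the joint positions.
    """
    n = len(points)
    cx = sum(x for x, _ in points) / n
    cy = sum(y for _, y in points) / n
    sxx = sum((x - cx) ** 2 for x, _ in points) / n
    syy = sum((y - cy) ** 2 for _, y in points) / n
    sxy = sum((x - cx) * (y - cy) for x, y in points) / n
    # Closed-form angle of the dominant eigenvector of a 2x2 covariance
    heading = 0.5 * math.atan2(2.0 * sxy, sxx - syy)
    # A PCA axis has no sign, so flip it to point from tail toward head
    hx = points[-1][0] - points[0][0]
    hy = points[-1][1] - points[0][1]
    if hx * math.cos(heading) + hy * math.sin(heading) < 0:
        heading += math.pi
    # Normalize to (-pi, pi]
    return (cx, cy), math.atan2(math.sin(heading), math.cos(heading))
```

The centroid and heading together are what an aligned-frame transform would need: the centroid as the frame origin and the heading as its yaw.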

For movement, I've found 3 approaches that seem reasonable. The least complex is the Hirose serpentine curve, as expanded upon in this paper. This is very simple to implement, but changing between gaits (i.e. forward fast vs slow, or straight vs turn) will require a gradual transition in the controller parameters to keep the motion looking smooth. The next, more complex approach uses CPGs (central pattern generators, which are bio-inspired). This approach handles the environment resisting the gait through dynamic feedback adaptation, but it requires a bit more coding, since it depends on estimating several meta-parameters, and it uses quite a bit more calculus and numerical integration. The final approach uses MPC (model predictive control, where you run simulations of the movement into the future given the robot's dynamical model). This approach is probably the best for gait control, but I'll need to really understand that paper to recreate their dynamical model, and I'll need to write a new optimizer that's fast enough to run on the love elemental. Luckily, I happen to have some expertise in optimizer implementation; however, I'll only take this approach if I can connect with that MPC research group and collaborate, since this is a hobby for me ;).
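For the simplest of the three, here's a minimal sketch of the serpentine (serpenoid) gait in its commonly used discrete form, where each joint follows a sine wave that lags its neighbor by a fixed phase. The function and parameter names are my own, and the rate-limited `blend` helper is just one assumed way to do the gradual parameter transition mentioned above.

```python
import math

def serpenoid_angles(t, n_joints, amplitude, omega, phase_step, offset=0.0):
    """Joint angles (rad) at time t for a serpenoid gait.

    amplitude  : peak joint angle
    omega      : temporal frequency (rad/s), sets how fast the wave travels
    phase_step : phase lag between adjacent joints (rad)
    offset     : constant bias added to every joint, which curves the path
    """
    return [amplitude * math.sin(omega * t + i * phase_step) + offset
            for i in range(n_joints)]

def blend(current, target, rate, dt):
    """Move a gait parameter toward its target by at most rate * dt."""
    step = rate * dt
    delta = target - current
    if abs(delta) <= step:
        return target
    return current + math.copysign(step, delta)
```

Calling `blend` on `amplitude`, `omega`, and `offset` every control tick, instead of jumping straight to the new values, keeps the joint commands continuous when switching between straight and turning gaits.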

Path Planning [next to do]

In ROS, move_base is the module that takes a map, odometry, and a goal position, and computes a plan & commands to move from the current location to the goal position. The default planner for move_base assumes that robots can pivot in place (like differential drive), but serpents act more like Ackermann-drive robots (like a car with a steering wheel, they have a minimum turning radius). I found the TEB local planner, which has support for a minimum turning radius and robot collision footprints, so luckily it looks like this project will work out. Once I have the alignment implemented, I'll be able to use the aligned principal axis as a line collision model for the robot, with the collision radius set by the amplitude of the slithering curves.
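Here's a sketch of how that line collision model could be turned into planner parameters. The parameter names follow my understanding of teb_local_planner's `footprint_model`, `min_obstacle_dist`, and `min_turning_radius` settings, so check them against the planner's documentation before relying on them. The helper name, the centering of the line on the aligned frame's origin, and the example numbers are all assumptions.

```python
def line_footprint_params(body_length, amplitude, min_turning_radius):
    """Build a parameter dict for a line footprint along the aligned x axis.

    body_length        : extent of the body along the principal axis (m)
    amplitude          : lateral amplitude of the slithering curve (m),
                         used as the clearance kept around the line
    min_turning_radius : tightest turn the gait can make (m)
    """
    half = body_length / 2.0
    return {
        "TebLocalPlannerROS": {
            "footprint_model": {
                "type": "line",
                "line_start": [-half, 0.0],  # tail end, in the aligned frame
                "line_end": [half, 0.0],     # head end, in the aligned frame
            },
            "min_obstacle_dist": amplitude,
            "min_turning_radius": min_turning_radius,
        }
    }

# Hypothetical dimensions, for illustration only
params = line_footprint_params(body_length=1.0, amplitude=0.15,
                               min_turning_radius=0.4)
```

The resulting dict could be dumped to YAML and loaded into move_base's parameter namespace.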

I totally left out all the experimentation I did to find a high-quality simulation model for the realsense camera, but the working models will be available when I release the code & artifacts.

So, that's an update on the very exciting progress for the love elemental. At this point, I may need to return to the low-level machine-learned actuation controllers to get better & smoother motion plans for the spinal joint, so that the gait controllers will be smoother, so that the path planner can be smoother. Additionally, I hope some of this research into ROS navigation will be useful for people building other non-wheeled robots.

David Greenberg
