I decided to use a WS2813 strip and the ATMega32u4-based Arduino Pro Micro. The Pro Micro is good for a couple of reasons - the Chinese clones are cheap and, presumably, it has stable serial communication with OK throughput since the ATMega32u4 can talk to USB directly - although I mainly picked it just because I had it on hand. Since the ATMega32u4 would be lucky to calculate the first 100 digits of pi by the end of next year, all the processing has to be done on the PC, which means the PC software needs to be fast without hogging resources.

The software

For the prototype, I used Python with NumPy to handle all the calculations and mss to capture the screen. I divided the edge of the screen more or less evenly (rounding the couple pixels of division error) into cells with some pre-determined height.
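To make the "more or less evenly" part concrete, here is a minimal sketch of one way to split an edge into cells. The function name `split_edge` and the choice of 7 cells are made up for illustration, not taken from the prototype. Integer division spreads the leftover pixels so that cell sizes differ by at most one pixel.

```python
def split_edge(length, n_cells):
    """Divide `length` pixels into `n_cells` contiguous (start, stop) ranges.

    Integer division spreads the rounding error, so any two cells
    differ in size by at most one pixel.
    """
    bounds = [i * length // n_cells for i in range(n_cells + 1)]
    return list(zip(bounds[:-1], bounds[1:]))


# Hypothetical example: 7 cells along the top edge of a 1920 px wide screen.
cells = split_edge(1920, 7)
print(cells)
```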

In the corners, in theory (if the LED strip is laid out evenly), 2 LEDs are responsible for the same cell, and I could've come up with a neat solution of how to split the corner evenly between the 2 LEDs, but I said fuck it and just let the top and bottom LEDs take the corner and assumed that the side LEDs are offset by a bit (which, in practice, they were because of the wires connecting the LED strip segments).

I have color data in cells, but now how do I process the data to get a single color?

First I experimented with clustering algorithms. I attempted to use K-Means from the SciPy module, which did work, but it was sooooooo slooooooooow. It only managed to calculate 2 frames per second with 100 cells (all benchmarks were done on a 1920x1080 display). After some further research, I decided to not bother with anything fancy (at least for the first attempt) and go with the good ol' mean value.
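As a rough illustration of the mean-value approach, here is a sketch with NumPy. It assumes the captured frame has already been converted to an (H, W, 3) `uint8` array (a raw screen capture may come in a different channel order). The `cell_mean` helper and the synthetic frame are illustrative and not the prototype's actual code.

```python
import numpy as np


def cell_mean(frame, top, left, height, width):
    """Mean color of one rectangular cell of an (H, W, 3) uint8 frame."""
    cell = frame[top:top + height, left:left + width]
    # Flatten the two pixel axes and average them, keeping the channel axis.
    return cell.reshape(-1, 3).mean(axis=0).round().astype(np.uint8)


# Synthetic 1080p frame with one colored patch in the top-left corner.
frame = np.zeros((1080, 1920, 3), dtype=np.uint8)
frame[:40, :96] = (200, 100, 50)
print(cell_mean(frame, 0, 0, 40, 96))
```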

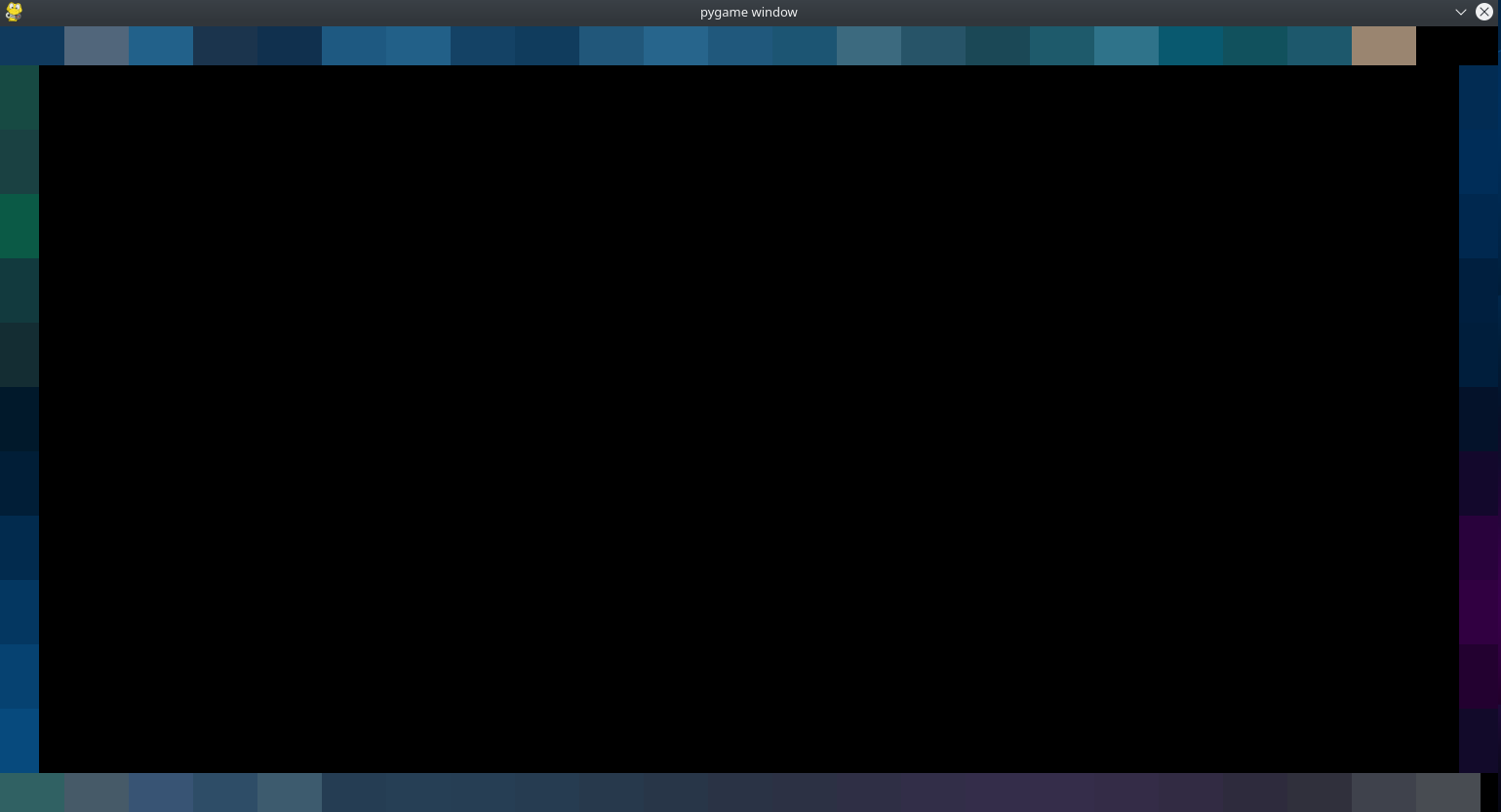

Instant improvement. It was getting a solid 50 FPS with 100 cells. But then I thought - why stop there? After splitting the code into 2 parts and rewriting the calculations using Cython, it was already doing around 100 FPS. Granted, that didn't include transmitting the data to the hardware, but it was a good sign. To verify that the code was actually doing its job, I wrote a quick and dirty function that rendered the output of each frame using Pygame.

Success.

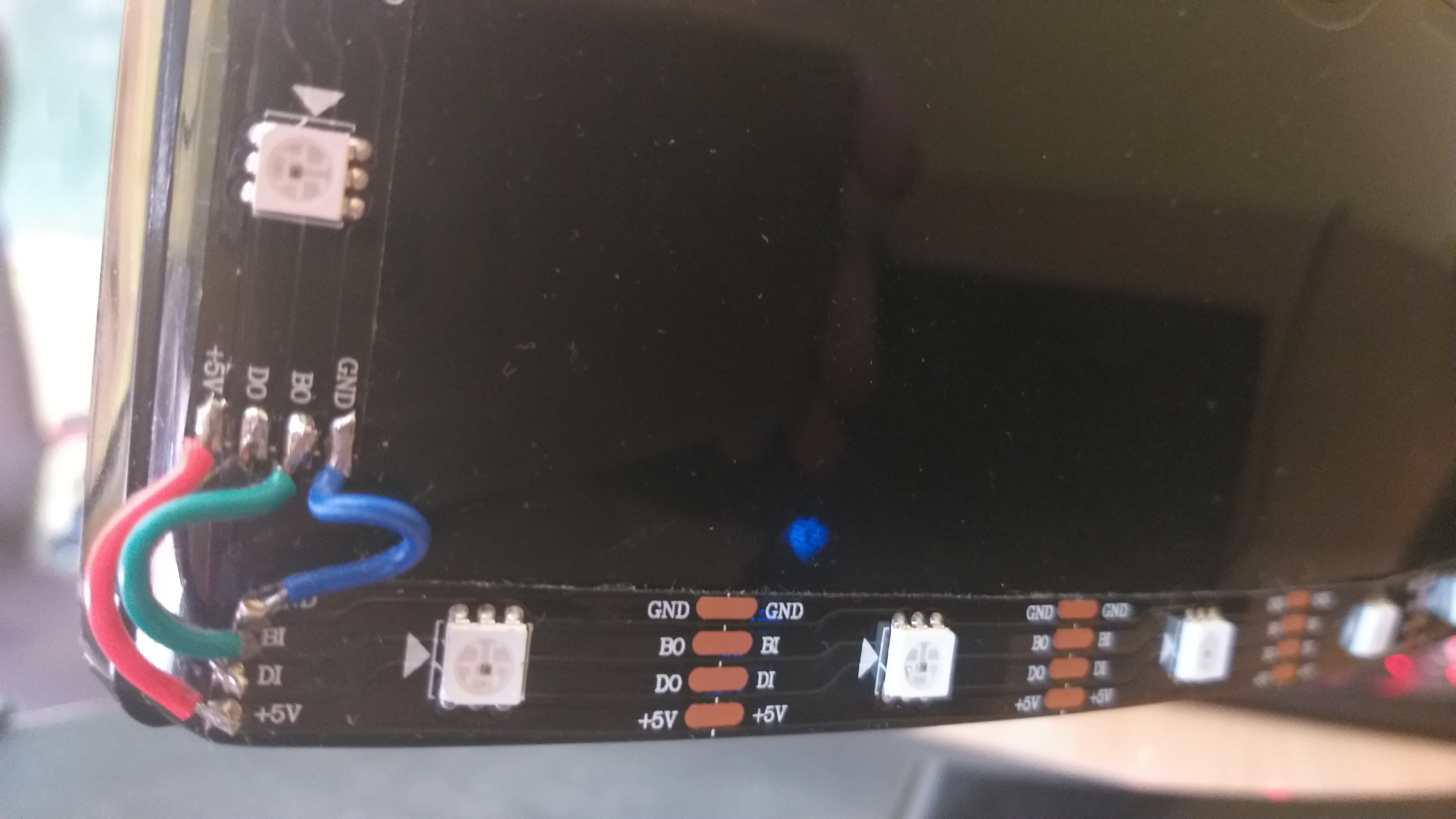

The hardware

I put together the LED controller and wrote some simple code that would listen for data on the serial port in the format

{Cell #} {R} {G} {B}

There I ran into an issue - I was changing a single LED and then refreshing the entire strip of LEDs to make the updated color visible... approximately 80 times per frame, which, it turns out, is quite performance intensive. I ended up reserving LED address 255 and made the uC update the entire LED strip only when it receives FF as the first parameter (Cell #). Now the PC client just sends FF at the end of each frame.
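The PC side of that protocol could look something like the sketch below. The wire encoding is an assumption: the post only gives the field order, so this packs each message as 4 raw bytes and pads the FF commit message with three zero bytes to keep every message the same length. `encode_frame` is a made-up helper name.

```python
COMMIT = 0xFF  # reserved cell index: "refresh the whole strip now"


def encode_frame(colors):
    """Pack {cell_index: (r, g, b)} into 4-byte messages plus a final commit.

    Assumed layout per message: cell, R, G, B as one byte each.
    """
    out = bytearray()
    for cell, (r, g, b) in sorted(colors.items()):
        if not 0 <= cell < COMMIT:
            raise ValueError(f"cell index {cell} collides with the commit marker")
        out += bytes((cell, r, g, b))
    # The zero padding after FF is an assumption, not something the post states.
    out += bytes((COMMIT, 0, 0, 0))
    return bytes(out)


payload = encode_frame({1: (10, 20, 30), 0: (255, 0, 0)})
print(payload.hex(" "))
```

With pySerial, the resulting bytes could then be written in one call, e.g. `serial.Serial(port, baudrate).write(payload)`, where the port name and baud rate depend on the setup.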

At this point, I couldn't tell if it was updating any slower than the framerate my Python code was outputting, so, as far as I could tell, problem solved?

Making the software interact with the hardware

After calculating the entire frame, the code sent each cell's color value to the uC using pySerial. What surprised me is that it worked... first try (more or less). Simply godlike. But there were issues.

The first problem - performance. When sending data to the uC, the performance decreased to a plebeian 40 FPS. I did a bit of optimization: if a cell is very dark, the code just assumes it is black and skips the rest of the calculations, and if a cell has a similar value to the one it had in the previous frame, the code doesn't bother sending over the new color. In the end, this increased the performance to an acceptable 60 FPS.
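Both shortcuts can be sketched in a few lines. The thresholds and the `changed_cells` helper are hypothetical, and for simplicity the dark check here runs on an already computed color, whereas the prototype used it to skip the calculation itself. Each cell is compared against the last color that was actually sent, so slow fades still get through eventually.

```python
DARK_THRESHOLD = 12  # hypothetical: all channels at or below this count as black
MIN_CHANGE = 6       # hypothetical: smaller per-channel changes are not sent


def changed_cells(current, last_sent):
    """Return {cell: color} for cells worth resending; updates `last_sent`."""
    updates = {}
    for cell, color in current.items():
        if max(color) <= DARK_THRESHOLD:
            color = (0, 0, 0)  # very dark cell: treat as black
        old = last_sent.get(cell)
        if old is None or max(abs(a - b) for a, b in zip(color, old)) >= MIN_CHANGE:
            updates[cell] = color
            last_sent[cell] = color
    return updates


last_sent = {}
print(changed_cells({0: (5, 5, 5), 1: (100, 100, 100)}, last_sent))
print(changed_cells({0: (8, 3, 1), 1: (103, 100, 100)}, last_sent))
```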

The second problem - some cells were getting a weird color. It turned out I was getting (and silencing) a division by zero error when calculating the mean color in a very dark cell because the code would discard any very dark pixels for a reason only God knows. Instead of spending 10 minutes figuring out why I did what I did, I just made the software assume the cell is black if it gets a division by zero error. Problem solved (almost) :).
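The failure mode is easy to reproduce: if every pixel in a cell is discarded as too dark, the mean is taken over zero pixels. A sketch of the "assume black" fallback, using an explicit check instead of catching the error, might look like this. The function name and the threshold value are assumptions.

```python
import numpy as np


def mean_ignoring_dark(cell, dark_threshold=16):
    """Mean color of the non-dark pixels in a cell, or black if none remain."""
    pixels = cell.reshape(-1, 3)
    # A pixel is kept only if its brightest channel exceeds the threshold.
    keep = pixels.max(axis=1) > dark_threshold
    if not keep.any():
        # Every pixel was discarded, so a mean would divide by zero.
        return (0, 0, 0)
    r, g, b = pixels[keep].mean(axis=0).round()
    return (int(r), int(g), int(b))


dark_cell = np.zeros((4, 4, 3), dtype=np.uint8)
print(mean_ignoring_dark(dark_cell))
```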

Conclusion

After tinkering with the prototype some more, I had more or less functional bias lighting.

However, it did still have a couple of issues.

- Very dark cells still break and get a strange color

- No way to adjust settings outside of the code

- CPU usage occasionally spiked with peaks of ~30% (on an Intel Pentium G4620)

- Cross-platform compatibility (breaks on Windows for some mysterious reason)

- The cell color could be a bit nicer (e.g. instead of taking a plain mean value, perhaps take a weighted mean, where the more saturated the color is, the higher the weight?)
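The saturation-weighted mean from the last point could be sketched as follows. This is only one possible reading of the idea: it uses HSV-style saturation as the weight and adds a small `floor` (a made-up parameter) so that fully grey cells still produce a valid average instead of dividing by zero.

```python
import numpy as np


def saturation_weighted_mean(cell, floor=0.05):
    """Mean color where more saturated pixels count for more."""
    pixels = cell.reshape(-1, 3).astype(np.float64)
    hi = pixels.max(axis=1)
    lo = pixels.min(axis=1)
    # HSV-style saturation in [0, 1]; black pixels get saturation 0.
    sat = np.divide(hi - lo, hi, out=np.zeros_like(hi), where=hi > 0)
    weights = sat + floor  # the floor keeps the weights from summing to zero
    mean = (pixels * weights[:, None]).sum(axis=0) / weights.sum()
    return tuple(int(v) for v in mean.round())


# Half pure red, half mid grey: the result leans strongly towards red.
cell = np.full((4, 4, 3), 128, dtype=np.uint8)
cell[:2] = (255, 0, 0)
print(saturation_weighted_mean(cell))
```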

For a prototype, it did its job nicely. However, for the next iteration, I'm planning on rewriting and de-spaghettifying the code, while also adding a nice GUI using Tkinter. I'm probably going to stick with Python + Cython because the performance seems to be good enough (and I'm not that good at C++ anyways). To improve performance, perhaps I could use GPU parallelization?

Kristiāns
