-

Introduction

06/27/2021 at 09:29

Ever since the first videos of Boston Dynamics’ creations started showing up on the internet, I have been fascinated with legged robots. Back in 2013 I created a quadruped robot. The project progressed until the robot could stand and do some very basic walking. But it turned out to be really difficult to develop proper walking gaits, and the servos seemed to struggle with the weight of the robot. The decision to go with four legs instead of six was mainly due to the cost of servo motors. However, six-legged robots have a big advantage: unlike quadrupeds, they can lift three of their legs while the remaining three form a stable tripod. This eliminates the need for constant weight shifting and balancing.

There are lots of hexapods out there, ranging from small research projects to commercially available kits. One that stood out to me from the beginning is Matt Denton’s µBug. Denton has been making hexapod robots since the early 2000s and has since perfected them to the point where they were even used in movie productions. Their organic, insect-like movements are something very few robots get right. Ever since I saw the videos of these robots, I have wanted to build one myself. While the µBug was once sold as a kit, the shop and forums have long gone offline. So I tried to find what was left of the documentation and decided to build my own hexapod from scratch.

-

A Walking Simulator

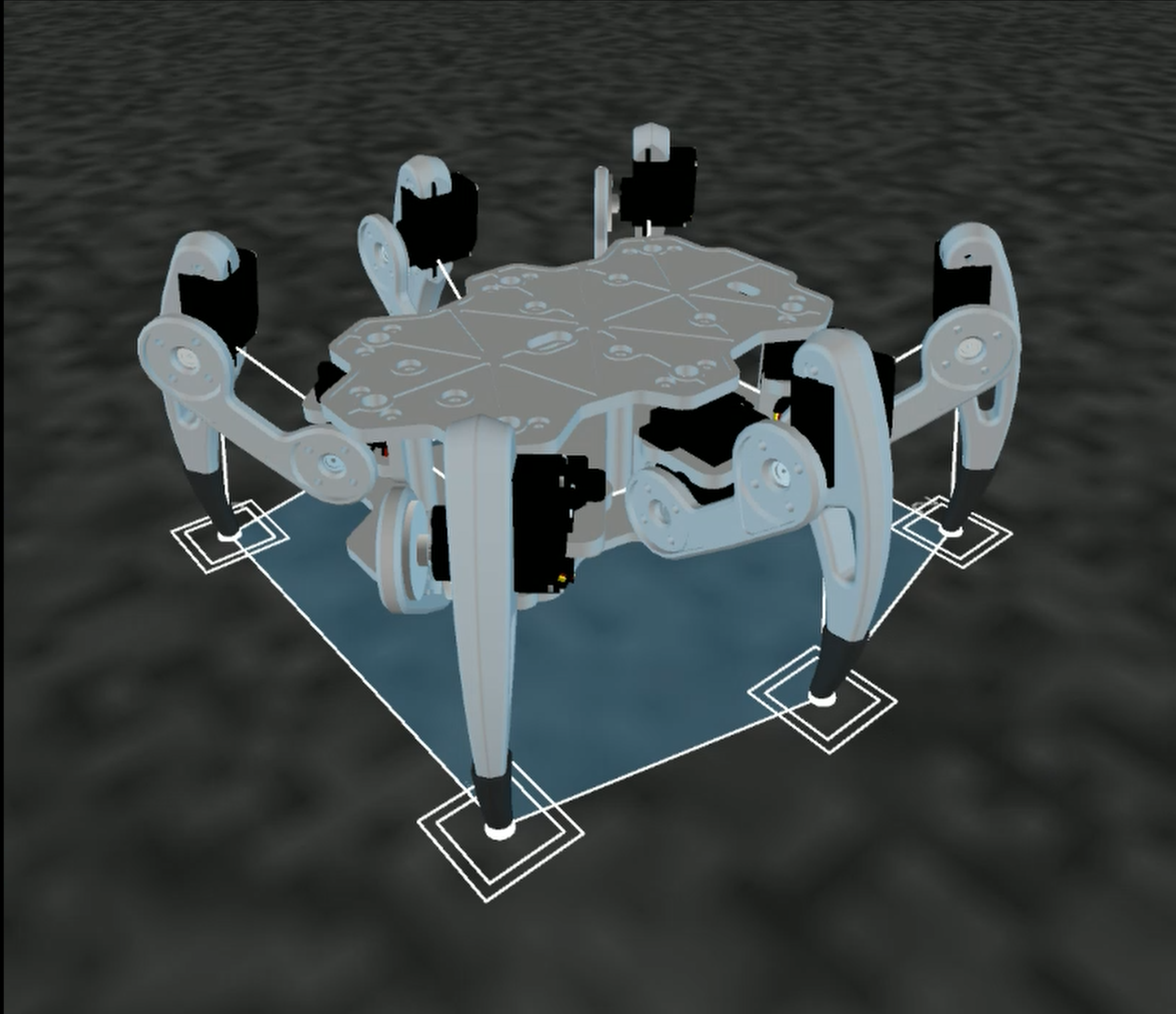

06/27/2021 at 09:29

One mistake I had made with my earlier quadruped robot was to build the hardware without knowing how the software could be done. For this project, I wanted to be absolutely sure that I could get the robot to walk before investing time and money into a physical prototype. The best way to do this is with a simulation. Instead of a specialized robotics simulator I decided to go with Processing again, which I had already used to create a simulator for my quadruped robot. Processing is a programming language and IDE very similar to Arduino, except that it is based on Java instead of C++. To me, one of the biggest benefits is that the languages are so similar that code developed for a Processing desktop application can be ported to Arduino with only minor modifications.

I started with a very simple animation of a robot’s leg moving, then added more and more complexity until the hexapod was moving properly. You can see a little supercut of the development process below:

![]()

The most difficult part was figuring out the locomotion. As the speed, direction and rotation of the movement are variables, the hexapod has to determine which legs to move to which position at a given time. My implementation of a locomotion engine works like this:

Each leg has three coordinates: a default position, a starting position and its current position. The default is the coordinate that the leg will try to return to. With every leg at this default position, the robot is in a neutral pose. When the hexapod starts to walk, its legs first move opposite to the body. This means shifting the starting and current position while the legs are on the ground. Obviously, without the legs taking a step they will exceed their range of motion very quickly. To determine which legs to lift, a gait sequence is used. This is nothing more than a lookup table that says which leg can take a step at what time in the sequence. If it is a leg’s turn to step, the starting coordinate is frozen. The leg now follows a path from the start coordinate towards the default position until it has reached its target. The step height is determined by the progression along that path, making the leg move in the shape of an inverted parabola. As the code loops through the gait sequence, each leg tries to catch up with the body’s movement by reaching for its default position.
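To make the description above a bit more concrete, here is a minimal Python sketch of such a gait loop. It is not the code from the simulator: the tripod lookup table, `SWING_TICKS`, `STEP_HEIGHT` and all class and function names are assumptions chosen for illustration, and body rotation is left out.

```python
from dataclasses import dataclass

# Hypothetical tripod gait: GAIT[phase] lists the legs allowed to step.
GAIT = [(0, 2, 4), (1, 3, 5)]
SWING_TICKS = 10     # ticks one step takes (assumed value)
STEP_HEIGHT = 20.0   # peak foot height in mm (assumed value)


@dataclass
class Leg:
    default: tuple           # neutral foot position (x, y)
    start: tuple = None      # position where the current step began
    current: tuple = None    # position the foot is at right now
    height: float = 0.0      # foot height above the ground
    lifted: bool = False
    swing_tick: int = 0

    def __post_init__(self):
        self.start = self.current = self.default


def tick(legs, t, vx, vy):
    """Advance all legs by one time step for a body velocity (vx, vy) per tick."""
    phase = (t // SWING_TICKS) % len(GAIT)
    phase_begins = t % SWING_TICKS == 0
    for index, leg in enumerate(legs):
        if not leg.lifted and phase_begins and index in GAIT[phase]:
            # It is this leg's turn: its start coordinate is frozen from now on.
            leg.lifted, leg.swing_tick = True, 0
        if not leg.lifted:
            # On the ground: the foot moves opposite to the body.
            leg.start = (leg.start[0] - vx, leg.start[1] - vy)
            leg.current = leg.start
        else:
            # In the air: travel from the frozen start towards the default.
            leg.swing_tick += 1
            p = leg.swing_tick / SWING_TICKS
            leg.current = (leg.start[0] + (leg.default[0] - leg.start[0]) * p,
                           leg.start[1] + (leg.default[1] - leg.start[1]) * p)
            leg.height = 4.0 * STEP_HEIGHT * p * (1.0 - p)  # inverted parabola
            if leg.swing_tick == SWING_TICKS:
                # Touch down at the default position.
                leg.start = leg.current = leg.default
                leg.height = 0.0
                leg.lifted = False
```

Calling `tick(legs, t, vx, vy)` once per frame with an increasing `t` keeps three feet on the ground at all times, and no foot drifts further from its default position than the distance the body covers during one step.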


This is the simplest approach I could come up with, and it’s enough to make the robot move quite smoothly. After developing this locomotion function, the rest is fairly easy to implement. To make the hexapod shift and rotate its body while standing in place, the leg coordinates get translated/rotated around the body’s center point. To determine the angles for the leg joints, inverse kinematics are calculated. While this might sound complex, it’s just a set of basic trigonometric equations that have to be evaluated for each leg.
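As an illustration of what those equations can look like, here is a sketch of the inverse kinematics for one three-joint leg (hip rotation, upper leg, lower leg), together with the matching forward kinematics as a sanity check. The segment lengths and all names are assumptions and not taken from the actual robot. The foot position is given relative to the hip joint, with negative `z` pointing down.

```python
import math

# Assumed segment lengths in mm (hypothetical, not the real dimensions).
COXA, FEMUR, TIBIA = 20.0, 40.0, 60.0


def _clamp(value):
    # Guard acos() against tiny floating point overshoots.
    return max(-1.0, min(1.0, value))


def leg_ik(x, y, z):
    """Return (hip, femur, knee) angles in radians for a foot at (x, y, z)."""
    hip = math.atan2(y, x)
    reach = math.hypot(x, y) - COXA      # horizontal distance past the hip link
    d = math.hypot(reach, z)             # femur joint to foot, straight line
    if not abs(FEMUR - TIBIA) < d < FEMUR + TIBIA:
        raise ValueError("foot position is out of reach")
    # Law of cosines in the triangle femur joint / knee / foot.
    inner = math.acos(_clamp((FEMUR**2 + d**2 - TIBIA**2) / (2 * FEMUR * d)))
    femur = math.atan2(z, reach) + inner  # femur elevation above horizontal
    knee = math.acos(_clamp((FEMUR**2 + TIBIA**2 - d**2) / (2 * FEMUR * TIBIA)))
    return hip, femur, knee


def leg_fk(hip, femur, knee):
    """Forward kinematics, used here only to check the IK result."""
    tibia_dir = femur - (math.pi - knee)  # direction of the lower leg
    r = COXA + FEMUR * math.cos(femur) + TIBIA * math.cos(tibia_dir)
    z = FEMUR * math.sin(femur) + TIBIA * math.sin(tibia_dir)
    return r * math.cos(hip), r * math.sin(hip), z
```

Feeding the result of `leg_ik(60.0, 20.0, -40.0)` back into `leg_fk` should reproduce the original foot position. On the real robot, each angle would additionally need an offset and direction that depend on how the servo is mounted.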

For the simulator, Processing’s 3D rendering is used to display the hexapod either as a wire mesh or with its later CAD files. Controlling the robot is done via mouse and keyboard. This was more than enough to get a sense of what the walking would look like without the need for any hardware.


-

Moving on to Hardware

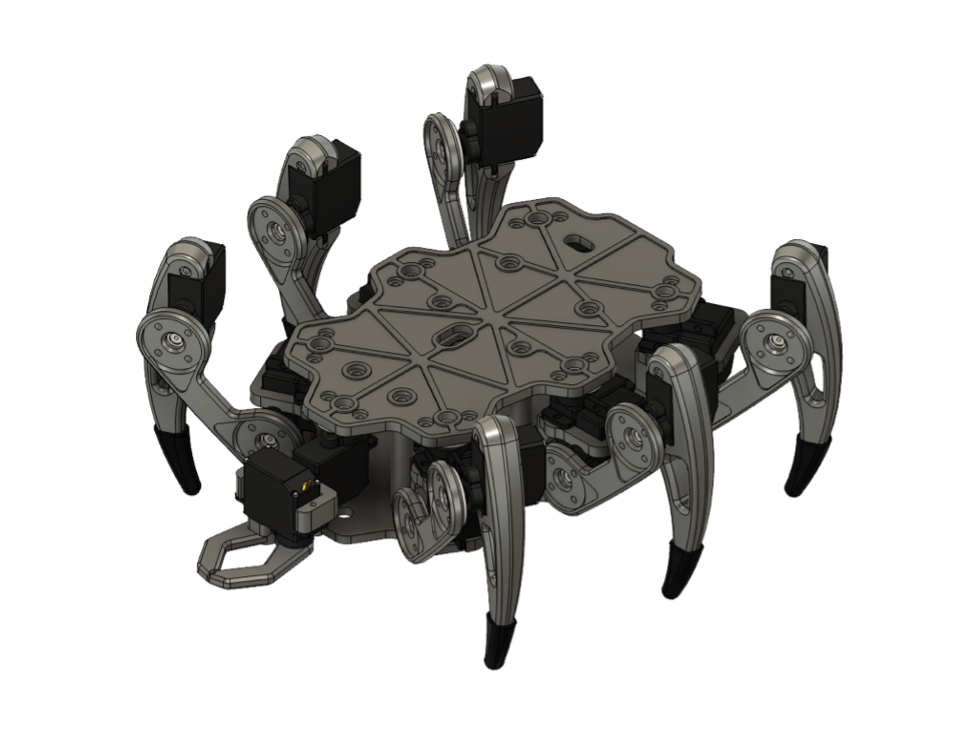

06/27/2021 at 09:30

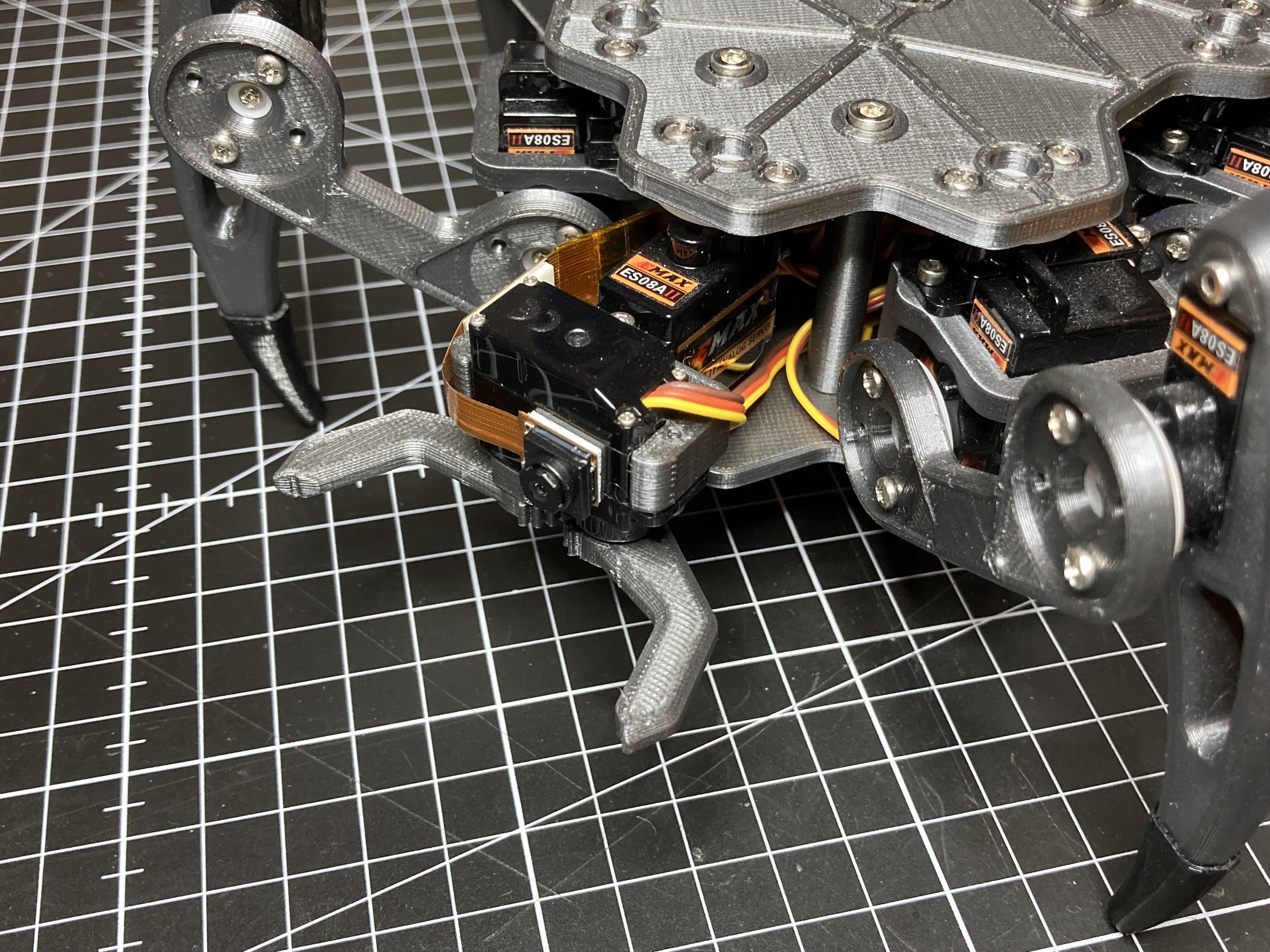

With the simulation done it was time to move on to the physical hexapod robot. I started designing parts for a single leg and searched for fitting servo motors. Since there are 18 servos needed for this hexapod, they define the total cost of the robot. I settled for some cheap Emax ES08A II micro servos. They are quite powerful for their size and their plastic gears seem to be holding up well so far. I paid around 80€ for the entire set of servos. When using proper smart servos for robotics, a single unit can cost this much.

Although they are “micro”, these servos can draw a lot of current. I was planning to use a lithium battery at first but later switched to NiMH. The huge advantage is that five 1.2V NiMH cells add up nicely to the 6V that the servos need. A lithium battery requires a voltage regulator, and with the high current demand that just complicates the project unnecessarily.

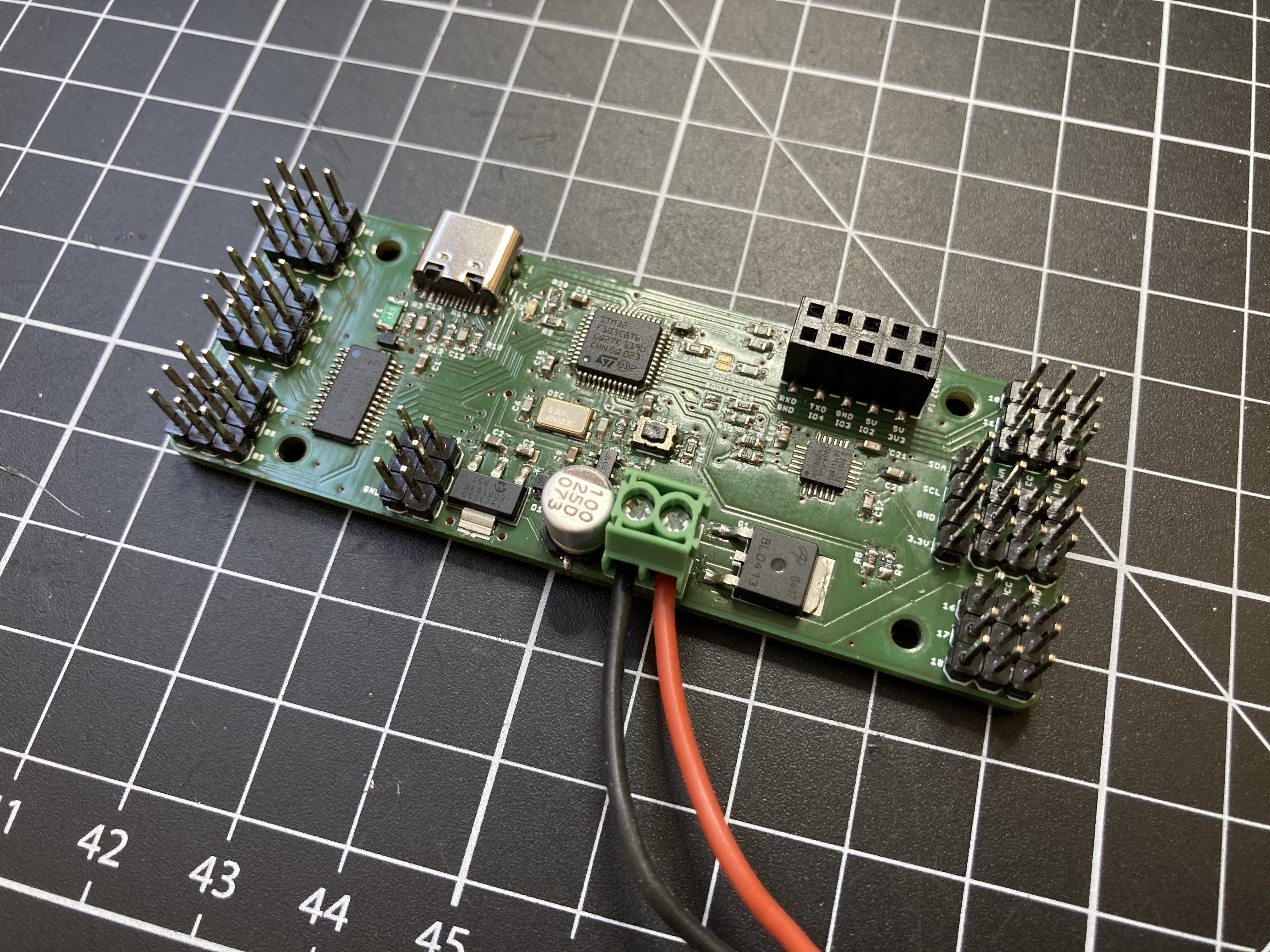

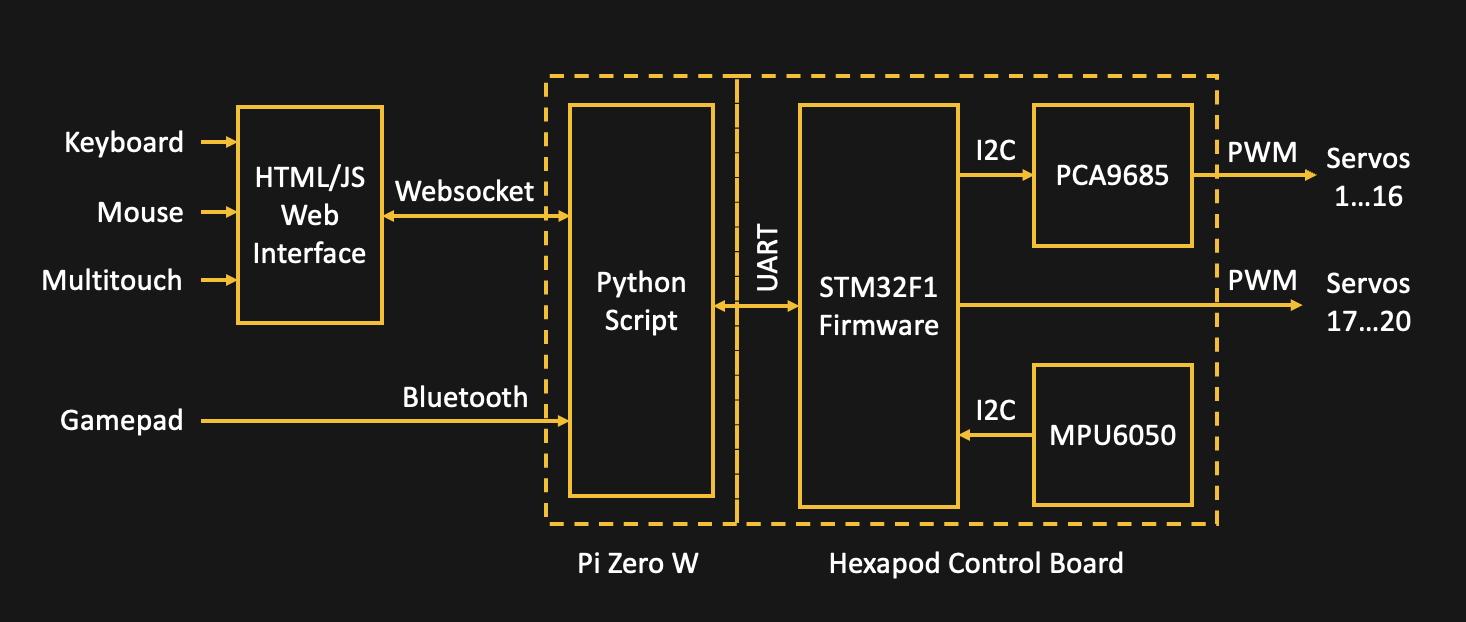

To drive the servos 18 PWM outputs are needed. At first, I tried to find a microcontroller with enough IO to connect the servos directly. The Teensy 4.0 might have been a good candidate for this. However, the robot has very limited space for electronics and the Teensy board just didn’t seem to fit right. The STM32F103 was the next best choice as it is Arduino-compatible and I had already gathered some experience with my macro keyboard. Because of the limited IO and timers, a PCA9685 PWM driver is used to control 16 servos while the remaining four can be handled by the STM32 itself. You can view the hexapod software running on a macro keyboard prototype below:

![]()

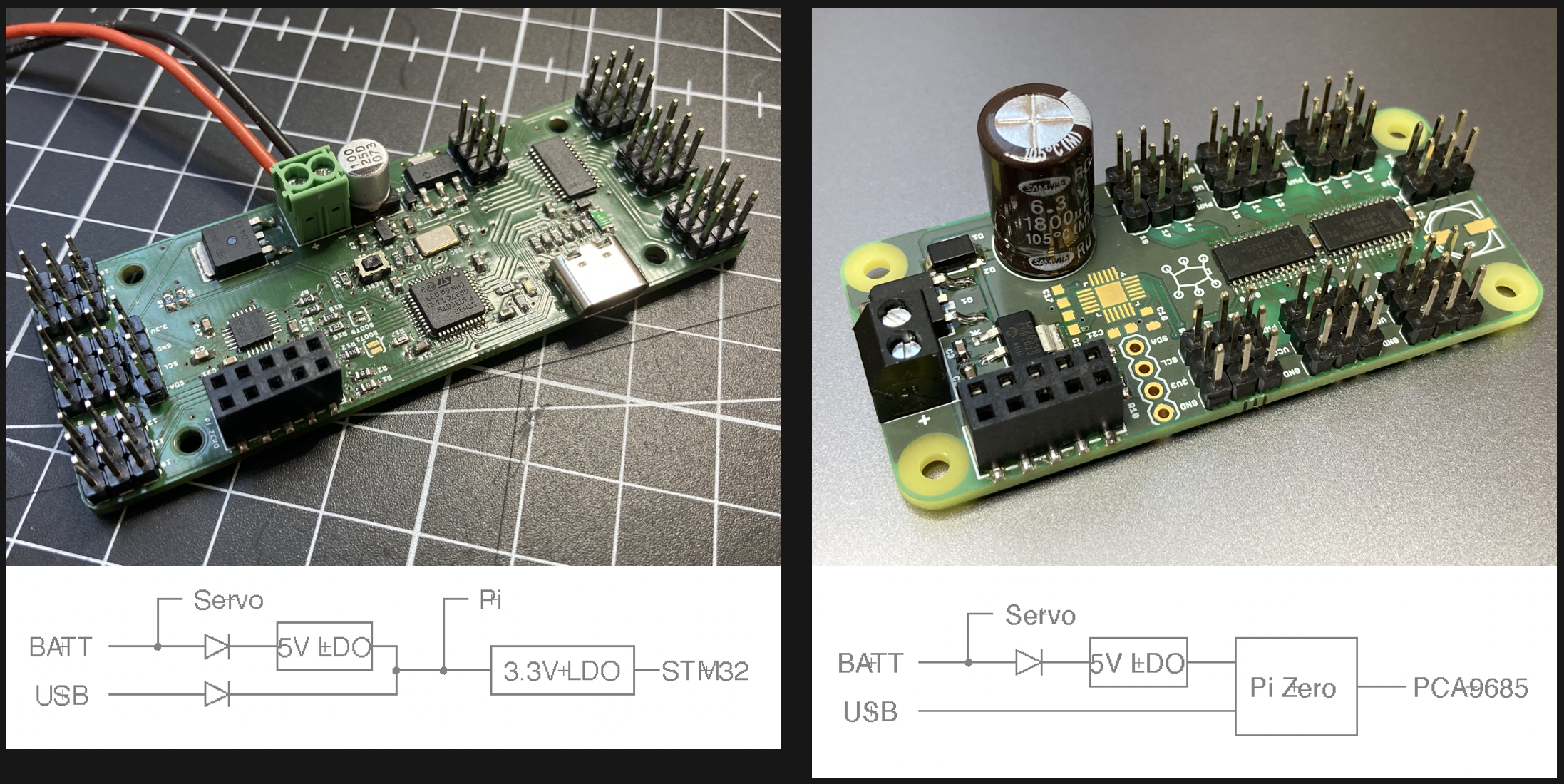

With the microcontroller selected, the robot still has no way to be controlled from the outside. To add wireless connectivity, I decided to include a Raspberry Pi Zero W. For my Pi Zero-based Zerobot robot I had already created software for a web interface, and I figured that adding a Bluetooth game controller should not be a problem. With the most important parts selected, I went on to create the PCB.

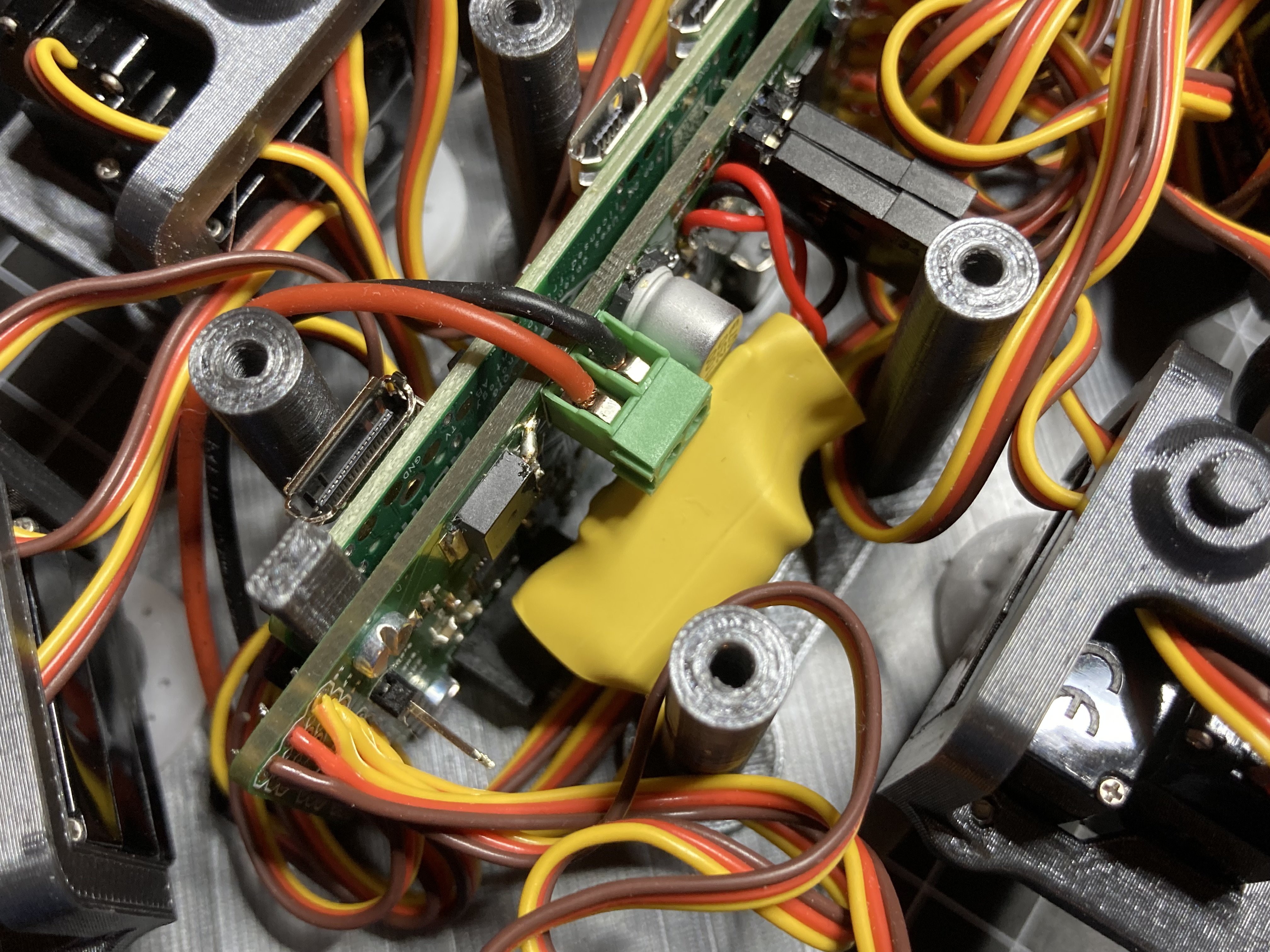

On the ZeroBug board, the microcontroller and Raspberry Pi are connected via UART. I am using a bottom-entry pin header, which allows for a very compact assembly without having to solder the Raspberry Pi. The STM32 can be programmed using its USB interface, while the Raspberry Pi uses its Wi-Fi connection for access via SSH or VNC Viewer.

Supplying the components with power was tricky, as I wanted to be able to supply the board from either a USB connection or the battery. This is done with a diode-OR circuit (see picture below): With only USB attached, the Pi is supplied with (almost) 5V, which it internally drops to 3.3V anyway. Additionally, the STM32 gets its own 3.3V regulator. When the battery is connected, it powers the servos directly through a simple reverse polarity protection. An additional 5V LDO supplies the Raspberry Pi and microcontroller. In practice this worked out great. Only with a very low battery might the Raspberry Pi reset during quick movements. For this reason I am measuring the battery voltage with the STM32.
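The arithmetic behind such a battery measurement is simple. The sketch below is written in Python for readability and assumes a 12-bit ADC reading referenced to 3.3V and a resistor divider in front of the ADC pin. The resistor values and the warning threshold are made up for illustration and are not taken from the ZeroBug schematic.

```python
ADC_MAX = 4095          # full-scale value of a 12-bit ADC reading
ADC_REF_V = 3.3         # ADC reference voltage
R_TOP = 20_000.0        # divider resistor to the battery (assumed value)
R_BOTTOM = 10_000.0     # divider resistor to ground (assumed value)
LOW_BATTERY_V = 5.5     # warning threshold (assumed value)


def adc_to_battery_voltage(raw):
    """Convert a raw ADC reading back to the voltage at the battery."""
    pin_voltage = raw / ADC_MAX * ADC_REF_V
    return pin_voltage * (R_TOP + R_BOTTOM) / R_BOTTOM


def battery_is_low(raw):
    return adc_to_battery_voltage(raw) < LOW_BATTERY_V
```

With these assumed values the divider scales the battery voltage down by a factor of three, so a fresh 6V pack stays well inside the ADC's input range.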

![]()

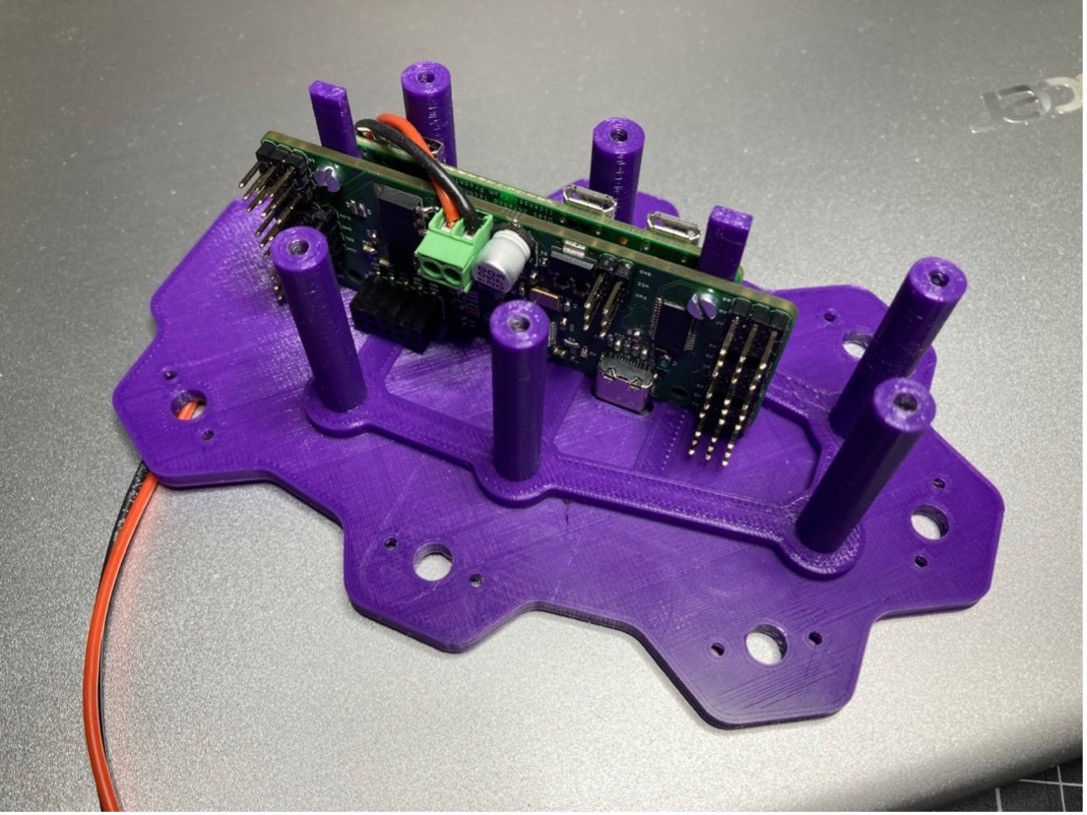

When orienting the parts, some aspects had to be considered: The USB socket had to be easily accessible. The servo connectors had to be positioned to minimize wire lengths. To get the Raspberry Pi’s antenna out of the way of the motors and the battery, I had to point it towards the ground. Finally, the camera connector is oriented to the front of the robot so a camera can be attached later. I overlooked how long the pin headers for the servos are; together with the servo plugs they are too big to fit into the robot. Because of that, the servo cables had to be soldered on later.


I managed to fit all the components on a two-layer board. There was even space left for an MPU6050 motion sensor and a connector for I2C OLED displays. The connector came in handy for testing, as an OLED screen can show the state of the legs without having to power the servos.


While I was waiting for the PCBs to arrive, I continued to design the 3D printed parts for the legs and body of the hexapod. I took the proportions of Matt Denton’s µBug as a starting point. It turned out that I could barely shave off a few millimeters without compromising on the movement range of the legs. All the mechanical parts were designed in Fusion 360 and can be printed with minimal supports. To prevent slipping, I made some flexible TPU socks that can be snapped onto the legs. I also added an optional claw to the front of the robot. This space was originally intended for a camera, but moving objects with the claw is much more fun than a shaky camera stream.


-

Combining Hardware and Software

06/27/2021 at 09:30

Porting the locomotion code from the Processing simulation to the STM32 was relatively simple. Some safety checks had to be added to prevent the robot from accidentally crashing its legs into each other. The microcontroller manages the calculation for the locomotion, rotation, IK and everything else in just under 20ms. Surely some optimization is possible to make it run even smoother.

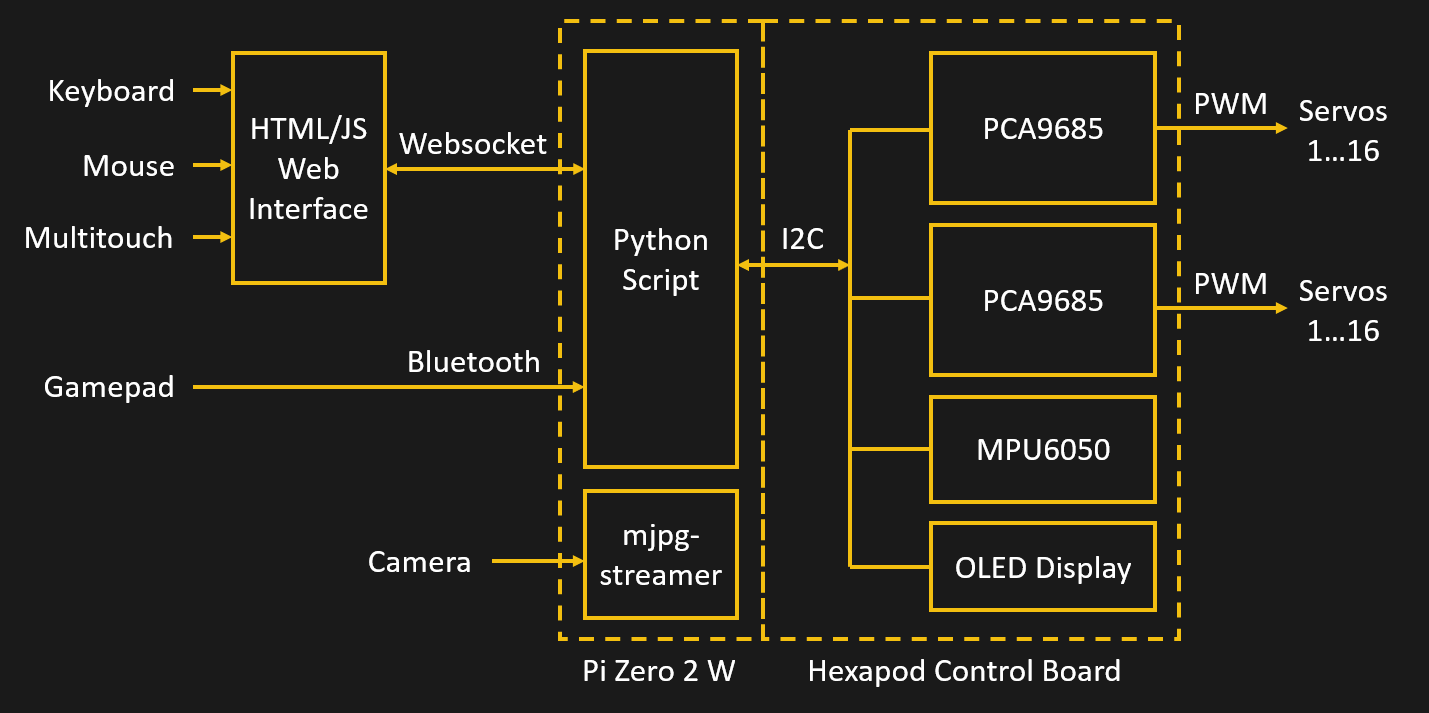

I wrote a simple protocol for controlling the STM32 over UART. This protocol can be used to receive commands either from USB or from the Raspberry Pi Zero. The Raspberry Pi runs a Python script that handles the WebSocket communication, UART and pygame. As soon as a client connects to the Pi, a website is loaded that can be used to control the hexapod with keyboard, mouse or touch inputs. Pygame allows for a Bluetooth connection with a game controller. The Raspberry Pi translates the commands and sends them via serial to the STM32. The Pi also sends a periodic heartbeat signal, so the STM32 can stop the servos in case the Raspberry Pi freezes up.
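The heartbeat idea boils down to a small watchdog on the receiving side. The following sketch shows the logic in Python for readability; the class name, the method names and the 0.5 second timeout are assumptions, and the actual firmware may handle this differently.

```python
class HeartbeatWatchdog:
    """Tracks whether a heartbeat has arrived recently enough."""

    def __init__(self, timeout_s=0.5):   # timeout is an assumed value
        self.timeout_s = timeout_s
        self.last_beat = None            # no heartbeat received yet

    def beat(self, now):
        """Call whenever a heartbeat message arrives."""
        self.last_beat = now

    def servos_enabled(self, now):
        """Servos may only move while heartbeats keep arriving in time."""
        if self.last_beat is None:
            return False
        return now - self.last_beat <= self.timeout_s
```

The main loop would call `beat()` for every heartbeat it receives and check `servos_enabled()` before updating the servo outputs. Starting in the disabled state means the robot stays still until the Pi has finished booting.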


This combination of Raspberry Pi and microcontroller works really well. Controlling the hexapod is a lot of fun, especially with an Xbox controller. You can see a video below of how the controller is mapped to the hexapod axes:

Overall, I am happy with how this project turned out. The hexapod walks like I wanted it to and can be controlled with surprising precision. The development didn’t always go as smoothly as it might appear from reading these project logs. I actually started working on a simulator in 2014 and shelved the whole project out of frustration, only to dig it up a few years later. It was only at the start of this year that it really clicked and I got the motivation to go through with the project. In retrospect, the ESP32 might have been a possible replacement for both the Raspberry Pi and the STM32. Performance and compatibility with game controllers might be an issue though.

-

Upgrading to the Raspberry Pi Zero 2

11/20/2021 at 22:15

While I appreciated the small form factor, the performance and boot times of the Raspberry Pi Zero had always bothered me. When the upgraded Raspberry Pi Zero 2 was announced a few weeks ago, I immediately knew it would be perfect for my hexapod. Not only would it speed up the boot time, it would also enable some new features that were not possible before.

The Raspi Zero 2 now features the same quad-core CPU as the Raspberry Pi 3, just under-clocked to 1GHz. Even with the cores slowed down, the new board draws quite a lot more power. Tom’s Hardware tested the power consumption in their early review: https://www.tomshardware.com/reviews/raspberry-pi-zero-2-w-review. Under stress, the current draw rises from 260mA to 370mA. This meant that although the dimensions and ports stayed the same, I still had to make some changes to accommodate the new single board computer.

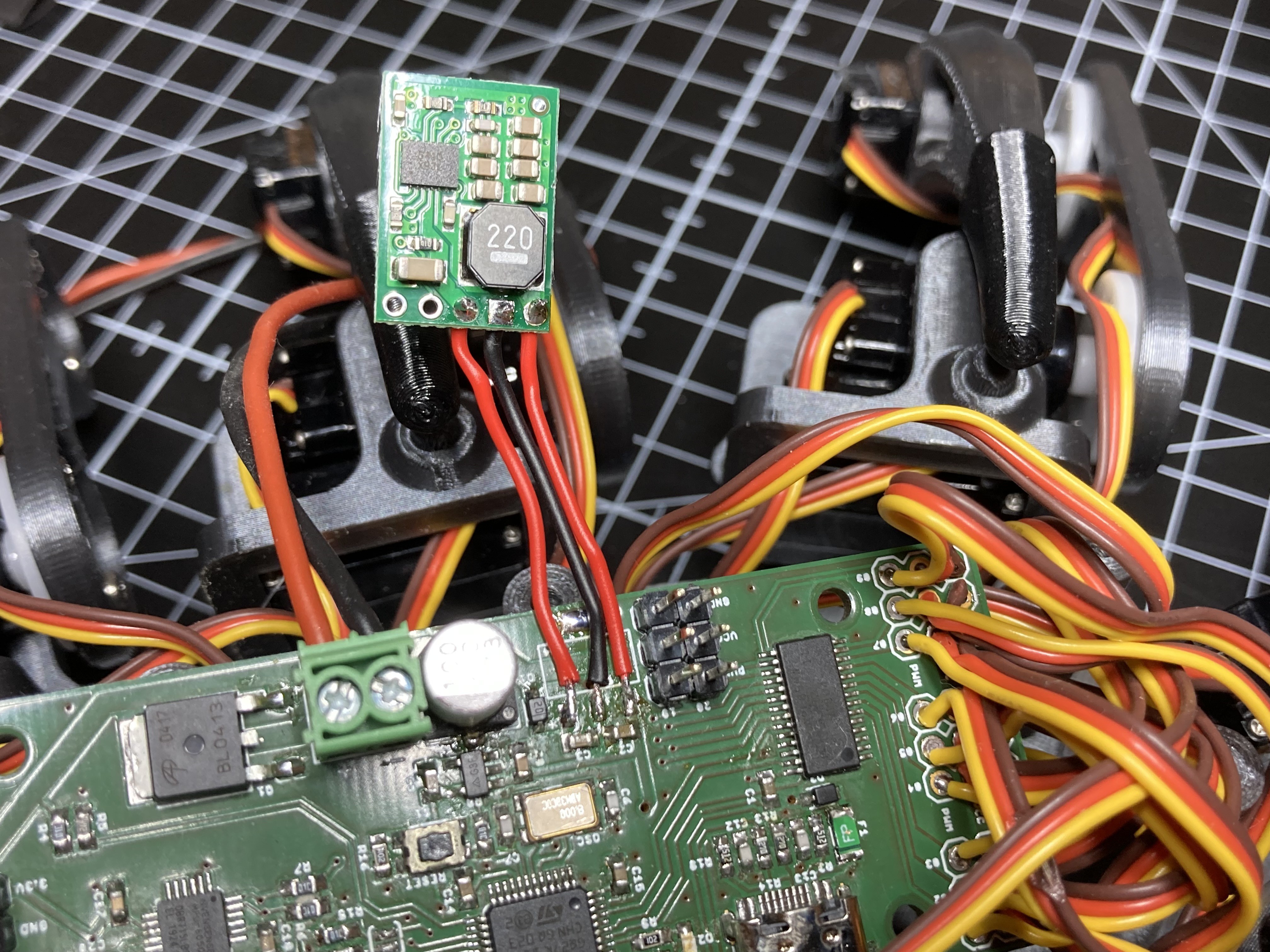

When the hexapod is powered by a 6V battery, a voltage regulator supplies 5V to the Pi Zero and to the 3.3V components. I chose a Microchip MCP1825 with 500mA output current, which was more than enough at the time. To be on the safe side, I now decided to replace the LDO with a 1A DC/DC converter by Pololu. I still have to test whether this was really necessary.

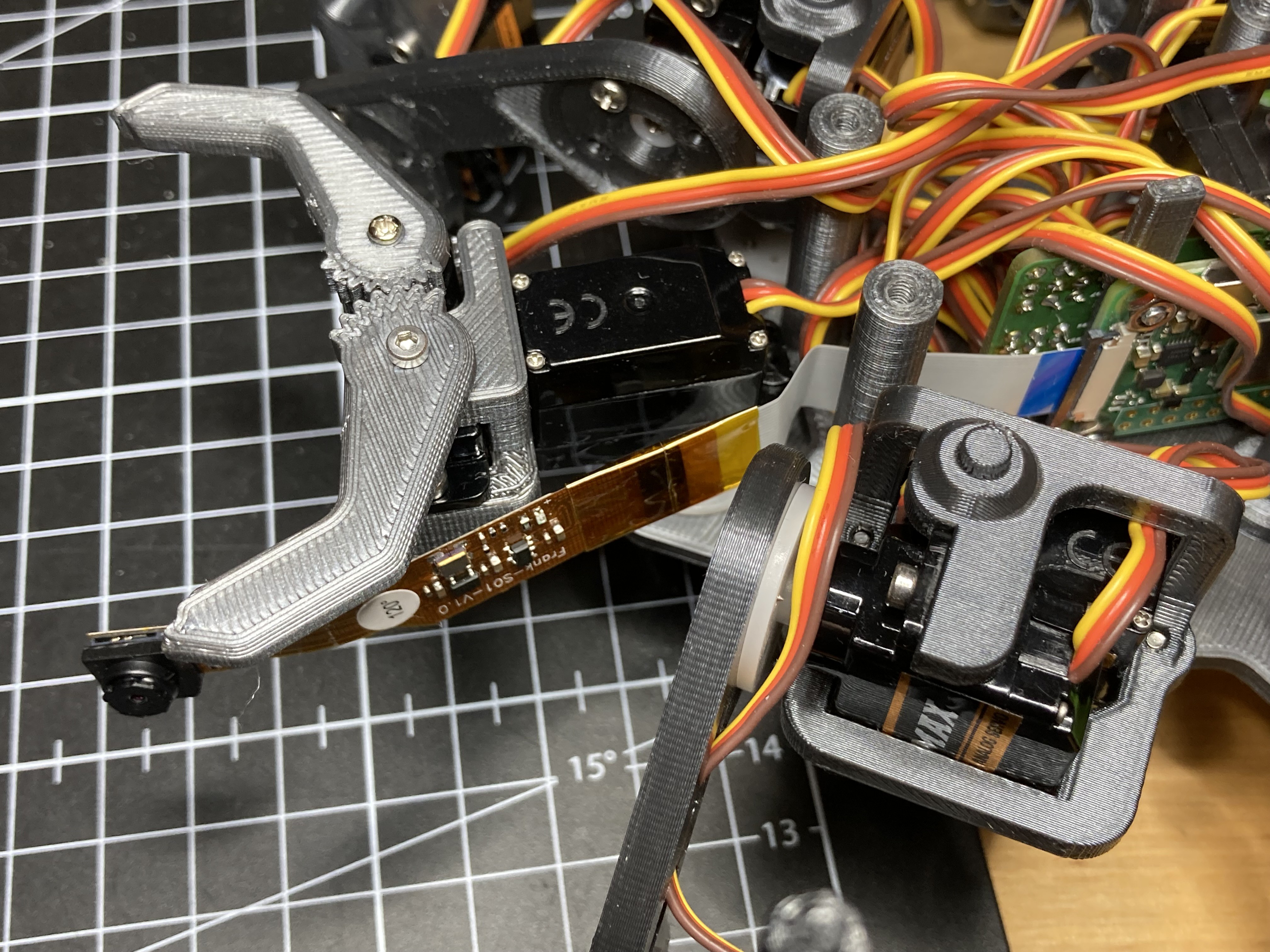

Originally I planned to fit a camera into ZeroBug for streaming video. But as the Raspberry Pi Zero was already struggling with the controller inputs I decided against that. Now with the more powerful Pi Zero 2 the camera would not be a problem anymore.

I settled for a camera module by Pimoroni that integrates the 22-pin camera connector. After soldering an extension to the cable I stuck the camera directly to the gripper servo of the robot.

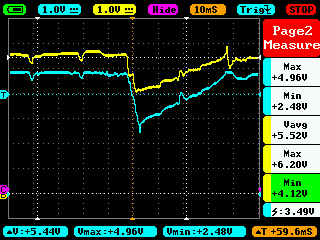

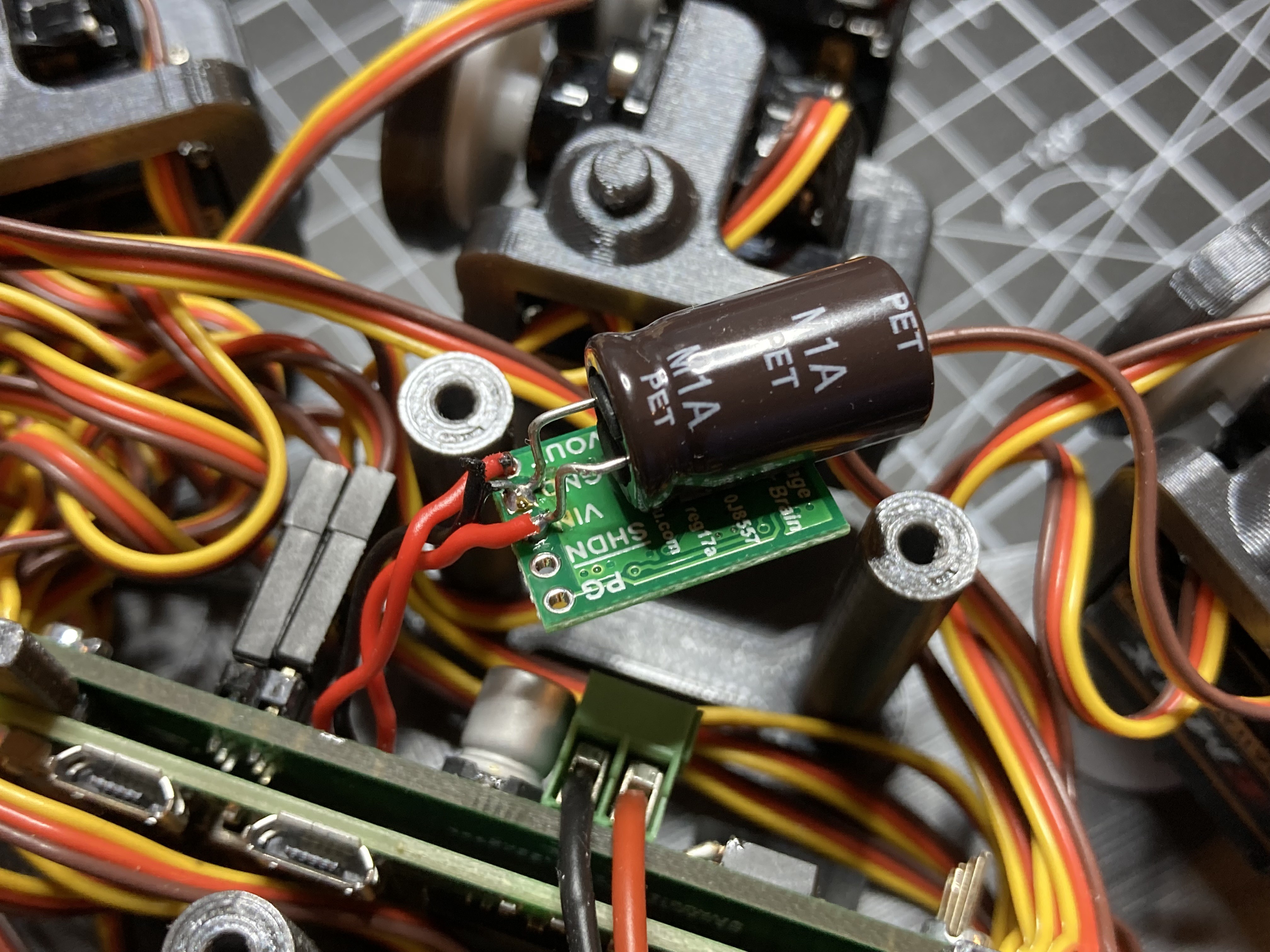

The new Raspberry Pi Zero 2 worked right away with the same SD card image. But then I noticed a problem: After moving the servos for more than a few seconds the Raspberry Pi reboots itself. The reason for this was quickly determined: When some of the 20 servos are moving, the battery voltage drops below 3V. The old Raspberry Pi Zero was more forgiving in that regard. I solved the issue by including a 1.8mF electrolytic capacitor on the input of the 5V DC/DC converter. The existing diode ensures that the capacitor only buffers the logic components and not the servos.


With the capacitor in place, ZeroBug now only needs a few changes to its software to enable the camera stream. For this I installed mjpg-streamer and created a small script that starts the stream. By adding a line to the /etc/rc.local file, the script is executed during boot. The web UI was already prepared for embedding a stream element, so there is nothing more to do than uncommenting one line in the HTML. You can have a look at the updated code on GitHub: https://github.com/CoretechR/ZeroBug

The video stream is a fun addition to the hexapod, and together with the processing power of the Raspberry Pi Zero 2 there are many possible applications. Among others, these include face tracking and autonomous navigation. Maybe the servos can even be controlled by the Raspberry Pi directly, which would render the STM32 unnecessary.

-

ZeroBug Lite

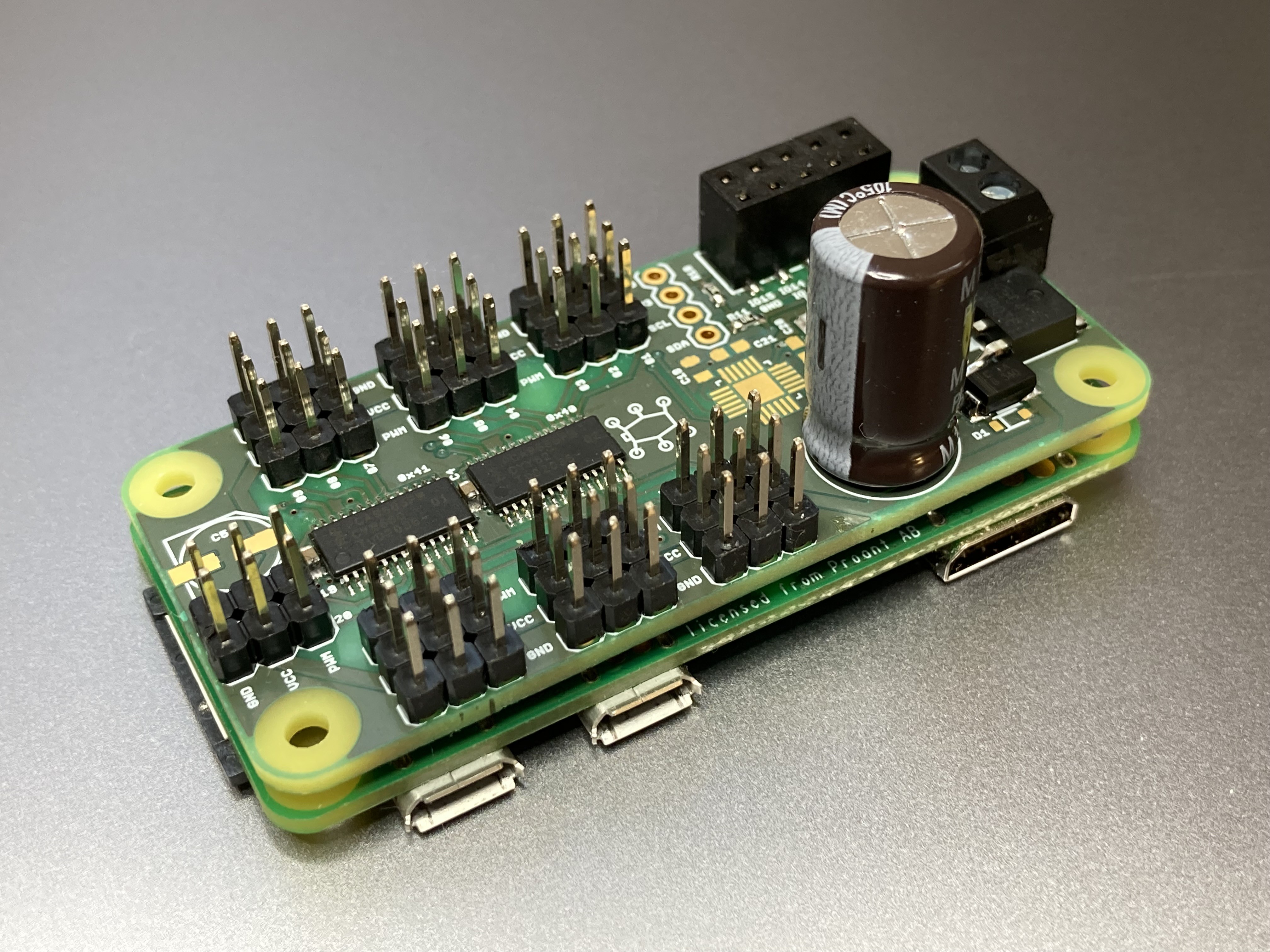

01/29/2022 at 15:59

With the chip shortage still raging on, building a ZeroBug with the rare STM32F103 is becoming increasingly difficult. I was looking for a replacement for a while, even considering an ESP32. But now that the powerful Raspberry Pi Zero 2 is here, an additional microcontroller might not be necessary at all to control the servos. To experiment with a Raspberry-only approach I created a new PCB.

Removing the microcontroller means that the Raspberry Pi has to control the servo drivers by itself. That’s why a second PCA9685 PWM driver is needed. The I2C pins are conveniently positioned on the existing connector, so no change is needed there. The microcontroller and its USB port, together with lots of smaller components, are no longer needed. With far fewer parts, the PCB can be shrunk down to the size of the Pi Zero. By using the Pi’s internal 3.3V supply, even the 3.3V regulator can be taken out (the board still needs a 5V LDO to power the Pi). I also put in a chunky 1.6 mF capacitor to make sure the Raspberry Pi doesn’t reset while the servos move.

The new, smaller PCB and the Raspberry Pi can be mounted horizontally in the Hexapod while still leaving room for servo wires.

Software-wise, a few changes had to be made. The Raspberry Pi now has to take over all the tasks of the microcontroller that was running the gait engine. To make everything run in a Python environment, I had to port the entire gait engine to the new language. As Processing(.org) features a Python mode, I used this for testing the code (the Python-based simulator is also available on GitHub: https://github.com/CoretechR/ZeroBug-Lite). Adafruit has a nice CircuitPython library that makes it easy to control the PCA9685.
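For a sense of what has to happen underneath such a library, here is a small sketch of the arithmetic for turning a servo angle into a PCA9685 on-time and for spreading the servos across two 16-channel drivers. The PCA9685 divides each PWM period into 4096 steps; the 50Hz frame rate, the 500 to 2500µs pulse range and the function names are assumptions for illustration, and a real setup would leave this to the library.

```python
PWM_FREQUENCY_HZ = 50        # typical servo frame rate (assumed)
PWM_STEPS = 4096             # the PCA9685 has 12-bit resolution
MIN_PULSE_US = 500.0         # pulse width at 0 degrees (assumed)
MAX_PULSE_US = 2500.0        # pulse width at 180 degrees (assumed)
CHANNELS_PER_DRIVER = 16


def angle_to_ticks(angle_deg):
    """Convert a servo angle to the number of PWM steps the output is high."""
    angle = max(0.0, min(180.0, angle_deg))          # stay inside servo range
    pulse_us = MIN_PULSE_US + (MAX_PULSE_US - MIN_PULSE_US) * angle / 180.0
    period_us = 1_000_000 / PWM_FREQUENCY_HZ
    return round(pulse_us / period_us * PWM_STEPS)


def servo_to_channel(servo_index):
    """Map a servo number to (driver number, channel on that driver)."""
    return divmod(servo_index, CHANNELS_PER_DRIVER)
```

With this mapping, servos 0 to 15 end up on the first driver and the remaining ones on the second, so the gait engine can keep addressing the servos by a single running index.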

With everything assembled and programmed, the ZeroBug can walk with only a Raspberry Pi Zero as its brains. How well does it work? I did some testing but cannot say that the “ZeroBug Lite” version is as reliable as the microcontroller-based one. For one, the output enable signal that tells the servo drivers to stop is only controlled by the Python script. When the software is abruptly stopped, the servos can’t be disabled. And as the Pi can’t measure analog signals, there is no way to monitor the battery voltage without additional hardware. At the very least, the software needs more work to build in the same kind of safety checks that were implemented in the original robot.

If you are interested you can check out the new GitHub Repository: https://github.com/CoretechR/ZeroBug-Lite

Max.K
