I still haven't decided whether I should try to go the "Boe-dozer" route like I was thinking about earlier. Or maybe I could mount a small NTSC-compatible video camera as if it were part of some kind of turret, like a miniature "tank." It would also be nice if I had a "bigger barn", so as to be better equipped for dealing with issues of scale when planning and/or doing live modeling, instead of just talking about running things in "simulation". This is the post that I was going to entitle "Go Ask Alice (when she is ten feet tall)", but I changed my mind after reading some of the news about Afghanistan, and how a relief worker and seven children were recently killed, and now the generals have just admitted that "no explosives" were found, and that no link to ISIS existed. Maybe this isn't the place to be political, or else I could have said something about being "Wasted away again in Margaritaville" since "I read the news today oh boy" about empty tequila bottles being found strewn about in the new Air Force One. End of rant. Back on topic: If robots become a part of our daily lives, and AI is going to be relied upon to make life or death decisions, well - are we ready yet to meet our new robot overlords?

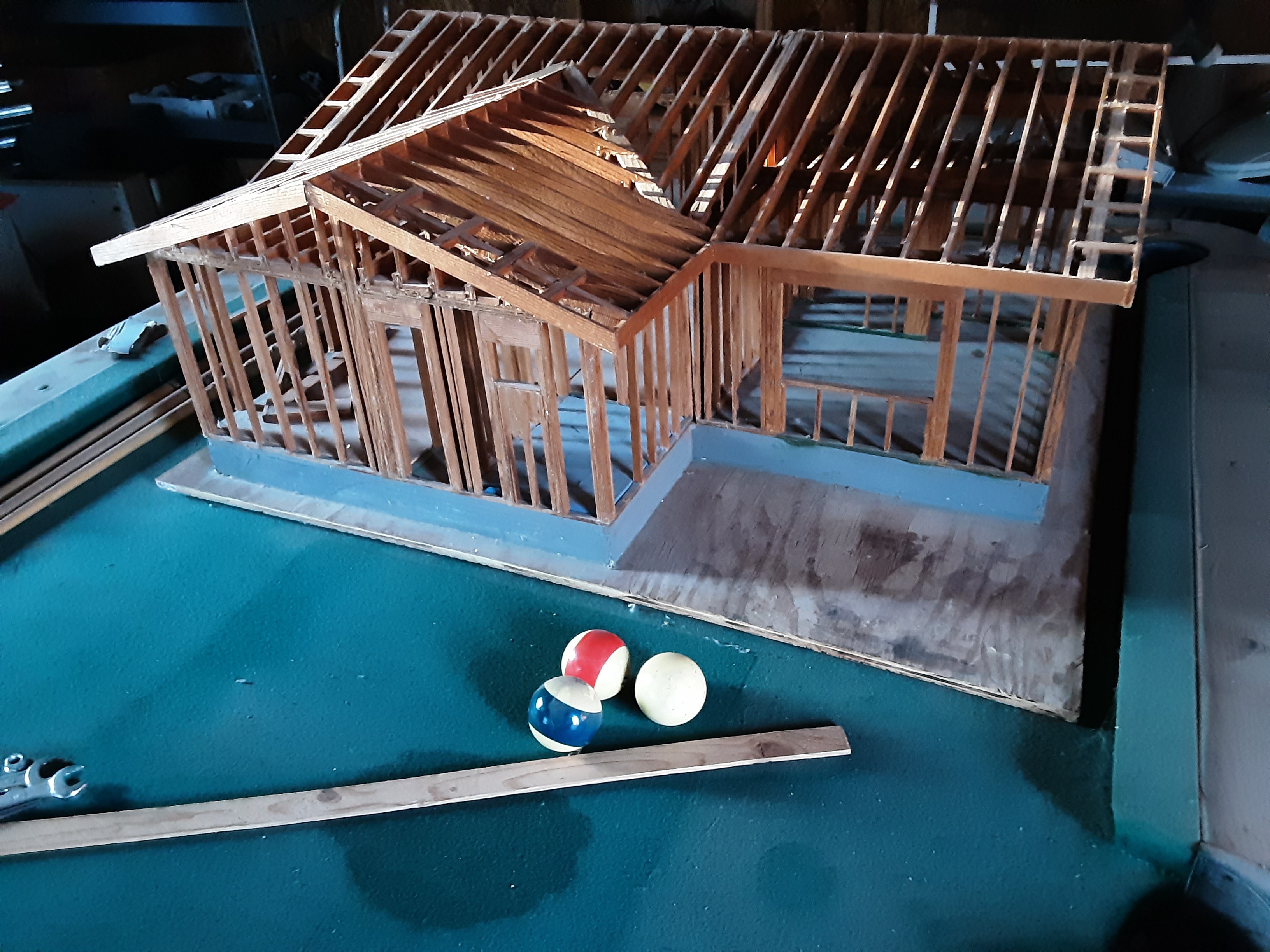

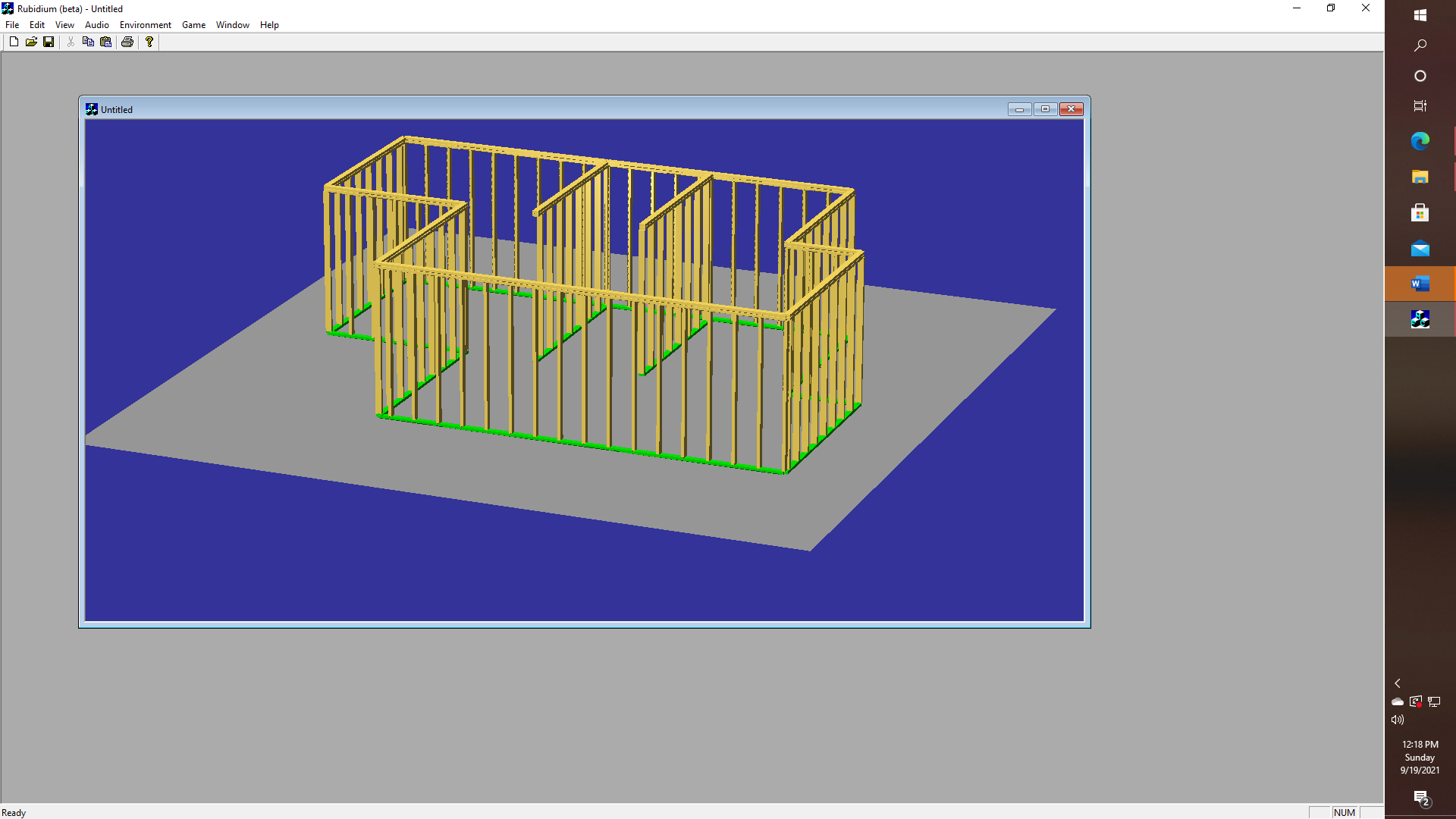

O.K., off of the rant now, and back on topic - I hope! Imagine a robot that we can give commands to - like "IDENTIFY THE ROOM WHICH CONTAINS THE CRYSTAL PYRAMID BUT DO NOT ATTEMPT TO ENTER ANY ROOM WHERE TROLLS MIGHT BE PRESENT." This sort of thing is very doable with game engines of the type that were used to implement classics like "Adventure", "Zork", or "The Wizard and the Princess". Provided, that is, that we have a way of building an accurate model of the world that we want our robots to exist or CO-EXIST in. Elon Musk's recent video about how Dojo will work was very interesting in several ways, like how the model view for autonomous self-driving cars is supposed to work. It's one thing to talk about OpenGL and graphics, yet there is so much fertile ground for exploration with programs that might try to generate an accurate point cloud by actual exploration, and then proceed to the problem of defining vertex information, line sets, and bounding planes, then on to the minimum convex hull, as well as collision domains, with object recognition thrown in along the way. The Boe-Bot is, of course, a bit too big to be able to enter and leave the various rooms of the model house that I built on its own, which is just another Goldilocks problem, I suppose, just as it is a bit too small to be able to competently navigate a real-world labyrinth. And I could still use a bigger barn.
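To make the "crystal pyramid" command a little more concrete, here is a minimal sketch in Python of how a text-adventure style world model could answer it. Everything in it is an assumption made for illustration: the room names, the `WORLD` dictionary layout, the `trolls_possible` flag, and the idea that the robot can see what is in a neighbouring room from the doorway without going in. It is not how any particular game engine does it, just one way the constraint might be expressed as a breadth-first search.

```python
from collections import deque

# Hypothetical world model: each room lists its doors, the items that can be
# seen in it from a doorway, and whether trolls MIGHT be present.
WORLD = {
    "hall":    {"doors": ["kitchen", "cellar", "study"], "items": [],
                "trolls_possible": False},
    "kitchen": {"doors": ["hall", "pantry"], "items": ["lamp"],
                "trolls_possible": False},
    "cellar":  {"doors": ["hall"], "items": [],
                "trolls_possible": True},
    "study":   {"doors": ["hall", "vault"], "items": ["book"],
                "trolls_possible": False},
    "pantry":  {"doors": ["kitchen"], "items": [],
                "trolls_possible": False},
    "vault":   {"doors": ["study"], "items": ["crystal pyramid"],
                "trolls_possible": True},
}


def identify_room(world, start, target):
    """Find the room holding `target` without entering any room where
    trolls might be present.

    Returns (room_name_or_None, list_of_rooms_actually_entered).
    Assumes the start room itself is safe to stand in.
    """
    entered = []
    seen = {start}
    queue = deque([start])
    while queue:
        room = queue.popleft()
        entered.append(room)              # the robot is physically here
        if target in world[room]["items"]:
            return room, entered
        for neighbour in world[room]["doors"]:
            if neighbour in seen:
                continue
            seen.add(neighbour)
            # Look through the doorway first (assumed possible).
            if target in world[neighbour]["items"]:
                return neighbour, entered
            # Only queue rooms that are known to be troll-free.
            if not world[neighbour]["trolls_possible"]:
                queue.append(neighbour)
    return None, entered


room, entered = identify_room(WORLD, "hall", "crystal pyramid")
print(room, entered)
```

In this made-up house the pyramid sits in a room that might hold trolls, so the robot reports the room from the doorway and never steps inside. The hard part, as noted above, is not the search but getting an accurate world model in the first place.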

Real-world programs, of course, might be variations of the type "IF TROLLS ARE FOUND YOU MAY FIRE MISSILES, AND CONTINUE FIRING MISSILES UNTIL ALL TROLLS ARE KILLED, BUT IF A CRYSTAL PYRAMID IS FOUND, WHICH IS BEING GUARDED BY THE TROLLS, OR OTHERWISE BEING HELD HOSTAGE - THEN CONTACT BASE COMMAND FOR FURTHER INSTRUCTIONS". Oops, I said that I wasn't going to get political. Even though I just did.

Somewhere, I have the source code for an old Eliza program that I converted to run under my Frame Lisp APIs, so I am fairly certain that I can get some SHRDLU-like functionality up, but honestly, this thing is huge. The implications for this technology are even larger. Consider, for example, the economic consequences if an elderly person falls and breaks their hip, and then isn't strong enough, even after hip replacement surgery, to use a walker or a wheelchair on their own. The cost of nursing home care in the United States can run anywhere from $5,000 to over $25,000 per month, and Medicaid does not kick in until after you sell your stocks and other assets, and even then they can (or at least will try to) put a lien on your house (or your parents' house). How much does that add up to if that sort of thing goes on for three or four years? Or eight? How many people have an extra million or two to watch evaporate? The whole concept of Elon's household robots has a certain appeal, at least in those situations where someone might be able to live semi-independently if "someone" was available to bring in the mail, take out the trash, put things away, take things down from high shelves, etc. In the meantime, the very rich will continue to get even richer, and the middle class will continue to vanish.

That, of course, wasn't the exact model that I actually built, but nonetheless, I think that it should be possible to use a P2 chip as the "brain" for a simple robot, whether we have to give it the model that we already have, or better yet, if it is possible (it should be), have it take video input and generate the point cloud, vertex list, wireframe, surface list, etc., so that it should BE ABLE TO DO SOMETHING!
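As a rough idea of what the step from point cloud to vertex list to wireframe might look like, here is a small sketch in Python. It is deliberately simplified to two dimensions (think of a floor-plan view of an obstacle), it uses Andrew's monotone chain algorithm as one standard way to get a convex hull, and the sample points are made up rather than taken from any camera. The function names `convex_hull` and `wireframe` are my own for this illustration, and nothing here is specific to the P2.

```python
def cross(o, a, b):
    """Z component of (a - o) x (b - o); positive means a left turn."""
    return (a[0] - o[0]) * (b[1] - o[1]) - (a[1] - o[1]) * (b[0] - o[0])


def convex_hull(points):
    """Andrew's monotone chain: return the hull vertices of a 2D point
    cloud in counter-clockwise order, dropping interior and collinear
    points."""
    pts = sorted(set(points))
    if len(pts) <= 2:
        return pts

    lower = []
    for p in pts:
        # Pop while the last two points and p do not make a left turn.
        while len(lower) >= 2 and cross(lower[-2], lower[-1], p) <= 0:
            lower.pop()
        lower.append(p)

    upper = []
    for p in reversed(pts):
        while len(upper) >= 2 and cross(upper[-2], upper[-1], p) <= 0:
            upper.pop()
        upper.append(p)

    # The last point of each chain is the first point of the other.
    return lower[:-1] + upper[:-1]


def wireframe(vertices):
    """Turn an ordered vertex list into a closed set of line segments."""
    n = len(vertices)
    return [(vertices[i], vertices[(i + 1) % n]) for i in range(n)]


# Made-up sample "point cloud": four corners plus a few interior hits.
cloud = [(0, 0), (4, 0), (4, 3), (0, 3), (2, 1), (1, 2), (3, 1)]
hull = convex_hull(cloud)
edges = wireframe(hull)
print(hull)
print(edges)
```

A real pipeline would need the 3D version, plus surface lists and collision domains, and would have to cope with noisy measurements. The hull is only the simplest bounding shape to start from.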

glgorman