Future project logs and build instructions will go into greater detail on how ROS2-based SLAM (simultaneous localization and mapping) algorithms generate maps. The fundamental principle is that, by tracking the location of the robot using sensor fusion (as discussed in the last project log), subsequent LiDAR scans can be overlaid one on top of the other by matching similar features, such as the corner of a room or a doorway in a wall. Overlaying subsequent laser scans is analogous to building a jigsaw puzzle.
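To make the overlaying idea concrete, here is a minimal sketch of just the projection step: taking one scan and placing its points in the map frame using the robot's estimated pose. It is not how any particular ROS2 SLAM package is implemented, and it leaves out the feature matching that real SLAM uses to correct the pose. The function name `scan_to_map_points` and its parameters are made up for illustration, although `angle_min` and `angle_increment` mirror fields of the standard `sensor_msgs/LaserScan` message.

```python
import math


def scan_to_map_points(pose, ranges, angle_min, angle_increment):
    """Project one LiDAR scan into the map frame.

    pose            -- (x, y, theta) of the robot in the map frame (m, m, rad)
    ranges          -- list of measured distances, one per beam (m)
    angle_min       -- angle of the first beam relative to the robot (rad)
    angle_increment -- angular step between consecutive beams (rad)
    """
    x, y, theta = pose
    points = []
    for i, r in enumerate(ranges):
        # Skip beams with no valid return (inf or NaN).
        if not math.isfinite(r):
            continue
        # Beam direction in the map frame = robot heading + beam angle.
        beam_angle = theta + angle_min + i * angle_increment
        points.append((x + r * math.cos(beam_angle),
                       y + r * math.sin(beam_angle)))
    return points


# Hypothetical example: robot at (1, 2) facing +y, two beams 90 degrees apart.
points = scan_to_map_points((1.0, 2.0, math.pi / 2), [1.0, 2.0], 0.0, math.pi / 2)
```

If the pose estimate is good, points from consecutive scans of the same wall or corner land on top of each other, which is the "jigsaw puzzle" effect described above.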

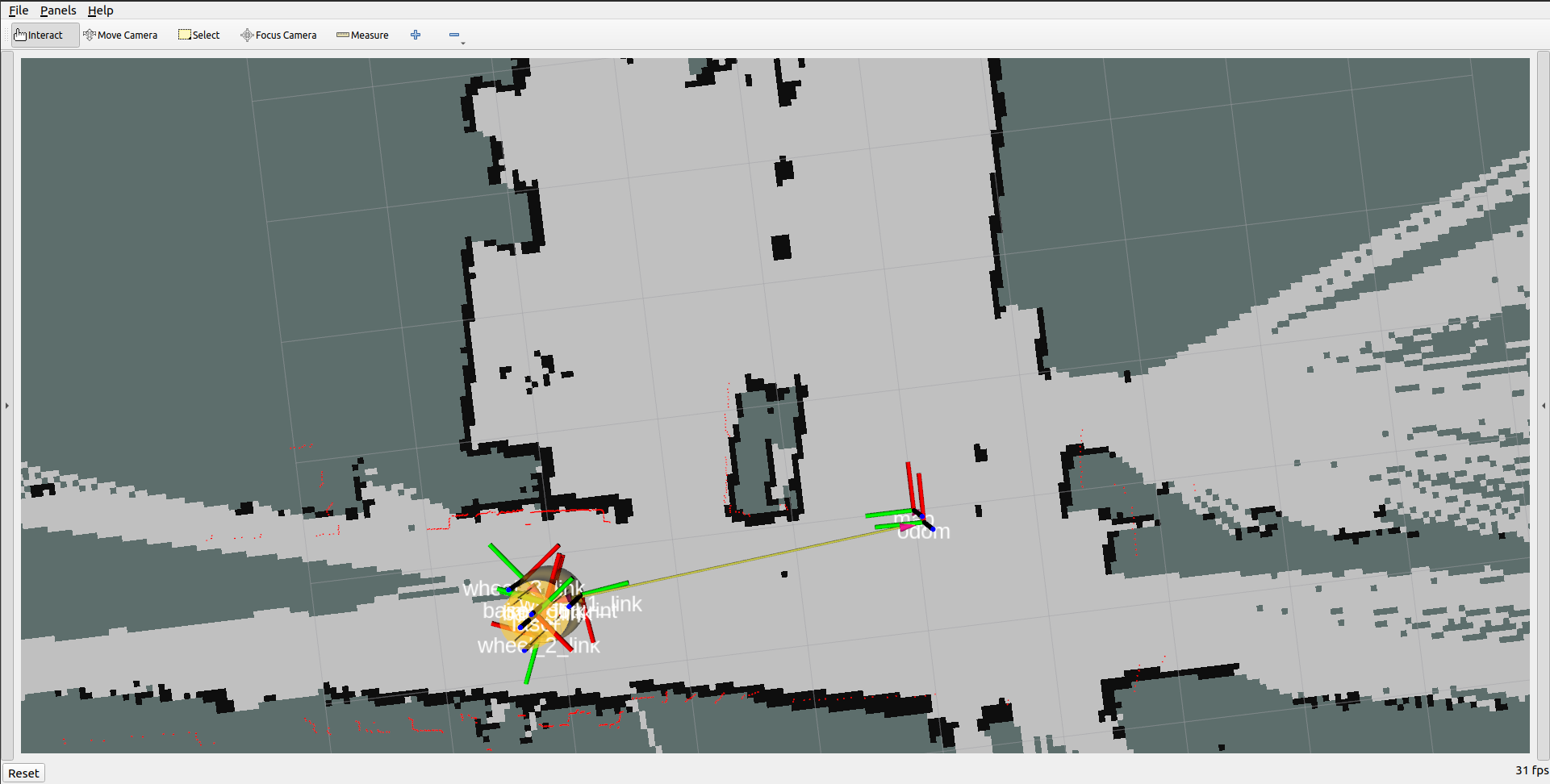

The picture below shows the robot traveling towards the left. Behind it, on the right, is a solid map built from previous LiDAR scans, while further down the hallway to the left no map has been created (yet) because the LiDAR sensor has not scanned this region.

Below is a video demonstrating the mapping process. The robot is being manually driven around the environment using a Bluetooth Xbox One controller.

Alternatively, instead of manually driving the robot around we can use the ROS2 navigation package to autonomously drive to different waypoints while avoiding obstacles.

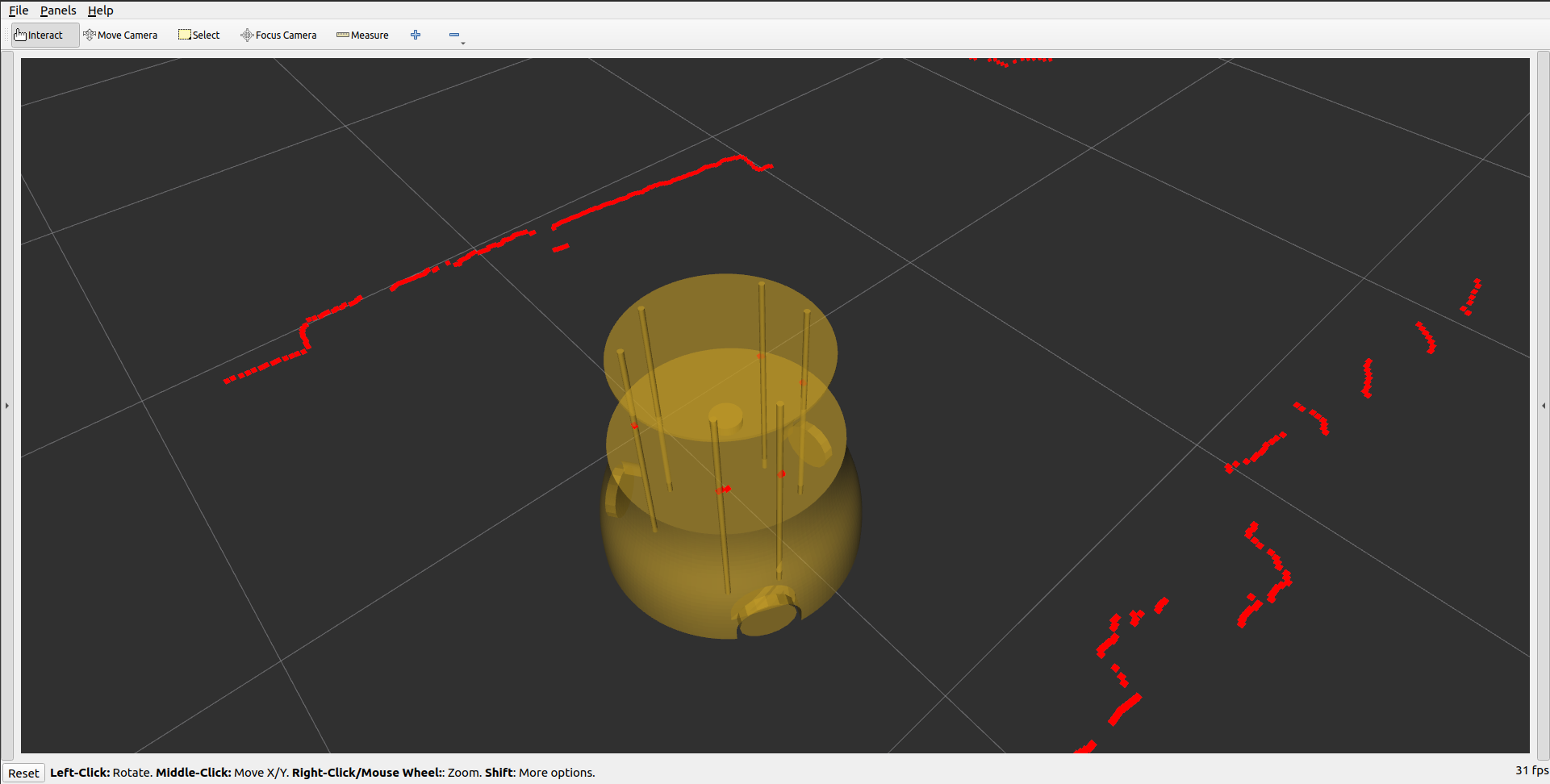

It is worth noting that these videos were recorded on the first version of the robot. The second version of the robot adds a platform above the LiDAR platform, so there are now six poles that partially obstruct the LiDAR scan. This problem can clearly be seen in the picture below. The red dots represent the current LiDAR scan, several of which are located at the thin, vertical, yellow poles. In its current configuration, this would cause erroneous behavior, as the SLAM algorithm would conclude that the robot has somehow passed through an obstacle. To mitigate this problem we are using the laser_filters package to remove data points closer than a certain range.
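The idea behind a minimum-range filter can be sketched in a few lines of plain Python. This is an illustration of the concept only, not the laser_filters implementation or its configuration. The function name `filter_close_returns` and the 0.25 m threshold are assumptions made for the example; the real threshold depends on how far the poles are from the LiDAR.

```python
import math


def filter_close_returns(ranges, min_range):
    """Replace readings closer than min_range with NaN.

    Marking a beam as NaN (rather than deleting it) keeps the list the
    same length, so each index still corresponds to the same beam angle.
    """
    return [r if r >= min_range else math.nan for r in ranges]


# Hypothetical scan: the first and third beams hit a pole about 0.1 m away.
raw_ranges = [0.10, 1.80, 0.11, 2.40]
filtered = filter_close_returns(raw_ranges, min_range=0.25)
```

Beams that hit a pole become invalid readings that the mapping step can ignore, while readings from the walls further away pass through unchanged.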

Will Donaldson
