About

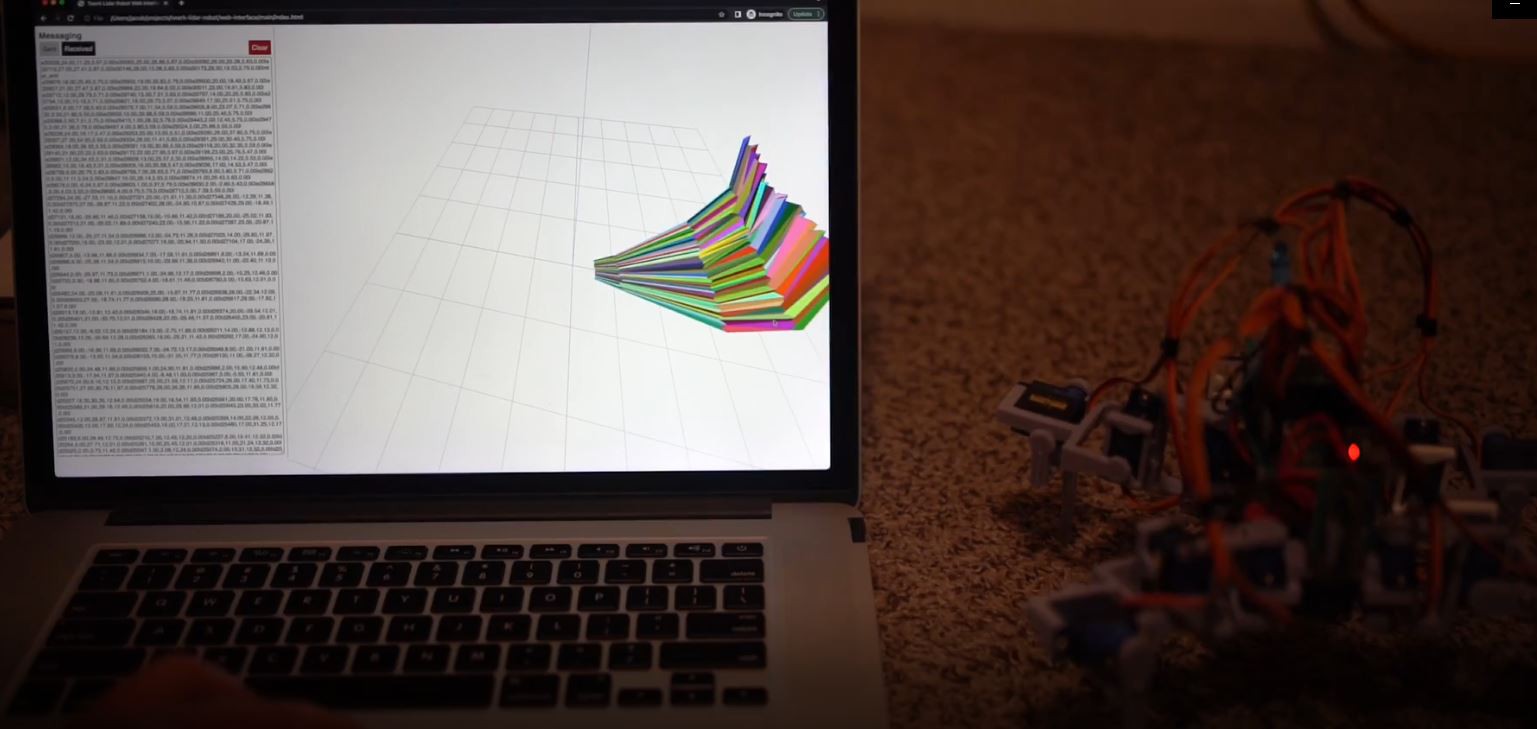

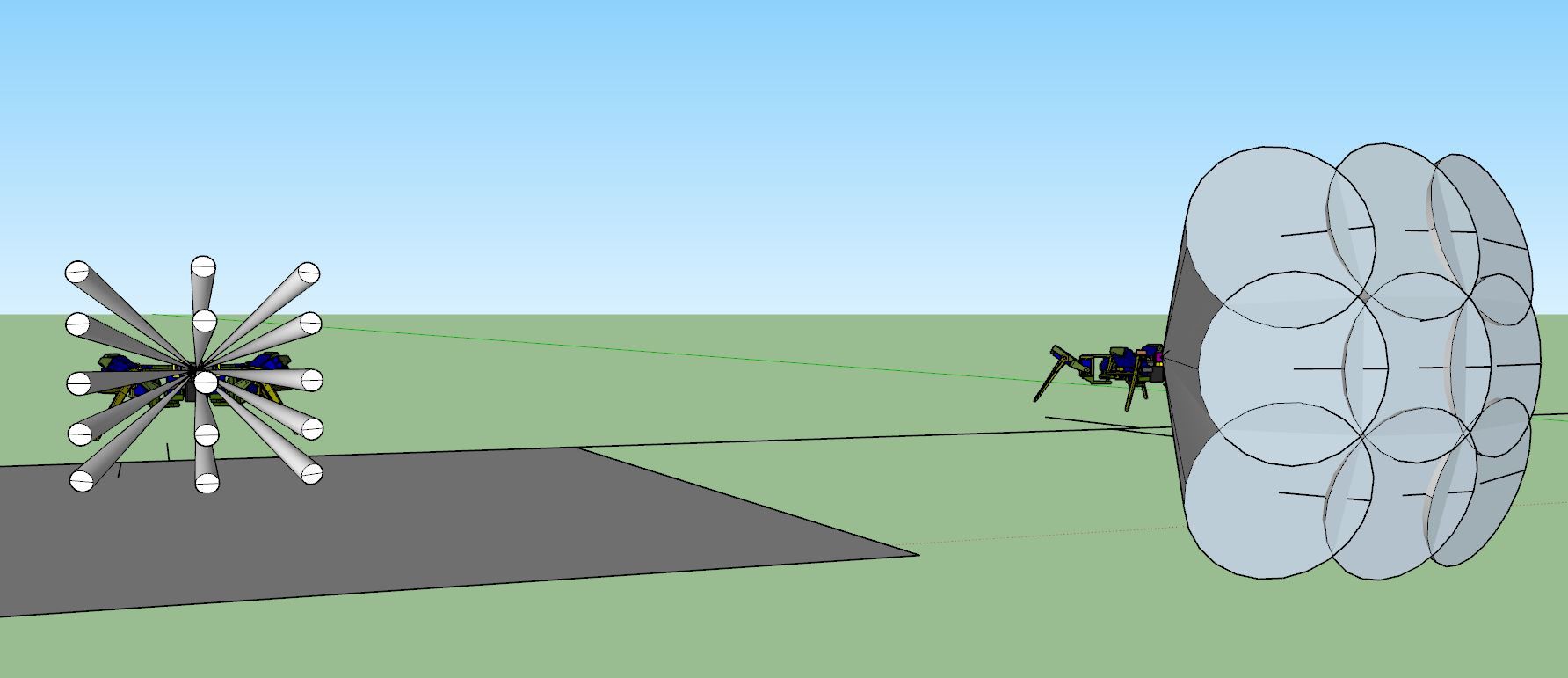

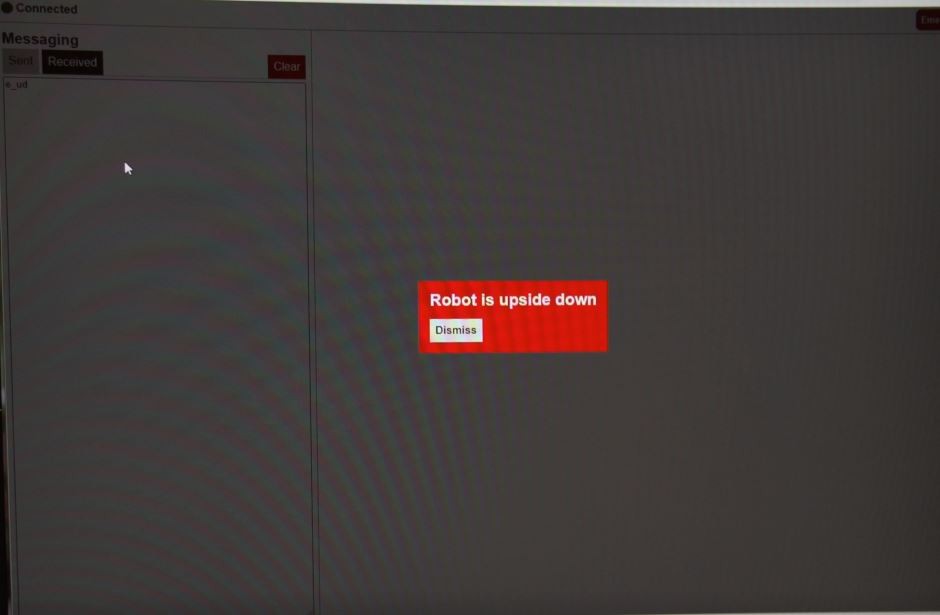

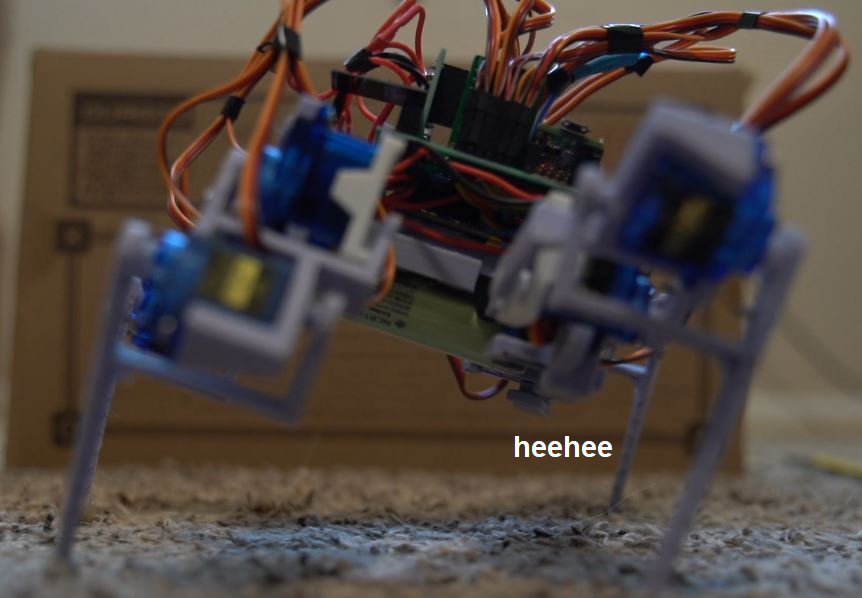

This project is a self-learning exercise for me across several subjects. I don't have much experience with IMUs, and I have a personal fantasy of autonomous ground drones that map things and work on their own. It will also stream telemetry and render it in 3D with ThreeJS, which is also pretty new to me.

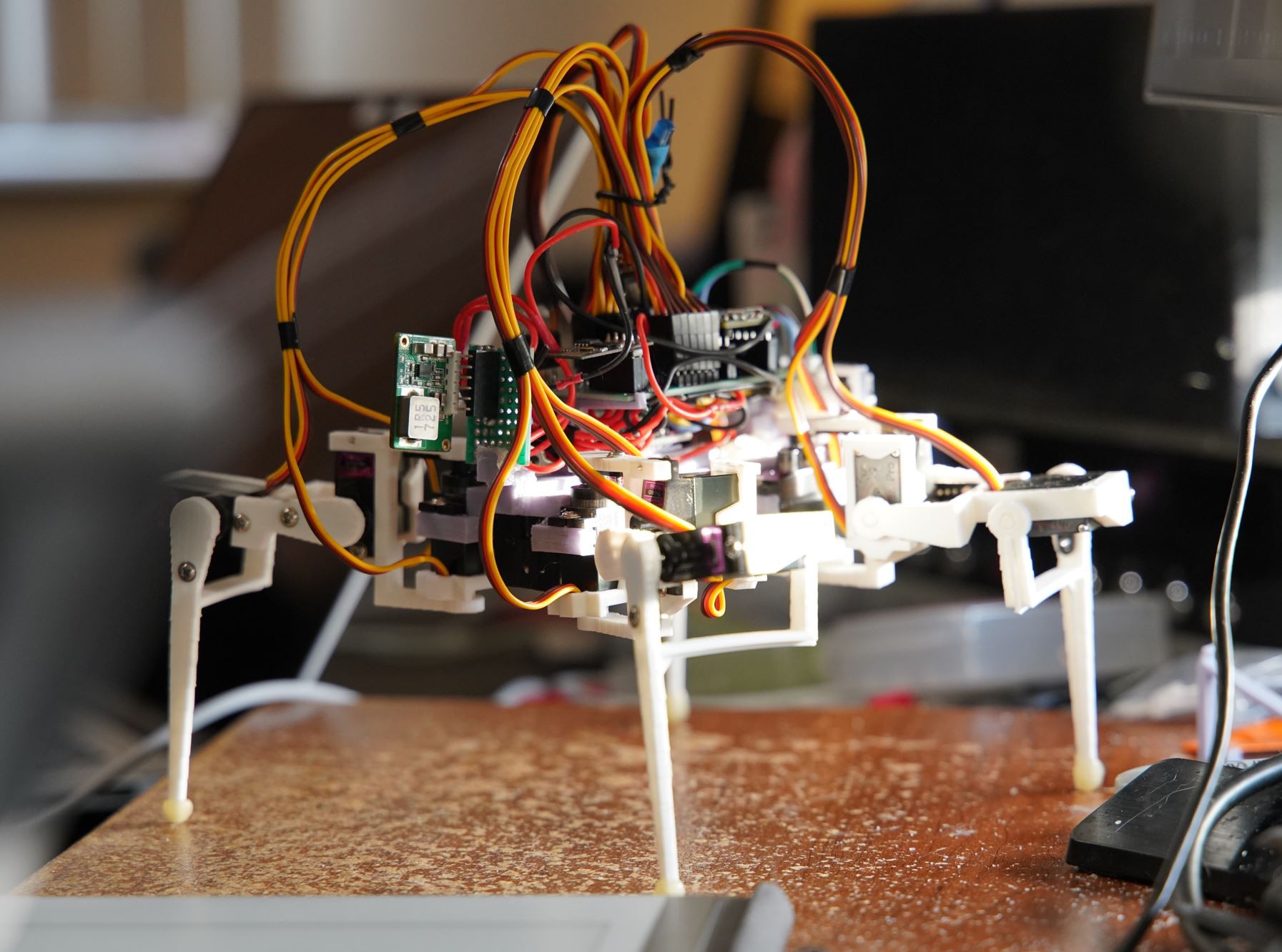

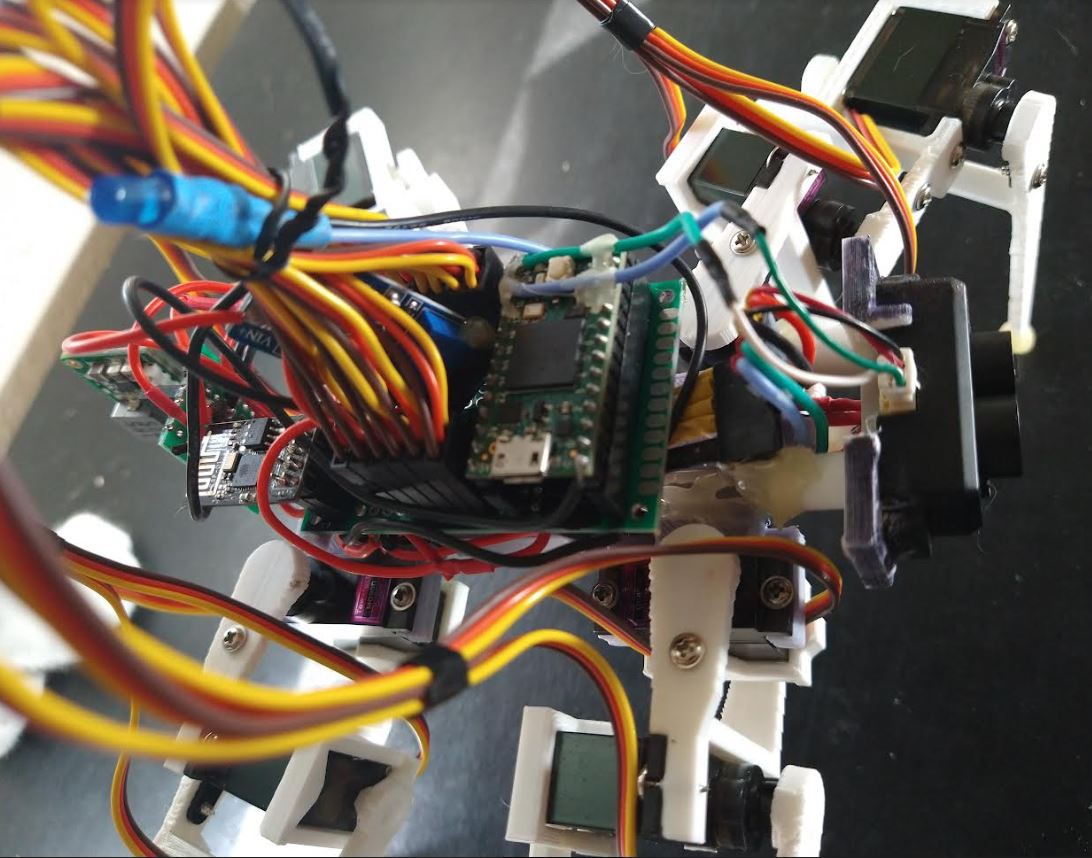

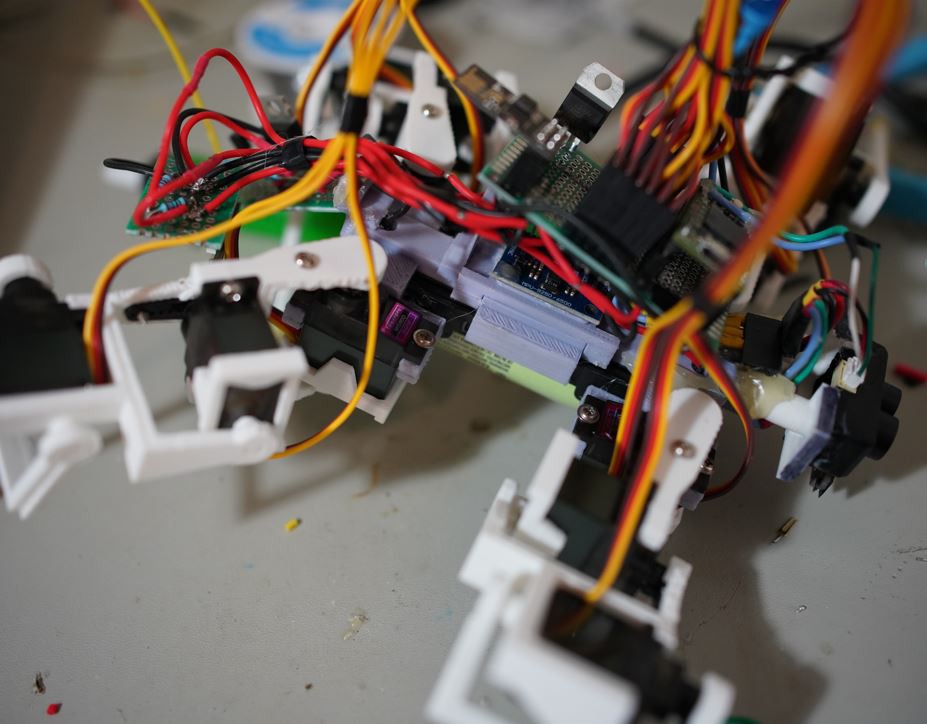

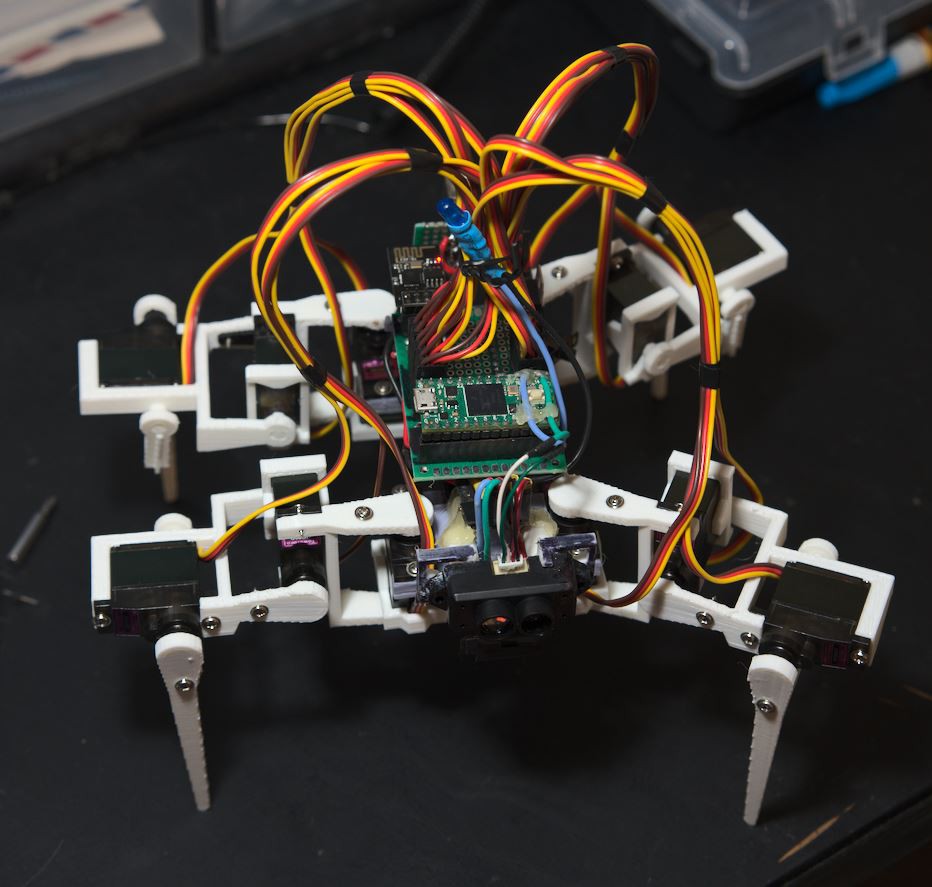

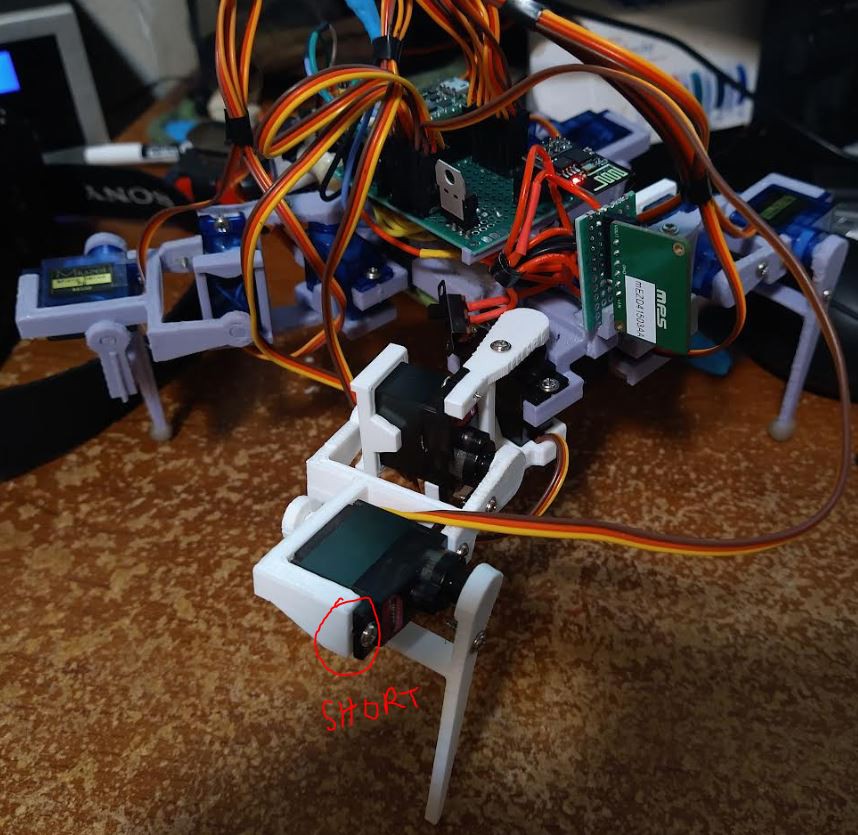

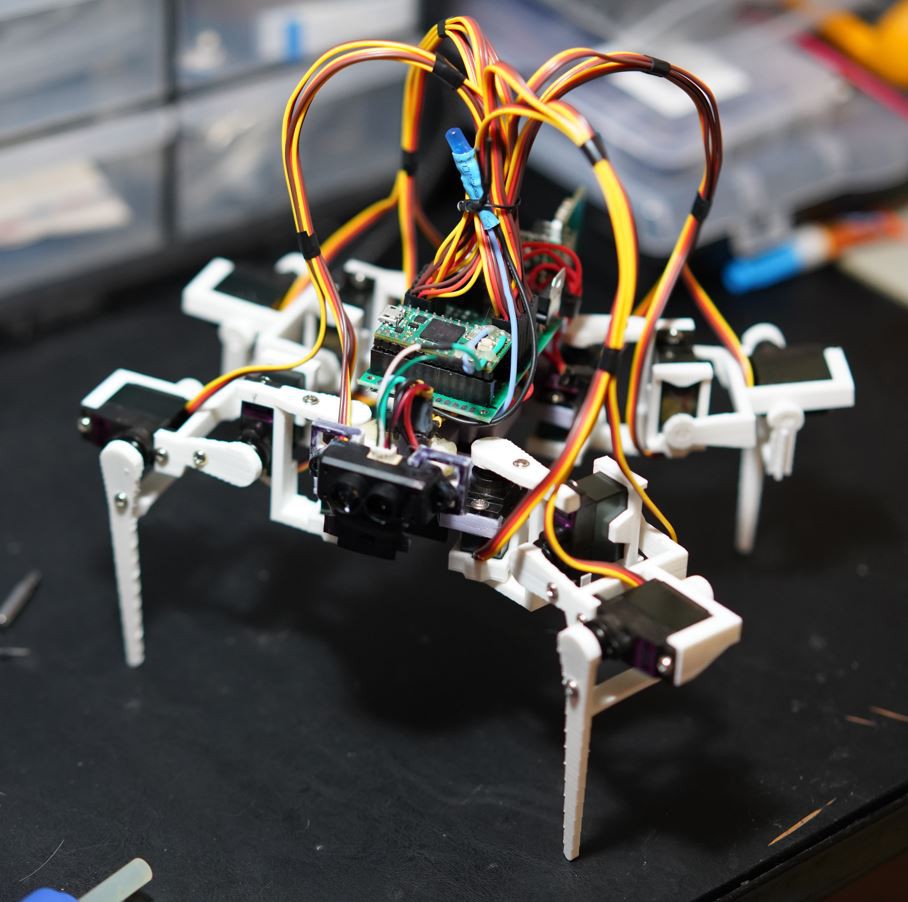

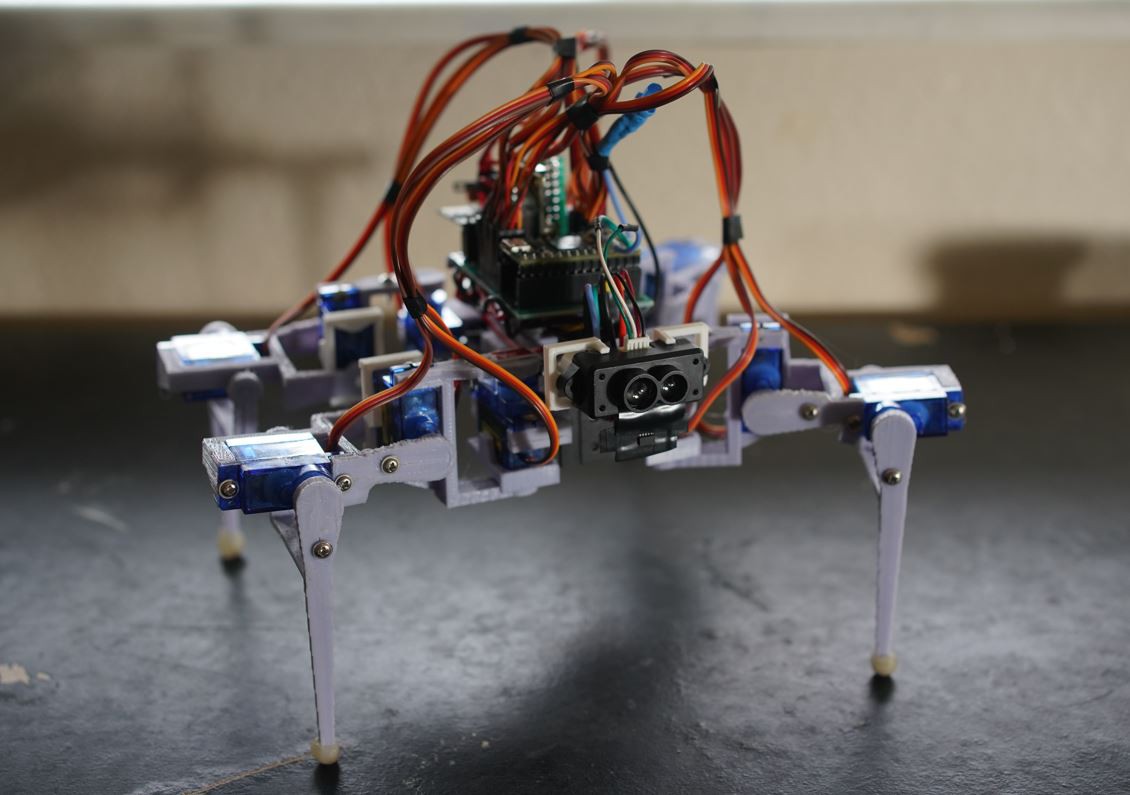

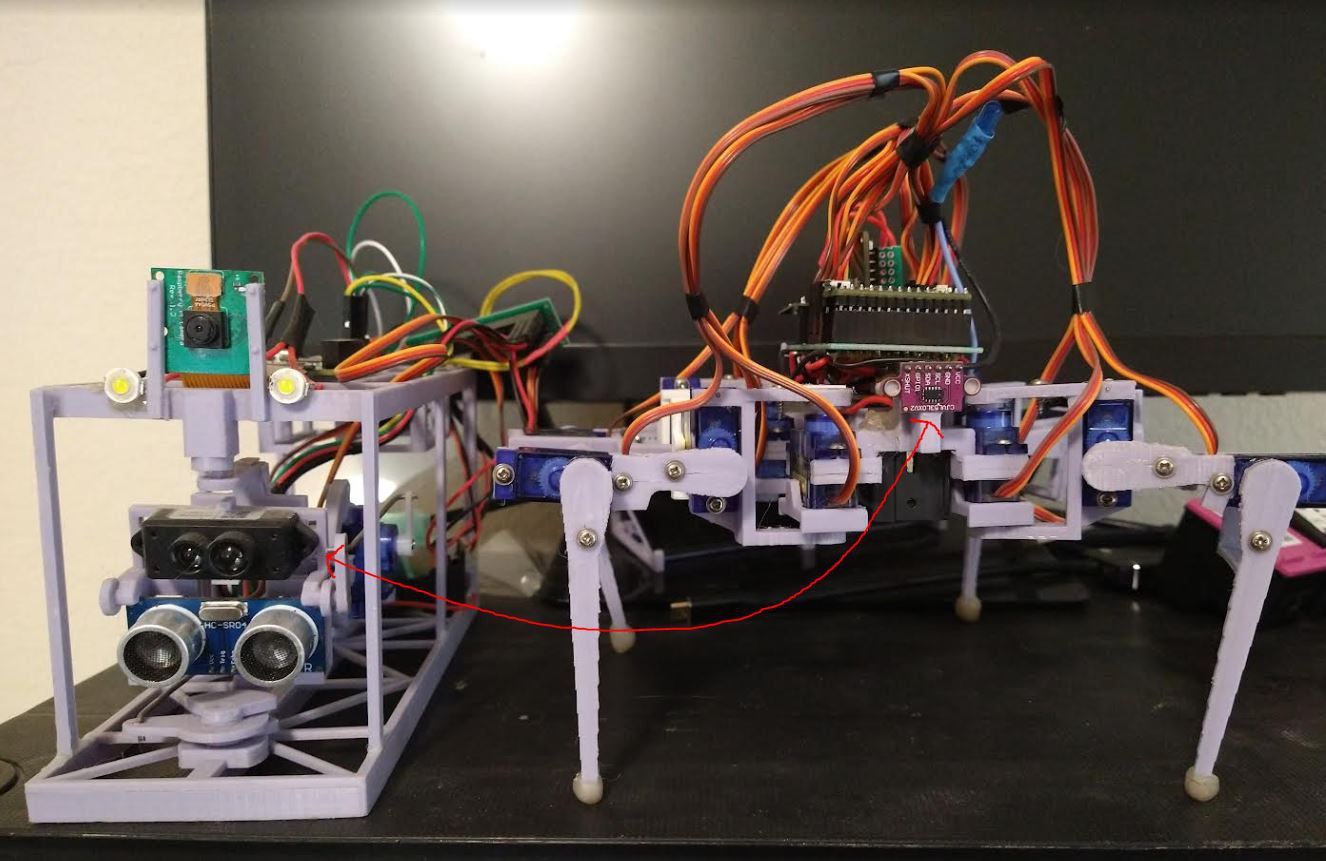

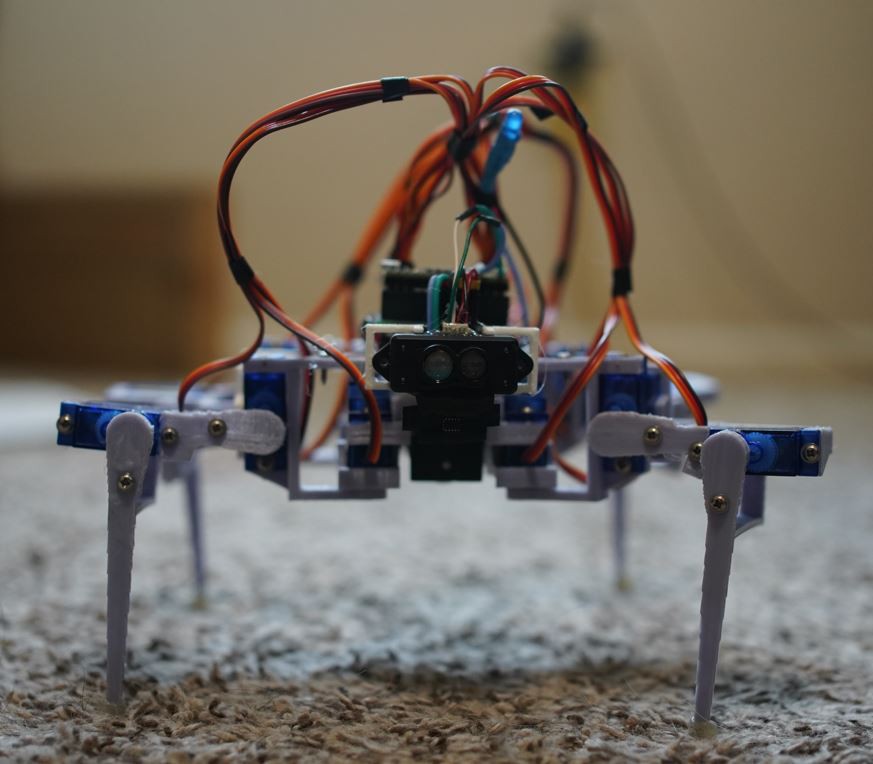

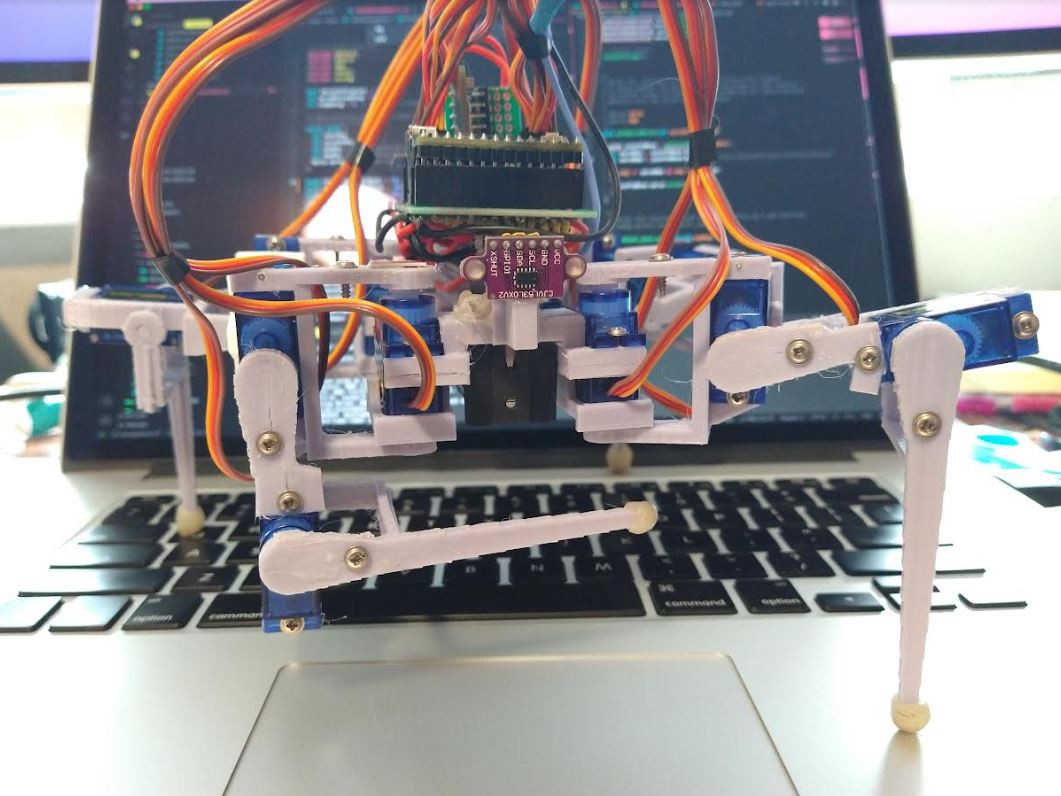

Components

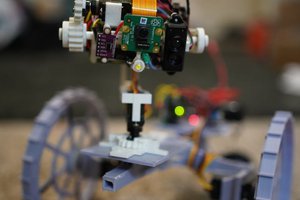

- Teensy 4.0

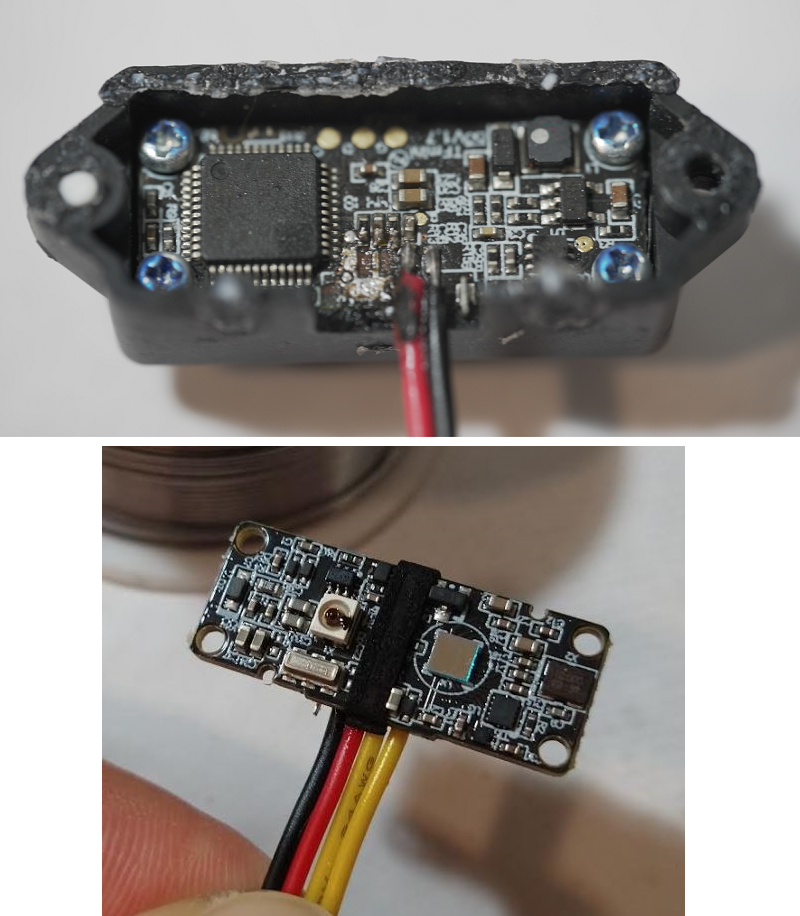

- MPU-9250

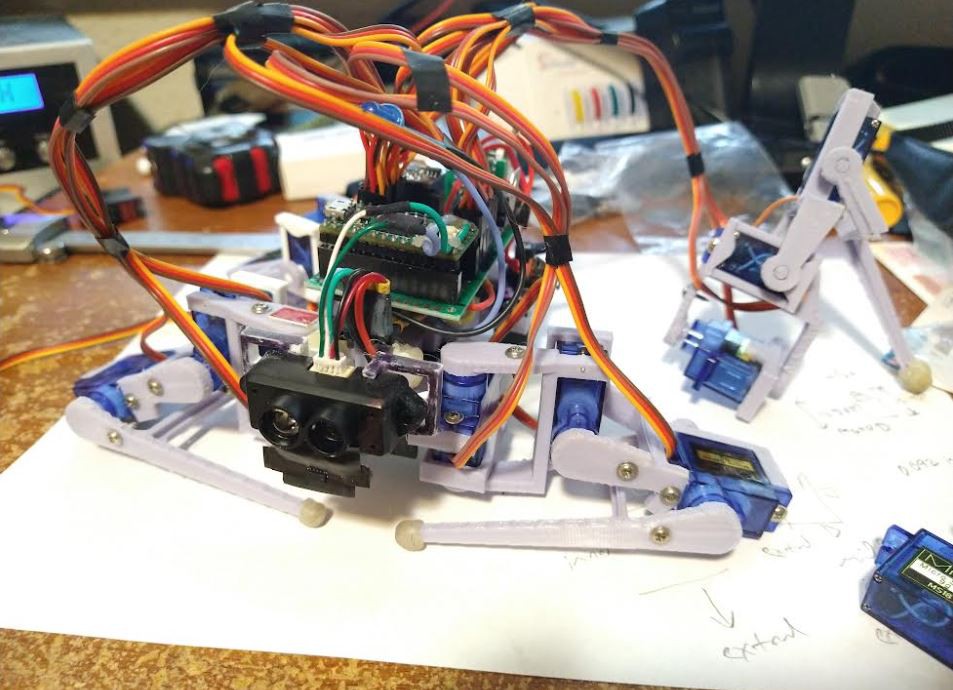

- VL53L0X (ToF laser ranging sensor)

- ESP-01

- 18650 battery

- MPS mEZD41503A-A 5V @ 3A (step-up converter)

- 12 x 9g servos

- TFmini-S lidar

Unit cost: $100.00 (with blue servos, without the TFmini-S lidar)

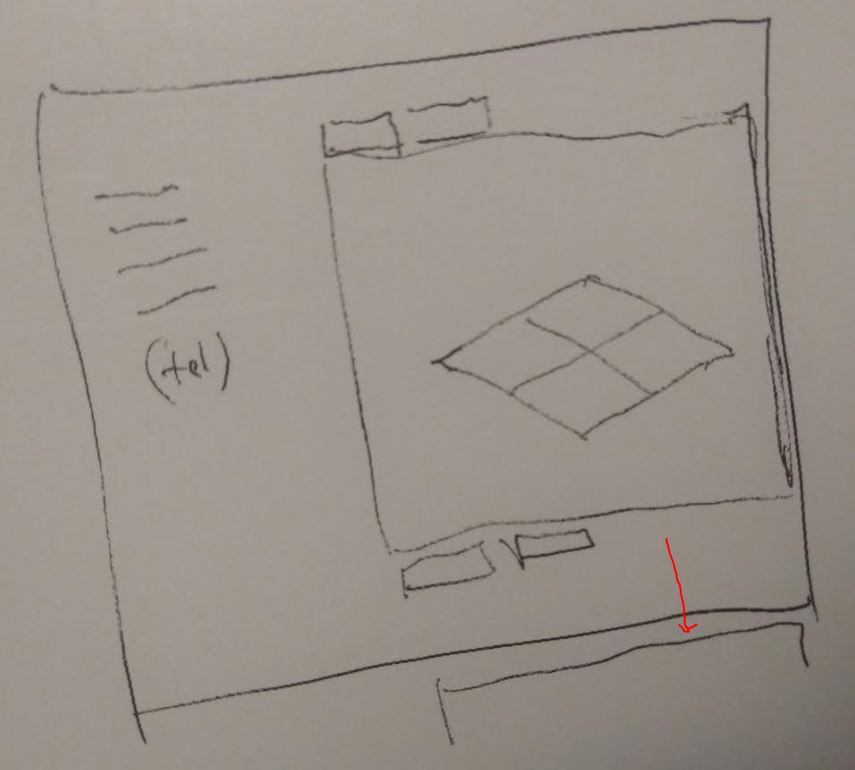

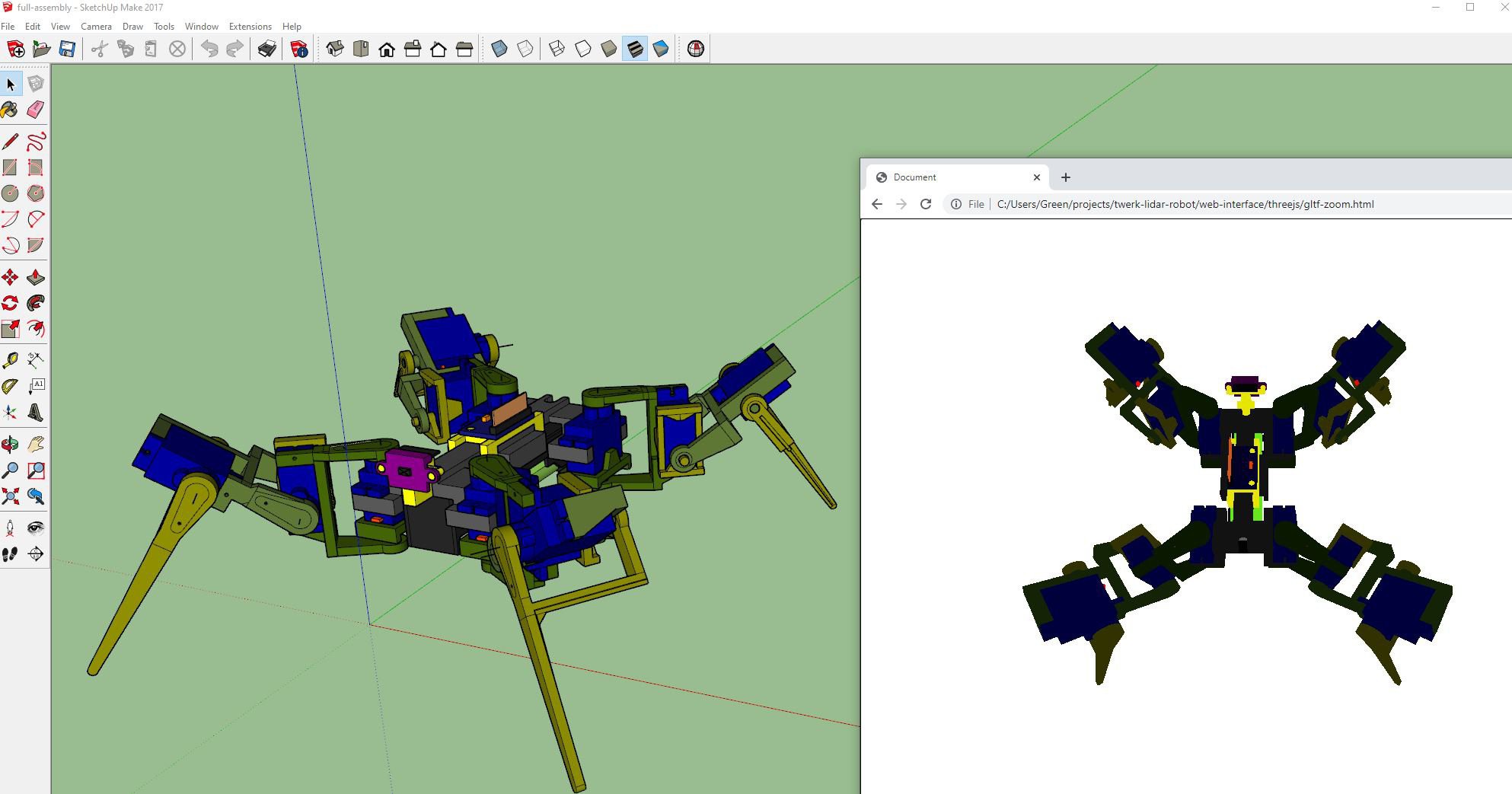

This project is ongoing. As of 01/20/2022, I've just completed the physical design, build, and wiring. I now have to do the actual mapping and 3D telemetry work, which is all doable by exporting glTF and rendering with ThreeJS, with the data streaming off the robot through a web socket where a web interface can consume it.
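As a rough idea of what the web-interface side of that stream could look like, here is a minimal TypeScript sketch. Everything specific in it is an assumption for illustration: the JSON message shape, the field names (`t`, `yaw`, `pitch`, `roll`, `range`), the units, and the Y-up axis convention are hypothetical, not the robot's actual format. It validates one incoming message and turns an orientation-plus-range reading into a 3D point that a ThreeJS scene could plot.

```typescript
// Hypothetical telemetry frame. Field names and units are assumptions,
// not the format the robot actually sends.
interface TelemetryFrame {
  t: number;     // milliseconds since boot
  yaw: number;   // degrees, rotation about the vertical axis
  pitch: number; // degrees above the horizontal plane
  roll: number;  // degrees
  range: number; // distance reading in millimetres
}

interface Point3 {
  x: number;
  y: number;
  z: number;
}

const FIELDS = ["t", "yaw", "pitch", "roll", "range"] as const;

// Parse one web socket message. Returns null for anything that is not
// a JSON object with a finite number in every expected field.
function parseTelemetry(raw: string): TelemetryFrame | null {
  let data: unknown;
  try {
    data = JSON.parse(raw);
  } catch {
    return null;
  }
  if (typeof data !== "object" || data === null) return null;
  const rec = data as Record<string, unknown>;
  for (const field of FIELDS) {
    const value = rec[field];
    if (typeof value !== "number" || !Number.isFinite(value)) return null;
  }
  return {
    t: rec.t as number,
    yaw: rec.yaw as number,
    pitch: rec.pitch as number,
    roll: rec.roll as number,
    range: rec.range as number,
  };
}

// Convert a range reading to a point in metres, assuming a Y-up frame
// with yaw = 0 and pitch = 0 looking along +Z. Roll does not move a
// point that lies on the sensor axis, so it is ignored here.
function toPoint(frame: TelemetryFrame): Point3 {
  const r = frame.range / 1000;
  const yaw = (frame.yaw * Math.PI) / 180;
  const pitch = (frame.pitch * Math.PI) / 180;
  return {
    x: r * Math.cos(pitch) * Math.sin(yaw),
    y: r * Math.sin(pitch),
    z: r * Math.cos(pitch) * Math.cos(yaw),
  };
}
```

In a browser, this would typically sit inside the `onmessage` handler of a standard `WebSocket`, passing `event.data` to `parseTelemetry` and pushing each resulting point into whatever geometry the scene uses. Returning `null` for malformed messages keeps a single bad frame from breaking the render loop.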

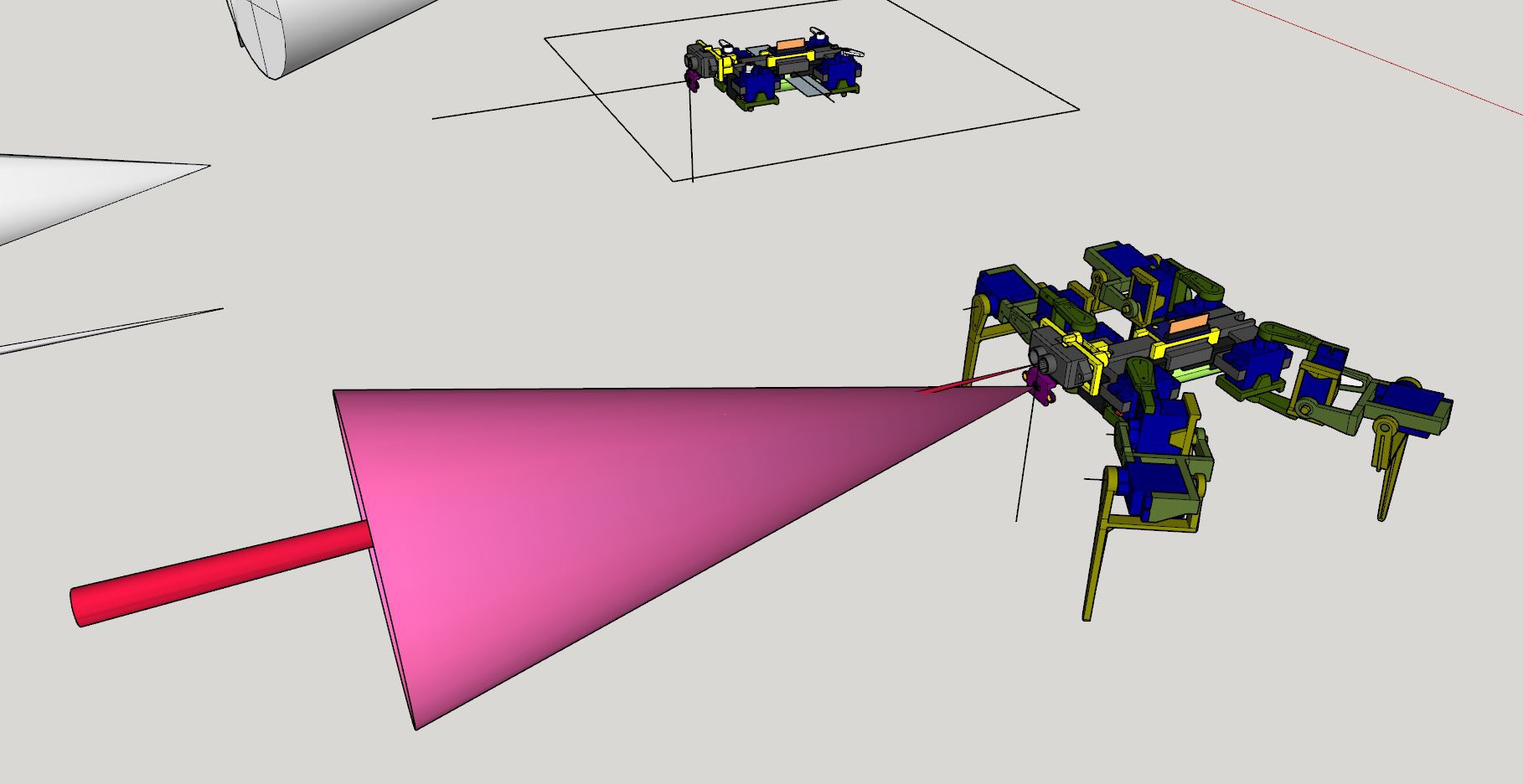

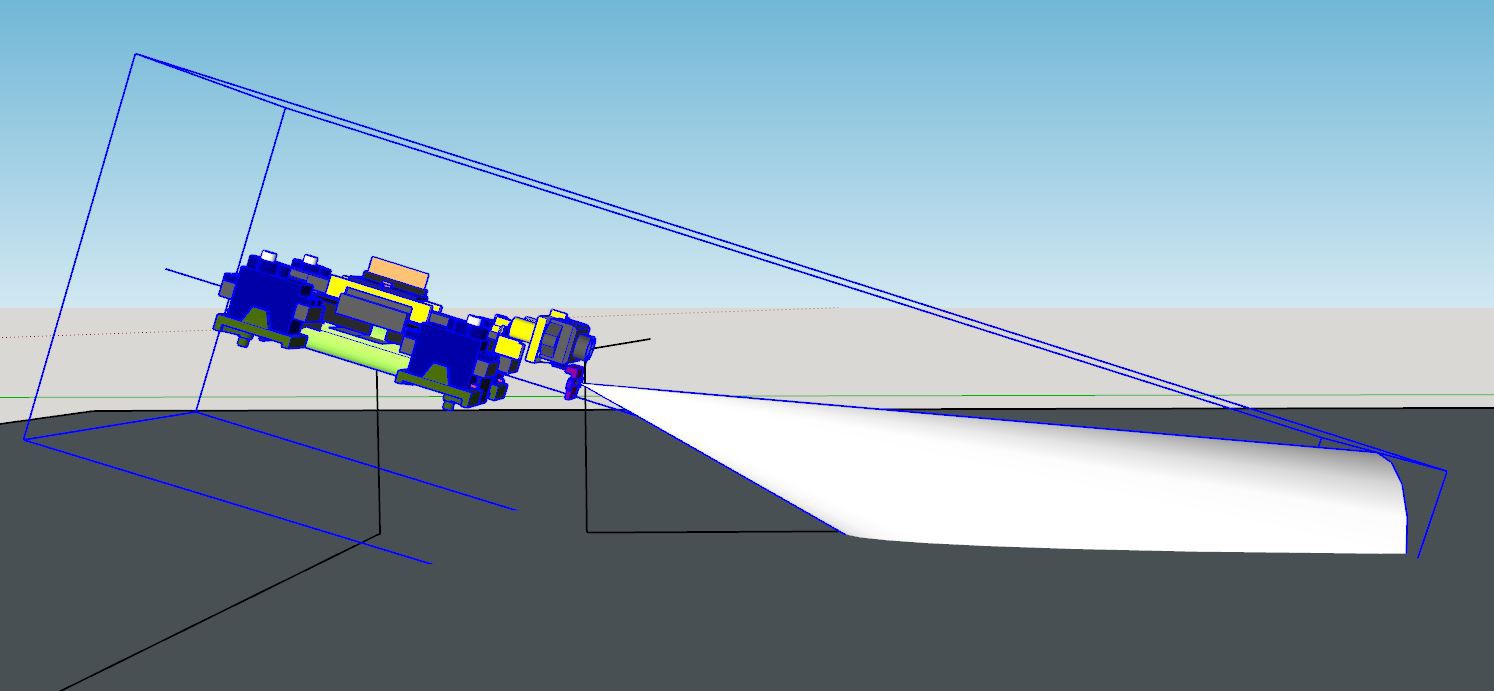

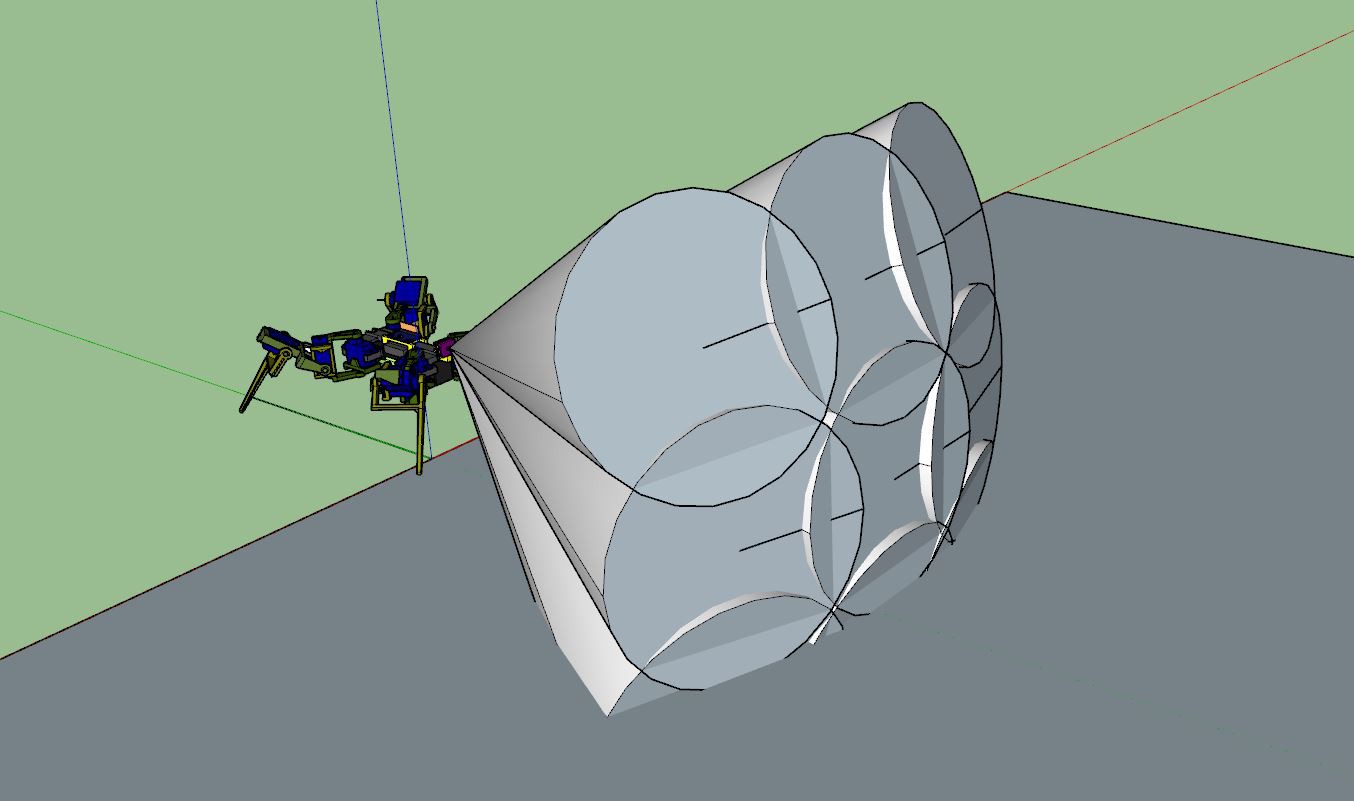

Scanning example

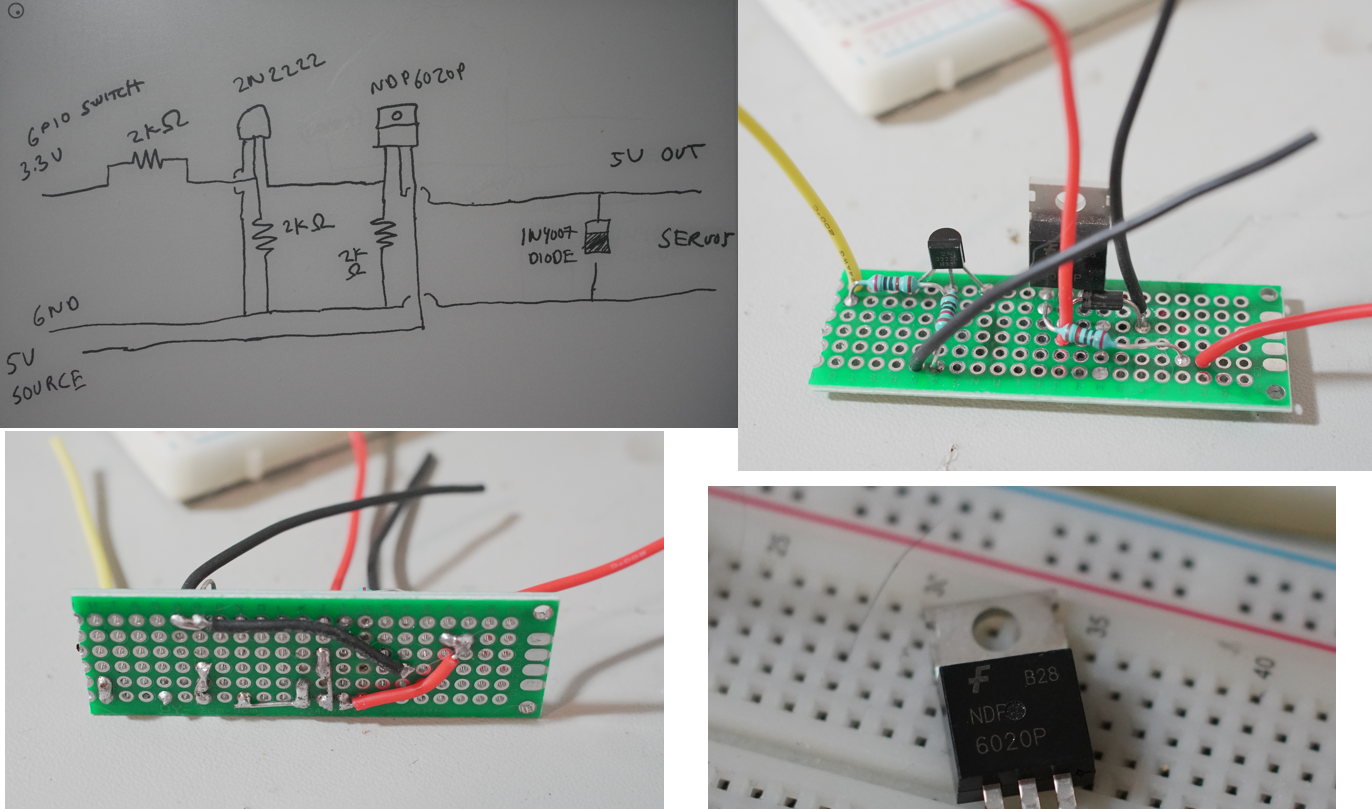

NOTE

This project is still in development and is not really intended to be reproduced, because soldering the proto board is such a chore. It's not so much complex as a PITA. The project also generally sucks: it needs more planning and better parts.

Jacob David C Cunningham


Joshua Elsdon


Kenny.Industries
