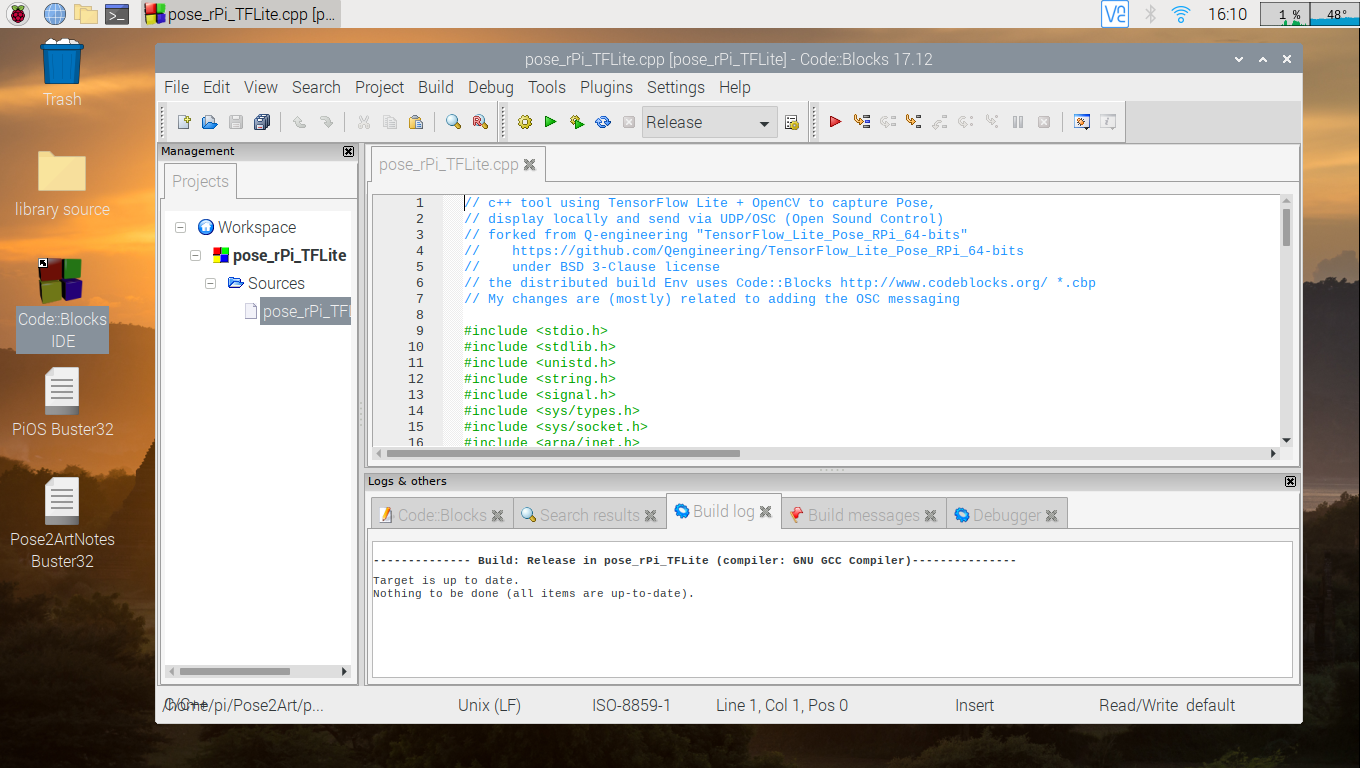

Now that we have shown the TouchDesigner Dots project working with the PC's webcam, we move on to the Raspberry Pi Smart Camera. Back in Logs 1 and 2 we set up the software and networking. There should be a folder on your rPi called Pose2Art, downloaded from my GitHub for this project. Here we are interested in the pose_rPi_TFLite files, and we will use the Code::Blocks IDE bundled into Q-engineering's OS image to view, edit, and run them.

Using the File Manager window, navigate to the Pose2Art folder and double-click the file pose_rPi_TFLite.cbp. This is the Code::Blocks project file, and opening it launches the IDE. The gear icon builds (compiles) the project; you can then run it by clicking the green > arrow in the toolbar at the top.

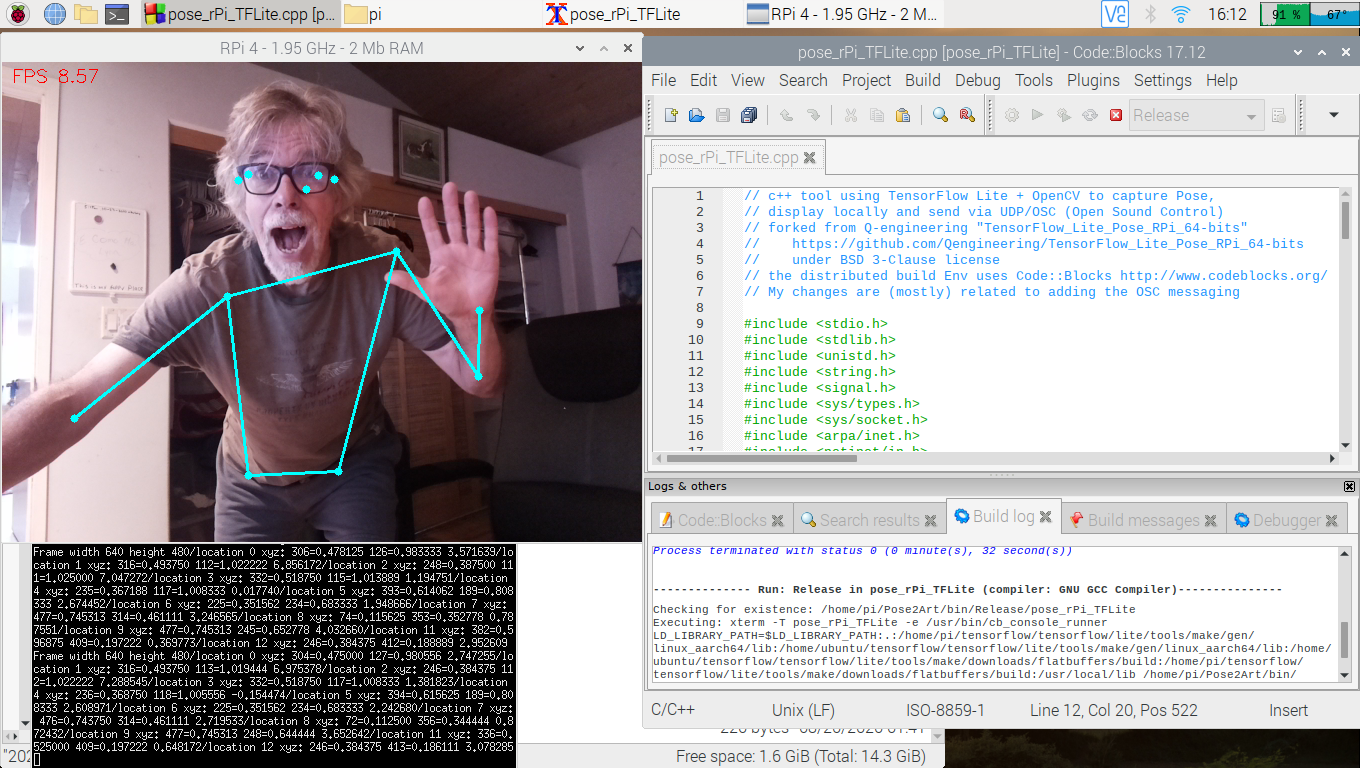

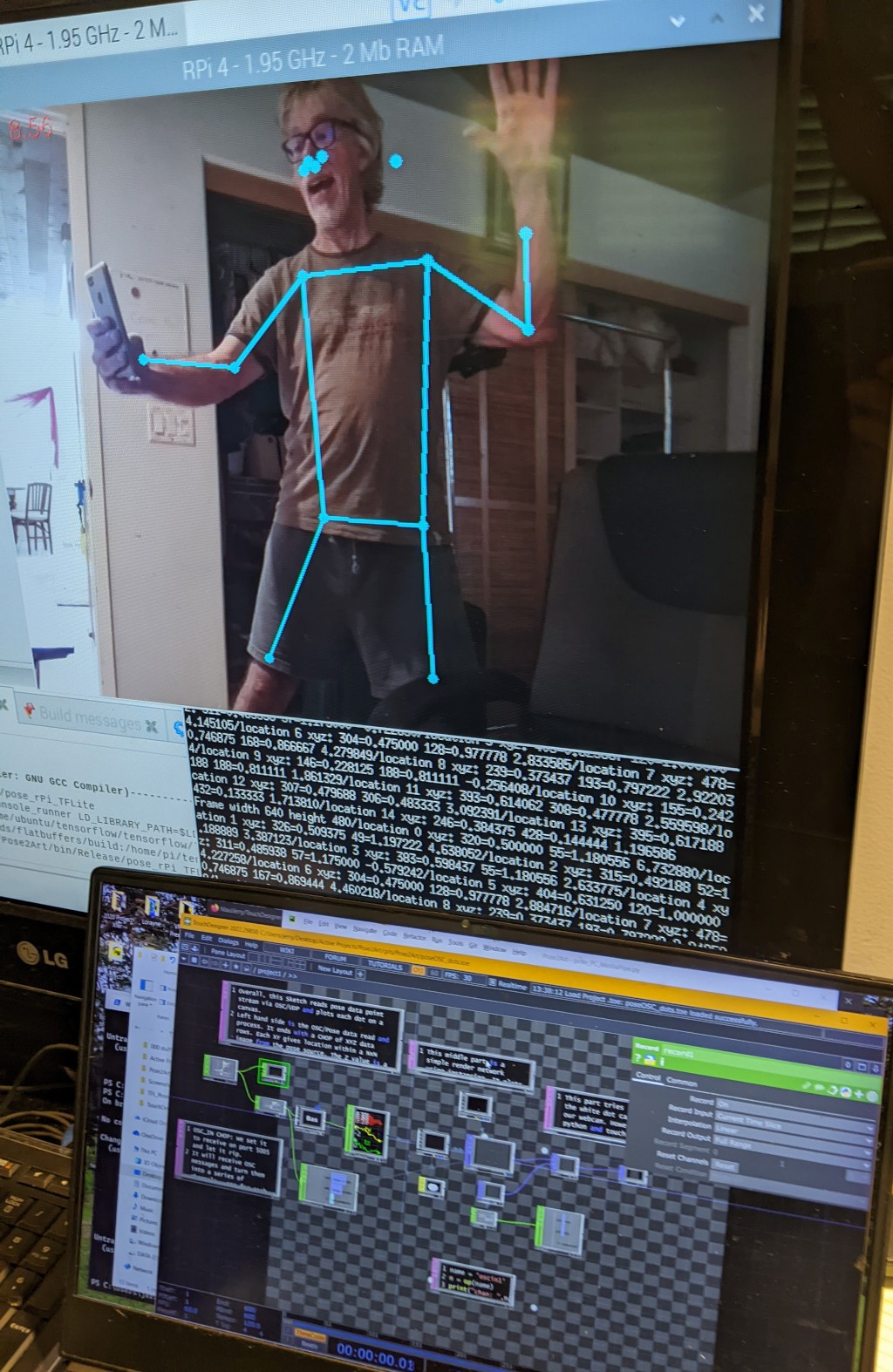

When you run the program from Code::Blocks, two new windows open. One is a console showing standard output (it prints every landmark for each frame). The other is the camera view, with the dots and skeleton drawn on top.

To exit the program, put the mouse in the camera view window and press ESC. Then press ENTER to close the output window. You will be back at the Code::Blocks window.

Note that there is also an executable, pose_rPi_TFLite, in the Pose2Art/bin/Release folder. It must be run from the folder containing the .tflite model file. If you open a terminal and navigate to the Pose2Art folder, you should be able to run the tool with:

```
$ bin/Release/pose_rPi_TFLite
```

Run this way, only one new window (the camera view) opens; the landmark output prints in the terminal itself.

The C++ code is fairly well commented. It is somewhat more complex than the Python version, which is the nature of the two languages: there is extra code to catch the ESC key, to work directly with UDP sockets, and so on. The basic flow in main() is to capture a frame, call detect_from_video(), and display the frame with some timing overlays. detect_from_video() gets the image width and height, then invokes the TFLite interpreter. The results come back in a couple of output tensors, which are used to find the location and confidence of each landmark. The function then sends the OSC messages and draws the dots and connections over the image.

If you have the rPi4 networked to the PC and the Dots TouchDesigner program running, you should see the dots in the TD render1 OP.

Jerry Isdale