Create a container:

lxc-create -t download -n container-name -- --no-validate

It prints a list of available images, then asks you to pick one with 3 prompts:

Distribution: ubuntu

Release: xenial

Architecture: amd64
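The 3 prompts can probably be skipped by handing the answers to the download template as options. A minimal sketch, assuming the template's --dist, --release and --arch options; create_container and DRY_RUN are made-up names for illustration, and by default the function only prints the command instead of running it:

```shell
#!/bin/sh
# Sketch: create a container without the interactive prompts.
# create_container and DRY_RUN are hypothetical names, not part of LXC.
create_container() {
    name=$1 dist=$2 release=$3 arch=$4
    # Build the command line in the positional parameters.
    set -- lxc-create -t download -n "$name" -- \
        --dist "$dist" --release "$release" --arch "$arch" --no-validate
    if [ "${DRY_RUN:-1}" = "0" ]; then
        "$@"        # really create the container (needs root)
    else
        echo "$*"   # default: only show what would run
    fi
}

create_container container-name ubuntu xenial amd64
```

Run it with DRY_RUN=0 as root once the printed command looks right.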

Rename a container without lxc-rename or lxc-move:

lxc-copy -R -n old-name -N new-name

To set up networking for containers, enable bridging in the kernel:

Networking support → Networking options → 802.1d Ethernet Bridging

modprobe bridge

The containers require a bridge to start; without one they fail with the error "failed to attach 'vethEFO7AL' to the bridge 'lxcbr0': Operation not permitted". Create the bridge and bring it up:

brctl addbr lxcbr0

ip link set lxcbr0 up
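Since a missing bridge only shows up as an error from lxc-start, it may help to check for it first. A small sketch, assuming the kernel exposes a bridge/ directory under /sys/class/net for every bridge device; bridge_exists is a made-up helper, and its optional second argument only exists so the check can be pointed at another directory:

```shell
#!/bin/sh
# bridge_exists NAME [SYSFS_NET_DIR]
# Succeeds when NAME is a bridge device (hypothetical helper).
bridge_exists() {
    [ -d "${2:-/sys/class/net}/$1/bridge" ]
}

if bridge_exists lxcbr0; then
    echo "lxcbr0 is present"
else
    echo "lxcbr0 is missing; create it before lxc-start"
fi
```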

Start a container:

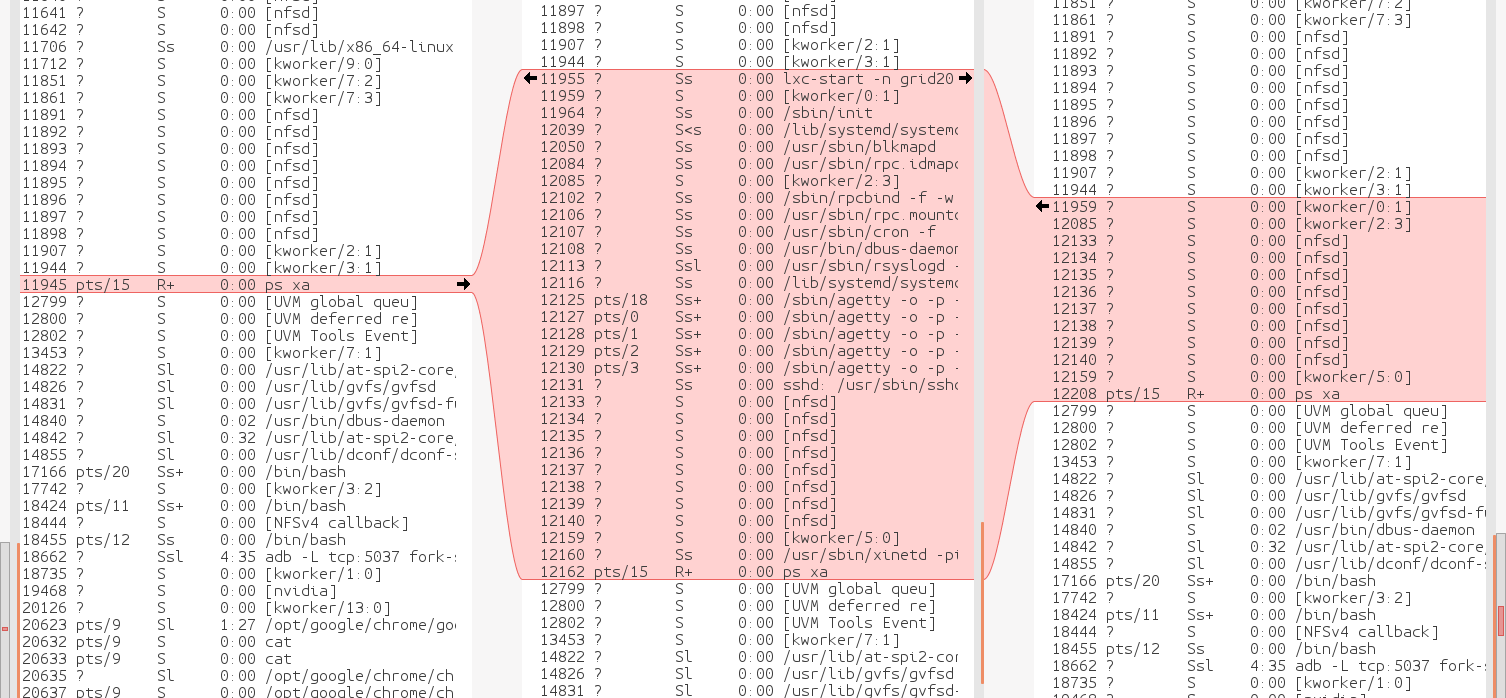

lxc-start -n name

Create a root console on the container:

lxc-attach -n name

New for Ubuntu 22: install ifconfig, which lives in the net-tools package:

apt install net-tools

For the container to access the network, unset the host's address on its physical interface (enp6s0 here):

ifconfig enp6s0 0.0.0.0

Attach the host to the bridge

brctl addif lxcbr0 enp6s0

Assign the host address to the bridge

ifconfig lxcbr0 10.0.10.25 netmask 255.255.255.0

Assign the default route to the bridge (xena is the gateway's hostname here):

route add default gw xena
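The same four host steps can also be written with iproute2, for hosts where ifconfig, brctl and route are not installed. This is only a sketch: the run helper, DRY_RUN and the variable names are made up, and GATEWAY=10.0.10.1 is an assumption standing in for the hostname xena. By default it prints the commands instead of running them:

```shell
#!/bin/sh
# Sketch of the host-side steps with iproute2.
# All names below are illustrative; GATEWAY is an assumed address for "xena".
IFACE=${IFACE:-enp6s0}
BRIDGE=${BRIDGE:-lxcbr0}
HOST_ADDR=${HOST_ADDR:-10.0.10.25/24}
GATEWAY=${GATEWAY:-10.0.10.1}

# Print the command unless DRY_RUN=0, in which case execute it.
run() {
    if [ "${DRY_RUN:-1}" = "0" ]; then "$@"; else echo "$*"; fi
}

move_host_to_bridge() {
    run ip -4 addr flush dev "$IFACE"           # unset the host's IPv4 address
    run ip link set "$IFACE" master "$BRIDGE"   # attach the NIC to the bridge
    run ip addr add "$HOST_ADDR" dev "$BRIDGE"  # give the bridge the host address
    run ip route add default via "$GATEWAY"     # default route through the bridge
}

move_host_to_bridge
```

Applying it over SSH through enp6s0 will drop the connection at the first step, so run it from a local console or from a boot script.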

Set up the container by editing the container's /etc/network/interfaces. Alas, this only applies to Ubuntu 16:

auto eth0

#iface eth0 inet dhcp

iface eth0 inet manual

On Ubuntu 22, the new dance is editing /etc/netplan/10-lxc.yaml. Change dhcp4 to false:

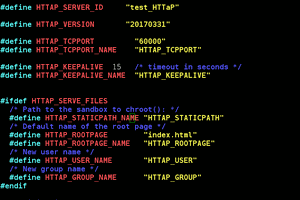
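The dhcp4 change can also be made from the host without opening an editor. A rough sketch, assuming the file contains a line reading dhcp4: true and that GNU sed is available; disable_dhcp4 is a made-up helper and the example path assumes the default /var/lib/lxc layout:

```shell
#!/bin/sh
# disable_dhcp4 FILE
# Flip "dhcp4: true" to "dhcp4: false", keeping the indentation (hypothetical helper).
disable_dhcp4() {
    sed -i 's/^\([[:space:]]*dhcp4:[[:space:]]*\)true/\1false/' "$1"
}

# Example, run as root on the host:
# disable_dhcp4 /var/lib/lxc/container-name/rootfs/etc/netplan/10-lxc.yaml
```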

In the container's configuration file /var/lib/lxc/container-name/config, add lines for the container's address & the gateway. On LXC 3.0 and later these keys are named lxc.net.0.ipv4.address & lxc.net.0.ipv4.gateway.

lxc.network.ipv4 = 10.0.10.26/24

lxc.network.ipv4.gateway = 10.0.10.1

Add a nameserver line to the container's /etc/resolvconf/resolv.conf.d/head. This too is for Ubuntu 16.

On Ubuntu 22, deleting & creating a new /etc/resolv.conf after disabling DHCP seemed to work. On Ubuntu 20-22, you can disable the systemd networking the usual way:

mv /lib/systemd/systemd-networkd /lib/systemd/systemd-networkd.bak

mv /lib/systemd/systemd-resolved /lib/systemd/systemd-resolved.bak

mv /usr/bin/networkd-dispatcher /usr/bin/networkd-dispatcher.bak

Then run all the network commands from /etc/rc.local.

Restart the container

lxc-stop -n name

lxc-start -n name

Mount a host directory in a container by adding a line to the config file

lxc.mount.entry = /grid /var/lib/lxc/grid20/rootfs/grid none bind 0 0

/grid is the host directory

/var/lib/lxc/grid20/rootfs/grid is the container mountpoint

Create the mount point with a mkdir in /var/lib/lxc/grid20/rootfs/
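The mkdir and the config line can be done in one go. A minimal sketch, assuming the container lives under an LXC directory laid out as name/rootfs and name/config, and that the mount point should have the same name as the host directory; add_bind_mount is a made-up helper:

```shell
#!/bin/sh
# add_bind_mount LXC_ROOT CONTAINER HOST_DIR
# Creates the mount point inside the rootfs and appends the matching
# lxc.mount.entry line to the container's config (hypothetical helper).
add_bind_mount() {
    rootfs="$1/$2/rootfs"
    target="$rootfs/$(basename "$3")"
    mkdir -p "$target" || return 1
    printf 'lxc.mount.entry = %s %s none bind 0 0\n' "$3" "$target" >> "$1/$2/config"
}

# Example matching the paths above, run as root on the host:
# add_bind_mount /var/lib/lxc grid20 /grid
```

Restart the container afterwards so the new entry is picked up.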

lion mclionhead
