The Hardware Side of Computation

An exploration of CPUs, GPUs, and FPGAs, and how they each perform computation

An exploration of CPUs, GPUs, and FPGAs, and how they each perform computation

To make the experience fit your profile, pick a username and tell us what interests you.

We found and based on your interests.

It is time for the third type of processor I will be covering: the graphics processing unit (GPU). This is the one about which I know the least so far, so this will be the shortest post.

Specialized chips for graphics processing have existed since the 1970s. First used in arcade games, initially they served to connect the CPU to the monitor and provide graphics capabilities in a way that didn't require lots of RAM to store the image, or constant processing by the CPU to move 2D images around onscreen. In the late 1980s and 1990s, new devices were created that could handle basic 3D graphics calculations (transform, clipping, and lighting) in hardware. Again, the first of these were found in arcade machines. In 1996 and 1997 they became available in home video game and computer systems. Modern GPUs are descended from these.

Modern GPUs can perform programmable vertex shading and pixel shading. These consist of running a short program for each geometric vertex and each pixel in the output image. To speed this up, GPUs are built with many physical processing units (called shaders), which run these short programs (also called shaders) in parallel. Early modern GPUs' pixel shaders weren't actually fully programmable; they were more like blocks in an FPGA, programmable only for a certain set of functions. This was necessary to be able to run them at high pixel clock speeds to get all of the pixels in the image prepared in time. By the early 2000s, though, hardware pixel and vertex shaders were converging and also becoming more similar to CPUs, gaining looping and floating point math abilities, while keeping (and increasing) their parallelism. This soon came to be used for bump mapping, a process that simulates a texture on a rendered surface, even though the actual surface in the 3D model is flat.

Various APIs have been created to enable easy use of GPUs for graphics in your programs. They are useful for 3D games, 3D modeling programs, et cetera. OpenGL, a popular one, was first released in 1992 and is still used widely today. DirectX, a proprietary API (really a group of APIs, only some of which are GPU-related) from Microsoft, was first released in 1995 and is also very popular today, particularly for commercial games.

Most of the calculations used in 3D graphics are based on matrices and vectors, and involve doing a great many similar, simple calculations simultaneously, so modern GPUs are highly optimized for these demands. However, 3D graphics is far from the only field where highly parallel matrix and vector math is used. Using a GPU for non-graphics applications is called general-purpose computing on graphics processing units (GPGPU). This is done by means of compute kernels (or compute shaders), which are given to the GPU in place of vertex and pixel shaders. The data is usually provided in the form of a 2D grid, because GPUs are optimized for working with data in this form. Using a GPU to run a compute kernel on a grid of data is equivalent to using a CPU to loop over the grid and perform an operation during each iteration of the loop. However, the GPU performs the operation in parallel on multiple (perhaps all of the) elements in the grid simultaneously.

It is important to note that GPGPU is only effective for problems that can be solved efficiently by stream processing. While a GPU can solve problems where the subproblems are heavily interconnected, it will be much slower at that problem than a CPU, because the parallelism doesn't help with that type of problem.

When designing algorithms for GPGPU, you should try to maximize the arithmetic intensity (the number of arithmetic operations performed per unit of data), to keep the processing from being slowed down by memory access.

While you can do GPGPU using graphics APIs or direct access to the GPU, APIs also exist that allow easier use of GPUs for GPGPU, including OpenCL and OpenMP (both of which are not GPGPU-specific; they can run on many kinds of processors),...

Read more »I had planned to post this a month ago, but things got in the way. Watch this project log entry.

An alternative to serial processing such as is done by a CPU is programmable logic. In programmable logic, one defines a circuit of logic gates that achieves a desired result and programs this into a programmable logic device (PLD). Programmable logic devices include, in reverse order of invention and power:

(The PLD type names all seem very similar and non-descriptive. I doubt anyone could tell which name corresponded to which architecture without being told.)

An FPGA is a device that, instead of having a (mostly) fixed circuit that performs computations according to a program, allows you to reconfigure its logic gates to form any circuit you want. It uses a different architecture than previous PLDs, specifically, using logic based on lookup tables (LUTs) instead of the pure gate-based logic used by the earlier PLDs. The "field-programmable" in the name simply means that it is programmable after leaving the factory, as opposed to a non-programmable logic chip, which is permanently configured at the time of manufacture.

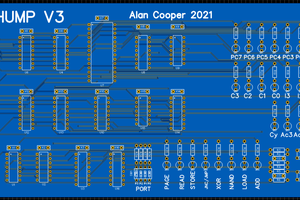

FPGAs are used in many applications, including real-time processing, glue logic, and prototyping of application-specific integrated circuits (ASICs). Originally they competed in the glue logic market with CPLDs, but they soon grew into other markets as well, adding dedicated logic circuitry such as multipliers, which enabled their use for digital signal processing (DSP). Some modern FPGAs also have integrated CPUs and RAM.

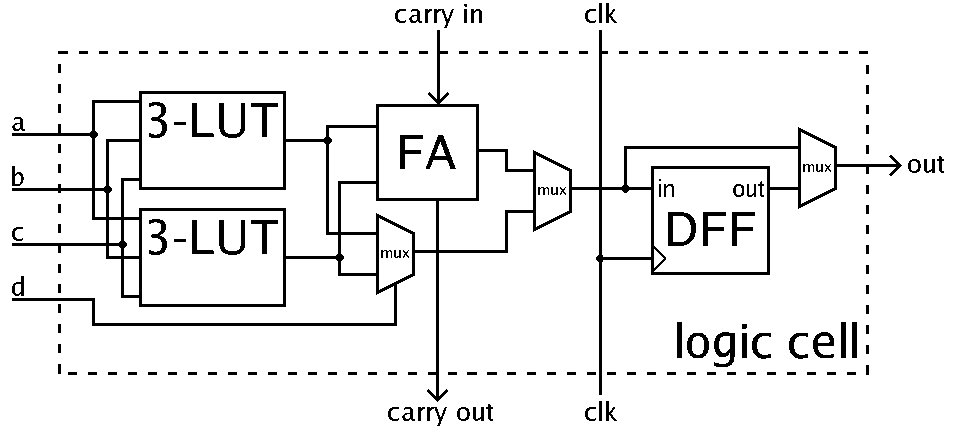

The base unit of the FPGA architecture is the logic block (aka configurable logic block, logic array block, logic element). This is a circuit that can be programmed to emulate any kind of Boolean logic gate. A diagram of a simple logic block is:

Diagram by Petter.kallstrom, public domain

Diagram by Petter.kallstrom, public domain

The basic components of the logic block are two three-input LUT or a single four-input LUT, a full adder, and a D-type flip-flop. Between these components are multiplexers, which determine the routing of the signals through the logic block.

The left mux combines the two three-input LUTs into a four-input LUT. Some modern FPGAs have an actual four-input LUT instead.

The center mux is used to switch between normal mode and arithmetic mode. Normal mode means the output of the four-input LUT is passed to the right third of the circuit, while in arithmetic mode, the outputs of the 3-input LUTs are combined by the full adder and its result goes to the right third. (The full adder and the LUT-combining mux both always operate; the middle mux just chooses which of their outputs to use.)

The right mux is used to switch between the synchronous and asynchronous output modes. In synchronous mode, it takes the output of the D-type flip-flop, which passes on and holds its input signal upon receiving a clock pulse. In asynchronous mode, it takes the output from before the flip-flop, resulting in the output not waiting for the clock signal.

Together, all of these components can be programmed to emulate any Boolean logic gate. There may be hundreds to thousands of these logic blocks in an FPGA.

Modern FPGAs also have hard blocks, which are dedicated logic circuits such as transceivers, multipliers, memories, and small CPUs. These are built in the normal way, as ASICs, instead of using logic blocks. This enables them to be much faster and more efficient, and leaves the logic blocks unoccupied for the user's circuits.

An FPGA also has switching components that connect the logic blocks to each other and to the hard blocks. The switches are individually programmable just like the logic blocks are. They are typically found between all of the logic blocks, though some modern FPGAs share switches between several logic blocks to increase speed.

The logic blocks and the switches form what is called the FPGA's fabric, in which the logic blocks are themselves programmable and are...

Read more »The central processing unit, or CPU, is a ubiquitous type of computational device these days. Every desktop and laptop computer has one (or more), of course, and so do cell phones, but they are also found in set-top boxes, TVs, modems, and cameras, just to name a few. As well, every microcontroller includes a (small) CPU, so they are also found in Arduino boards, remote controls, light timers, engine control units, and toy robots. This post will cover the basic workings of CPUs. It assumes that you have a basic familiarity with binary numbers. Throughout the post there are links to Wikipedia articles where you can read more about each subject.

The smallest unit found within a CPU is the switch. In all modern CPUs, these switches are transistors. In the past, vacuum tubes were used. You could also construct a CPU out of mechanical switches.

A switch generally has two states, on and off. Therefore, just about all CPUs work in binary, where everything is represented by ons and offs, aka ones and zeros. It is also possible (and sometimes done as a hobby project) to build a ternary CPU, where each switch can have three states, but this is not done commercially because it is less practical.

These switches are arranged into logic gates. A logic gate is a device that takes one or more input signals and produces an output based on those inputs. These implement Boolean logic, first described by George Boole in 1847, which deals with binary states, in this context called true and false. The basic Boolean logic functions are AND, OR, and NOT. AND takes two inputs, and produces a true output if and only if both of its inputs are true, producing a false output otherwise. OR takes two inputs, and produces a true output if either of its inputs is true, producing a false output only if both inputs are false. NOT takes one input, and produces the output opposite to the state of its input. The truth tables for AND, OR, and NOT are as follows:

AND

| Input 1 | Input 2 | Output |

| false | false | false |

| false | true | false |

| true | false | false |

| true | true | true |

OR

| Input 1 | Input 2 | Output |

| false | false | false |

| false | true | true |

| true | false | true |

| true | true | true |

NOT

| Input | Output |

| false | true |

| true | false |

These should be familiar to you from programming, if you have programming experience.

There are also other functions, including NAND (NOT-AND), NOR (NOT-OR), and XOR (exclusive OR). NAND and NOR are, respectively, composed of an AND or an OR with a NOT applied to its output. Everything in the output column is simply the opposite of what it is for AND and OR. XOR is similar to OR, but produces a false output when both inputs are true.

It turns out that all of the other Boolean operations can be composed of arrangements of multiple NANDs or multiple NORs. For this reason, modern digital circuits tend to use all NAND or all NOR, because this makes manufacturing easier.

As mentioned above, the electronic implementations of these functions are called logic gates. There are multiple competing systems of symbols to represent these gates, which can be seen in this image.

Another common type of circuit composed of the above described parts is the flip-flop, or latch. These circuits enable the storage of information. An example is the SR (set–reset) latch. Here is an interactive SR latch composed of individual switches: Interactive diagram by DrJolo (click link, then click on diagram to interact)

An SR latch can also be made from logic gates:

Animated diagram by Napalm Llama, used under CC-BY 2.0

Animated diagram by Napalm Llama, used under CC-BY 2.0

Several flip-flops connected together and sharing a clock form a register, which is a form of memory.

To transfer data around between different flip-flops, they need a trigger signal. This is provided by the clock. A clock in a processor is a signal that alternates between on and off at a specific frequency. (It has nothing...

Read more »

Create an account to leave a comment. Already have an account? Log In.

Become a member to follow this project and never miss any updates

Al Williams

Al Williams

Blair Vidakovich

Blair Vidakovich

agp.cooper

agp.cooper