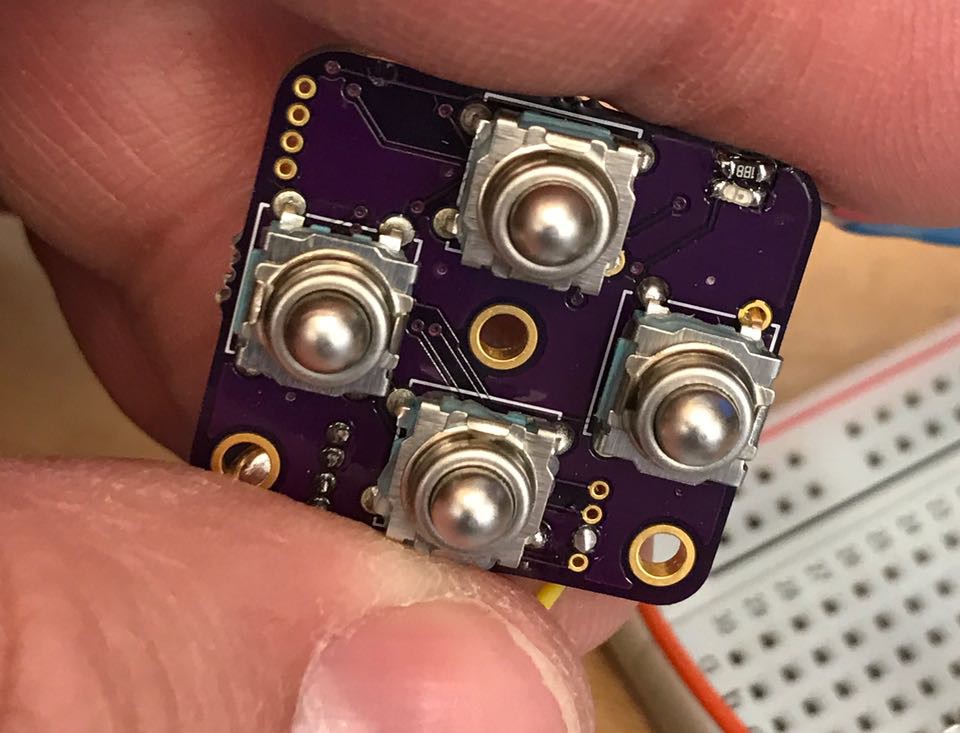

GM1201 - Metal ball tactile DPad

The second I saw these cool metal buttons on Adafruit, I knew they had to be part of my Makernet user interface. This DPad is a four-way controller with four directional buttons. Cute, eh? They are super clicky when pressed - extremely satisfying.

Unfortunately, this DPad module presented a lot of unexpected challenges. Unlike the other modules I had built, this one had clock issues that exposed some serious gaps in my understanding.

I first realized something was wrong when the output from the board's serial port was garbled. By way of explanation, my standard test jig involves GND, VCC, SWD, SWC and a TX UART pin. When I first loaded the test firmware, the status messages printed to the UART at 115200 baud came out garbled. At first, I suspected that I had somehow screwed up the SERCOM UART configuration, but loading identical firmware onto the rotary encoder board (GM1200) didn't show this problem. So it was a problem with the board, not the code.

I suspected that there was a short or some wiring issue, but I couldn't find any obvious reflection or inductance probing around with a scope. Granted, I am fairly witless when it comes to analog signal debugging, so I may not have even been using the scope properly to do this. Next, I figured that a slower baud rate would be less susceptible to noise, but even running at 1200 baud still showed the problem.

After racking my brain, I finally concluded that perhaps the clock on this particular copy of the chip might not be as stable as the one on the other board. ARM clocks are notoriously complex and arcane. The SAMD architecture has 7 different clock generators that can be routed to nearly any purpose. For instance, you can clock your chip UART one way, your peripheral bus another way, and your actual processor a third way. You can even use one clock to drive another clock in a phase-locked loop.

What is supposed to happen on the SAMD11 architecture is that your code starts running at a very slow, baseline 1 MHz speed using a very unstable boot-up clock. It has all sorts of jitter, but it's supposed to be enough to get you going. The first thing your program code is supposed to do is set up a proper clock configuration. Typically you configure two pins to be XTAL inputs and connect those to a 32 kHz crystal. 32 kHz is really slow, right? So something called a digital phase-locked loop is used to "step up" the speed to 48 MHz. Not being a signal expert, I am a bit at a loss to explain how it works, but my basic guess is that the DPLL is a much less stable but faster oscillator that is more sensitive to noise and the environment. The 32 kHz signal is used as a reference heartbeat, and the circuitry continually adjusts the speed of the DPLL to stay in step with that heartbeat, thus guaranteeing a consistent clock.
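To put rough numbers on that "step up", here is a small host-side sketch of the arithmetic. It is not firmware and says nothing about how the chip's registers are programmed. It assumes the reference is the common 32.768 kHz watch-crystal frequency (the post only says "32 kHz"), and the function names and the 2% error figure are made up for illustration.

```python
REFERENCE_HZ = 32_768      # assumed: the usual "32 kHz" watch-crystal frequency
TARGET_HZ = 48_000_000     # the 48 MHz system clock mentioned above


def nearest_multiplier(target_hz, reference_hz):
    """Whole-number ratio that gets closest to the target frequency."""
    return round(target_hz / reference_hz)


def multiplied_clock_hz(reference_hz, multiplier):
    """Output frequency once the fast oscillator is locked to the reference."""
    return reference_hz * multiplier


def error_percent(actual_hz, nominal_hz):
    """Signed deviation of actual_hz from nominal_hz, in percent."""
    return 100.0 * (actual_hz - nominal_hz) / nominal_hz


multiplier = nearest_multiplier(TARGET_HZ, REFERENCE_HZ)       # 1465
nominal_hz = multiplied_clock_hz(REFERENCE_HZ, multiplier)     # 48005120

# Hypothetical part whose 32 kHz reference runs 2% fast.
fast_reference_hz = REFERENCE_HZ * 1.02
fast_output_hz = multiplied_clock_hz(fast_reference_hz, multiplier)

print(multiplier, nominal_hz, round(error_percent(fast_output_hz, nominal_hz), 2))
```

The point of the last few lines is that the multiplication preserves the percentage error: whatever fraction the reference is off by, the 48 MHz output is off by the same fraction.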

In my architecture, I don't want an external clock or crystal. Fortunately, the Atmel chip designers included a high-precision 32 kHz internal oscillator, presumably an RC oscillator. This oscillator is factory calibrated. I had hoped this would be sufficient for all of my Makernet peripherals.

Given that the serial port didn't work with the internal clock, I was tempted to assume my factory calibration was off. I seriously considered just pulling the chip off and substituting another copy. But my experience so far on this project has taught me the value of double-checking my assumptions. I've noticed that if I force myself to learn how to debug something with the right tools and instruments, I'll gain much more insight and avoid more mistakes and frustration later.

The first tool I reached for was a Bitscope Micro. This little baby is supposed to work as both a logic probe and an oscilloscope. Over the past year, I have rapidly come to loathe it as an oscilloscope, but I had never used the logic probe side, so I was eager to try it out and redeem my $129 purchase. I rapidly discovered how sucky the software is. Slow and sluggish. I needed to calculate the actual clock rate of the chip based on its garbled signals. I figured that instead of the 1200 baud I had requested, I must be getting 1150 or 1300 or something. So if I could just set the receiver baud rate properly, I'd be able to rule out the possibility that there was some other weird hardware setup or configuration issue and confirm that the root problem was the clock.
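To put rough numbers on that guess, here is an idealized sketch of how much baud mismatch a receiver can stand. It assumes an 8N1 frame (one start bit, eight data bits, one stop bit) and a receiver that samples each bit exactly at its center, so the last sample lands 9.5 bit times after the start edge and must not drift by more than half a bit. Real UARTs oversample and lose a little more margin, so treat the threshold as optimistic. The function names are mine, not from any library.

```python
def frame_drift_bits(requested_baud, actual_baud, bits_to_last_sample=9.5):
    """Sampling drift, in bit times, at the center of the stop bit (8N1 assumed)."""
    mismatch = abs(actual_baud - requested_baud) / requested_baud
    return mismatch * bits_to_last_sample


def likely_decodes(requested_baud, actual_baud):
    """True if the idealized receiver still samples inside the stop bit."""
    return frame_drift_bits(requested_baud, actual_baud) < 0.5


# The two guesses from the text, against a receiver set to 1200 baud.
print(likely_decodes(1200, 1150))   # about 4% slow: marginal, but inside the limit
print(likely_decodes(1200, 1300))   # about 8% fast: outside the limit

# The same fractional error at a much higher rate gives the same drift.
print(frame_drift_bits(1200, 1300), frame_drift_bits(115200, 124800))
```

Under these assumptions the limit works out to a bit over 5% total mismatch. The last line also suggests why dropping from 115200 to 1200 baud would not help if the clock is the culprit: the drift depends on the percentage error, not on the baud rate itself.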

You are supposed to be able to calculate the baud rate by looking for the width of the smallest LOW pulse you can find, which should be a single bit. From there, simple division (one divided by the pulse width in seconds) should get you the baud. However, the Bitscope GUI gives you no tools to actually measure pulses. Nor is there any auto-baud tool. On top of that, I had great difficulty even figuring out how to get into the window where you configure the baud rate. Yes, the user interface is that bad.
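The division itself is simple enough to sketch. The snippet below assumes you have somehow measured the narrowest pulse in seconds and that it really is one bit wide. The pulse widths used are invented examples, not measurements from my board, and the list of standard rates is just the usual set.

```python
STANDARD_BAUDS = (300, 1200, 2400, 4800, 9600, 19200, 38400, 57600, 115200)


def baud_from_bit_width(width_seconds):
    """One bit per narrowest pulse, so baud is the reciprocal of its width."""
    return 1.0 / width_seconds


def nearest_standard_baud(baud):
    """Closest conventional baud rate to a measured value."""
    return min(STANDARD_BAUDS, key=lambda b: abs(b - baud))


def deviation_percent(measured_baud, nominal_baud):
    """How far the measured rate is from the nominal one, in percent."""
    return 100.0 * (measured_baud - nominal_baud) / nominal_baud


# Invented example: a narrowest pulse of 800 microseconds.
measured = baud_from_bit_width(800e-6)          # 1250 baud, give or take rounding
nominal = nearest_standard_baud(measured)       # 1200
print(round(measured), nominal, round(deviation_percent(measured, nominal), 1))
```

A result a few percent away from a standard rate would point at the transmitter's clock rather than at the UART configuration.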

Setting the Bitscope aside, I turned to my Rigol scope. I still have 22 hours left of UART decoding on my trial software, so I figured I'd use some of that to repeat this analysis.

Once again, I came up short. The Rigol is generally pretty intuitive, but there are a lot of basic things I have not learned yet. For instance, I have no idea how you are supposed to measure the width of a single pulse. I know how to get the average and enable the report of all sorts of cool summary data on a signal. But I don't know how to set a cursor onto something and measure it on the screen. There has to be a way, but I didn't have the time to figure it out.

On the other hand, the scope's UART decoder worked pretty well. You can set the decode speed in increments of 1 baud. But no amount of fiddling up and down from 1200 baud got me a correct decode. This was starting to disprove my clock theory. Once again, I started to wonder whether I had somehow messed up the SERCOM, or whether the chip was just bad.

Finally, I decided to just re-read the clock configuration code and see if I had missed anything. Basically, I gave up on the avenue of using instruments to diagnose something. :( In debugging my code, I realized that I was not actually loading the factory calibration into the clock's configuration. On my first, working board, the chip's oscillator must have been pretty close to perfect, so that didn't matter. But on the second copy of the chip, it wasn't. Since the 48 MHz DPLL output is passed down to the serial port hardware, everything was off.

I put in the right code, and the problem was fixed.
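For anyone wondering what "loading the factory calibration" boils down to, here is a rough host-side sketch of the bit-field arithmetic only. It is not my firmware. It assumes the calibration value is a 7-bit field at bits 44:38 of a 64-bit factory calibration word, which is my reading of the SAMD datasheets' NVM calibration table, so check the table for your exact part before trusting those constants. The example word is made up.

```python
OSC32K_CAL_POS = 38     # assumed: lowest bit of the 32 kHz oscillator calibration field
OSC32K_CAL_BITS = 7     # assumed: field width, i.e. bits 44:38


def extract_field(word, pos, width):
    """Pull `width` bits starting at bit `pos` out of an integer."""
    return (word >> pos) & ((1 << width) - 1)


def osc32k_calibration(calibration_word):
    """Factory calibration value for the internal 32 kHz oscillator (assumed layout)."""
    return extract_field(calibration_word, OSC32K_CAL_POS, OSC32K_CAL_BITS)


# Made-up 64-bit word: 0x5A in the calibration field, unrelated bits elsewhere.
example_word = (0x5A << OSC32K_CAL_POS) | 0x1234
print(hex(osc32k_calibration(example_word)))
```

On the real chip, the extracted value has to be written into the oscillator's calibration setting before the oscillator is used as a reference. Skipping that step is exactly the mistake described above.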

Jeremy Gilbert

