I'll upload a better diagram once I've worked on it this week.

The old diagram was horrible.

Vehicle-mounted camera with panoramic lens to look for emergency services and other vehicular lights.


It's been a long time - back to this bad boy....

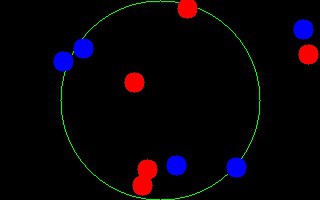

Pie slice bitmasks are doable as per my previous post.

These were generated in Tcl using the canvas, exported to PostScript, and then converted to PNG. In reality, the bitmaps are planned to be loaded as BLOBs, which will be generated whenever the resolution of the acquired images changes.

What I've done is implement Bresenham's algorithm for arcs and lines, recording each of the plotted points in an array. Given an arc (a) with radius (r), and two lines (v0 and v1) each defined by an angle (in degrees), the pie slice is generated row by row:

for each y, a horizontal line from v0(x,y) of length v1(x',y) - v0(x,y) where v1(x',y) exists, or of length a(x'',y) - v0(x,y) otherwise.

It's fast. It works on one quadrant but the other quadrants can be generated by reflections in x and y and x/y axis...
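As a rough illustration of the bitmask idea, here is a minimal Python/NumPy sketch. It is not the Tcl/Bresenham code described above: it brute-forces every pixel with a radius test and an angle test, which is tolerable only because the masks are built ahead of time. The names `pie_slice_mask`, `SIZE`, and `RADIUS`, and the 101-pixel image size, are made up for the example. The reflections are read here as mirrors about the vertical and horizontal axes through the centre, which is an assumption about what "x/y axis" means.

```python
import numpy as np

def pie_slice_mask(size, radius, start_deg, end_deg):
    """Boolean mask: True inside the circle and at an angle in [start_deg, end_deg)."""
    centre = (size - 1) / 2.0
    rows, cols = np.mgrid[0:size, 0:size]
    dx = cols - centre
    dy = centre - rows                    # image rows grow downward, so flip y
    angle = np.degrees(np.arctan2(dy, dx)) % 360.0
    in_circle = dx * dx + dy * dy <= radius * radius
    return in_circle & (angle >= start_deg) & (angle < end_deg)

SIZE, RADIUS = 101, 50                    # assumed values, not from the project

# One 30-degree slice built in the first quadrant...
slice_q1 = pie_slice_mask(SIZE, RADIUS, 30, 60)
# ...and its mirror images in the other three quadrants.
slice_q2 = np.fliplr(slice_q1)              # mirror left/right
slice_q4 = np.flipud(slice_q1)              # mirror up/down
slice_q3 = np.flipud(np.fliplr(slice_q1))   # mirror both ways

# Twelve "hour" masks that together cover the whole disc.
hour_masks = [pie_slice_mask(SIZE, RADIUS, h * 30, (h + 1) * 30) for h in range(12)]
```

Using an odd `SIZE` puts the centre on a pixel, so the flips are exact reflections. Pixels that sit exactly on a slice boundary can land in a different neighbour after mirroring, because the angle range is half-open.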

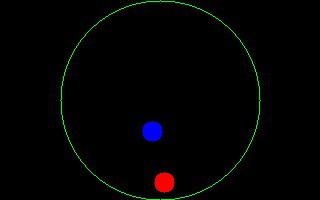

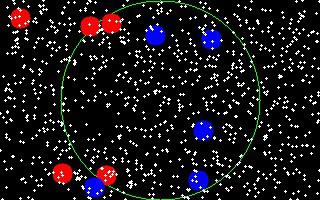

I simplified the maths behind matching the temporal and spatial positions of the lights. How? First, a quick check for light data in each quadrant of the circular image; then a rotating check of a bitmask for each of the "hours", followed by comparison and confirmation. This should prove faster than calculating any trig functions. The bitmasks can be defined well in advance and loaded into memory for comparison. This is a good thing. I'll have pictures later that will make more sense.
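The two-stage check could look something like the following Python/NumPy sketch. The function names `lit_quadrants` and `lit_hours`, the `min_pixels` parameter, and the idea of a pre-thresholded boolean `lit` image are all assumptions for illustration; the confirmation step is not shown. The demo uses four toy corner masks in place of the twelve "hour" masks.

```python
import numpy as np

def lit_quadrants(lit):
    """Quick check: which quadrants of a boolean 'lit' image contain any light?"""
    rows, cols = lit.shape
    mid_r, mid_c = rows // 2, cols // 2
    return {
        "top_left": bool(lit[:mid_r, :mid_c].any()),
        "top_right": bool(lit[:mid_r, mid_c:].any()),
        "bottom_left": bool(lit[mid_r:, :mid_c].any()),
        "bottom_right": bool(lit[mid_r:, mid_c:].any()),
    }

def lit_hours(lit, hour_masks, min_pixels=1):
    """Step through precomputed masks; return indices of those that overlap lit pixels."""
    if not any(lit_quadrants(lit).values()):
        return []                         # nothing lit anywhere, skip the mask loop
    return [i for i, mask in enumerate(hour_masks)
            if np.count_nonzero(lit & mask) >= min_pixels]

# Toy demo: four 2x2 corner masks stand in for the twelve "hour" masks.
masks = []
for r0, c0 in [(0, 0), (0, 2), (2, 0), (2, 2)]:
    m = np.zeros((4, 4), dtype=bool)
    m[r0:r0 + 2, c0:c0 + 2] = True
    masks.append(m)

lit = np.zeros((4, 4), dtype=bool)
lit[0, 3] = True                          # one bright pixel, top-right corner
print(lit_quadrants(lit)["top_right"])    # True
print(lit_hours(lit, masks))              # [1]
```

The comparison itself is a bitwise AND and a count, so no trig is needed once the masks exist.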

I found that the one cheap webcam that I was using had some problems with reliability so I bought another cheap one. The funny part was when I was at the store, the conversation went like this:

Store guy: "Um would you like the replacement warranty for $3.00?"

Me: "Oh goodness no - I'm voiding any and all warranties when I get home with this device. Possibly some provincial laws, a sacrifice to Ba'al, and certainly any number of other statutes will be broken as soon as I get this device out of the packaging."

Store guy: "Um cool - but we'll still replace it..."

Me: "Um no thanks."

Store guy: "What are you doing with it?"

Me: "I'm building a machine-vision device to assist with looking for police cars that are actively in pursuit of a vehicle or have pulled someone over."

Store guy: "So you're really not interested in the warranty are you?"

Me: "No."

I got it home, gutted it, freed the USB cable from the camera and reattached it through a more convenient hole. I then attached it to the harness and took it out for a spin. Now I know what I'm working with when it comes to images.

Road tests!!!!!!

Late night:

Morning (with a lot of sunlight):

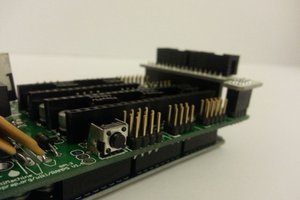

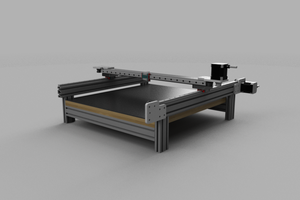

The camera test harness is built - I just need to attach the camera to the base unit and adjust it to centre it, and then I should be in shape to start acquiring images. This project has highlighted a need for a drill press, table saw, and a T-square. Oh, I made the mirror-mast hole by drilling multiple holes and then nearly starting a fire with a Dremel tool. Hehehehehehehehe....

Yes the little Lego guy can see everything now. And it's awesome!

Holy saturation, Batman!!!!

These were taken with a Kodak camera from the Terry Fox overpass at 5:00(ish), zoomed in from a distance of about 500 m. There's no detail, but it is an example of how the camera could work at night - there's a lot of saturation, but I can work with this and simulate my test images better.

I think I'll go over to the OPP detachment and see if I can't get access to a car and lights to do some measurements with real lights. I'll do so with the camera that I'm planning on using as well as the conical mirror.

I generated some experimental test patterns thanks to OpenCV - just so I could move forward until I acquired some images of police lights in action.

I went out this morning to capture police lights but to my dismay there were no accidents/collisions or other traffic offenders. I did come up with two useful ideas while just out of the house:

1) I need to generate test patterns until I can get pictures of police cars for my own development process;

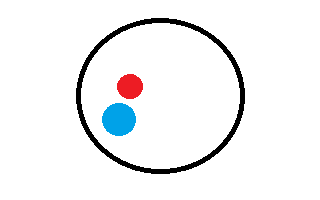

a) 320x240/200 images with blue and red blurry circles applied close to each other, not so close to each other, obscured, blurred, etc.;

b) filter green components out;

c) split out red and blue planes;

d) check for red/blue within the circular Region of Interest (ROI)

2) I realized that the temporal component of watching for lights could be taken care of by overlaying multiple images onto a "working" frame that's comprised of 10 or so (TBD) images:

a) accumulate images into a queue and overlay the 1-10 images into a "working" frame that I can examine for the blue and red lights within a particular ROI on the image.

b) new images arriving will be added to the end of the queue while older images are pushed out.
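Both ideas can be tried together in a small Python/NumPy sketch. It uses plain NumPy rather than OpenCV calls, fakes the blur with a linear falloff, stores frames in RGB order, and overlays the queue with a per-pixel maximum. All of those are assumptions made for illustration, as are the function names, the blob positions, the ROI, and the threshold.

```python
from collections import deque
import numpy as np

HEIGHT, WIDTH = 240, 320      # frame size from the list above
QUEUE_LEN = 10                # "10 or so (TBD)"

def add_blob(frame, channel, cx, cy, radius):
    """Paint a soft-edged circle into one colour plane (0=R, 1=G, 2=B here)."""
    rows, cols = np.mgrid[0:HEIGHT, 0:WIDTH]
    dist = np.hypot(cols - cx, rows - cy)
    glow = np.clip(1.0 - dist / radius, 0.0, 1.0) * 255   # cheap stand-in for a blur
    frame[..., channel] = np.maximum(frame[..., channel], glow.astype(np.uint8))

def make_test_frame(red_on, blue_on):
    """Synthetic frame with a red and/or blue blurry circle near the middle."""
    frame = np.zeros((HEIGHT, WIDTH, 3), dtype=np.uint8)
    if red_on:
        add_blob(frame, 0, 150, 120, 12)
    if blue_on:
        add_blob(frame, 2, 175, 120, 12)
    return frame

def circular_roi(cx, cy, radius):
    """Boolean mask for a circular region of interest."""
    rows, cols = np.mgrid[0:HEIGHT, 0:WIDTH]
    return (cols - cx) ** 2 + (rows - cy) ** 2 <= radius ** 2

def red_and_blue_in_roi(image, roi, threshold=128):
    """Split out the red and blue planes (green is ignored) and test them inside the ROI."""
    red, blue = image[..., 0], image[..., 2]
    return bool((red[roi] >= threshold).any()), bool((blue[roi] >= threshold).any())

# Alternate red and blue flashes; the deque drops the oldest frame once it is full.
queue = deque(maxlen=QUEUE_LEN)
for i in range(QUEUE_LEN):
    queue.append(make_test_frame(red_on=(i % 2 == 0), blue_on=(i % 2 == 1)))

working = np.maximum.reduce(list(queue))      # overlay: brightest value per pixel
roi = circular_roi(160, 120, 40)
print(red_and_blue_in_roi(queue[-1], roi))    # (False, True): a single frame
print(red_and_blue_in_roi(working, roi))      # (True, True): the overlaid frame
```

No single frame shows both colours, but the working frame does, which is the point of the overlay. A maximum keeps short flashes from being averaged away; a sum or mean would be an equally valid choice to experiment with.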

Yay - I took some sample shots of a red LED reflected by the conical mirror and into the camera. I'll obviously have to do up some other stuff, but it makes sense to mount the system in a temporary form and try capturing some live images. I know where there are a lot of police cars by a construction site on the Queensway. Sounds like a field trip for tonight.

I was driving home from Trivia last night and had the opportunity to be stuck in traffic due to construction. An ambulance had to come zipping along with its lights flashing and I thought about how the lights would look in my system. It was then that I realized that I didn't need to care about dewarping the image - that was just me thinking like a squishy bag of liquids and gasses again. Whew. I'll draw my thoughts later...

https://github.com/glebite/occiferBob
