-

Testing Visioneer's phase I design with software implementation

09/04/2017 at 00:19 • 0 comments

Testing Visioneer's phase I design implemented with OpenCV in cross traffic.

https://www.youtube.com/watch?v=74L4lV5V-yQ&feature=youtu.be

Visioneer's phase II design will focus on improving traffic detection and accessibility with neural nets, machine learning, deep learning, or other open-source AI frameworks.

The Pi Zero doesn't have the hardware to support sophisticated neural nets, so we are designing custom datasets that will be minimal in size and complexity. We are also optimizing OpenCV to maximize the camera FPS. TinyYOLO/Darknet is our current open-source choice of local neural net, but we also have a Movidius (Myriad 2) USB stick ($79), which can process Caffe 1 networks at around 100 GFLOPS while using only 1 W of power.
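As a rough sanity check on those figures, the sketch below estimates an upper bound on inference frame rate from accelerator throughput. The 100 GFLOPS and 1 W values come from the text above. The 7 GFLOP per frame workload and the function names are hypothetical placeholders, not measurements from this project, so substitute the cost of whichever network is actually deployed.

```python
def max_fps(accelerator_gflops: float, gflop_per_frame: float,
            utilization: float = 1.0) -> float:
    """Upper bound on frames per second if compute is the only bottleneck.

    utilization is the fraction of peak throughput actually achieved
    (1.0 is the ideal case; real workloads usually reach less).
    """
    if gflop_per_frame <= 0:
        raise ValueError("gflop_per_frame must be positive")
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    return accelerator_gflops * utilization / gflop_per_frame


def joules_per_frame(power_watts: float, fps: float) -> float:
    """Energy spent on each frame at a given power draw and frame rate."""
    if fps <= 0:
        raise ValueError("fps must be positive")
    return power_watts / fps


if __name__ == "__main__":
    PEAK_GFLOPS = 100.0      # figure quoted in the text
    POWER_W = 1.0            # figure quoted in the text
    GFLOP_PER_FRAME = 7.0    # ASSUMPTION: hypothetical network cost per frame

    fps = max_fps(PEAK_GFLOPS, GFLOP_PER_FRAME)
    print(f"ideal upper bound: {fps:.1f} FPS")
    print(f"energy per frame:  {joules_per_frame(POWER_W, fps):.3f} J")
```

The result is only a ceiling: camera capture, USB transfer, and pre- and post-processing on the Pi Zero would all lower the frame rate seen in practice.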


-

Assembly of 3D printed parts (v1.0)

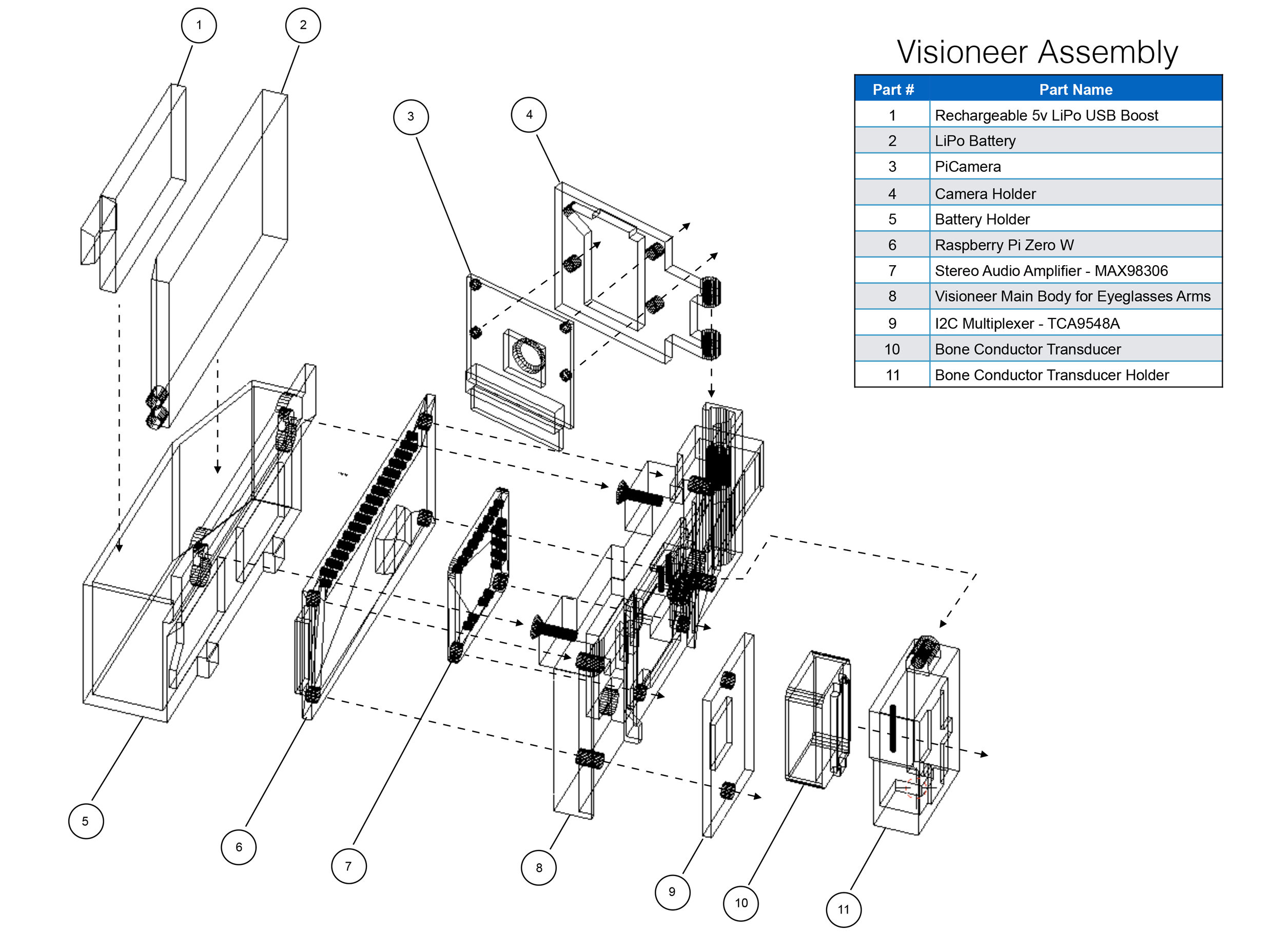

08/30/2017 at 00:22 • 0 comments

Unfortunately, we found that the glasses and headband design was not comfortable to wear, so we decided to go with a design that incorporates 3D printed modular parts that snap onto existing sunglasses. This design is much more comfortable, and we think it will be more appealing to potential users.

Here is an assembly diagram with the 3D printed parts.


-

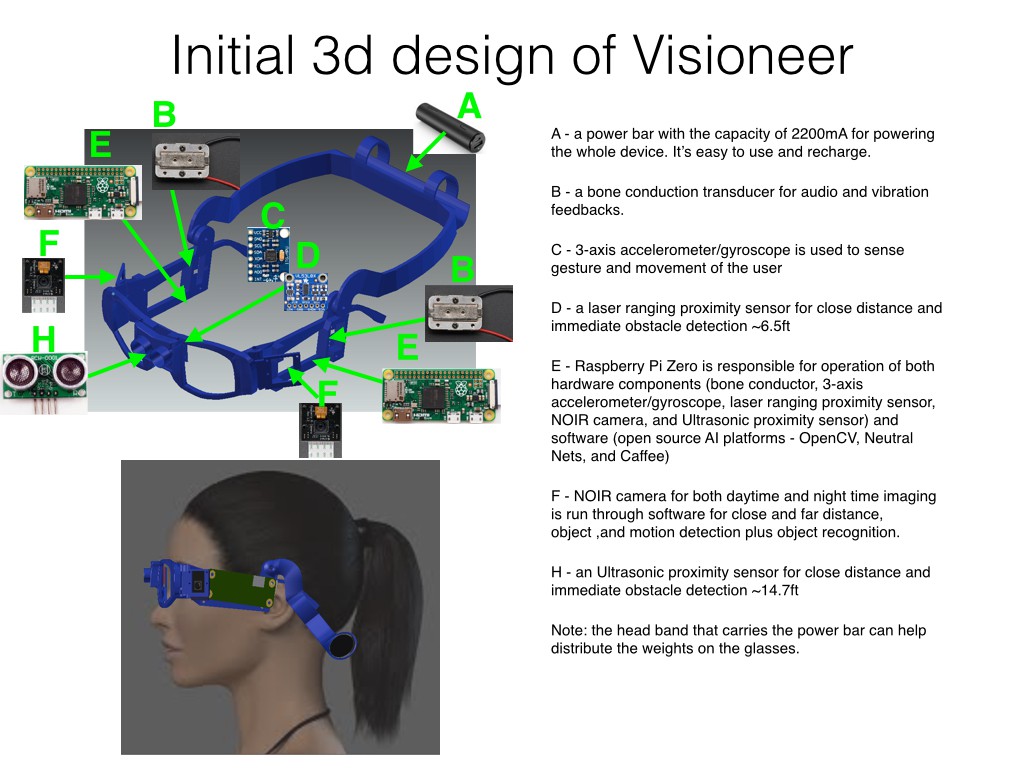

Initial 3D design and 3D prints

08/18/2017 at 23:04 • 0 comments

We considered several design options for Visioneer, such as a bracelet, necklace/pendant, pin, glasses, headband, and assistive cane attachment. Each option has pros and cons. In the end we narrowed it down to a design involving both glasses and a headband. The glasses allow the user to "look around" for traffic and provide a good height for obstacle avoidance. The headband will hold the power source and help balance the overall weight. This design also allows us to use a bone conduction transducer, which provides the best audio signal reception and vibration feedback.

Based on the component list, the schematic, and the results of the camera experiment, we've come up with the following initial 3D design.


We 3D printed the glasses frame and headband to test for comfort and wearability.

-

Exploration and Experiment

08/16/2017 at 18:20 • 0 comments

We've explored the recognition capabilities of Google Vision and OpenCV. We decided to go with OpenCV for our phase I design because of its movement detection capability. Google Vision remains an option for recognition, but we intend to try local neural nets first to eliminate the dependency on an outside service.

Recognizing a label with Google Vision when it is less than 20 cm away.

Using OpenCV to detect motion, speed, and position within a selected area of a frame.
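The idea of detecting motion, position, and speed inside a selected area can be sketched with simple frame differencing. This is an illustration only, not the project's actual code: it is written in plain Python on small grayscale grids so that it runs without dependencies, and every name in it (`motion_centroid`, `speed_px_per_s`, `make_frame`, the threshold of 25) is a hypothetical choice. With OpenCV, the same steps would typically use calls such as `cv2.absdiff`, `cv2.threshold`, and `cv2.moments`.

```python
import math
from typing import List, Optional, Tuple

Frame = List[List[int]]            # grayscale image as rows of 0-255 values
Roi = Tuple[int, int, int, int]    # selected area as (x, y, width, height)
Point = Tuple[float, float]


def motion_centroid(prev: Frame, curr: Frame, roi: Roi,
                    threshold: int = 25, min_pixels: int = 1) -> Optional[Point]:
    """Return the centroid of pixels that changed inside the ROI, or None.

    A pixel counts as "moving" when its brightness differs between the two
    frames by more than `threshold`. The centroid therefore lies between the
    object's old and new positions.
    """
    x0, y0, w, h = roi
    sum_x = sum_y = count = 0
    for y in range(y0, y0 + h):
        for x in range(x0, x0 + w):
            if abs(curr[y][x] - prev[y][x]) > threshold:
                sum_x += x
                sum_y += y
                count += 1
    if count < min_pixels:
        return None
    return (sum_x / count, sum_y / count)


def speed_px_per_s(c0: Point, c1: Point, fps: float) -> float:
    """Speed in pixels per second from two consecutive motion centroids."""
    return math.hypot(c1[0] - c0[0], c1[1] - c0[1]) * fps


def make_frame(width: int, height: int, block_x: int, block_y: int,
               block_size: int = 2) -> Frame:
    """Synthetic test frame: black background with one bright square."""
    frame = [[0] * width for _ in range(height)]
    for y in range(block_y, block_y + block_size):
        for x in range(block_x, block_x + block_size):
            frame[y][x] = 255
    return frame


if __name__ == "__main__":
    # A bright block moving 3 pixels to the right per frame.
    frames = [make_frame(10, 6, bx, 2) for bx in (1, 4, 7)]
    full_frame: Roi = (0, 0, 10, 6)
    c_a = motion_centroid(frames[0], frames[1], full_frame)
    c_b = motion_centroid(frames[1], frames[2], full_frame)
    print("positions:", c_a, c_b)
    if c_a and c_b:
        print("speed (px/s at 30 FPS):", speed_px_per_s(c_a, c_b, fps=30.0))
```

Restricting the loops to the selected area keeps the per-frame cost proportional to the ROI size rather than the full frame, which matters on a device as constrained as the Pi Zero.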

Google Vision and Speech API via Asus TinkerBoard

We also ran an experiment to determine how many cameras should be used and which angle is best for traffic detection. The conclusion was that two cameras, each at a 60 degree deflection, are best for traffic detection. The configurations we tested were:

1. One camera, forward facing, no deflection

2. Two cameras, forward facing, no deflection

3. Two cameras, 15 degree deflection

4. Two cameras, 30 degree deflection

5. Two cameras, 45 degree deflection

6. Two cameras, 60 degree deflection

7. Two cameras, 90 degree deflection (side facing)
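To make the geometry of the two-camera configurations concrete, here is a minimal sketch that computes how much of the forward view is shared, how much is missed, and how wide the total span is. It assumes "deflection" means each camera is rotated outward from straight ahead by that angle (consistent with 90 degrees being side facing). The per-camera horizontal field of view is not stated in the log, so the 62.2 degree value used in the demo is an assumption, and all names are hypothetical.

```python
from typing import NamedTuple


class Coverage(NamedTuple):
    overlap_deg: float   # forward region seen by both cameras
    gap_deg: float       # forward region seen by neither camera
    span_deg: float      # angle between the two outermost view edges


def two_camera_coverage(fov_deg: float, deflection_deg: float) -> Coverage:
    """Horizontal coverage of two cameras mirrored about straight ahead.

    Each camera sees from (deflection - fov/2) to (deflection + fov/2),
    measured from the forward direction on its own side.
    """
    if not 0 < fov_deg <= 180:
        raise ValueError("fov_deg must be in (0, 180]")
    if not 0 <= deflection_deg <= 90:
        raise ValueError("deflection_deg must be in [0, 90]")
    inner_edge = deflection_deg - fov_deg / 2   # negative: view crosses center
    outer_edge = deflection_deg + fov_deg / 2
    return Coverage(
        overlap_deg=max(0.0, -2 * inner_edge),
        gap_deg=max(0.0, 2 * inner_edge),
        span_deg=2 * outer_edge,
    )


if __name__ == "__main__":
    ASSUMED_FOV_DEG = 62.2   # ASSUMPTION: not given in the project log
    for deflection in (0, 15, 30, 45, 60, 90):
        c = two_camera_coverage(ASSUMED_FOV_DEG, deflection)
        print(f"{deflection:>2} deg: overlap {c.overlap_deg:5.1f}, "
              f"gap {c.gap_deg:5.1f}, span {c.span_deg:5.1f}")
```

The sketch captures the trade-off the experiment explores: larger deflections widen the span toward the sides, where cross traffic approaches from, at the cost of shared or even any coverage straight ahead. It does not model detection quality, which the experiment measured directly.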

Visioneer

AI glasses that provide traffic information and obstacle avoidance for the visually impaired.

MakerVisioneer
