-

Of big hammers and putting them down

10/24/2017 at 19:09 • 4 comments

In the last update, I found that the Raspberry Pi USB device boot doesn't like waiting, and with some small changes to usbboot I was able to prevent any of the nodes from timing out when they're all powered up at the same time.

Now there's another problem: at random, usbboot fails to send the first stage "bootcode.bin" to the Pi Zero, which causes all future transfers to that Zero to fail until it's power cycled. I spent a couple of late nights trying to figure this out. Is it because the ROM bootloader has some other timing constraint (not sure)? Is there a signal integrity or power problem with my board (maybe)? Are the hub ports going into a low power mode, angering the ROM bootloader (nope)?

The only thing I'm sure of that fixes it is a power cycle.

So in the spirit of inelegance that defines this project, I modified usbboot to cycle power on any slot that fails on a transaction involving bootcode.bin.

And it's not that inelegant either. Because I'm using the USB standard for in-band power control of hub ports, I can traverse up the USB tree from the current Pi's USB device that failed to find the hub. Once I have a handle for the hub, I send a message to the hub requesting that power be turned off and on to the Pi's port. When the Pi resets, it re-enumerates on the USB bus and is detected again by usbboot.
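For the curious, here is a minimal Python sketch of that off/on request. It is not the actual change to usbboot (which is C on top of libusb); it only shows the two standard USB hub class requests involved, ClearPortFeature(PORT_POWER) and SetPortFeature(PORT_POWER). The `hub` object is assumed to expose a pyusb-style `ctrl_transfer()` method, and `cycle_port_power` and the one second off time are made up for illustration.

```python
import time

# Standard USB 2.0 hub class request fields.
HUB_PORT_REQUEST = 0x23  # bmRequestType: host-to-device, class, recipient "other" (a port)
CLEAR_FEATURE = 0x01     # bRequest
SET_FEATURE = 0x03       # bRequest
PORT_POWER = 8           # wValue: feature selector for port power


def cycle_port_power(hub, port, off_seconds=1.0, sleep=time.sleep):
    """Turn power to one downstream hub port off, wait, then turn it back on.

    `hub` is any object with a pyusb-style ctrl_transfer() method (assumption).
    `port` is the 1-based port number on that hub.
    `off_seconds` is a guess, not a measured requirement.
    """
    # ClearPortFeature(PORT_POWER): cut power to the port.
    hub.ctrl_transfer(HUB_PORT_REQUEST, CLEAR_FEATURE, PORT_POWER, port)
    sleep(off_seconds)
    # SetPortFeature(PORT_POWER): restore power so the Pi re-enumerates.
    hub.ctrl_transfer(HUB_PORT_REQUEST, SET_FEATURE, PORT_POWER, port)
```

With pyusb, the hub and port for a failed device could plausibly come from the device's `parent` and `port_number` attributes, but that wiring is left out here since it needs real hardware to try.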

-

Improved terribleness

10/22/2017 at 18:53 • 0 comments

The problems that I saw with usbboot seem to be a result of it not being designed for booting multiple targets at once. rpiboot would end up serving bootcode.bin to all of the nodes at once, then serve the complete set of files to each node one at a time until no more Broadcom ROM boot devices are found on the bus. This would be fine, except that the Pi seems to hang a certain amount of time after receiving bootcode.bin if the transfers haven't completed. Since the failing node seems to die in the middle of a file transfer, I suspect that it's a watchdog timer or maybe a bug in one of the binaries involved in boot (bootcode.bin or start_cd.elf).

Whatever the root cause, it goes away as long as usbboot services only one node at a time. I came up with a fix that prevents rpiboot from talking to any other Broadcom devices in USB boot mode until it has finished booting the current device. Once it sends bootcode.bin successfully to one device, it will ignore other devices when scanning for the second stage boot device until it has completed serving files to the current device. A timeout prevents it from hanging indefinitely in the case where the current device fails to re-enumerate after bootcode.bin is sent.
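The one-node-at-a-time rule with a timeout can be sketched as a small lock. This is an illustration in Python, not the real patch: the class name, the default timeout, and the idea of identifying a node by its hub port path (like "1-1.2") are all assumptions.

```python
import time


class BootSerializer:
    """Let only one node at a time through the two-stage USB boot."""

    def __init__(self, timeout_s=10.0, clock=time.monotonic):
        self.timeout_s = timeout_s  # hypothetical value, tune on real hardware
        self.clock = clock
        self.current = None         # port path of the node being booted
        self.deadline = None

    def may_service(self, port, stage):
        """Return True if the scanner should talk to this device now.

        stage 1 = waiting for bootcode.bin, stage 2 = re-enumerated file client.
        """
        now = self.clock()
        # Give up on a node that never came back after bootcode.bin.
        if self.current is not None and now > self.deadline:
            self.current = None
        if self.current is None:
            if stage != 1:
                return False  # assumption: only a stage 1 device starts a boot
            self.current = port
            self.deadline = now + self.timeout_s
            return True
        # Locked: ignore everything except the node already in progress.
        return port == self.current

    def finished(self, port):
        """Release the lock once the node has received all of its files."""
        if port == self.current:
            self.current = None
```

The scan loop would call `may_service()` for every matching device it sees and `finished()` when the file server for the current node exits.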

I think I have it working reliably now, but it will take some overnight testing to prove it works.

A better solution, perhaps for the future, would be to make usbboot multithreaded, spawning a handler thread for each ROM boot target. This should be straightforward since the USB IDs are different for a Pi waiting for bootcode.bin (2763) and one that is re-enumerated after bootcode.bin executes (2764). This should also speed up the boot process.
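As a rough shape for that idea, here is a Python sketch of one handler thread per target. usbboot itself is C, so this only shows the structure; `boot_all` and the `boot_one(port)` callback (which would do the whole bootcode.bin plus file serving sequence for one node) are hypothetical names.

```python
import threading


def boot_all(ports, boot_one):
    """Run boot_one(port) in its own thread for each port; return outcomes by port."""
    results = {}
    lock = threading.Lock()

    def worker(port):
        try:
            outcome = boot_one(port)
        except Exception as exc:
            # Record the failure instead of losing it with the thread.
            outcome = exc
        with lock:
            results[port] = outcome

    threads = [threading.Thread(target=worker, args=(p,)) for p in ports]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    return results
```

Whether this actually speeds things up depends on how well the USB stack and the hub cope with concurrent transfers, which this sketch does not address.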

-

Terribly unreliable

10/20/2017 at 20:08 • 0 comments

One problem that's plagued the project is the USB boot process. The fourth node almost always fails to boot on the first try.

Terrible Cluster relies on the rpiboot utility from the Raspberry Pi Foundation to serve the bootloader and kernel to each of the compute nodes. rpiboot first watches for a USB device to be plugged in matching a Zero in USB device boot mode. It then sends down the first stage bootloader "bootcode.bin". The first stage begins executing, recognizes that it was booted from USB, and then reinitializes the USB controller in device mode. rpiboot notices that the device has re-enumerated under a different device ID, then it enters a USB fileserver mode.

At this point, bootcode.bin goes through the same process of loading executables and config files as when booting from an SD card: start_cd.elf, config.txt, kernel.img, etc. But instead of fetching the files from SD, it requests them from the USB host. rpiboot sits in a loop waiting for file requests and sending files to the device until it receives a message saying that the device is done, or a transaction with the device fails.
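That serving loop boils down to something like the Python sketch below. It is not rpiboot's code or wire format: `next_request` and `send` are hypothetical stand-ins for the real USB transfers, and answering a missing file with an empty payload is an assumption.

```python
def serve_boot_files(next_request, send, files):
    """Answer file requests until the device says it is done.

    next_request() returns ("read", filename) or ("done", None), and raises
    OSError when a USB transaction fails (hypothetical interface).
    send(data) pushes bytes to the device (hypothetical interface).
    files maps filenames to their contents.
    Returns True if the device reported done, False on a failed transaction.
    """
    while True:
        try:
            command, name = next_request()
            if command == "done":
                return True
            # Assumption: a file we don't have is answered with an empty payload.
            send(files.get(name, b""))
        except OSError:
            return False
```

The two exits match the two ways the loop ends in the description above: the device says it is done, or a transaction with it fails.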

This works fine for me when I'm booting one Pi at a time. However, when I am booting all four Pi Zero nodes at once it often fails when sending the kernel to the fourth node that it boots. rpiboot does not work through the nodes in a fixed order; it sends bootcode.bin to the first USB device it finds with a matching bootloader vendor/device ID (VEN/DEV). It is always the fourth one that fails, and it does not appear to favor a particular slot. Once a node finishes loading all of the required files over USB, it boots reliably. So this has everything to do with the USB boot process, and not the OS.

If I can't trust rpiboot to sit in the background and serve files to booting nodes, then this isn't going to be a useful system for learning about software deployment. Sounds like a good debugging project for the weekend...

-

Many OpenSCAD lines later...

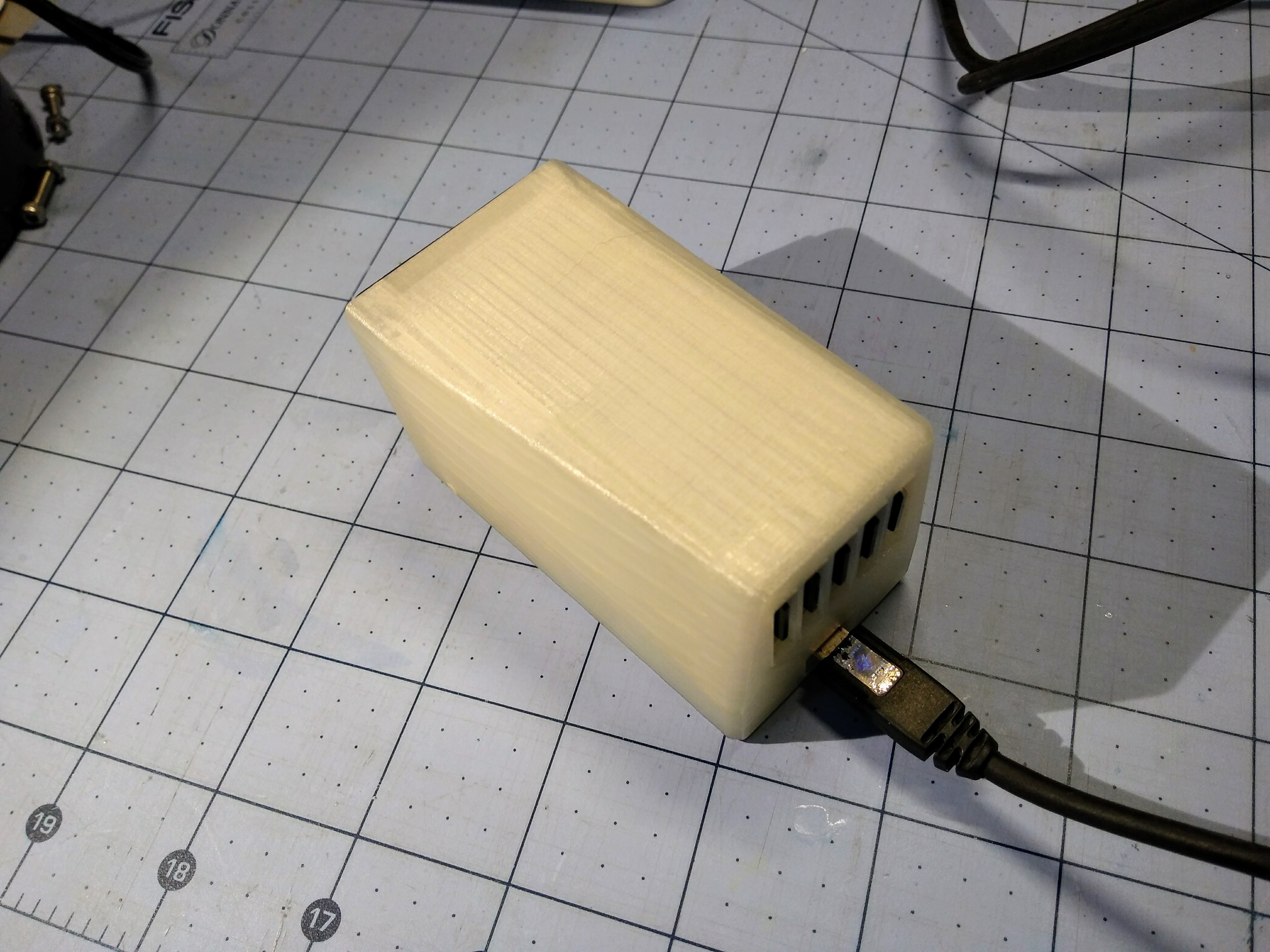

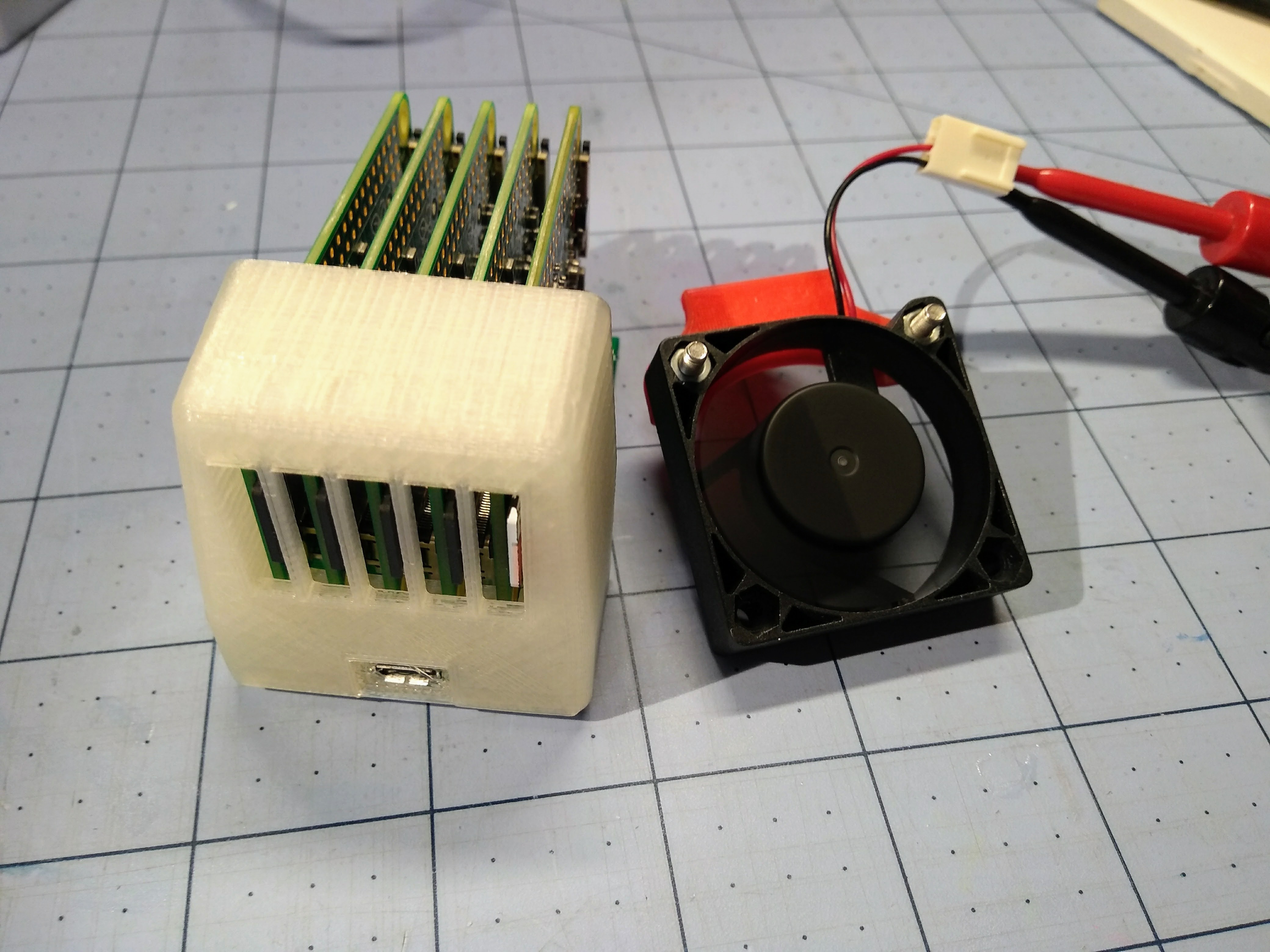

10/19/2017 at 06:21 • 0 comments![]()

![]()

-

Hot mess

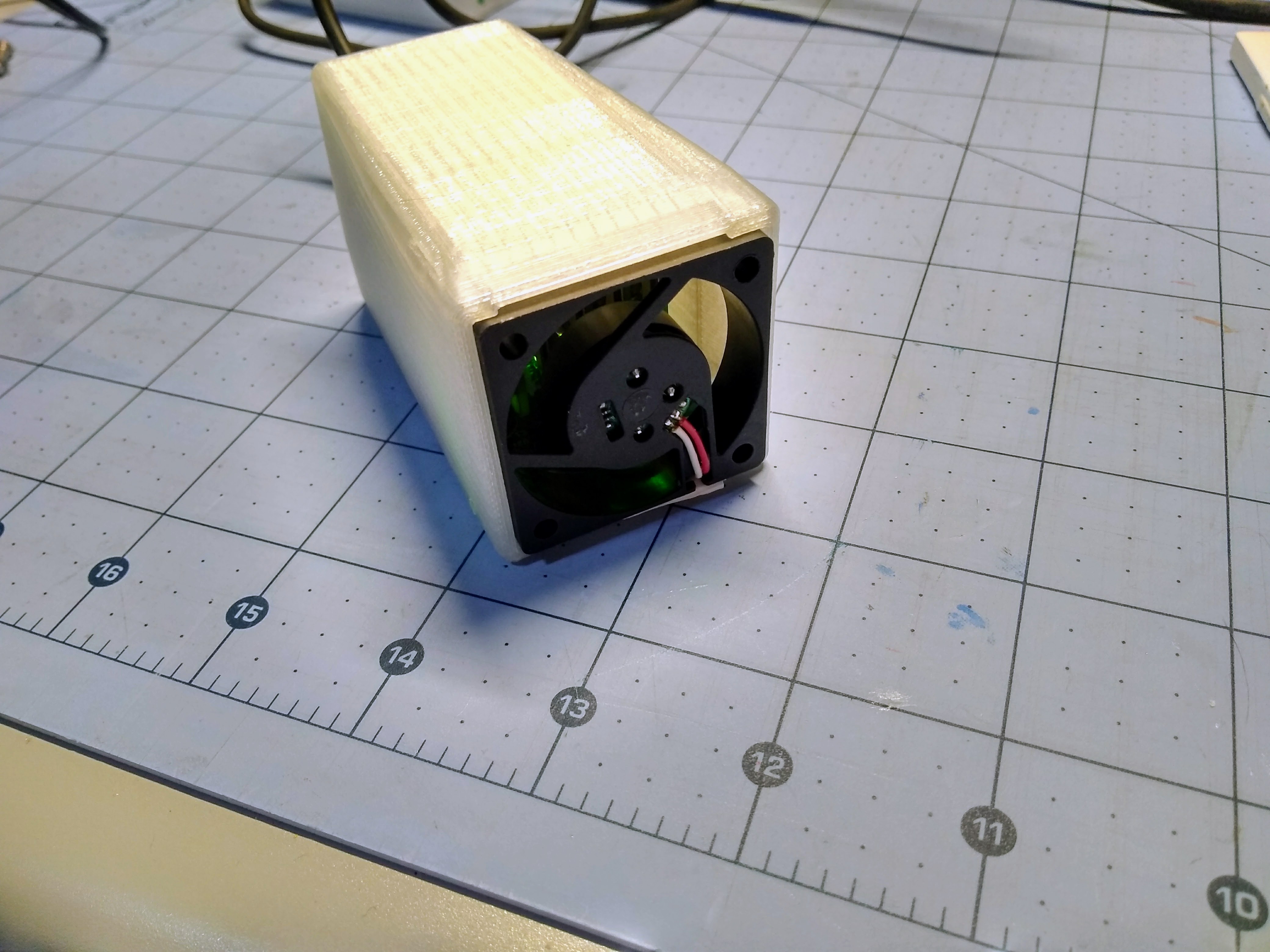

10/18/2017 at 06:22 • 0 commentsAfter a truly terrible week of meatspace problems last week, I'm back.

I've been working on and off on a 3D printed case for the cluster. The case doesn't need to be beautiful, but it does need to protect the cluster and restrain the Zeros so they don't flop around on the backplane's USB headers.

One problem though: the HPL runs from earlier show that the CPUs can reach over 70 degrees under load in ambient air. In an enclosure it's going to be much higher even with vents for convective airflow, and PLA plastic starts getting soft around 65 degrees...

Thus a fan is going into the case somehow.

Enjoy this pic of a test fit print while I figure this out:

![]()

-

Less terrible

10/04/2017 at 04:07 • 2 comments

Although performance is not the goal here, I wasn't happy that the cluster wasn't benching anywhere near what I expected with HPL. After some head scratching, I found out that the math library that I used to build the benchmark (libatlas) is built for soft-float in the RPi repos.

I rebuilt HPL with the libopenblas math library, added the head node into the pool, and hooked up my bench supply so I can use its current meter.

Now the terrible cluster does a max of 1.281 GFLOPS, and drew an average of 4.962 W over the run. That means it's only 72 million times slower than the fastest computer on the June '17 Top500, and at an efficiency of 0.258 GFLOPS/W it is 4.9 times more efficient than the least efficient computer on the same list.
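The arithmetic behind those two numbers, as a quick Python check. The only figure not from this log is the Rmax of the June 2017 Top500 leader (Sunway TaihuLight, 93,014.6 TFLOPS).

```python
# Measured on the Terrible Cluster (from this log entry).
rmax_gflops = 1.281
avg_power_w = 4.962

# June 2017 Top500 #1, Sunway TaihuLight: Rmax of 93,014.6 TFLOPS, in GFLOPS.
top500_rmax_gflops = 93_014_600

efficiency = rmax_gflops / avg_power_w       # GFLOPS per watt
slowdown = top500_rmax_gflops / rmax_gflops  # how many times slower

print(f"{efficiency:.3f} GFLOPS/W")                    # 0.258 GFLOPS/W
print(f"{slowdown / 1e6:.1f} million times slower")    # 72.6 million times slower
```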

(corrected with a slightly higher score after retesting 10/5/17)

-

Terrible metrics

10/02/2017 at 21:46 • 0 comments

Following instructions found here, I ran the HPL benchmark on the four compute nodes of the cluster. On the first try, I'm getting 390 MFLOPS.

That's 300 million times slower than the fastest ranked supercomputer as of June 2017.

However, it's at worst only 40% less efficient* than the least power efficient computer on the same list, so that's nice I guess.

*Didn't measure average power during the run, but it does have a 5V 2A power supply and isn't hitting the limits. So I'm assuming 10W running full out.

-

Clusterstuck

09/28/2017 at 02:42 • 4 comments

I spent the last couple of weeks trying to figure out the best way to deploy an OS to the nodes over USB. I spent a few late nights trying to roll my own minimal Raspbian with debootstrap, and a few more scratching my head over how to use the USB boot and an initramfs to write the SD card on first boot or when directed to reformat and reinstall. And I was hesitant to write anything to automate any of this, since I have my heart set on using Ansible for deployment and I'm still an Ansible noob. I was busy yak shaving and yet I still didn't have a working way to copy an OS out to all the nodes over USB.

And then I remembered what I named this project.

So I went for the easy way out. Stick with the stock Raspbian Lite image. Modify the image so that it doesn't boot over SD and falls back on USB. Use the mass storage mode in rpiboot to write the Raspbian SD image to each node. Use rpiboot to serve different cmdline.txt bootfiles based on the USB hub port so that they each get their own unique USB networking MAC address. And use shell scripts for image generation and SD writing instead of making this another Ansible lesson. None of this is how I'd imagined it working, but at least it's working now. Later I can move the process to Ansible and different OS images and deployment schemes.
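One way the per-port cmdline.txt could be generated, sketched in Python. This is not the actual shell script from the project: the MAC numbering scheme and both function names are made up, and the base command line is whatever the stock image ships with. `g_ether.dev_addr` and `g_ether.host_addr` are the kernel's USB Ethernet gadget parameters for the two ends of the link.

```python
def mac_for_port(port, role):
    """Build a locally administered unicast MAC from the hub port number.

    role is "dev" (the Zero's end) or "host" (the head node's end).
    The 02:00:00:00:RR:PP layout is an arbitrary choice for illustration.
    """
    if not 1 <= port <= 255:
        raise ValueError("port must fit in one byte")
    role_byte = {"dev": 0x01, "host": 0x02}[role]
    return "02:00:00:00:{:02x}:{:02x}".format(role_byte, port)


def cmdline_for_port(base_cmdline, port):
    """Append fixed USB gadget Ethernet addresses to a kernel command line."""
    return "{} g_ether.dev_addr={} g_ether.host_addr={}".format(
        base_cmdline.strip(),
        mac_for_port(port, "dev"),
        mac_for_port(port, "host"),
    )
```

For example, `cmdline_for_port("console=tty1 rootwait", 3)` ends with `g_ether.dev_addr=02:00:00:00:01:03 g_ether.host_addr=02:00:00:00:02:03`, so the node on port 3 always comes up with the same addresses.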

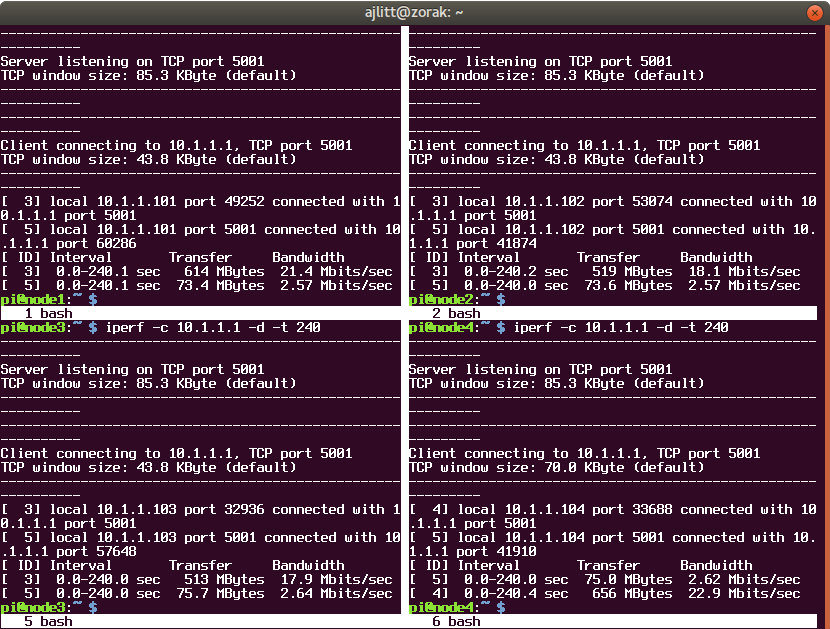

Three evenings later and the cluster can now write a Raspbian .img to all four nodes very slowly (30 minutes or so for all 4), boot them very slowly with a unique MAC and IP (about 5 minutes for all to come up), and take remote SSH logins from the host Pi.

It's hard (and boring) to show any of this in action, so here's a session showing a network between the head node and all compute nodes at once:

![]()

See? The Terrible Cluster lives up to its name.

-

Boring bring-up pt. 2

09/17/2017 at 05:37 • 0 comments

I soldered the rest of the board. Realized I had ordered the wrong part for the USB power connector and bodged it on anyway. I'll update on functionality in a few days.

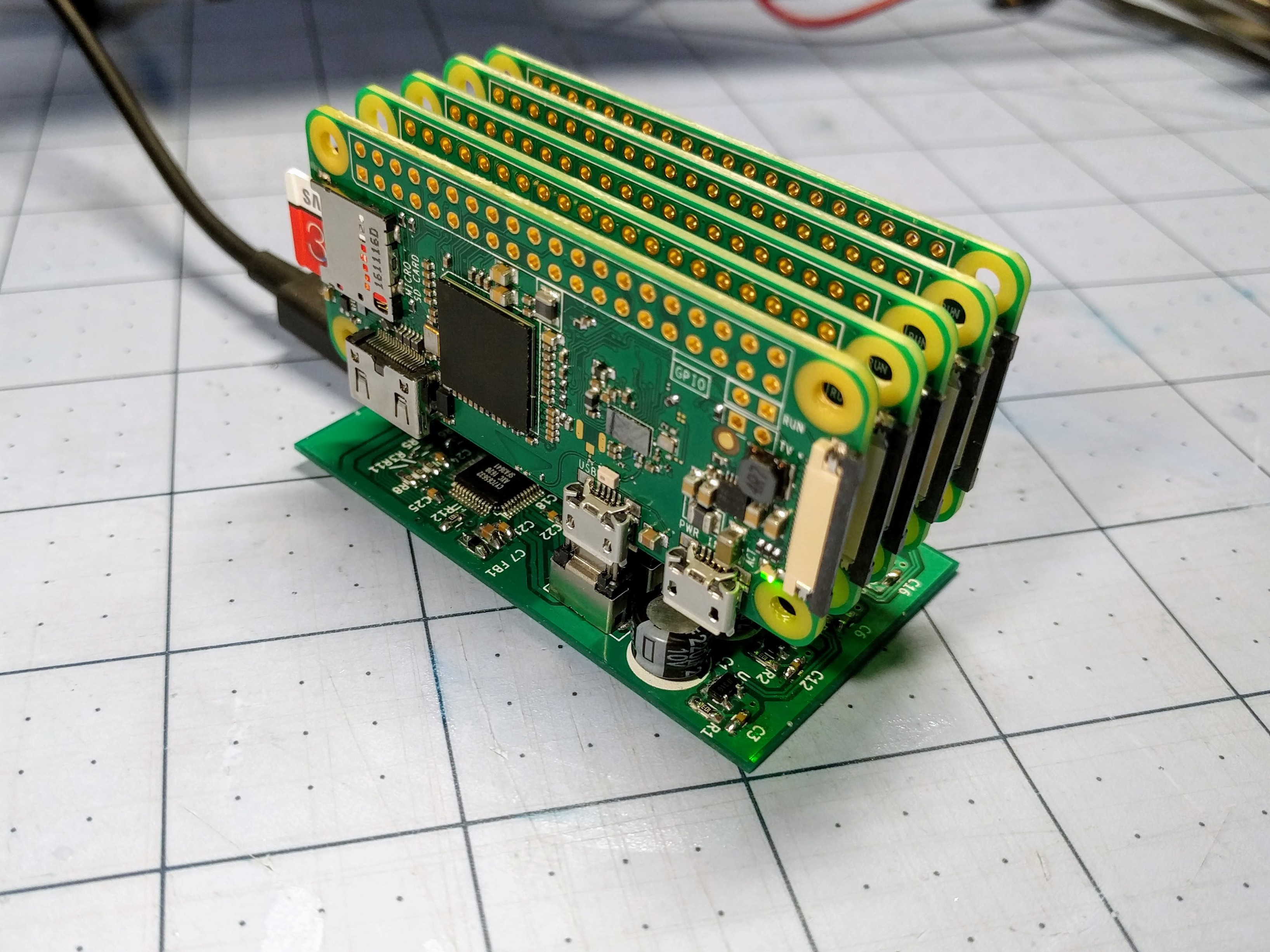

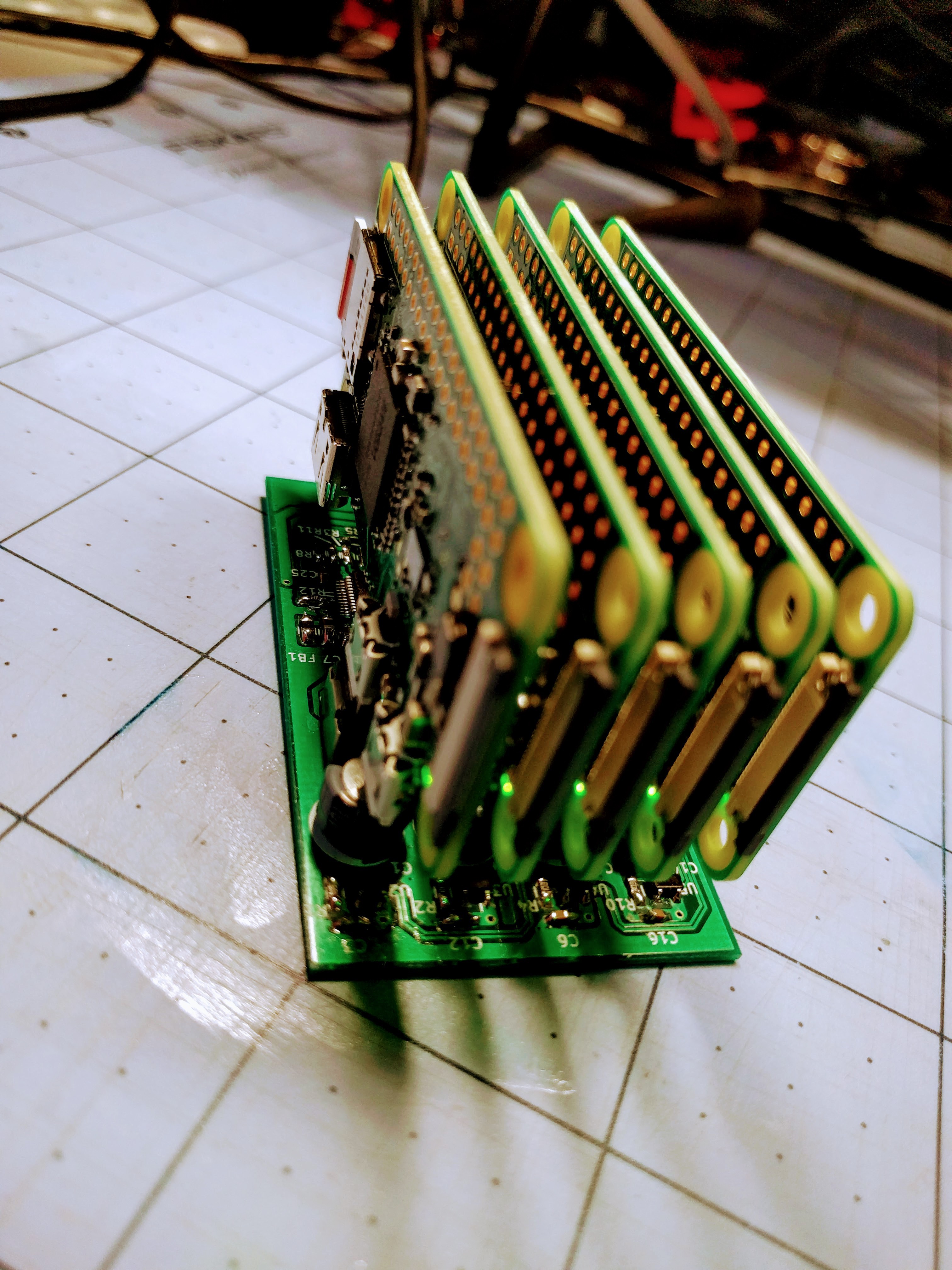

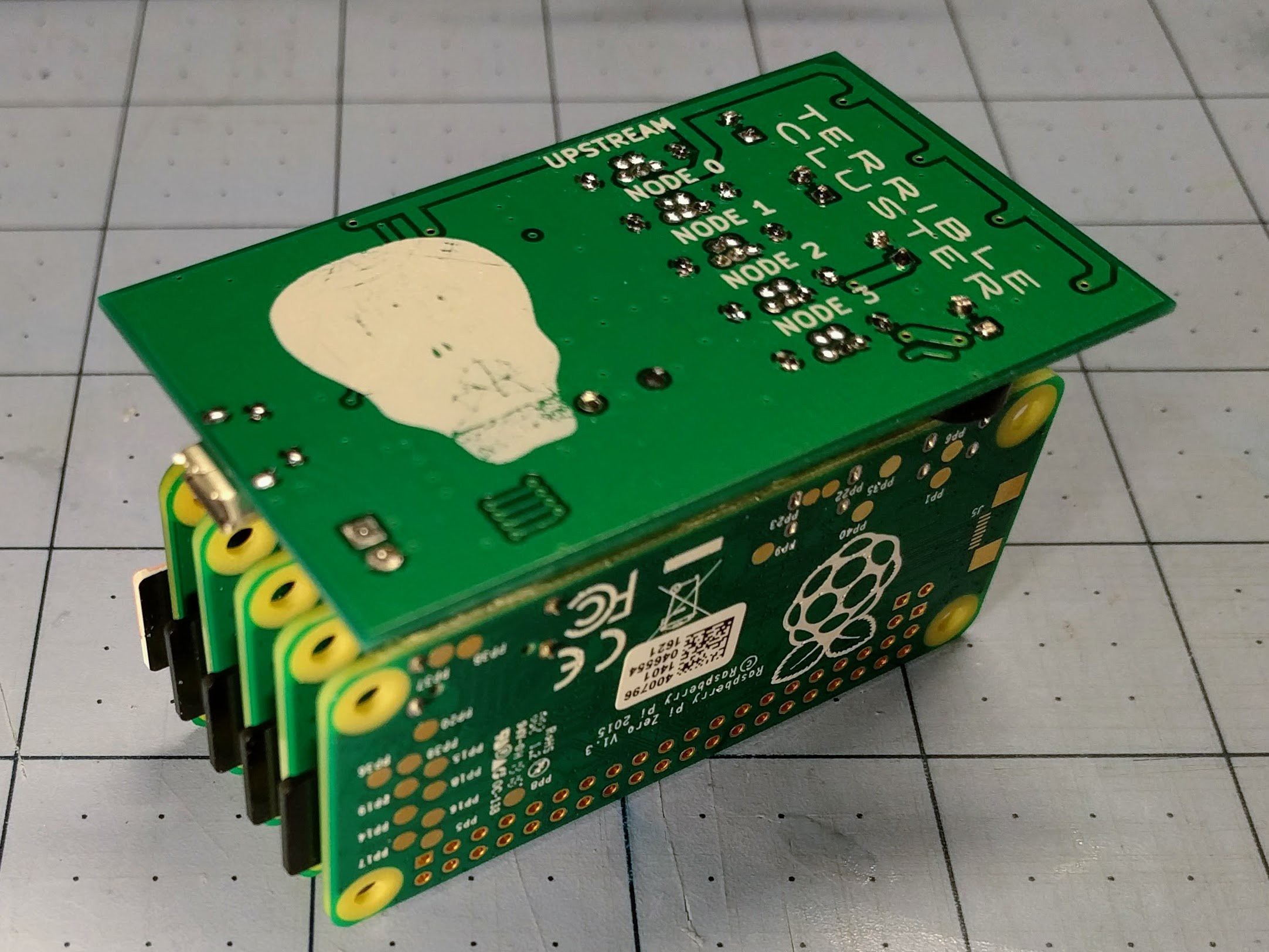

But for now, enjoy some photos of the assembled board and 5 Pi Zeros:

![]()

![]()

![]()

![]()

-

Boring bring-up pt. 1

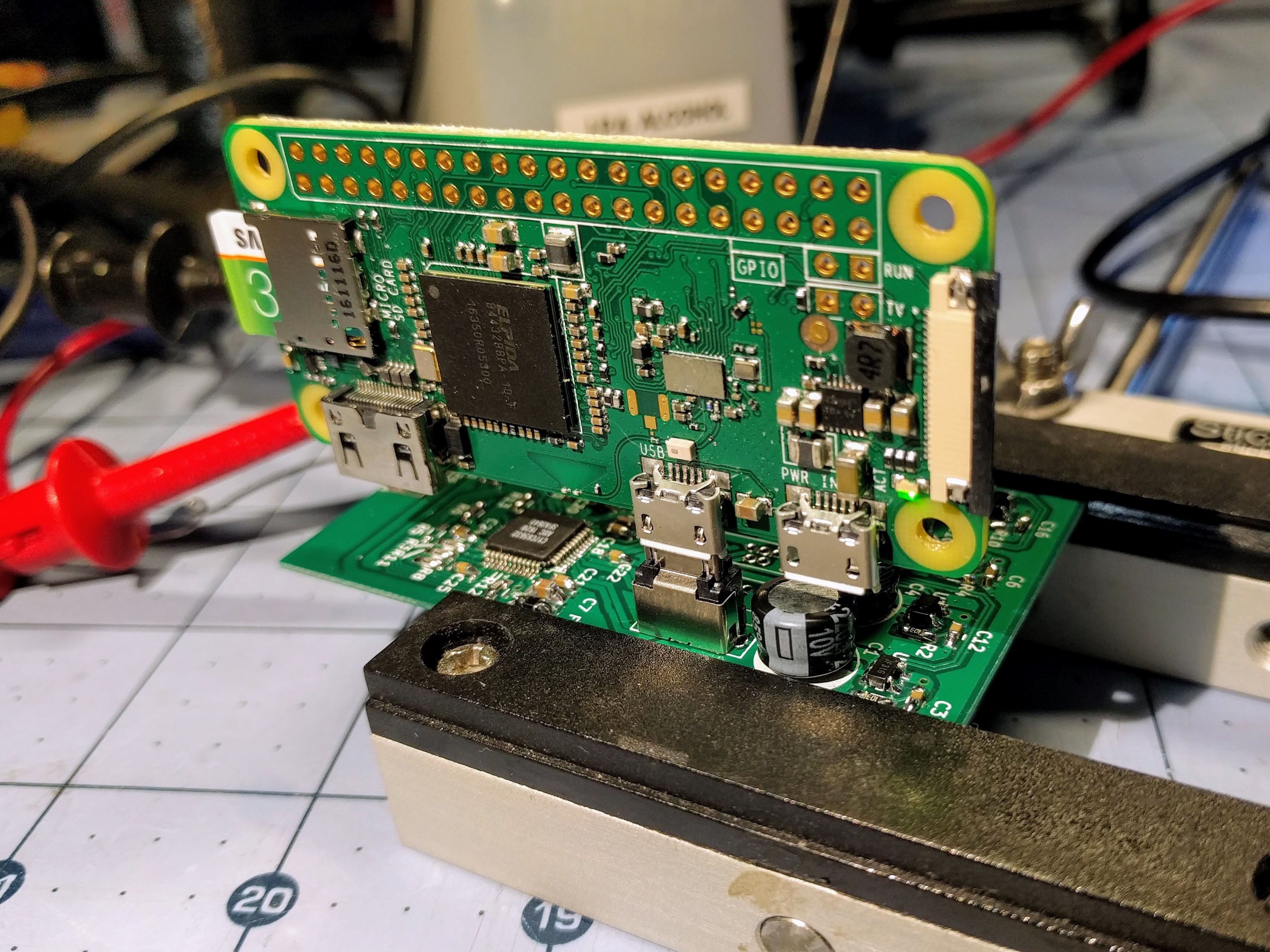

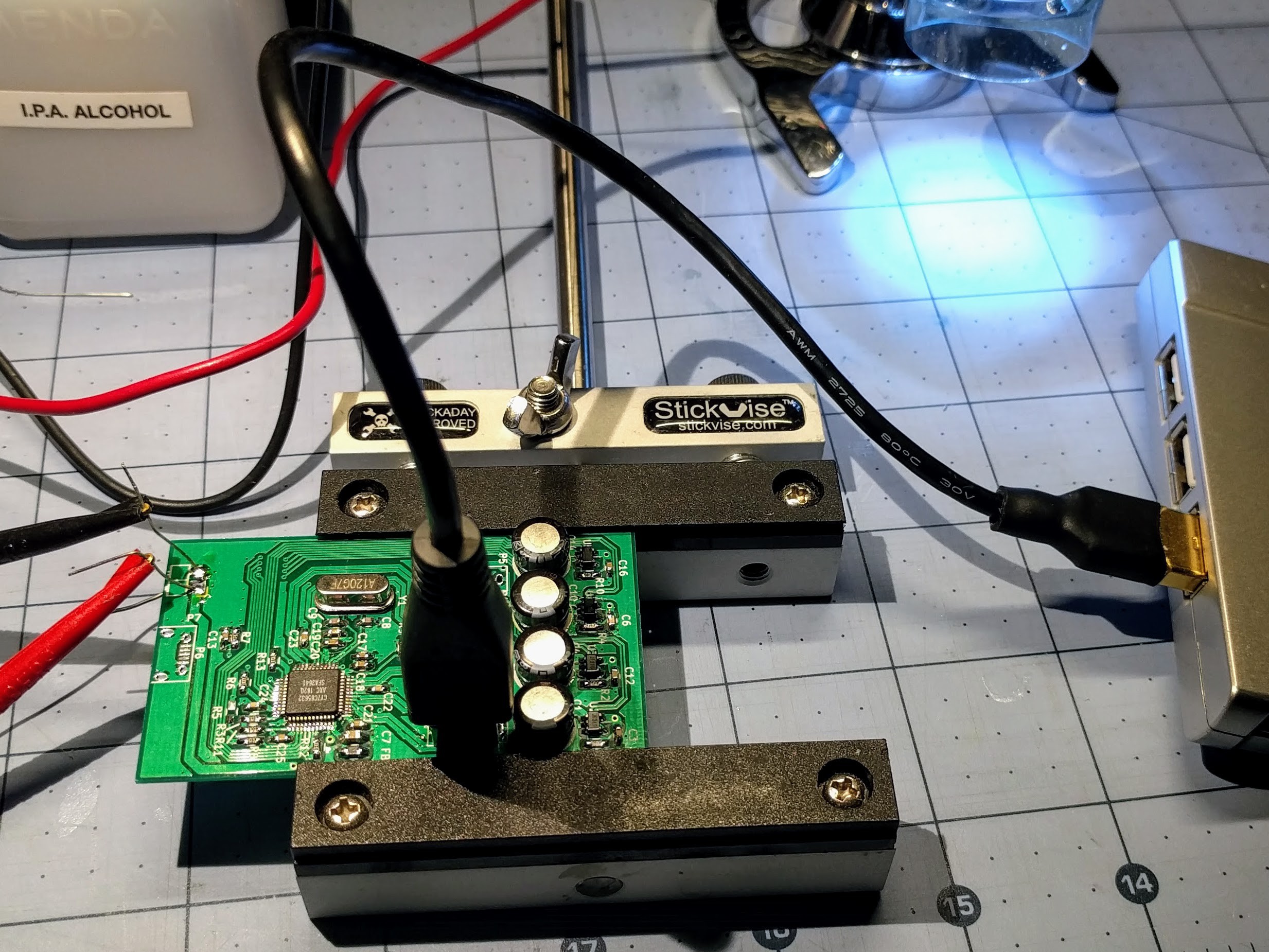

09/14/2017 at 05:59 • 0 comments

The boards came in today, so naturally I had to get soldering despite being tired from working late last night.


I populated everything but the USB power jack and the four downstream node USB plugs. I didn't want to waste the connectors until I checked out the upstream end of the hub.

I first smoke tested the board with my bench PSU and checked that the oscillator was doing its thing. Once satisfied that I didn't have any surprise dead shorts, I hooked it to my PC through a micro USB jack to USB-A plug cable going to an intermediate sacrificial hub. I was able to see the hub enumerate under Linux, and checked that it's reporting as having per-port power control.
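Per-port power control is advertised in the two low bits of the hub descriptor's wHubCharacteristics field, which is what tools like `lsusb -v` decode. A small Python sketch of that decoding follows; the function name is made up, and reading the descriptor off real hardware is left out.

```python
def power_switching_mode(w_hub_characteristics):
    """Decode bits 1..0 of wHubCharacteristics from a USB 2.0 hub descriptor."""
    mode = w_hub_characteristics & 0x03
    if mode == 0:
        return "ganged"    # all ports switched together
    if mode == 1:
        return "per-port"  # each port switched individually
    return "none"          # 1X: reserved, no power switching
```

A hub that reports 0x0009 here, for instance, decodes as "per-port", which is what the in-band power cycling on this board depends on.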


I also did a test fit of a Pi Zero W, and since I'm now confident it's not going to do any damage I powered it up. The Pi's LED did its usual blinking thing as Linux booted.

Stuff I learned:

- The hole dimensions for the USB vertical plug are too big to fit snugly. I had to "justify" the upstream plug with one edge of the board. I'll need to remember to align the other four the same way to keep the Zeros spaced uniformly.

- The Cypress hub IC shuts off the 12MHz oscillator when nothing interesting is happening, probably to save power. I didn't catch this detail in the datasheet. I spent a good half hour wondering why I'd see a fleeting 12MHz on the scope on power-on and nothing afterwards. I suspect it will stay on once I get downstream devices connected.

- My thermal reliefs aren't that relieving. I soldered in one of the electrolytics backwards, and it was a pain to desolder and clean the hole on the grounded leg. I should have used thinner spokes on the reliefs, and made sure that there were no additional ground traces hiding under the plane fill. It would also help if I replaced the solder sucker I broke a few years ago.

- Just because silkscreen art looks good in the KiCAD 3D render doesn't mean it will look the same on a finished board. For grins I converted a photo of a picture one of my kids drew in school to a KiCAD symbol. What was supposed to be a skull looks more like an upside down diseased pear. Elecrow and other PCB manufacturers have a resolution limit on their silkscreen.

Next I need to get the rest of the connectors in, test downstream power control, make up a test cable for the downstream ports (more later), and maybe plug in some more Zeros. But that will have to wait for the weekend.

ajlitt
