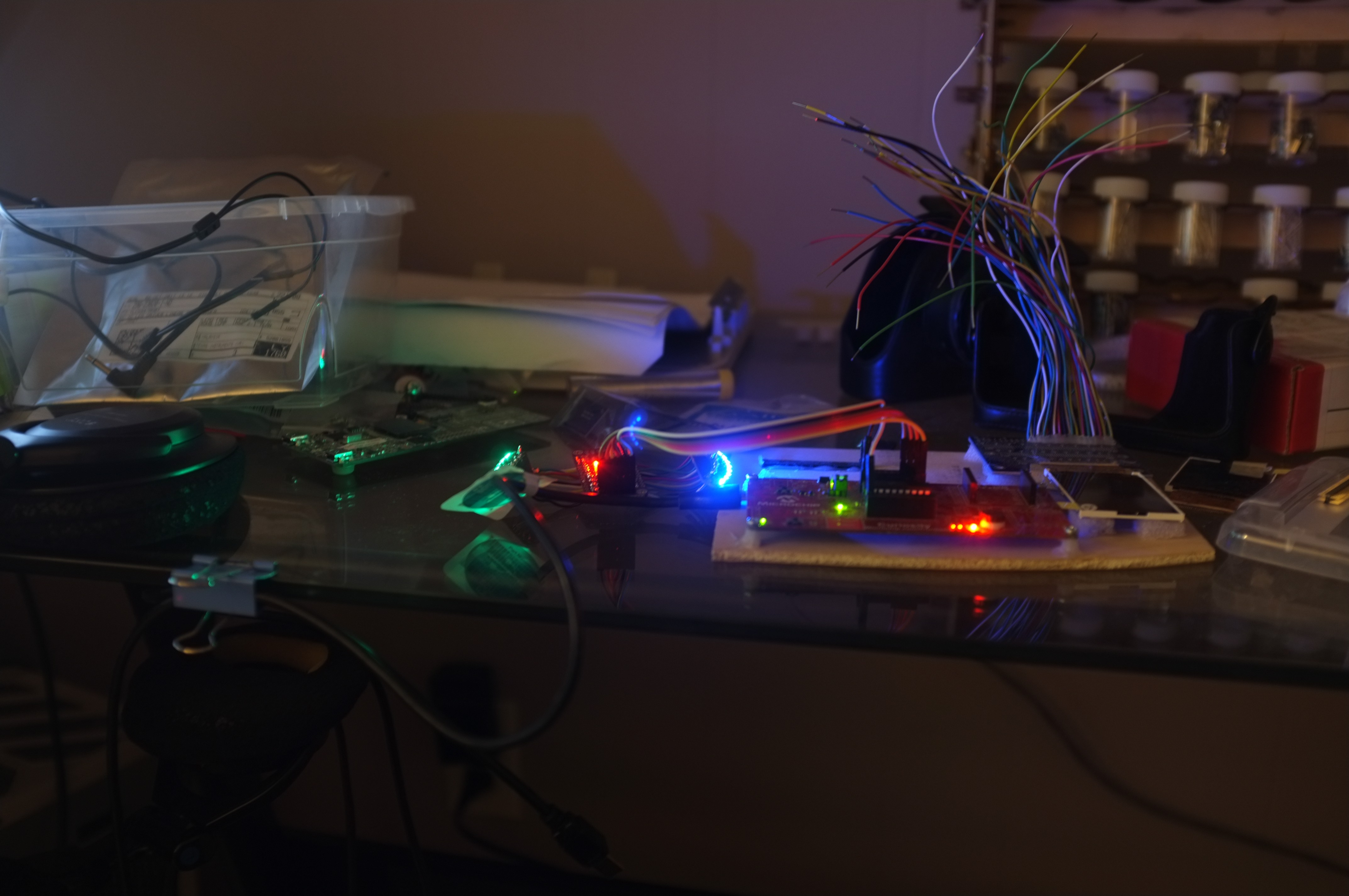

My test jig is becoming invaluable, as I wait for more materials to arrive on the slow boat from China.

Project files are being posted here as I go, for those that missed it.

It makes my workbench look like an 80s cyberpunk fantasy.

The Python tool I've written to convert images to a POV-friendly format has been great.

Give it a command like:

python convert.py -i World_map.png -f c -v -c blueMap -x 152 -y 20 -o blue

And it spits out generated C code, CSV files, or images after conversion. For more info, type:

python convert.py --help

It'll print out a handy menu of all the options.
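As a rough illustration of the kind of C output described above, here is a minimal sketch that turns a small grid of 8-bit greyscale values into a C array declaration. This is not the actual convert.py: the function name `pixels_to_c_array`, the column-by-column ordering, and the `uint8_t` element type are all assumptions made for the example.

```python
def pixels_to_c_array(rows, name):
    """Render a 2D grid of 0-255 values as C source for a uint8_t array.

    rows is a list of equal-length lists, one per image row. The output is
    ordered column by column, on the assumption (not confirmed by the
    original tool) that a POV display shows one vertical strip at a time.
    """
    height = len(rows)
    width = len(rows[0])
    if any(len(r) != width for r in rows):
        raise ValueError("all rows must have the same length")

    lines = []
    for x in range(width):
        # Gather one vertical strip of the image, top to bottom.
        column = [rows[y][x] for y in range(height)]
        lines.append("    " + ", ".join(f"0x{v:02X}" for v in column) + ",")

    body = "\n".join(lines)
    return f"const uint8_t {name}[{width * height}] = {{\n{body}\n}};\n"


if __name__ == "__main__":
    # Hypothetical 3x2 test pattern, not real map data.
    demo = [
        [0x00, 0xFF, 0x00],
        [0xFF, 0x00, 0xFF],
    ]
    print(pixels_to_c_array(demo, "blueMap"))
```

Each line of the generated array holds one column, so a 152 x 20 image would produce 152 lines of 20 bytes each.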

The generated C output gets stored as one big chunk of memory and transferred out via SPI to the LED controller. Interestingly, the compiler chokes on anything more than 6080 bytes of contiguous memory, even though that only uses up 78% of total memory. That'll probably be a memory paging issue, but I think it'll be fine. Those 6080 bytes cover two displays (green and blue), each 20 pixels high and 152 pixels around.
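The 6080-byte figure falls straight out of the display dimensions. A quick sketch of the arithmetic, assuming one byte per pixel (the helper name `framebuffer_bytes` is made up for this example):

```python
def framebuffer_bytes(displays, height, width, bytes_per_pixel=1):
    """Bytes needed to hold every pixel of every display.

    bytes_per_pixel=1 is an assumption; it happens to match the
    6080-byte figure quoted in the text.
    """
    return displays * height * width * bytes_per_pixel


if __name__ == "__main__":
    print(framebuffer_bytes(2, 20, 152))  # both colours: 6080
    print(framebuffer_bytes(1, 20, 152))  # a single colour: 3040
```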

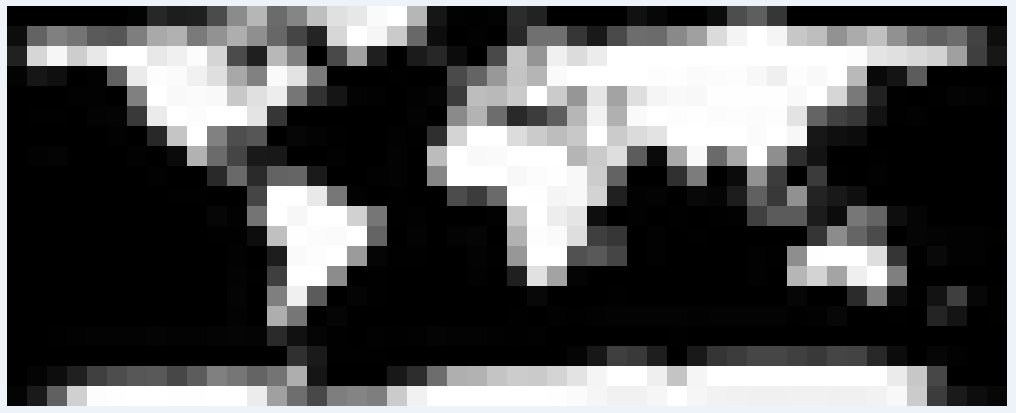

For my test jig, I went much smaller: 10 pixels high and 50 across. Then I waved it back and forth in front of the camera a bunch.

Hey... that doesn't look like anything familiar.

Oh wait. No, I guess that's about right.

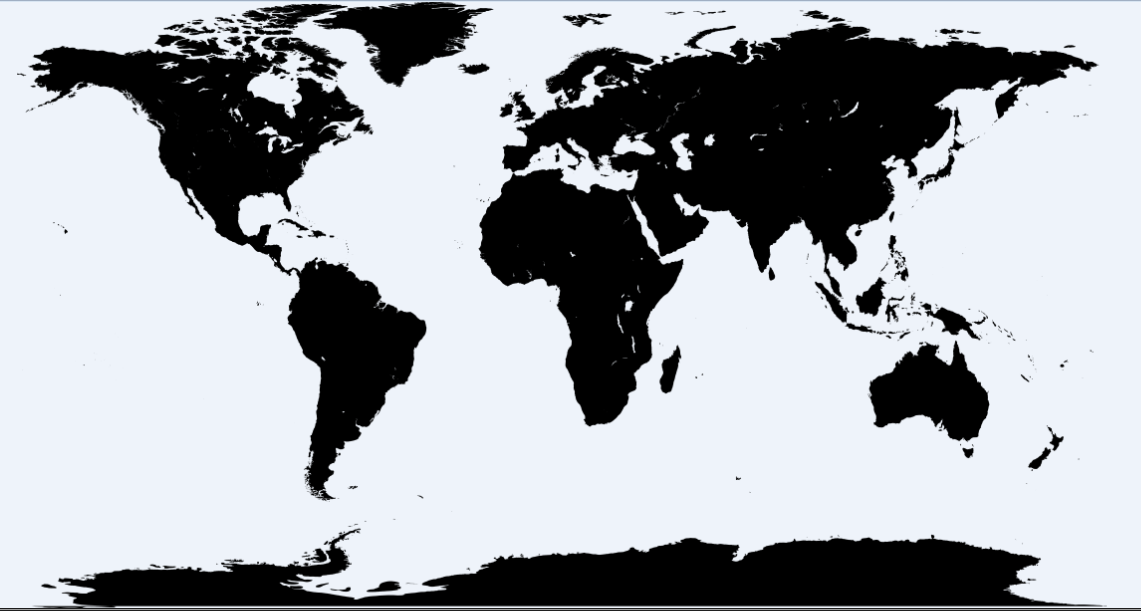

Here's the original:

(Greyscale values inverted because green must be 0xFF)
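For anyone wondering what the inversion amounts to, here is a minimal sketch, assuming 8-bit greyscale where each value v simply becomes 255 - v. The function name `invert_greyscale` is hypothetical, and the real tool may handle this differently.

```python
def invert_greyscale(rows):
    """Return a copy of the grid with each 8-bit value v replaced by 255 - v."""
    return [[255 - v for v in row] for row in rows]


if __name__ == "__main__":
    print(invert_greyscale([[0x00, 0x80, 0xFF]]))  # [[255, 127, 0]]
```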

The final version at 20 pixels high will look more like this:

Recognisable, but it would still be nice if it were finer. Maybe revision 2.

Jarrett
