The project has been scripted in MATLAB because of it's amazing matrix math functions and simply because I had it installed on my laptop.

What I eventually would like to do is develop a SLAM (Simultaneous Localization and Mapping) algorithm to generate maps with only the use of a LiDAR and no other navigation aid (incl. encoders).

This project however only addresses the issue of localization of a robot in a known map - like the arena our robot is going to be in for the Trinity Fire Fighting Robot competition.

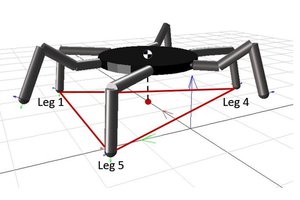

The key to the algorithm is calculating best-match scores for every possible linear and angular offsets for the pixelized LiDAR map on a pixelized known map. The best score is then obtained and the x and y indexes of the best score is used as the map offset. The LiDAR map is first rotated to make all lines vertical or horizontal - this leaves only for angular possibilities for the map-matching algorithm which significantly reduces the load on the processor. The rotation of the best match is inverted and the H/V rotation is also inverted to get the robot's position and heading.

For those who wish to use the algorithm in your own LiDAR-based mapping robot - here's my code:

(Hackaday could definitely use MATLAB or Octave syntax highlighting btw)

clear all; close all; clc; % Hardware Specifications LiDARRange = [20 600]; % [cm] LiDAR Range LiDARdtheta = 1; % [deg] LiDAR Angular Resolution LiDARdr = 0.2; % [cm] LiDAR Linear Resolution map = []; % Simulation Parameters run = 1; % run simulation flag tth = 1; % [cm] Thickness tolerance threshold dt = 0.1; % [s] simulation time step dd = 10; % [cm] Discontinuity check threshold dr = 0.1; % Linearity check range threshold da = 10; % [deg] Angularity tolerance mR = 4; % Minimum repetition required to be valid bounds = [0 244 0 244]; % [cm] Maze bounds (MATLAB axis) RobotPos = [80 10]; % [cm] Position of Robot (x,y) RobotHdg = 80; % [deg] Heading of Robot, with 0 deg pointing E, CCW + LiDARMap = [transpose(0:359), zeros(360,1)+600]; res = 16; % [px] Map resolution % Define Arena Walls walls = [ 0 0 0 244; % W Boundary 0 0 244 0; % S Boundary 244 0 244 244; % E Boundary 0 244 244 244; % N Boundary 126 0 126 45; % Room 1 W Wall [M] 126 91 198 91; % Room 1 N Wall 0 103 72 103; % Room 2 N Wall 72 56 72 103; % Room 2 E Wall 46 157 72 157; % Room 3 S Wall 72 157 72 244; % Room 3 E Wall 126 147 126 198; % Room 4 W Wall 126 147 196 147; % Room 4 S Wall [M] 196 147 196 198; % Room 4 E Wall 126 198 150 198]; % Room 4 N Wall [M] % Localization STEP 1 - Pixelize Known map <40x40> [ONE TIME PROCESS] pixMap_s = zeros(res); % Stored Pixel Map ds = 244/res; for w = 1:size(walls,1) p1 = walls(w,1:2); p2 = walls(w,3:4); if p1(1) == p2(1) % V Line length = p2(2) - p1(2); for s = 0:round(length/ds); pixMap_s(1+round(p1(1)/ds),1+round(p1(2)/ds) + s) = 1; end else % H Line (There are only H and V Lines on this map) length = p2(1) - p1(1); for s = 0:round(length/ds); pixMap_s(1+round(p1(1)/ds) + s,1+round(p1(2)/ds)) = 1; end end end while run % clc; LiDARMap = [transpose(0:359), zeros(360,1)+600]; % LiDAR Generate Range Map for theta = 0:359 % Line12 - line from robot to LiDAR range along theta x1 = RobotPos(1); y1 = RobotPos(2); x2 = RobotPos(1) + LiDARRange(2)*cosd(RobotHdg + theta); y2 = RobotPos(2) + LiDARRange(2)*sind(RobotHdg + theta); for wall = 1:size(walls,1) % Line34 - wall x3 = walls(wall,1); y3 = walls(wall,2); x4 = walls(wall,3); y4 = walls(wall,4); % Find Intersection point den = (x1 - x2)*(y3 - y4) - (y1 - y2)*(x3 - x4); numTerm1 = x1*y2 - y1*x2; numTerm2 = x3*y4 - y3*x4; Px = (numTerm1*(x3 - x4) - numTerm2*(x1 - x2))/den; Py = (numTerm1*(y3 - y4) - numTerm2*(y1 - y2))/den; % Check if intersection point is between bounds if ((Px >= x1-tth && Px <= x2+tth) || (Px >= x2-tth && Px <= x1+tth)) && ... ((Py >= y1-tth && Py <= y2+tth) || (Py >= y2-tth && Py <= y1+tth)) && ... ((Px >= x3-tth && Px <= x4+tth) || (Px >= x4-tth && Px...Read more »

Silverwing Industries

Silverwing Industries

Reed Foster

Reed Foster

Vladimir

Vladimir

Hi, I'm working with Rplidar at my University. And I have seen that you had connected rplidar with MATLAB without beaglebone, arduino or others. I have really problems in connecting the RPLidar only to matlab and in obtaining measurements. Please, if you can give me some help, i will thank you so much.

Thanks

Ángela