I was working on my power supply design today, and found myself in need of a variable voltage supply (to simulate the output of a DAC driven by a microcontroller, which will eventually allow for variable voltage and maximum current setpoints). Well, wait a second here... I already have a DAC!

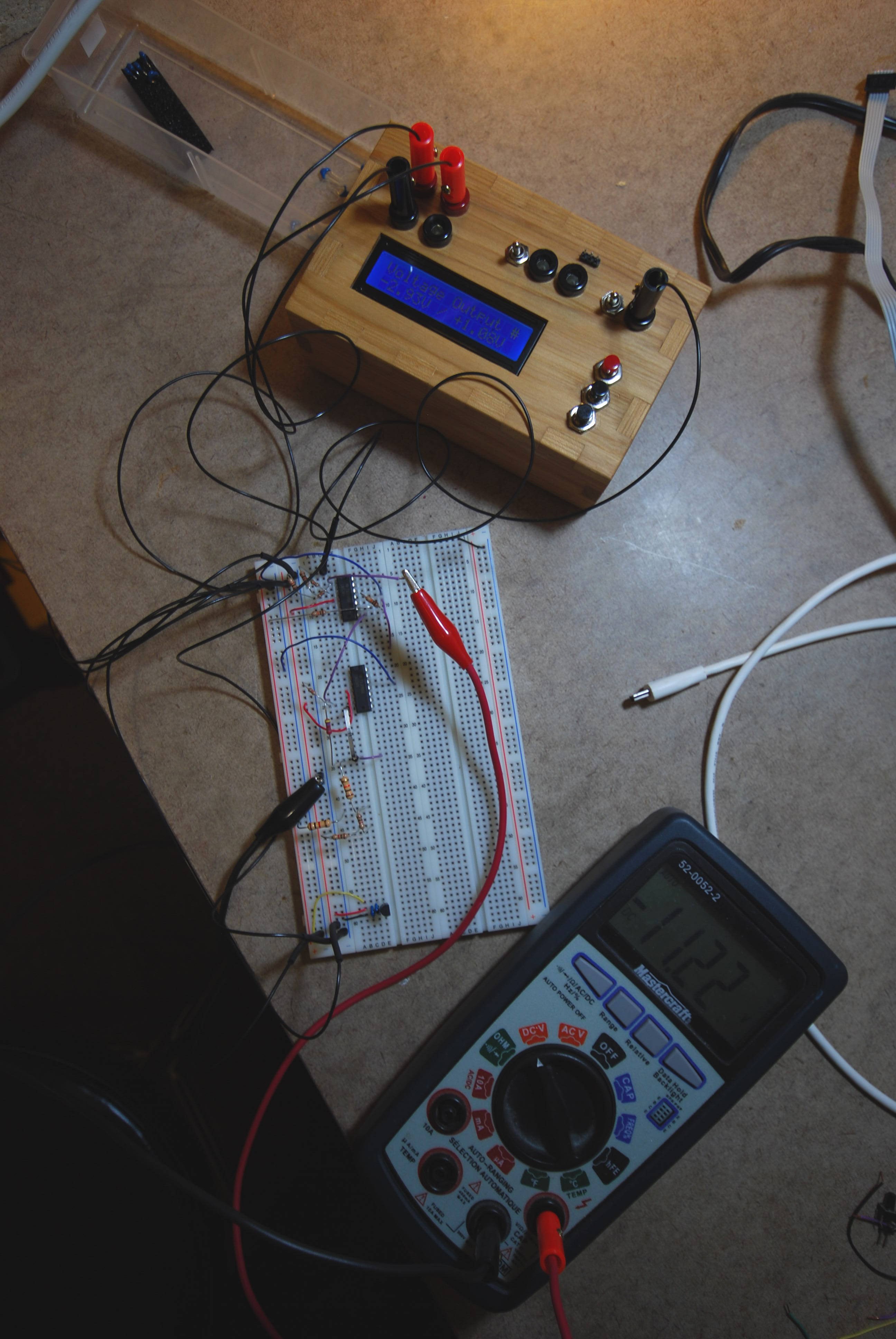

The new mode (creatively called "Voltage Output") simply writes an 8-bit value to the DAC and lets it output a voltage. Since the analog channel can have a range of -5 to 5V or 0 to 5V, I show both values (e.g. "-2.93V / +1.08V"). Depending on the position of the range switch, the output will be one or the other of these two values.

(In this picture the multimeter is measuring the post-op-amp voltage, not the input voltage, which is why it shows -11.22 instead of +1.08.)
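For anyone curious how the two readouts could be derived from a single 8-bit code, here is a minimal sketch in C. It assumes an ideal, uncalibrated DAC where code 0 is the bottom of each range and code 255 is the top; the function names are hypothetical and are not taken from the project's source.

```c
#include <stdint.h>
#include <stdio.h>

/* Ideal mapping for the 0 to 5V range (assumes code 0 -> 0V, code 255 -> 5V). */
static double dac_unipolar_volts(uint8_t code) {
    return 5.0 * code / 255.0;
}

/* Ideal mapping for the -5 to 5V range (assumes code 0 -> -5V, code 255 -> +5V). */
static double dac_bipolar_volts(uint8_t code) {
    return -5.0 + 10.0 * code / 255.0;
}

/* Writes both candidate voltages into buf, bipolar first, each with an
   explicit sign and two decimal places. Returns snprintf's result. */
static int format_dac_readout(char *buf, size_t len, uint8_t code) {
    return snprintf(buf, len, "%+.2fV / %+.2fV",
                    dac_bipolar_volts(code), dac_unipolar_volts(code));
}
```

Calling `format_dac_readout(buf, sizeof buf, code)` fills `buf` with one display line. On real hardware the numbers will drift from these ideal values, which is why calibration is needed.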

I have calibrated it in the source code with actual values found in my system. The min / max values are therefore dead on; in between, things are not accurate to two decimal places, but they are generally accurate to one. (I assume the differences come from nonlinearities in the DAC and op amp; regardless, it is perfectly fine for what I need, and best of all it cost nothing more than an hour of programming time.)
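One simple way to do this kind of calibration is two-point linear interpolation between the measured endpoints. The sketch below assumes that approach; the post does not say exactly how the calibration is implemented, and the type and function names here are hypothetical.

```c
#include <stdint.h>

/* Measured endpoints for one range. The values are whatever your own
   multimeter reads at the two extreme codes (assumed inputs, not
   figures from the original project). */
typedef struct {
    double volts_at_0;    /* measured output with code 0   */
    double volts_at_255;  /* measured output with code 255 */
} dac_cal_t;

/* Two-point linear interpolation: matches the measurement at code 0,
   lands on the measured top value at code 255 (up to floating-point
   rounding), and assumes a straight line in between. */
static double dac_calibrated_volts(const dac_cal_t *cal, uint8_t code) {
    double span = cal->volts_at_255 - cal->volts_at_0;
    return cal->volts_at_0 + span * code / 255.0;
}
```

A scheme like this would behave the way described above: the endpoints match the measurements, while any nonlinearity in the DAC or op amp shows up as a small error at intermediate codes. A lookup table with more measured points would tighten that up, at the cost of more bench time.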

Cheers

The Big One
