-

PiCamera, IMU and Raspi powering hack

06/18/2015 at 01:24

It's been a while since the last log, and I've changed a lot of things. Until now, I had mostly worked on the hardware and had only coded a basic pygame script to try out my changes. That code was very unoptimized, with one single big loop, so it was time to work on this part.

- I started rewriting the code to get more performance out of it. I split the three main parts into separate threads: USB capture, image processing, and display. From there, I could measure the "framerate" of each thread and find the bottlenecks.
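A minimal sketch of the threaded layout described above, assuming plain Python `threading` and `queue` (the log does not show the actual code). Each stage runs in its own thread, frames travel through small bounded queues, and a per-stage counter reports the "framerate" of each thread, which is what makes a bottleneck visible. The stage functions passed in are placeholders: in the real script they would wrap the USB capture, the image processing and the pygame drawing.

```python
import queue
import threading
import time


class FpsCounter:
    """Thread-safe counter that reports iterations per second since creation."""

    def __init__(self):
        self._count = 0
        self._start = time.monotonic()
        self._lock = threading.Lock()

    def tick(self):
        with self._lock:
            self._count += 1

    def rate(self):
        with self._lock:
            elapsed = time.monotonic() - self._start
            return self._count / elapsed if elapsed > 0 else 0.0


def stage(work, inbox, outbox, counter, stop):
    """Run one pipeline stage until `stop` is set.

    A stage with no inbox is a source (e.g. capture) and calls work(None).
    Results are handed to the next stage without blocking: if the next
    stage is slower, the newest frame is simply dropped.
    """
    while not stop.is_set():
        if inbox is None:
            item = work(None)
        else:
            try:
                frame = inbox.get(timeout=0.1)
            except queue.Empty:
                continue
            item = work(frame)
        if outbox is not None:
            try:
                outbox.put_nowait(item)
            except queue.Full:
                pass  # downstream is busy: drop this frame
        counter.tick()


def run_pipeline(capture, process, display, duration=2.0):
    """Run capture -> process -> display in three threads; return fps per stage."""
    stop = threading.Event()
    to_process = queue.Queue(maxsize=2)
    to_display = queue.Queue(maxsize=2)
    counters = {name: FpsCounter() for name in ("capture", "process", "display")}
    specs = [
        (capture, None, to_process, counters["capture"]),
        (process, to_process, to_display, counters["process"]),
        (display, to_display, None, counters["display"]),
    ]
    threads = [threading.Thread(target=stage, args=(*spec, stop), daemon=True)
               for spec in specs]
    for t in threads:
        t.start()
    time.sleep(duration)
    stop.set()
    for t in threads:
        t.join()
    return {name: c.rate() for name, c in counters.items()}


if __name__ == "__main__":
    # Stand-in stages: a ~30 fps "camera" and a ~10 fps "display".
    def fake_capture(_):
        time.sleep(1 / 30)
        return "frame"

    def fake_display(frame):
        time.sleep(1 / 10)

    print(run_pipeline(fake_capture, lambda frame: frame, fake_display))
```

Dropping frames when the next queue is full keeps a slow display from stalling the capture thread; a HUD that cares about latency might prefer to discard the oldest queued frame instead.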

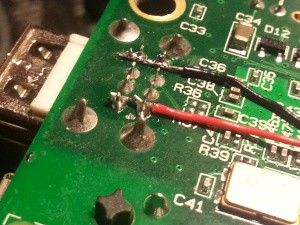

- The first obvious bottleneck was the USB capture framerate, stuck at around 10fps. USB is rather limited on the Raspberry Pi, especially on the Model B: first, USB and Ethernet share the same chip, so bandwidth suffers. Second, the RPi power circuit is very basic, and the USB ports could only draw ~200mA. So I decided to hack the board and route the 5V directly from the power input to the USB ports. This did the trick, and I could capture at almost full speed (27-30fps). I documented this hack here.

- The second bottleneck is in the display thread. I found that I had reached the maximum SPI bandwidth: if I remove one screen, the framerate jumps to 22-24fps. After many tweaks, I could not get more than 10-12fps on each screen. This is not much, but it's very stable and both displays are perfectly synced. Unfortunately, I haven't found a solution to this, apart from using an HDMI display, which I don't have (yet).
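A back-of-the-envelope check of the SPI limit. Both displays share MOSI and SCLK, so every full frame for either eye goes over the same bus. The sketch below assumes 16-bit colour and a 32 MHz SPI clock; neither value is stated in this log, so treat the numbers as illustrative.

```python
def spi_fps_ceiling(spi_hz, width, height, bits_per_pixel=16, n_displays=1):
    """Best-case full-frame refresh rate when n_displays share one SPI bus.

    Only raw pixel data is counted: command bytes, gaps between transfers
    and CPU time are ignored, so real framerates will be lower.
    """
    bits_per_frame = width * height * bits_per_pixel
    return spi_hz / (bits_per_frame * n_displays)


if __name__ == "__main__":
    ASSUMED_SPI_HZ = 32_000_000  # assumption, not a value taken from this build
    for n in (1, 2):
        fps = spi_fps_ceiling(ASSUMED_SPI_HZ, 240, 320, n_displays=n)
        print(f"{n} display(s): at most {fps:.1f} fps each")
```

With those assumptions it gives a ceiling of about 26 fps for one 240x320 screen and about 13 fps each for two, roughly consistent with the figures above; a different SPI clock would scale both numbers proportionally.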

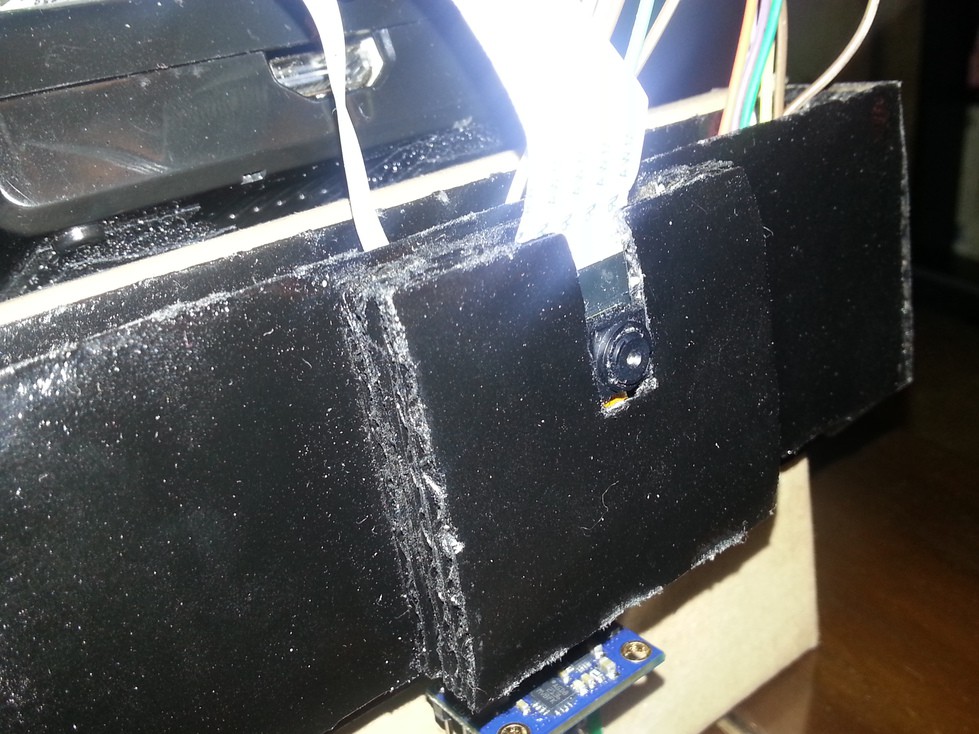

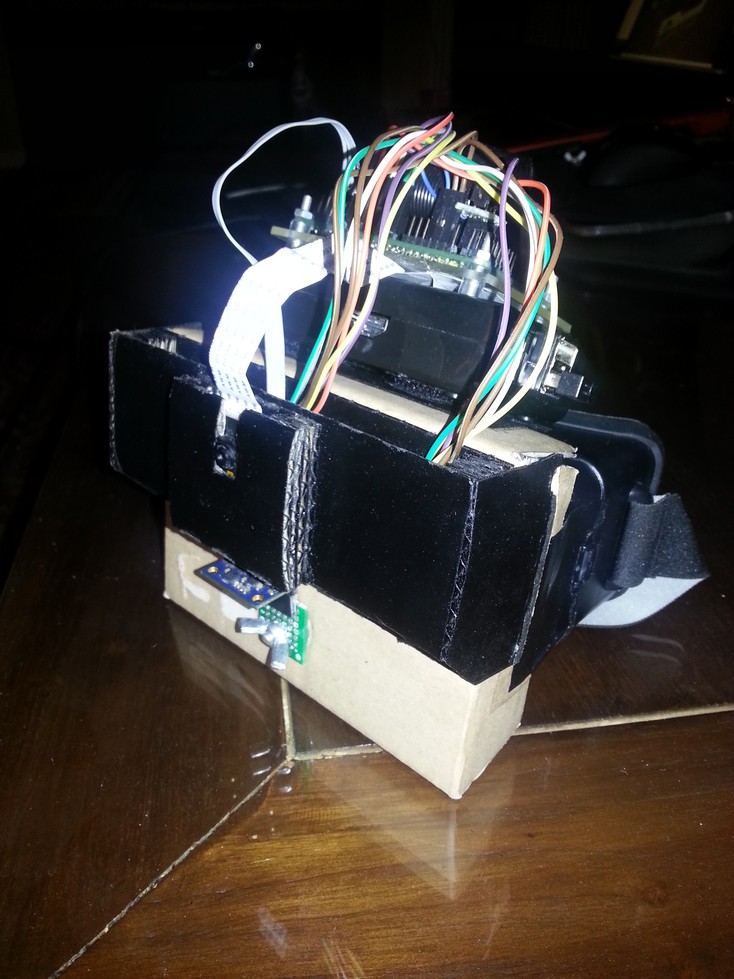

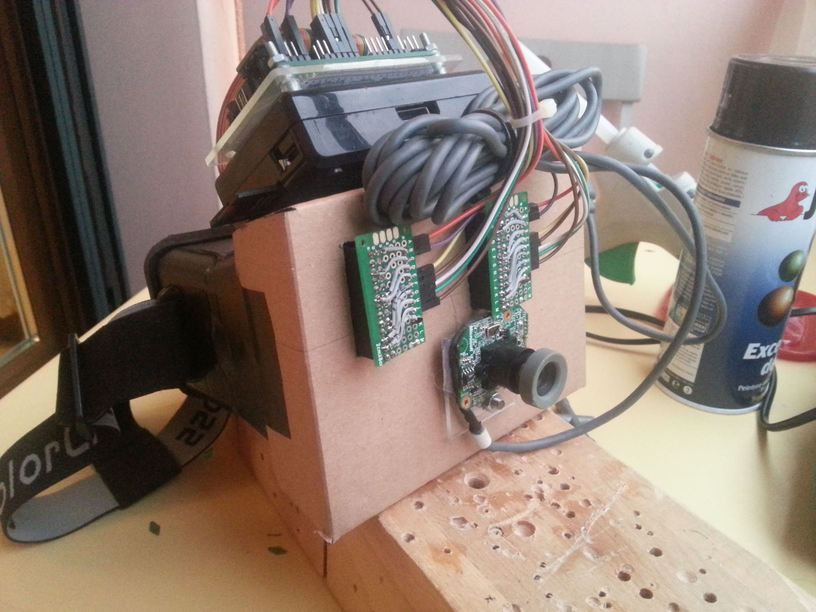

- At this point, I decided to try replacing the USB camera with the Pi camera module (NoIR version) to get a better image. So I built an additional cardboard shell that holds the module while letting it slide in and out. It also hides some of the cables, and I painted it black.

- Then I had to find a way to capture from the Pi camera module at full speed in pygame. Adafruit uses a nice trick in their camera script, but 10fps was not enough. So I ended up using the alternative UV4L driver. With it, the Pi camera appears as a regular webcam, while still using the GPU. As a result, my pygame script could capture at full speed. It runs so nicely that I decided to write a Python lib for it.

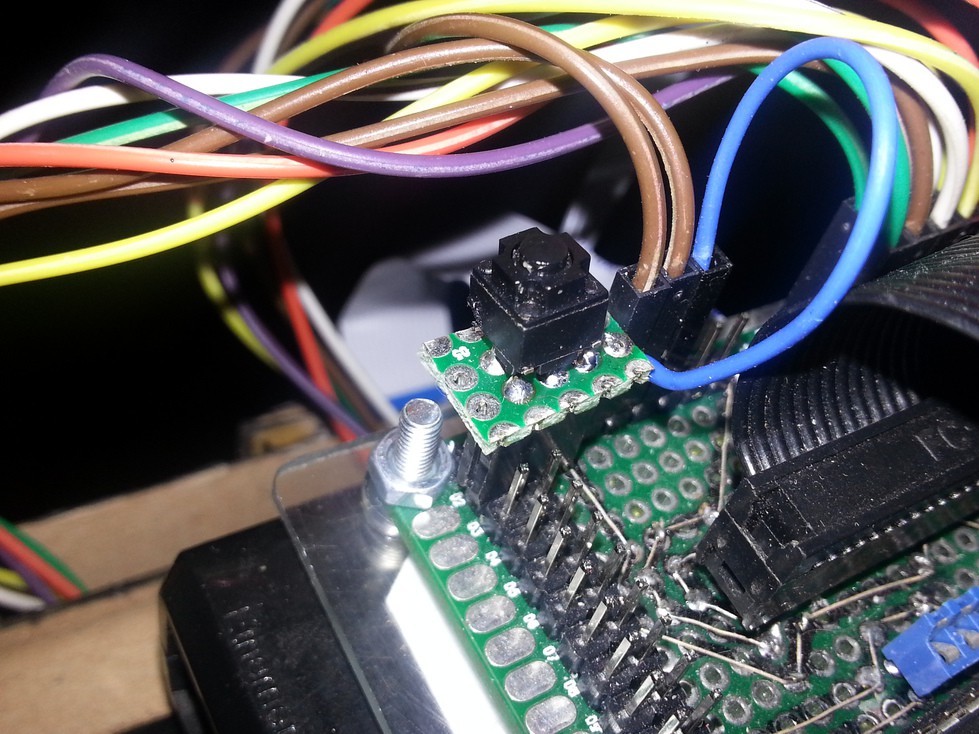

- I added a GY-80 10-axis IMU to the device. This is a gyro/magnetometer/accelerometer/barometer combo that I also use in a custom head tracker I built to play Elite: Dangerous and flight sims (it works very well, by the way; I plan to sell it on Tindie as soon as I have rebuilt it as a wireless version). This IMU is a bit tricky to use, and I had to partially write 5 Python libs to make it work. It is still not very well calibrated, especially the magnetometer, but it's OK for now.
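The log does not say how the magnetometer is calibrated. A common first step is a hard-iron correction: rotate the device in every direction, record raw readings, and subtract the per-axis midpoint between the minimum and maximum values. A minimal sketch with made-up sample data; it does not handle soft-iron distortion (the ellipse-shaped error), which needs a more involved fit.

```python
def hard_iron_offsets(samples):
    """Per-axis hard-iron offsets from raw magnetometer samples.

    `samples` is a sequence of (x, y, z) readings taken while the device is
    slowly rotated through as many orientations as possible. For each axis
    the offset is the midpoint between the minimum and maximum values seen.
    """
    xs, ys, zs = zip(*samples)
    return tuple((min(axis) + max(axis)) / 2 for axis in (xs, ys, zs))


def correct(reading, offsets):
    """Subtract the hard-iron offsets from one raw (x, y, z) reading."""
    return tuple(value - offset for value, offset in zip(reading, offsets))


if __name__ == "__main__":
    raw = [(10, -5, 3), (30, 15, 7), (20, 5, 5)]  # made-up readings
    offsets = hard_iron_offsets(raw)
    print(offsets)                   # (20.0, 5.0, 5.0)
    print(correct(raw[1], offsets))  # (10.0, 10.0, 2.0)
```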

- With the IMU working, I added some "telemetry" to the display: two lateral scales show pitch and altitude, and a central bar shows the horizon. I don't use roll and yaw for the moment. I also show the ambient temperature in a corner.
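The log does not show how pitch and altitude are computed. One common approach, sketched here as an assumption: pitch from the direction of gravity measured by the accelerometer, and altitude from the barometer using the international barometric formula (the one given in Bosch's BMP085 datasheet; the GY-80 is usually sold with a BMP085, but check your board). Axis orientation and the sea-level reference pressure are assumptions that depend on how the IMU is mounted and on the weather.

```python
import math

# Standard-atmosphere sea-level pressure; a local QNH value gives a better absolute altitude.
SEA_LEVEL_PA = 101325.0


def pitch_degrees(ax, ay, az):
    """Pitch from a static accelerometer reading (any consistent unit).

    Assumes the x axis points forward and the sensor reads +1 g on z when
    lying flat; positive when the front tilts up. Only valid while the head
    is not accelerating.
    """
    return math.degrees(math.atan2(ax, math.sqrt(ay * ay + az * az)))


def altitude_m(pressure_pa, sea_level_pa=SEA_LEVEL_PA):
    """Altitude in metres from pressure, using the international barometric formula."""
    return 44330.0 * (1.0 - (pressure_pa / sea_level_pa) ** (1.0 / 5.255))


if __name__ == "__main__":
    tilt = math.radians(30)
    print(round(pitch_degrees(math.sin(tilt), 0.0, math.cos(tilt)), 1))  # 30.0
    print(round(altitude_m(101325.0), 1))  # 0.0
```

An accelerometer-only pitch gets noisy as soon as the head moves; fusing it with the gyro (for example with a complementary filter) is the usual next step.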

- Finally, I added a single button to the breakout board. The Python code I wrote can do a few things with this single button: a short press starts/stops the HUD script, a longer press reboots the device, and an even longer one powers it off. From now on, I no longer have to plug in a keyboard or access it remotely, except when developing on it.
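One way to get three actions out of a single button is to time how long it is held. A sketch under stated assumptions: the thresholds below are hypothetical, the button is polled rather than interrupt-driven, and no debouncing is done. `is_pressed` is a placeholder for whatever reads the pin.

```python
import time

# Hypothetical thresholds: the log does not give the actual durations.
REBOOT_AFTER_S = 2.0
POWEROFF_AFTER_S = 5.0


def classify_press(duration_s):
    """Map how long the button was held to an action name."""
    if duration_s >= POWEROFF_AFTER_S:
        return "poweroff"
    if duration_s >= REBOOT_AFTER_S:
        return "reboot"
    return "toggle_hud"


def wait_for_press(is_pressed, poll_s=0.01, clock=time.monotonic, sleep=time.sleep):
    """Block until the button is pressed and released; return how long it was held.

    `is_pressed` is any zero-argument callable returning True while the
    button is down. On a Pi with RPi.GPIO and a pull-up, it could be
    `lambda: GPIO.input(PIN) == GPIO.LOW`, PIN being the button's pin.
    """
    while not is_pressed():
        sleep(poll_s)
    start = clock()
    while is_pressed():
        sleep(poll_s)
    return clock() - start


if __name__ == "__main__":
    for held in (0.3, 3.0, 6.0):
        print(held, classify_press(held))
```

The action name can then be dispatched, for example by starting or stopping the HUD script, or by calling `subprocess.run(["sudo", "reboot"])` or `subprocess.run(["sudo", "poweroff"])`.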

So far, the device is running well. The biggest problem is the displays. Once I have upgraded to an HDMI display, I won't be limited by the SPI framerate anymore. I also plan to upgrade to a Raspberry Pi 2, to leave some room for OpenCV video processing. I have so many ideas at this point...

But even at 10fps and with a 240x320 resolution per eye, it's a great device, and I don't feel any VR sickness with it.

I will release the full code on my GitHub once the cleanup is finished.

One last detail: at one point I tried using a BeagleBone, because I have a very nice 7" 1024x600 display for it. But I quickly found that its USB bandwidth is also limited, and I could not capture at more than 10fps with a USB webcam. There was nothing I could do about it, so I abandoned the idea. I don't want to kill my only BeagleBone with a hack similar to the one I did on the Pi.

-

MkII: first hardware modifications

06/07/2015 at 23:29

The first version of the HMD had a few small problems:

- one screen flickers a little because of the connections

- the PS3 Eye camera's capture quality is not so great

- having parts sticking out on every side makes it hard to put the device down on a table

- some light gets into the device around the edges of the screens.

So I decided to make some modifications:

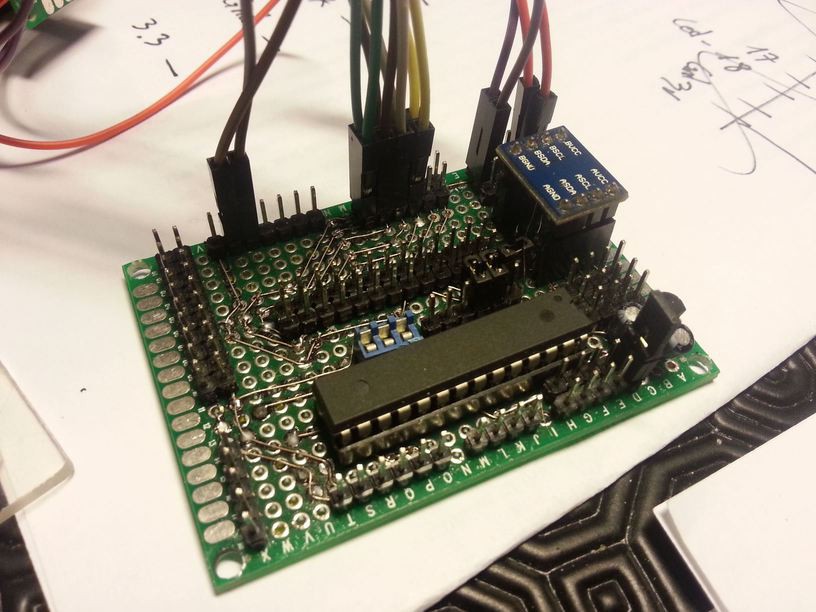

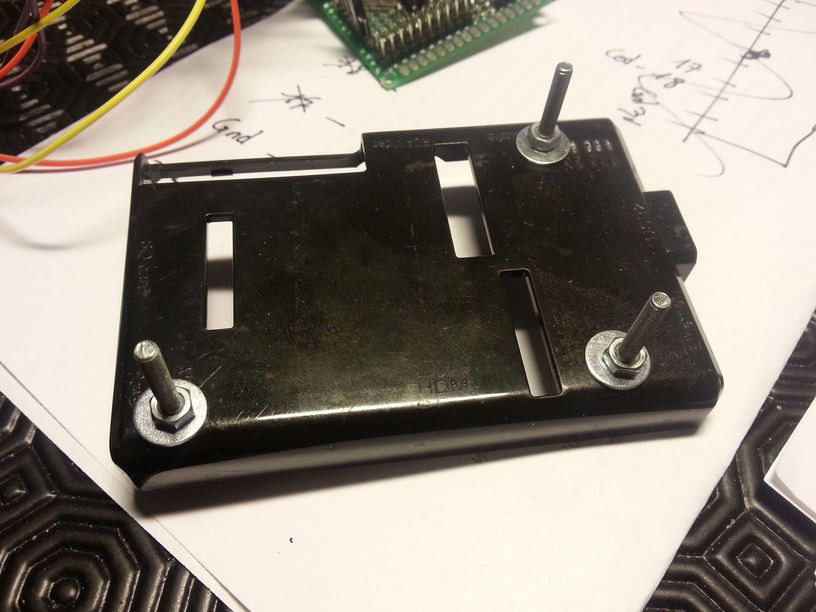

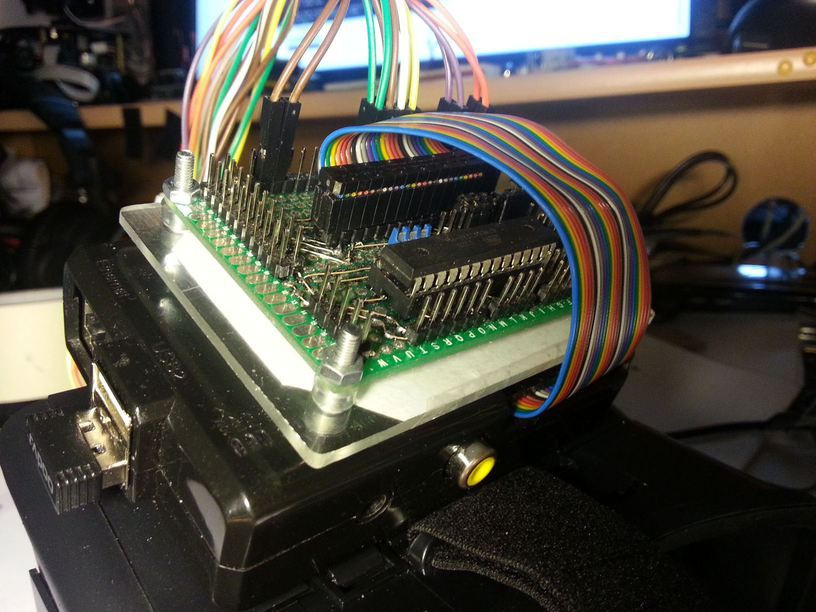

- I added my DIY Arduino hat (from my home automation project) because its connections are good, it has an I2C level shifter that will be useful, and both SPI channels are fully exposed. It also carries an ATmega328 running at 3.3V/8MHz, and a Microchip MCP1702 provides 3.3V to the board from the Raspberry Pi's 5V. I think I will build a new board without the ATmega, but with added features for the HMD (IMU, RGB and IR LEDs, on/off button, for instance).

- I replaced the PS3 camera with an Xbox 360 camera (with the IR filter and case removed).

- I made a cardboard "shell" that fits on top of the screens. It's painted black on the inside, and I added a mount for the camera module.

All these modifications allow me to put the Raspberry Pi on top of the HMD. It's far less bulky, more robust, and I can put it on a table...

With these modifications, the issues I was facing are completely gone: image quality is far better, no more light gets into the device, and the flickering has stopped.

Here are a few pictures, see more on this page.

Looks better, doesn't it?

-

Day 2, Mark I: assembling

05/28/2015 at 14:30

On Day 1, I got both SPI displays working in clone mode. They could reach 50fps, which is very good, and they seem to be synced (I will have to check this once the helmet is working).

Today, I'm starting to physically build the device.

Here is how both displays are connected :

Common to both displays:

- MOSI (pin 19)

- MISO (pin 21)

- SCLK (pin 23)

- 3.3V

- GND

For each display:

- the Raspberry Pi's SPI chip-select lines CE0 and CE1 (pins 24 and 26) each go to pin 24 (CE0) of one display,

- GPIO 24 (pin 18) and GPIO 25 (pin 22) each go to pin 22 of one display.
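The same wiring written as data, which can help when building the two adapters. Pin numbers are the physical header positions listed above; the pairing of CE0 with GPIO 24 and CE1 with GPIO 25 is an assumption, and the names `display_a`/`display_b` are hypothetical. Power (3.3V, GND) is left out.

```python
# Physical pin numbers on the 26-pin headers, as listed above.
# MOSI, MISO and SCLK are shared and use the same pin number on both ends.
SHARED_PINS = (19, 21, 23)

# Per-display lines as {Pi pin: display pin}. Which CE line pairs with which
# GPIO is an assumption; "display_a"/"display_b" are illustrative names.
DISPLAY_LINES = {
    "display_a": {24: 24, 18: 22},  # CE0 -> display pin 24, GPIO 24 -> display pin 22
    "display_b": {26: 24, 22: 22},  # CE1 -> display pin 24, GPIO 25 -> display pin 22
}


def adapter_map(name):
    """Full Pi pin -> display pin map for one adapter (signal lines only)."""
    wiring = {pin: pin for pin in SHARED_PINS}
    wiring.update(DISPLAY_LINES[name])
    return wiring


def check_no_conflicts():
    """Raise if a per-display Pi pin is reused or collides with the shared bus."""
    owner = {pin: "shared SPI bus" for pin in SHARED_PINS}
    for name, lines in DISPLAY_LINES.items():
        for pi_pin in lines:
            if pi_pin in owner:
                raise ValueError(f"Pi pin {pi_pin} used by {name} and {owner[pi_pin]}")
            owner[pi_pin] = name
    return True


if __name__ == "__main__":
    check_no_conflicts()
    for name in DISPLAY_LINES:
        print(name, adapter_map(name))
```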

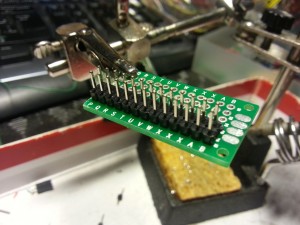

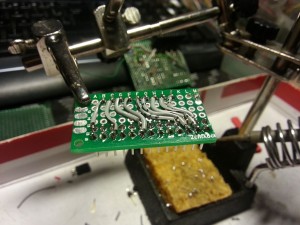

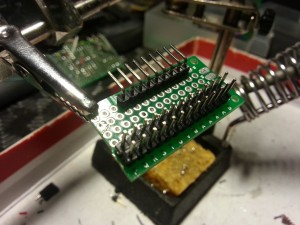

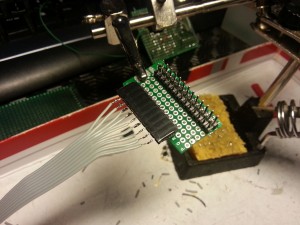

Because I want to reuse the displays in other projects in the future, I don't want to alter them. So I will build some adapters to go from each display's 26-pin connector to the Raspberry Pi's 26-pin connector.

Unfortunately, after a quick test in the shell, I noticed that I can't use the displays horizontally: they are too far apart to line up with the lenses. So I will use them vertically.

When used vertically, both displays fit perfectly in the ColorCross shell.

I can now test the lens alignment. Once perfect alignment is found, I will attach both displays to the shell with a little hot glue (it holds well and is easy to remove).

The displays appear rotated in the pictures, but the overlay I linked to in Day 1 corrects this.

Unsurprisingly, the view is pixelated, but that's not really important right now, and I have plans to drastically improve it in a future upgrade.

The good news is that the displays are perfectly synced! So this build is viable after all!

The PS3 Eye camera and the Raspberry Pi have been temporarily attached to the top and bottom of the shell with Velcro.

Very sexy, isn't it? :D

-

Day 1, Mark I: dual SPI displays, clone mode

05/23/2015 at 00:01

The first step is to get both SPI displays working in clone mode. I will call this first version "Mark I" :)

First, we have to physically connect them.

Then we have to build a new dual_hy28b_nots overlay. Each display and each touch panel uses its own SPI chip-select (CS) line, and the Raspberry Pi has only one SPI channel with two CS lines. Two displays plus two touch panels would need four CS lines, so we have to drop the touchscreens in order to enable both displays.

Finally, we will make some changes to X11 to allow a clone view on both SPI displays.

The full guide is available in French at this link.

I think you can easily follow the quoted sections; if not, please send me a message!

Arcadia Labs
