So this is another deal breaker for the project: I really want the template CPAP model in the Processing sketch to be modifiable using physical measurements taken with the calipers and a facial scan of the infant. Luckily, Processing can import an OBJ file. I downloaded a facial scan from the website of the manufacturer of the cheap 3D scanner I am using, and it was pretty straightforward to get Processing to display the scan.

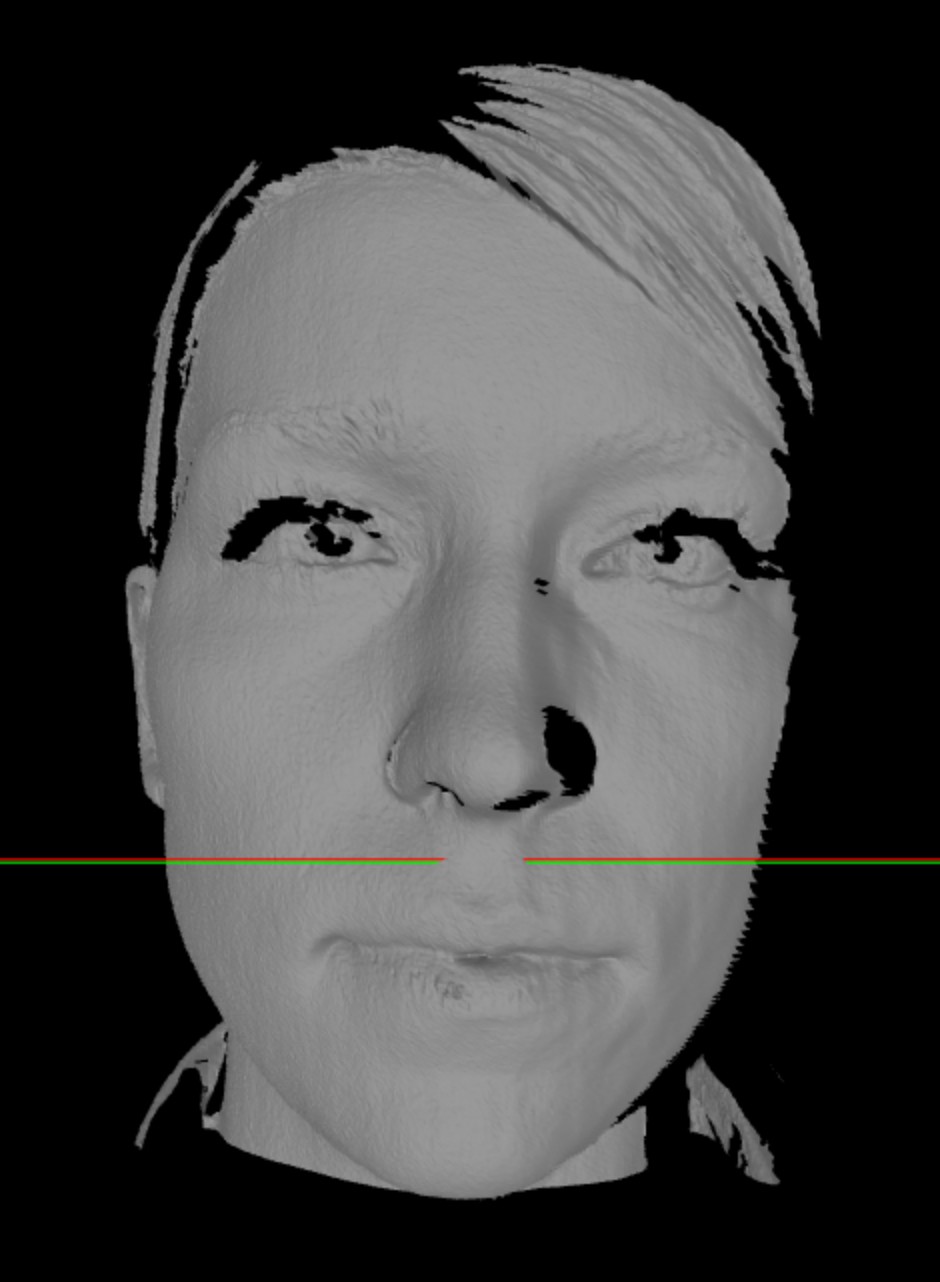

It's not a baby, but here's the output from some of my test code...

The red and green lines are just me stuffing around to locate the image in 3D space in the sketch (I had to manipulate the scale and location to get it to display properly).

Now, getting data out of the OBJ file so I can modify the CPAP template may prove a little more difficult.

My understanding is that the OBJ file is essentially a list of the x, y and z co-ords of each vertex, plus a list of faces that join those vertices into the mesh of the scanned surface. So I figure it should be possible to pull out the x and z co-ords of the vertices lying in a horizontal plane (a narrow band of y values) just below the nose in the scan. But to do this I need to understand how Processing imports the OBJ.
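As a rough illustration of the "band of y values" idea, here is a minimal sketch in plain Java that reads OBJ text directly rather than going through Processing. It assumes vertex lines have the standard `v x y z` form (any extra values after z, such as vertex colours, are ignored), and the class name `ObjVertexBand`, the `sliceY` height and the `tolerance` are all hypothetical choices, not part of the project code.

```java
import java.util.ArrayList;
import java.util.List;

/**
 * Hypothetical helper: collects the x and z coordinates of every OBJ vertex
 * whose y coordinate lies within +/- tolerance of a chosen slice height.
 */
public class ObjVertexBand {

    /** Returns {x, z} pairs for vertices with |y - sliceY| <= tolerance. */
    public static List<double[]> xzNearY(List<String> objLines, double sliceY, double tolerance) {
        List<double[]> result = new ArrayList<>();
        for (String line : objLines) {
            String t = line.trim();
            // Only geometric vertex lines ("v x y z ..."); "vt", "vn" and "f" lines are skipped.
            if (!t.startsWith("v ")) continue;
            String[] parts = t.split("\\s+");
            double x = Double.parseDouble(parts[1]);
            double y = Double.parseDouble(parts[2]);
            double z = Double.parseDouble(parts[3]);
            if (Math.abs(y - sliceY) <= tolerance) {
                result.add(new double[] {x, z});
            }
        }
        return result;
    }

    public static void main(String[] args) {
        // A tiny made-up OBJ fragment, just to exercise the filter.
        List<String> obj = List.of(
            "# tiny example",
            "v 0.0 1.00 0.5",
            "v 1.0 1.02 0.4",
            "v 2.0 3.00 0.1",
            "vt 0.5 0.5",
            "f 1 2 3");
        for (double[] p : xzNearY(obj, 1.0, 0.05)) {
            System.out.println(p[0] + ", " + p[1]);
        }
    }
}
```

Note that a scan's vertices rarely sit exactly on one y value, so the tolerance has to be wide enough to catch some vertices but narrow enough that the band still approximates a single plane; `sliceY` is in the OBJ file's own units, not the rescaled units used for display in the sketch.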

The OBJ file is imported as a PShape (via loadShape()), and PShape has functions to extract the vertices, such as getVertexCount() and getVertex().

So far I have been able to determine that the PShape of the scan above has 206,376 'children' (i.e. it is made up of 206,376 separate shapes). Importing the same scan into Blender shows that it has 206,376 faces, so each face appears to be handled in Processing as a separate child. I have tried a couple of ways to save the x and z co-ords that correspond to a particular y plane into an array, or even just to colour them red on the scan, but the sketch freezes. That may just be asking a bit much of Processing.
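Since each child appears to be one face, another option is to slice the faces rather than filter vertices: every triangle that straddles the plane y = sliceY contributes one short line segment, and together those segments trace the cross-section under the nose. The sketch below is plain Java and assumes triangular faces stored as three `{x, y, z}` arrays; the class and method names are hypothetical. One possibility worth ruling out for the freeze is doing a pass over all 206,376 children inside `draw()`, which Processing calls repeatedly; computing the slice once (for example in `setup()`) and caching the result avoids repeating that work every frame.

```java
import java.util.ArrayList;
import java.util.List;

/**
 * Hypothetical sketch: cross-section of a triangle mesh by the plane y = sliceY.
 * Each triangle is three {x, y, z} vertices; each triangle the plane cuts
 * contributes one segment, returned as {x1, z1, x2, z2}.
 */
public class MeshSlice {

    public static List<double[]> sliceAtY(List<double[][]> triangles, double sliceY) {
        List<double[]> segments = new ArrayList<>();
        for (double[][] tri : triangles) {
            double[] hits = new double[4];
            int n = 0;
            for (int i = 0; i < 3; i++) {
                double[] a = tri[i];
                double[] b = tri[(i + 1) % 3];
                // An edge crosses the plane when its endpoints are on different sides.
                // Counting "on the plane" as "above" gives exactly 0 or 2 crossings per triangle.
                boolean aAbove = a[1] >= sliceY;
                boolean bAbove = b[1] >= sliceY;
                if (aAbove != bAbove) {
                    // Linear interpolation along the edge; b[1] != a[1] is guaranteed here.
                    double t = (sliceY - a[1]) / (b[1] - a[1]);
                    hits[n++] = a[0] + t * (b[0] - a[0]); // x at the crossing
                    hits[n++] = a[2] + t * (b[2] - a[2]); // z at the crossing
                }
            }
            if (n == 4) {
                segments.add(hits);
            }
        }
        return segments;
    }

    public static void main(String[] args) {
        List<double[][]> tris = new ArrayList<>();
        tris.add(new double[][] {{0, 0, 0}, {2, 2, 0}, {0, 2, 2}});
        for (double[] s : sliceAtY(tris, 1.0)) {
            System.out.println("(" + s[0] + ", " + s[1] + ") -> (" + s[2] + ", " + s[3] + ")");
        }
    }
}
```

In the sketch itself, the triangle list would presumably be built once from each child's vertices (for example `shape.getChild(i).getVertex(j)`, which returns a PVector), on the assumption that every child is a three-vertex face.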

So not completely successful but some progress...

Ben Hartmann