Edit: the image was wrong, it's fixed now.

One of 3R's Achilles' heels is large numbers: efficiency drops linearly... 3R loves small numbers and eats through runs of zeroes, but large numbers have looked like an enemy to avoid.

Until today.

The trick is to use one extra piece of auxiliary information: the range of the numbers, that is, the maximum value that each one can have. The classic 3R algorithm ignores this information entirely, but adding it can change the whole behaviour...

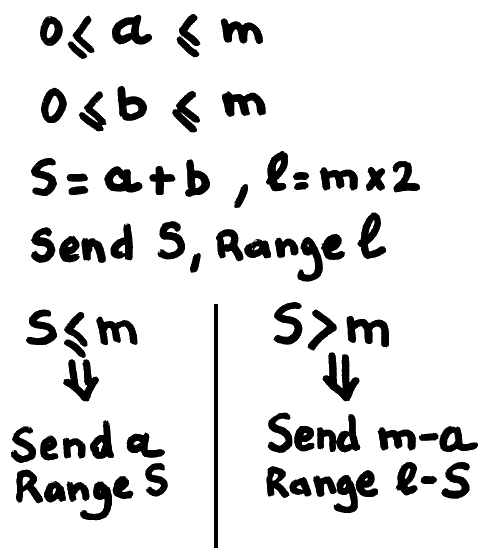

Here is the new algorithm to encode two numbers a and b, knowing their respective limit m:

This is a crazy breakthrough compared to sending S, then a with range S, every time!!!
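To make the gain concrete, here is a minimal Python sketch of one way the limit m can shrink the range of the second value. It assumes that a and b both lie in [0, m] and that S = a + b is sent first; under those assumptions a must lie in [S - m, m] whenever S > m, so m - a fits in [0, 2m - S]. The names `encode_pair` and `decode_pair` are hypothetical, and this is an illustration of the idea rather than a copy of the algorithm in the image.

```python
def encode_pair(a, b, m):
    """Return (s, x, limit): the sum, the value to send after it,
    and the inclusive upper bound of that value's range [0, limit]."""
    if not (0 <= a <= m and 0 <= b <= m):
        raise ValueError("a and b must both lie in [0, m]")
    s = a + b
    if s > m:
        # b <= m forces a >= s - m, so m - a lies in [0, 2m - s].
        return s, m - a, 2 * m - s
    # Small sums: same as the classic scheme, a lies in [0, s].
    return s, a, s


def decode_pair(s, x, m):
    """Inverse of encode_pair: recover (a, b) from the sum and the sent value."""
    a = m - x if s > m else x
    return a, s - a


if __name__ == "__main__":
    m = 255
    s, x, limit = encode_pair(200, 250, m)
    # Classic scheme: a would be coded with range s = 450.
    print(s, x, limit)
    print(decode_pair(s, x, m))
```

With m = 255, a = 200 and b = 250, the sum is 450 and the second value is coded in [0, 60] instead of [0, 450]. In the extreme case S = 2m, the range collapses to a single value and a costs nothing at all.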

With this new transform, many things I thought about 3R must be reconsidered...

This "trick" looks like it is somehow linked with "economy codes", and came to my attention while designing a new colorspace for use with 3R... More details are provided in the new dedicated project #σα code.

Nothing comes for free, however: the added compaction performance costs a conditional branch (likely to be mispredicted), a comparison and a subtraction. All of these add a bit of latency to the encoder AND the decoder, which will be a bit slower in FPGA (it's still crazy fast, but it might lose 50% of throughput).

Yann Guidon / YGDES
