Jam on air for real!

Rockin' on with the Litar.

Original photograph by Frank Schwichtenberg. Remixed under Creative Commons*.

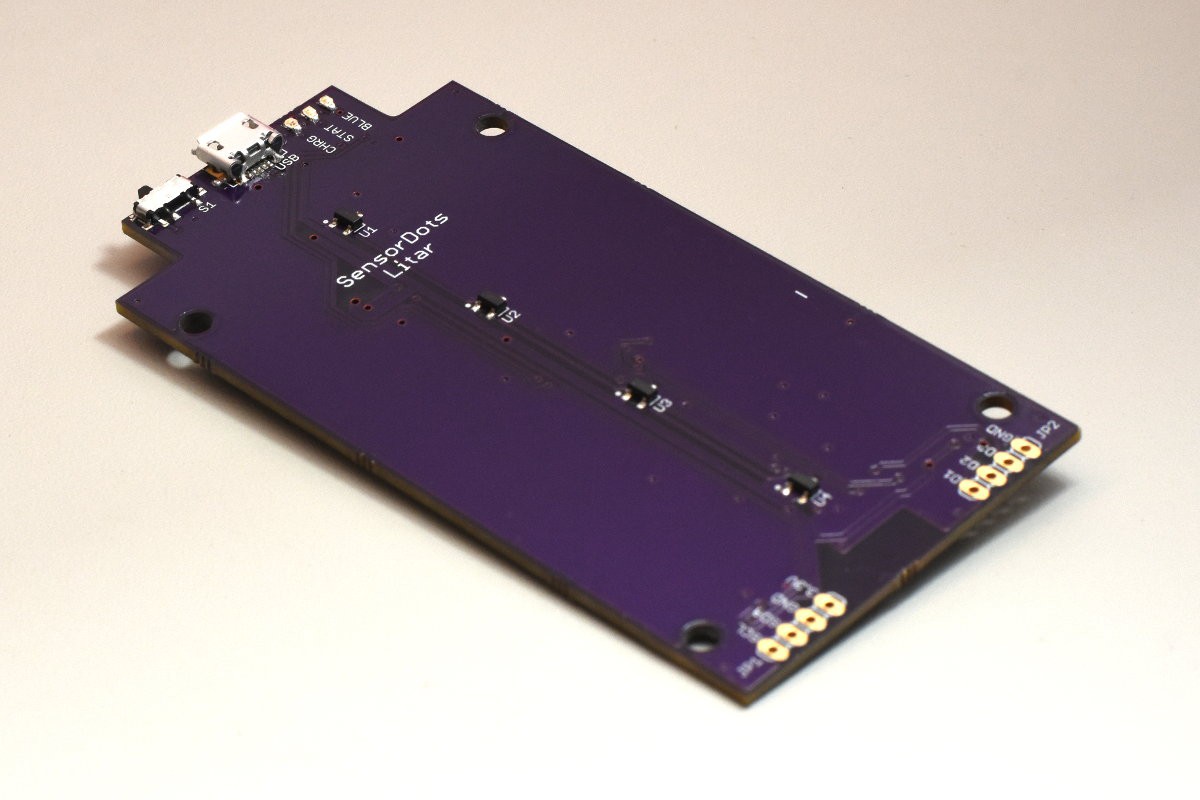

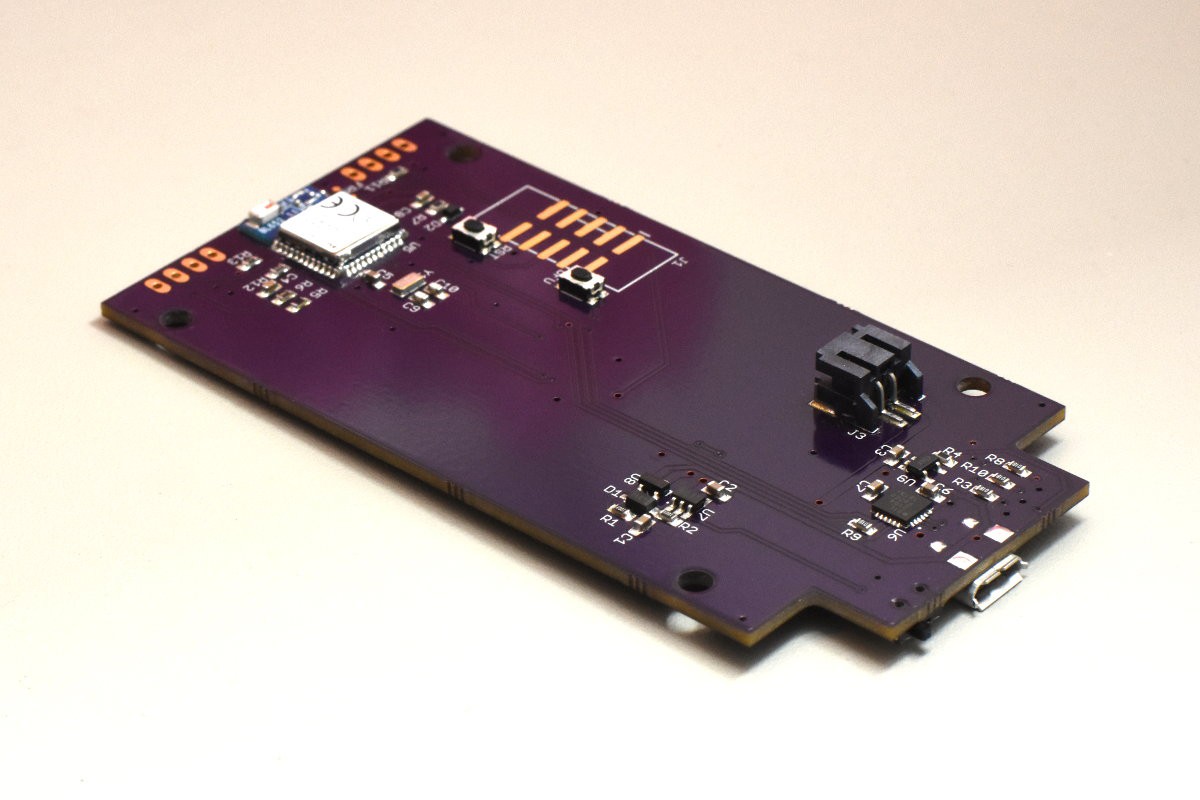

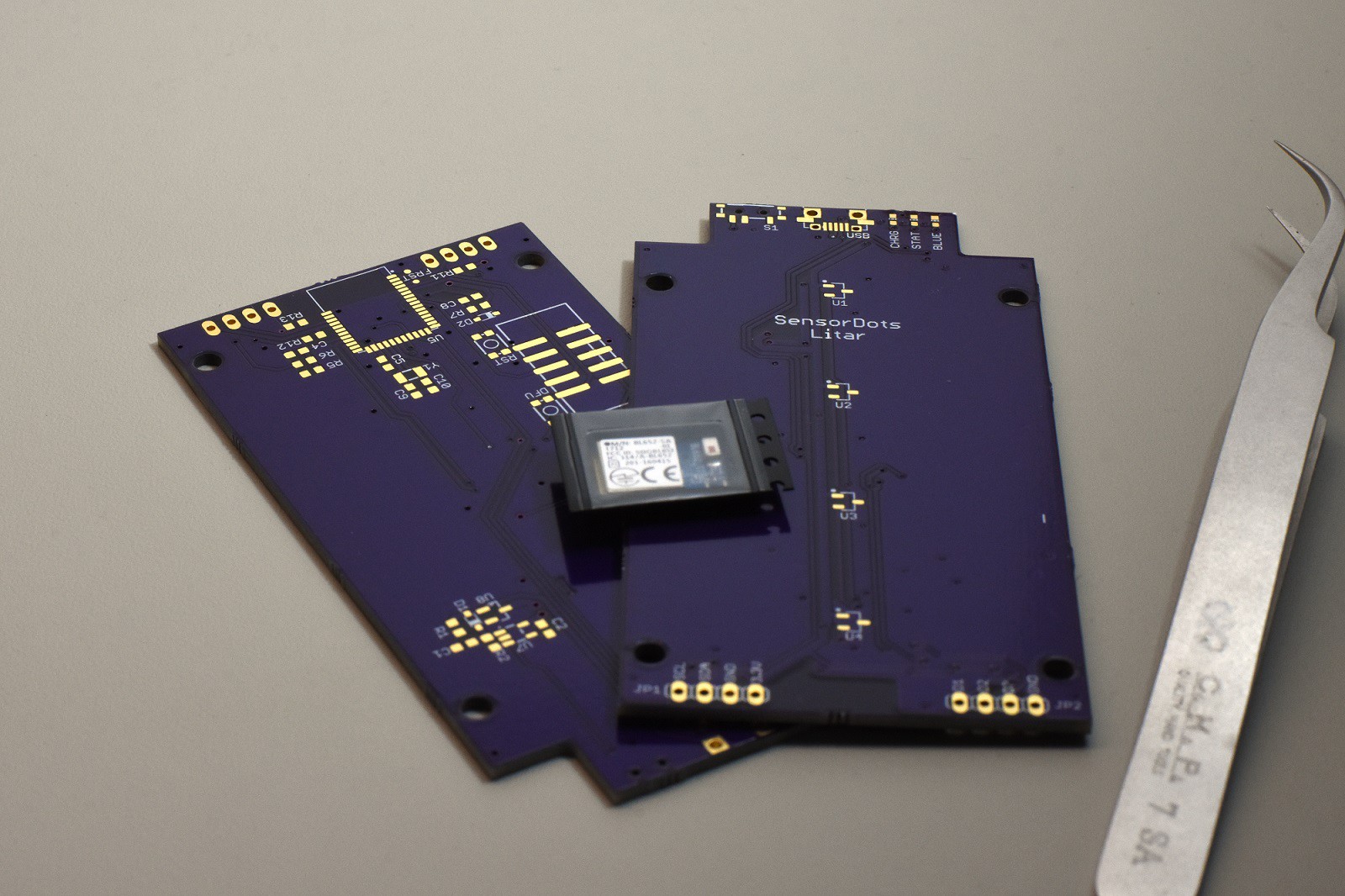

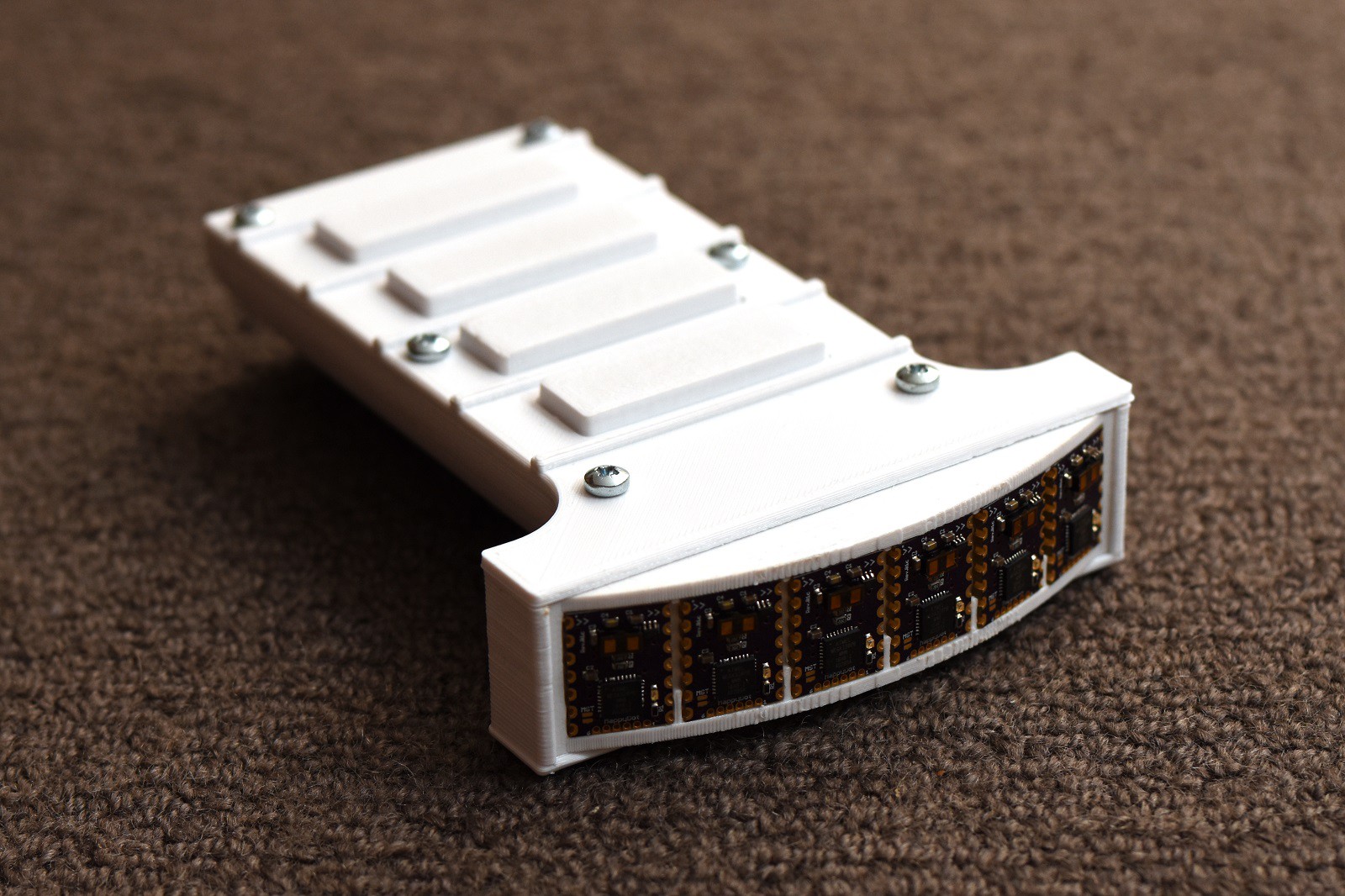

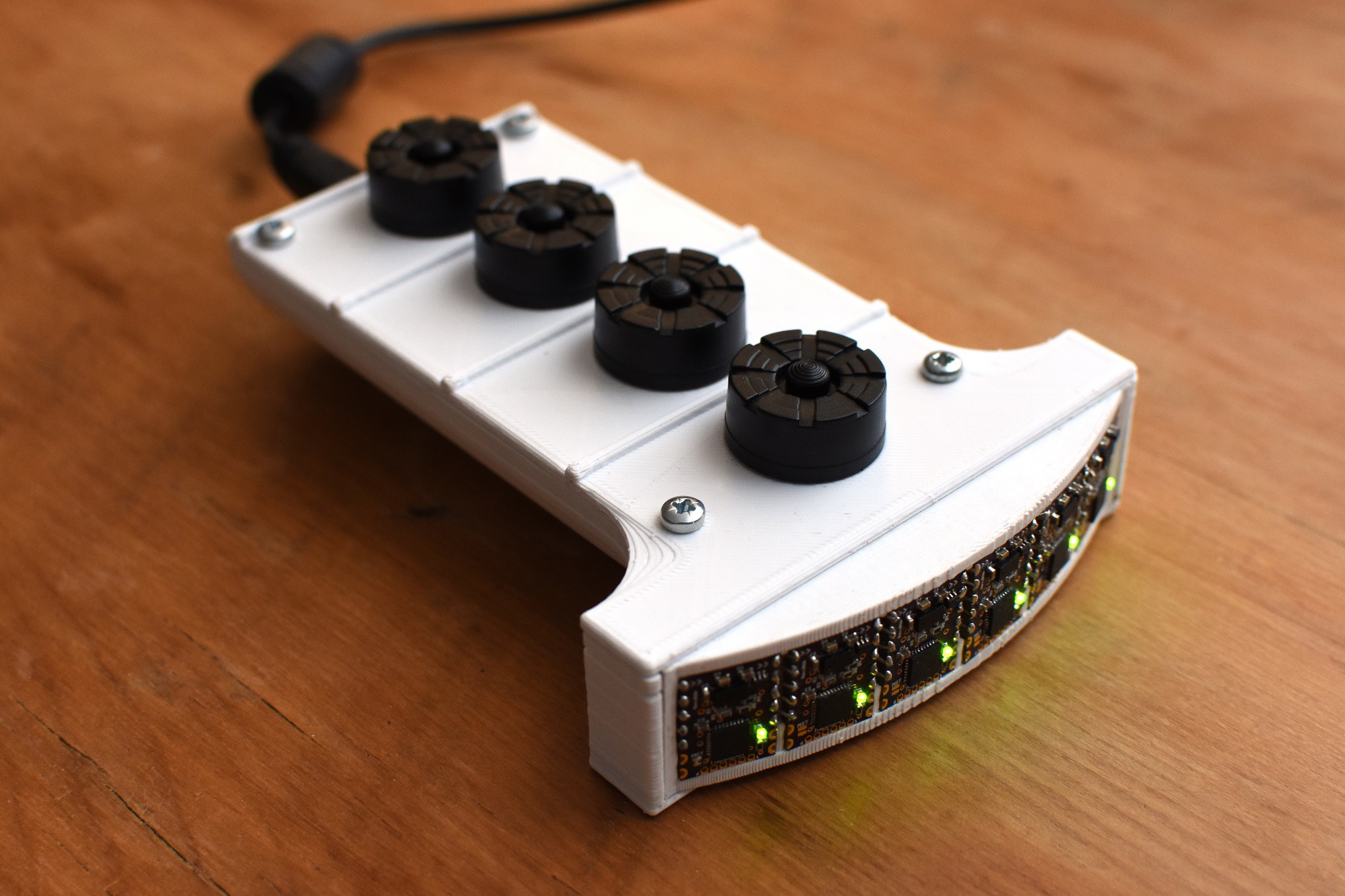

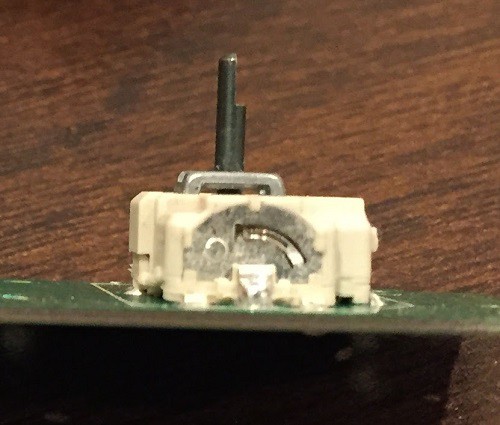

Building on last year's Hackaday Prize entry, the MappyDot, the Litar uses VL53L1X LiDAR sensors from ST Micro to create an array of air strings that you can interact with.

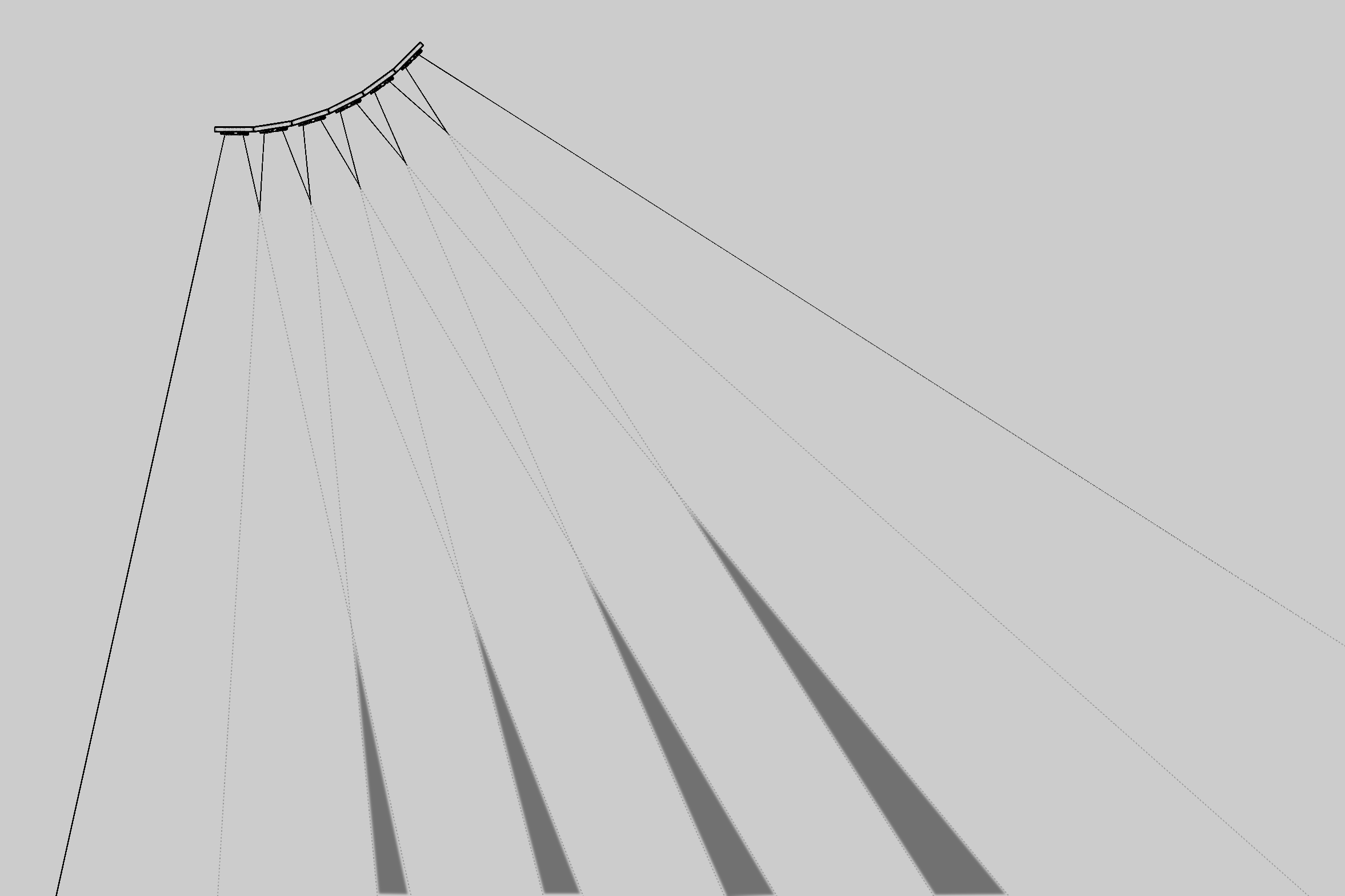

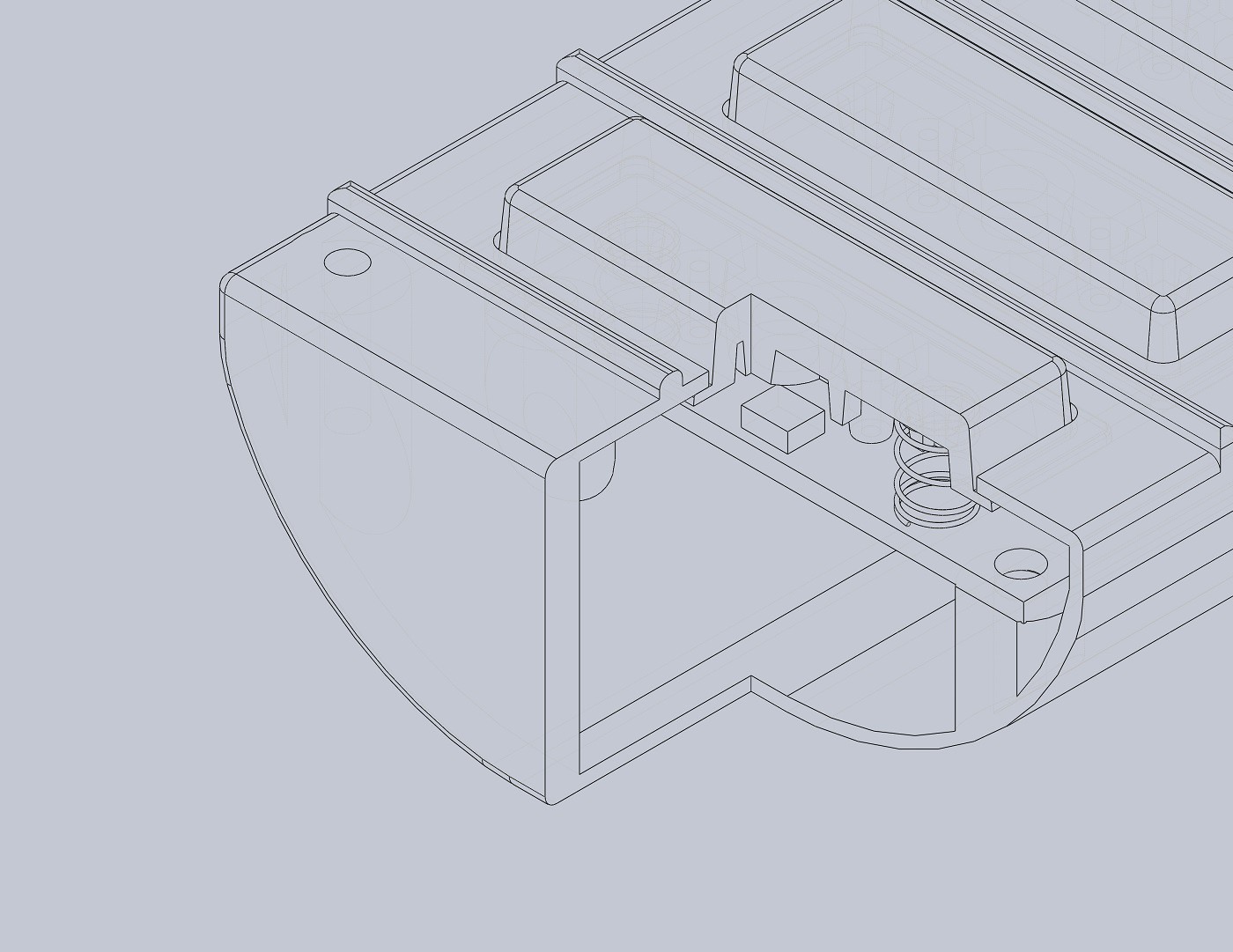

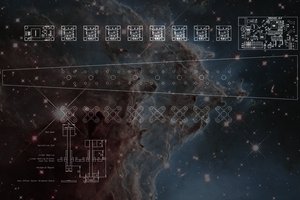

The sensors are aligned so that three sensors see a single string in space: two sensors define the string's borders and a third obtains the string distance. This ensures that you don't get mis-plucks, and it allows the strings to be packed more tightly than one- or two-sensor setups would (individual sensors have a 25 degree field of view). To visualise this, the image below shows the sensors' fields of view, with the grey areas highlighting the virtual air strings:

For each string, the Litar also obtains the distance at which the string was plucked, which can be fed directly into a MIDI channel for processing.
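As a rough illustration of the three-sensor idea and the distance-to-MIDI step, here is a minimal Python sketch. It is not the Litar firmware: the rule that a string only counts as plucked when both border sensors and the distance sensor all report a target, the range limits `MIN_RANGE_MM` and `MAX_RANGE_MM`, the linear scaling, and all function names are assumptions made for the example. The only fixed fact used is the standard MIDI Control Change layout (status byte `0xB0` plus channel, then two 7-bit data bytes).

```python
from typing import Optional

# Assumed playable range in millimetres; illustrative values, not from the project.
MIN_RANGE_MM = 50
MAX_RANGE_MM = 600


def in_range(distance_mm: Optional[int]) -> bool:
    """True when a sensor reports a target inside the assumed playable range."""
    return distance_mm is not None and MIN_RANGE_MM <= distance_mm <= MAX_RANGE_MM


def pluck_distance(border_a_mm: Optional[int],
                   border_b_mm: Optional[int],
                   centre_mm: Optional[int]) -> Optional[int]:
    """Return the pluck distance only if all three sensors see a target.

    Assumption: the two border sensors act as a gate and the third
    sensor supplies the distance that gets reported.
    """
    if in_range(border_a_mm) and in_range(border_b_mm) and in_range(centre_mm):
        return centre_mm
    return None


def distance_to_cc_value(distance_mm: int) -> int:
    """Linearly scale a distance onto the 7-bit MIDI data range 0..127."""
    clamped = min(max(distance_mm, MIN_RANGE_MM), MAX_RANGE_MM)
    span = MAX_RANGE_MM - MIN_RANGE_MM
    return round((clamped - MIN_RANGE_MM) * 127 / span)


def control_change(channel: int, controller: int, value: int) -> bytes:
    """Build a raw 3-byte MIDI Control Change message."""
    return bytes([0xB0 | (channel & 0x0F), controller & 0x7F, value & 0x7F])


if __name__ == "__main__":
    distance = pluck_distance(300, 310, 305)
    if distance is not None:
        message = control_change(0, 1, distance_to_cc_value(distance))
        print(message.hex())
```

Gating on all three sensors is one plausible way to reject a hand that only clips the edge of a single field of view; a real implementation would likely also need debouncing across successive readings.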

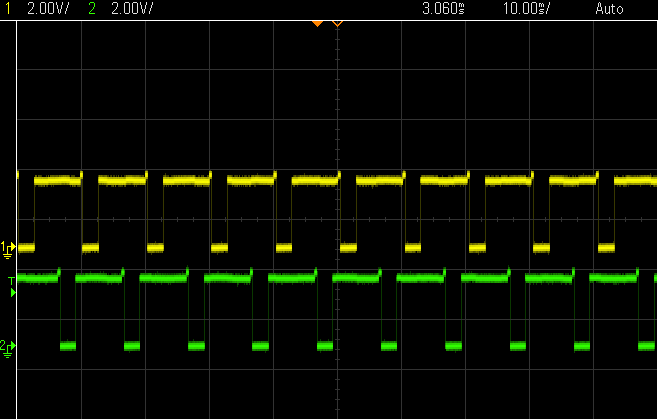

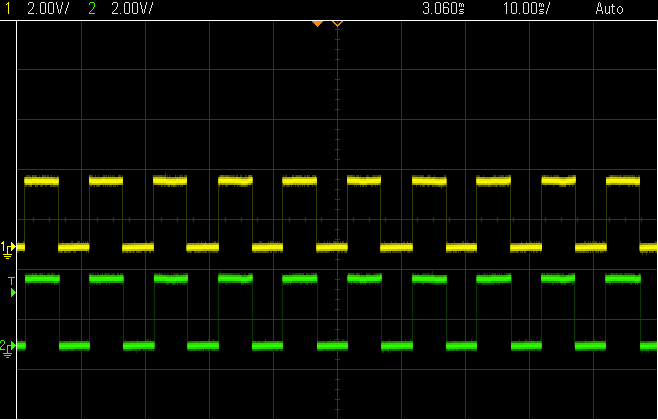

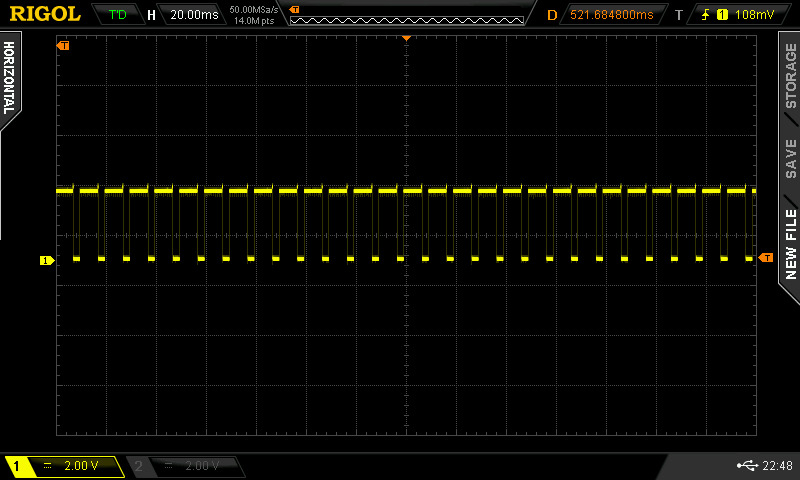

The Litar isn't just about a sensing array though. Smart algorithms need to process the incoming values with the lowest latency possible before you're smooth sailing. The human brain generally copes well with latency under 50 ms, although anything over 20 ms is considered perceivable by some. The sensors themselves measure at 100 Hz (one reading every 10 ms), so there's not much room to breathe. Throw Bluetooth into the mix and you're on the highway to hell'a bad latency if you're not careful.
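To make the budget concrete, the arithmetic can be sketched in a few lines of Python. The 100 Hz rate and the 20 ms and 50 ms targets come from the paragraph above; the function names and the `other_ms` parameter (a stand-in for processing or wireless transport time, for which no figures are given here) are hypothetical.

```python
def sample_period_ms(rate_hz: float) -> float:
    """Time between two sensor readings, in milliseconds."""
    return 1000.0 / rate_hz


def remaining_budget_ms(target_ms: float, rate_hz: float, other_ms: float = 0.0) -> float:
    """Latency left after waiting up to one sample period and any other known delays.

    other_ms is a placeholder for stages such as processing or Bluetooth
    transport; measure it on real hardware rather than guessing.
    """
    return target_ms - sample_period_ms(rate_hz) - other_ms


if __name__ == "__main__":
    for target in (20.0, 50.0):
        left = remaining_budget_ms(target, 100.0)
        print(f"target {target:.0f} ms: {left:.0f} ms left after sampling")
```

At 100 Hz a pluck can wait up to one full 10 ms period before it is even measured, so a 20 ms target leaves about 10 ms for everything else, while a 50 ms target leaves about 40 ms.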

Coupled with this requirement is the design of an ergonomic grip and chord input. Various prototypes have been tested already, but it's a long way to the top if you want to design an easy-to-use, strain-free interface that fits most people.

Once everything is working, hardware kits will be made available so you can get your hands on one and strum away.

All Litar code on these project pages or in subsequent code repositories is licensed under the GNU General Public License (GPL) unless otherwise stated. Documentation on these project pages is licensed under the Creative Commons Attribution-ShareAlike 4.0 license.

* Modifications to the image include cropping and adding the Litar in hand.

Blecky

Minimum Effective Dose

CaptMcAllister

oneohm

ventosus

Your idea is great! Clever how you arranged the distance sensors. Some time ago I was working on a project, since abandoned, that used an IR distance sensor and a dsPIC to play a sound.

I am looking forward to seeing a video of it in action, as I am curious how it sounds.

Great project!