Background and Objectives

Modern music could benefit from the availability of new, unorthodox input controllers. This project aims to address each of the following issues with contemporary instruments:

Difficulty of construction and development

Traditional instruments - the violin, horn, and piano, for instance - were historically crafted by experts who devoted their lives to guaranteeing sound quality. Conversely, electronic instruments can be specified and manufactured relatively easily, but most remain closed-source in design. Moreover, neither of these classes of instruments is easy to modify without domain-specific knowledge.

WhistleSynth should be simple enough in design for an average hobbyist to construct, likely using off-the-shelf components and manufacturing processes available to makers. Its designs should be openly available to the public.

Portability

Transporting instruments can be an endeavor. For large electronic setups, there is often a high setup time required for configuring signal paths (MIDI or analog), and an additional risk of theft, since setups are often expansive. For traditional instruments, transportation is risky due to the threat of damage to the instrument, especially for large-bodied string instruments like cellos.

WhistleSynth should be easy to transport, quick to set up at a venue, and light enough to continue playing for long durations.

Accessibility and required skill level

Without musical training or years of instrument practice, making complex music can be challenging. At the same time, quality equipment can be prohibitively expensive. FOSS music composition software attempts to address some of these issues, but is often complex, and compromises on the real-time expression associated with performance music.

WhistleSynth should have an approachable interface that sacrifices neither nuanced performance nor affordability.

Utilization of expression vectors

Humans have a limited number of ways in which they can physically express themselves: "expression vectors". This matters for performance artists - when a musician's expression vectors are fully utilized, they can't create any more simultaneous voices. Both classically and today, most musical UI elements (buttons, knobs, strings, etc.) are designed with the hands in mind, leaving precious few other expression vectors available.

WhistleSynth should require only the mouth as its controlling expression vector, and should actively avoid occupying the hands. In this way, the performer can use them for other inputs.

Music system compatibility

Modern digital music synthesis has been transformative, enabling complex composition and sound design. Even classical instruments have been adapted (via external attachments) to be able to be sampled and filtered via contemporary techniques. It would be a shame for a new instrument to lack native compatibility with this environment.

WhistleSynth should be able to interface with modern digital audio workstation environments. It doesn't necessarily need to be a true sound synthesizer; it just needs to be capable of sending control signals to systems which will perform sound synthesis.

Prior Art

Ugo Conti - Whistle Synthesizer

Ugo Conti's patent US4757737, "Whistle Synthesizer", details the following device:

A whistle synthesizer comprises a microphone, a housing, system electronics within the housing, and controls outside the housing. The player whistles into the microphone, the signals from which are processed to provide an instrument output signal suitable for communication to an external amplifier. The controls are located within easy reach of the player so that they may be manipulated all the while the player is whistling.

Conti provides several figures detailing the implementation of this instrument.

The date of patent is July 19, 1988. Under US law, patents filed before June 8, 1995 have a term of 17 years from grant or 20 years from filing, whichever is longer. By either measure this patent has expired, so it should be legally acceptable to use features of this system as inspiration or directly in the proposed solution.

Conti describes several advantages of this instrument format:

- It enables those who cannot play a piano or wind instrument to take advantage of the audio filtering and tuning provided by synthesized sound.

- The instrument is self-contained, and does not require time-consuming configuration or setup.

- The instrument leverages the human whistle as its primary input; the whistle provides time-domain data (ADSR) as well as frequency-domain data (mono-tonal pitch).

There are several drawbacks, however:

- Since the device is a self-contained synthesizer without patch bays, it cannot be extended without additional external filtering.

- It has no digital interface, so using it with digital environments requires sampling its output, which cannot reproduce the original pure whistle frequency.

- The device still requires the user's hands to play.

- The device implementation is purely in the analog domain, which is harder to maintain and/or extend in functionality.

Patricio Cordova - Human Whistle Interface

In his paper, "Human Whistle Interface", Patricio Cordova proposes to use the human whistle as a user interface control element on mobile devices. His argument is that voice recognition for command execution is unnecessarily complex for the tasks that the technique is currently capable of performing consistently. In addition, vocal information is difficult to parse accurately across languages and dialects; whistle tones are cross-cultural, and have much less spectral information by comparison.

His proposed implementation is a smartphone application that takes pre-amplified microphone data, performs a windowed Fourier transform, and churns the resulting spectral information through statistical analysis.

Advantages:

- Mobile devices are ubiquitous and therefore accessible.

- Implementation can be in pure software; zero maintenance required, and adding new functions only requires familiarity with the code base.

- Does not require hands as input, except to bring the phone mic close enough to parse input.

Disadvantages:

- The solution is over-engineered in the context of sound generation. Most mobile phones have far more processing power, and therefore higher power consumption, than this project requires.

- Mobile phone architecture, performance, and hardware are not consistent between models.

- High latency when attempting to connect with desktop synthesizer environments.

Wintergatan - Modulin

Martin from Wintergatan debuted a new instrument this past year named the "Modulin", which has the form-factor of a violin and the sound processing of a modular analog synthesizer. The instrument features a linear potentiometer which senses the position and pressure of a single contact finger on its surface. This pair of input values is transduced and used to drive inputs to a modular synthesis rack using the "ribbon to MIDI interface". From there, the signal can be transformed based on the modules in the rack.

Advantages:

- Output can be modulated on the fly by adjusting rack module settings.

- Expression is highly nuanced due to the multi-mode nature of the input control.

Disadvantages:

- Requires both hands to play with full effectiveness.

- High cost (modular synthesizers are not cheap).

Onyx Ashanti - "Beatjazz" instrument

Onyx Ashanti's "Beatjazz" instrument is a full-body system encompassing hand-based inputs (buttons, joysticks, orientation sensors), an electronic wind input at the mouth, electrodes for trans-cranial direct stimulation as physical feedback, and (planned) pressure sensors at the feet. Clearly, he understands the need to expand the selection of expression vectors available to performers. The system is capable of driving a myriad of digital audio environments (especially powerful desktop-based DAWs), though Ashanti's chosen system is often a built-in Raspberry Pi software synthesizer. His TED talk, "Thoughts and insights on engineering a sonic fractal matrix" details his development process.

Advantages:

- Highly portable; the instrument is a series of wearable devices that each expose a different human-machine interface for musical interaction.

- High utilization and efficiency of expression vectors, with high flexibility.

- Open-sourced and highly compatible with other music systems (relies on digital protocols).

Disadvantages:

- Fairly complex system (necessarily so).

- The wind controller only takes advantage of amplitude control, though the mouth can also modulate frequency.

Solution and Features

WhistleSynth shall be an FPGA-based MIDI controller driven by whistling. The proposed behaviors are as follows:

- Required: Convert identified human whistle frequency to quantized MIDI note on/off, with amplitude supplied as velocity.

- Required: Convert any frequency difference truncated by quantizing into a virtual MIDI pitch bend wheel.

- Required: Octave shifting / transposition. This is necessary for handling outputs in octave ranges which the human whistle cannot handle (most low frequencies).

- Optional: Sense vibrato and express it as purely a virtual MIDI pitch bend wheel change (to avoid requiring high-frequency note change).

- Optional: Additional quantization options (to specific ranges, or aligning to specific scales).

- Optional: External note cutout for simulating staccato notes.

- Optional: Manual pitch bend wheel, which sums with the virtual pitch bend.

- Optional: Secondary input interface for additional configuration.
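As an illustration of the optional scale-aligned quantization listed above, the following is a minimal Python sketch, not the project's HDL. The name `snap_to_scale`, the `C_MAJOR` example scale, and the rule that ties resolve to the lower note are all assumptions made for this example.

```python
# Pitch classes (semitones above C) of an example scale; hypothetical default.
C_MAJOR = (0, 2, 4, 5, 7, 9, 11)


def snap_to_scale(note, scale=C_MAJOR):
    """Return the nearest MIDI note (0..127) whose pitch class is in `scale`.

    Ties between an equally distant lower and upper note resolve to the
    lower note (an arbitrary choice for this sketch).
    """
    candidates = [
        n for n in range(note - 6, note + 7)
        if 0 <= n <= 127 and n % 12 in scale
    ]
    return min(candidates, key=lambda n: (abs(n - note), n))
```

For example, a whistled C#4 (MIDI note 61) would be emitted as C4 (60) under these assumptions, while notes already in the scale pass through unchanged.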

Advantages:

- Using an FPGA to implement signal processing in the digital domain has a number of advantages:

  - Less hardware configuration and tuning will be needed to account for tolerances in analog components.

  - There will be fewer components in the system, allowing for smaller form-factors and easier assembly.

  - Since FPGAs can be highly parallelized, adding new functionality does not impede performance. In addition, adding new functionality only requires connecting external hardware (if even necessary) and modifying the HDL design.

- Since implementations of digital signal processing systems can be incredibly small, and the input device is a generic microphone, the system is highly portable. The only hardware requirement is a system that can interpret MIDI to synthesize the output sound (a Raspberry Pi would do).

- By using the MIDI standard, FOSS music synthesis software, and open-source hardware, WhistleSynth mitigates cost-related barriers to entry.

- Using the human whistle as an input method has distinct advantages:

  - Anyone who can already whistle now has the ability to emulate other instruments without any additional training.

  - The hands can be kept free as a separate expression vector.

Disadvantages:

- FPGA design verification is not as simple as with traditional microcontrollers.

- Using the human whistle as input does prevent simultaneous vocalizing.

- The human whistle is less capable of hitting short-duration notes than other instruments, so there are some sounds it won't be able to recreate well.

- MIDI quantization causes some of the acoustic nuance of the whistle dynamics to be lost.

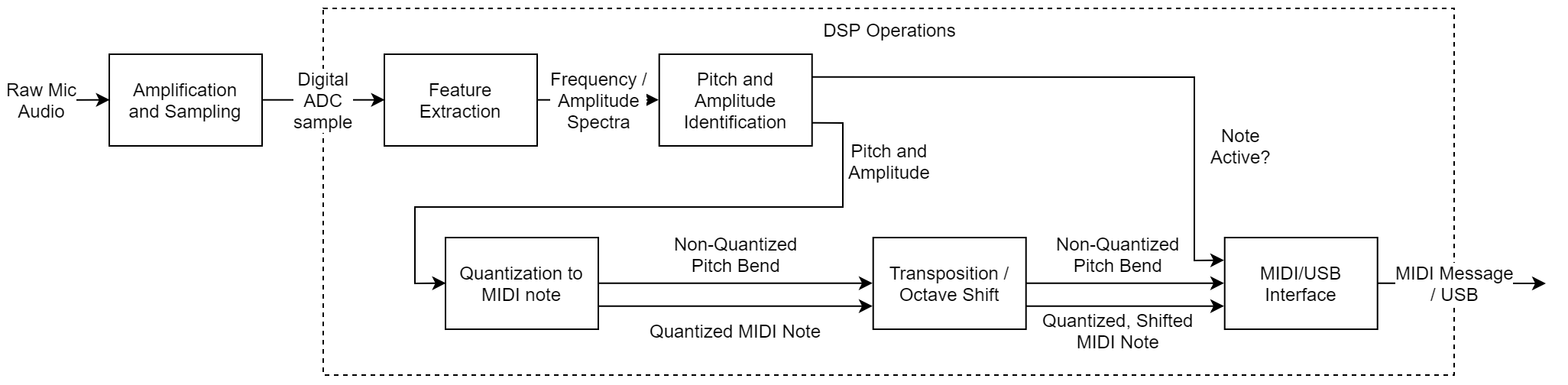

Architecture

Amplification and Sampling: This subsystem takes mono-channel audio from an audio jack, amplifies the signal, and samples it into the digital domain.

Feature Extraction: This subsystem produces frequency spectra for the sampled sound, from which the singular musical pitch (and its corresponding amplitude) can be determined.

Pitch and Amplitude Identification: This subsystem determines the pitch that was whistled and its associated amplitude (perhaps with filtering).
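One way the feature extraction and pitch identification stages could work is sketched below in Python, purely to illustrate the signal flow; the actual design targets an FPGA and may use a different method. The sketch assumes a power-of-two frame length, a Hann window, and parabolic interpolation around the strongest spectral bin. The function names `fft` and `detect_pitch` are hypothetical.

```python
import cmath
import math


def fft(samples):
    """Recursive radix-2 FFT; len(samples) must be a power of two."""
    n = len(samples)
    if n == 1:
        return list(samples)
    even = fft(samples[0::2])
    odd = fft(samples[1::2])
    out = [0j] * n
    for k in range(n // 2):
        twiddle = cmath.exp(-2j * math.pi * k / n) * odd[k]
        out[k] = even[k] + twiddle
        out[k + n // 2] = even[k] - twiddle
    return out


def detect_pitch(samples, sample_rate):
    """Return (frequency_hz, magnitude) of the strongest spectral peak."""
    n = len(samples)
    # A Hann window reduces spectral leakage from the frame edges.
    windowed = [
        s * 0.5 * (1 - math.cos(2 * math.pi * i / (n - 1)))
        for i, s in enumerate(samples)
    ]
    # Only the first half of the spectrum is unique for real input.
    mags = [abs(c) for c in fft(windowed)[: n // 2]]
    # Skip bin 0 (DC) and the last bin so both neighbours exist.
    peak = max(range(1, n // 2 - 1), key=lambda k: mags[k])
    # Parabolic interpolation refines the peak position between bins.
    a, b, c = mags[peak - 1], mags[peak], mags[peak + 1]
    denom = a - 2 * b + c
    offset = 0.5 * (a - c) / denom if denom != 0 else 0.0
    return (peak + offset) * sample_rate / n, b
```

Because a whistle is close to a single tone, picking the largest bin is a plausible starting point; filtering or smoothing across frames, as the description above hints, would sit on top of this.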

Quantization to MIDI Note: This subsystem maps the determined pitch to the closest MIDI note. In addition, any pitch difference lost through quantization should be expressed as a pitch bend value on top of the MIDI note output.
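The arithmetic behind this stage can be sketched as follows. This is an illustrative Python sketch, not the HDL implementation. It assumes equal temperament with A4 = 440 Hz (MIDI note 69) and a receiver pitch bend range of +/- 2 semitones, which is a common default but is configurable on most synthesizers. The name `quantize` is hypothetical.

```python
import math

A4_HZ = 440.0               # reference tuning (assumption)
A4_NOTE = 69                # MIDI note number of A4
BEND_CENTER = 8192          # 14-bit pitch bend value meaning "no bend"
BEND_RANGE_SEMITONES = 2.0  # assumed receiver bend range (+/- 2 semitones)


def quantize(freq_hz):
    """Map a frequency to (midi_note, pitch_bend), with pitch_bend in 0..16383."""
    # Fractional MIDI note number on the equal-tempered scale.
    exact = A4_NOTE + 12 * math.log2(freq_hz / A4_HZ)
    note = round(exact)
    # Residual in semitones (about -0.5..0.5) that rounding discarded.
    residual = exact - note
    bend = BEND_CENTER + round(residual / BEND_RANGE_SEMITONES * 8192)
    # Clamp both values to their legal MIDI ranges.
    note = max(0, min(127, note))
    bend = max(0, min(16383, bend))
    return note, bend
```

With these assumptions, a whistle at exactly 440 Hz yields note 69 with a centered bend of 8192, and a slightly sharp whistle yields the same note with a bend above center.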

Transposition / Octave Shift: This subsystem shifts the output MIDI note according to input transposition settings. This component also includes input UI elements for dynamically changing transposition.
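A minimal Python sketch of the shift itself is shown below, assuming the transposition settings arrive as an octave count plus a semitone offset. The name `transpose` and the choice to drop out-of-range notes instead of clamping them are assumptions for this example.

```python
def transpose(note, octaves=0, semitones=0):
    """Shift a MIDI note; return None if the result leaves the 0..127 range."""
    shifted = note + 12 * octaves + semitones
    return shifted if 0 <= shifted <= 127 else None
```

For instance, shifting a whistled C6 (MIDI note 84) down three octaves gives C3 (48), which reaches a register the whistle itself cannot.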

MIDI/USB Interface: This subsystem provides the MIDI over USB engine for writing MIDI Note messages and MIDI Pitch Bend messages as output over the USB interface.
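The messages involved are small. The Python sketch below shows the byte layout of MIDI Note On, Note Off, and Pitch Bend messages, and how each could be wrapped in a 4-byte USB-MIDI 1.0 event packet. It illustrates the formats only; the function names are hypothetical, and the real interface would be implemented in the FPGA design.

```python
def note_on(note, velocity, channel=0):
    """Note On: status 0x9n, then 7-bit note number and velocity."""
    return bytes([0x90 | channel, note & 0x7F, velocity & 0x7F])


def note_off(note, channel=0):
    """Note Off: status 0x8n, then 7-bit note number and a zero velocity."""
    return bytes([0x80 | channel, note & 0x7F, 0])


def pitch_bend(bend, channel=0):
    """Pitch Bend: status 0xEn, then the 14-bit value as LSB and MSB."""
    return bytes([0xE0 | channel, bend & 0x7F, (bend >> 7) & 0x7F])


def usb_midi_packet(message, cable=0):
    """Wrap a 3-byte channel voice message in a USB-MIDI event packet.

    The header byte is (cable number << 4) | code index number; for channel
    voice messages the code index equals the high nibble of the status byte.
    """
    return bytes([(cable << 4) | (message[0] >> 4)]) + message
```

A centered pitch bend (8192) therefore encodes as the bytes E0 00 40 on channel 0.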

Implementation details will be left in individual project log updates.

Michael Erberich