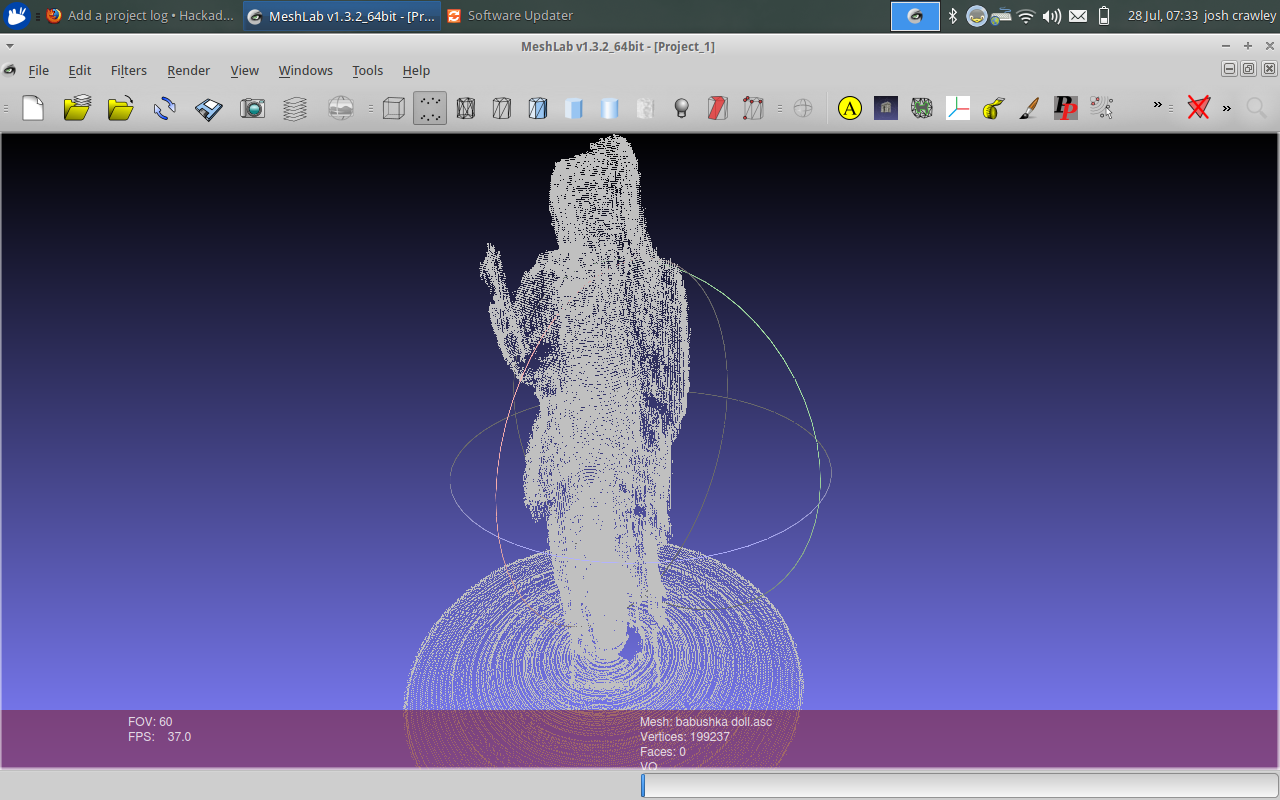

Right now, you can be up and running with your own 3D scanner! I have the hardware design, the Arduino sketch, and the Processing programs all available.

What makes my project different from other 3D scanning projects: I solve the point cloud for an arbitrary camera position (horizontal: -pi/2 < X < pi/2, vertical: 0 < Y < pi/2)! I know of no other projects that do this. It also only requires knowing the center of the platter and the angle of the laser line relative to vertical.
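For comparison, here is a minimal Java sketch of the common simplified case that many turntable scanners assume. It is not the arbitrary-position solver described above. It assumes a level camera aimed at the platter's rotation axis, an orthographic approximation (no perspective or lens distortion), and a laser plane that contains the rotation axis. `laserAngle` here is the angle between the camera's optical axis and the laser plane, which may not match how my own setup measures the laser angle. All names (`toPoint`, `frameToPoints`, `unitsPerPixel`, `baseY`) are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch of the simple "level camera" case, NOT the arbitrary-position solver.
final class TurntableTriangulation {

    /** A 3D point; units match whatever unitsPerPixel converts pixels into. */
    record Point3(double x, double y, double z) {}

    /**
     * Converts one laser pixel into a 3D point (assumed geometry, see lead-in).
     * centerX: image column of the platter's rotation axis.
     * baseY: image row of the platter surface.
     * laserAngle: angle between camera axis and laser plane, radians (must be nonzero).
     * platterAngle: current turntable rotation, radians.
     */
    static Point3 toPoint(double px, double py, double centerX, double baseY,
                          double unitsPerPixel, double laserAngle, double platterAngle) {
        // Horizontal offset of the laser line from the rotation axis, in world units.
        double offset = (px - centerX) * unitsPerPixel;
        // Distance from the axis along the laser plane: offset = r * sin(laserAngle).
        double r = offset / Math.sin(laserAngle);
        // Height above the platter; image rows grow downward.
        double height = (baseY - py) * unitsPerPixel;
        // Place the point around the axis by the platter angle.
        // Assumed rotation direction: flip the sign of platterAngle if the scan comes out mirrored.
        return new Point3(r * Math.cos(platterAngle), height, r * Math.sin(platterAngle));
    }

    /** Converts every {column, row} laser pixel found in one frame into points. */
    static List<Point3> frameToPoints(int[][] laserPixels, double centerX, double baseY,
                                      double unitsPerPixel, double laserAngle, double platterAngle) {
        List<Point3> cloud = new ArrayList<>();
        for (int[] p : laserPixels) {
            cloud.add(toPoint(p[0], p[1], centerX, baseY, unitsPerPixel, laserAngle, platterAngle));
        }
        return cloud;
    }

    public static void main(String[] args) {
        // Made-up calibration values, for illustration only.
        int[][] pixels = {{110, 50}, {120, 60}};
        List<Point3> cloud = frameToPoints(pixels, 100, 100, 0.5,
                Math.toRadians(30), Math.toRadians(90));
        cloud.forEach(System.out::println);
    }
}
```

Repeating `frameToPoints` for each turntable step and concatenating the results yields a full point cloud. Handling an arbitrary camera position means replacing the orthographic `offset / sin(laserAngle)` step with a proper camera model.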

Right now, the Processing sketch works! You just have to edit it manually to enter the correct values for your setup. That's why I'm working on a Java version with as much calibration as I can include, to make things easier for everyone who just wants to scan.

The Instructables link is the project I based mine on! Of course, I knew I'd eventually migrate away from Processing, and even from the Arduino platform. Still, I include that link as a nod to my personal inspiration. Thank you, cube000!

Joshua Conway

Eric Link

Ben Hartmann

E/S Pronk

Chris Mitchell

Helpful links:

Build a $30 Laser Scanner

http://www.instructables.com/id/Build-a-30-laser/

Incredibly low-cost line lasers for 3D scanning

http://www.3ders.org/articles/20120305-incredibly-low-cost-line-lasers-for-3d-scanning.html