In this log we give some insight into the game design and a sneak peek at the current game for mindfulness training. We also look at the visuals and design elements of the game. This log contains only a small amount of hardware content compared to our earlier logs and delves more into design from a VR game point of view.

We wanted to build NeuroCuddl primarily because of what we found lacking in traditional relaxation-themed games. Games are a surprisingly good medium for relaxation, mainly because of the detachment they give us from the stresses and strains of everyday life. Virtual reality in particular is uniquely positioned to provide an extremely high degree of immersion. While designing the game we looked for experiences that would give us similar effects.

The first game we found inspiring from an art and music perspective was Journey.

We next looked at games like The Long Dark, which has a minimalist art style yet is highly immersive; we could clock hours both fearing and in awe of the cold Arctic wilderness.

Among virtual reality experiences, we looked at games like LUMEN VR, which provides a serene experience of glowing trees under an aurora. We even took a look at a very simple mobile game called Pause, where the user has to slowly focus on following a growing bubble across the screen.

We analyzed the above games to understand some design rules and constraints to keep in mind while developing our NeuroCuddl experience. The factors we found to be crucial were:

1. The game need not be graphically intensive; in fact, many successful relaxation games are minimal

2. The game must be very simple to use and need only minimal user agency in setup

3. Audio plays a key role in engaging the user in such a session

4. The color tones and temperature play a key role too

Hence the need to create an environment in which the stimuli and the being can interact in a controlled manner. To make the experience multisensory, it was vital that we marry visuals, sound and touch into a single experience. It was therefore no surprise that we chose VR as the medium for our project.

We decided early on that the project would be implemented on mobile devices. This meant that we had to overcome technical limitations creatively. We knew we had to push our game design so that it delivered a beautiful aesthetic despite limited graphical fidelity. The challenge of providing a multisensory experience within the limitations of a cheap mobile device was evident from the get-go. We were fully aware that if we bogged the game down with high-poly models and complex shaders, we would introduce enough lag to shatter the immersion. We hence decided to adopt a design style that would be visually pleasing while keeping the rendering load low.

The application had to serve as a platform for other senses to take center stage and allow the user to project their inner emotions. We wanted to make an environment that could be easily moulded to incorporate the psyche of the user. For these reasons we adopted a minimalistic approach.

For the game engine we chose Unity, because of how well it supports building mobile applications and its compatibility with Android. With the engine chosen, it was time to build the application. Our due diligence led us to analyze existing Google Cardboard experiences. We decided to stray from the conventional 2D pointer mechanism of the existing Cardboard API and build those aspects from the ground up. We settled on a 3D pointer mechanism that would interact more realistically with the environment.

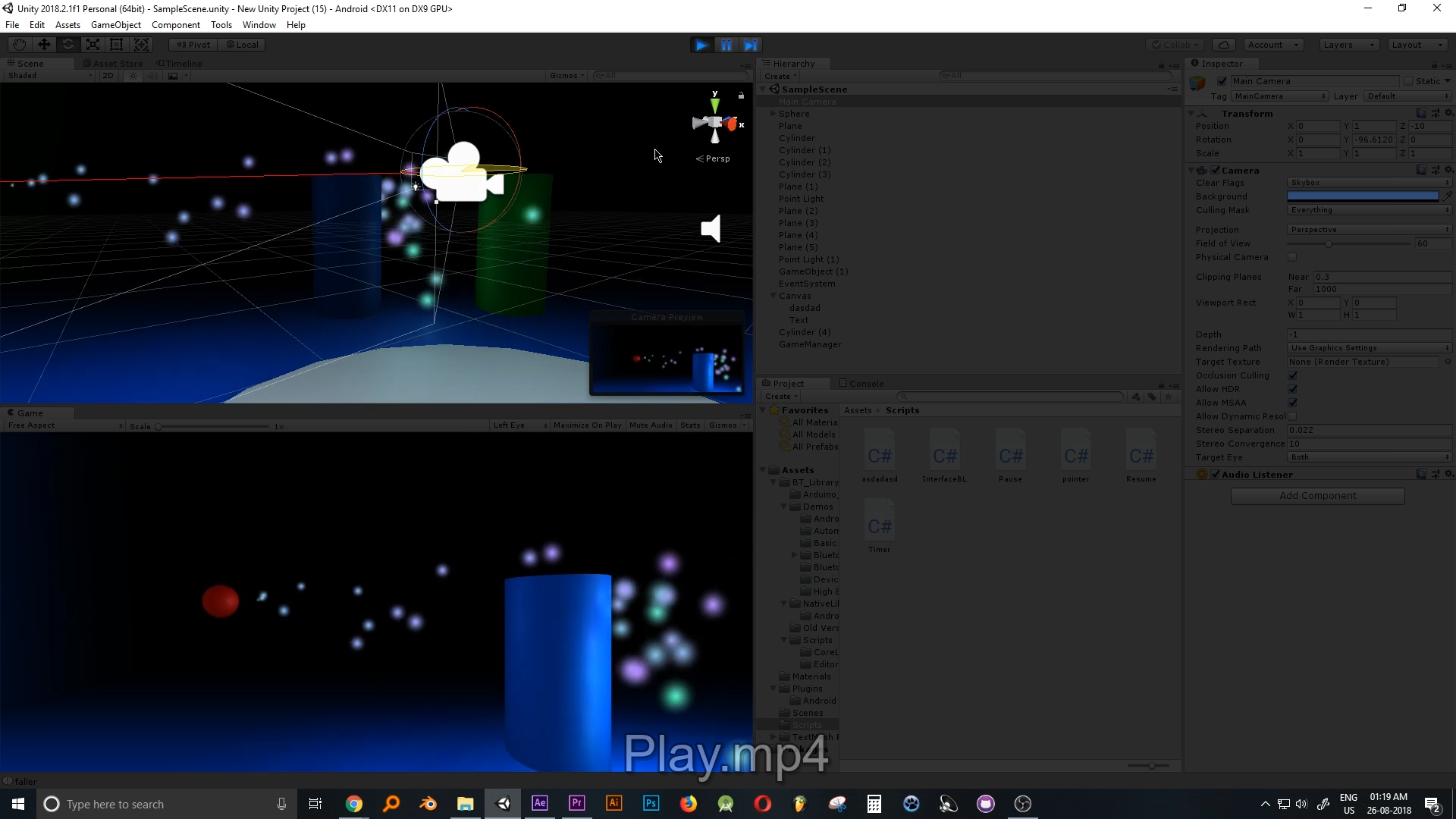

The pointer needs to flow smoothly and detect obstacles. We decided to use a ray cast from the center of the screen as the location indicator. The benefit of a 3D reticle that exists in the world is that it can collide with objects realistically and can expand and contract with the user's breathing. The primary intent of this basic gameplay setup is to see whether smooth tracking is possible with a latency that doesn't distract the user.

The first stage of the game is a simple scene with a floor plane and an angled plane. The pointer object has to move smoothly through the 3D environment at a set distance from the camera if the player points at nothing, and to interact with the surface if the player is pointing at a wall. The first obstacle was that the ray fires infinitely far, so the first step was to determine a default distance for the pointer to fall back to when the ray hits no collider. We settled on a ray length of 10 units: the ray fires only 10 units, and if no collider is detected, the end of the ray becomes the target position of the pointer.

The next step was smooth tracking. This was achieved using Unity's linear interpolation function (Lerp) to generate a target position and move the pointer to it gradually. This led to a smooth gliding motion, with the pointer gently following the player's gaze. The center of the screen was chosen as the screen-space point to be converted into a world-space coordinate.

With the pointer system ready, we could interface with the external world. We used the Bluetooth asset library from the Unity Asset Store to talk to an HC-05 Bluetooth module. The respiration effort is received over Bluetooth as a function of nasal temperature, and this value determines the pointer size as well as the amount and luminosity of the particles emitted by the ball.

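The pointer logic described above can be sketched in a few lines. This is not our Unity script, only a minimal, engine-independent Python illustration of the three steps: cap the ray at 10 units, ease the pointer toward the target with linear interpolation, and map a breathing reading to a pointer scale. The 10-unit ray length comes from the log; everything else (the function names, the `speed` parameter, and the reading and scale ranges) is a hypothetical placeholder. In Unity the same steps would presumably map onto `Physics.Raycast` with a maximum distance and `Vector3.Lerp`.

```python
import math
from typing import Optional, Tuple

Vec3 = Tuple[float, float, float]

MAX_RAY_LENGTH = 10.0  # ray length stated in the log, in scene units


def lerp(a: Vec3, b: Vec3, t: float) -> Vec3:
    """Linear interpolation from a to b, with t clamped to [0, 1]."""
    t = max(0.0, min(1.0, t))
    return tuple(ai + (bi - ai) * t for ai, bi in zip(a, b))


def pointer_target(origin: Vec3, direction: Vec3,
                   hit_distance: Optional[float] = None) -> Vec3:
    """Where the pointer should end up for the current gaze ray.

    hit_distance is the distance to the first collider along the ray,
    or None when nothing was hit (assumed to come from the engine).
    """
    length = math.sqrt(sum(d * d for d in direction))
    if length == 0.0:
        raise ValueError("gaze direction must be non-zero")
    # Fall back to the end of the capped ray when no collider is in range.
    distance = MAX_RAY_LENGTH
    if hit_distance is not None and hit_distance < MAX_RAY_LENGTH:
        distance = hit_distance
    return tuple(o + (d / length) * distance
                 for o, d in zip(origin, direction))


def follow(current: Vec3, target: Vec3, speed: float, dt: float) -> Vec3:
    """Move part of the way to the target each frame for a gliding motion.

    speed is a hypothetical tuning value, dt the frame time in seconds.
    """
    return lerp(current, target, speed * dt)


def breath_to_scale(reading: float, low: float, high: float,
                    min_scale: float, max_scale: float) -> float:
    """Map a breathing reading onto a pointer scale (all ranges assumed)."""
    t = (reading - low) / (high - low)
    t = max(0.0, min(1.0, t))  # clamp out-of-range sensor values
    return min_scale + (max_scale - min_scale) * t
```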
To turn the game into a VR application that works well on a mobile platform, it was crucial to observe the technical and graphical limitations of that platform. This demanded a rudimentary prototype build that would let us study the game on its intended platform of delivery. The required Android SDK was installed, as were the Google Cardboard and Google Daydream packages. However, we decided not to use any of the prebuilt Google VR assets and code, mainly to see how far we could push the technology without relying on the crutches of existing material. To push the lighting engine to its limit, we introduced a fog atmosphere and added several particle simulations to the game. These particle systems emitted light and would float off the objects. The room was closed off to darken it. It was at this stage that temporary music was added.

This is how it looks.

Vignesh Ravichandran

