At first, I thought the best approach to handling video refresh with the VDC-II core was to use ping-pong scanline buffers, like how the Kestrel-2's MGIA core did its video refresh.

I think that approach could still work, but I have to wonder whether it would be simpler to just use a collection of modestly sized queues instead.

At its core, a video controller consists of two parts: the timing chain (which I've already completed) and what amounts to a glorified serial shift register.

By its very nature, getting data from video memory to the screen happens in a very pipelined fashion. Everything is synchronized against the dot clock and, usually, a character clock as well. Competing accesses to video memory, however, could cause a small amount of jitter, perhaps enough to cause visible artifacts on the display. Queues are a natural fit here, and can smooth out some of that jitter.

The disadvantage to using queues, though, is that video RAM access timing is much more stringent than with whole-line ping-pong buffers. I can't just slurp in 80 bytes of character data, 80 bytes of attribute data, and then resolve the characters into bitmap information (totalling 240 memory fetches), then sit around until the next HSYNC. I will need to constantly keep an eye on the video queues and, if they get too low, commence a fetch of the next burst of data.
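To make the "keep an eye on the queues" idea concrete, here is a minimal behavioral sketch in Python (not HDL). The queue depth, low-water mark, and burst size are made-up numbers, `FetchQueue` and `simulate` are hypothetical names, and a burst is treated as completing within one character clock, which real video RAM would not do. It only illustrates how a small queue can ride out a few cycles of memory contention.

```python
from collections import deque


class FetchQueue:
    """Bounded FIFO that asks for a refill when it drops to a low-water mark."""

    def __init__(self, depth=16, low_water=4, burst=8):
        self.depth = depth          # assumed queue depth, in bytes
        self.low_water = low_water  # assumed refill threshold
        self.burst = burst          # assumed bytes fetched per burst
        self.items = deque()

    def needs_refill(self):
        # Ask for a burst only when the queue is low and the burst will fit.
        return (len(self.items) <= self.low_water
                and len(self.items) + self.burst <= self.depth)

    def refill(self, source):
        for _ in range(self.burst):
            self.items.append(next(source))


def simulate(char_clocks, busy_cycles):
    """Drain one byte per character clock; refill only when memory is free.

    busy_cycles is the set of clocks on which something else owns video RAM.
    Returns the number of clocks on which the queue was empty (underruns).
    """
    queue = FetchQueue()
    source = iter(range(10**6))  # stand-in for video memory contents
    queue.refill(source)         # prime the queue before display starts
    underruns = 0
    for t in range(char_clocks):
        if queue.needs_refill() and t not in busy_cycles:
            queue.refill(source)
        if queue.items:
            queue.items.popleft()
        else:
            underruns += 1
    return underruns


print(simulate(100, busy_cycles={4, 5, 6}))          # short stall is absorbed
print(simulate(100, busy_cycles=set(range(4, 10))))  # long stall underruns
```

With these particular numbers, a three-clock stall is absorbed by the bytes still in the queue, while a six-clock stall drains it. Choosing the real depth and threshold would depend on how long a competing access can actually hold the memory.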

The disadvantage to using ping-pong buffers, on the other hand, is that a ton of DFFs and logic elements will go into making up the buffers. If I want to support a maximum of 128 characters on a scanline (128 characters at 5 pixels/character also gives a nice 640-pixel-wide display), I'll need 384 bytes worth of buffer space: 128 for the character data, 128 for attribute data, and another 128 for the font data for each character on that particular raster. 384*8=3072 DFFs, and if memory serves, you need one LE per DFF. There are only 7680 LEs on the FPGA. I can't use block RAM resources because those are already dedicated for use as video memory (in this incarnation of the project, at least; I'll work on supporting external video memory in a future design revision).
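For anyone who wants to check the arithmetic, here it is spelled out as a few lines of Python. All of the figures come from the paragraph above; the one-LE-per-DFF ratio is the same from-memory assumption, not a verified number.

```python
MAX_COLUMNS = 128      # characters per scanline
BUFFERS_PER_LINE = 3   # character, attribute, and font data
BITS_PER_BYTE = 8
TOTAL_LES = 7680       # LE count quoted above
LES_PER_DFF = 1        # assumption: one LE per flip-flop

buffer_bytes = MAX_COLUMNS * BUFFERS_PER_LINE
dffs = buffer_bytes * BITS_PER_BYTE
fraction = dffs * LES_PER_DFF / TOTAL_LES

print(buffer_bytes, dffs, f"{fraction:.0%}")  # prints: 384 3072 40%
```

So the buffers alone would eat roughly 40% of the device before any of the logic that reads and writes them.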

So, while it's possible to implement a design using ping-pong buffers, it would make very inefficient use of the FPGA's resources. Since the logic would be strewn all about the die, it could also introduce enough delay that the design fails to achieve timing closure.

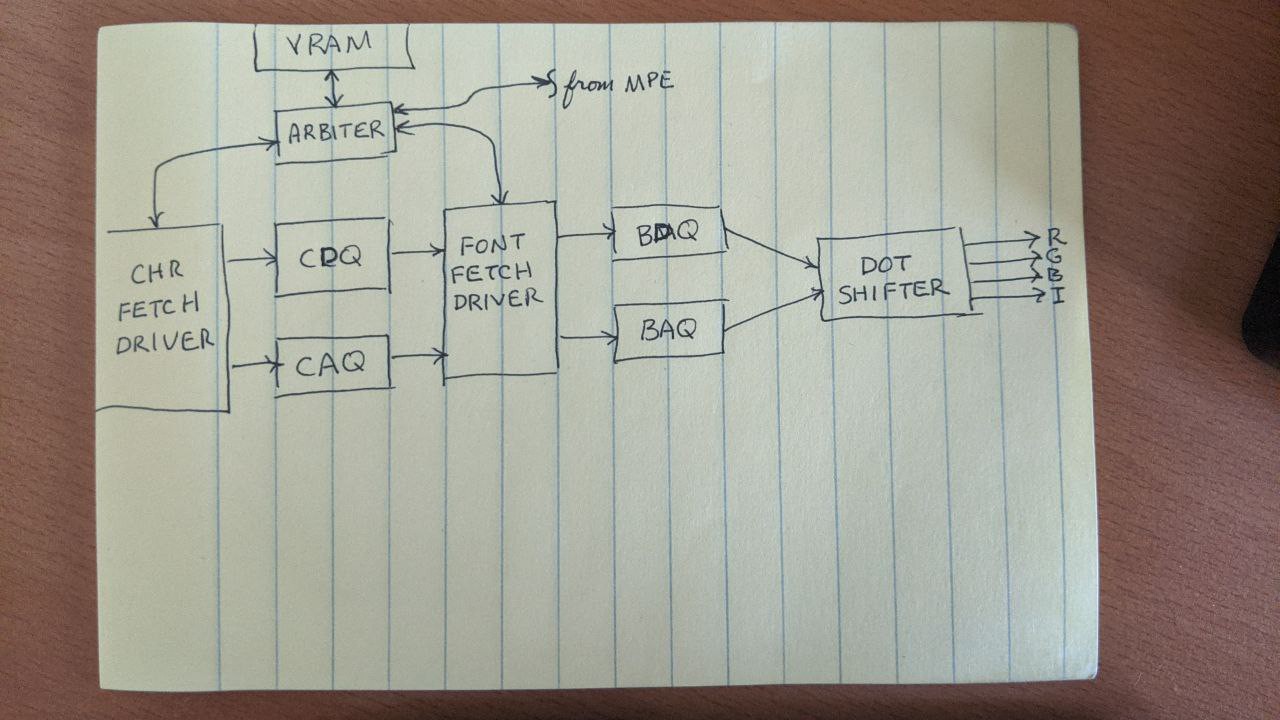

The more I look at things, the more I think using a set of fetch queues makes sense. I'm thinking a design similar to this would work:

Key:

- CDQ -- Character Data Queue

- CAQ -- Character Attribute Queue

- BDQ -- Bitmapped Data Queue

- BAQ -- Bitmapped Attribute Queue

Of course, I'm still not quite sure how to handle bitmapped graphics mode. The most obvious approach (which isn't always the best approach!) is to configure the font fetch driver to just pass the data through. But this will require some additional thought.
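As a rough illustration of that pass-through idea, here is a behavioral sketch in Python. It assumes the font fetch driver sits between the character-side queues (CDQ, CAQ) and the bitmap-side queues (BDQ, BAQ), which is my reading of the key rather than something settled. `font_fetch`, `GLYPH_STRIDE`, and the font memory layout are hypothetical.

```python
from collections import deque

GLYPH_STRIDE = 16  # assumed number of font bytes reserved per glyph


def font_fetch(cdq, caq, bdq, baq, font, raster, bitmap_mode=False):
    """Move one cell from the character-side queues to the bitmap-side queues."""
    code = cdq.popleft()
    attr = caq.popleft()
    if bitmap_mode:
        # Pass-through: the fetched byte already holds pixel data.
        pixels = code
    else:
        # Text mode: look up this glyph's row for the current raster line.
        pixels = font[code * GLYPH_STRIDE + raster]
    bdq.append(pixels)
    baq.append(attr)


# Usage: a fake font where every byte equals its own address (mod 256).
font = bytes(i % 256 for i in range(256 * GLYPH_STRIDE))
cdq, caq, bdq, baq = deque([2, 2]), deque([7, 9]), deque(), deque()
font_fetch(cdq, caq, bdq, baq, font, raster=3)                    # text mode
font_fetch(cdq, caq, bdq, baq, font, raster=3, bitmap_mode=True)  # bitmap mode
print(list(bdq), list(baq))
```

In this sketch the only difference between the two modes is whether the font lookup happens, which is what makes pass-through the obvious first thing to try. Whether the attribute path can be treated the same way in bitmap mode is one of the open questions.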

Samuel A. Falvo II
