Please note that our solution is not an automatic feeding device. It was designed to give greater independence to users with motor disabilities who want to eat on their own. The user must have control of the device through its interface at all times.

Interfaces:

When you have a disability, the control interface and its suitability for your capabilities are essential. They determine how effectively any assistive device can be used, whatever its nature (wheelchair, computer, call bell...).

Many control interfaces are available on the market, and the best way to choose one is to consult an occupational therapist, who will evaluate the person and recommend the solution that best meets their needs and abilities.

Regarding the control of commercially available eating-assistance devices, the interfaces commonly used are satisfactory in most cases but remain standard and do not use the latest available technologies.

They are activated by a switch, which is a standard in the special needs field.

The switch closes an electrical circuit, and the device being controlled detects this closure. The switch has a 3.5 mm mono male jack connector, so the device must be equipped with a 3.5 mm mono female jack.
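Detecting a switch closure can be illustrated with a small sketch. Mechanical contacts tend to bounce when they close, so a controller usually waits for several identical readings before it accepts a press. The function below is only an illustration in Python, not the project's firmware: the name `detect_presses`, the `stable_count` parameter and its default value are assumptions made for this example.

```python
from typing import Iterable, List


def detect_presses(samples: Iterable[bool], stable_count: int = 3) -> List[int]:
    """Return the sample indices at which a debounced press is registered.

    samples: raw readings of the jack input, True meaning the circuit is closed.
    stable_count: hypothetical number of consecutive readings that must disagree
    with the accepted state before the state change is taken into account.
    """
    presses: List[int] = []
    debounced = False  # last accepted state: circuit open at start
    run = 0            # consecutive readings that differ from the accepted state
    for index, closed in enumerate(samples):
        if closed != debounced:
            run += 1
            if run >= stable_count:
                debounced = closed
                run = 0
                if debounced:
                    # Only the open -> closed transition counts as a press.
                    presses.append(index)
        else:
            run = 0
    return presses


if __name__ == "__main__":
    T, F = True, False
    readings = [F, T, F, T, T, T, T, F, F, F, T, T, T]
    print(detect_presses(readings))
```

A larger `stable_count` rejects more noise but makes the switch respond more slowly, so in practice the value would have to be tuned to the user and the switch.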

There are different types of switches, with different sizes and sensitivities, that adapt to many motor abilities. They are placed facing a zone where the user has a reliable, repeatable and unambiguous movement. Typically they are installed near the hand or a finger, but they can also be placed at the head or anywhere else the user can produce an exploitable movement.

Our planned work on the interfaces is unprecedented and will allow us to adapt as closely as possible to the needs of the user and to the equipment they already have:

- The possibility of connecting switches.

- The possibility of connecting special power wheelchair controls.

- Control of the device through a smartphone application.

- Control of the device with the joystick of a power wheelchair via Bluetooth.

- Going further, voice commands and vision detection (this option could be very useful for people whose posture changes during the meal and who cannot stand up).

Our work can draw on many open source projects that already allow a wheelchair joystick to control a computer or a game console, for example.

For the moment we have concentrated our efforts on the general design of the robotic arm, and we have deliberately limited ourselves, initially, to switch control.

Thus, our solution has:

- two 3.5 mm jack ports to connect switches

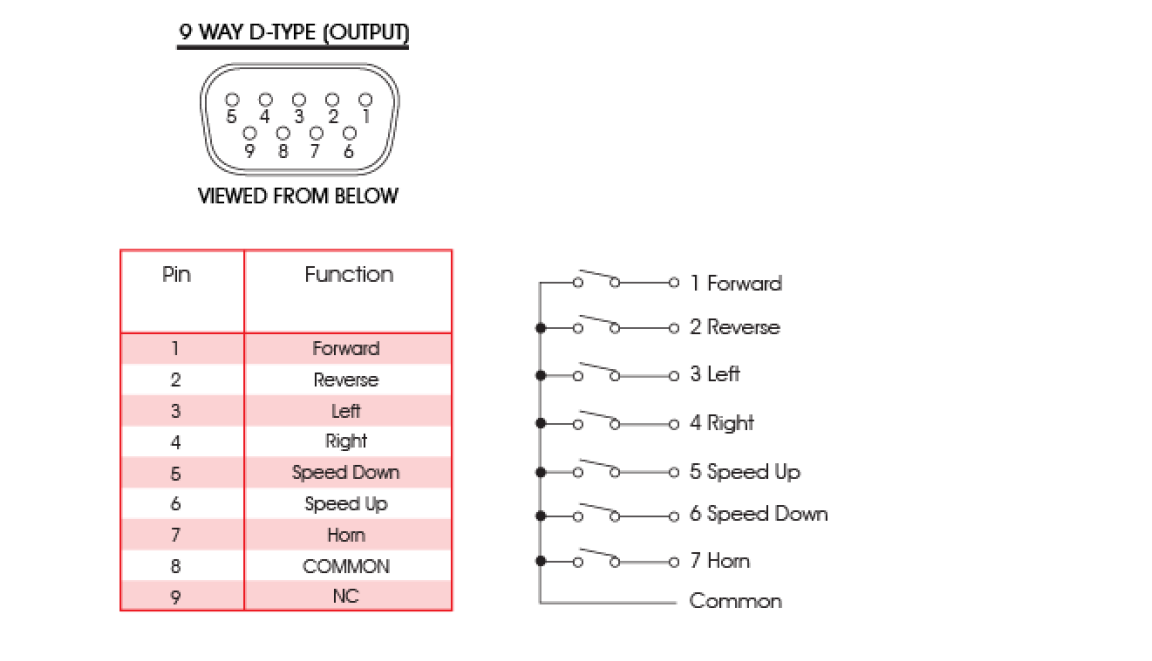

- a D-sub 9 socket (the standard connector for power wheelchair special controls)

- an HC-05 Bluetooth module (for app control and wheelchair joystick control)
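The HC-05 presents the Bluetooth link to the controller as a plain serial byte stream, so app control comes down to decoding incoming bytes into robot actions. The sketch below is written in Python for illustration only; the one-byte protocol (`S` and `E`), the `Action` enum and the `decode_commands` function are all hypothetical, since no protocol has been defined yet for this project.

```python
from enum import Enum
from typing import List


class Action(Enum):
    SERVE = "serve"      # pick up food and bring it to the mouth
    EXPLORE = "explore"  # explore the contents of the plate


# Hypothetical one-byte protocol: this mapping is an assumption for the example.
COMMANDS = {ord("S"): Action.SERVE, ord("E"): Action.EXPLORE}


def decode_commands(stream: bytes) -> List[Action]:
    """Translate received bytes into actions, silently skipping unknown bytes
    such as line endings or noise."""
    return [COMMANDS[byte] for byte in stream if byte in COMMANDS]


if __name__ == "__main__":
    # Bytes as they might arrive from the serial link.
    print(decode_commands(b"S\r\nxE"))
```

Ignoring unknown bytes instead of raising an error keeps the robot idle on garbage input, which seems the safer default for a device the user must control at all times.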

Subsequently, we will work on the use of special controls (hence the presence of a 9-way socket) and on the other interaction modes, but we have to proceed step by step.

Operation:

The user sits in front of the table on which the robot is placed. Human assistance is needed to serve the food on the plate and initialize the position the spoon should reach.

The assistant records the position of the spoon by pressing the button embedded in the arm. Then it's up to the user to take control.

An action on the control interface triggers a robot action:

- one action lowers the spoon to the plate, picks up food and raises it to the user's mouth;

- another action explores the contents of the plate.
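The sequence described above can be sketched as a small controller. This is a simplified Python model under stated assumptions, not the robot's actual software: the `FeedingController` class, its method names, the division of the plate into `zones` and the logged step names are all hypothetical, and "exploring the plate" is interpreted here as moving to the next zone.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple

Position = Tuple[float, float, float]


@dataclass
class FeedingController:
    """Toy model of the operating sequence: record, then serve or explore."""
    zones: int = 4                       # hypothetical number of plate zones
    plate_zone: int = 0                  # zone the spoon will scoop from
    mouth_position: Optional[Position] = None
    log: List[str] = field(default_factory=list)

    def record_mouth_position(self, position: Position) -> None:
        # Done by the assistant with the button embedded in the arm.
        self.mouth_position = position
        self.log.append(f"recorded {position}")

    def serve(self) -> bool:
        # First user action: descend, pick up food, rise to the mouth.
        if self.mouth_position is None:
            self.log.append("ignored: no position recorded")
            return False
        self.log.extend([
            f"descend to zone {self.plate_zone}",
            "scoop",
            f"rise to {self.mouth_position}",
        ])
        return True

    def explore(self) -> int:
        # Second user action: move on to the next zone of the plate.
        self.plate_zone = (self.plate_zone + 1) % self.zones
        self.log.append(f"explore zone {self.plate_zone}")
        return self.plate_zone


if __name__ == "__main__":
    robot = FeedingController()
    robot.record_mouth_position((0.10, 0.25, 0.30))
    robot.explore()
    robot.serve()
    print("\n".join(robot.log))
```

In this model the robot refuses to serve until the assistant has recorded a position, which reflects the rule that setup requires human assistance while every later movement is started by the user.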

We plan to offer different usage and control profiles that will adapt to the user's needs, desires and abilities.

So we can imagine letting the user choose a portion of the plate to eat a particular food, or giving them finer control of the robot's movements.

The operation must be adapted to each user's level of comprehension and must limit, as far as possible, the actions that could be tiring for the user.

Julien OUDIN
