Prior to collaborating on this project's challenge, I had been working on using Brain-Computer Interfaces for smart home control for people with locked-in syndrome.

Smart homes have been an active area of research; however, despite considerable investment, they are not yet a reality for end-users. Moreover, there are still accessibility challenges for the elderly and the disabled, two of the main potential targets for home automation. In this exploratory study we designed a control mechanism for smart homes based on Brain-Computer Interfaces (BCIs) and applied it in a smart home platform in order to evaluate users' potential interest in BCIs at home. We enabled users to control lighting, a TV set, a coffee machine and the shutters of the smart home. We evaluated performance (accuracy, interaction time), usability and feasibility (USE questionnaire) with 12 healthy subjects and 2 disabled subjects and reported the results.

This is ongoing research that already includes 2 published scientific contributions, if you want to learn more.

This log entry will be divided into several parts. I will share more details about the research, as well as the set of open-source tools we used in this work.

Part 1.

Imagine you could control everything with your mind. Brain-Computer Interfaces (BCIs) make this possible by measuring your brain activity and letting you issue commands to a computer system by modulating that activity. BCIs can be used in many applications: medical applications to control wheelchairs or prosthetics (Wolpaw et al., 2002) or to enable disabled people to communicate and write text (Yin et al., 2013); general public applications to control toys (Kosmyna et al., 2014), video games (Bos et al., 2010) or computer applications in general.

One of the more recent fields of application for BCIs is smart homes and the control of their appliances. Smart homes allow the automation and adaptation of a household to its inhabitants. In the state of the art of BCIs applied to smart home control, only younger healthy subjects are considered and the smart home is often a prototype (a single room or appliance). BCIs have never been applied and evaluated with potential end-users in realistic conditions. Yet smart homes are of interest to disabled people and to elderly people with mobility impairments, who would then be able to operate appliances within the house autonomously (Grill-Spector, 2003; Edlinger et al., 2009). Studies on disabled users are just as rare as studies in realistic smart homes (with healthy subjects or otherwise). Moreover, the expectations and needs of healthy subjects are biased, as they cannot fully conceive of the difficulties disabled people face and thus of their needs, so performing experiments with both healthy and disabled subjects is of interest for smart home research.

Smart Homes and BCIs

Several works use BCIs in smart home environments. A good part of them take place in a virtual smart home environment as opposed to a real or prototyped smart home. This can be problematic, as it is difficult to recreate in situ conditions in vitro (Kjeldskov and Skovl, 2007). There are several types of BCI control:

- Navigation (virtual reality only): The BCI issues continuous or discrete commands that make the user's avatar move through the virtual home. Motor imagery (MI), where users imagine moving one or more limbs to generate continuous control, is the most commonly used BCI for navigation.

- Trigger/Toggle: The BCI issues a one-off command that triggers a particular action or toggles the state of an object in the house (e.g., looking at a blinking LED on the wall to make the light turn on). Many paradigms can be used for such actions:

  - P300: The user looks at a set of items that flash one after another. When the desired item flashes, the brain produces a characteristic signal (P300) that can be detected.

  - Steady-State Visually Evoked Potentials (SSVEP): The user looks at targets that flicker at different frequencies. We can detect which target the user is looking at and trigger the corresponding action.

  - Facial Expression BCI: The facial expressions of the user are detected through their EEG signals.
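To make the SSVEP idea above a little more concrete, here is a minimal sketch of frequency-based target detection. It is not the pipeline used in this study (which relies on mental imagery, not SSVEP), and everything in it is an assumption for illustration: the sampling rate, the flicker frequencies, the synthetic "EEG" signal and the function names `power_at` and `detect_ssvep_target`. It simply compares the signal power at each candidate flicker frequency and picks the strongest one.

```python
import math
import random

def power_at(signal, fs, freq):
    """Power of `signal` at `freq` (Hz), computed with a single-bin Fourier sum."""
    re = sum(x * math.cos(2 * math.pi * freq * n / fs) for n, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * freq * n / fs) for n, x in enumerate(signal))
    return (re * re + im * im) / len(signal)

def detect_ssvep_target(signal, fs, target_freqs):
    """Return the candidate flicker frequency carrying the most power."""
    return max(target_freqs, key=lambda f: power_at(signal, fs, f))

# Synthetic stand-in for an EEG channel (hypothetical values):
# a 12 Hz oscillation buried in Gaussian noise, 2 seconds at 256 Hz.
fs = 256
rng = random.Random(0)
eeg = [math.sin(2 * math.pi * 12.0 * n / fs) + rng.gauss(0.0, 0.5)
       for n in range(2 * fs)]

# Hypothetical flicker frequencies, one per on-screen target.
target_freqs = [8.0, 10.0, 12.0, 15.0]
detected = detect_ssvep_target(eeg, fs, target_freqs)
print(f"User appears to be looking at the {detected} Hz target")
```

Real SSVEP systems typically work on several electrodes, look at harmonics and use more robust statistics, so treat this only as an illustration of the principle.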

In this work we use only trigger-type controls. Most BCIs applied to smart homes use paradigms (P300, SSVEP) that require a display with flashing targets that the user must look at. Here, by contrast, we use mental imagery, which requires no external stimuli. Mental imagery has never been applied to any practical control application, let alone smart homes. With conceptual imagery, the semantics of the interaction are compatible with the semantics of the task. The principle is that users imagine the concept of a lamp (e.g., by visualizing, "imagining" a lamp in their mind), and the BCI recognizes the concept and triggers a command that turns on the light. No movement or voice command is needed from the person, only their brain activity.
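As a rough illustration of what a trigger-type control looks like on the software side, the sketch below maps a recognized concept to a toggle command. This is a hypothetical example and not the code used in the study: the `SmartHome` class, the `handle_prediction` function, the appliance labels and the 0.7 confidence threshold are all assumptions. The classifier that would produce the concept label and its confidence from EEG is left out entirely.

```python
from dataclasses import dataclass, field

# Appliance labels are assumptions based on the devices mentioned in this post.
APPLIANCES = ("light", "tv", "coffee_machine", "shutters")

@dataclass
class SmartHome:
    """Toy stand-in for the home: every appliance is either on (True) or off (False)."""
    state: dict = field(default_factory=lambda: {name: False for name in APPLIANCES})

    def toggle(self, appliance):
        self.state[appliance] = not self.state[appliance]
        return self.state[appliance]

def handle_prediction(home, concept, confidence, threshold=0.7):
    """Toggle the matching appliance if the prediction is known and confident enough.

    Returns True if a command was issued, False if the prediction was ignored.
    """
    if concept not in home.state or confidence < threshold:
        return False
    home.toggle(concept)
    return True

home = SmartHome()
handle_prediction(home, "light", 0.92)  # confident prediction: the light is toggled on
handle_prediction(home, "tv", 0.40)     # uncertain prediction: ignored
print(home.state)
```

Ignoring low-confidence predictions is one simple way to avoid triggering appliances by accident; the right threshold would have to be tuned per user.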

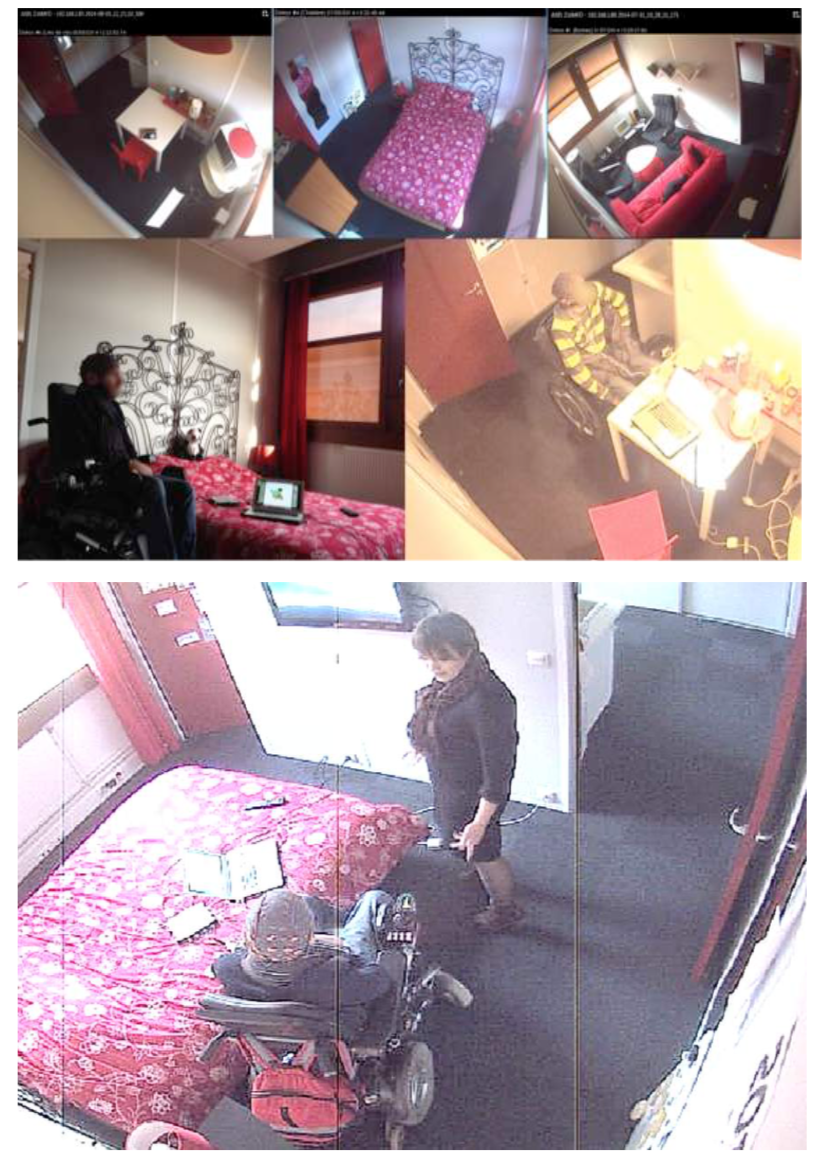

Image of the participant in a wheelchair performing a test in the smart home. The image quality is reduced due to the cameras currently installed and used in the smart home. Please contact nkosmyna AT mit DOT edu if you want to re-use the image.

All the citations will be provided in Part 3 of this log post, but if you want to check them out now, please go here to get an open-access, free copy of the full, in-depth article of mine!
