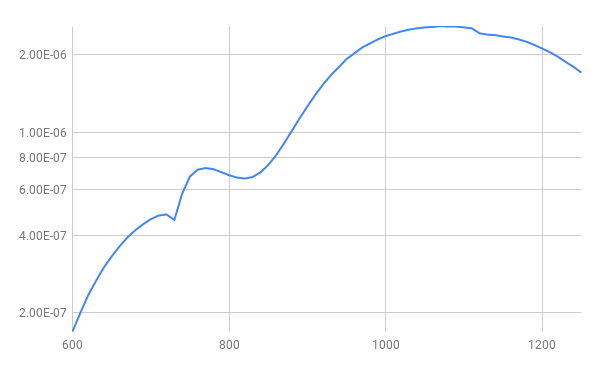

It hit me the other night why I was seeing such terrible response at the NIR end of the spectrum: silicon sucks at detecting much past 1100nm. I have a UDT 261 germanium sensor that is specifically designed for the 800 to 1750nm range. I machined an adapter out of more black Delrin to mount its .75"-32 threads to the monochromator. At least I think it's Delrin; it cuts a little funny.

Much better response!

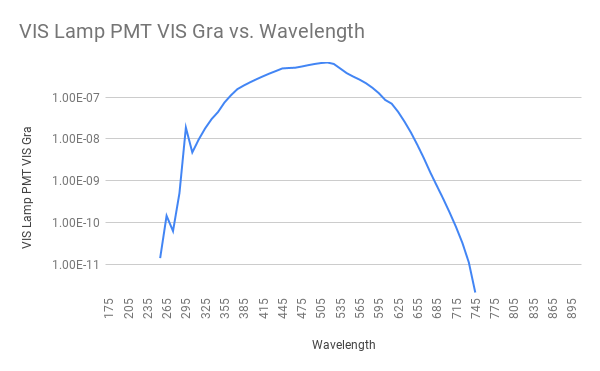

The sensor I have been using for the visible spectrum is a UDT UV100 silicon photodiode. It works pretty well in brighter conditions with the larger slits, but with the smallest slits you start getting into the noise floor. One possible solution was a Hamamatsu HC120 PMT module that I had pulled out of a Verity end point detector. I had no information other than this data sheet, and my modules were a custom version made for Verity, so Hamamatsu would not give me the details. I machined another adapter for that. It also needs a +/-15V power supply and an additional pot to control the internal high voltage power supply.

I plotted the response curve of the PMT and it looks like it is probably a side window version of the HC120-01 module, which really dies out around 650nm. This is with the PMT voltage set to 226V; the voltage was adjusted so as not to saturate the radiometer input.

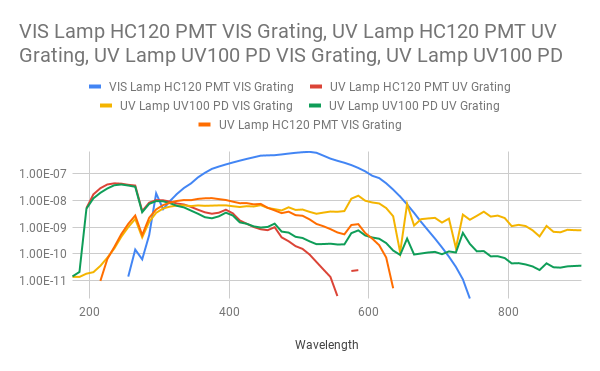

Seeing that it does work well at the UV end, I warmed up the deuterium lamp and ran that through using both the UV and VIS gratings to see what it all looked like, and also ran the UV100 sensor for comparison.

At the UV end both sensors match up well. You can see that at about 450nm the PMT takes a nosedive, where the UV100 keeps going through to the end. So the PMT will be really good for any UV work, especially since it is currently set at 226V out of a maximum of 1100V, so there should be plenty of headroom for using the smaller slits.

One thing you can do with the deuterium lamp is use it to calibrate the spectrometer. There are two lines you can look at, Hα and Hβ. The Hα line is the peak at 656.28nm, which you can see in each of the scans taken with the UV lamp above; the peak is not well defined because I was using a 10nm step. To calibrate, you just get close to 656nm, watch the output of the radiometer for the peak, then check the indicator on the monochromator and calculate the offset between what it reads and what the wavelength really is. In the case of my unit the visible offset was +1.5nm and the UV offset was +1.3nm.
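The offset arithmetic can be sketched in a few lines of Python. This is only an illustration, not what I actually ran. The function names, the sample sweep values, and the sign convention (offset = dial reading at the peak minus the true line wavelength) are all assumptions made for the example.

```python
# Reference wavelength of the H-alpha line, as quoted in the text.
H_ALPHA_NM = 656.28


def peak_dial_reading(sweep):
    """Return the dial reading (nm) at which the radiometer signal is largest.

    `sweep` is a list of (dial_nm, signal) pairs from a fine manual sweep
    around the line. The structure is hypothetical.
    """
    dial_nm, _ = max(sweep, key=lambda pair: pair[1])
    return dial_nm


def dial_offset(indicated_peak_nm, reference_nm=H_ALPHA_NM):
    """Offset = indicated minus true wavelength (assumed sign convention)."""
    return indicated_peak_nm - reference_nm


def corrected_wavelength(indicated_nm, offset_nm):
    """Apply a previously measured offset to any dial reading."""
    return indicated_nm - offset_nm


if __name__ == "__main__":
    # Made-up sweep values, for illustration only.
    sweep = [(657.0, 0.21), (657.5, 0.48), (657.78, 0.93), (658.0, 0.61)]
    offset = dial_offset(peak_dial_reading(sweep))
    print(f"offset: {offset:+.2f} nm")
    print(f"dial 500.0 nm is really {corrected_wavelength(500.0, offset):.2f} nm")
```

Since the two gratings gave slightly different offsets, each grating would need its own offset value applied to its own scans.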

Still have not found out how to normalize the output.

The two sensors I made adapters for.

Jerry Biehler
